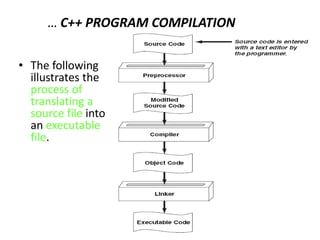

The document provides an introduction to programming and problem solving using computers. It discusses the following key points:

- Problem solving involves determining the inputs, the outputs, and the steps needed to get from one to the other. Programming a computer to solve a problem pays off when the problem involves extensive input or output, or when the method is complex or time-consuming.

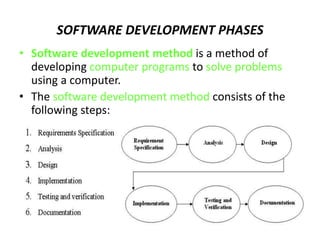

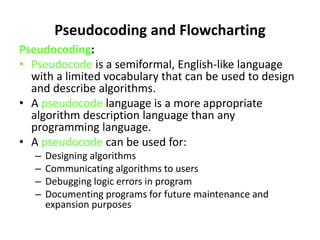

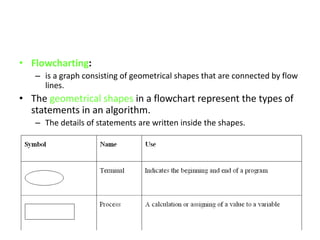

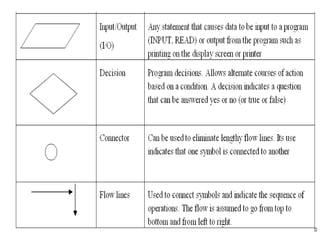

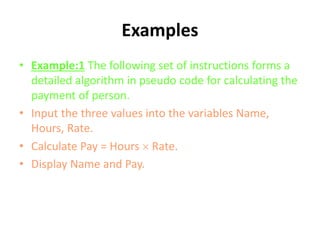

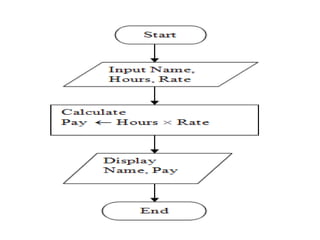

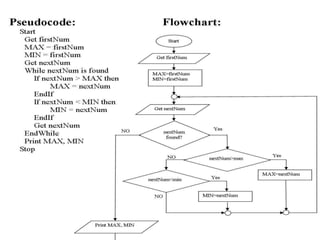

- The software development process includes requirements specification, analysis, design, implementation, testing, and documentation. During design, the algorithm that solves the problem is written out as pseudocode or drawn as a flowchart.

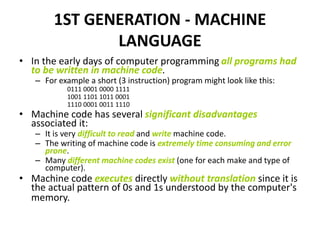

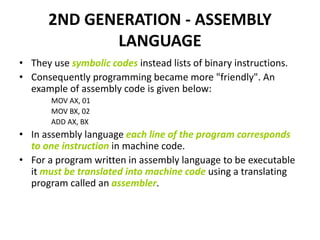

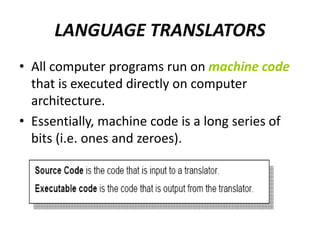

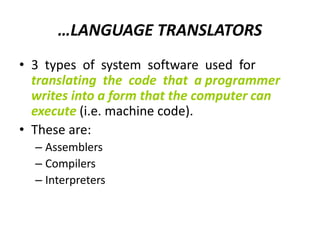

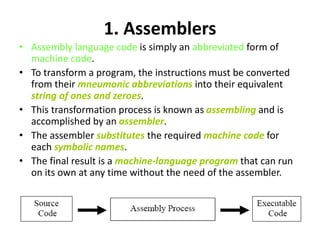

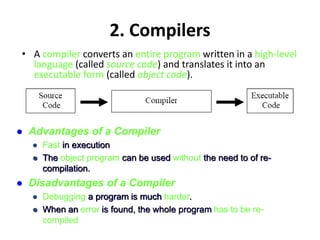

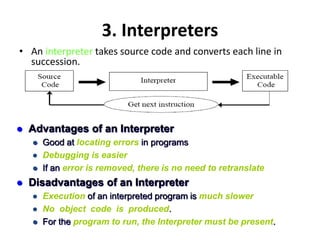

- Programming languages have evolved from low-level machine languages to high-level languages. Earlier generations, such as assembly language, were more machine-oriented, while modern languages are more portable, more problem-oriented, and easier for humans to read and write.

![Types (levels) of programming languages](https://image.slidesharecdn.com/chapter-1-231020122245-003d0c8a/85/CHAPTER-1-ppt-22-320.jpg)

Types (levels) of programming languages:

- There is only one programming language that any computer can actually understand and execute: its own native binary machine language.
- All other languages are said to be high level or low level according to how closely they resemble machine code.
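As a minimal sketch of the approach summarized above (identify the inputs, the outputs, and the steps, then implement and test the algorithm), the following Python example averages a list of scores. The problem, the function name `average`, and the sample data are illustrative assumptions and do not come from the slides.

```python
# Problem (hypothetical example): compute the average of a set of scores.
#   Input:  a non-empty list of numbers
#   Output: their arithmetic mean
#
# Pseudocode from the design step:
#   set total to 0
#   for each score in scores: add score to total
#   return total divided by the number of scores


def average(scores):
    """Return the arithmetic mean of a non-empty list of numbers."""
    # Reject empty input so the division below cannot fail.
    if not scores:
        raise ValueError("scores must not be empty")

    # Accumulate the sum one score at a time, mirroring the pseudocode.
    total = 0
    for score in scores:
        total += score

    # Divide the sum by the count to get the mean.
    return total / len(scores)


if __name__ == "__main__":
    # Testing step: check the implementation against a hand-computed case.
    assert average([80, 90, 100]) == 90.0
    print(average([80, 90, 100]))
```

Writing the inputs, outputs, and pseudocode down first keeps the implementation a near line-by-line translation of the design, which makes the testing step easier to reason about.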