The document discusses the evolution and principles of the World Wide Web, emphasizing the need for improved data integration beyond current textual indexing methods used by search engines. It explores the design criteria of the web proposed by Tim Berners-Lee, advocating for a 'web of data' that allows for seamless interaction between various data sources and architectures like REST and SOA. The paper aims to eliminate misconceptions surrounding web standards like URI, HTTP, and XML, ultimately focusing on developing a more functional and interoperable web environment.

![12

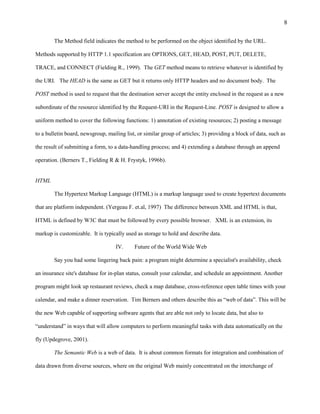

W3C standard, a generic URI syntax (specified in RFC 3986) consists of a hierarchical sequence of components: scheme, authority, path, query, and fragment.

URI = scheme ":" hier-part [ "?" query ] [ "#" fragment ]

The following are two example URIs and their component parts:

      foo://example.com:8042/over/there?name=ferret#nose
      \_/   \______________/\_________/ \_________/ \__/
       |           |            |            |        |
    scheme     authority       path        query   fragment
       |   _____________________|__
      / \ /                        \
      urn:example:animal:ferret:nose
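
The decomposition above can be reproduced mechanically. The following is a minimal sketch that splits a URI into its five components using the regular expression given in RFC 3986, Appendix B. The names `split_uri` and `UriParts` are illustrative assumptions, not part of any standard or of the original slides.

```python
import re
from typing import NamedTuple, Optional

# Regular expression from RFC 3986, Appendix B, for splitting a URI reference.
URI_PATTERN = re.compile(
    r"^(([^:/?#]+):)?(//([^/?#]*))?([^?#]*)(\?([^#]*))?(#(.*))?"
)


class UriParts(NamedTuple):
    """Hypothetical container for the five generic URI components."""
    scheme: Optional[str]
    authority: Optional[str]
    path: str
    query: Optional[str]
    fragment: Optional[str]


def split_uri(uri: str) -> UriParts:
    """Split a URI into scheme, authority, path, query, and fragment."""
    match = URI_PATTERN.match(uri)
    # Every group in the pattern is optional, so it matches any string.
    assert match is not None
    return UriParts(
        scheme=match.group(2),
        authority=match.group(4),
        path=match.group(5),
        query=match.group(7),
        fragment=match.group(9),
    )


if __name__ == "__main__":
    for example in (
        "foo://example.com:8042/over/there?name=ferret#nose",
        "urn:example:animal:ferret:nose",
    ):
        print(example, "->", split_uri(example))
```

Components that are absent come back as `None`, so the `urn` example yields only a scheme and a path. In practice, Python's standard `urllib.parse.urlsplit` performs a comparable split.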

W3C recommends the use of standard session mechanisms instead of session-based URIs (W3C, 2003). What does it mean? HTTP/1.1 provides a number of mechanisms for identification, authentication and session management. Using these mechanisms instead of user-based or session-based URIs guarantees that the URIs used to serve resources are truly universal (allowing, for example, people to share, send, or copy them).

For example, Bob tries to visit http://www.example.com/resource but, since it is a rainy Monday morning, he gets redirected to http://www.example.com/rainymondaymorning/resource. The next day, when Bob tries to access the resource he had bookmarked earlier, the server answers that Bob has made a bad request and serves http://www.example.com/error/thisisnotmondayanymore.

Had the server served back http://www.example.com/resource because the Monday session had expired, it would have been, if not acceptable, at least harmless. The problem with such session-based URIs is that they do not guarantee that the URIs in use are truly universal. The acceptable practice in this situation is to use modifiers, such as "?" to pass arguments to CGI scripts, or ";" to pass other kinds of arguments or context information.](https://image.slidesharecdn.com/cis532restandsemantic03202009-12584337541321-phpapp02/85/Cert-Overview-12-320.jpg)
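
As a rough illustration of the modifier approach, the sketch below keeps the resource path stable and carries the context in the query component instead. The function names `with_context` and `canonical` and the parameter names `weather` and `day` are hypothetical, and the sketch assumes the query string holds only context information.

```python
from typing import Mapping
from urllib.parse import urlencode, urlsplit, urlunsplit


def with_context(uri: str, context: Mapping[str, str]) -> str:
    """Append context as query arguments, leaving the resource path untouched."""
    parts = urlsplit(uri)
    query = urlencode(context)
    if parts.query:
        # Preserve any arguments that were already present.
        query = parts.query + "&" + query
    return urlunsplit(parts._replace(query=query))


def canonical(uri: str) -> str:
    """Recover the shareable URI by dropping the query and fragment.

    Assumption: the query carries only context, never resource identity.
    """
    parts = urlsplit(uri)
    return urlunsplit(parts._replace(query="", fragment=""))


if __name__ == "__main__":
    resource = "http://www.example.com/resource"
    monday = with_context(resource, {"weather": "rainy", "day": "monday"})
    print(monday)
    print(canonical(monday))
```

Under this scheme, Bob's bookmark still identifies the same resource on Tuesday: a server can ignore or reinterpret stale context arguments rather than reject the request, because the path itself never changed.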