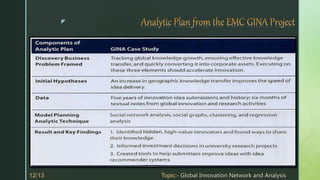

The document describes a case study of how the Global Innovation Network and Analytics (GINA) team at EMC applied data analytics to identify innovators within the company. The GINA team collected structured and unstructured data from various sources. They analyzed the data using descriptive and predictive analytics to understand current innovation activities and identify areas for future investment. Their analyses found high densities of innovators in certain locations and identified boundary spanners and hidden innovators. The project helped EMC cultivate additional intellectual property and forge university partnerships.

![z

The GINA team employed several analytical methods. This included work by

the data scientist using Natural Language Processing (NLP) techniques on

the textual descriptions of the Innovation Roadmap ideas.

Social network analysis using R and Rstudio.

Fig. Social graph [27] visualization of idea submitters and finalists.](https://image.slidesharecdn.com/casestudyonginagobalinnovationnetworkandanalysis-200815180404/85/Case-study-on-gina-gobal-innovation-network-and-analysis-8-320.jpg)