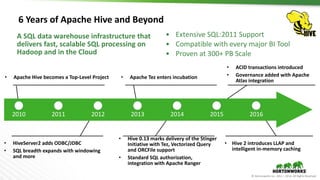

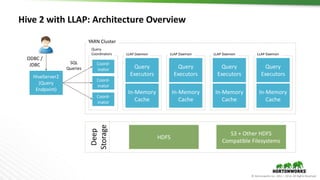

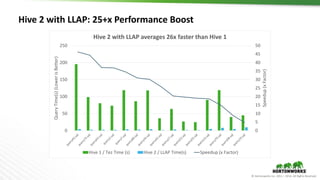

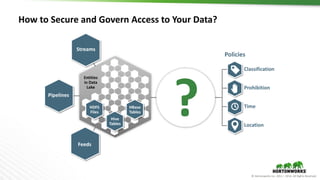

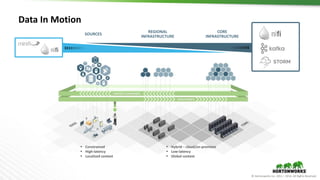

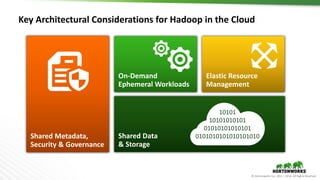

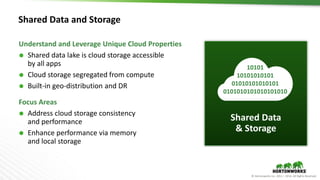

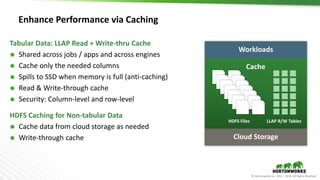

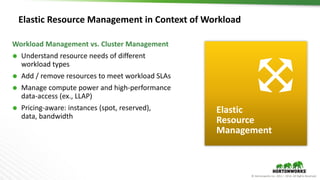

The document outlines the evolution of the Apache Hadoop ecosystem from its inception in 2006 through 2016, highlighting projects such as Apache Hive and the performance and scalability gains from features like LLAP (Live Long and Process). It discusses Hadoop's move into cloud environments, covering architecture, resource management, data governance, and the advantages of cloud storage. It also emphasizes improving performance through caching and managing workloads effectively on cloud infrastructure.