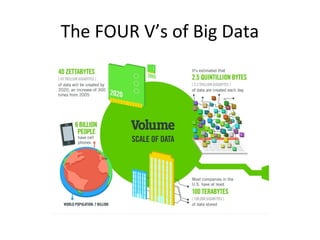

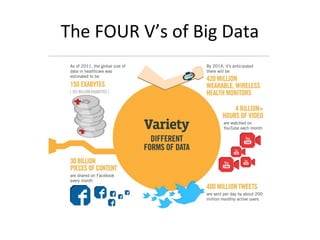

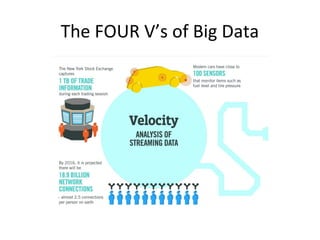

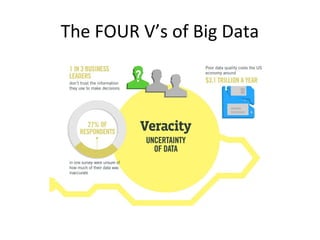

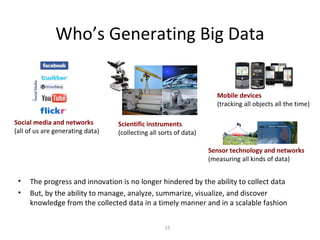

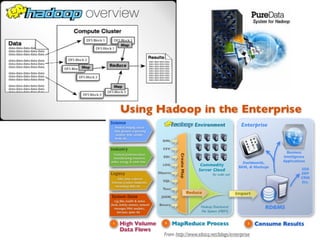

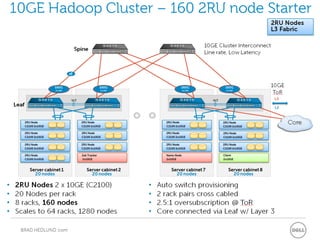

Big data refers to datasets so large and complex that they are difficult to process with traditional database management tools. It has four key characteristics: volume, velocity, variety, and veracity. Big data comes from many sources, including social media, scientific instruments, mobile devices, and sensors. Analyzing it can yield benefits such as lower costs, time savings, new product development, and smarter business decisions. The Hadoop Distributed File System (HDFS) and the Hadoop software platform provide a scalable, cost-effective infrastructure for storing and processing big data across a cluster of commodity servers.
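
To make the idea of processing data across servers in a cluster more concrete, here is a minimal single-process sketch of the map, shuffle, and reduce pattern that Hadoop's MapReduce model is built on. It is an illustration only: the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample chunks are hypothetical, nothing here calls Hadoop or HDFS, and each "chunk" simply stands in for a block of a file that a real cluster would hand to a different server.

```python
from collections import defaultdict
from itertools import chain


def map_phase(chunk):
    """Emit a (word, 1) pair for every word in one chunk of text."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(pairs):
    """Group all emitted values by key, as the framework would between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Combine the values for each key into a single count."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(chunks):
    """Run map over every chunk, then shuffle and reduce the results."""
    mapped = chain.from_iterable(map_phase(chunk) for chunk in chunks)
    return reduce_phase(shuffle(mapped))


# Hypothetical input: each string plays the role of one block of a large file.
chunks = ["big data big cluster", "data cluster data"]
counts = word_count(chunks)
print(counts)
```

Because `map_phase` looks at one chunk at a time and `reduce_phase` handles each key independently, both steps could in principle run on separate machines, which is the property that lets this style of processing scale out over commodity hardware.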