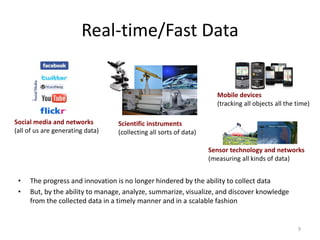

This document introduces big data, defined as datasets so large and complex that they are difficult to process with traditional database tools. The key challenges are capturing, storing, searching, sharing, analyzing, and visualizing large amounts of diverse data from many sources. Big data is characterized by the 3Vs: volume, velocity, and variety. Technologies such as cloud computing provide scalable infrastructure for managing and analyzing it, and Hadoop has emerged as a popular platform for distributed storage and processing of large datasets across clusters of commodity hardware.
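
To make the idea of distributed processing more concrete, here is a minimal single-machine sketch of the map, shuffle, and reduce pattern associated with Hadoop's MapReduce model. It is not Hadoop code and does not use any Hadoop API: the function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) and the sample input are assumptions chosen for illustration. On a real cluster the map and reduce steps would run in parallel on separate nodes, each working on its own portion of the data.

```python
from collections import defaultdict


def map_phase(chunk):
    """Emit a (word, 1) pair for every word in one chunk of text."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(pairs):
    """Group emitted values by key, as a framework would between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Sum the counts collected for each word."""
    return {key: sum(values) for key, values in grouped.items()}


def word_count(chunks):
    """Run the three phases in sequence over a list of text chunks."""
    pairs = []
    for chunk in chunks:  # hypothetical stand-in for per-node map tasks
        pairs.extend(map_phase(chunk))
    return reduce_phase(shuffle(pairs))


if __name__ == "__main__":
    # Illustrative input only; each string plays the role of one data block.
    chunks = ["big data is big", "data is diverse"]
    print(word_count(chunks))
```

Each chunk is mapped independently of the others, which is the property that lets this kind of job be spread across many commodity machines.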