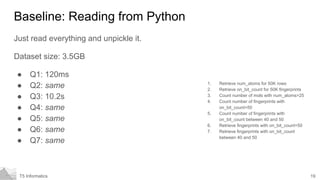

The document discusses different technologies for storing and querying large chemical datasets ("big chemical data"). It evaluates PostgreSQL, SQLite, MessagePack, FlatBuffers, and Pandas on a test dataset of 4 million compounds from ZINC. For queries such as retrieving atom counts for 50k molecules, counting molecules above an atom-count threshold, and looking up fingerprints by on-bit count, SQLite and MessagePack were the fastest, completing in under 50ms. PostgreSQL with indices was also fast, finishing some queries in under 100ms. The document concludes that no single technology is best and that the complexity of the tool should match the task.

![3 T5 Informatics

Defining our terms

Big data is a term for data sets that are so large or complex that traditional data processing applications are inadequate to deal with them. Challenges include analysis, capture, data curation, search, sharing, storage, transfer, visualization, querying, updating and information privacy. The term "big data" often refers simply to the use of predictive analytics, user behavior analytics, or certain other advanced data analytics methods that extract value from data, and seldom to a particular size of data set.[2] "There is little doubt that the quantities of data now available are indeed large, but that’s not the most relevant characteristic of this new data ecosystem."[3]

https://en.wikipedia.org/wiki/Big_data](https://image.slidesharecdn.com/bigchemicaldata-distribute-170519062605/85/Big-chemical-data-No-Problem-3-320.jpg)

![22

Tech 2: FlatBuffers

Cross-platform serialization library

Binary format, simple to read and write from multiple languages

Flexible hierarchical schema

http://google.github.io/flatbuffers/index.html

namespace storage_formats;

table Fingerprint {
  on_bit_count:ushort;
  bytes:[ubyte];
}

table Molecule {
  smiles:string;
  name:string;
  pkl:[ubyte];
  num_atoms:ushort;
  num_heavy_atoms:ushort;
  pattern_fp:Fingerprint;
  morgan2_fp:Fingerprint;
  num_rotatable_bonds:ushort;
  num_rings:ushort;
  tpsa:double;
  mollogp:double;
  molwt:double;
}

root_type Molecule;](https://image.slidesharecdn.com/bigchemicaldata-distribute-170519062605/85/Big-chemical-data-No-Problem-22-320.jpg)
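The `Fingerprint` table keeps `on_bit_count` next to the raw bytes, presumably so that count-based filters can run without unpacking each fingerprint. The following is a minimal sketch of that idea in plain Python. It does not use the FlatBuffers API or generated code; the `Fingerprint` dataclass and the `make_fingerprint` helper are hypothetical names that only mirror the schema's fields.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fingerprint:
    """Plain-Python mirror of the schema's Fingerprint table (hypothetical)."""
    on_bit_count: int  # `ushort` in the schema, so at most 65535
    data: bytes        # corresponds to the schema's `bytes:[ubyte]` field


def make_fingerprint(data: bytes) -> Fingerprint:
    """Count the set bits once, at write time, and store the count with the bytes."""
    on_bits = sum(bin(byte).count("1") for byte in data)
    if on_bits > 0xFFFF:
        raise ValueError("on_bit_count does not fit in a ushort")
    return Fingerprint(on_bit_count=on_bits, data=data)


# Made-up 4-byte fingerprint: 3 + 1 + 0 + 8 set bits.
fp = make_fingerprint(bytes([0b10110000, 0b00000001, 0x00, 0xFF]))
print(fp.on_bit_count)  # 12
```

Storing the count at write time trades a few bytes per record for cheaper filtering at read time, which is the access pattern most of the benchmark queries exercise.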

![29

Tech 6: bcolz

Columnar data format for Python

Compressed on disk and/or in memory

Provides a similar API to numpy

Pretty good querying primitives

Requires a schema

https://github.com/Blosc/bcolz

dtype=[('zincid','S16'),
       ('smiles','S256'),
       ('pkl','S512'),
       ('pfp_obc','u4'),
       ('pfp_pkl','S256'),
       ('mfp2_obc','u4'),
       ('mfp2_pkl','S256'),
       ('num_atoms','u4'),
       ('num_heavy_atoms','u4'),
       ('num_rotatable_bonds','u4'),
       ('num_rings','u4'),
       ('tpsa','f8'),
       ('mollogp','f8'),
       ('molwt','f8')]](https://image.slidesharecdn.com/bigchemicaldata-distribute-170519062605/85/Big-chemical-data-No-Problem-29-320.jpg)
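Because the schema is a fixed-width dtype, it has two practical consequences that are easy to check with NumPy alone: every row occupies the same number of bytes before compression, and byte strings longer than the declared width are silently truncated. The sketch below uses plain NumPy as a stand-in, not bcolz itself; the `MOL_DTYPE` name and the sample identifier are invented for illustration.

```python
import numpy as np

# The dtype from the slide, written as a NumPy structured dtype.
MOL_DTYPE = np.dtype([
    ('zincid', 'S16'), ('smiles', 'S256'), ('pkl', 'S512'),
    ('pfp_obc', 'u4'), ('pfp_pkl', 'S256'),
    ('mfp2_obc', 'u4'), ('mfp2_pkl', 'S256'),
    ('num_atoms', 'u4'), ('num_heavy_atoms', 'u4'),
    ('num_rotatable_bonds', 'u4'), ('num_rings', 'u4'),
    ('tpsa', 'f8'), ('mollogp', 'f8'), ('molwt', 'f8'),
])

# Uncompressed width of one row: the sum of all field widths.
print(MOL_DTYPE.itemsize)  # 1344

# Fixed-width byte fields truncate without warning.
rows = np.zeros(1, dtype=MOL_DTYPE)
long_id = b'ZINC-HYPOTHETICAL-0001'  # made-up identifier, 22 bytes
rows['zincid'][0] = long_id
print(rows['zincid'][0])  # only the first 16 bytes survive
```

The field widths therefore need to be chosen with the longest expected SMILES string and pickle in mind, since an undersized field loses data rather than raising an error.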

![30

bcolz performance

Dataset size: 2.0GB

● Q1: 8ms
● Q2: 8ms
● Q3: 295ms
● Q4: 83ms
● Q5: 587ms
● Q6: 1.1s
● Q7: 2.2s

1. Retrieve num_atoms for 50K rows
2. Retrieve on_bit_count for 50K fingerprints
3. Count number of mols with num_atoms>25
4. Count number of fingerprints with on_bit_count=50
5. Count number of fingerprints with on_bit_count between 40 and 50
6. Retrieve fingerprints with on_bit_count=50
7. Retrieve fingerprints with on_bit_count between 40 and 50

Q4: len([x for x in tbl.where("mfp2_obc==50", outcols="mfp2_obc")])

Q7: [x for x in tbl.where("(mfp2_obc>40) & (mfp2_obc<50)", outcols="mfp2_pkl")]](https://image.slidesharecdn.com/bigchemicaldata-distribute-170519062605/85/Big-chemical-data-No-Problem-30-320.jpg)
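The two `tbl.where` expressions on this slide can be read as ordinary boolean filters over one column. Here is a rough sketch of the same logic with a NumPy structured array standing in for the bcolz table; the five-row table and its values are invented, and nothing here reproduces bcolz's compression or chunked evaluation. Note that the range filter uses strict bounds, so 40 and 50 themselves are excluded.

```python
import numpy as np

# Invented stand-in for the bcolz table: only the two columns the queries touch.
tbl = np.array(
    [(40, b'fp-a'), (45, b'fp-b'), (50, b'fp-c'), (50, b'fp-d'), (62, b'fp-e')],
    dtype=[('mfp2_obc', 'u4'), ('mfp2_pkl', 'S256')],
)

# Count fingerprints whose on-bit count is exactly 50.
count_eq_50 = int(np.count_nonzero(tbl['mfp2_obc'] == 50))

# Retrieve fingerprints with 40 < on-bit count < 50 (strict on both sides).
in_range = (tbl['mfp2_obc'] > 40) & (tbl['mfp2_obc'] < 50)
fps_in_range = tbl['mfp2_pkl'][in_range].tolist()

print(count_eq_50)   # 2
print(fps_in_range)  # [b'fp-b']
```

The count only has to scan the small integer column, while the retrieval also has to materialize the wide `mfp2_pkl` values, which fits the pattern of the retrieval queries being the slowest in the timings above.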

![32

dask performance

Dataset size: 2.0GB (uses bcolz data)

● Q1: N/A
● Q2: N/A
● Q3: 74ms
● Q4: 116ms
● Q5: 131ms
● Q6: 5.8s
● Q7: 7.8s

1. Retrieve num_atoms for 50K rows
2. Retrieve on_bit_count for 50K fingerprints
3. Count number of mols with num_atoms>25
4. Count number of fingerprints with on_bit_count=50
5. Count number of fingerprints with on_bit_count between 40 and 50
6. Retrieve fingerprints with on_bit_count=50
7. Retrieve fingerprints with on_bit_count between 40 and 50

Q4: len(df.zincid[df.mfp2_obc==50])

Q7: df.mfp2_pkl[df.mfp2_obc.between(40,50,inclusive=False)]](https://image.slidesharecdn.com/bigchemicaldata-distribute-170519062605/85/Big-chemical-data-No-Problem-32-320.jpg)
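The dask expressions on this slide follow the pandas DataFrame API, so the filtering logic can be tried on a small in-memory pandas frame. The sketch below uses pandas rather than dask, with invented rows. One assumption to flag: pandas 1.3 and later expect a string for the `inclusive` argument of `Series.between` (`"neither"` for strict bounds), whereas the boolean form shown on the slide belongs to older pandas releases.

```python
import pandas as pd

# Invented rows; only the columns used by the two expressions are included.
df = pd.DataFrame({
    'zincid': ['mol-a', 'mol-b', 'mol-c', 'mol-d', 'mol-e'],
    'mfp2_obc': [40, 45, 50, 50, 62],
    'mfp2_pkl': [b'fp-a', b'fp-b', b'fp-c', b'fp-d', b'fp-e'],
})

# Count rows whose on-bit count is exactly 50.
count_eq_50 = len(df.zincid[df.mfp2_obc == 50])

# Retrieve fingerprints with 40 < on-bit count < 50 (both bounds excluded).
fps = df.mfp2_pkl[df.mfp2_obc.between(40, 50, inclusive="neither")]

print(count_eq_50)   # 2
print(fps.tolist())  # [b'fp-b']
```

With a dask DataFrame the second expression only builds a lazy result, and calling `.compute()` on it is what actually materializes the fingerprints.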