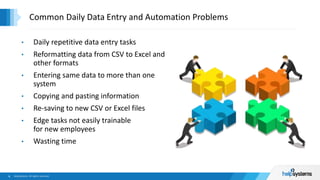

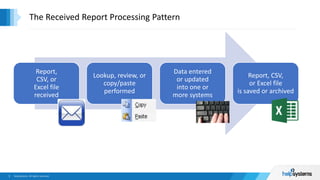

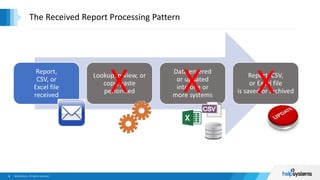

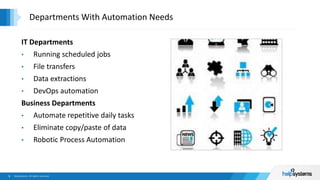

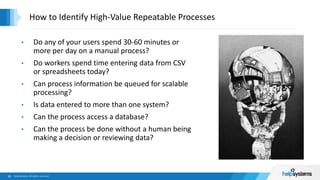

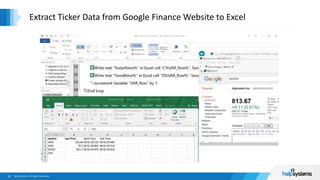

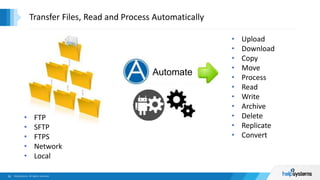

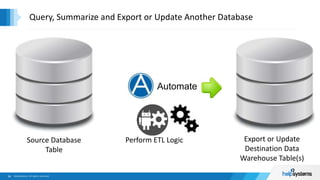

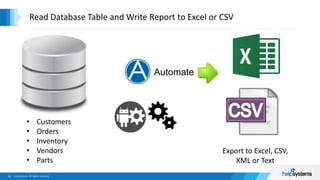

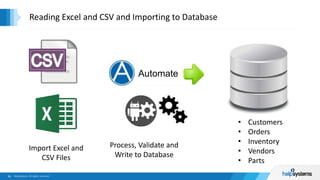

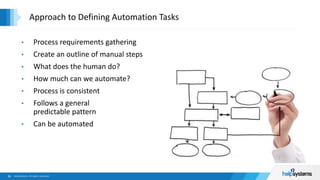

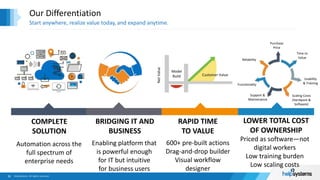

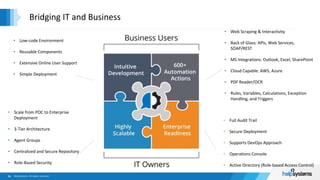

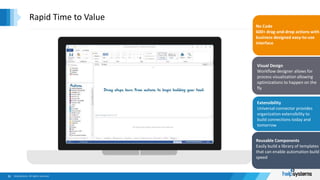

The document outlines a webinar series hosted by HelpSystems on automating data scraping and extraction. It covers painful data entry processes and automation use cases, then introduces HelpSystems' automation capabilities with a live demo. The agenda also includes discussions of automation across departments, with tools and techniques for improving efficiency and reducing manual data handling.