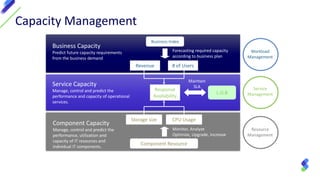

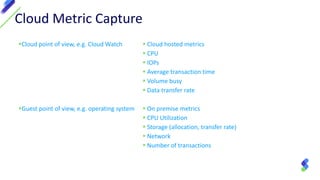

This document discusses cloud capacity management. It begins with an overview of Athene's 360-degree capacity management capabilities and explains why capacity management is needed: to optimize costs, understand system status, and maintain service level agreements (SLAs). It then defines cloud computing and covers the factors involved in cloud capacity planning, including metrics, hybrid cloud models, and example reports. Finally, it outlines Athene's key features for comprehensive capacity management across on-premises and cloud environments.