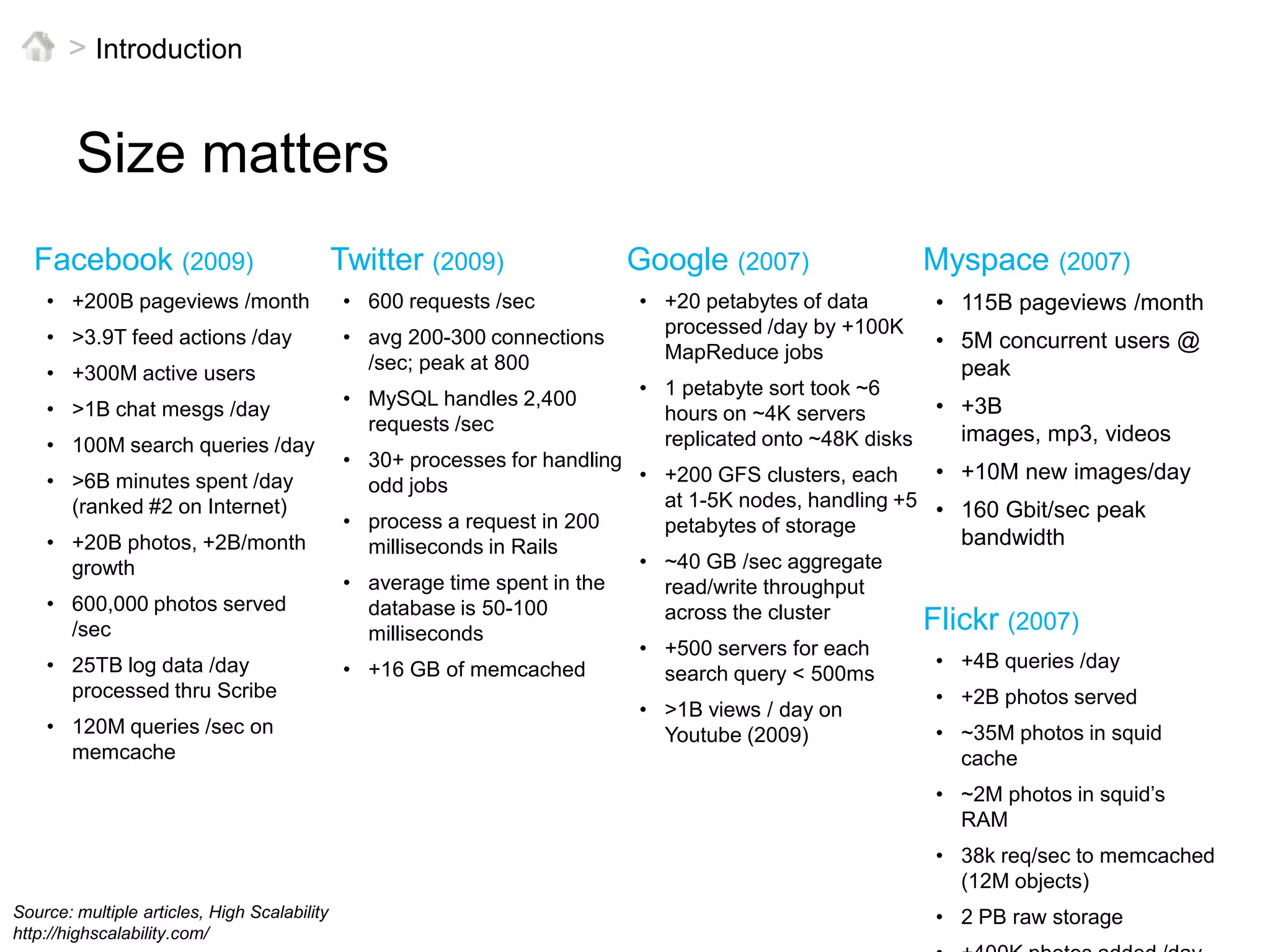

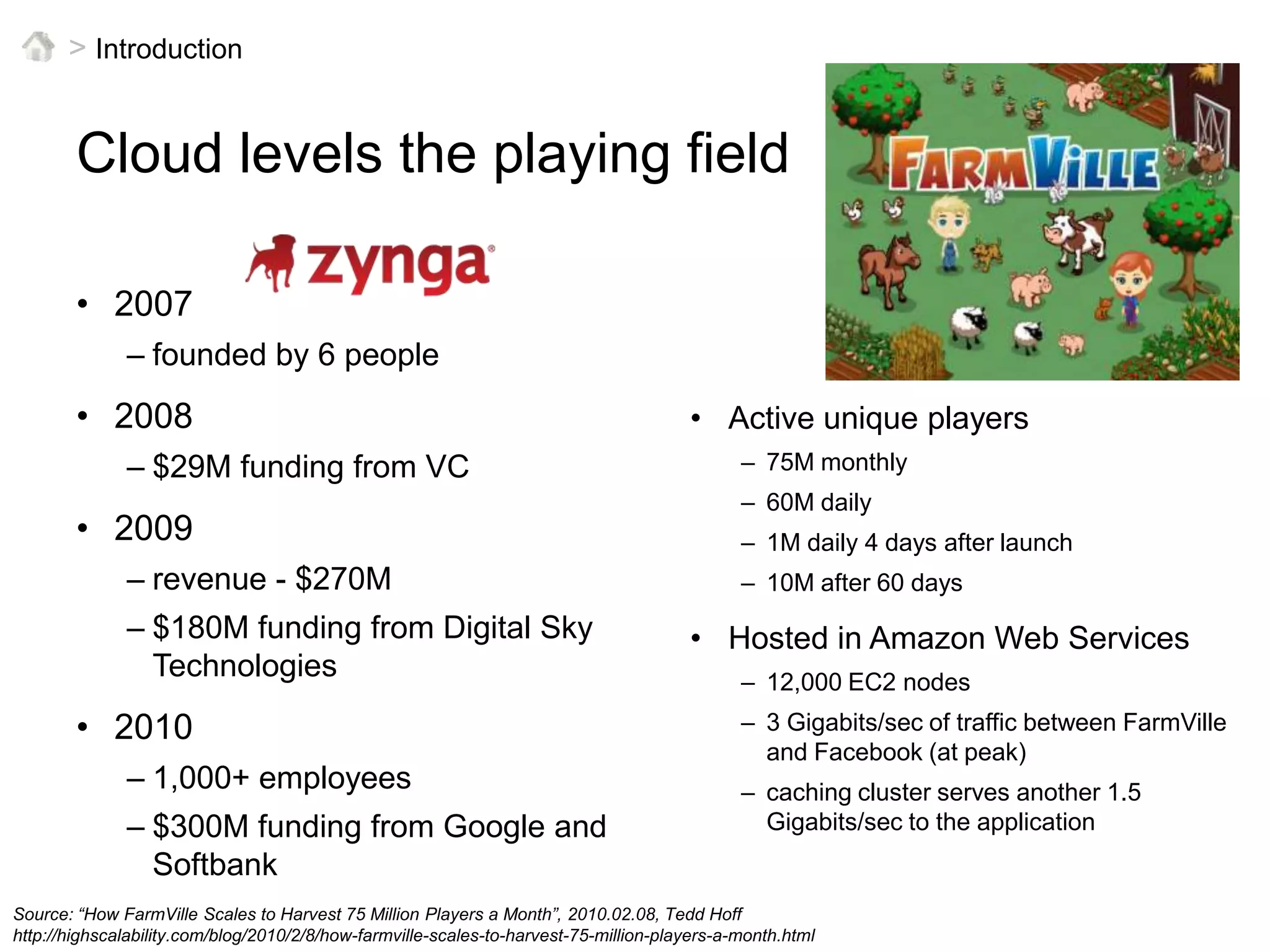

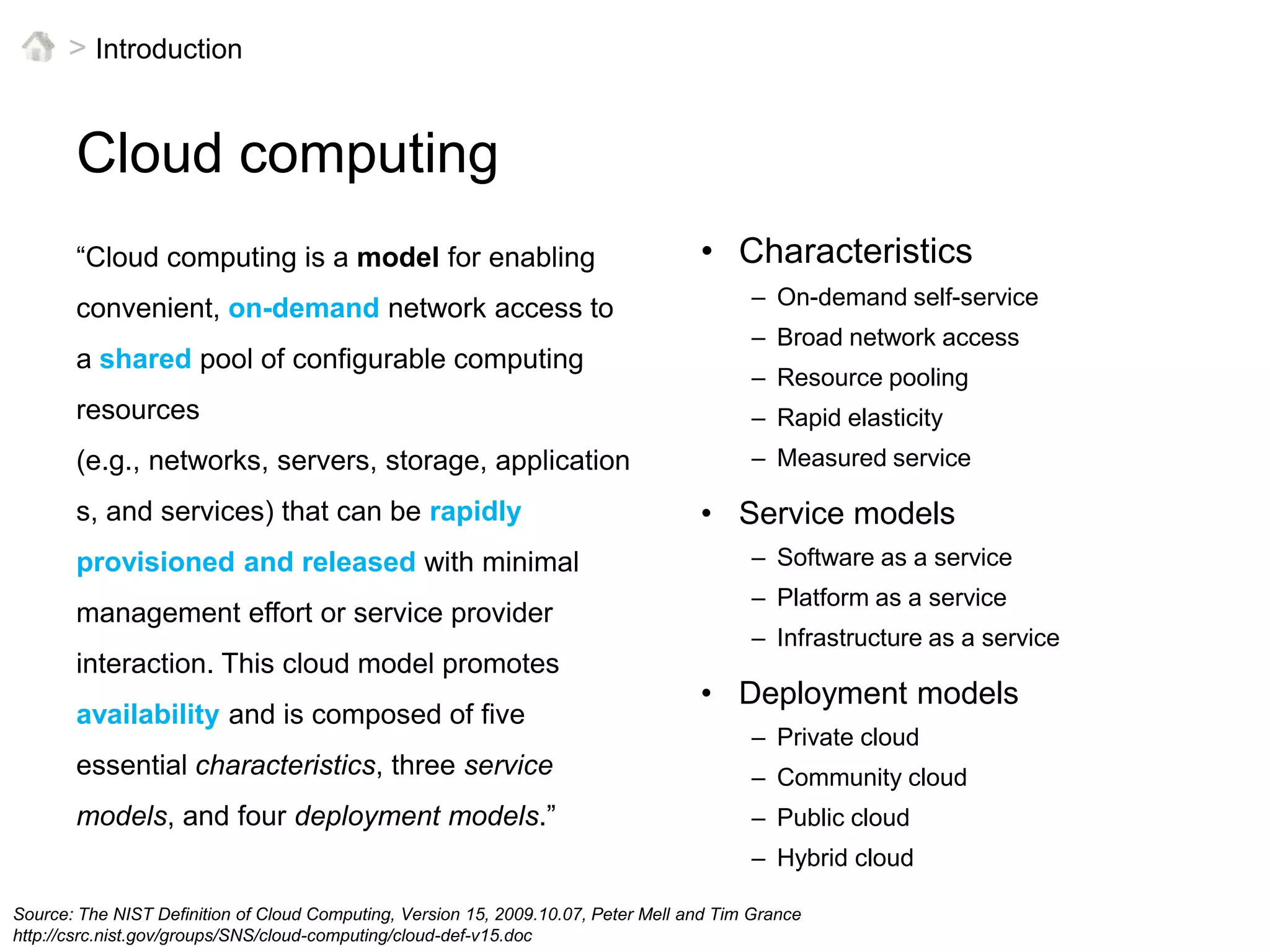

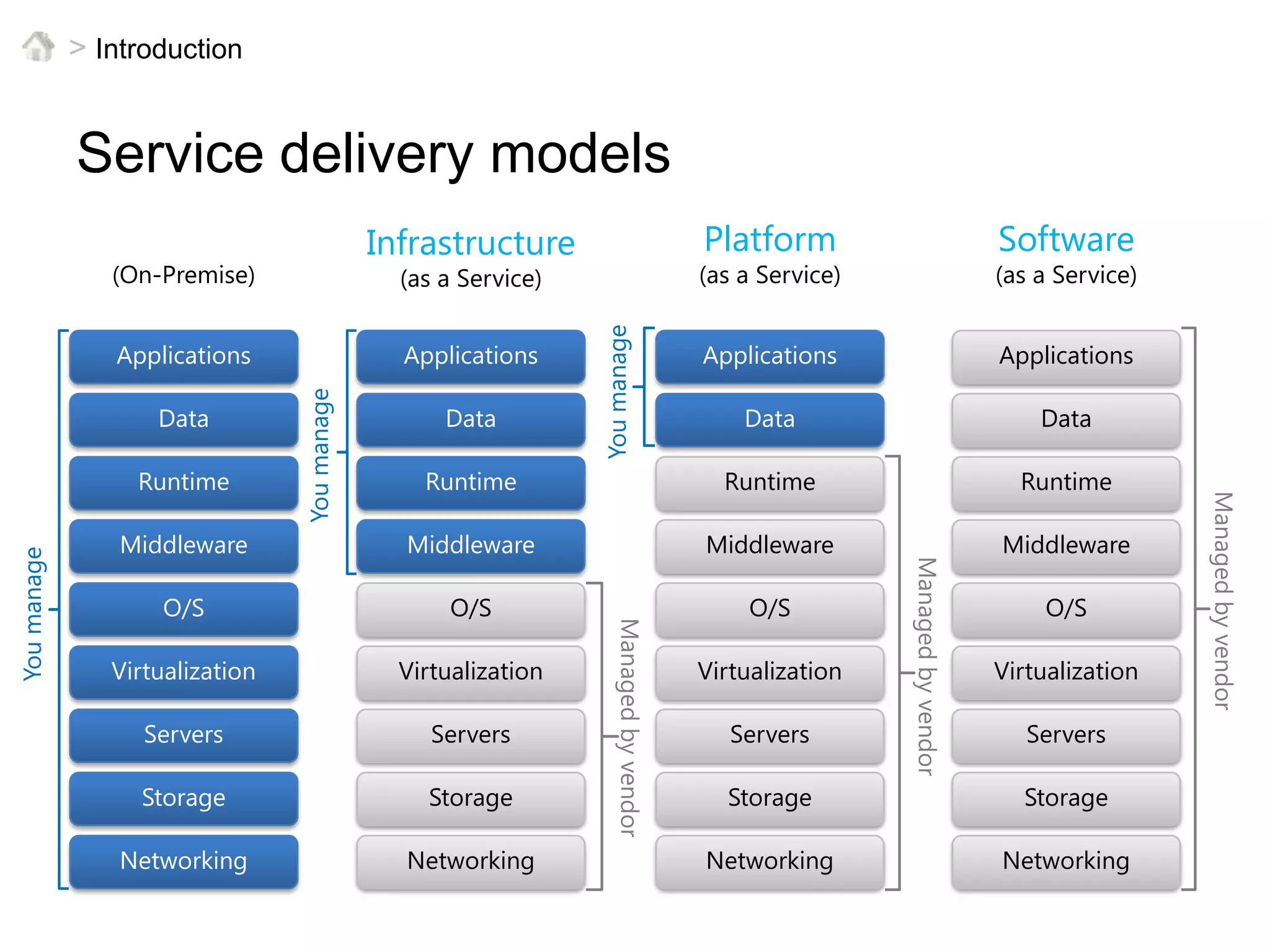

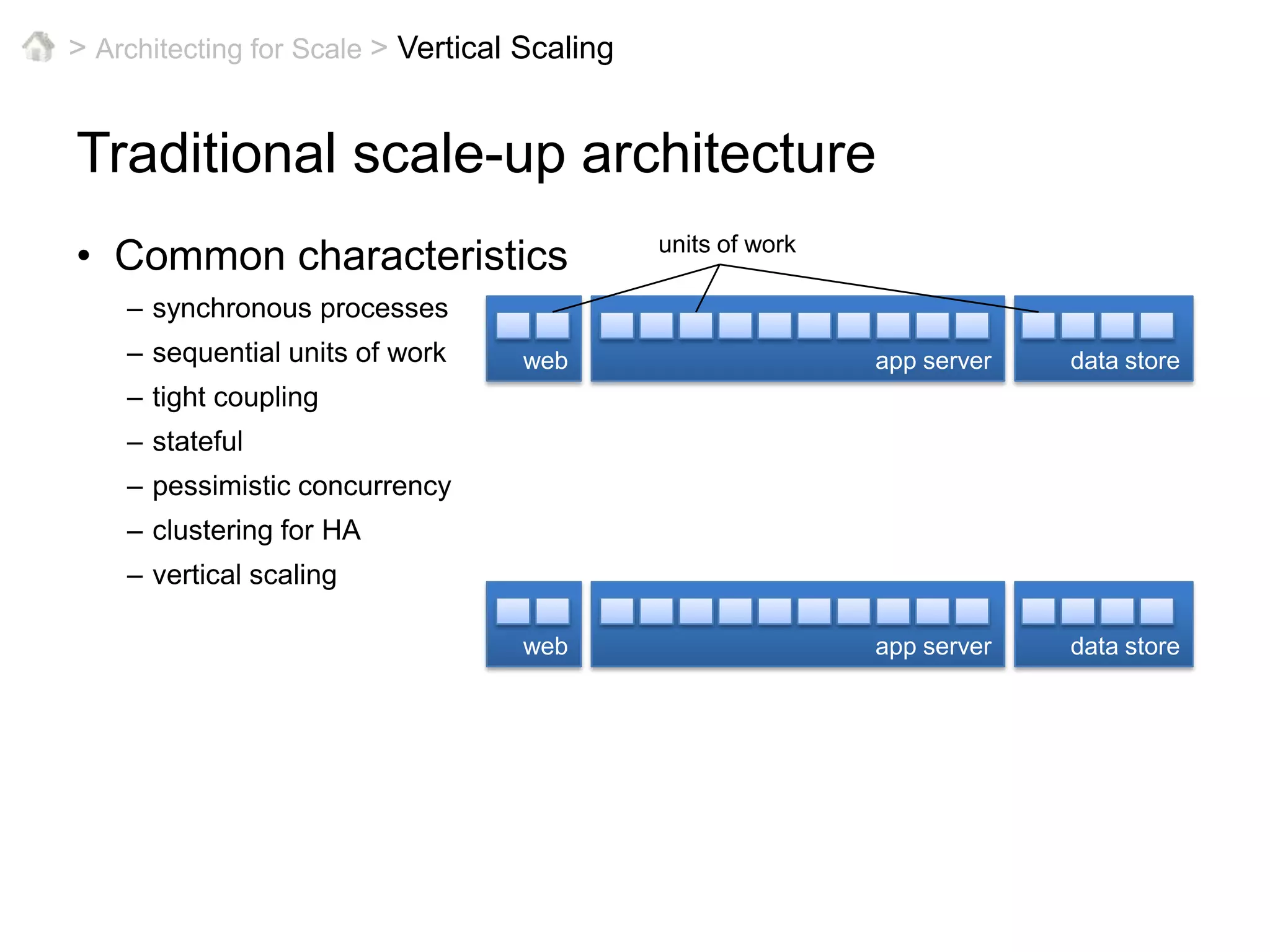

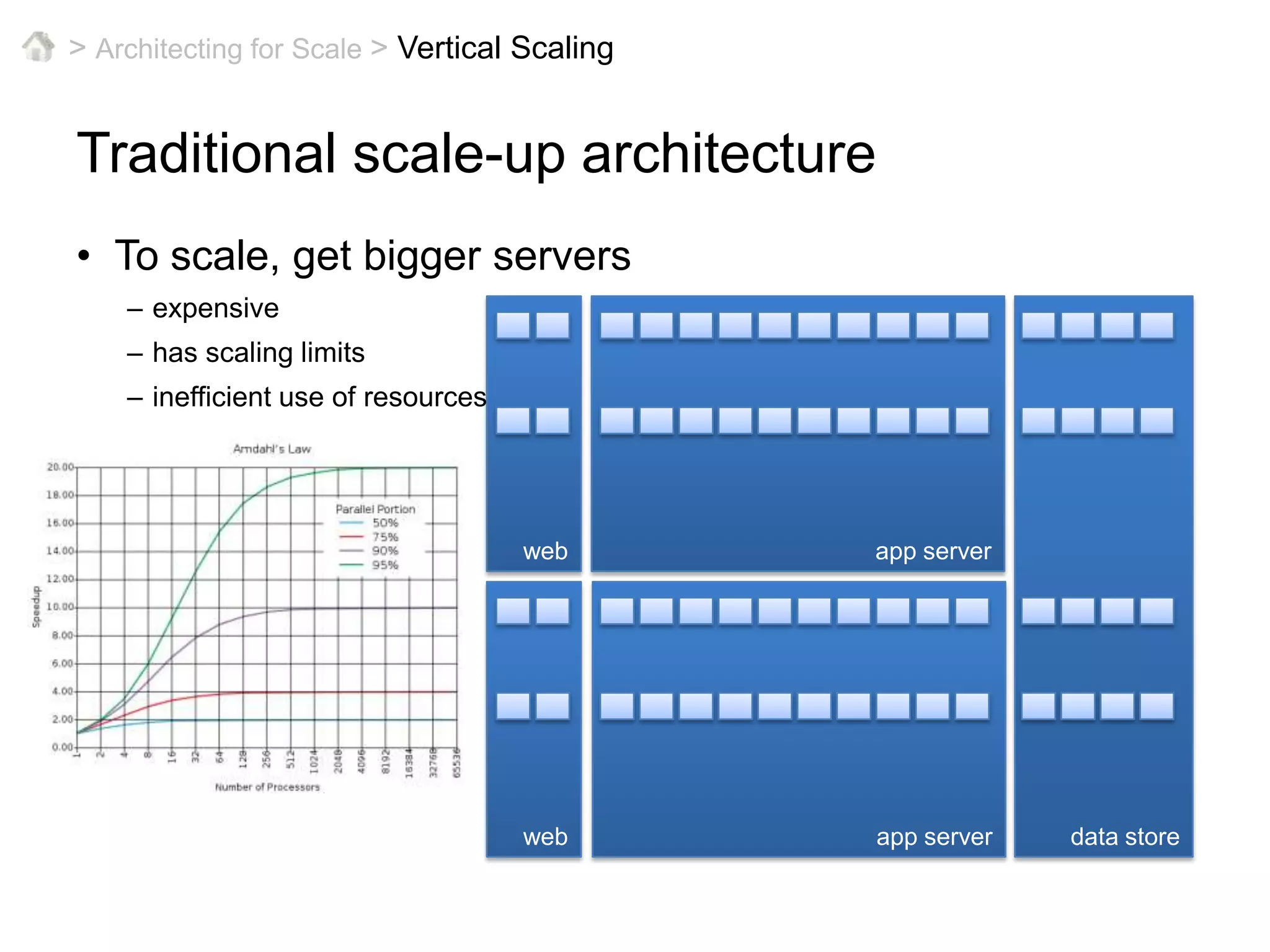

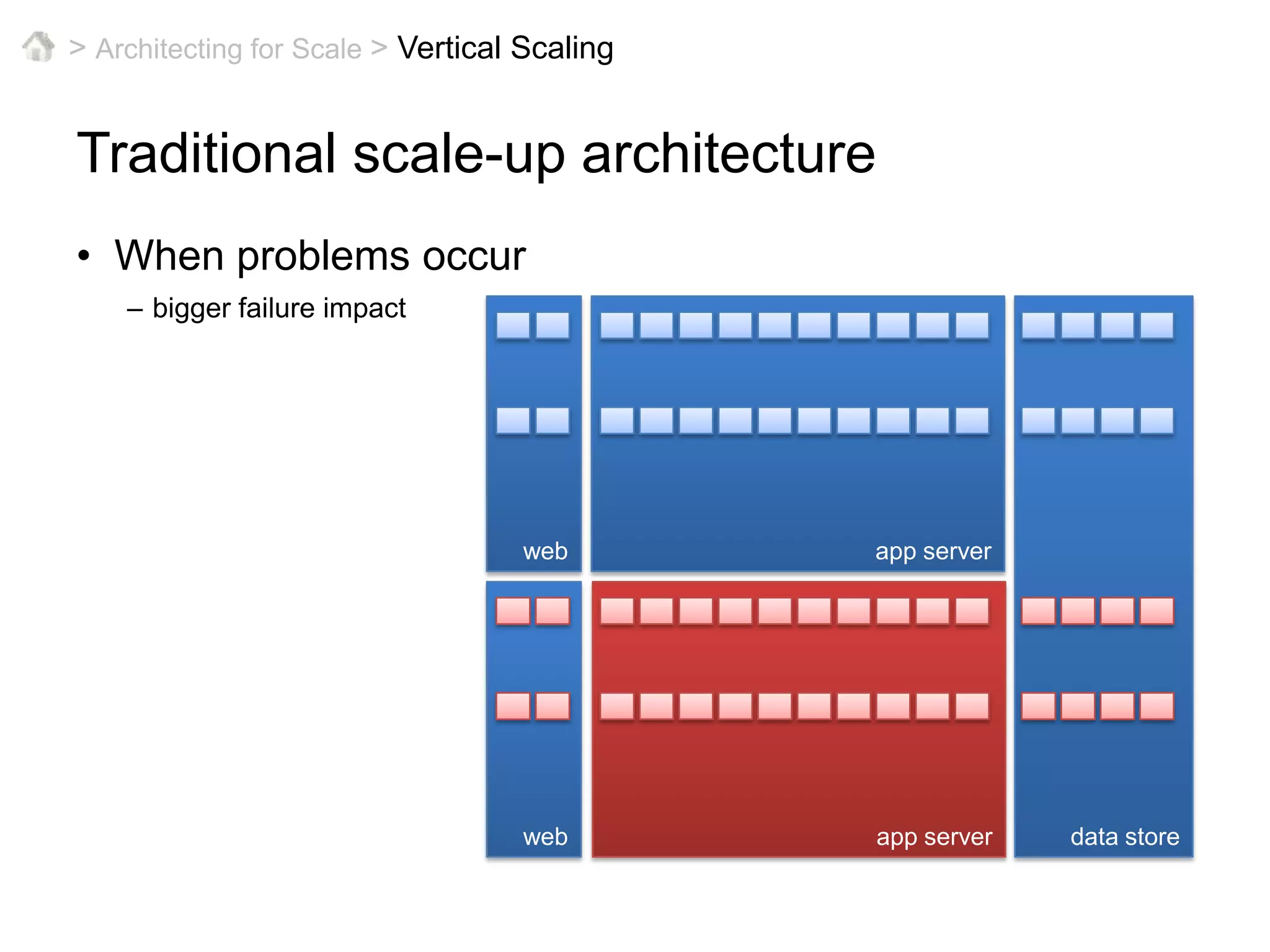

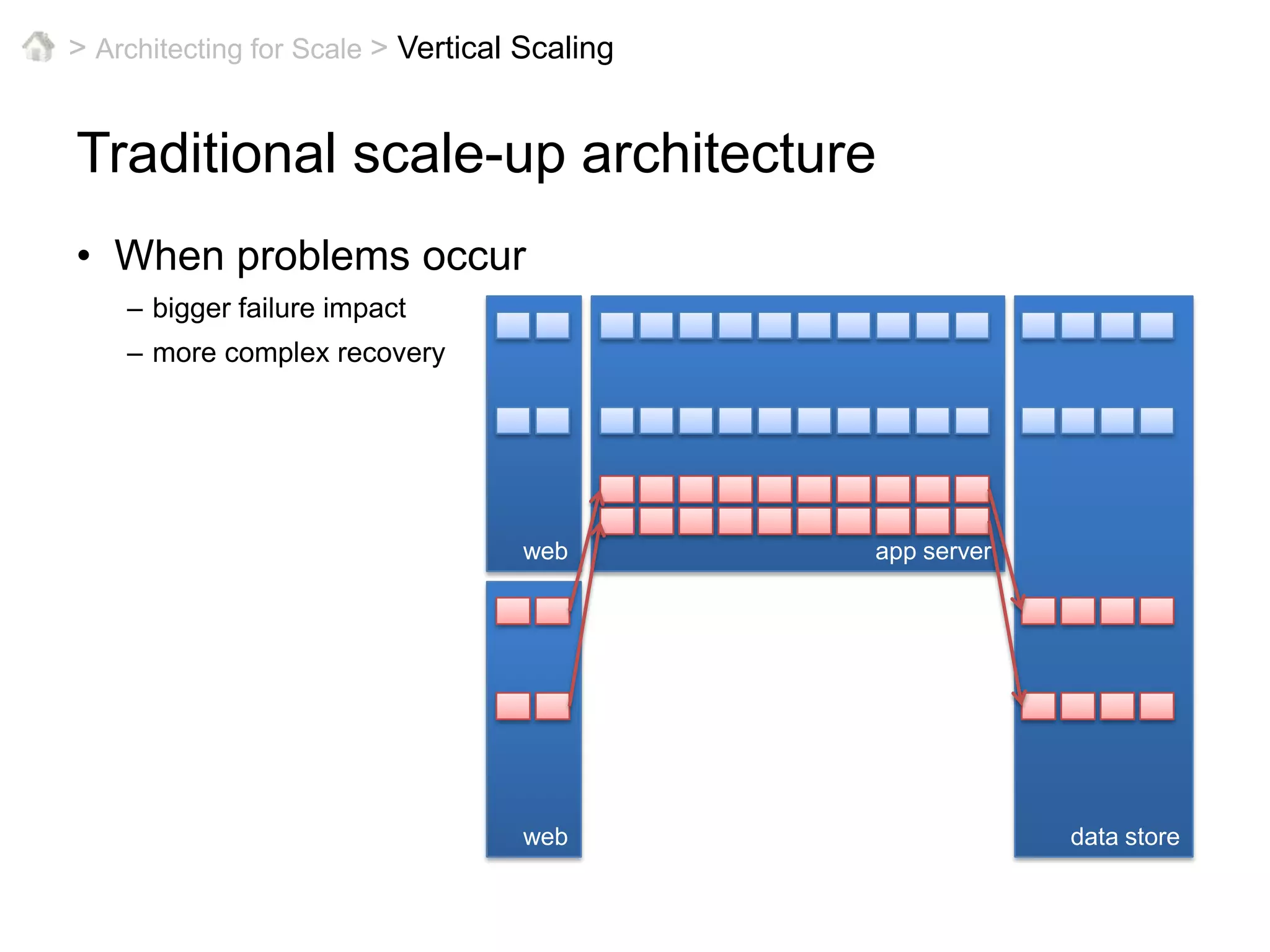

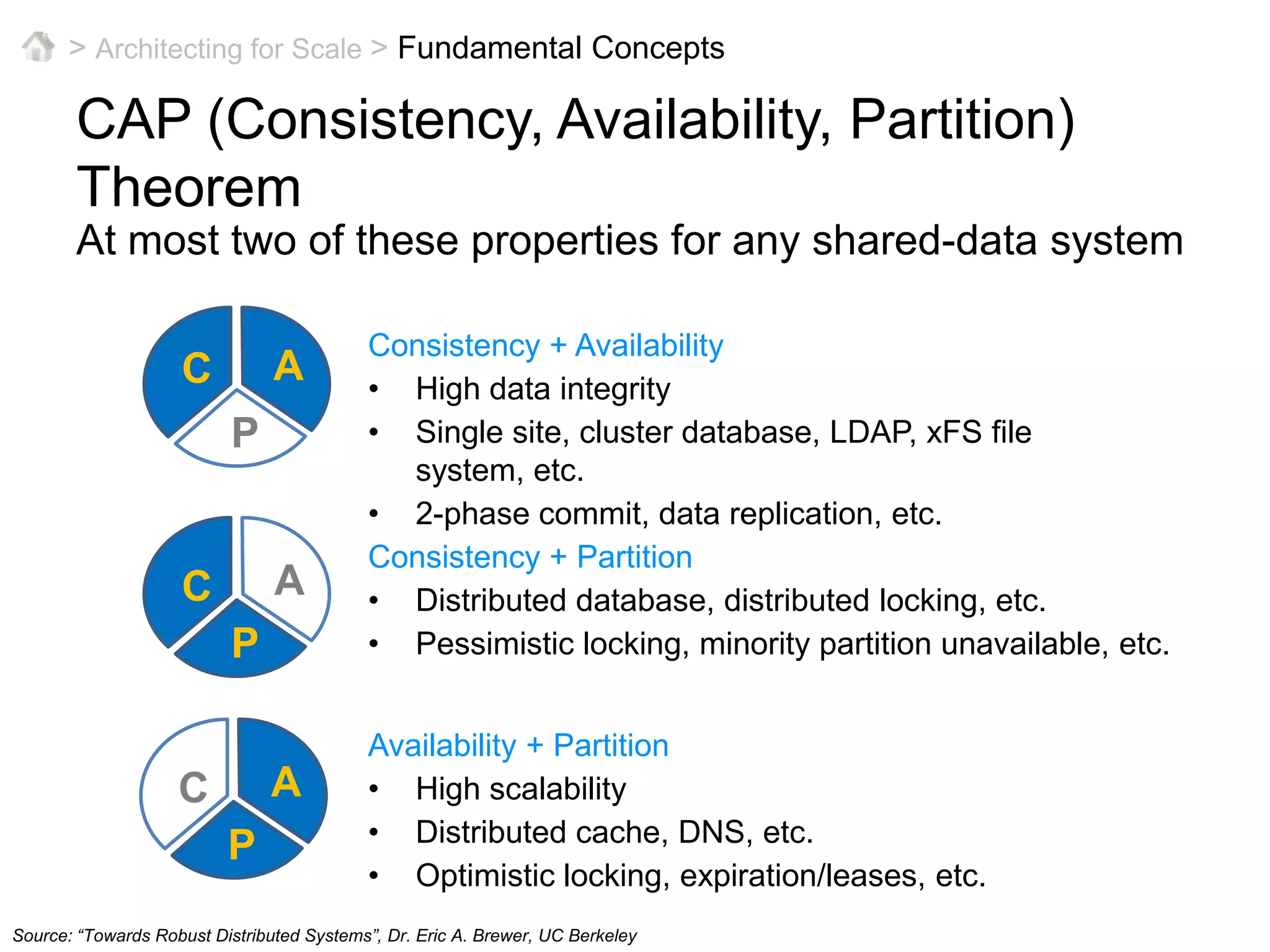

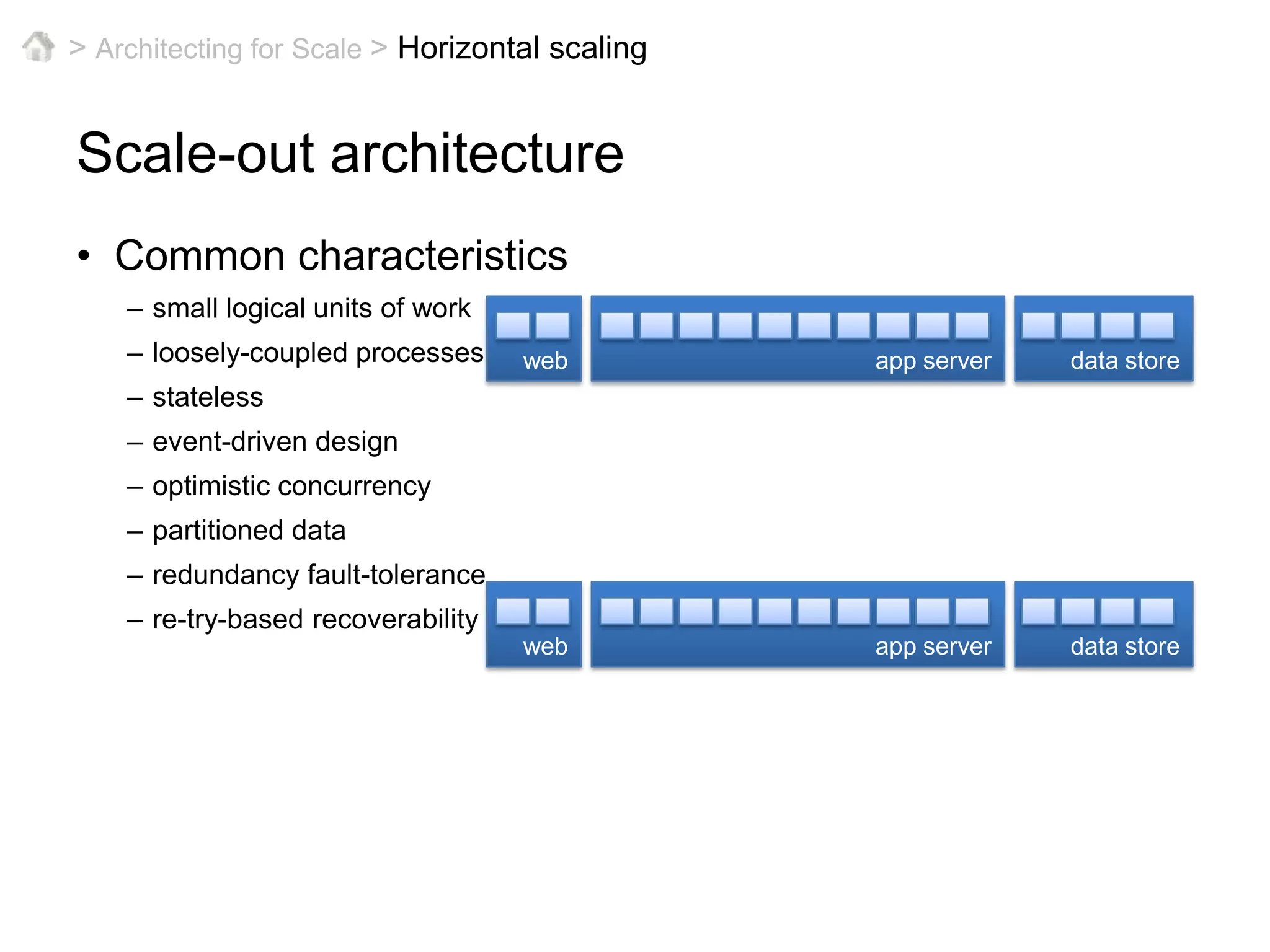

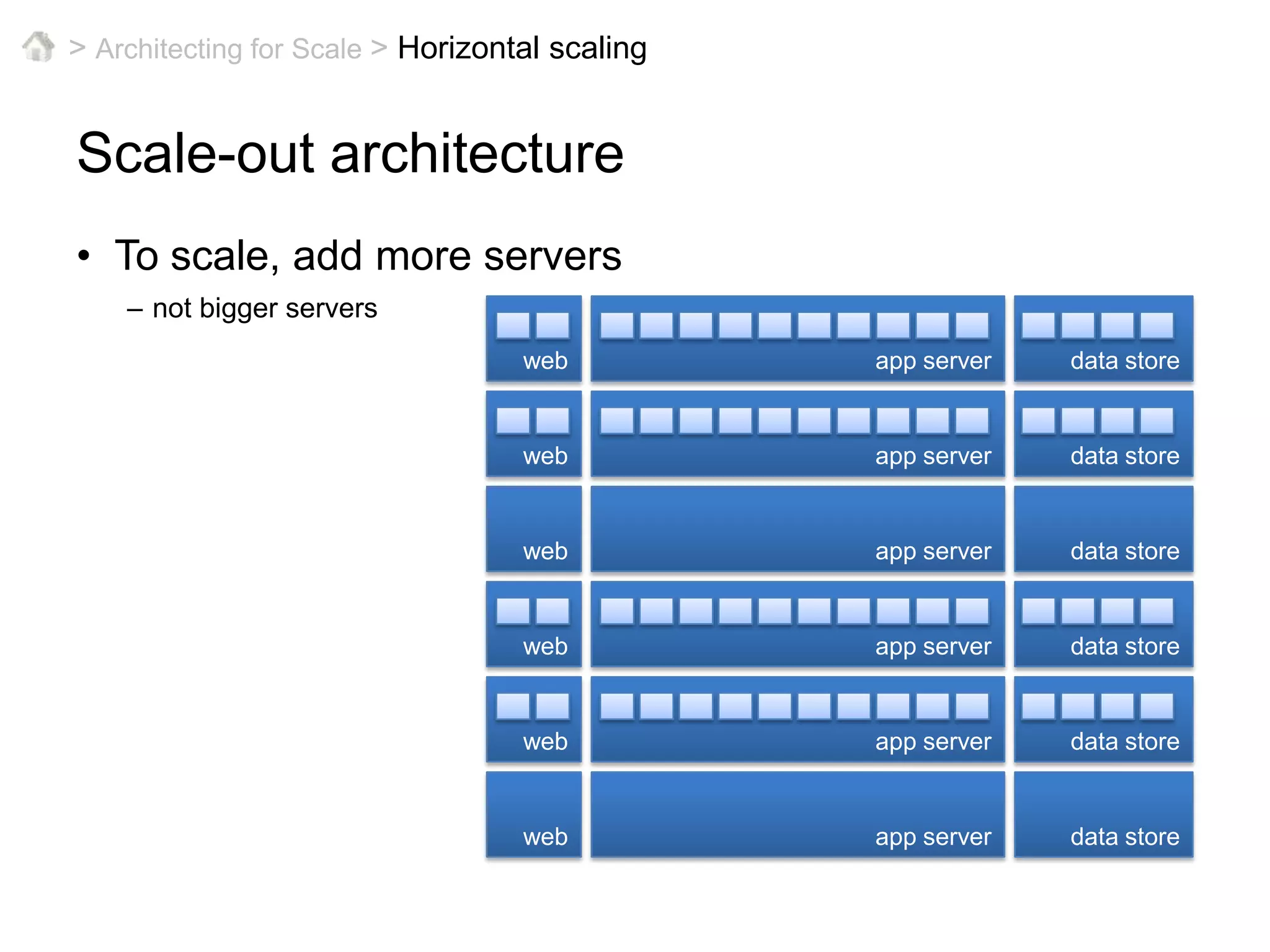

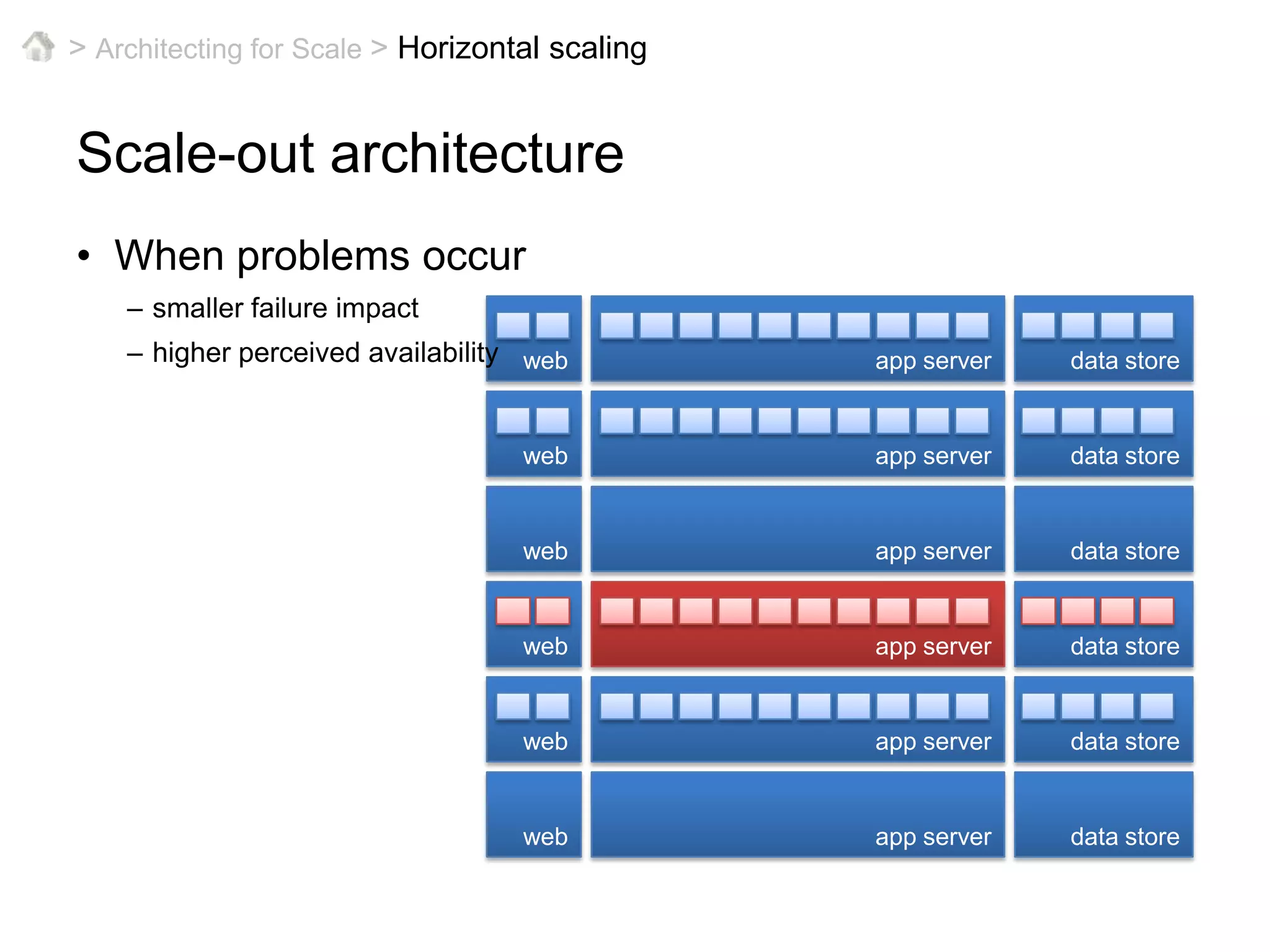

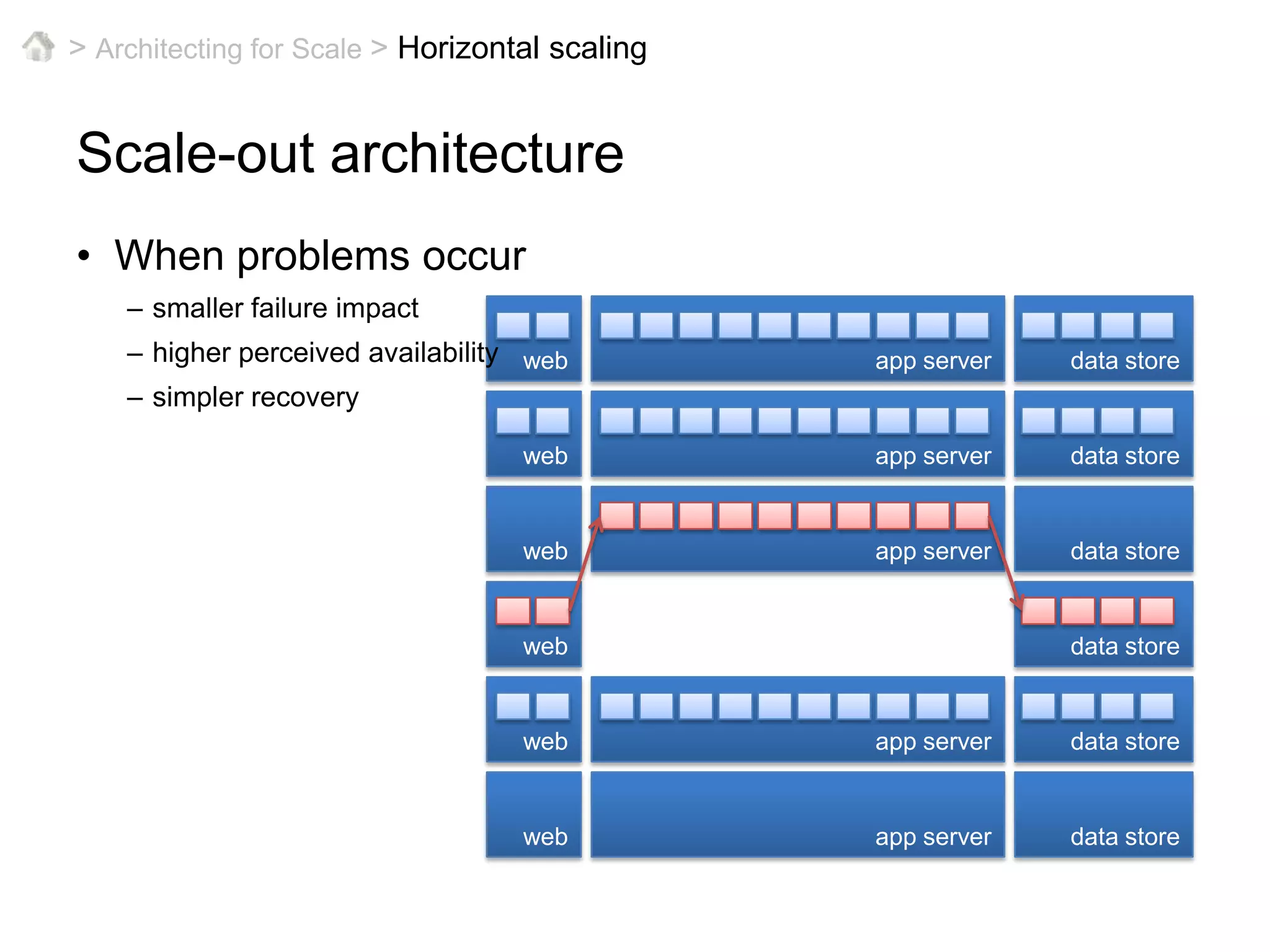

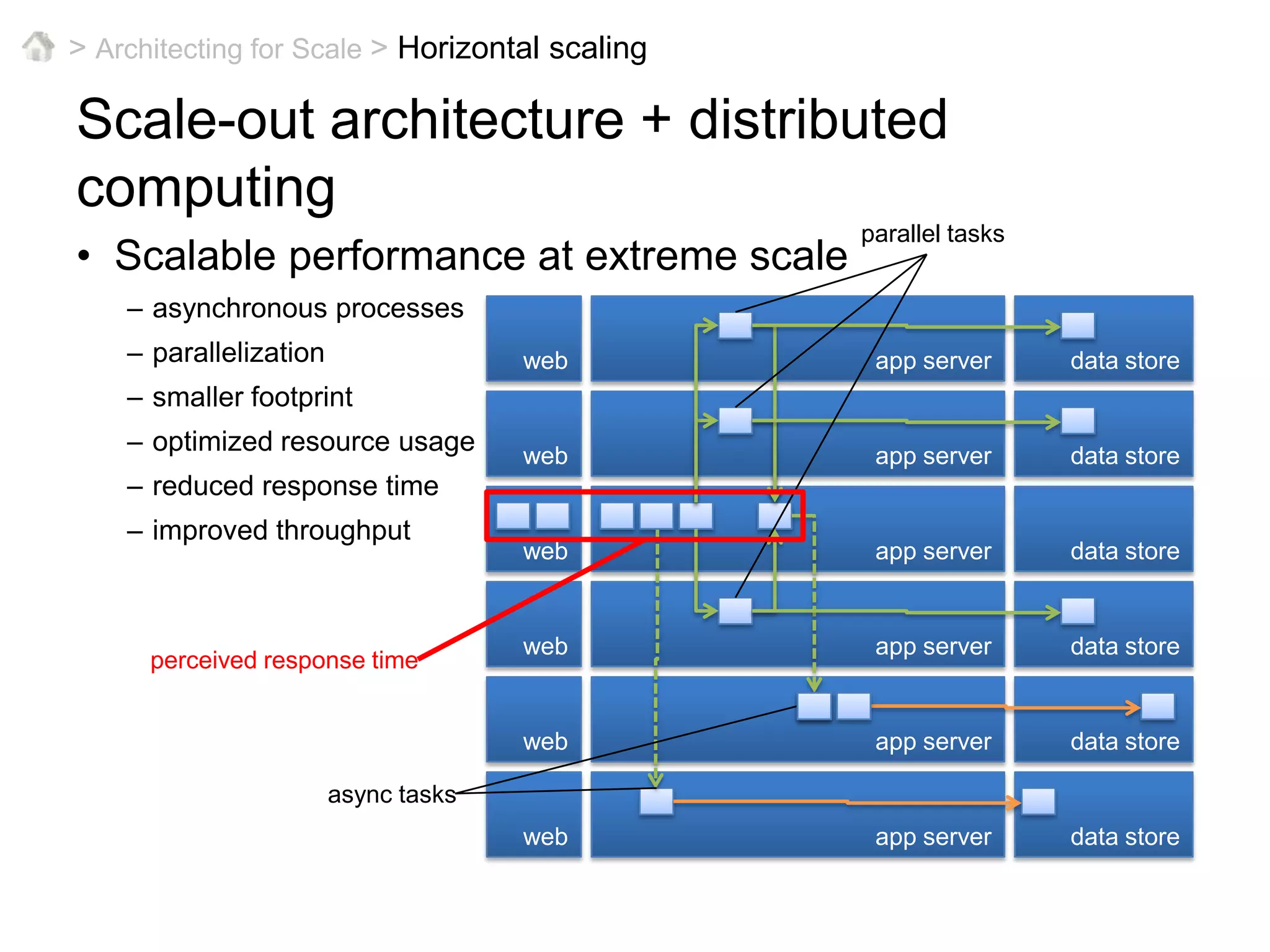

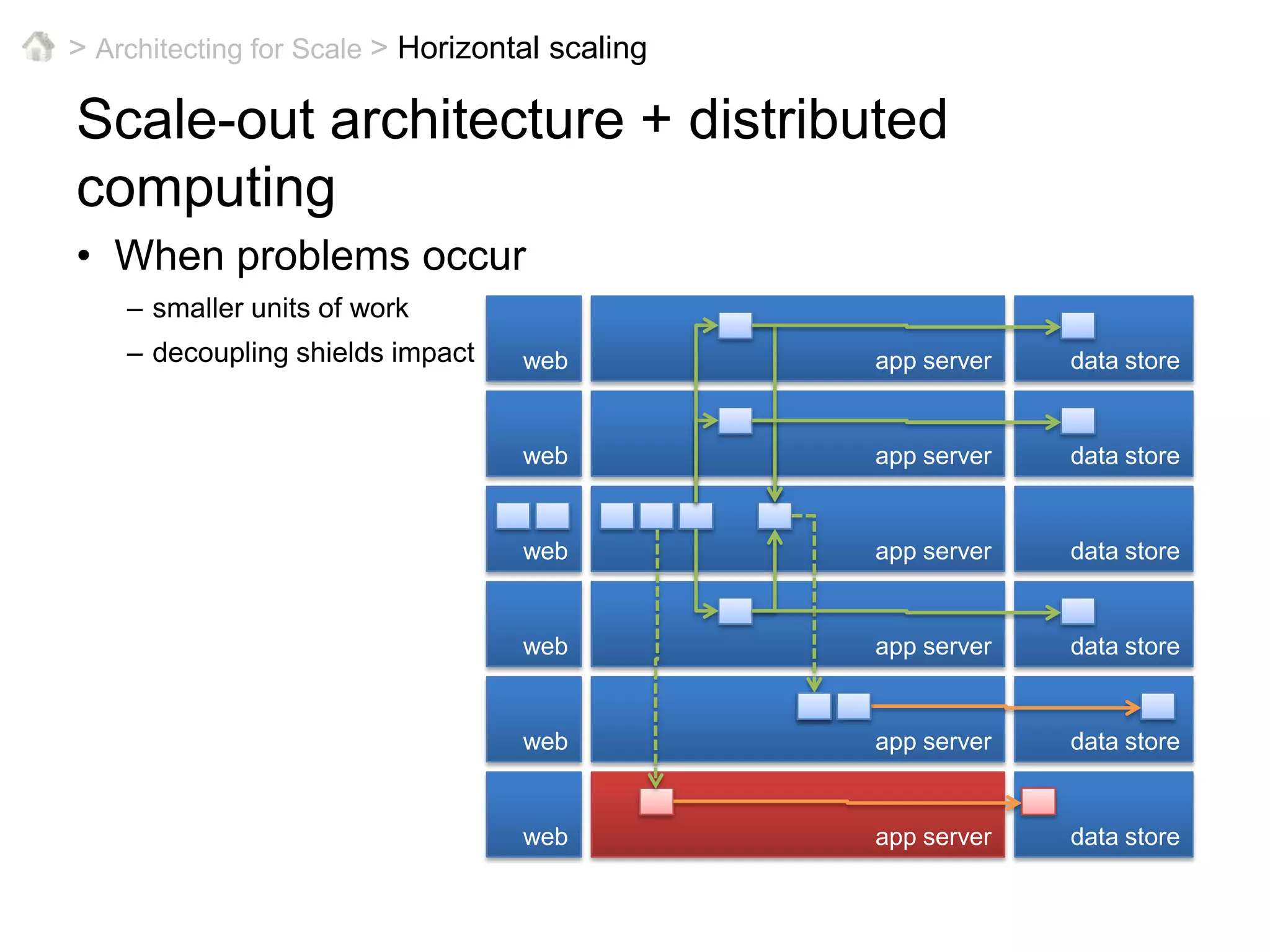

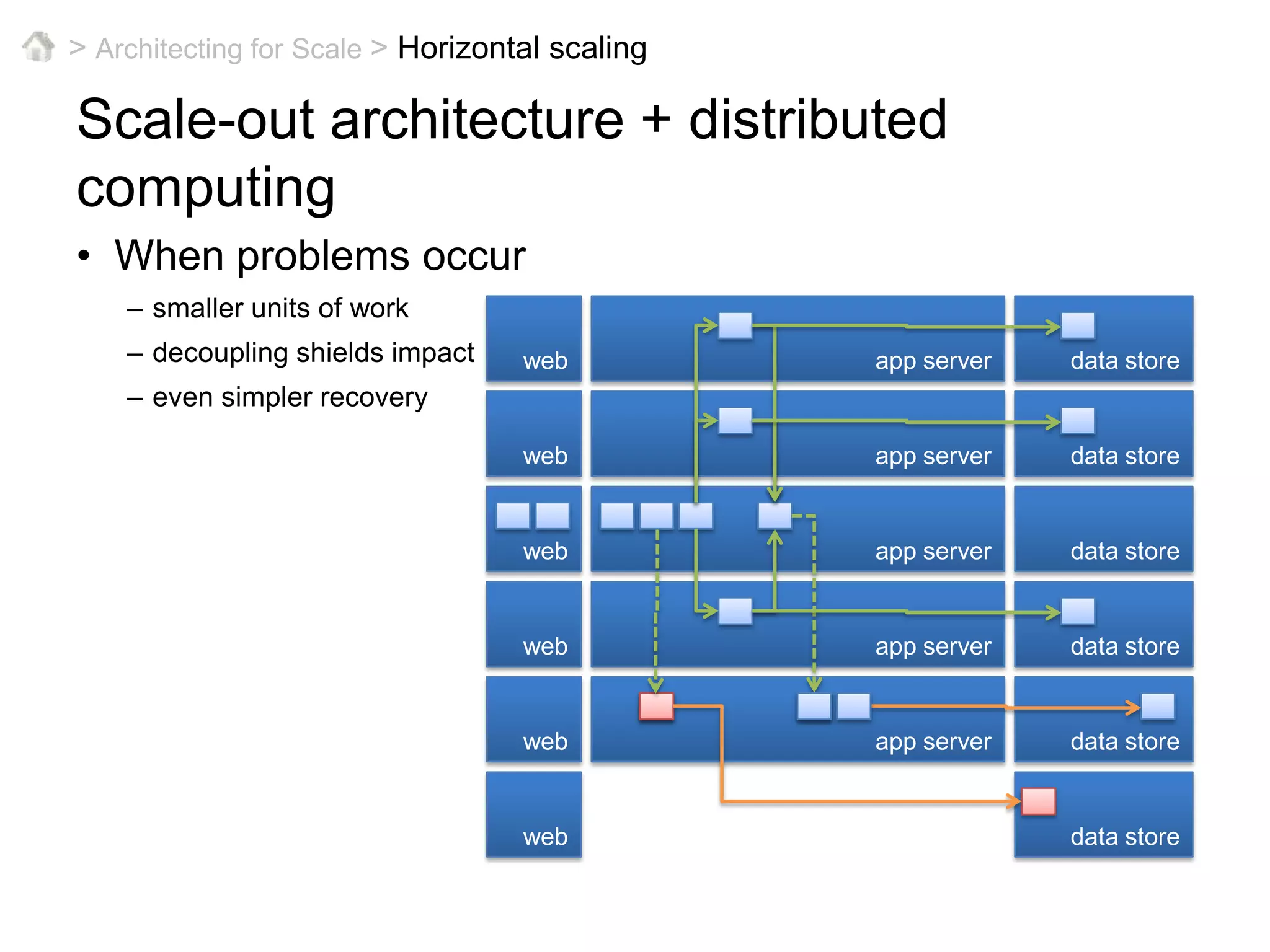

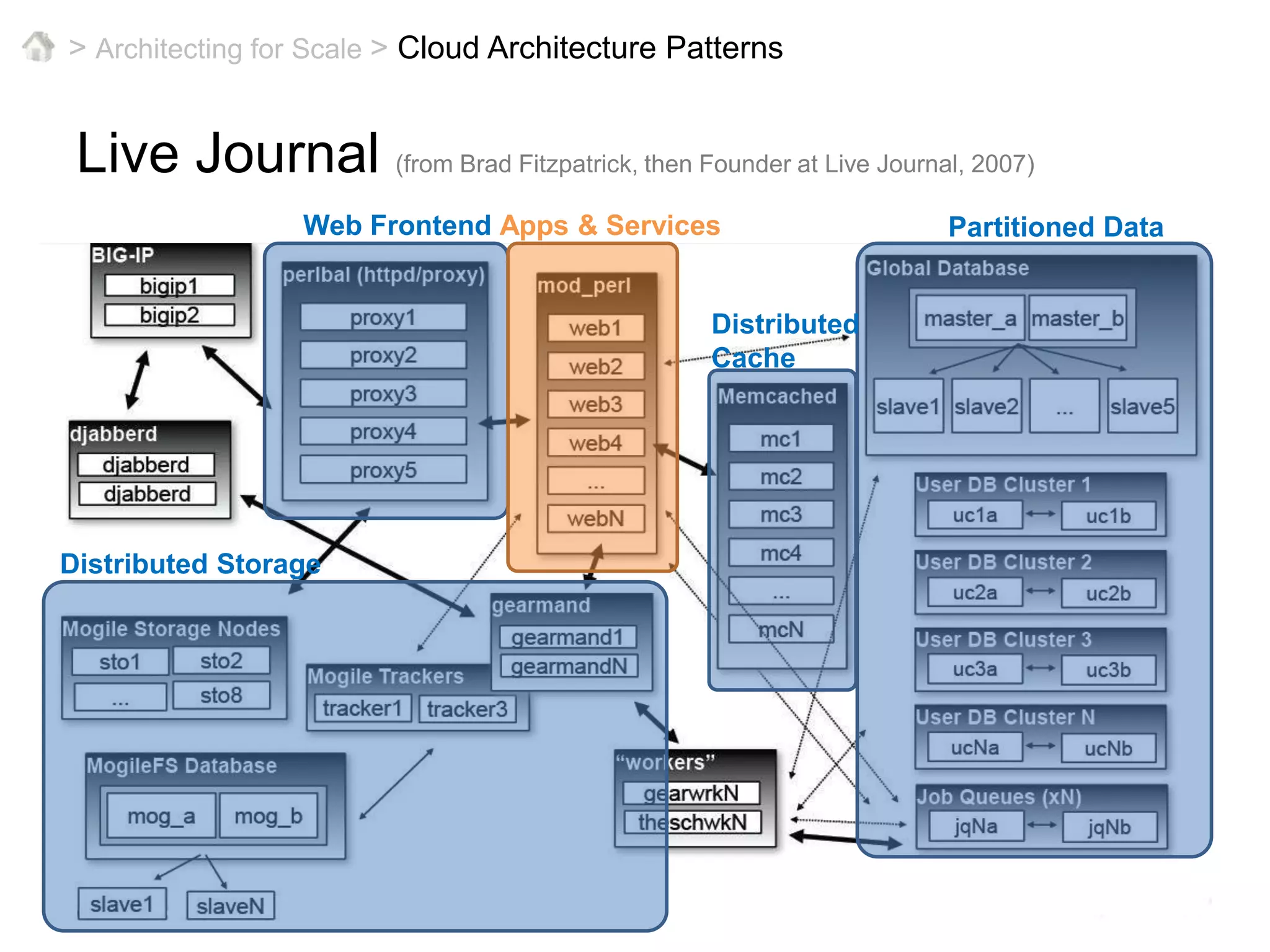

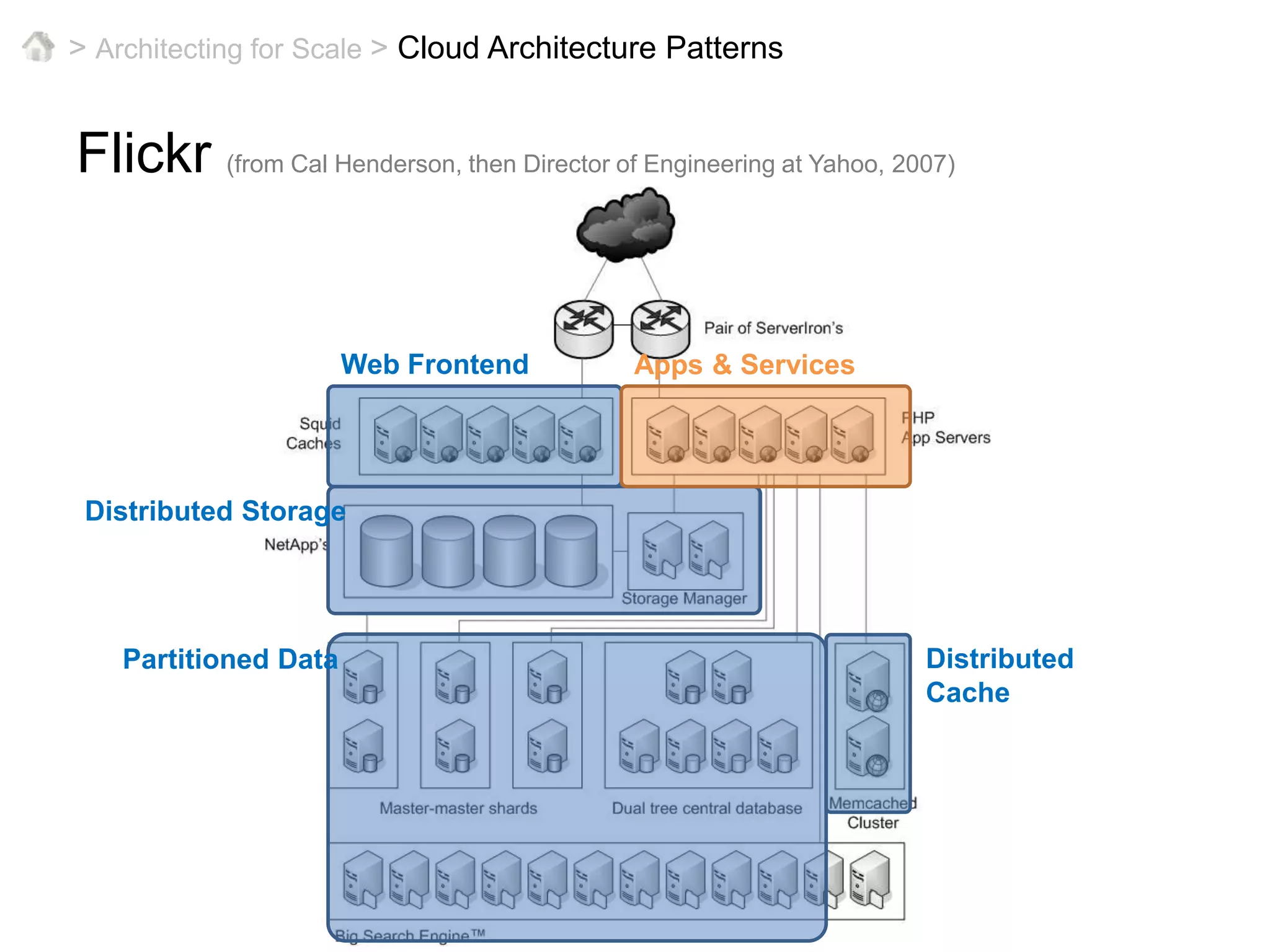

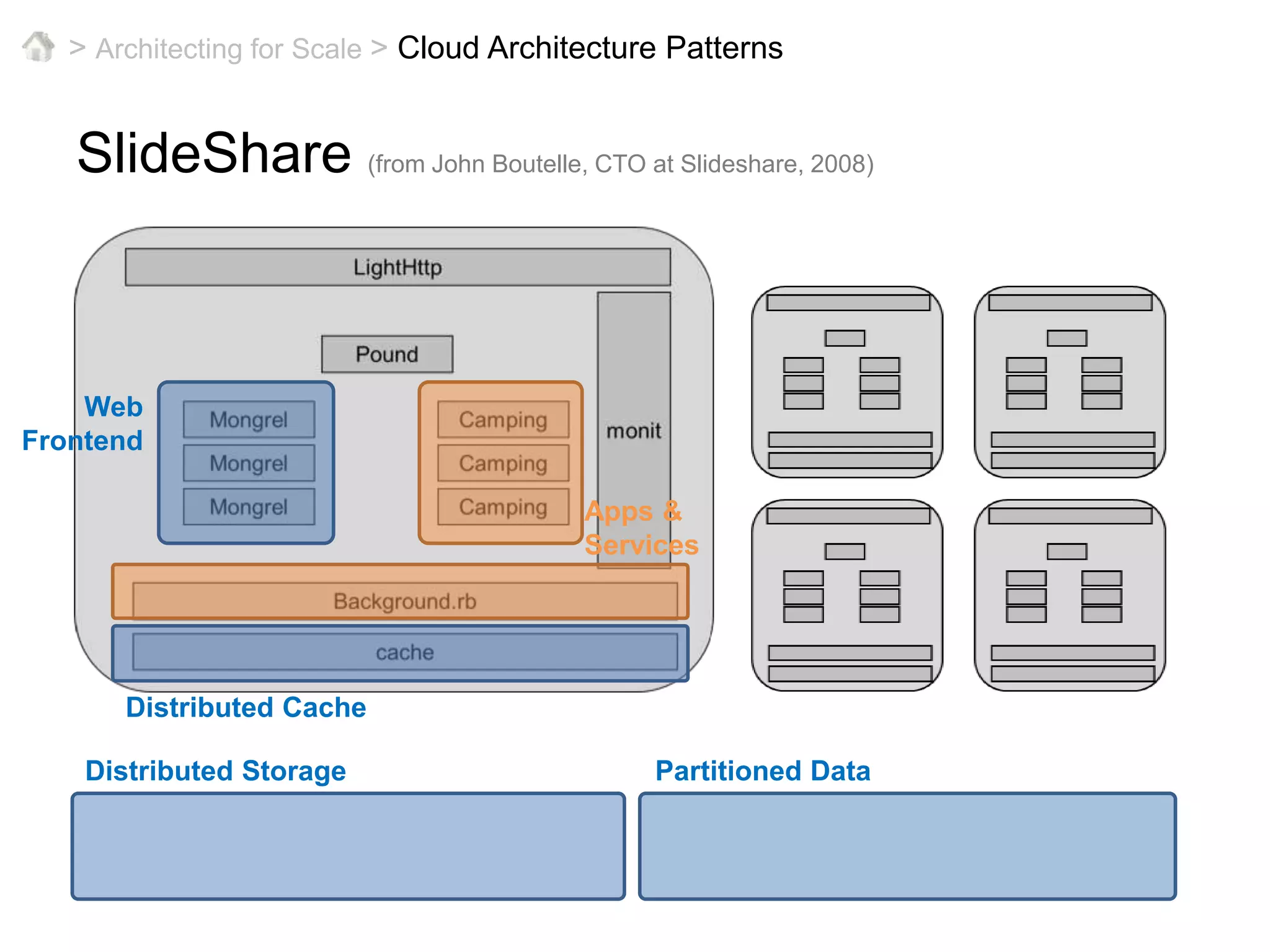

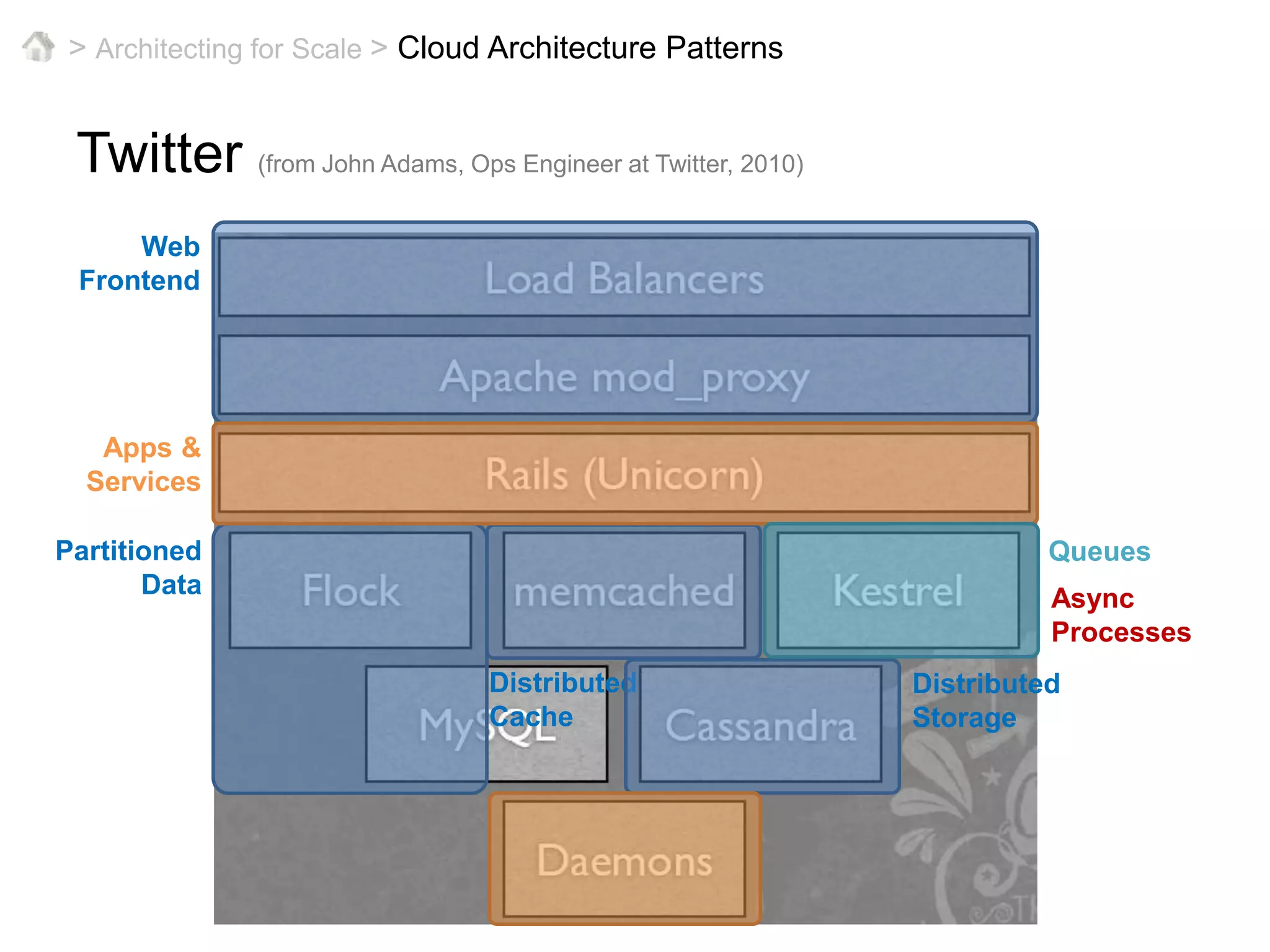

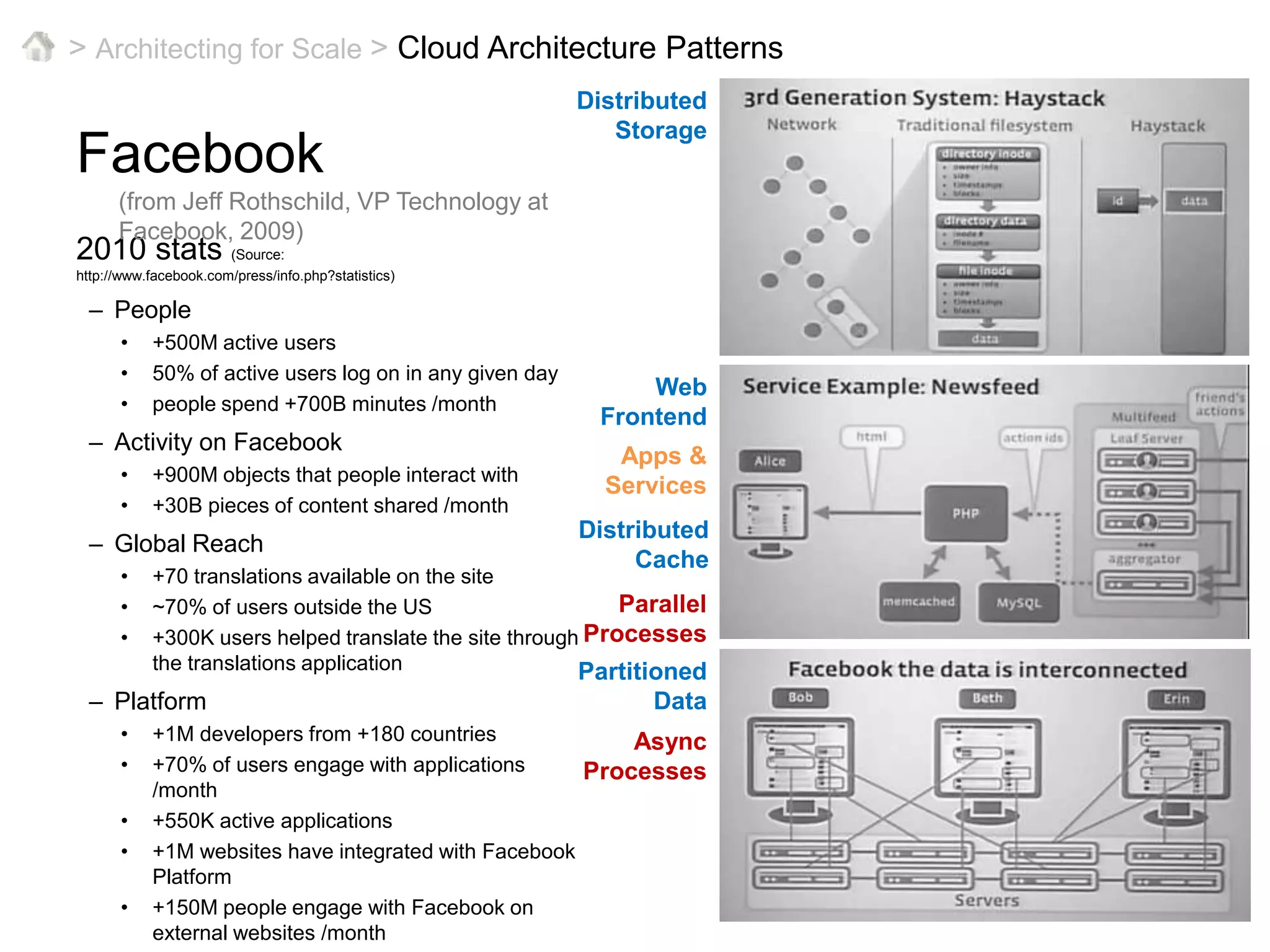

This document gives an overview of architecting cloud applications for scale. It covers key concepts such as horizontal scaling, distributed computing, and common cloud architecture patterns. It then uses Facebook, Twitter, and Flickr as examples of how large companies combine horizontal scaling, partitioning, caching, and other techniques to handle massive loads.
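
To make two of the techniques named above more concrete, here is a minimal sketch of hash-based partitioning combined with a cache-aside read path. It is an illustration only, not how any of the companies mentioned implement their systems. The class `ShardedStore`, its methods, and the use of in-memory dictionaries in place of real database servers and a real cache tier are all assumptions made for the example.

```python
import hashlib


class ShardedStore:
    """Toy key-value store partitioned across several in-memory shards.

    Hypothetical example: each dict stands in for a separate database
    server, and `cache` stands in for a shared cache tier.
    """

    def __init__(self, shard_count):
        self.shards = [{} for _ in range(shard_count)]
        self.cache = {}
        # Counts reads that had to go past the cache to a shard.
        self.backend_reads = 0

    def shard_for(self, key):
        # Hash the key so the same key always routes to the same shard.
        digest = hashlib.md5(key.encode("utf-8")).hexdigest()
        return int(digest, 16) % len(self.shards)

    def put(self, key, value):
        self.shards[self.shard_for(key)][key] = value
        # Invalidate any cached copy so readers do not see stale data.
        self.cache.pop(key, None)

    def get(self, key):
        # Cache-aside read: try the cache first, fall back to the shard.
        if key in self.cache:
            return self.cache[key]
        self.backend_reads += 1
        value = self.shards[self.shard_for(key)].get(key)
        if value is not None:
            self.cache[key] = value
        return value


store = ShardedStore(shard_count=4)
store.put("user:1", "alice")
first = store.get("user:1")   # misses the cache, reads from a shard
second = store.get("user:1")  # served from the cache
```

In this sketch, adding capacity means adding shards rather than buying a larger machine, which is the idea behind horizontal scaling. Note that simple modulo routing remaps most keys when the shard count changes; schemes such as consistent hashing exist to reduce that cost.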