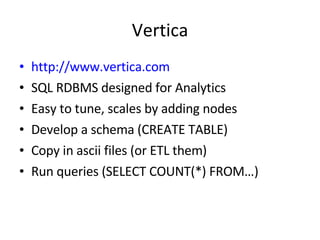

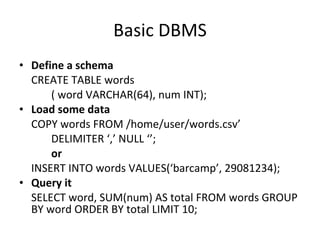

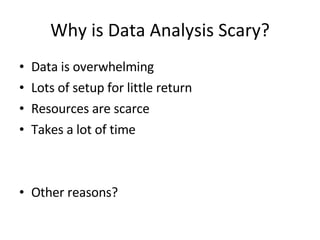

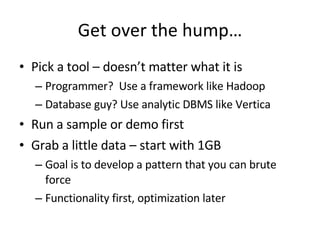

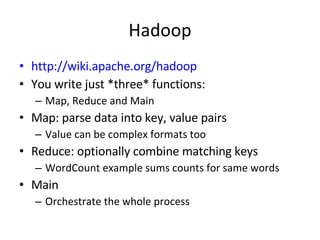

The document provides an overview of getting started with data analysis using Hadoop and Vertica. It recommends starting with a small sample dataset of around 1GB to practice developing patterns before optimizing. For Hadoop, it explains that the Map, Reduce, and Main functions are the core components, with Map parsing data into key-value pairs and Reduce combining matching keys. For Vertica, it notes that basic usage involves defining a schema, loading data via copy or insert, and running queries like counts and aggregations. The goal is to pick a tool, start with a simple demo or sample, and focus on functionality before optimization.

![Analysis is Painless Data Analysis 101 [email_address]](https://image.slidesharecdn.com/analysisispainless-090426115400-phpapp02/75/Analysis-Is-Painless-1-2048.jpg)

![What does MAIN do? Start with a JobConf and give it a name JobConf conf = new JobConf(WordCount.class); conf.setJobName("wordcount"); Set the key and value classes that the Mapper will output and Reducer will input conf.setOutputKeyClass(Text. class); conf.setOutputValueClass(IntWritable. class); Tell it the classes (Combiner is an optional special case reducer) conf.setMapperClass(Map. class); conf.setCombinerClass(Reduce. class); conf.setReducerClass(Reduce. class); Set the format classes for input and output conf.setInputFormat(TextInputFormat. class); conf.setOutputFormat(TextOutputFormat. class); Format specific initialization FileInputFormat. setInputPaths(conf, new Path(args[0])); FileOutputFormat. setOutputPath(conf, new Path(args[1])); Run! JobClient. runJob(conf);](https://image.slidesharecdn.com/analysisispainless-090426115400-phpapp02/85/Analysis-Is-Painless-5-320.jpg)