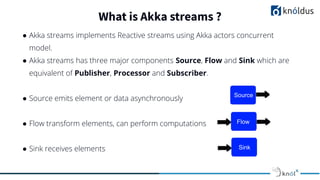

The document is a presentation by Mohd Alimuddin discussing Akka HTTP, which is a suite of libraries focused on HTTP integration for applications using Akka actors and streams. It covers essential concepts such as marshalling, unmarshalling, and the distinction between low-level and high-level APIs. Additionally, it emphasizes etiquette during sessions and guidelines for providing constructive feedback.

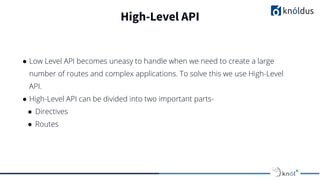

![Directives & Routes

● A Directives is a small building block used for creating arbitrary complex

route structures.

● Routes are highly specialized functions that take a RequestContext and

eventually complete it which happens asynchronously.

● Each Routes is composed of one or more Directives that handles one specific

kind of request.

type Route = RequestContext => Future[RouteResult]](https://image.slidesharecdn.com/akkahttpwithscalabymohdalimuddin1-210614151059/85/Akka-HTTP-with-Scala-11-320.jpg)