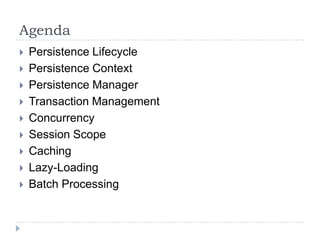

This document provides an overview of advanced Hibernate concepts including:

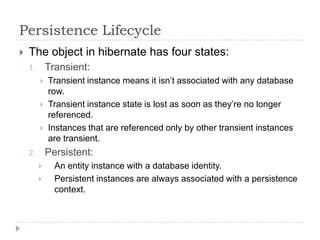

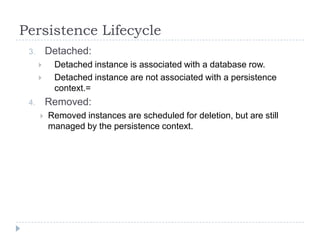

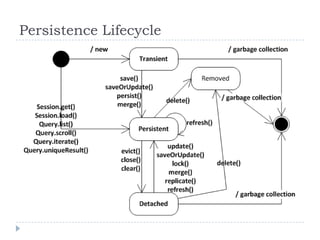

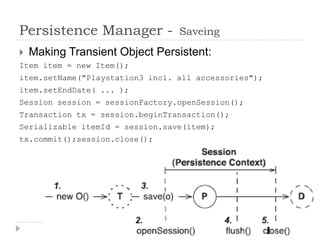

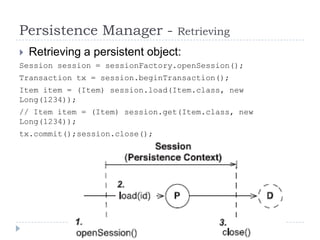

- The persistence lifecycle of objects in Hibernate and the four states objects can be in: transient, persistent, detached, and removed.
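The lifecycle transitions can be summarized as a small state machine. The sketch below is a hypothetical toy model in plain Java. `EntityState`, `LifecycleModel` and the operation strings are illustrative names, not Hibernate classes. The operation names loosely follow JPA `EntityManager` semantics, and only the transitions listed in the comments are modeled.

```java
import java.util.EnumMap;
import java.util.HashMap;
import java.util.Map;

/** The four lifecycle states named in the text. */
enum EntityState { TRANSIENT, PERSISTENT, DETACHED, REMOVED }

/** Hypothetical toy model: which operation moves an entity from one state to another. */
final class LifecycleModel {
    private static final Map<EntityState, Map<String, EntityState>> TRANSITIONS =
            new EnumMap<>(EntityState.class);

    static {
        for (EntityState s : EntityState.values()) {
            TRANSITIONS.put(s, new HashMap<>());
        }
        // A new object becomes managed by the persistence context.
        TRANSITIONS.get(EntityState.TRANSIENT).put("persist", EntityState.PERSISTENT);
        // Evicting/detaching it, clearing the context or closing the session ends management.
        TRANSITIONS.get(EntityState.PERSISTENT).put("detach", EntityState.DETACHED);
        // The row is scheduled for DELETE at the next flush.
        TRANSITIONS.get(EntityState.PERSISTENT).put("remove", EntityState.REMOVED);
        // A detached object's state is brought back under management.
        TRANSITIONS.get(EntityState.DETACHED).put("merge", EntityState.PERSISTENT);
        // JPA: persisting a removed (not yet flushed) entity makes it managed again.
        TRANSITIONS.get(EntityState.REMOVED).put("persist", EntityState.PERSISTENT);
    }

    /** Returns the next state, or throws if this sketch does not model the combination. */
    static EntityState apply(EntityState from, String operation) {
        EntityState to = TRANSITIONS.get(from).get(operation);
        if (to == null) {
            throw new IllegalStateException(operation + " from " + from + " is not modeled");
        }
        return to;
    }
}

public class LifecycleDemo {
    public static void main(String[] args) {
        EntityState state = EntityState.TRANSIENT;
        for (String op : new String[] {"persist", "detach", "merge", "remove"}) {
            EntityState next = LifecycleModel.apply(state, op);
            System.out.println(state + " --" + op + "--> " + next);
            state = next;
        }
    }
}
```

In real code, `merge()` does not reattach the detached instance itself. It copies that instance's state onto a managed instance and returns the managed one. This model collapses that into a single DETACHED to PERSISTENT step.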

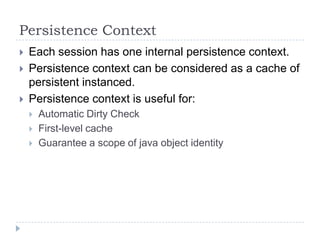

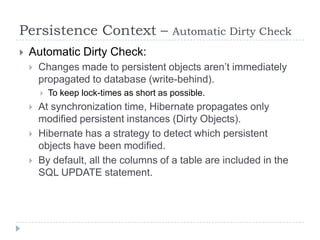

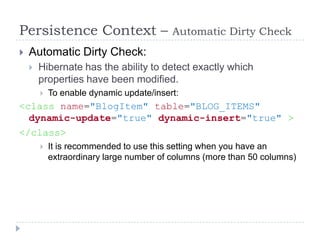

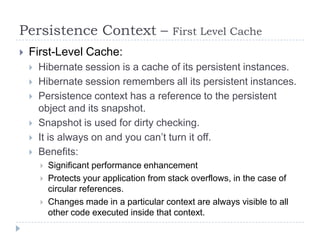

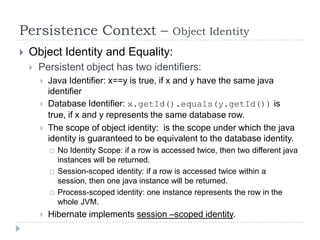

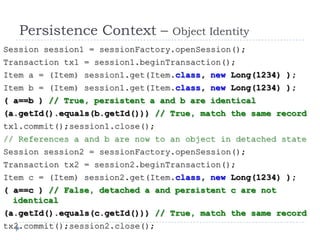

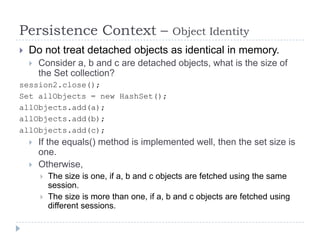

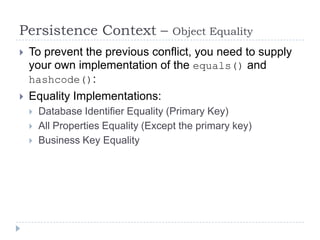

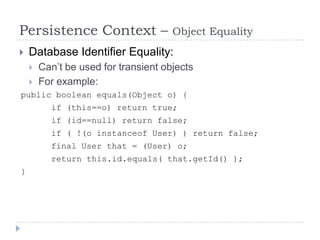

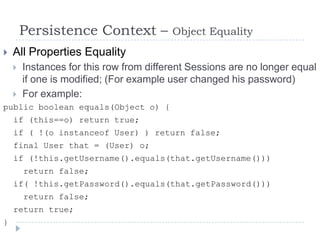

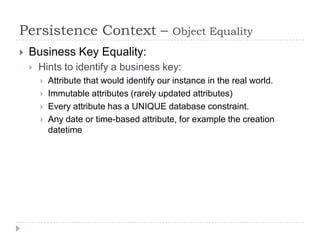

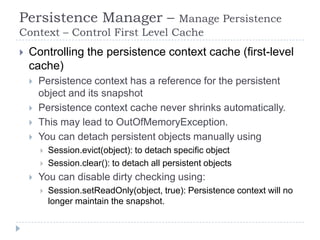

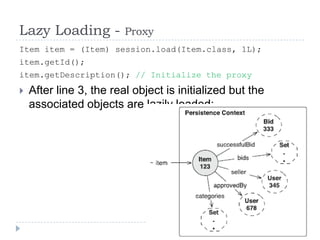

- The persistence context, how it acts as a cache, and how it guarantees object identity within the scope of a session.
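To make the identity guarantee concrete, here is a hypothetical toy persistence context in plain Java. `ToyPersistenceContext` and `User` are illustrative names, not Hibernate's implementation. It behaves like an identity map: within one "session", looking up the same id twice returns the same Java instance and reaches the database only once, which mirrors what two `session.get(User.class, 1L)` calls do in a single Hibernate session.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.LongFunction;

/** Minimal entity used only for this sketch. */
final class User {
    final long id;
    String name;

    User(long id, String name) { this.id = id; this.name = name; }
}

/** Hypothetical toy persistence context: an identity map keyed by entity type and id. */
final class ToyPersistenceContext {
    private final Map<String, Object> entities = new HashMap<>();
    private final LongFunction<User> database;   // stands in for "SELECT ... WHERE id = ?"
    int selectCount = 0;                          // how often the "database" was queried

    ToyPersistenceContext(LongFunction<User> database) { this.database = database; }

    User findUser(long id) {
        String key = "User#" + id;
        Object cached = entities.get(key);
        if (cached != null) {
            return (User) cached;               // cache hit: same instance, no query
        }
        selectCount++;
        User loaded = database.apply(id);       // cache miss: "run" the SELECT once
        entities.put(key, loaded);
        return loaded;
    }
}

public class PersistenceContextDemo {
    public static void main(String[] args) {
        // Each "database" call builds a fresh object, like mapping a new result row.
        ToyPersistenceContext session = new ToyPersistenceContext(id -> new User(id, "user" + id));
        User a = session.findUser(1L);
        User b = session.findUser(1L);
        System.out.println(a == b);                   // true: same instance within the session
        System.out.println(session.selectCount);      // 1: second lookup served from the context

        ToyPersistenceContext other = new ToyPersistenceContext(id -> new User(id, "user" + id));
        System.out.println(a == other.findUser(1L));  // false: another session, another instance
    }
}
```

The guarantee is scoped: identity holds only inside one context, which is why the second "session" returns a different object for the same id.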

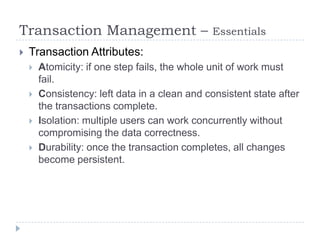

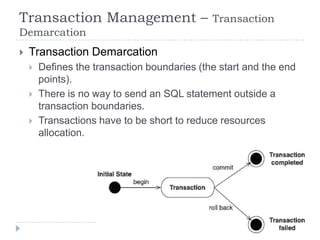

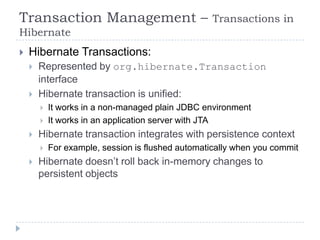

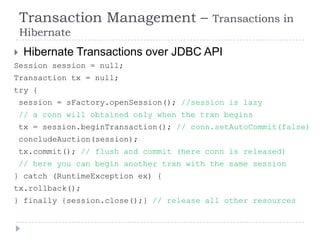

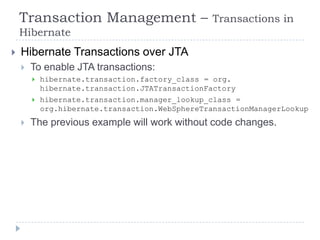

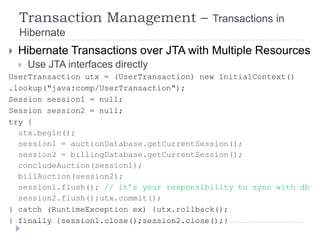

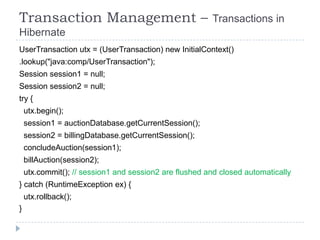

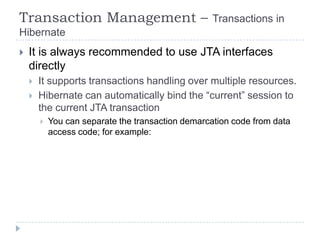

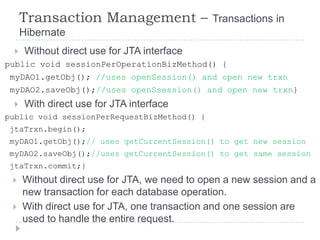

- Transaction management in Hibernate, including demarcating transaction boundaries programmatically or declaratively.
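Programmatic demarcation usually follows a begin, commit, roll-back-and-rethrow pattern, as with Hibernate's `session.beginTransaction()`, `tx.commit()` and `tx.rollback()`. The sketch below captures that pattern without a database. `Tx`, `RecordingTx` and `TxTemplate` are hypothetical stand-ins, not Hibernate types. Declarative demarcation moves this same logic out of application code into the container or framework, and is not modeled here.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

/** Hypothetical stand-in for a transaction handle: only the calls the pattern needs. */
interface Tx {
    void commit();
    void rollback();
}

/** Records what happened so the demarcation logic can be checked without a database. */
final class RecordingTx implements Tx {
    final List<String> events = new ArrayList<>();

    RecordingTx() { events.add("begin"); }
    public void commit() { events.add("commit"); }
    public void rollback() { events.add("rollback"); }
}

final class TxTemplate {
    /** Begin, run the unit of work, commit on success, roll back and rethrow on failure. */
    static <T> T inTransaction(Supplier<? extends Tx> begin, Supplier<T> work) {
        Tx tx = begin.get();
        try {
            T result = work.get();
            tx.commit();
            return result;
        } catch (RuntimeException e) {
            tx.rollback();
            throw e;    // never swallow the failure
        }
    }
}

public class TxDemo {
    public static void main(String[] args) {
        RecordingTx ok = new RecordingTx();
        int saved = TxTemplate.inTransaction(() -> ok, () -> 42);
        System.out.println(saved + " " + ok.events);    // 42 [begin, commit]

        RecordingTx failed = new RecordingTx();
        try {
            TxTemplate.inTransaction(() -> failed, () -> { throw new IllegalStateException("boom"); });
        } catch (IllegalStateException e) {
            System.out.println(failed.events);          // [begin, rollback]
        }
    }
}
```

Rethrowing after the rollback keeps the caller informed that the unit of work failed, rather than silently returning as if it had succeeded.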

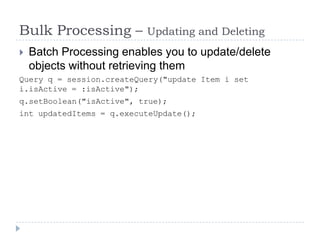

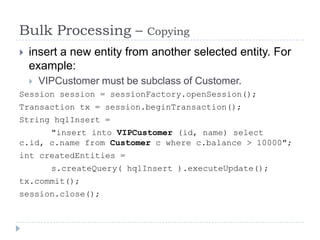

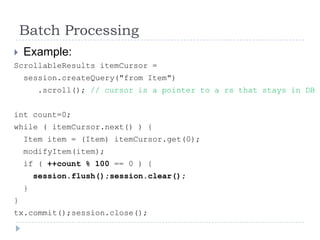

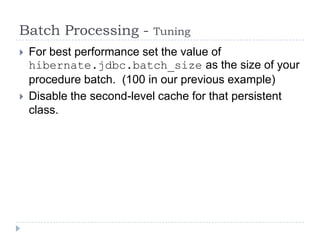

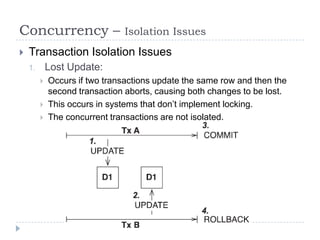

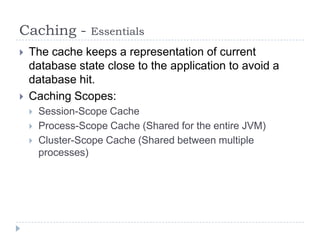

- Additional topics covered include the persistence manager, concurrency control, caching, and batch processing.

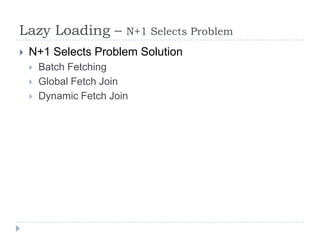

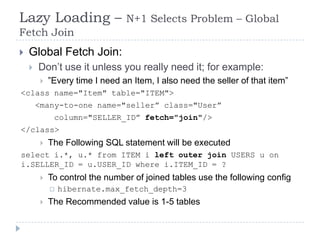

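![Lazy Loading – N+1 Selects Problem – Dynamic Fetch Join](https://image.slidesharecdn.com/advancedhibernatev2-121117011821-phpapp02/85/Advanced-Hibernate-V2-91-320.jpg)

Dynamic fetch join using HQL:

```
from UserBean u join fetch u.company c where u.name like '%a%' and c.name = 'STS'

select distinct u from UserBean u left join fetch u.addresses a where u.name like '%a%' and a.city = 'amman'
```

`DISTINCT` is optional in the second query. Because `u.addresses` is a collection, the SQL join returns one row per matching address, so without `distinct` the same `UserBean` can appear more than once in the result list.

Dynamic fetch join using Criteria:

```java
import org.hibernate.Criteria;
import org.hibernate.sql.JoinType;

// Legacy Hibernate Criteria API: deprecated since Hibernate 5.2, removed in 6.0.

// Join the "company" association through a sub-criteria (inner join)
Criteria byCompany = session.createCriteria(UserBean.class)
        .createCriteria("company").add(…);

// Same join through an alias, so restrictions can refer to "c.name"
Criteria byAlias = session.createCriteria(UserBean.class)
        .createAlias("company", "c").add(…);

// Alias with an explicit join type
Criteria byRightJoin = session.createCriteria(UserBean.class)
        .createAlias("company", "c", JoinType.RIGHT_OUTER_JOIN).add(…);
```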
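The N+1 selects problem is easiest to see by counting statements. The following hypothetical model in plain Java (`CountingDb` and `NPlusOneDemo` are illustrative names, and no SQL is executed) compares lazy loading, which issues one query for the users plus one per user whose company is touched, with a single join-fetch query like the HQL `join fetch u.company` on the slide. Each user is given a different company, which is the worst case; in Hibernate, users sharing a company would reuse the instance already loaded into the persistence context.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical in-memory "database" that counts the statements it would run. */
final class CountingDb {
    final Map<Long, String> companies = new HashMap<>();   // company id -> name
    final Map<Long, Long> userCompany = new HashMap<>();    // user id -> company id
    int queries = 0;

    /** "SELECT ... FROM users": one query, companies left unloaded (lazy). */
    List<Long> selectUserIds() {
        queries++;
        return new ArrayList<>(userCompany.keySet());
    }

    /** "SELECT ... FROM company WHERE id = ?": the cost of initializing one lazy proxy. */
    String selectCompanyName(long companyId) {
        queries++;
        return companies.get(companyId);
    }

    /** "SELECT ... FROM users u JOIN company c ...": users and companies in one round trip. */
    Map<Long, String> selectUsersJoinFetchCompany() {
        queries++;
        Map<Long, String> rows = new HashMap<>();
        for (Map.Entry<Long, Long> e : userCompany.entrySet()) {
            rows.put(e.getKey(), companies.get(e.getValue()));
        }
        return rows;
    }
}

public class NPlusOneDemo {
    static CountingDb sampleDb(int userCount) {
        CountingDb db = new CountingDb();
        for (long i = 1; i <= userCount; i++) {
            db.companies.put(i, "company" + i);
            db.userCompany.put(i, i);   // every user has a different company (worst case)
        }
        return db;
    }

    /** Lazy loading: 1 query for the users, then 1 more per user when its company is read. */
    static int lazyQueries(int userCount) {
        CountingDb db = sampleDb(userCount);
        for (long userId : db.selectUserIds()) {
            db.selectCompanyName(db.userCompany.get(userId));
        }
        return db.queries;
    }

    /** Join fetch: a single query returns the users together with their companies. */
    static int joinFetchQueries(int userCount) {
        CountingDb db = sampleDb(userCount);
        db.selectUsersJoinFetchCompany();
        return db.queries;
    }

    public static void main(String[] args) {
        System.out.println(lazyQueries(10));       // 11, i.e. 1 + N
        System.out.println(joinFetchQueries(10));  // 1
    }
}
```

The gap grows linearly with the number of users, which is why fetching the association dynamically in the query that needs it pays off on larger result sets.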