The document discusses the proposed conversion of the Papyrus source projects in the Eclipse Git repository to Maven nature to improve build processes and integration with Hudson. An experiment was conducted to assess the impact of this change on developer workflows, performance, and build consistency, revealing potential problems but generally demonstrating that performance would not be adversely affected. The report suggests that, while the benefits of this conversion are significant, it may be better suited for implementation after the current release cycle to minimize disruption for developers.


that around and implies the POM dependencies from the MANIFEST.MF, just as PDE does for JDT.

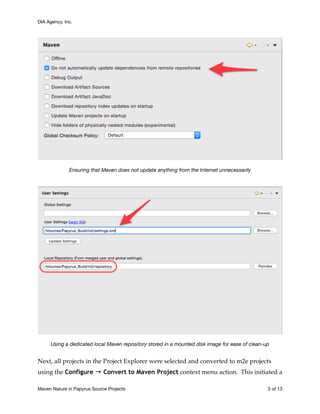

However, for build plug-ins that do not map to internal Eclipse builders, m2e uses an embedded Maven 3 engine to run Maven plug-ins as required. For example, to do a clean build of the org.eclipse.papyrus.infra.constraints[.edit[or]] plug-ins as reconfigured in Gerrit review 41167:²

• the Maven builder provided by m2e generates the sources from the EMF genmodel into the src-gen/ folder

• if one deletes the generated sources, it takes two clean re-builds to return to a correctly compiled project: the first one generates the sources and the second one re-compiles custom code that depends on the generated code

• this is easily fixed: one only needs to move the Maven Project Builder ahead of the other builders in the .project file
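The builder reordering described in the last bullet can be sketched as a small script. This is only an illustration, not the change made in the Gerrit review: it assumes the Maven Project Builder appears in the .project file under the m2e builder ID `org.eclipse.m2e.core.maven2Builder`, and the function names and the `SAMPLE` project description are hypothetical.

```python
import xml.etree.ElementTree as ET

# Assumption: the ID under which m2e registers its builder in .project files.
MAVEN_BUILDER = "org.eclipse.m2e.core.maven2Builder"


def move_maven_builder_first(project_xml):
    """Return the .project XML with the Maven builder as the first buildCommand."""
    root = ET.fromstring(project_xml)
    build_spec = root.find("buildSpec")
    if build_spec is None:
        return project_xml  # no builders configured, nothing to reorder
    for command in list(build_spec):
        if command.findtext("name") == MAVEN_BUILDER:
            build_spec.remove(command)
            build_spec.insert(0, command)
            break
    return ET.tostring(root, encoding="unicode")


def builder_order(project_xml):
    """List builder IDs in the order Eclipse would run them."""
    root = ET.fromstring(project_xml)
    return [c.findtext("name") for c in root.iter("buildCommand")]


# Hypothetical project description with the Maven builder listed last.
SAMPLE = """<projectDescription>
  <name>org.eclipse.papyrus.infra.constraints</name>
  <buildSpec>
    <buildCommand><name>org.eclipse.jdt.core.javabuilder</name></buildCommand>
    <buildCommand><name>org.eclipse.pde.ManifestBuilder</name></buildCommand>
    <buildCommand><name>org.eclipse.m2e.core.maven2Builder</name></buildCommand>
  </buildSpec>
</projectDescription>"""

if __name__ == "__main__":
    print(builder_order(move_maven_builder_first(SAMPLE)))
```

With the Maven builder first, source generation into src-gen/ runs before the Java compiler within the same build, which is why a single clean build should then suffice.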

The Maven lifecycle mappings for a typical Xtend plug-in project

See https://git.eclipse.org/r/#/c/41167/2
