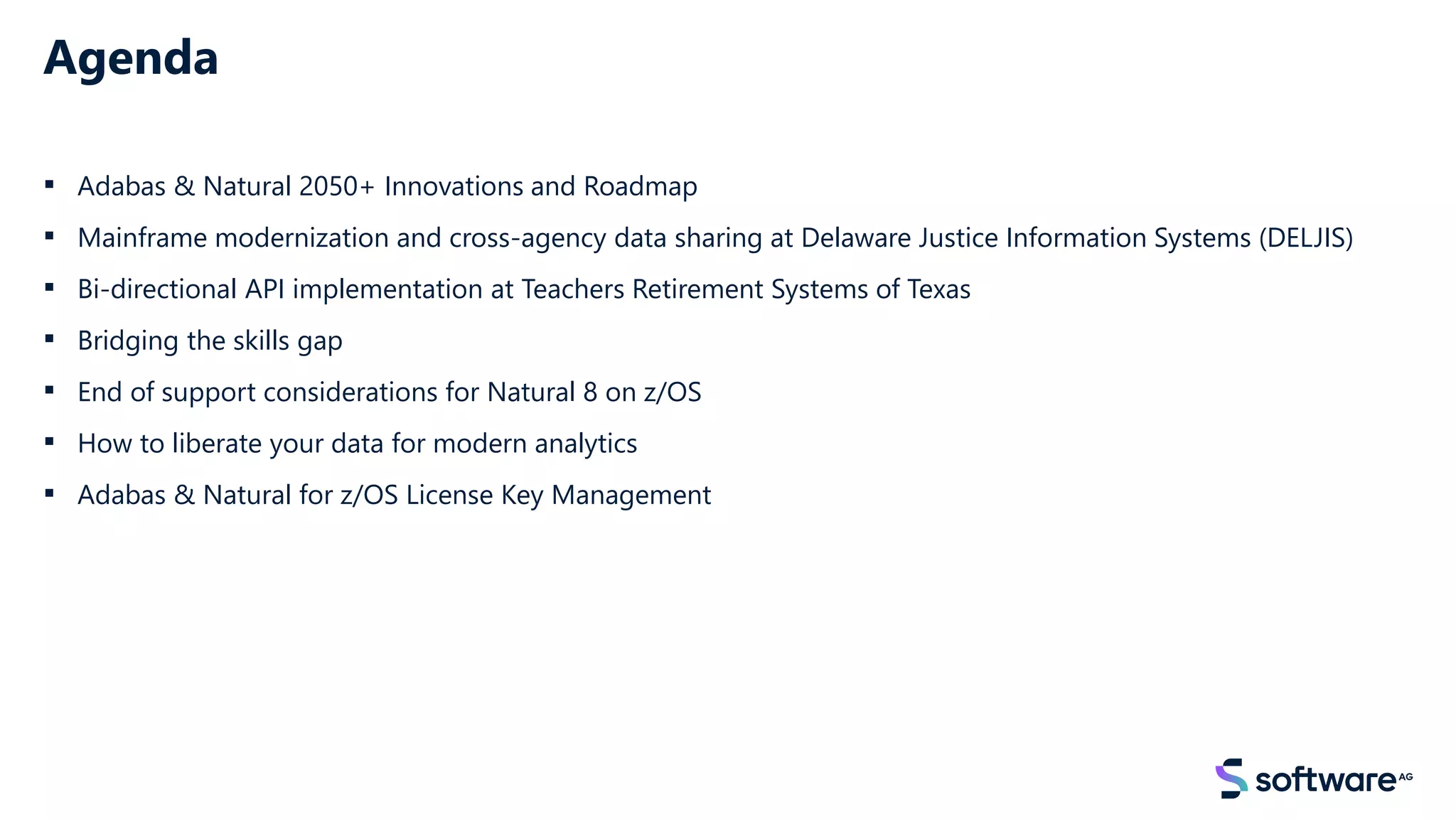

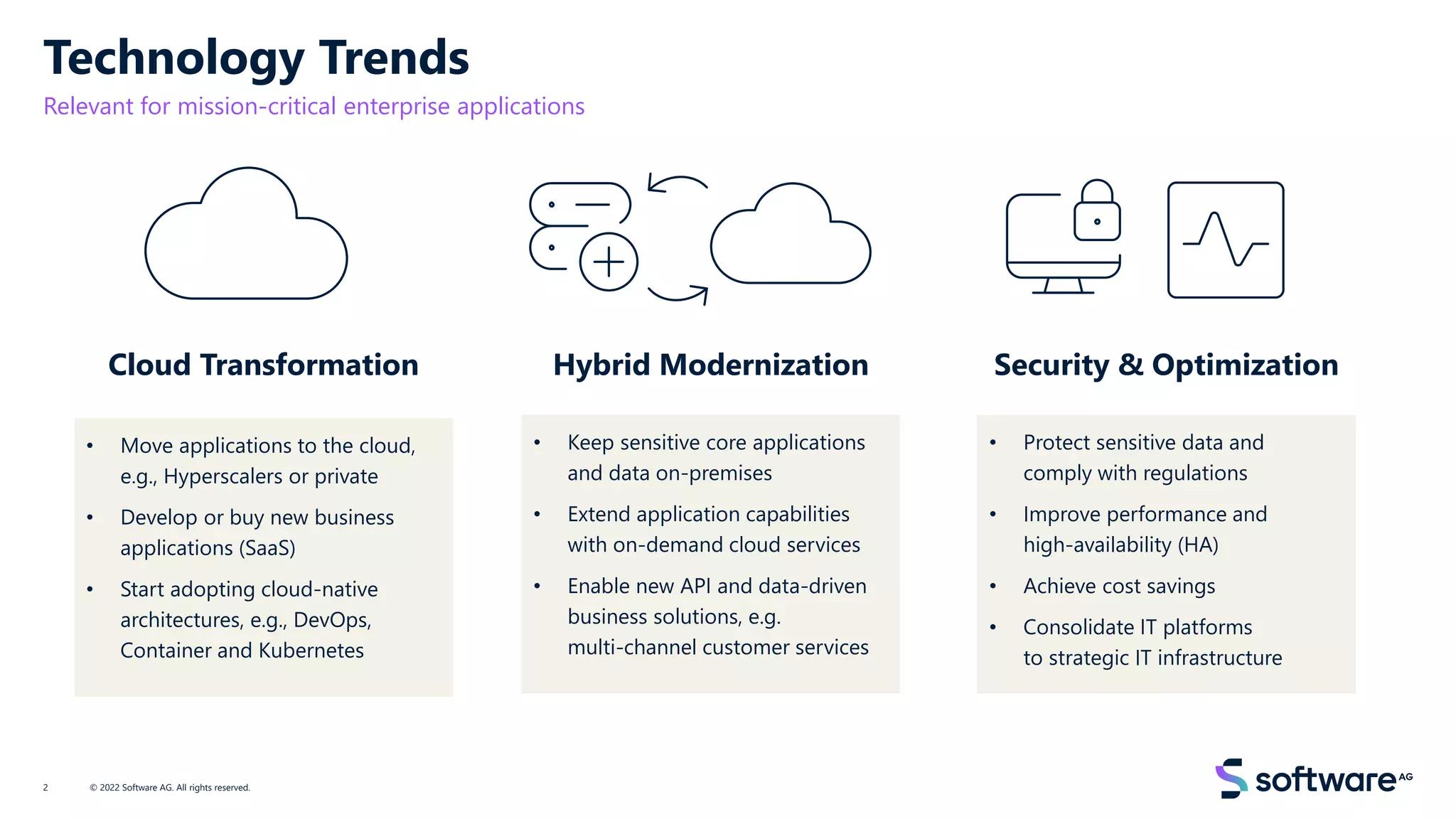

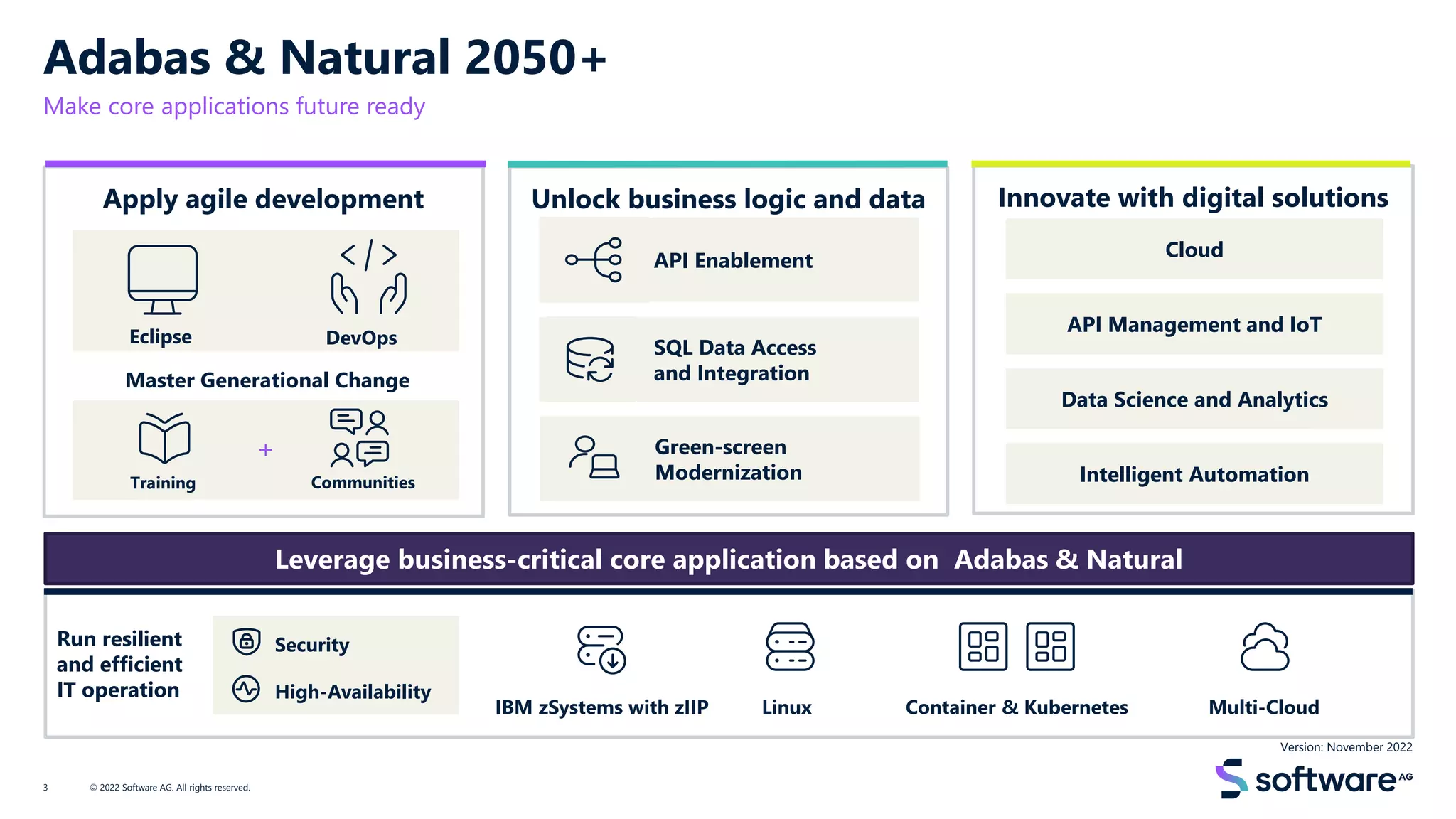

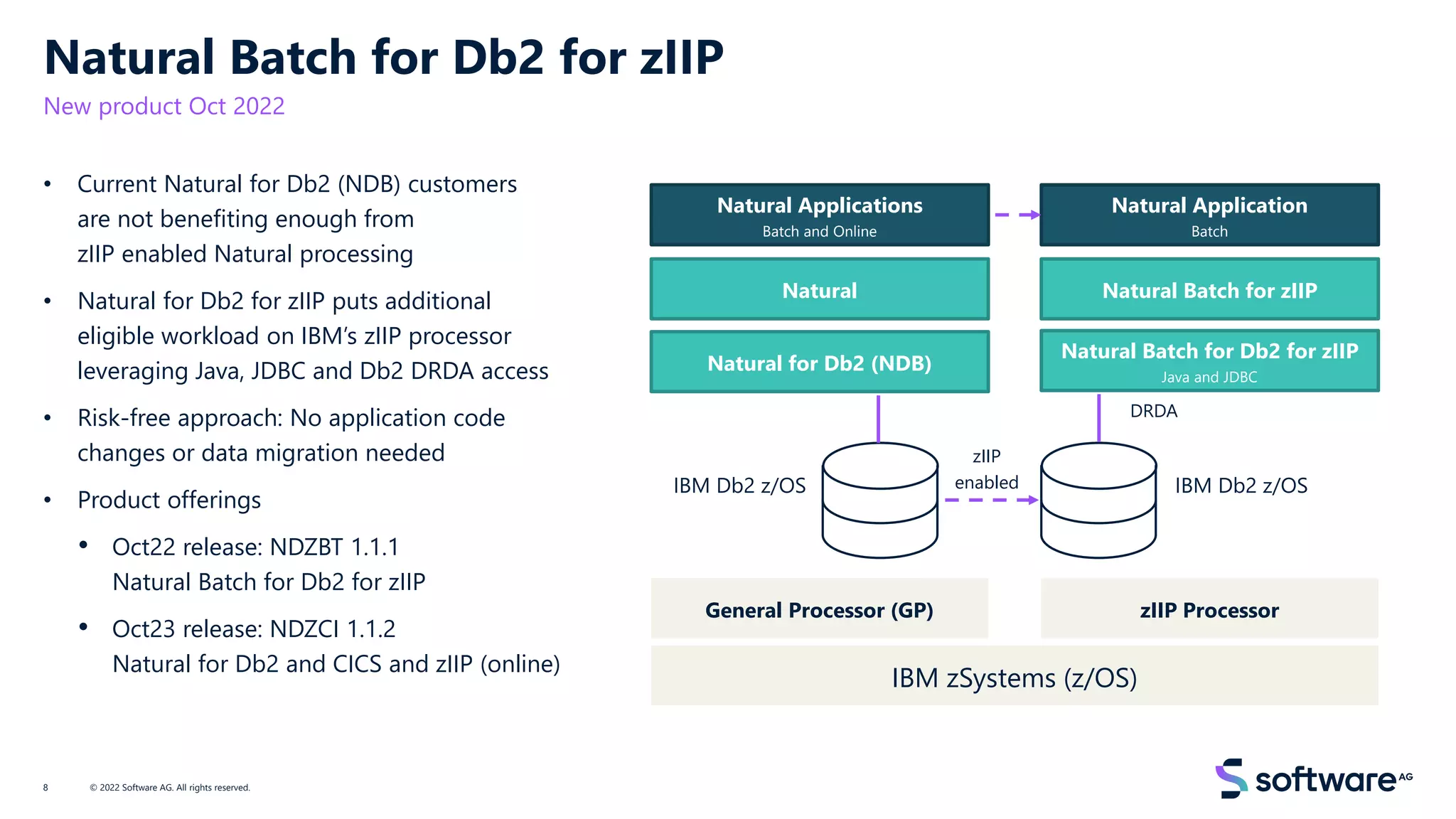

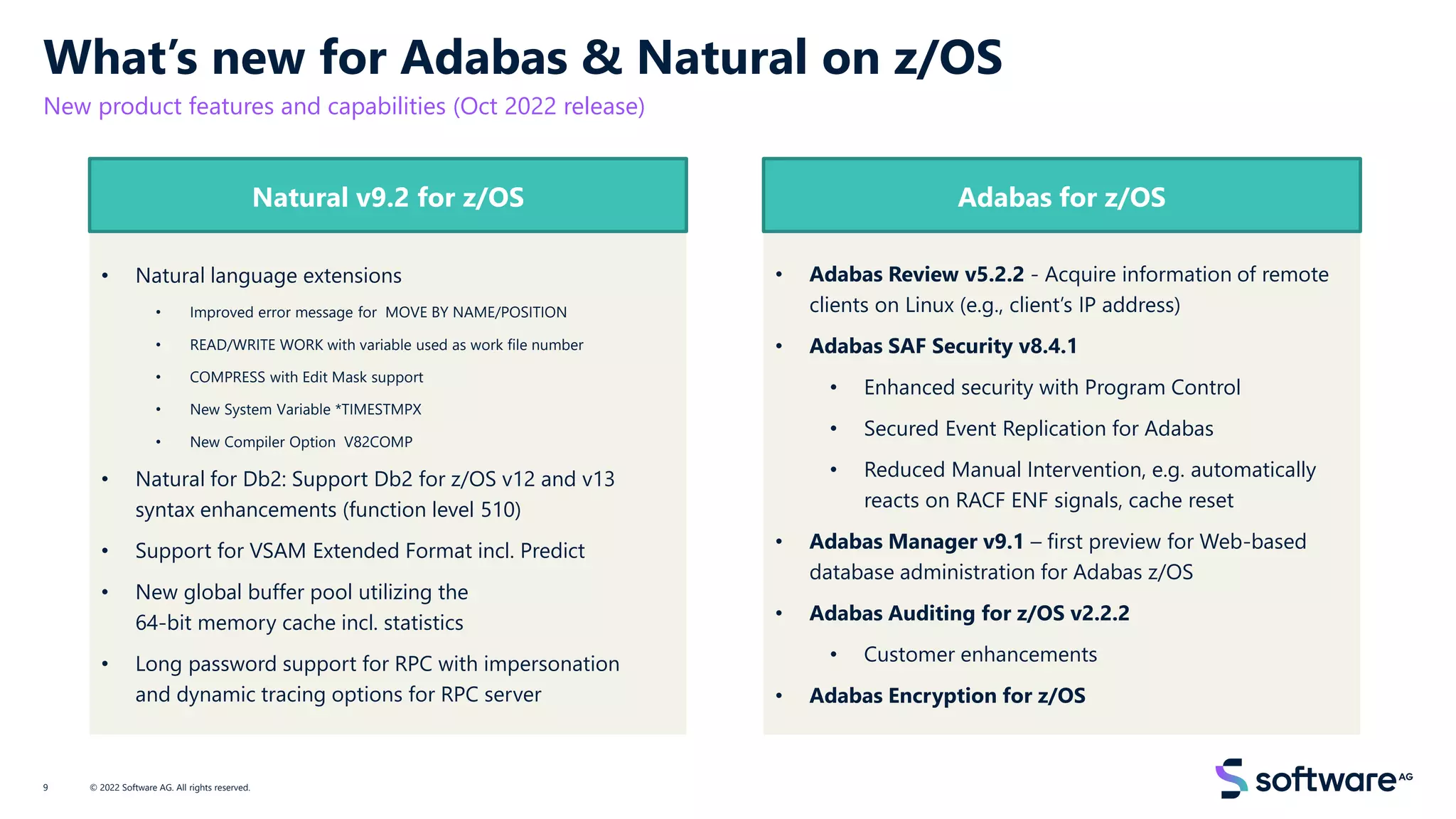

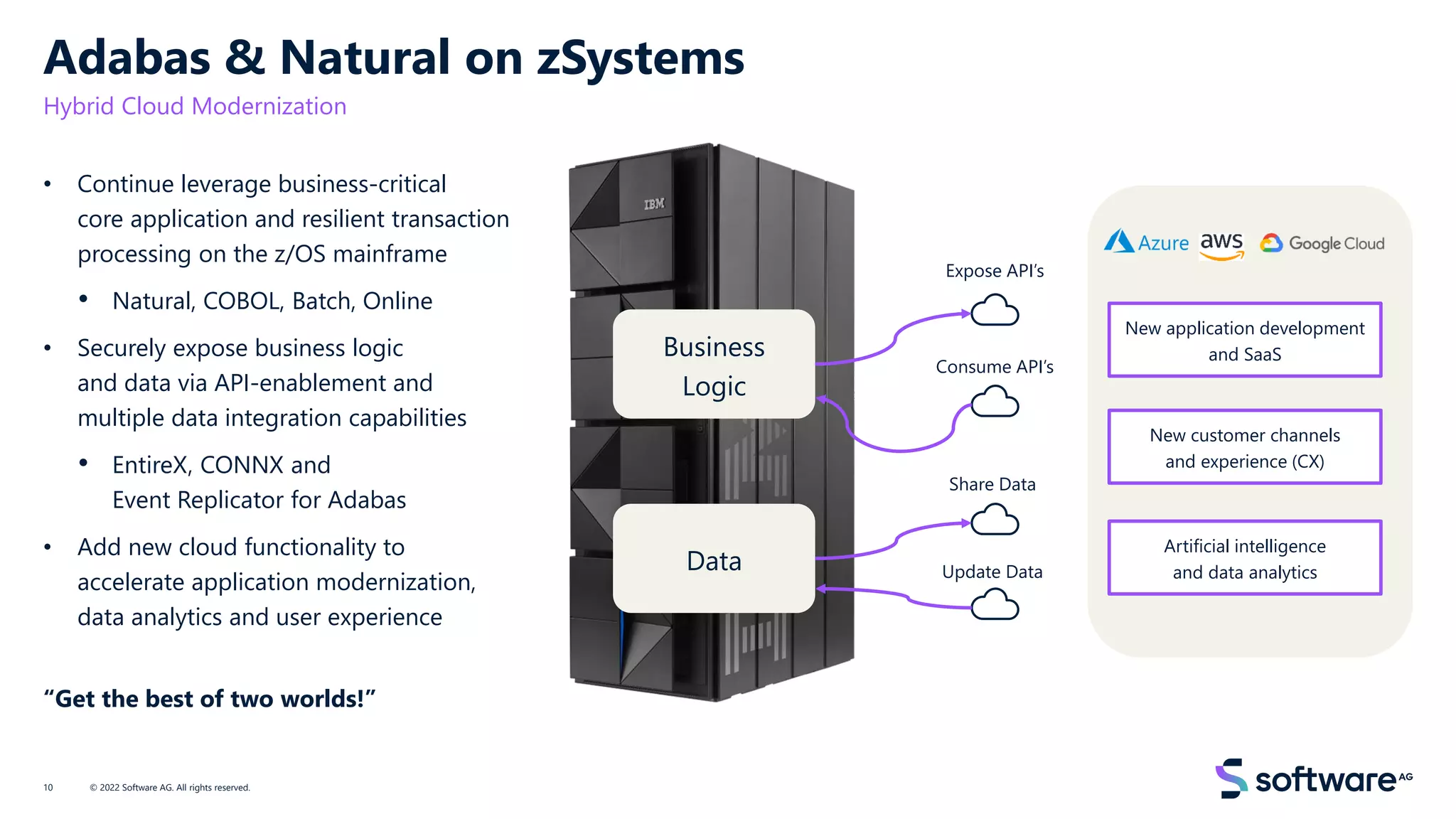

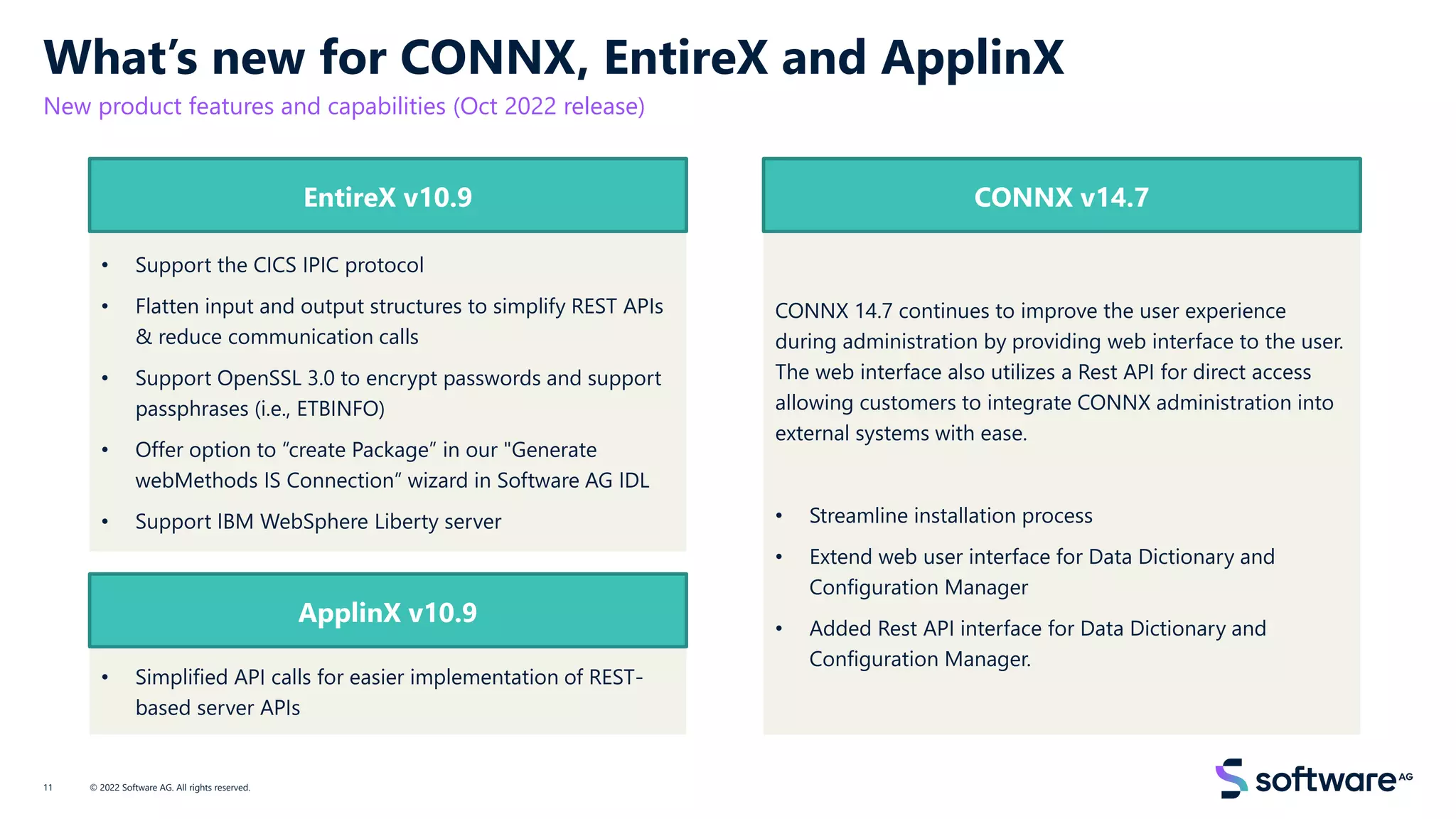

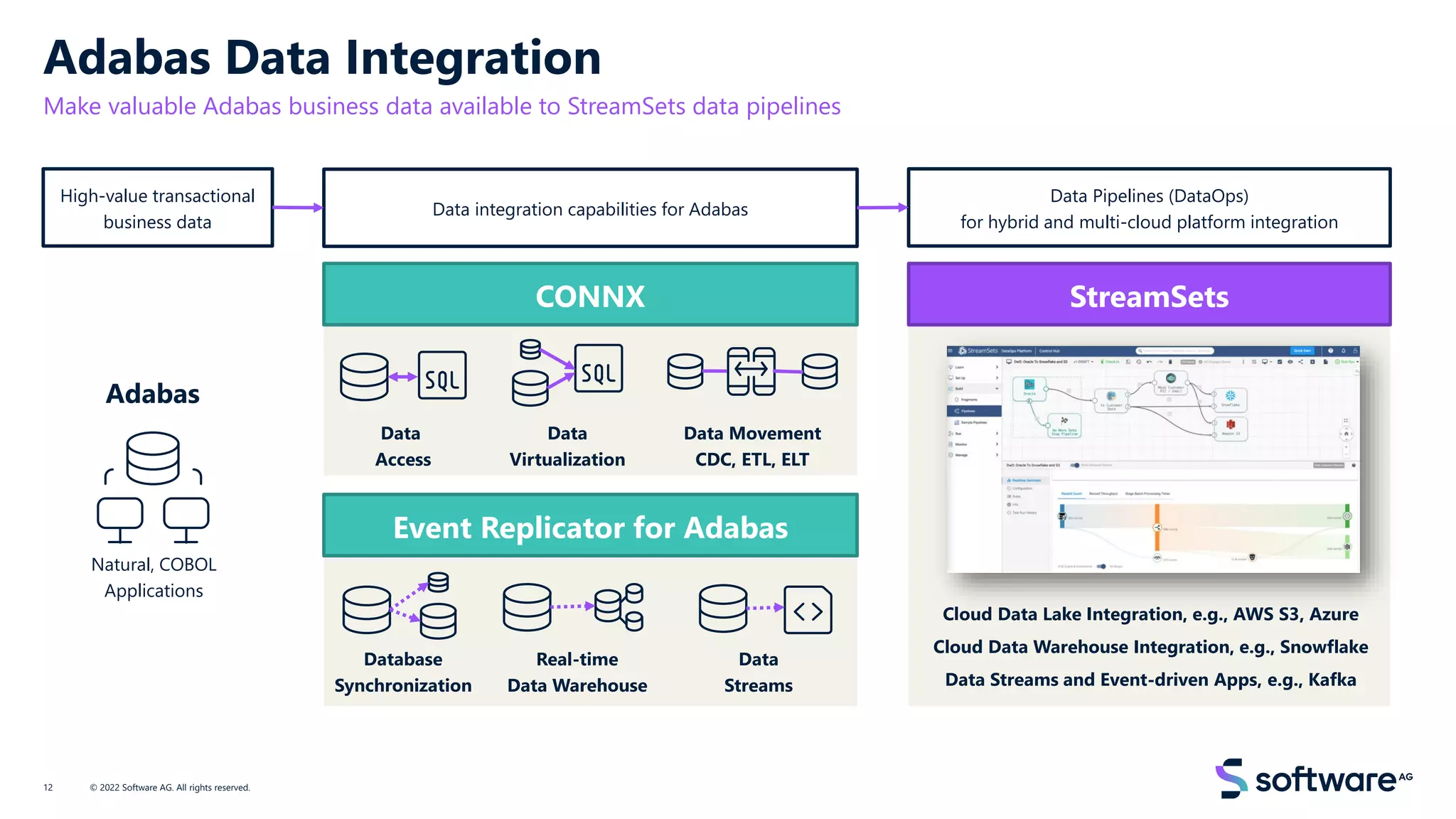

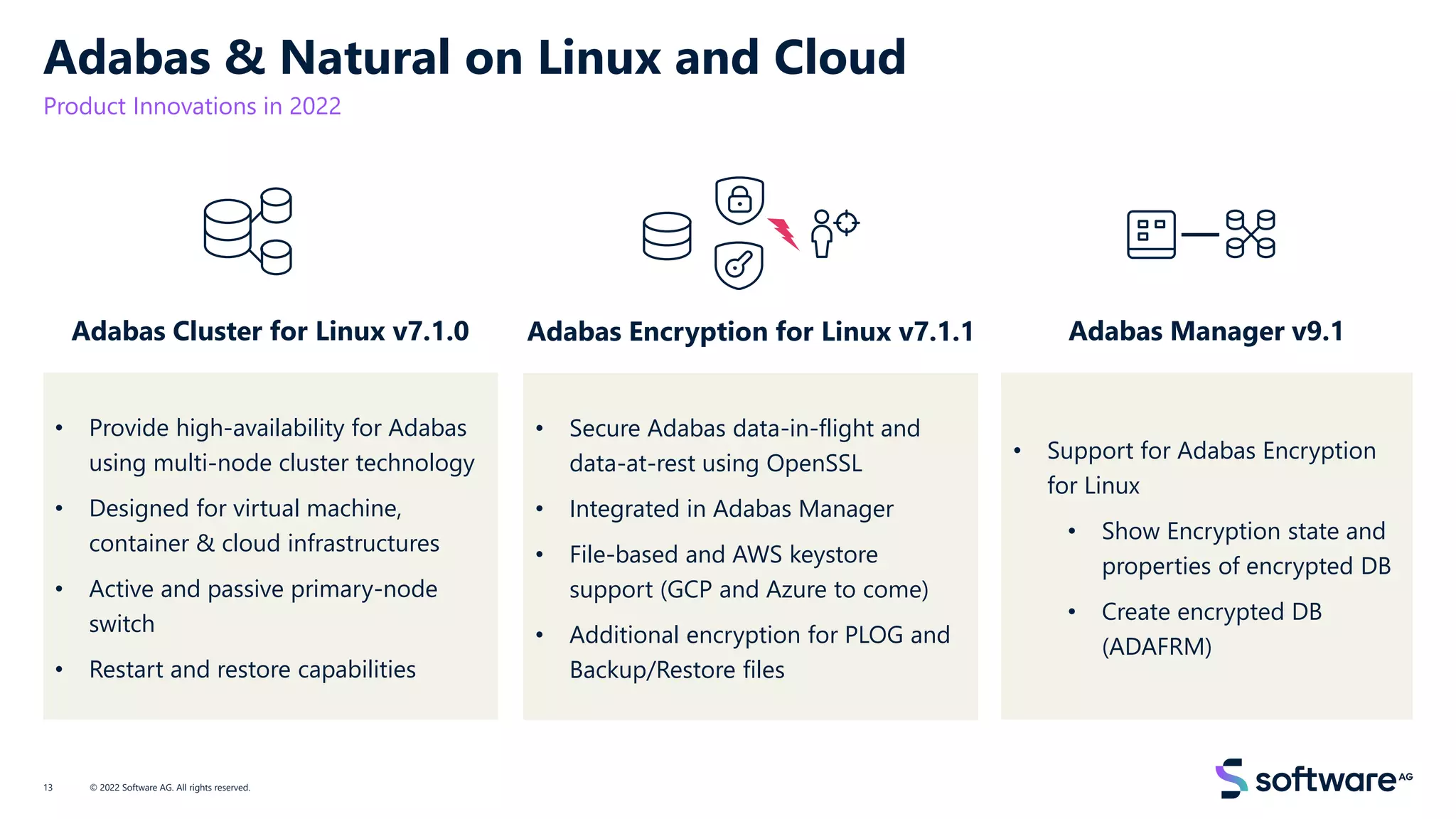

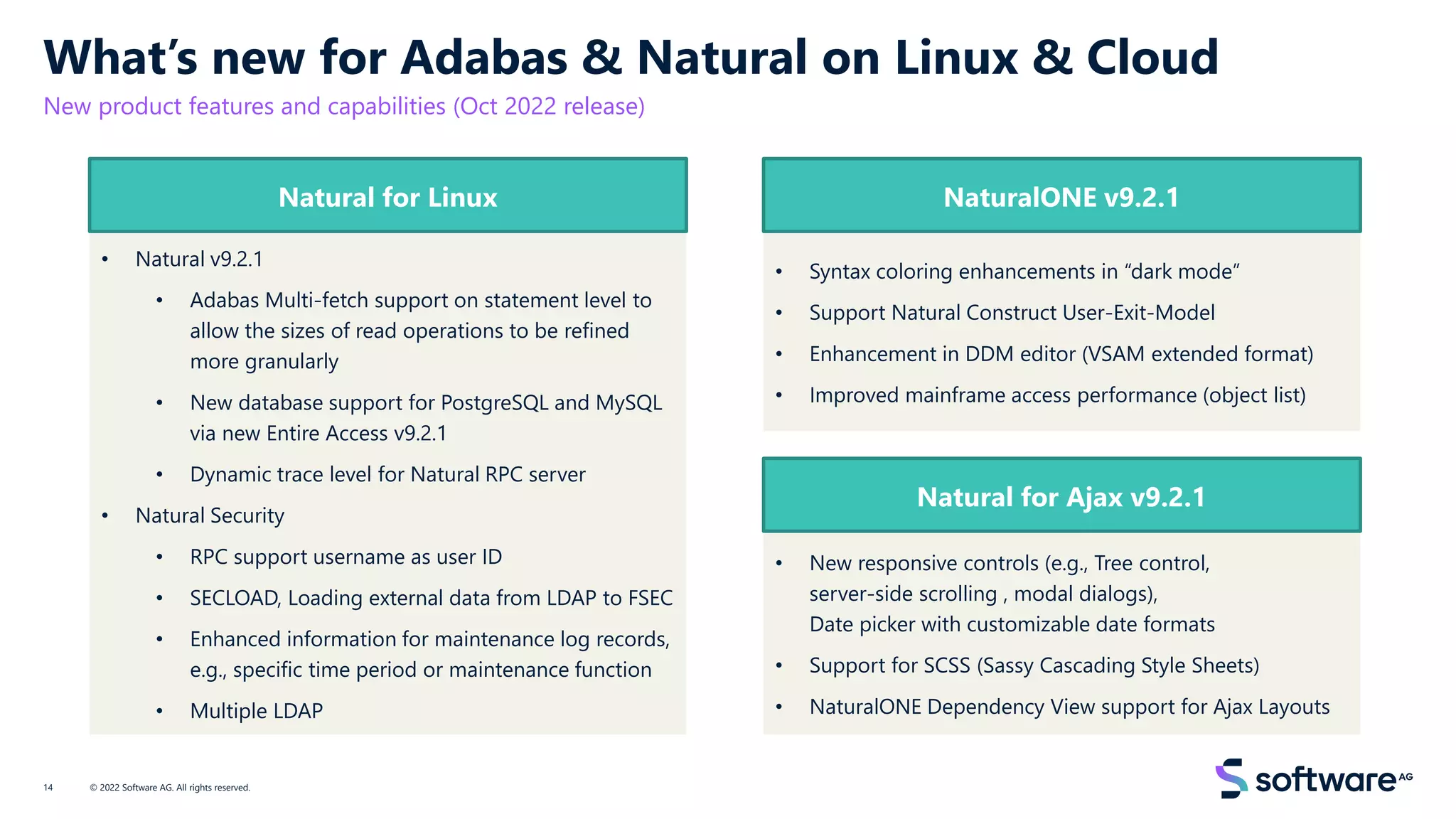

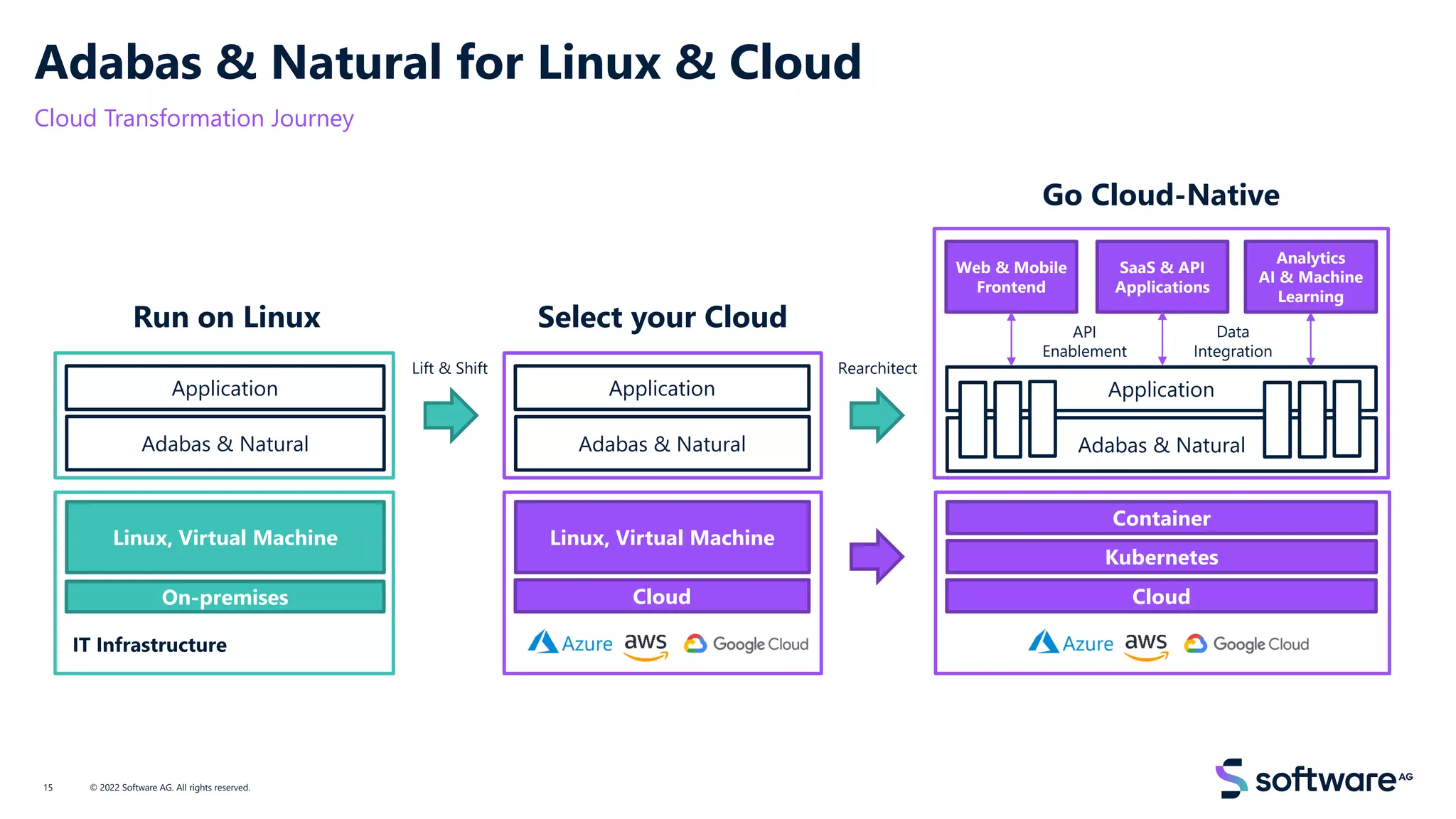

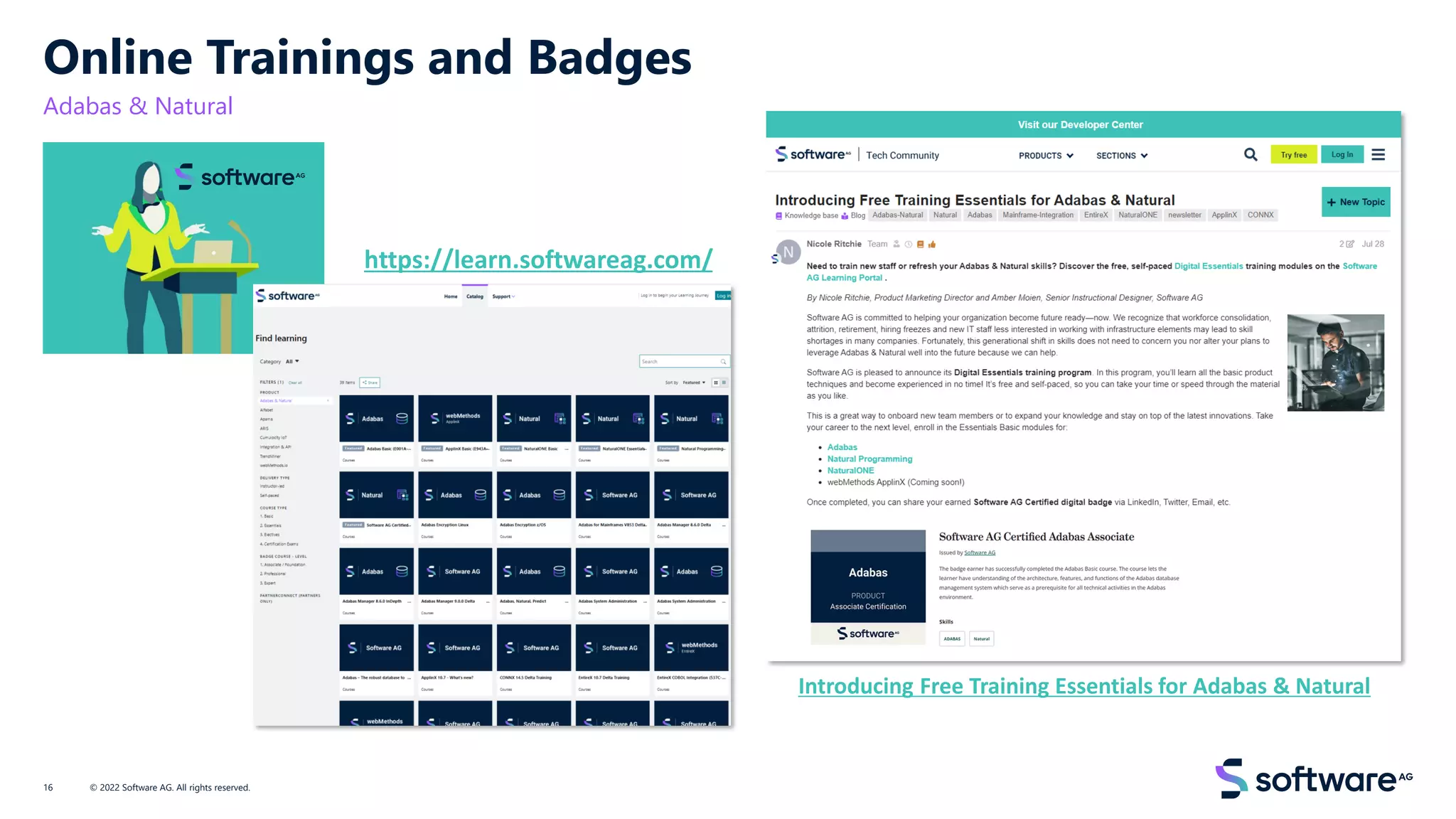

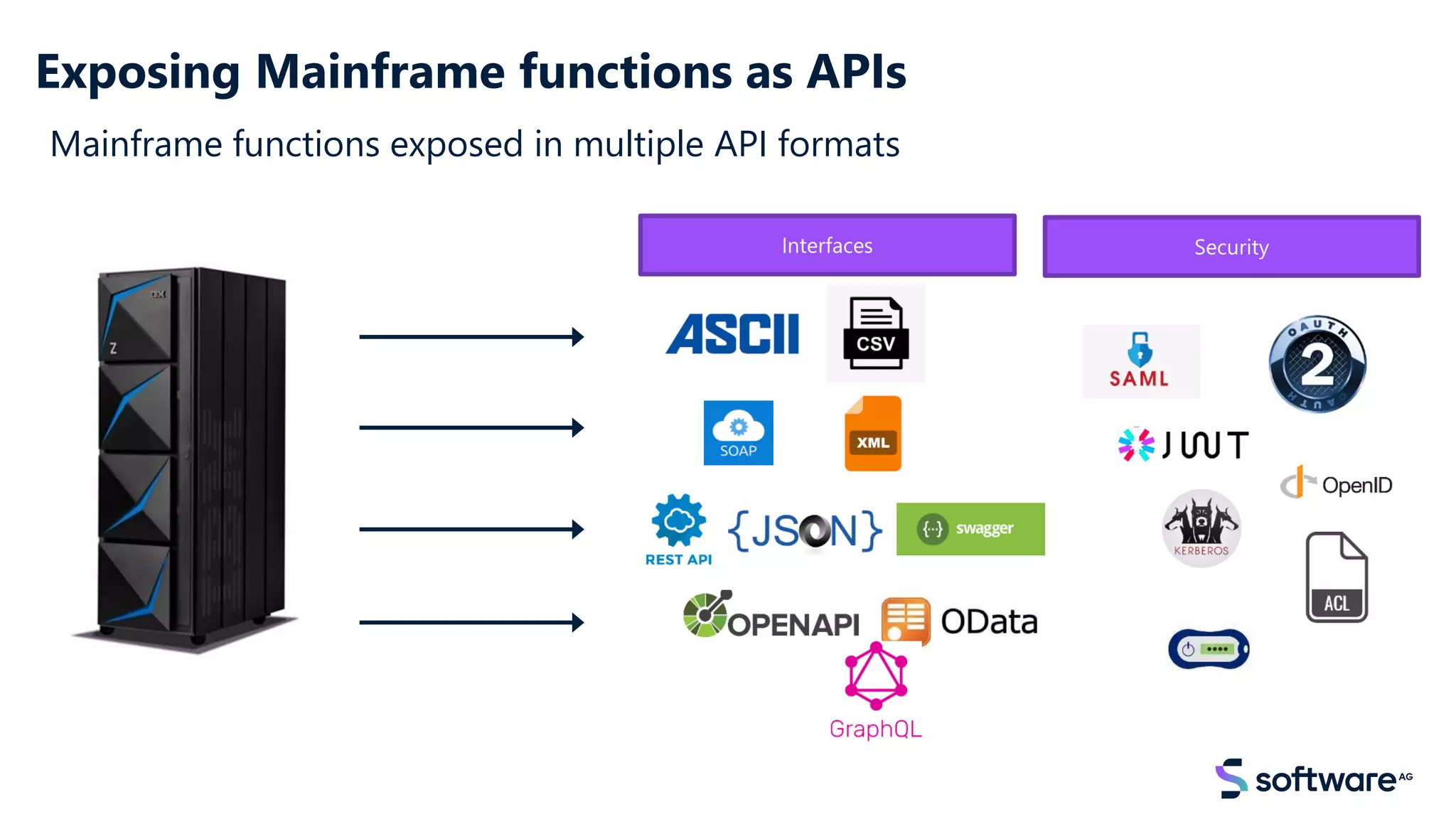

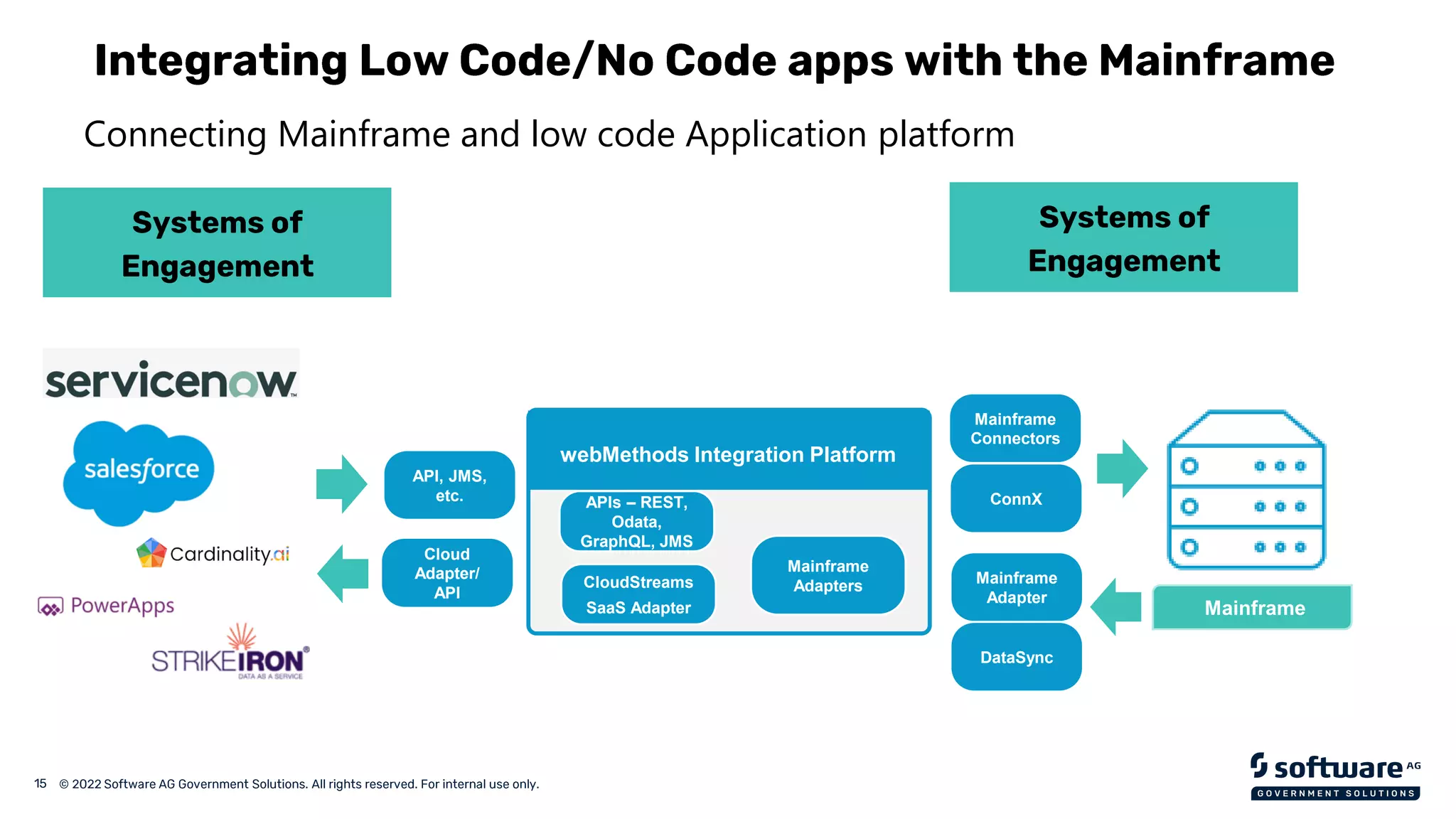

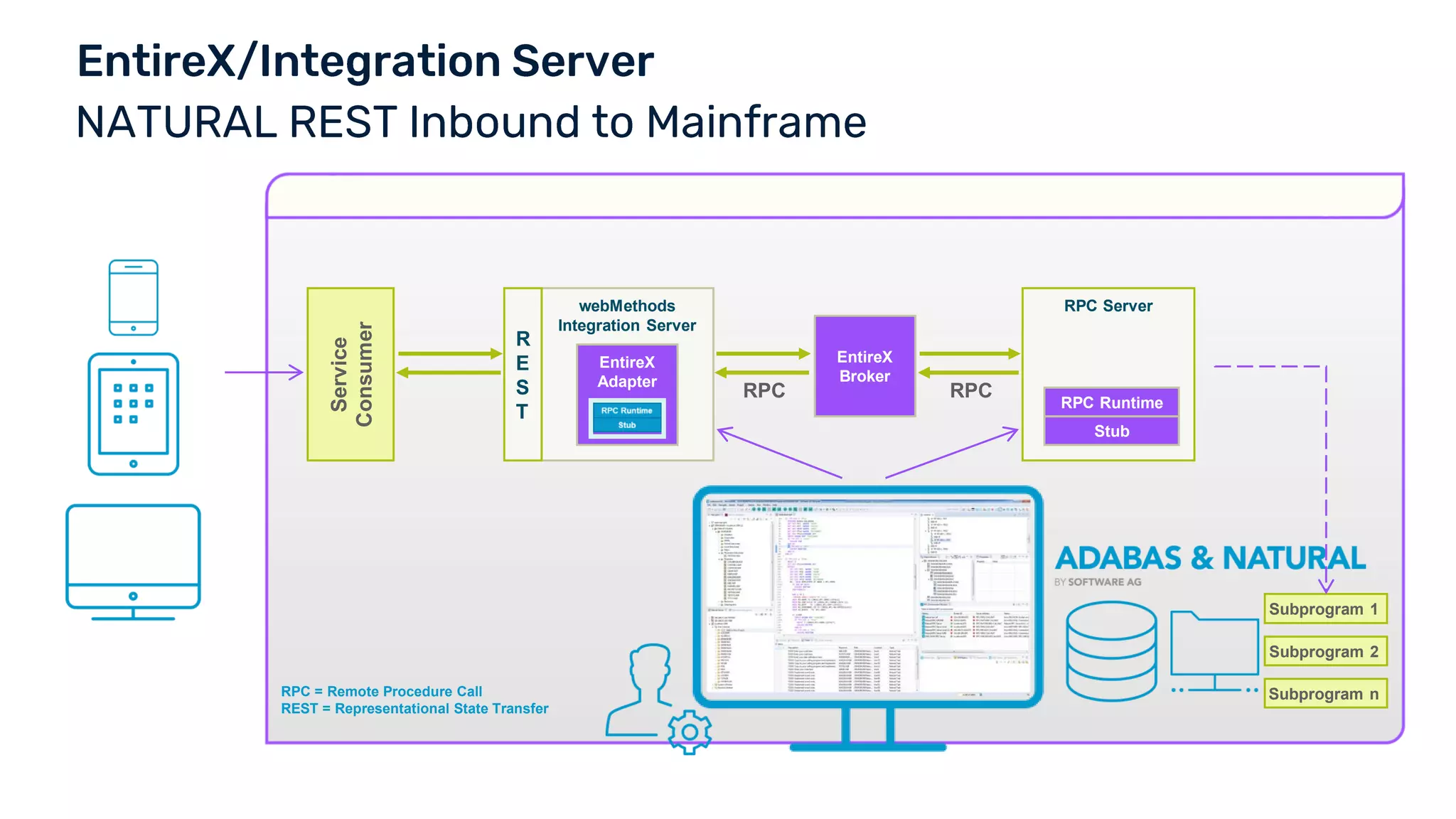

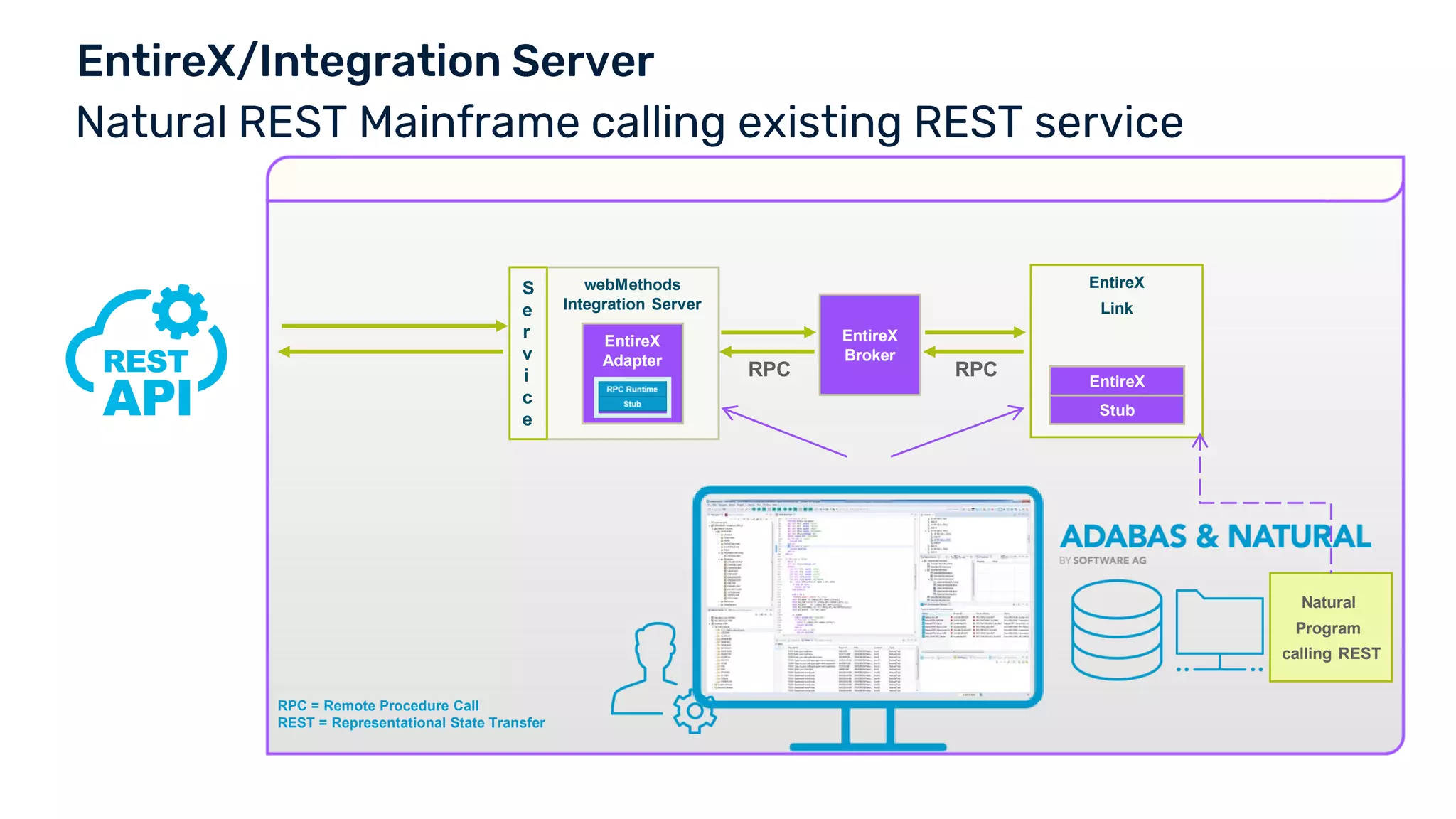

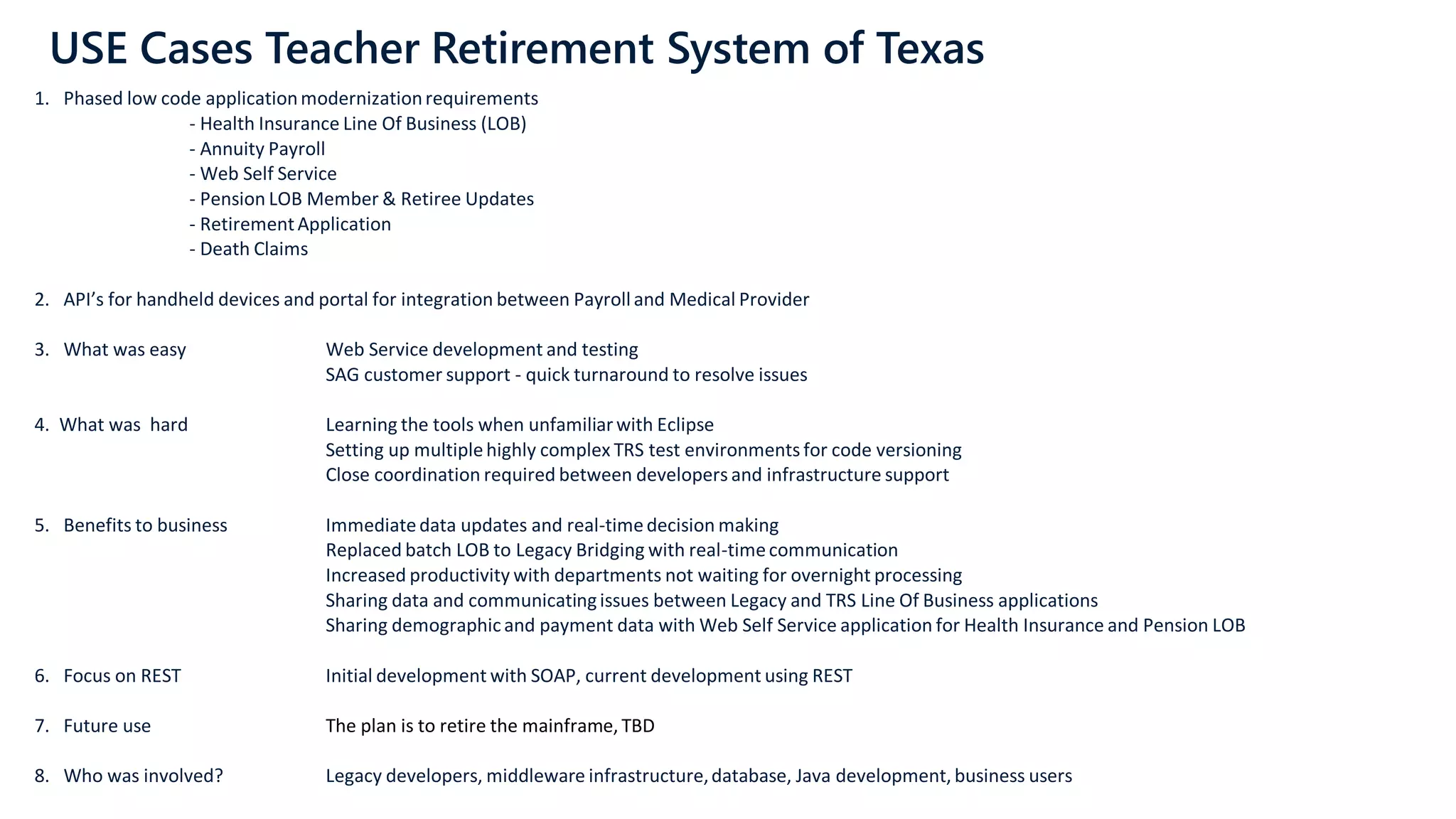

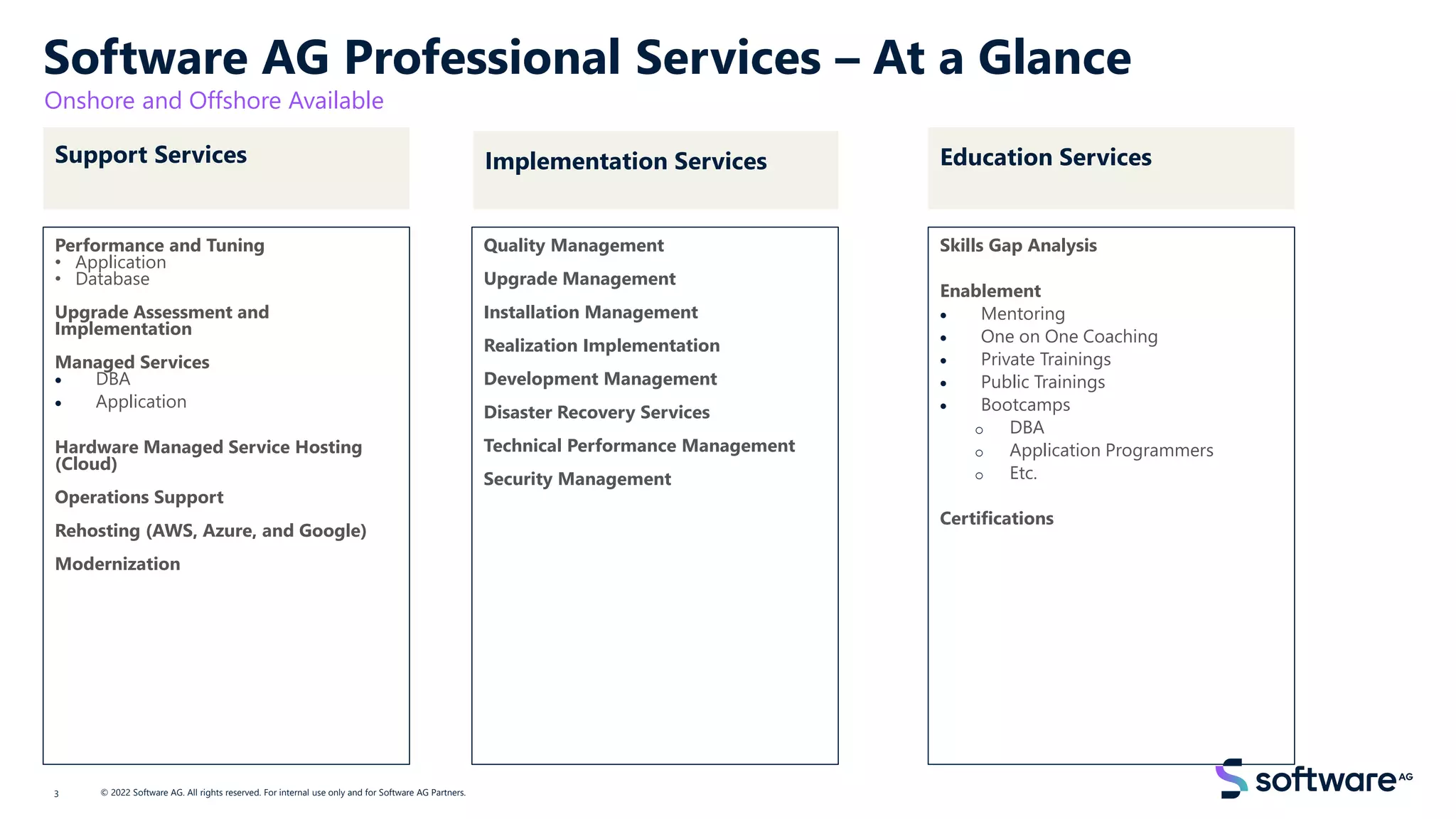

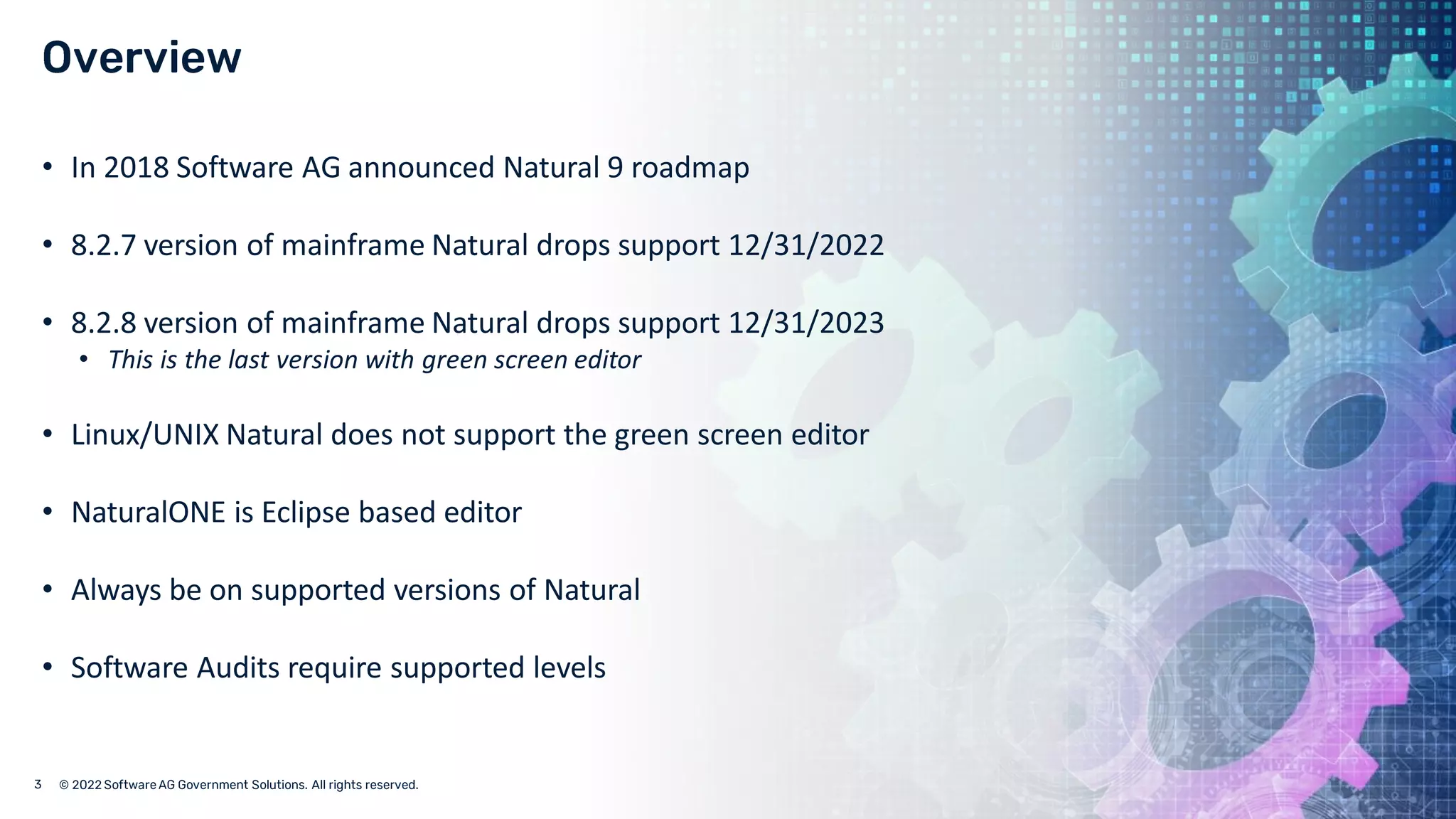

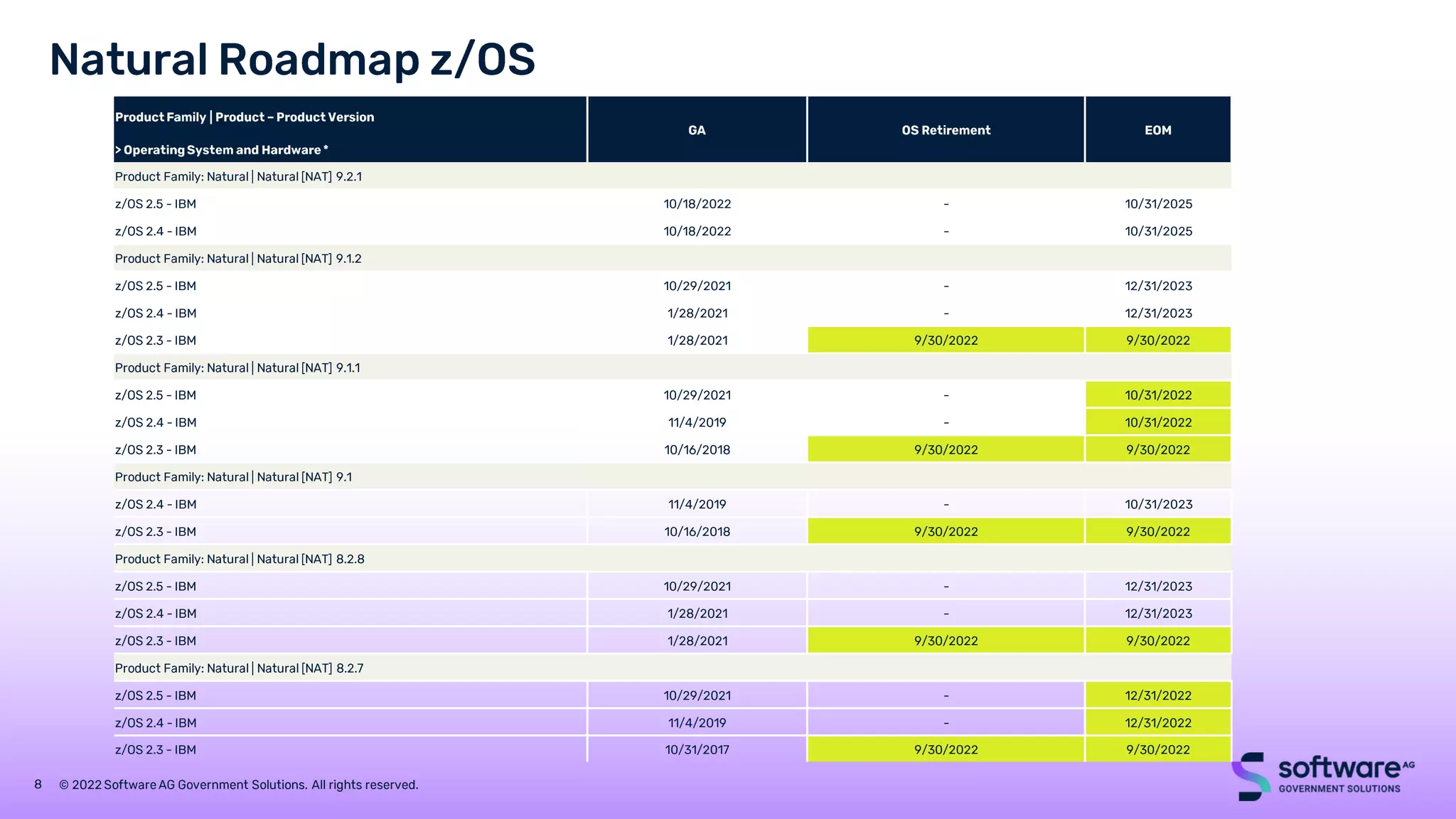

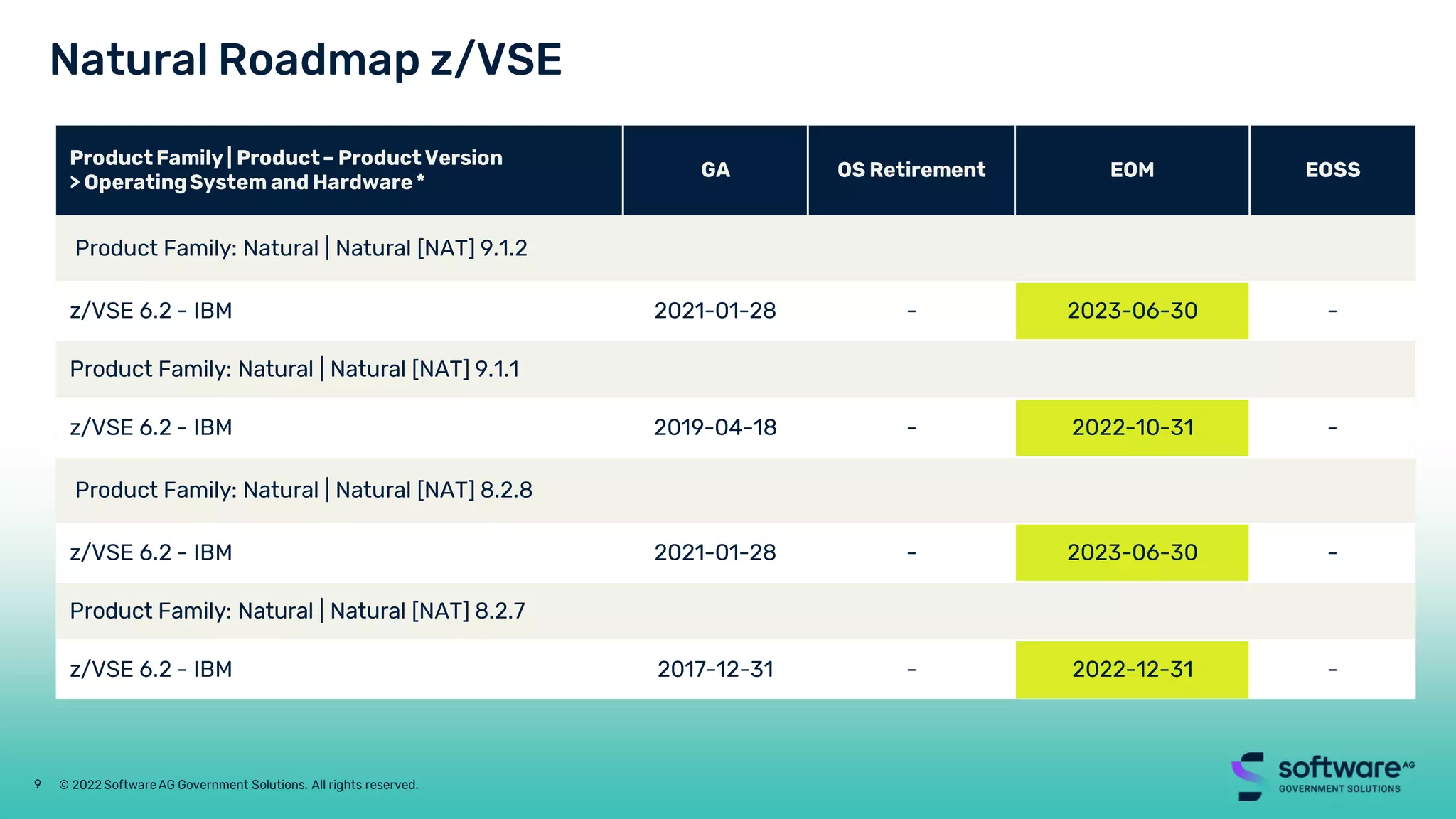

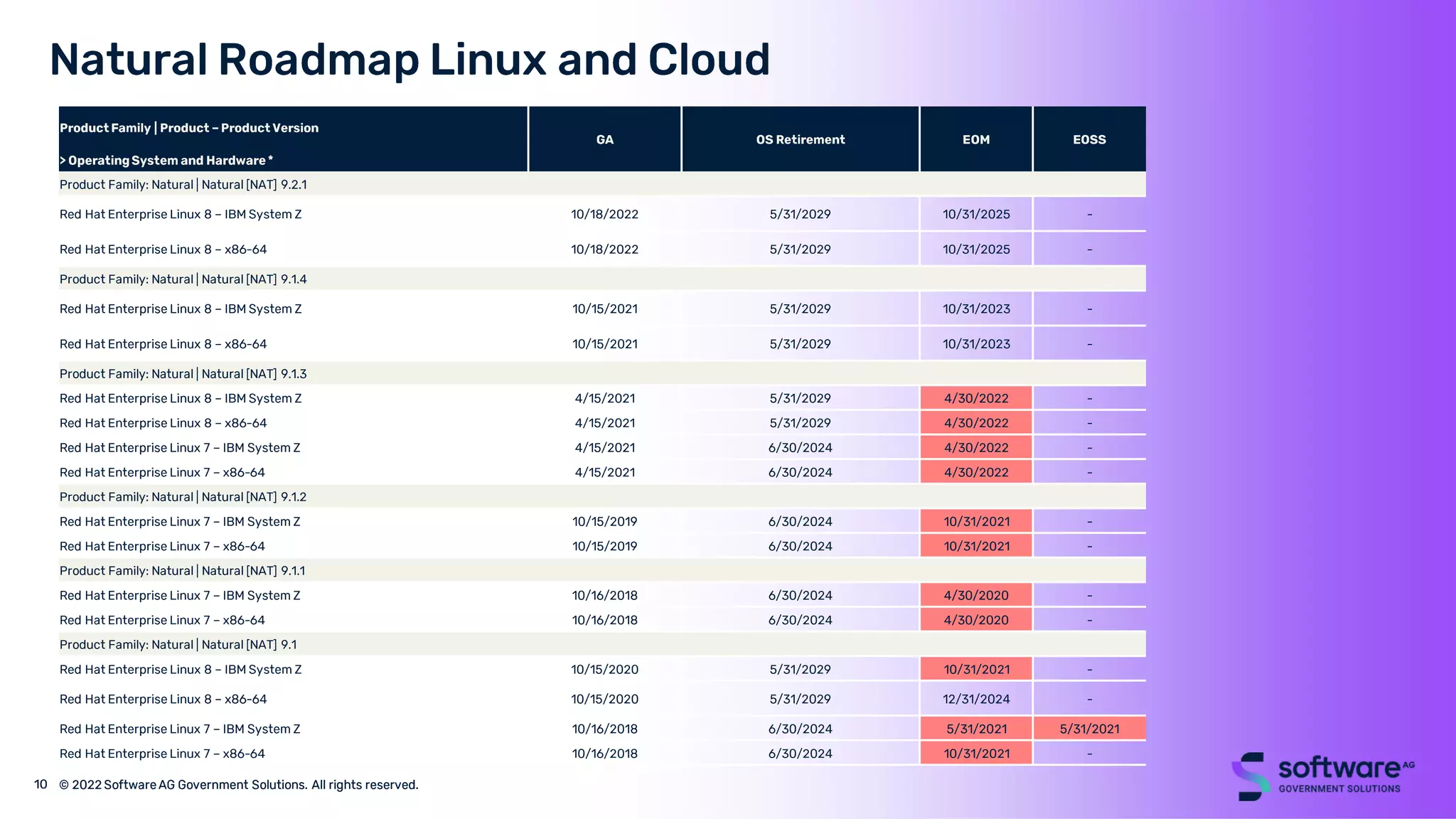

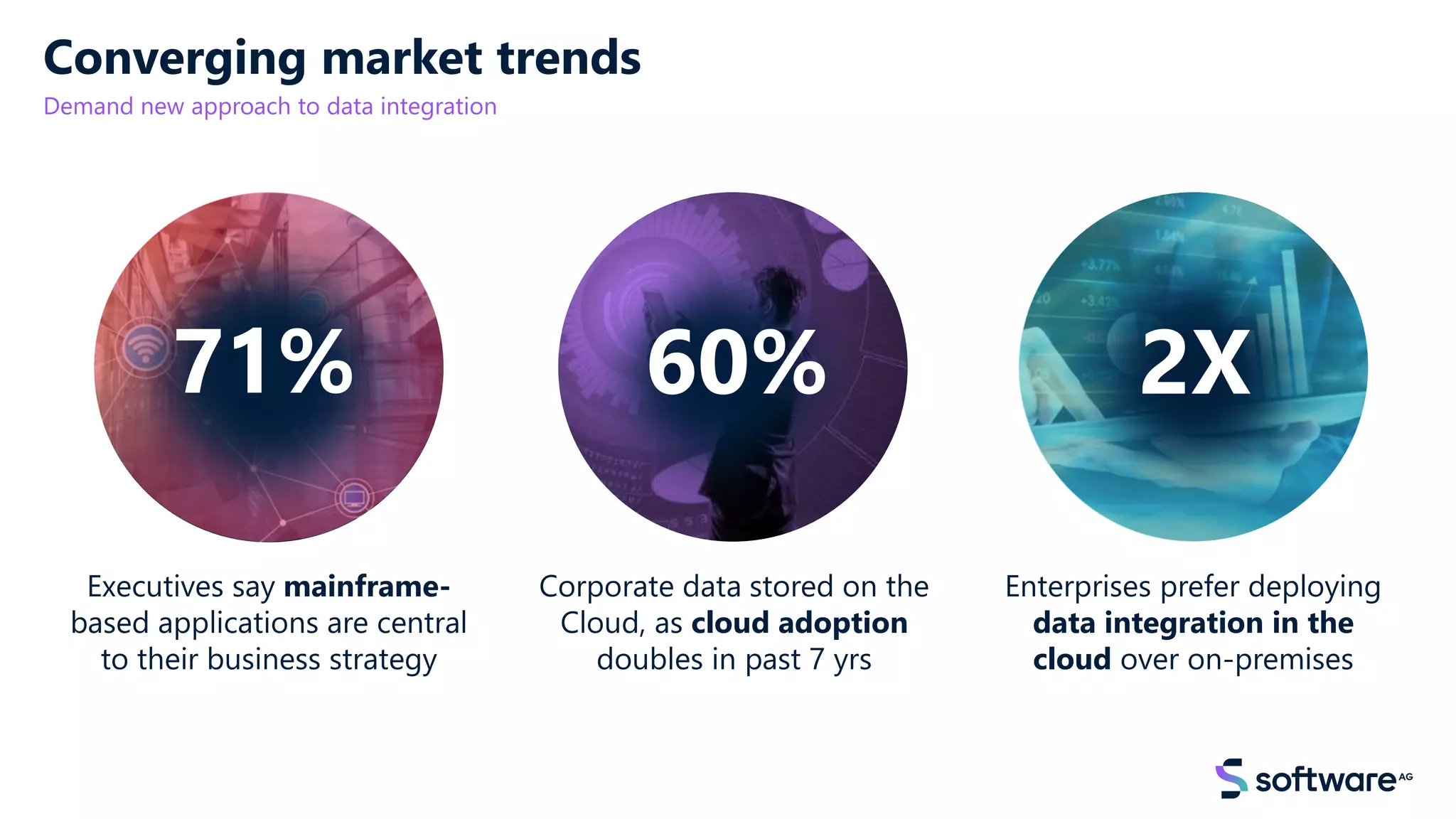

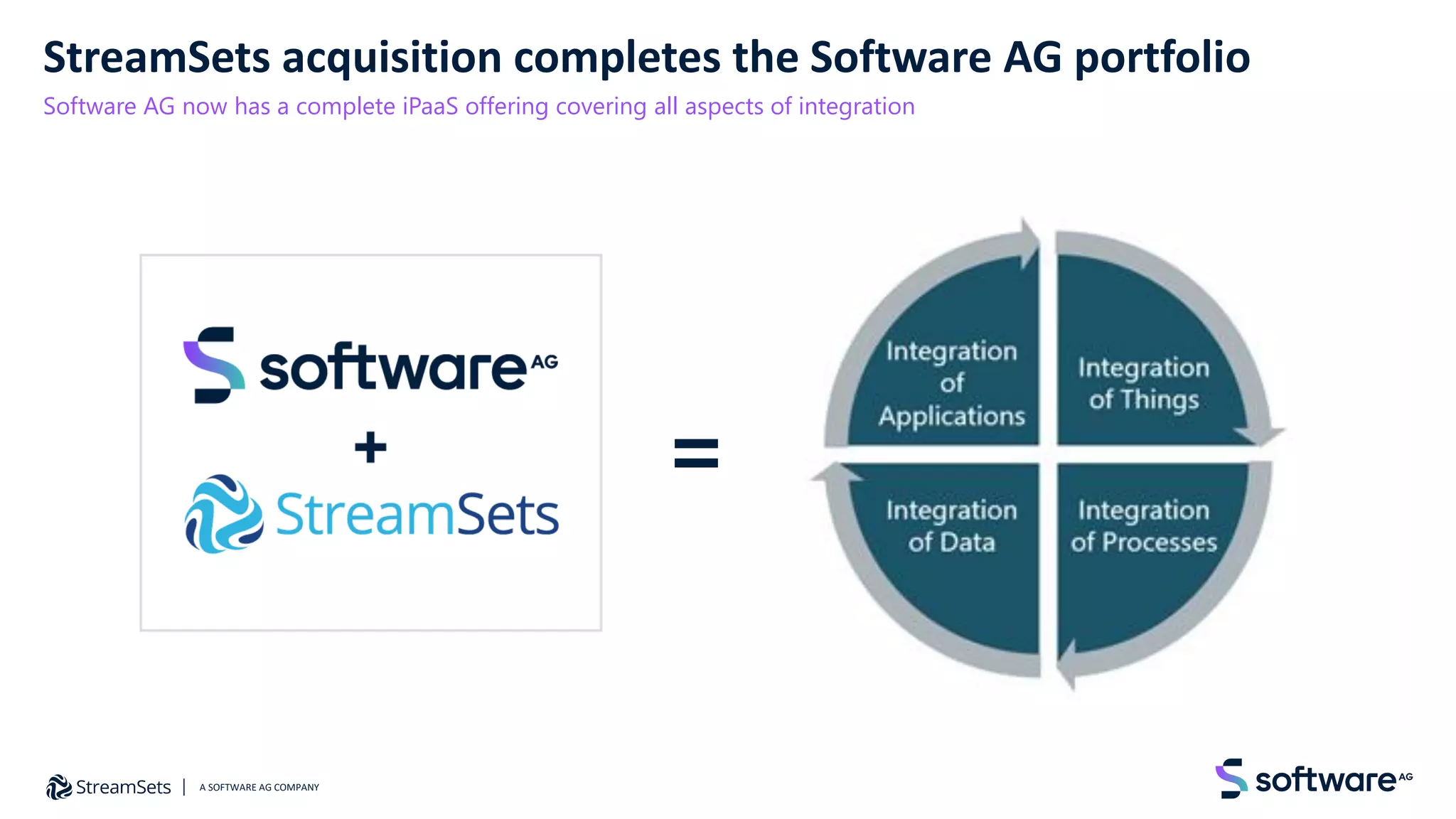

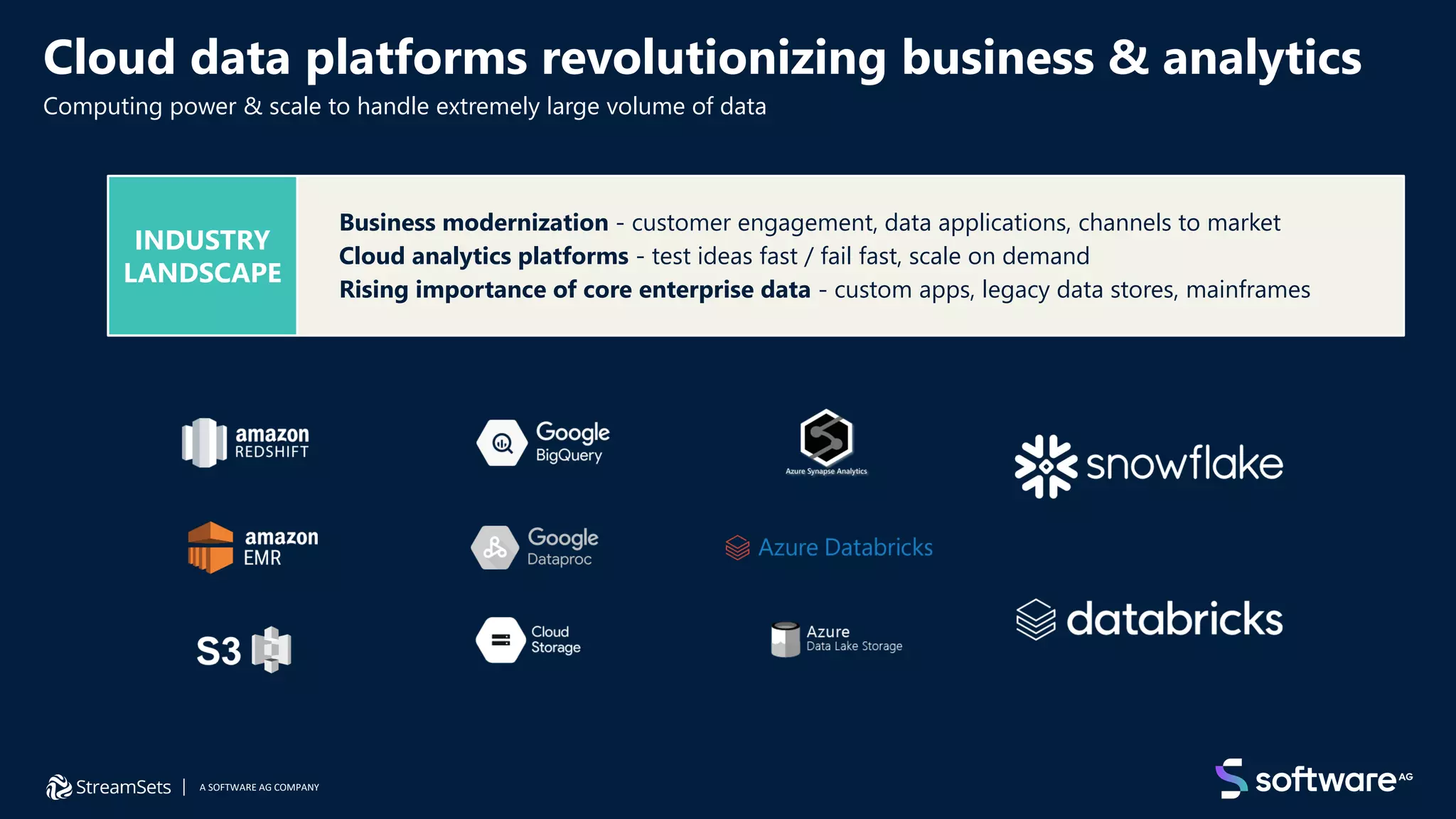

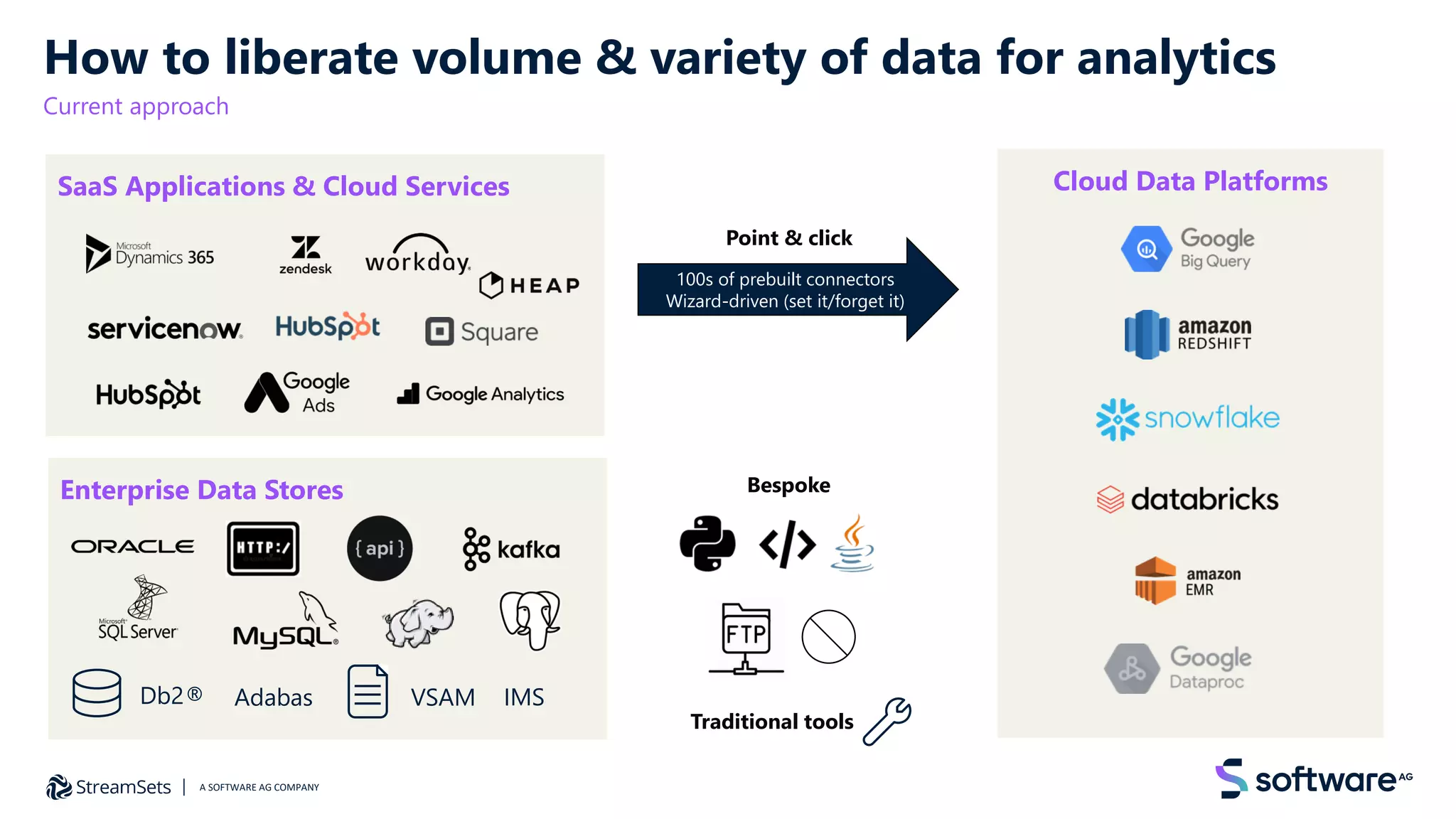

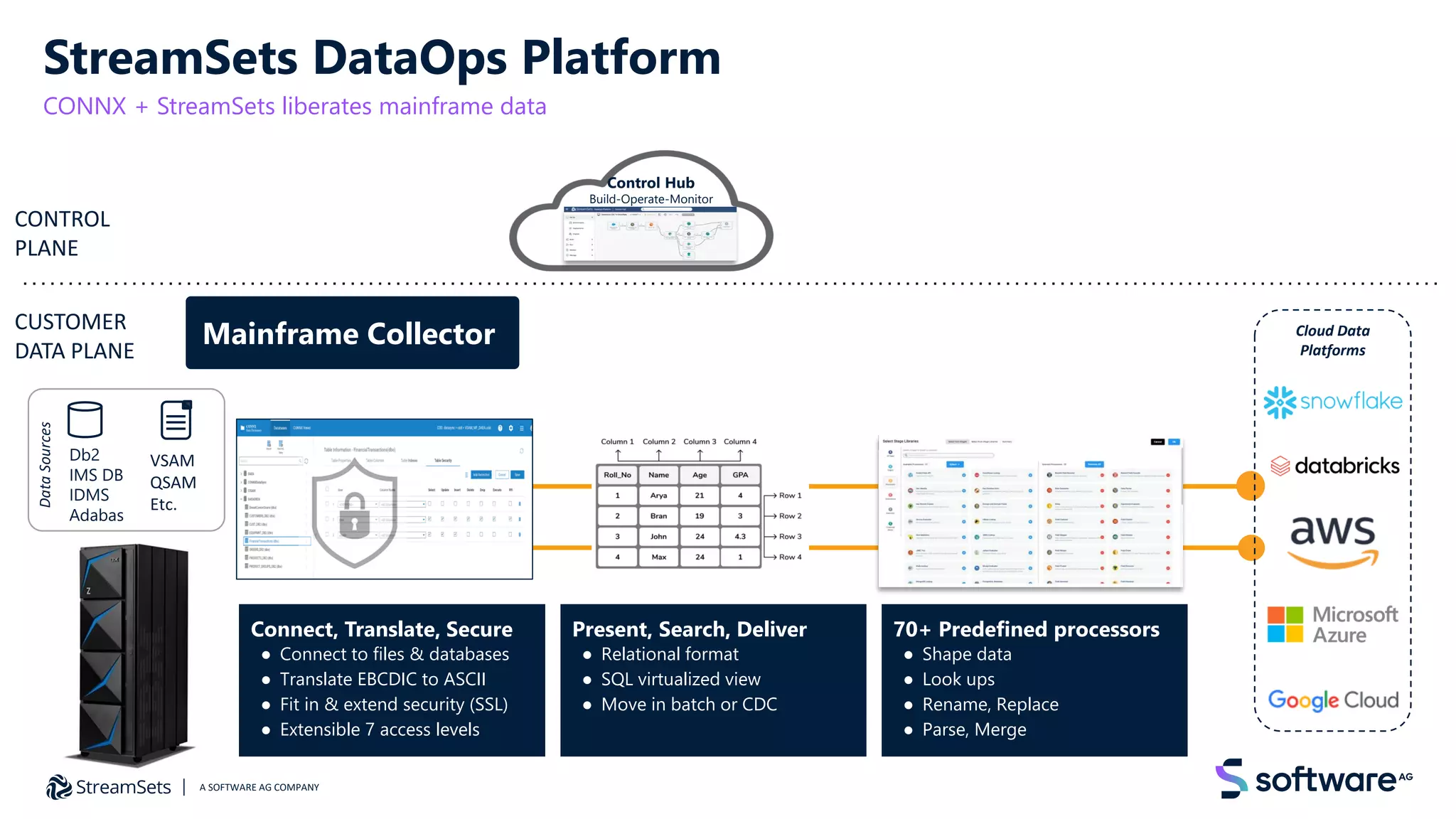

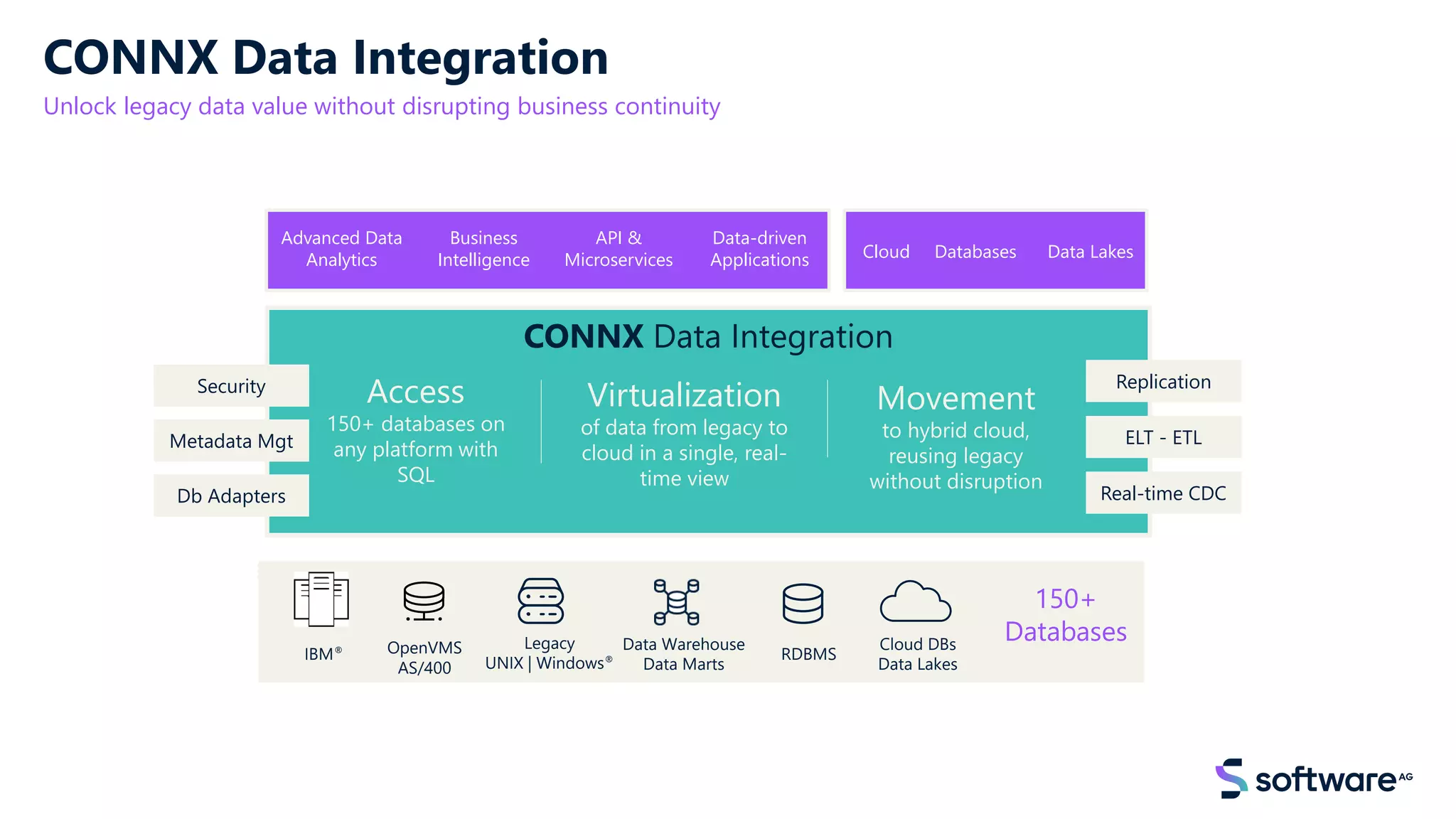

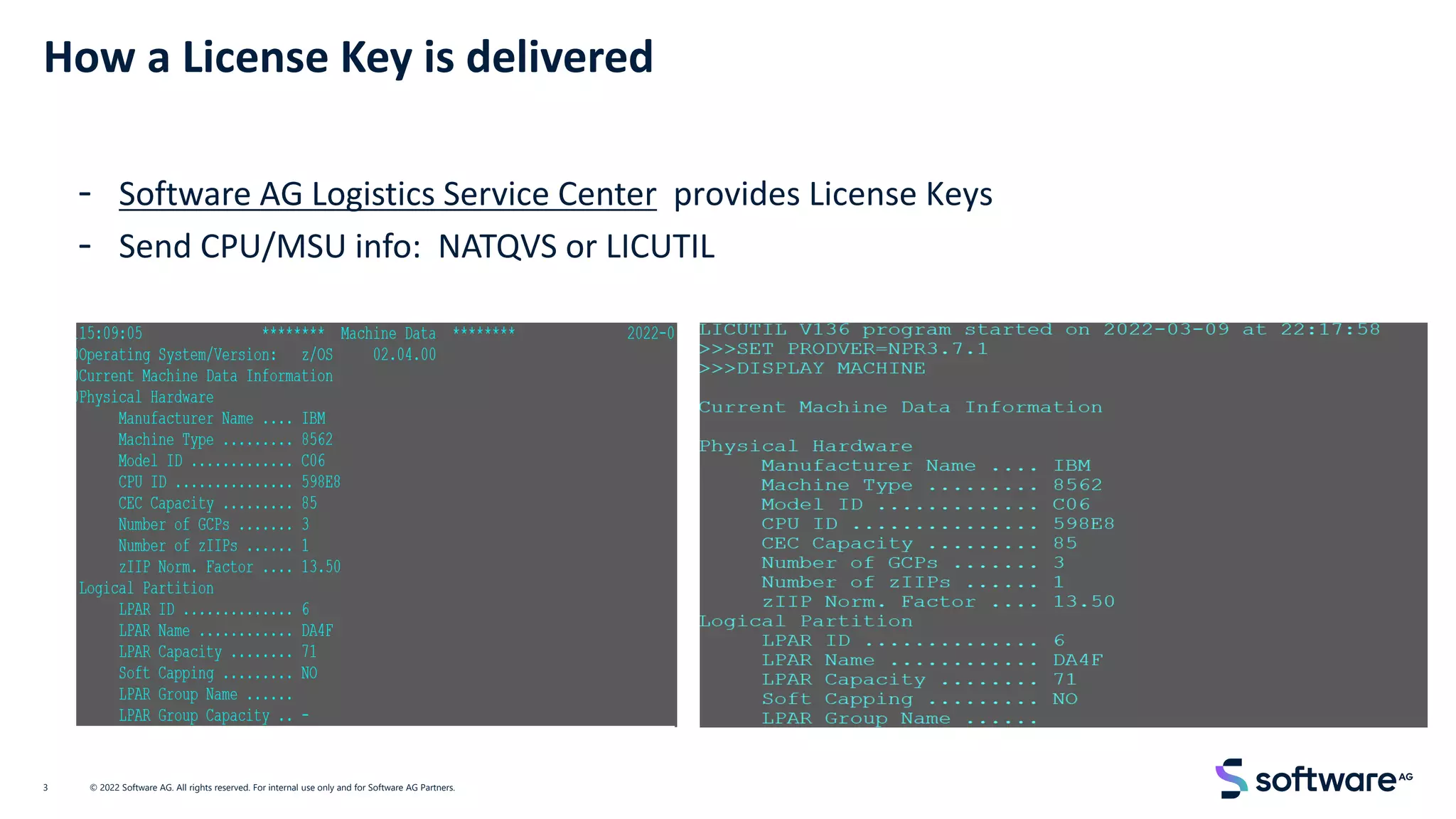

The North America Adabas & Natural User Group held a virtual meeting on November 17, 2022, covering innovations, mainframe modernization, and API implementation at various organizations. Key discussions focused on bridging skills gaps, end-of-support considerations for certain software versions, and strategies for data liberation and analytics. The meeting emphasized modernizing and securing business-critical applications and integrating newer technologies such as cloud and API management.