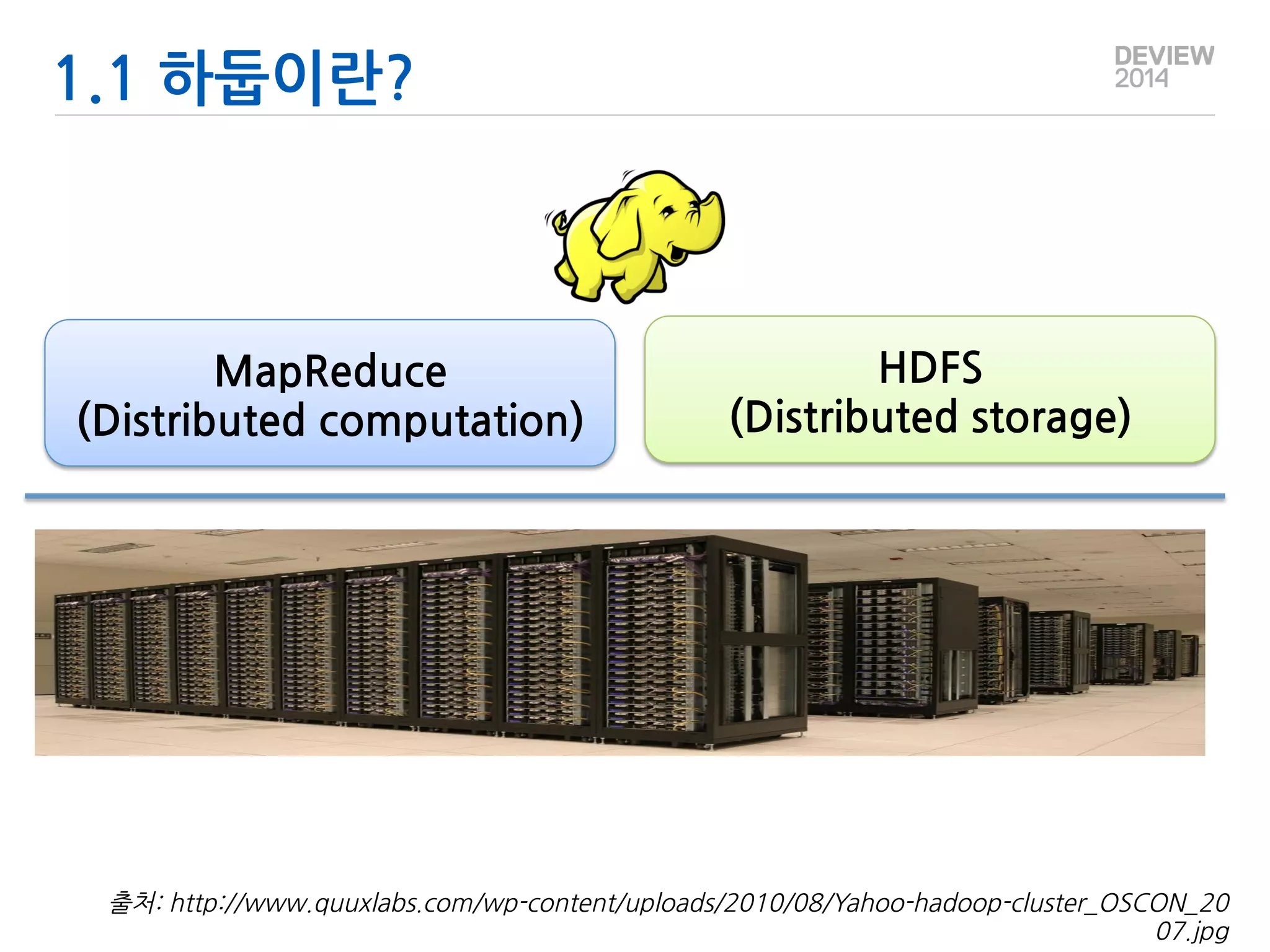

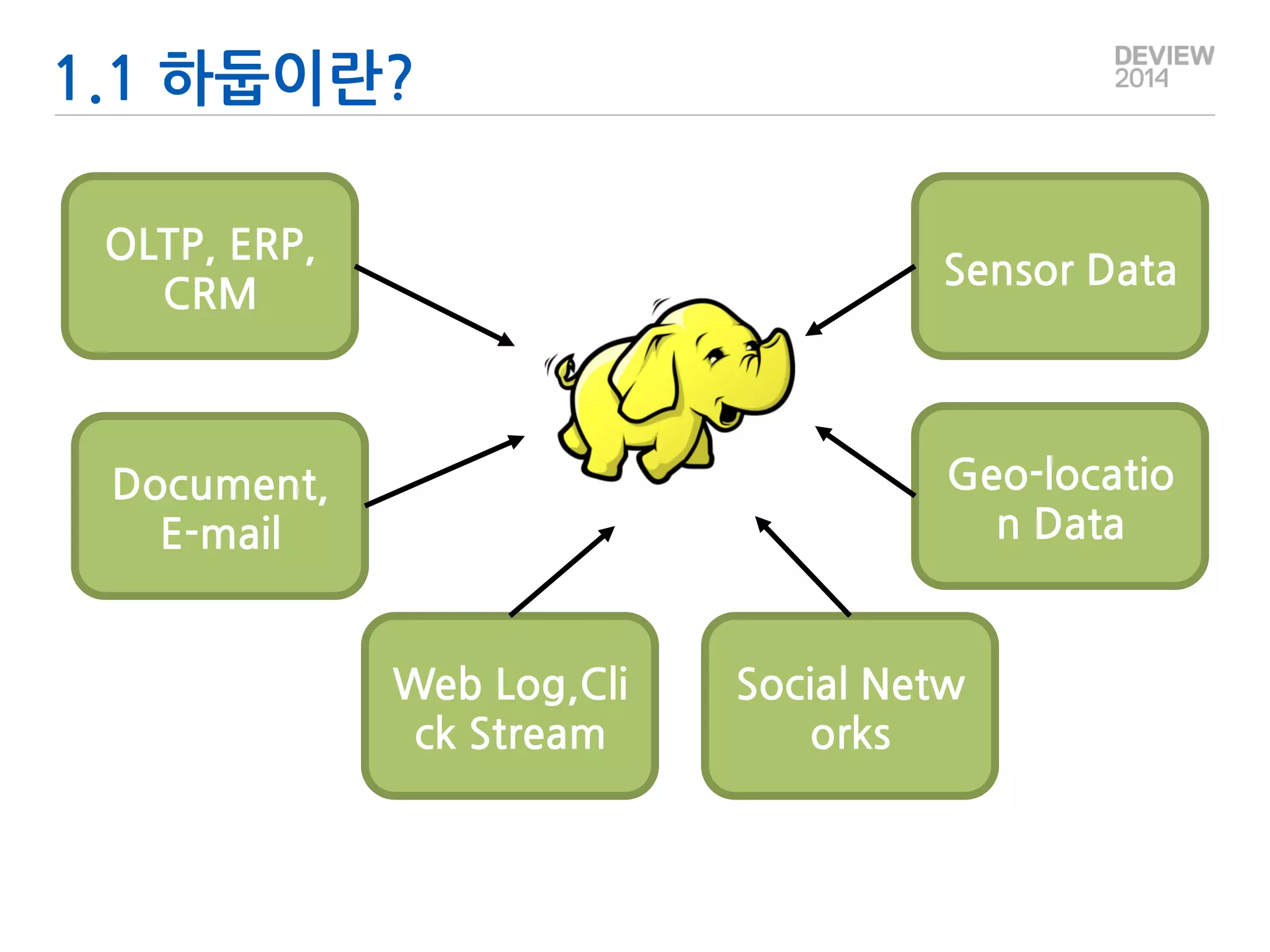

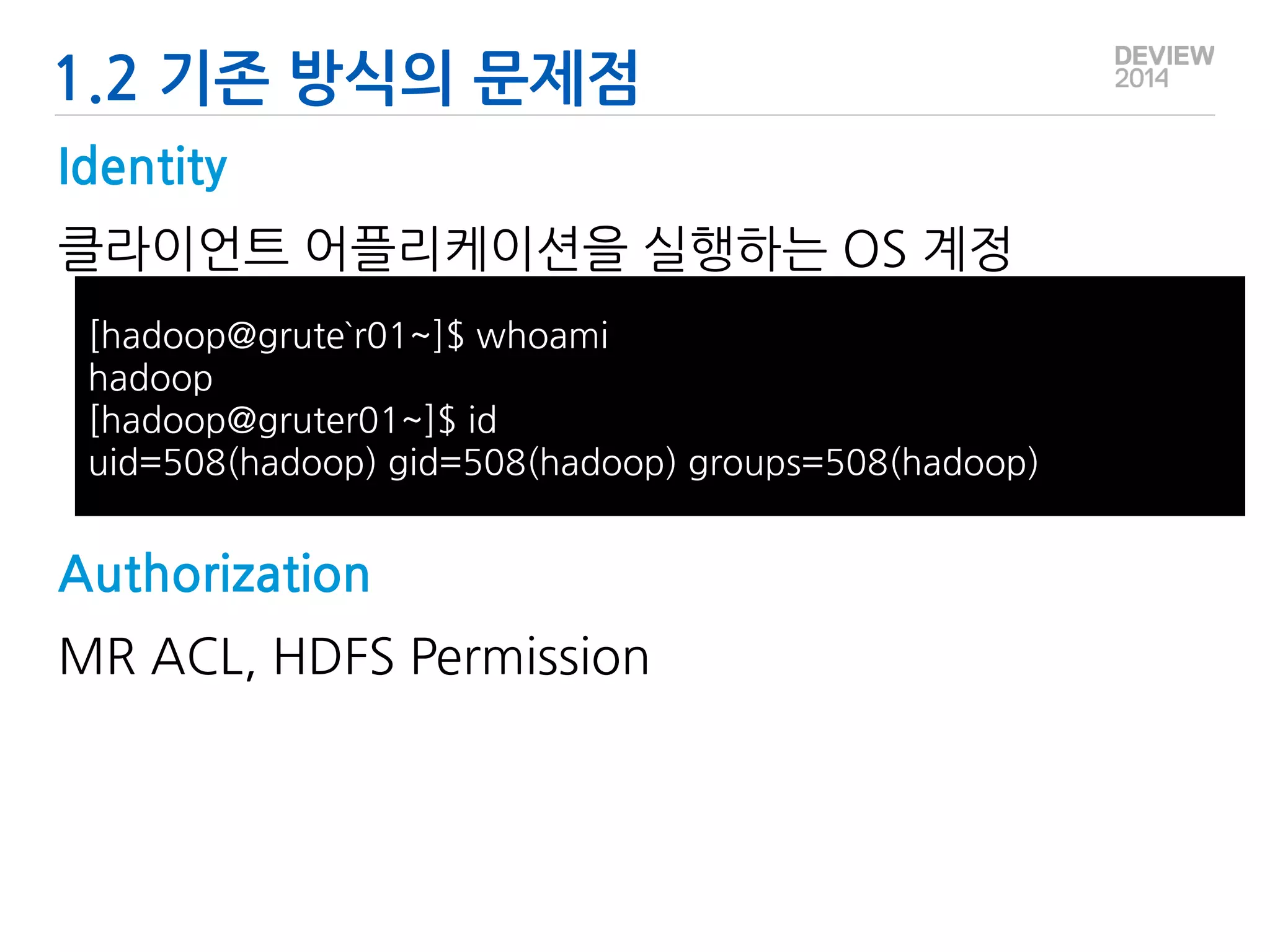

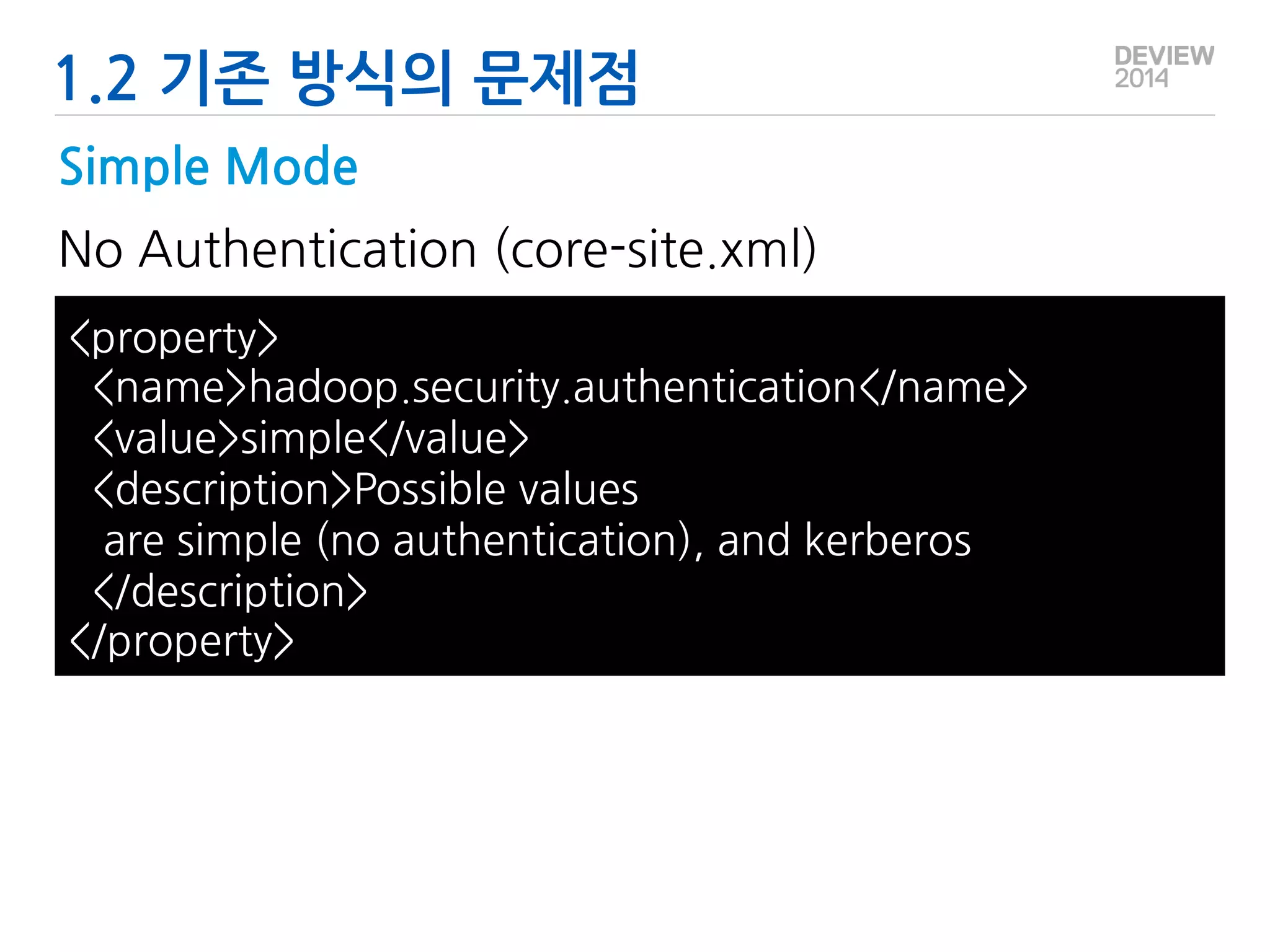

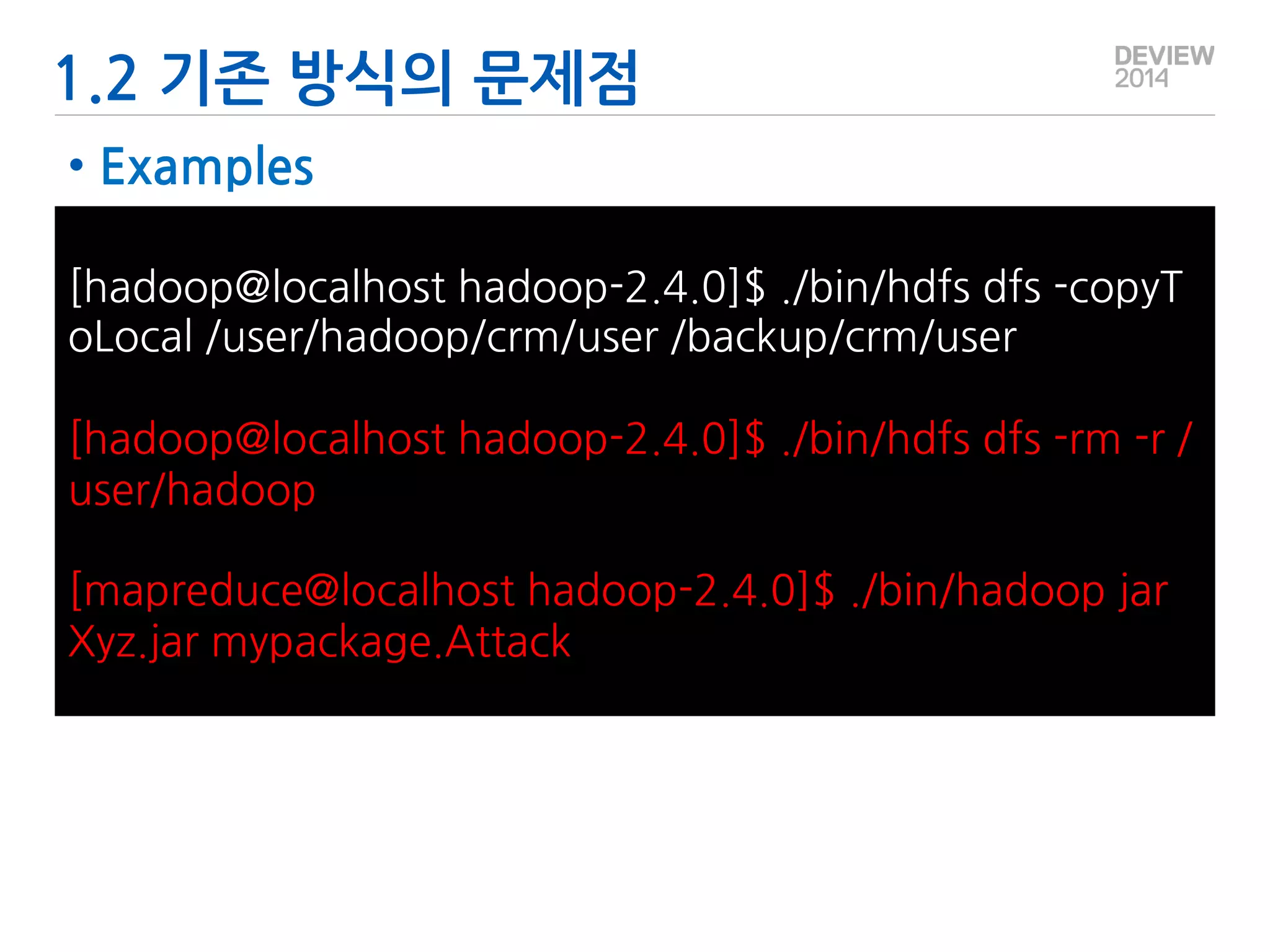

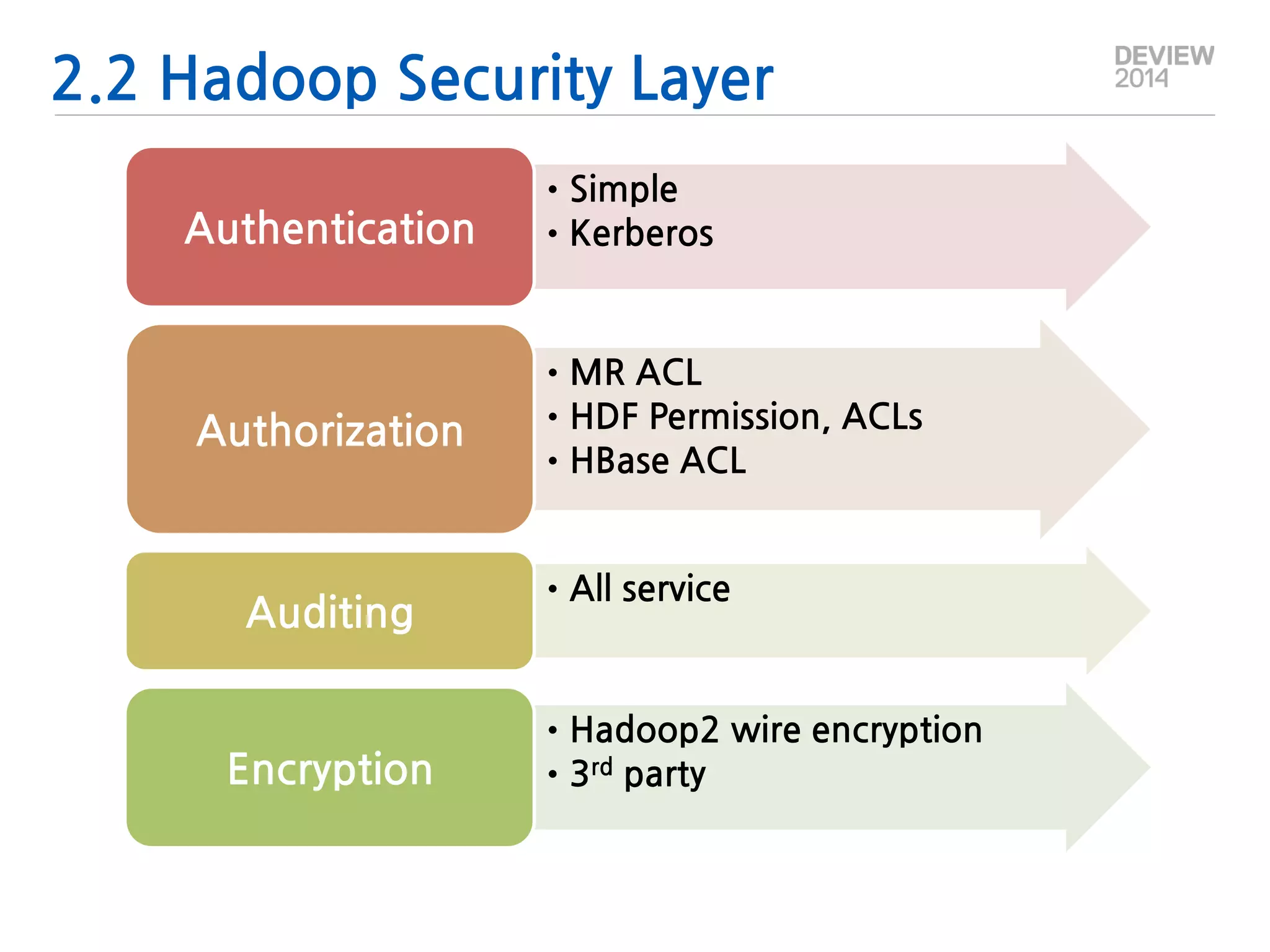

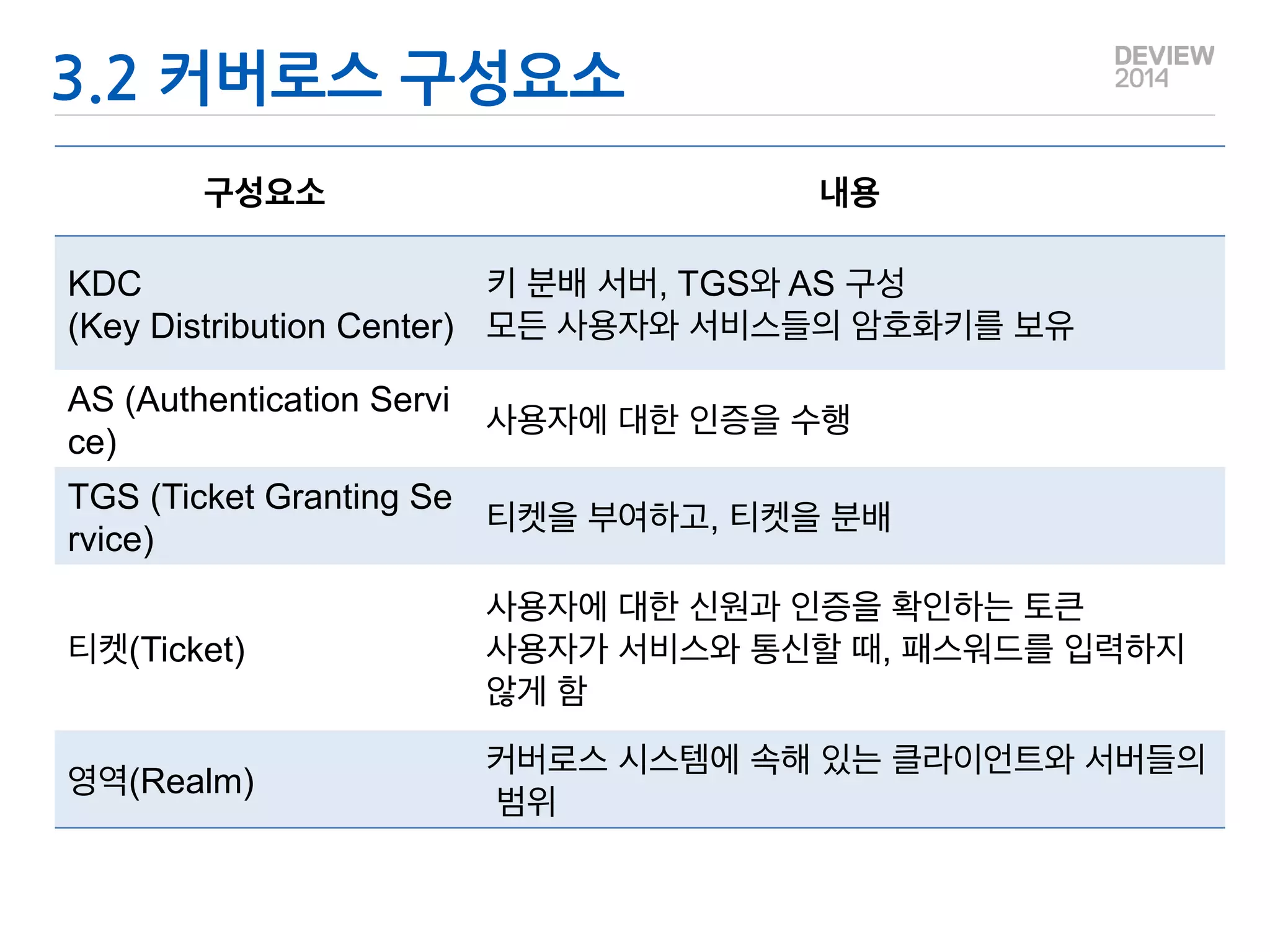

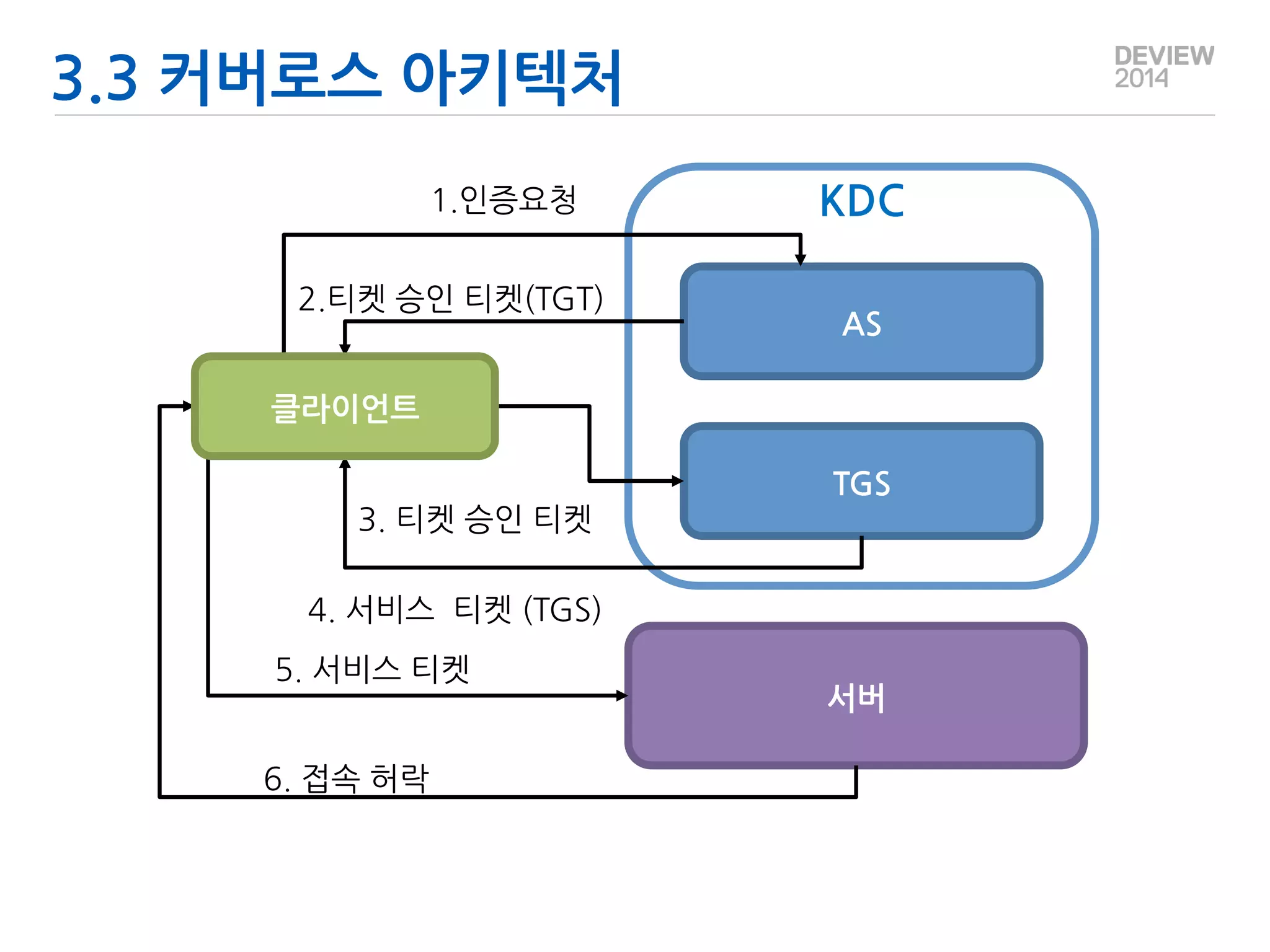

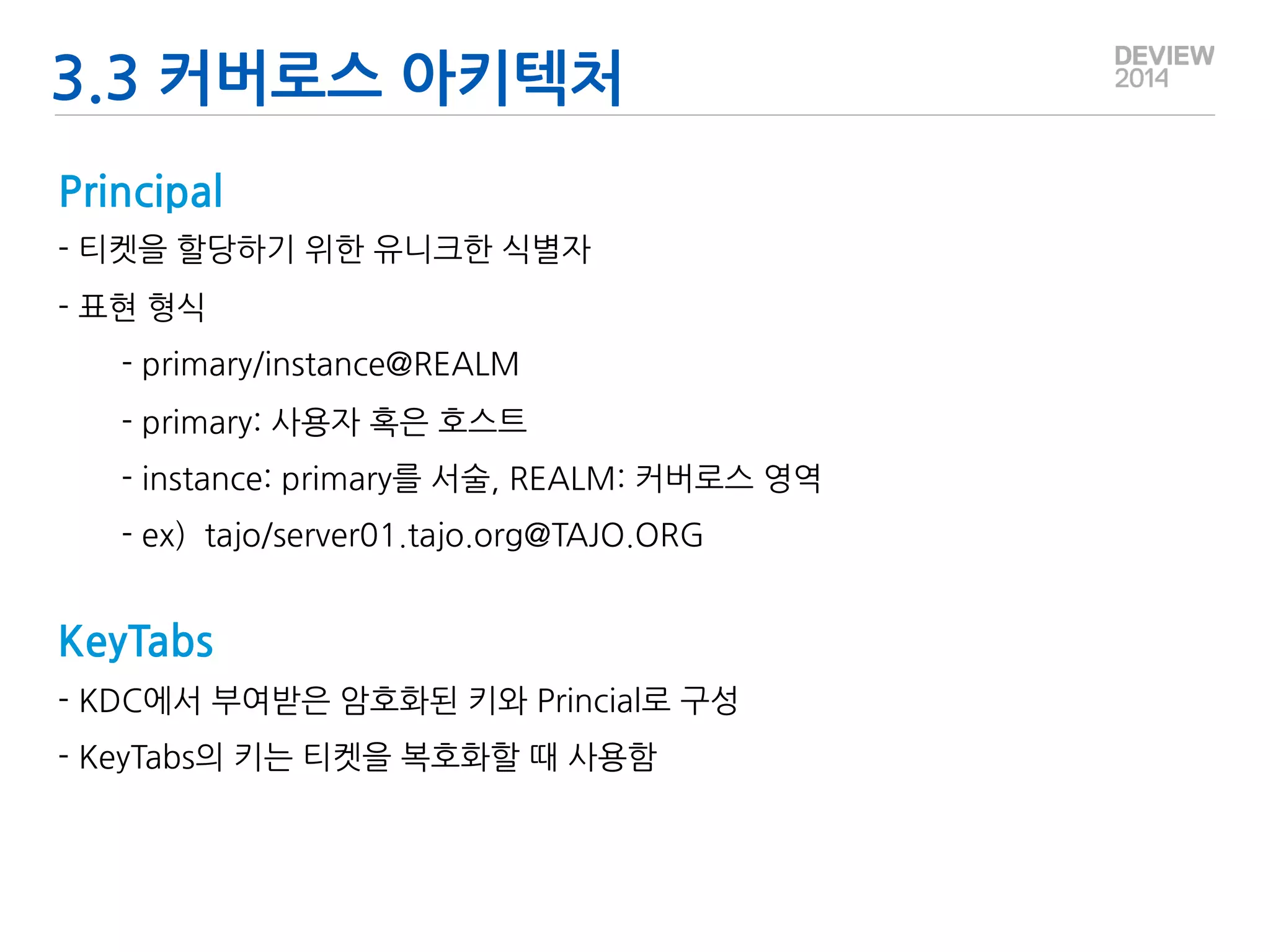

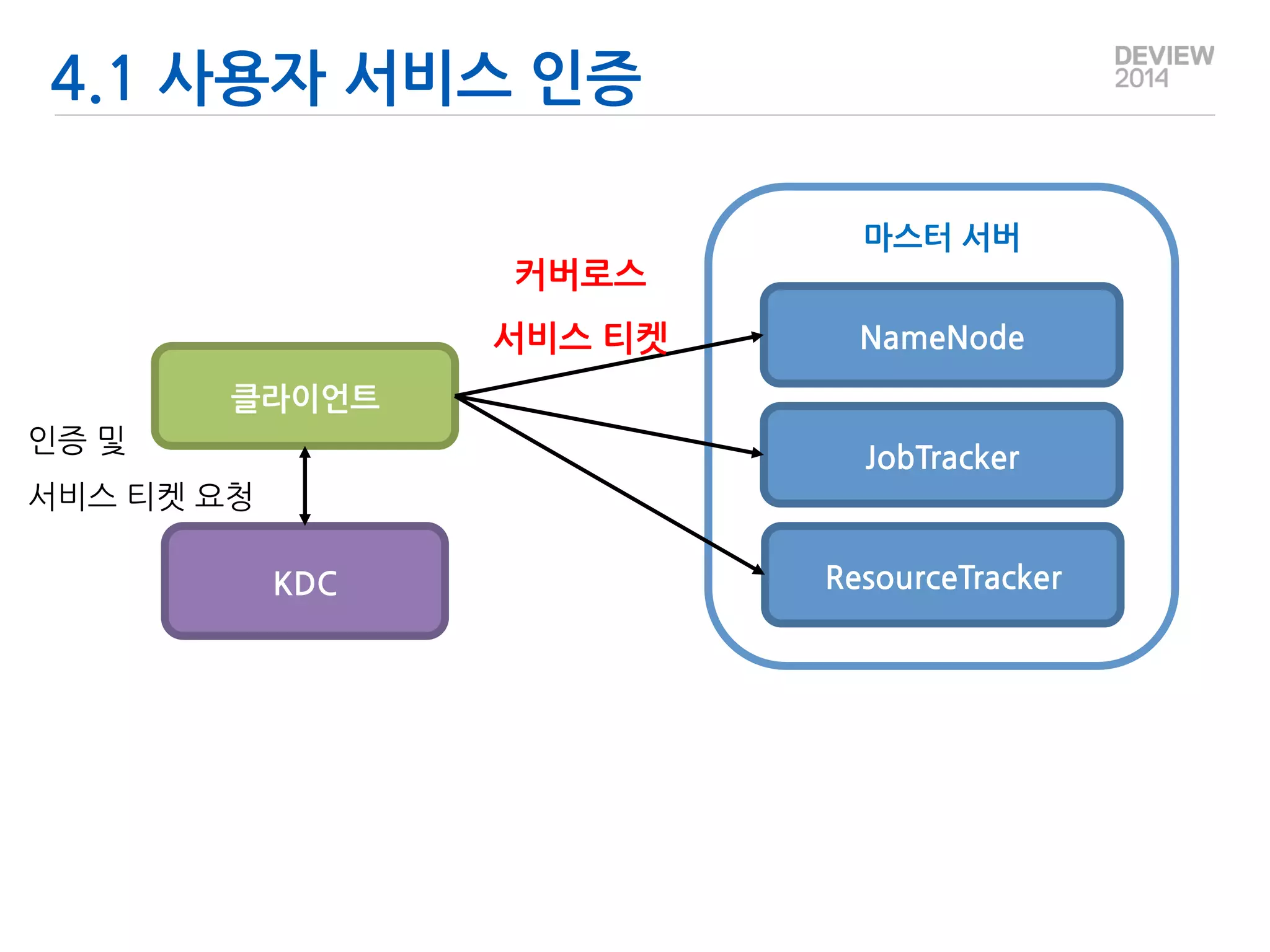

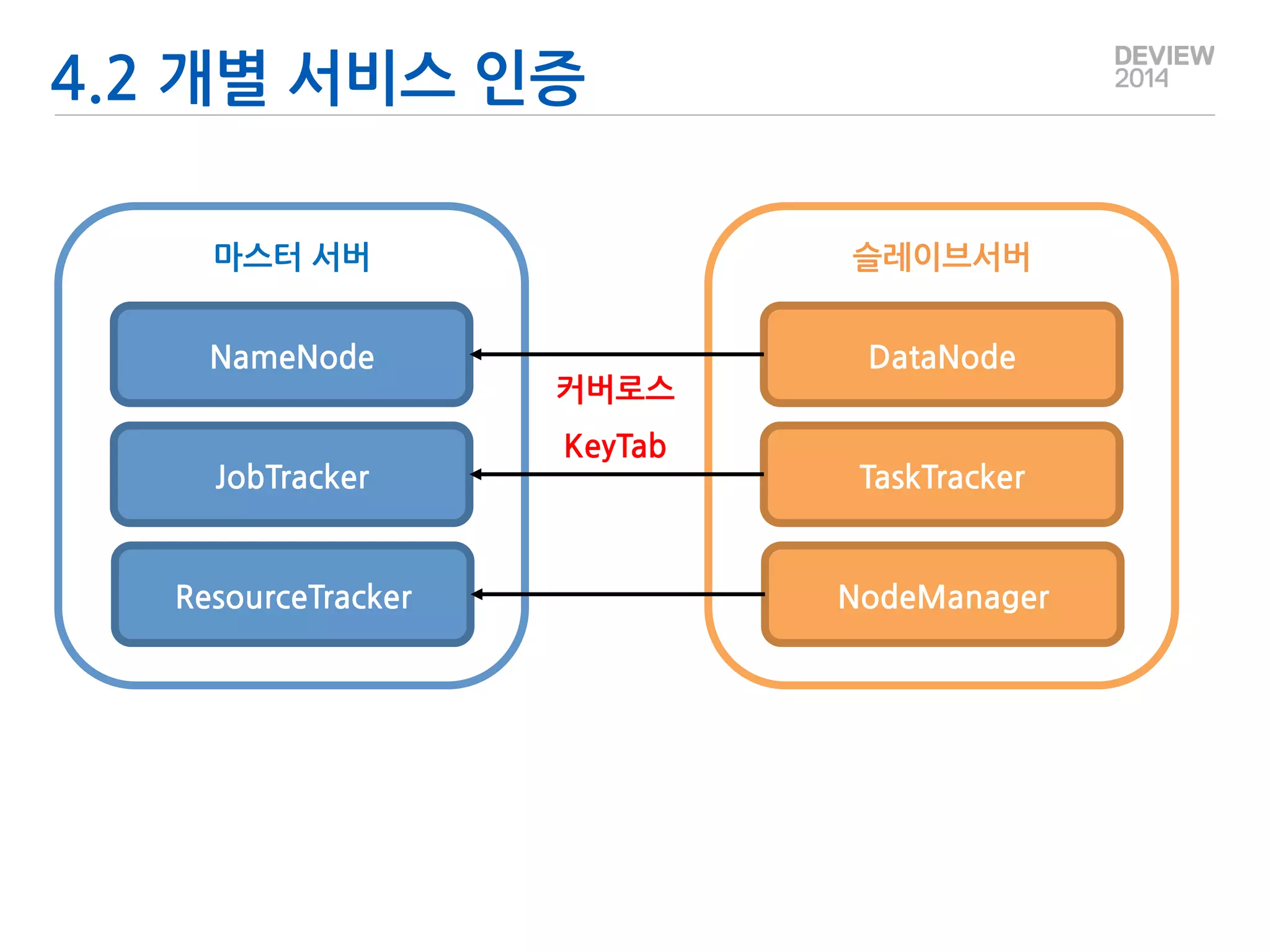

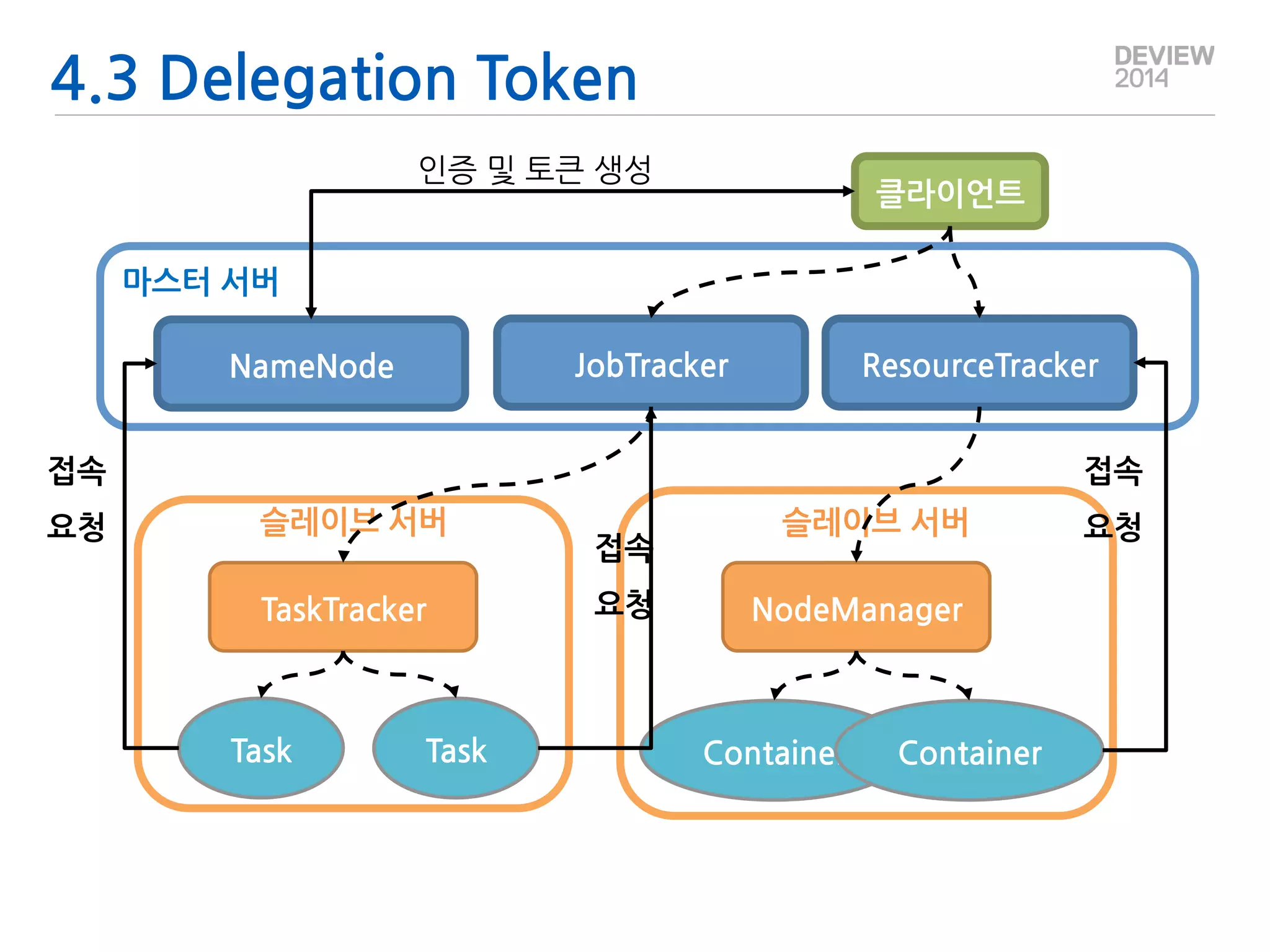

This document outlines the agenda for a presentation on Hadoop security: an overview of Hadoop, Hadoop security concepts, Kerberos, the Hadoop security design, and how to implement Hadoop security. The presenter is introduced as the lead of big data platform development at Gruter Inc. and an Apache Tajo committer.