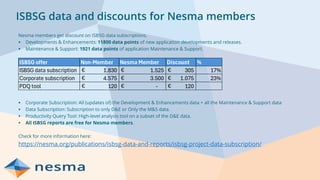

NESMA is an international non-profit organization focused on software measurement and metrics. It aims to improve the use and understanding of these practices across industries. The webinar covers benchmarking: its benefits, its process, and the metrics commonly used to assess and improve application development and maintenance performance. The key takeaway is that benchmarking helps an organization understand its own performance and identify areas for improvement.