Christoph Feichtenhofer; X3D: Expanding Architectures for Efficient Video Recognition , Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2020, pp. 203-213

https://openaccess.thecvf.com/content_CVPR_2020/html/Feichtenhofer_X3D_Expanding_Architectures_for_Efficient_Video_Recognition_CVPR_2020_paper.html

![!"#

n426

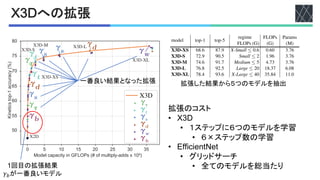

• ベースとなる26モデル

• 軸の探索をするモデル

• Tつの軸を全てCに設定

n426からTつの軸を探索

• フレームレート:𝛾!

• フレーム数:𝛾"

• 解像度:𝛾#

• チャネル幅:𝛾$

• ボトルネック幅:𝛾%

• 深さ:𝛾&

In relation to most of these works, our approach does

not assume a fixed inherited design from 2D networks, but

expands a tiny architecture across several axes in space, time,

channels and depth to achieve a good efficiency trade-off.

3. X3D Networks

Image classification architectures have gone through an

evolution of architecture design with progressively expand-

ng existing models along network depth [7,23,37,51,58,81],

nput resolution [27,57,60] or channel width [75,80]. Simi-

ar progress can be observed for the mobile image classifi-

cation domain where contracting modifications (shallower

networks, lower resolution, thinner layers, separable convo-

ution [24,25,29,48,82]) allowed operating at lower compu-

ational budget. Given this history in image ConvNet design,

a similar progress has not been observed for video architec-

ures as these were customarily based on direct temporal

extensions of image models. However, is single expansion

of a fixed 2D architecture to 3D ideal, or is it better to expand

stage filters output sizes T×S2

data layer stride γτ , 12

1γt×(112γs)2

conv1 1×32, 3×1, 24γw 1γt×(56γs)2

res2

1×12, 24γbγw

3×32, 24γbγw

1×12, 24γw

×γd 1γt×(28γs)2

res3

1×12, 48γbγw

3×32, 48γbγw

1×12, 48γw

×2γd 1γt×(14γs)2

res4

1×12, 96γbγw

3×32, 96γbγw

1×12, 96γw

×5γd 1γt×(7γs)2

res5

1×12, 192γbγw

3×32, 192γbγw

1×12, 192γw

×3γd 1γt×(4γs)2

conv5 1×12, 192γbγw 1γt×(4γs)2

pool5 1γt×(4γs)2 1×1×1

fc1 1×12, 2048 1×1×1

fc2 1×12, #classes 1×1×1

𝛾!が1なので!"](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-4-320.jpg)

![!$#性能比較

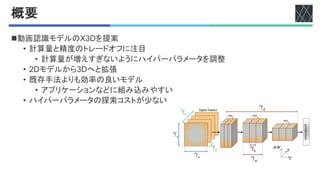

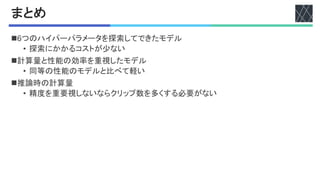

model pre top-1 top-5 test GFLOPs×views Param

I3D [6]

ImageNet

71.1 90.3 80.2 108 × N/A 12M

Two-Stream I3D [6] 75.7 92.0 82.8 216 × N/A 25M

Two-Stream S3D-G [76] 77.2 93.0 143 × N/A 23.1M

MF-Net [9] 72.8 90.4 11.1 × 50 8.0M

TSM R50 [41] 74.7 N/A 65 × 10 24.3M

Nonlocal R50 [68] 76.5 92.6 282 × 30 35.3M

Nonlocal R101 [68] 77.7 93.3 83.8 359 × 30 54.3M

Two-Stream I3D [6] - 71.6 90.0 216 × NA 25.0M

R(2+1)D [64] - 72.0 90.0 152 × 115 63.6M

Two-Stream R(2+1)D [64] - 73.9 90.9 304 × 115 127.2M

Oct-I3D + NL [8] - 75.7 N/A 28.9 × 30 33.6M

ip-CSN-152 [63] - 77.8 92.8 109 × 30 32.8M

SlowFast 4×16, R50 [14] - 75.6 92.1 36.1 × 30 34.4M

SlowFast 8×8, R101 [14] - 77.9 93.2 84.2 106 × 30 53.7M

SlowFast 8×8, R101+NL [14] - 78.7 93.5 84.9 116 × 30 59.9M

SlowFast 16×8, R101+NL [14] - 79.8 93.9 85.7 234 × 30 59.9M

X3D-M - 76.0 92.3 82.9 6.2 × 30 3.8M

X3D-L - 77.5 92.9 83.8 24.8 × 30 6.1M

X3D-XL - 79.1 93.9 85.3 48.4 × 30 11.0M

Table 4. Comparison to the state-of-the-art on K400-val & test.

We report the inference cost with a single “view" (temporal clip with

spatial crop) × the numbers of such views used (GFLOPs×views).

“N/A” indicates the numbers are not available for us. The “test”

model pretrain mAP GFLOPs×views Param

Nonlocal [68] ImageNet+Kinetics400 37.5 544 × 30 54.3M

STRG, +NL [69] ImageNet+Kinetics400 39.7 630 × 30 58.3M

Timeception [28] Kinetics-400 41.1 N/A×N/A N/A

LFB, +NL [71] Kinetics-400 42.5 529 × 30 122M

SlowFast, +NL [14] Kinetics-400 42.5 234 × 30 59.9M

SlowFast, +NL [14] Kinetics-600 45.2 234 × 30 59.9M

X3D-XL Kinetics-400 43.4 48.4 × 30 11.0M

X3D-XL Kinetics-600 47.1 48.4 × 30 11.0M

Table 6. Comparison with the state-of-the-art on Charades.

SlowFast variants are based on T×τ = 16×8.

model data top-1 top-5 FLOPs (G) Params (M)

EfficientNet3D-B0

K400

66.7 86.6 0.74 3.30

X3D-XS

val

68.6 (+1.9) 87.9 (+1.3) 0.60 (−1.4) 3.76 (+0.5)

EfficientNet3D-B3 72.4 89.6 6.91 8.19

X3D-M 74.6 (+2.2) 91.7 (+2.1) 4.73 (−2.2) 3.76 (−4.4)

EfficientNet3D-B4 74.5 90.6 23.80 12.16

X3D-L 76.8 (+2.3) 92.5 (+1.9) 18.37 (−5.4) 6.08 (−6.1)

EfficientNet3D-B0

K400

64.8 85.4 0.74 3.30

X3D-XS

test

66.6 (+1.8) 86.8 (+1.4) 0.60 (−1.4) 3.76 (+0.5)

EfficientNet3D-B3 69.9 88.1 6.91 8.19

X3D-M 73.0 (+2.1) 90.8 (+2.7) 4.73 (−2.2) 3.76 (−4.4)

EfficientNet3D-B4 71.8 88.9 23.80 12.16

X3D-XL - 79.1 93.9 85.3 48.4 × 30 11.0M

Table 4. Comparison to the state-of-the-art on K400-val & test.

We report the inference cost with a single “view" (temporal clip with

spatial crop) × the numbers of such views used (GFLOPs×views).

“N/A” indicates the numbers are not available for us. The “test”

column shows average of top1 and top5 on the Kinetics-400 testset.

model pretrain top-1 top-5 GFLOPs×views Param

I3D [3] - 71.9 90.1 108 × N/A 12M

Oct-I3D + NL [8] ImageNet 76.0 N/A 25.6 × 30 12M

SlowFast 4×16, R50 [14] - 78.8 94.0 36.1 × 30 34.4M

SlowFast 16×8, R101+NL [14] - 81.8 95.1 234 × 30 59.9M

X3D-M - 78.8 94.5 6.2 × 30 3.8M

X3D-XL - 81.9 95.5 48.4 × 30 11.0M

Table 5. Comparison with the state-of-the-art on Kinetics-600.

Results are consistent with K400 in Table 4 above.

Kinetics-600 is a larger version of Kinetics that shall

demonstrate further generalization of our approach. Re-

sults are shown in Table 5. Our variants demonstrate similar

performance as above, with the best model now providing

slightly better performance than the previous state-of-the-

art SlowFast 16×8, R101+NL [14], again for 4.8× fewer

FLOPs (i.e. multiply-add operations) and 5.5× fewer param-

eter. In the lower computation regime, X3D-M is compara-

ble to SlowFast 4×16, R50 but requires 5.8× fewer FLOPs

and 9.1× fewer parameters.

Charades [49] is a dataset with longer range activities. Ta-

model pre top-1 top-5 test GFLOPs×views Param

I3D [6]

ImageNet

71.1 90.3 80.2 108 × N/A 12M

Two-Stream I3D [6] 75.7 92.0 82.8 216 × N/A 25M

Two-Stream S3D-G [76] 77.2 93.0 143 × N/A 23.1M

MF-Net [9] 72.8 90.4 11.1 × 50 8.0M

TSM R50 [41] 74.7 N/A 65 × 10 24.3M

Nonlocal R50 [68] 76.5 92.6 282 × 30 35.3M

Nonlocal R101 [68] 77.7 93.3 83.8 359 × 30 54.3M

Two-Stream I3D [6] - 71.6 90.0 216 × NA 25.0M

R(2+1)D [64] - 72.0 90.0 152 × 115 63.6M

Two-Stream R(2+1)D [64] - 73.9 90.9 304 × 115 127.2M

Oct-I3D + NL [8] - 75.7 N/A 28.9 × 30 33.6M

ip-CSN-152 [63] - 77.8 92.8 109 × 30 32.8M

SlowFast 4×16, R50 [14] - 75.6 92.1 36.1 × 30 34.4M

SlowFast 8×8, R101 [14] - 77.9 93.2 84.2 106 × 30 53.7M

SlowFast 8×8, R101+NL [14] - 78.7 93.5 84.9 116 × 30 59.9M

model pretrain mAP GFLOPs×views Param

Nonlocal [68] ImageNet+Kinetics400 37.5 544 × 30 54.3M

STRG, +NL [69] ImageNet+Kinetics400 39.7 630 × 30 58.3M

Timeception [28] Kinetics-400 41.1 N/A×N/A N/A

LFB, +NL [71] Kinetics-400 42.5 529 × 30 122M

SlowFast, +NL [14] Kinetics-400 42.5 234 × 30 59.9M

SlowFast, +NL [14] Kinetics-600 45.2 234 × 30 59.9M

X3D-XL Kinetics-400 43.4 48.4 × 30 11.0M

X3D-XL Kinetics-600 47.1 48.4 × 30 11.0M

Table 6. Comparison with the state-of-the-art on Charades.

SlowFast variants are based on T×τ = 16×8.

model data top-1 top-5 FLOPs (G) Params (M)

EfficientNet3D-B0 66.7 86.6 0.74 3.30

"#$%&#'()*++

"#$%&#'(),++

-./0/1%(

事前

学習

既存

手法

同等の性能よりも

計算量削減](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-8-320.jpg)

![!$#性能比較

SlowFast, +NL [14] Kinetics-600 45.2 234 × 30 59.9M

X3D-XL Kinetics-400 43.4 48.4 × 30 11.0M

X3D-XL Kinetics-600 47.1 48.4 × 30 11.0M

Table 6. Comparison with the state-of-the-art on Charades.

SlowFast variants are based on T×τ = 16×8.

model data top-1 top-5 FLOPs (G) Params (M)

EfficientNet3D-B0

K400

66.7 86.6 0.74 3.30

X3D-XS

val

68.6 (+1.9) 87.9 (+1.3) 0.60 (−1.4) 3.76 (+0.5)

EfficientNet3D-B3 72.4 89.6 6.91 8.19

X3D-M 74.6 (+2.2) 91.7 (+2.1) 4.73 (−2.2) 3.76 (−4.4)

EfficientNet3D-B4 74.5 90.6 23.80 12.16

X3D-L 76.8 (+2.3) 92.5 (+1.9) 18.37 (−5.4) 6.08 (−6.1)

EfficientNet3D-B0

K400

64.8 85.4 0.74 3.30

X3D-XS

test

66.6 (+1.8) 86.8 (+1.4) 0.60 (−1.4) 3.76 (+0.5)

EfficientNet3D-B3 69.9 88.1 6.91 8.19

X3D-M 73.0 (+2.1) 90.8 (+2.7) 4.73 (−2.2) 3.76 (−4.4)

EfficientNet3D-B4 71.8 88.9 23.80 12.16

X3D-L 74.6 (+2.8) 91.4 (+2.5) 18.37 (−5.4) 6.08 (−6.1)

Table 7. Comparison to EfficientNet3D: We compare to a 3D

version of EfficientNet on K400-val and test. 10-Center clip testing

is used. EfficientNet3D has the same mobile components as X3D.

but was found by searching a large number of models for op-

timal trade-off on image-classification. This ablation studies

if a direct extension of EfficientNet to 3D is comparable to

X3D (which is expanded by only training few models). Effi-

cientNet models are provided for various complexity ranges.

233#'#%$&4%&56との比較

456のハイパーパラメータ探索が優れている

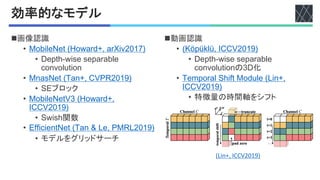

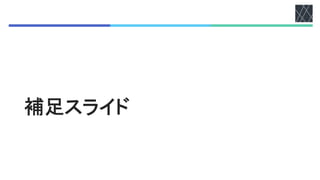

Inference cost per video in TFLOPs (# of multiply-adds x 1012

)

0.0 0.2 0.4 0.6 0.8

76

74

72

70

68

66

64

Kinetics

top-1

accuracy

(%)

78

SlowFast 8x8, R101+NL

SlowFast 8x8, R101

X3D-XL

X3D-M

X3D-S

CSN (Channel-Separated Networks)

TSM (Temporal Shift Module)

Figure 3. Accuracy/complexity trade-off on Kinetics-400 for dif-

ferent number of inference clips per video. The top-1 accuracy

(vertical axis) is obtained by K-Center clip testing where the num-

ber of temporal clips K ∈ {1, 3, 5, 7, 10} is shown in each curve.

The horizontal axis shows the full inference cost per video.

Inference cost. In many cases, like the experiments before,

推論時のクリップ数と認識率

!75クリップの

比較

5クリップ以上は

計算量に対して認識率の伸びが悪い](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-9-320.jpg)

![学習 %&'()*'+,-.//0

nバッチサイズ:C32V

• Y0[1台につき8クリップ

• クリップでバッチノーマライゼーショ

ン

n反復数:2WTエポック

nオプティマイザー:JY6

• S'S+-&GSX3UI

• =+$]"&)?+,>^X5×10-.

• 学習率XCUT

nドロップアウト:3UW

nスケジューラ

• 最初の333反復

• 線形ウォームアップ

• コサインスケジューラ

• 𝜂 8 0.5 cos

/

/!"#

𝜋 + 1

• 𝜂:CUT

• 𝑛012:最大学習反復数](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-15-320.jpg)

![学習

nO$-+&$,%FT33

• 反復数:O$-+&$,%FV33の倍

• スケジューラも同様に倍

n!">#>?+%

• O$-+&$,%のモデルをファインチューニ

ング

• バッチサイズ:CT

• 学習率:3U32

• =+$]"&)?+,>^:10-.

• 入力のフレームレートを半分

• 長い時間を推論するため

n_/_

• 反復数:Tエポック

• 最初のC333反復

• 線形ウォームアップ

• LZ[閾値:3UI](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-16-320.jpg)

![!$#性能比較 (アクション検出)

nデータセット

• _/_);YG@A)!/0123CE

n推論

• C28𝛾#×C2𝛾#にセンタークロップ

XL

M

S

model AVA pre val mAP GFLOPs Param

LFB, R50+NL [71]

v2.1 K400

25.8 529 73.6M

LFB, R101+NL [71] 26.8 677 122M

X3D-XL 26.1 48.4 11.0M

SlowFast 4×16, R50 [14]

v2.2 K600

24.7 52.5 33.7M

SlowFast, 8×8 R101+NL [14] 27.4 146 59.2M

X3D-M 23.2 6.2 3.1M

X3D-XL 27.4 48.4 11.0M

Table 8. Comparison with the state-of-the-art on AVA. All meth-

ods use single center crop inference; full testing cost is directly](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-17-320.jpg)

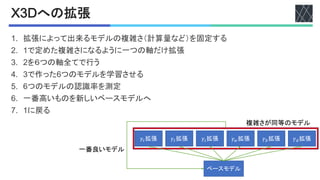

![追加実験

n6+(&"F=$%+)%+(>#>89+),'-B'9G&$'-

• 通常の56畳み込みに変更し𝛾%を変更

• パラメータ数が同等になるように

• 通常の56畳み込みに変更

nJ=$%"

• 1+N[に変更で性能が少し低下

• メモリを優先するなら1+N[

nJKブロック

• 削除で性能低下

• パフォーマンスに大きな影響を与えている

model top-1 top-5 FLOPs (G) Param (M)

X3D-S 72.9 90.5 1.96 3.76

CW conv b= 0.6 68.9 88.8 1.95 3.16

CW conv 73.2 90.4 17.6 22.1

swish 72.0 90.4 1.96 3.76

SE 71.3 89.9 1.96 3.60

(a) Ablating mobile components on a Small model.

X3D

C

Table A.1. Ablations of mobile components for video classification on K

parameters, and computational complexity measured in GFLOPs (floatin

input of size t⇥112 s⇥112 x. Inference-time computational cost is repo

The results show that removing channel-wise separable convolution (CW

increases mutliply-adds and parameters at slightly higher accuracy, while

[5] Ross Girshick, Ilija Radosavovic, Georgia Gkioxari, Piotr

Dollár, and Kaiming He. Detectron. https://github.

com/facebookresearch/detectron, 2018. 1

[6] Priya Goyal, Piotr Dollár, Ross Girshick, Pieter Noord-

huis, Lukasz Wesolowski, Aapo Kyrola, Andrew Tulloch,

Yangqing Jia, and Kaiming He. Accurate, large minibatch

SGD: training ImageNet in 1 hour. arXiv:1706.02677, 2017.

1

[7] Chunhui Gu, Chen Sun, David A. Ross, Carl Vondrick, Car-

oline Pantofaru, Yeqing Li, Sudheendra Vijayanarasimhan,

George Toderici, Susanna Ricco, Rahul Sukthankar, Cordelia

[1

[1

[1

model top-1 top-5 FLOPs (G) Param (M)

X3D-S 72.9 90.5 1.96 3.76

CW conv b= 0.6 68.9 88.8 1.95 3.16

CW conv 73.2 90.4 17.6 22.1

swish 72.0 90.4 1.96 3.76

SE 71.3 89.9 1.96 3.60

(a) Ablating mobile components on a Small model.

model top-1 top-5 FLOPs (G) Param (M)

X3D-XL 78.4 93.6 35.84 11.0

CW conv, b= 0.56 76.0 92.6 34.80 9.73

CW conv 79.2 93.5 365.4 95.1

swish 78.0 93.4 35.84 11.0

SE 77.1 93.0 35.84 10.4

(b) Ablating mobile components on an X-Large model.

Table A.1. Ablations of mobile components for video classification on K400-val. We show top-1 and top-5 classification accuracy (%

parameters, and computational complexity measured in GFLOPs (floating-point operations, in # of multiply-adds ⇥109

) for a single c

input of size t⇥112 s⇥112 x. Inference-time computational cost is reported GFLOPs ⇥10, as a fixed number of 10-Center views is us

The results show that removing channel-wise separable convolution (CW conv) with unchanged bottleneck expansion ratio, b, drastica

increases mutliply-adds and parameters at slightly higher accuracy, while swish has a smaller effect on performance than SE.

[5] Ross Girshick, Ilija Radosavovic, Georgia Gkioxari, Piotr

Dollár, and Kaiming He. Detectron. https://github.

com/facebookresearch/detectron, 2018. 1

quality estimation of multiple intermediate frames for vid

interpolation. In Proc. CVPR, 2018. 1

[17] Alex Krizhevsky, Ilya Sutskever, and Geoffrey E Hinton. Im](https://image.slidesharecdn.com/20211029x3dexpandingarchitecturesforefcientvideorecognition-220630005015-c564099a/85/X3D-Expanding-Architectures-for-Efficient-Video-Recognition-19-320.jpg)