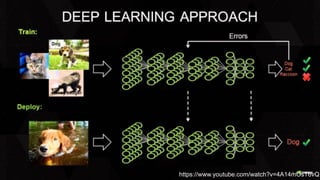

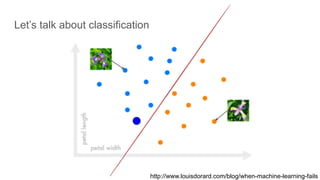

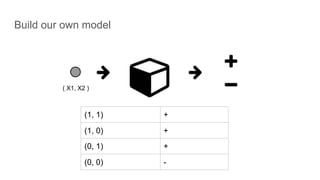

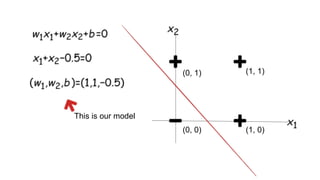

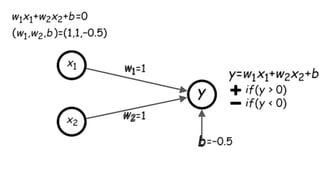

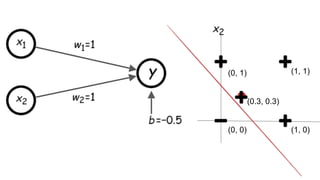

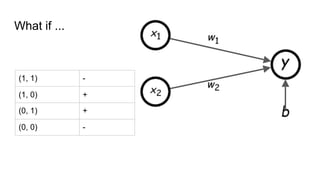

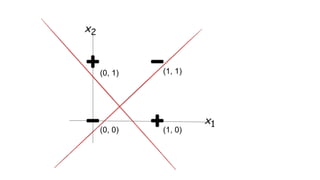

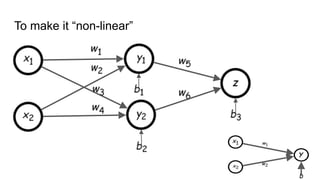

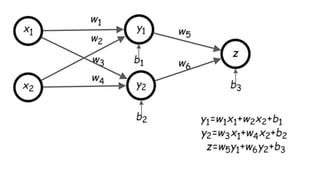

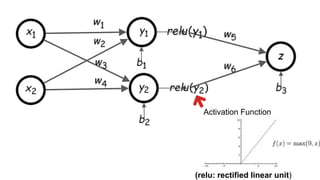

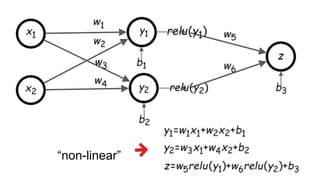

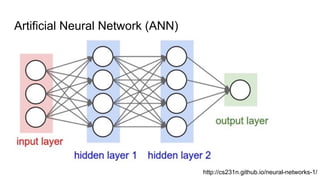

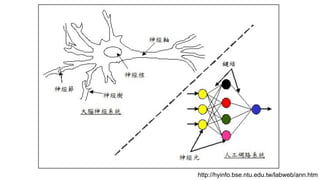

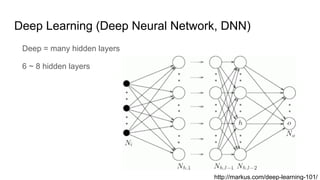

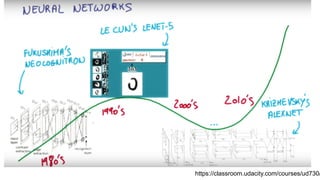

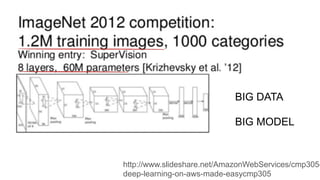

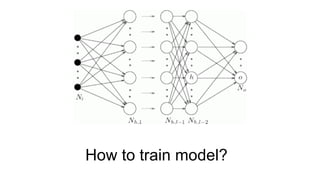

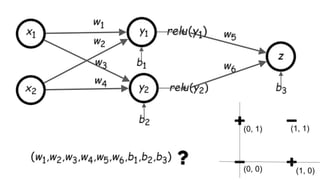

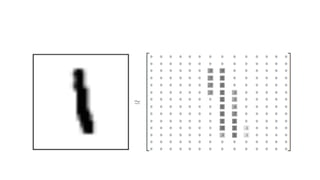

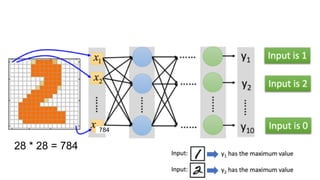

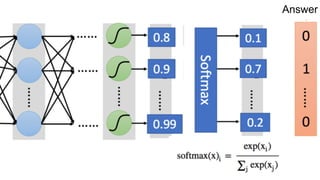

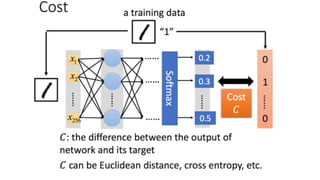

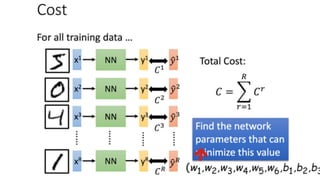

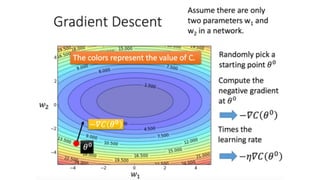

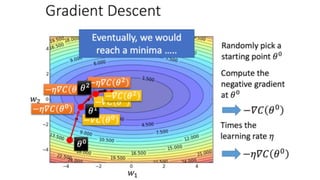

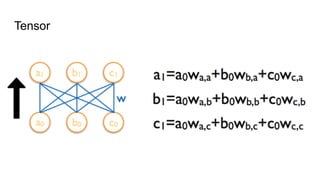

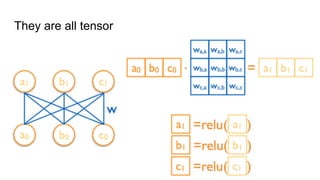

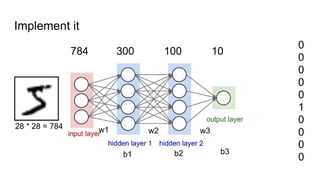

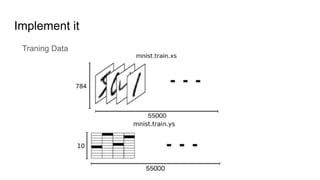

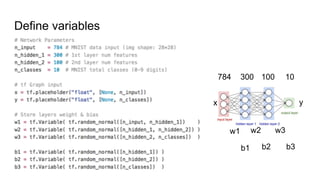

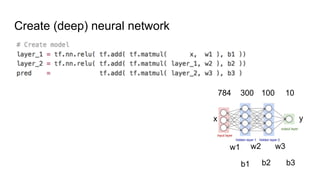

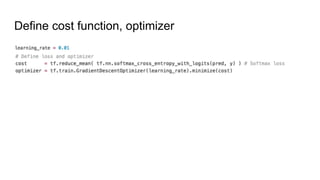

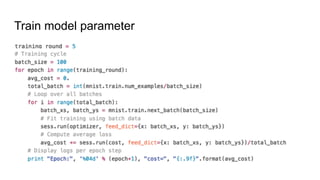

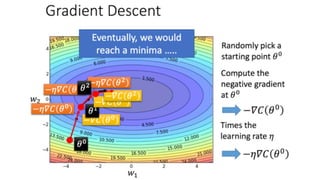

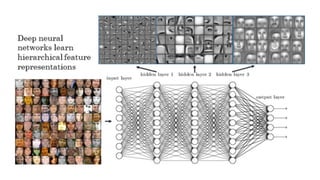

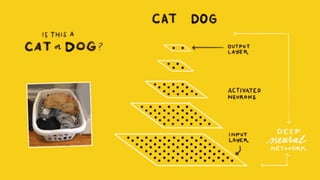

The document introduces deep learning: what it is, how it applies to classification problems, and how TensorFlow is used to train models. It covers the structure of deep neural networks and the training process, and points to resources for practical implementation. It also discusses which tasks deep learning suits, such as handwriting recognition, and how Google deploys it in its products.
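
To make the idea of a layered classifier concrete, here is a minimal, framework-free sketch of a forward pass through a small deep network. It is not taken from the slides and does not use TensorFlow. The layer sizes, the random weights, the input, and the helper names (`dense`, `relu`, `softmax`, `init_layer`, `forward`, `cross_entropy`) are all assumptions chosen for illustration.

```python
import math
import random


def dense(inputs, weights, biases):
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def relu(values):
    """Element-wise rectified linear activation."""
    return [max(0.0, v) for v in values]


def softmax(logits):
    """Turn raw scores into class probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def init_layer(n_in, n_out, rng):
    """Random weights and zero biases (illustrative initialisation only)."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(features, layers):
    """Hidden layers use ReLU; the final layer uses softmax."""
    activations = features
    for weights, biases in layers[:-1]:
        activations = relu(dense(activations, weights, biases))
    weights, biases = layers[-1]
    return softmax(dense(activations, weights, biases))


def cross_entropy(probabilities, true_class):
    """Classification loss: small when the true class gets high probability."""
    return -math.log(probabilities[true_class])


rng = random.Random(0)
layer_sizes = [16, 8, 8, 3]  # assumed: 16 inputs, two hidden layers, 3 classes
layers = [init_layer(n_in, n_out, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

features = [rng.random() for _ in range(layer_sizes[0])]  # stand-in for pixels
probabilities = forward(features, layers)
loss = cross_entropy(probabilities, true_class=1)  # assumed label

print("class probabilities:", probabilities)
print("cross-entropy loss:", loss)
```

Training consists of repeatedly adjusting the weights and biases to lower this loss over many labelled examples. A framework such as TensorFlow automates the gradient computation and the update step, which this sketch leaves out.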