This document discusses two systems that use gestural interfaces for 3D navigation of maps using the Wiimote and Kinect controllers. The systems, called Wing and King, allow natural 3D navigation without using traditional point-and-click interfaces. An empirical user study evaluated how the degree of body involvement with each controller affected the user experience. Results showed that gestural interfaces can immerse users in a dynamic 3D experience and move interaction beyond the novice level quickly by exploiting physical movement.

Wiimote and Kinect: Gestural User Interfaces add a Natural third dimension to HCI.∗

Rita Francese, University of Salerno, via Ponte Don Melillo, 1, Fisciano (SA), Italy, francese@unisa.it

Ignazio Passero, University of Salerno, via Ponte Don Melillo, 1, Fisciano (SA), Italy, ipassero@unisa.it

Genoveffa Tortora, University of Salerno, via Ponte Don Melillo, 1, Fisciano (SA), Italy, tortora@unisa.it

∗Corresponding author.

ABSTRACT

The recent diffusion of advanced controllers, initially designed for home game consoles, has been rapidly followed by the release of proprietary or third-party PC drivers and SDKs suitable for implementing new forms of 3D user interfaces based on gestures. By exploiting the devices currently available on the game market, it is now possible to enrich user interaction with desktop computers with low-cost motion capture, building new forms of natural interfaces and new action metaphors that add the third dimension, as well as a physical extension, to interaction with users. This paper presents two systems specifically designed for 3D gestural user interaction on 3D geographical maps. The proposed applications rely on two consumer technologies, both capable of motion tracking: the Nintendo Wii and the Microsoft Kinect devices. The work also evaluates, in terms of subjective usability and perceived sense of Presence and Immersion, the effects on users of the two different controllers and of the 3D navigation metaphors adopted. Results are encouraging and reveal that users feel deeply immersed in the 3D dynamic experience, and that the gestural interfaces quickly bring the interaction from novice to expert style and enrich the synthetic nature of the explored environment by exploiting user physicality.

Categories and Subject Descriptors

H.5.2 [Information Interfaces and Presentation]: User Interfaces; B.4.2 [Input/Output and Data Communication]: Input/Output Devices.

General Terms

Design, Experimentation, Human Factors.

Keywords

3D Interfaces, Natural User Interfaces, Motion Capture, Kinect, Wiimote, Human Computer Interaction, Empirical Evaluation.

Permission to make digital or hard copies of all or part of this work for personal or classroom use is granted without fee provided that copies are not made or distributed for profit or commercial advantage and that copies bear this notice and the full citation on the first page. To copy otherwise, to republish, to post on servers or to redistribute to lists, requires prior specific permission and/or a fee.

AVI '12, May 21-25, 2012, Capri Island, Italy

Copyright 2012 ACM 978-1-4503-1287-5/12/05 ...$10.00.

1. INTRODUCTION

On the first day of April 2011, Google announced the revolutionary Gmail Motion Beta application. Thanks to standard webcams and Google’s patented spatial tracking technology, Gmail Motion claimed it would detect user movements and translate them into meaningful characters and commands. Despite the April Fool character of this announcement (one of the authors needs to confess that, forgetting the particular day, he tried the announced service), the supporting website [4] contains sentences about gestural interfaces that sound undoubtedly interesting: “Easy to learn: Simple and intuitive gestures”, “Improved productivity: In and out of your email up to 12% faster” as well as “Increased physical activity: Get out of that chair and start moving today”.

Because it is mainly a typing activity, email is not the best target for a gestural interface, but the technology is now mature and offers new consumer hardware that can easily support applications based on natural human-computer interaction. Traditional GUIs adopt mouse and keyboard, building the interaction with the user on artificial elements like windows, menus or buttons. Natural user interfaces disappear behind the content, and a direct manipulation style (e.g., touch, voice commands and gestures) is the primary interaction method adopted [2, 10]. Despite that, too often the window/icon/mouse metaphors contaminate gestural interfaces and undermine their efficacy [28]: the user is involved in a frustrating experience because the motion-capture-based interface is used only to mimic the classic mouse interaction. Indeed, unlike artifacts (e.g., documents, pictures, videos, etc.), the cursor arrow is not a good target for direct manipulation interfaces. Looking at the game market, the consoles capable of motion capture (almost all of them now) limit window/icon interaction to basic operations (e.g., game menus and console administration) and offer players gaming experiences based on the analogies between control gestures and real ones.

In this paper, we describe two applications that adopt gestural interaction for controlling user navigation of Bing maps [11]. The two applications, Wing (Wiimote Bing) and King (Kinect Bing), are an occasion for experimenting, in the context of 3D environments, with two natural interfaces based on two consumer controllers: the Nintendo Wii Remote (also known as Wiimote) [19] and the Microsoft Kinect

[13]. The novelty of the proposed interaction lies in the controlling metaphors, which completely abandon the point-and-click interaction of classic Bing PC navigation in favour of two natural, gesture-based interfaces. To evaluate how the degree of body involvement affects user perceptions of the experience, the two applications have been empirically evaluated via the Usability Satisfaction Questionnaires [9] and via the well-known Witmer and Singer questionnaire [30], which specifically assesses the perceived sense of Presence and Immersion in a virtual environment. Results confirmed the enthusiastic impressions we had previously formed by observing that users quickly felt comfortable with the interfaces and enjoyed interacting with both systems.

Figure 1: The Wiimote and Nunchuk sensor configuration adopted for the Wing gestural interface.

Figure 2: Wing: the Wiimote and Nunchuk motion controllers during navigation.

2. BACKGROUND

In past years, the game console market has been a highly competitive sector that exploited, and often drove, the development of state-of-the-art processing and graphics technologies to compete for a really demanding customer population. Recently, the market trend has changed and, following evolving customer preferences, has focused more on realistic gestural human-computer interfaces than on the computing performance or graphical capabilities of the proposed products [23]. With the Wii™ console (2006), Nintendo proposed a game platform that was not particularly exciting in terms of performance but broke several records as the best-selling console [32]. Reasons for this success are the revolutionary characteristics of the Wii control system, the Wiimote [8] (shown in Figure 1), its high expandability with several accessories, and the possibility of offering users experiential games improved with active gestures and really effective playing metaphors. The success of the Nintendo Wii clearly shows the influence of the associated novel gestural interfaces on user satisfaction. Following Nintendo, Sony and Microsoft, the other two competitors in the game console sector, proposed their own motion-sensing game controllers to answer the user need to play in a natural manner. Their answers to market demand were the PlayStation Move™ (2008) and, only in November 2010, the Kinect™ controller. While the Wiimote and PS Move offer users two similar control experiences, limited by the need to hold the controllers in the hands, Kinect represents the first consumer full-body motion capture device, simply based on an infrared emitter and two video cameras. However, thanks to motion detection, all these controllers let the gaming experience be realistically based on gestures analogous to the mimicked ones. While Wiimote and Kinect were imported from the game console world to the computer one, Asus and PrimeSense proposed Wavi Xtion, their low-cost motion capture alternative specifically designed for PCs and smart TVs [1]. Exploiting the availability of a simple connection with ordinary PCs and the diffusion of official or unofficial SDKs [26, 16, 21] for developing desktop applications controlled by these devices, research is exploring new interaction instruments and modalities, as well as new natural interfaces for the most diverse applications in several disciplines, ranging from teaching to medicine.

2.1 Wiimote and Applications

The Wii Remote (often shortened to Wiimote) [19] was introduced in 2006 by Nintendo and drove the success of the Wii console. The Wiimote communicates over a wireless Bluetooth connection, offers a set of classic joypad buttons and senses acceleration along three axes. The Wiimote is also equipped with an optical sensor that, combined with an infrared (IR) source (i.e., the Wii sensor bar), makes it possible to determine where the device is pointing. The Wiimote can be complemented with Motion Plus, which adds a gyroscope to improve the detection of complex movements [29]. The device is equipped with 5.5 kilobytes of memory (mostly used for user customisations) and adopts as feedback mechanisms a speaker, a vibration motor and four LEDs [8]. The Nunchuk is an extension that plugs into the Wiimote via a connection cable and adds two buttons, an analog joystick and an independent three-axis accelerometer (usually associated with the user's secondary hand). The adoption of motion-sensing game controllers on desktop computers makes it possible to implement novel interfaces capable of deeply involving users in realistic experiences. In [28], the authors, considering touch-based interfaces, claim that natural user interfaces are characterised mainly by high learnability. In our case, the users quickly and spontaneously move from what we consider a basic navigation style to an expert one,

but, as shown in the Evaluation section, we are also interested in the impact of user perceptions and involvement on the proposed 3D navigation, as in the case of the kinesthetic learning experience proposed in [5]. In that paper, Ho-Shing Ip et al. propose a didactic experience that exploits the interplay between body, mind and emotions to amplify the learning value, together with a model for investigating the effects of immersive body-movement interaction with virtual characters and scenarios. In particular, they adopt the Wiimote and the Nunchuk extension for controlling the flight of a bird in a Hummingbird Flying Scenario. Like [5], we also exploit the amplifying effects that natural interaction style interfaces have on usability and user involvement, and their physical nature, to give physicality to the synthetic environments adopted. De Paolis et al. propose, in [3], a serious game based on the philological reconstruction of city life in the 13th century. As input peripheral, the authors adopt a Wiimote controller with the Balance Board extension, which adds four pressure sensors to the control system and is used for detecting walking gestures. Yang and Lee, in [32], propose the adoption of the Wiimote as a wireless presentation controller and a wireless mouse. They adopt the IR sensor for tracking the Wiimote pointing direction of up to four users. Santos et al., in [22], perform a user study comparing two different Wiimote configurations with the classic desktop mouse in controlling Google Earth navigation. Both proposed Wiimote configurations mimic the mouse with two buttons: one detects user movements via the accelerometer, the other via the IR sensor. The study reveals that the Wiimote presents several advantages over desktop and mouse. Unlike [22], we adopt and evaluate two applications based on the Wiimote and Kinect controllers and propose two natural interfaces explicitly designed for 3D navigation and far removed from the classic desktop metaphors.

2.2 Kinect and Applications

With Kinect, Microsoft distributes, as a controller for the Xbox system, the first motion capture device on the consumer market. The device has been available since November 2010; the first unofficial SDK is dated December 2010 [26, 27], while the first official SDK for PC users was released by Microsoft in June 2011 as a beta version and is free for non-commercial use [16]. The commercial success of Kinect can be estimated by considering that, within the first 25 days, Microsoft sold 2.5 million Kinect devices [23].

The Kinect sensor embeds a four-element linear microphone array capable of sophisticated acoustic echo cancellation, noise suppression and direction localisation, as well as an IR emitter and two cameras that deliver depth information, colour images and skeleton tracking data. The natural user interface API in the Kinect for Windows SDK enables applications to access and manipulate the data collected by the sensor [16]. The optimal working distance ranges from 0.8 to 4 meters. In this range, the depth and skeleton views detect users only if the entire body fits within the captured frame, but the pointing direction of the device can be adjusted by a motorised tilting mechanism. To overcome the working distance restrictions while maintaining good screen readability, we displayed the King client via a room projector (we did the same for Wing, to prevent screen differences from biasing the proposed evaluation).

In the context of 3D model navigation, Lacolina et al. adopt natural interfaces based both on multitouch tables and on gesture recognition [7]. Motion capture is performed by analysing the raw depth images provided by a Kinect sensor.

Phan adopts the OpenNI toolkit and develops a Kinect client for controlling Second Life gestures, aiming to establish a direct channel between the user's body and his/her avatar [24]. In this case too, the aim is to improve the virtual environment experience and the perceived immersion by letting the user interface disappear behind real gestures. Boulos et al. base their application, Kinoogle [6], on Kinect and develop a gestural interface for controlling Google Earth navigation. The proposed gestures are mainly based on hand tracking and resemble the classic multitouch interaction style. Unlike them, we propose a navigation control inspired by natural flight gestures, in which user actions are reflected in the map navigation according to metaphoric similarity.

Figure 3: King: the Kinect device controls Bing maps navigation.

3. WING AND KING APPLICATIONS

Wing and King are the two controller applications, developed on the Wiimote with Brian Peek’s SDK [21] and on Kinect with the official SDK [16]. Both applications control a Bing map client [11] and react to user gestures inspired by well-accepted metaphors.

It is important to point out that Bing maps are just one instance of a 3D navigable environment and provide the dimensions for experimenting with our natural interfaces. During the evaluation phases we noticed, for both the Wing and King systems, that users quickly and spontaneously moved from novice use, characterised by a single navigation command at a time, to a sort of expert use, in which they started to combine turning with altitude and movement commands, generating a more complicated navigation path. A video of the applications is available at [20].

3.1 Wing and the Wiimote Controller

Wing is a Bing map navigator controlled by the accelerometers of a Nintendo Wiimote and a Nunchuk [8]. The application is developed in C# [15] and connects to both controllers via Bluetooth using the WiimoteLib library [21]. The controllers and the dimensions adopted for building the Wing

![natural interface are shown in Figure 1. In the image, the

movements detected as controls are depicted on the Wiimote (right side of the image) and on the Nunchuk (left side). Wing offers users two interaction metaphors that are widespread and well accepted in the videogame sector. The main controller drives forward/backward movement when rotated about its longer dimension (i.e., Roll). Its inclination (i.e., Pitch) determines whether the navigation turns. Both gestures are inspired by the motorcycle metaphor: the Roll rotation of the Wiimote acts as a motorcycle throttle controlling forward and backward movement, while the turning gestures resemble turning the handlebar of the imaginary motorcycle. Forward and backward movement can be requested at different speeds according to the rotation angle of the Wiimote. The aeroplane control-stick (cloche) metaphor is implemented on the Nunchuk and controls altitude: its tilting direction determines the vertical variations of the map navigation.
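The mapping from controller tilt to navigation commands can be illustrated with a short sketch. The following Python fragment is not the authors' C# implementation: the axis conventions, the dead zone, the saturation angle and every function name are assumptions introduced here for illustration only. It estimates Roll and Pitch from the gravity components read by a three-axis accelerometer and converts them into normalised speed, turn and climb commands.

```python
import math

# Hypothetical tuning values, not taken from the paper.
DEAD_ZONE_DEG = 10.0   # tilt below this angle is treated as "neutral"
MAX_TILT_DEG = 60.0    # tilt at which a command reaches full strength


def roll_pitch_deg(ax, ay, az):
    """Estimate roll and pitch (degrees) from a static accelerometer
    reading expressed in g. Assumed axes: z points up when the
    controller lies flat, y runs along its longer dimension."""
    roll = math.degrees(math.atan2(ax, az))
    pitch = math.degrees(math.atan2(ay, math.hypot(ax, az)))
    return roll, pitch


def scaled(angle_deg):
    """Map a tilt angle to [-1, 1] with a dead zone and saturation."""
    magnitude = abs(angle_deg)
    if magnitude < DEAD_ZONE_DEG:
        return 0.0
    magnitude = min(magnitude, MAX_TILT_DEG)
    value = (magnitude - DEAD_ZONE_DEG) / (MAX_TILT_DEG - DEAD_ZONE_DEG)
    return math.copysign(value, angle_deg)


def wing_command(wiimote_accel, nunchuk_accel):
    """Throttle from Wiimote roll, turning from Wiimote pitch,
    altitude change from Nunchuk pitch."""
    roll, pitch = roll_pitch_deg(*wiimote_accel)
    _, nunchuk_pitch = roll_pitch_deg(*nunchuk_accel)
    return {
        "speed": scaled(roll),           # forward (+) or backward (-)
        "turn": scaled(pitch),           # turn direction and intensity
        "climb": scaled(nunchuk_pitch),  # rise (+) or descend (-)
    }
```

The dead zone is one plausible way to keep small, involuntary hand movements from moving the map; whether Wing uses a proportional or a thresholded turn command is not stated in the text.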

Figure 4: The King Control gestures.

Figure 5: The results of ASQ questionnaires.

Figure 2 shows the Wing application and how users grasp the controls during navigation. The Wing user holds both controllers with the forearms aligned with the elbows. The turning gestures are detected when the user turns the Wiimote: the handlebar metaphor is perceived as highly credible and, during navigation, we observed many users keeping the Wiimote and Nunchuk aligned even though the two components are independent. The altitude gestures are activated by the Nunchuk when the user rotates the wrist upwards (increases altitude) and backwards (decreases altitude), or when he/she bends the forearm at the elbow accordingly. In both cases the gesture closely reflects the videogame action of pitching an aeroplane control stick up or down. Altitude, movement and turning commands can be combined to obtain complex flight/navigation behaviours. All the proposed gestures are becoming more and more popular among gamers [17, 18, 19] and are ready to be extended to PC users.
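One way to picture how simultaneous altitude, movement and turning commands produce a single flight path is a per-frame update of the viewpoint. The sketch below is purely illustrative: the `ViewState` type, the rate constants and the `step` function are hypothetical and do not describe the Bing map client or the authors' code. It assumes each command has already been normalised to the range [-1, 1].

```python
import math
from dataclasses import dataclass


@dataclass
class ViewState:
    x: float = 0.0            # east-west position, arbitrary map units
    y: float = 0.0            # north-south position, arbitrary map units
    heading_deg: float = 0.0  # 0 = north, increasing clockwise
    altitude: float = 1000.0  # observation height, arbitrary units


# Hypothetical limits, chosen only for the example.
MAX_SPEED = 50.0        # map units per second
MAX_TURN_RATE = 45.0    # degrees per second
MAX_CLIMB_RATE = 100.0  # altitude units per second
MIN_ALTITUDE = 10.0


def step(state, speed, turn, climb, dt):
    """Apply the three normalised commands together for one time step."""
    heading = (state.heading_deg + turn * MAX_TURN_RATE * dt) % 360.0
    distance = speed * MAX_SPEED * dt
    rad = math.radians(heading)
    return ViewState(
        x=state.x + distance * math.sin(rad),
        y=state.y + distance * math.cos(rad),
        heading_deg=heading,
        altitude=max(MIN_ALTITUDE,
                     state.altitude + climb * MAX_CLIMB_RATE * dt),
    )
```

Calling `step` repeatedly with non-zero `speed`, `turn` and `climb` traces a climbing curve, which corresponds to the combined, "expert" style of navigation, whereas issuing one non-zero command at a time corresponds to the novice style.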

3.2 King and the Kinect controller

King is a Bing map navigator based on the Microsoft Kinect controller. The application has been developed in C# and pairs the Bing map with a simple window showing a paper aeroplane on a sky background. The application controls the Kinect sensors via the official SDK [16].

Figure 3 shows the King application and the Bing map client during navigation. The paper aeroplane reflects the gesture performed by the user and is the only feedback mechanism (useful, considering that, unlike Wing, the King interface is completely hands-free). King proposes the bird (or aeroplane) metaphor to its users and customises on it the gestures associated with the various commands. Figure 3 shows a user performing a left turn: she inclines her aligned arms downward on the left, as a bird or an aeroplane would do, and while the flight on the Bing map turns accordingly, the paper plane in the feedback window performs a similar rotation. The idea is to mimic the bird’s wing movements, when possible, with arm gestures. At the moment, the game market still does not offer examples of similar gestures, but generic natural interfaces for sport, fighting or dancing games are already available [14, 25, 12].

Figure 4 shows the controlling gestures used for the King map navigator. The neutral position for navigation is depicted in sub-picture (a): when the user stands with open and aligned arms, the navigation halts. The gesture associated with moving forward is depicted in sub-picture (b) and is detected when the user moves one hand (slow motion) or both hands (fast motion) ahead of the elbows. This corresponds to the skeleton depicted in (b) (arms bent) but is also detected when the user extends his arms forward.

The bird/plane metaphor does not contemplate a backward movement, and we do not violate this assumption by providing a surrogate gesture. Figure 4 (c) and (d) depict the turn gestures that were previously described. Navigation altitude is controlled by gestures (e) and (f). The idea is to offer King users two gestures that can be easily associated with rising or descending, avoiding the need to dynamically mimic the bird flight gesture, which is quite tiring. To increase or decrease altitude, King users are required to start and hold the static gesture ((e) or (f)) until the desired observation height is reached. Figure 4 also depicts the states of the feedback paper aeroplane corresponding to the gesture detected. The movement, turning and altitude gestures proposed as the King natural interface can be combined to obtain the desired navigation experience.

4. EVALUATION

The Wing and King applications have been evaluated in a laboratory session organised according to the suggestions provided by Wohlin et al. in [31], with the aim of assessing perceived usability and sense of Presence in the Virtual Environment [30]. The participants in the study were 24 (8 female) undergraduate students and employees of our Faculty

119](https://image.slidesharecdn.com/1-120824030429-phpapp02/75/1-4-2048.jpg)

who volunteered to take part in the experiment. The student population was selected from a program that does not require or provide particular competences in 3D virtual environments, games and natural user interfaces. Their ages ranged between 18 and 41 years, with an average of 24. Before starting the experiment, we assessed participant skills in the videogame and natural interface sectors. In our sample, 8 participants reported playing digital games at least once a week, three were Nintendo Wii players and just two of them used Xbox and Kinect.

4.1 Subjective Usability

Subjective usability has been evaluated via the After-Scenario (ASQ) and the Computer System Usability (CSUQ) questionnaires that, as shown by Lewis, provide strong evidence of generalizability of results and of wide applicability. The questions have been rated on a seven-point Likert scale anchored from 1 (strongly disagree) to 7 (strongly agree).

The ASQ is a three-item questionnaire used to assess participant satisfaction after the completion of each task; it evaluates the time to complete the task, the ease of completion and the adequacy of support information.

The CSUQ questionnaire consists of 19 questions assessing user satisfaction with system usability, which can be aggregated in four factors:

• Overall Evaluation (OVR),

• System Usefulness (USE),

• Information Quality (INFO),

• Interface Quality (INTERF).

More details on the questionnaires and the questions are available in [9].

4.2 Presence and Immersion

In this work we adopt Bing maps as a 3D virtual environment in which to experiment with the Wing and King interfaces. 3D environments have a significant advantage over settings based on 2D technology since they induce a strong sensation of Presence in their users [30]. During Bing map navigation, users move in a virtual space generated by the computer, react to actions and change their point of view on the scene with movement. Witmer and Singer define Presence as “the subjective experience of being in one place or environment, even when one is physically situated in another” and “...presence refers to experiencing the computer-generated environment rather than the actual physical locale”. As stated by them, several factors contribute to increasing presence: Control, Realism, Distraction and Sensory input. Presence is maximised when the user interacts with the environment in a natural manner, controls the events, and sees the system behaving as expected and the 3D environment changing according to his commands. Minimising the distractions that can occur, for example, when a user has problems controlling the navigation can increase the perceived immersion in the experience and the virtual environment. As suggested in [5], we hypothesised that the physical dimension of the proposed interfaces may influence the user's sense of immersion in the proposed navigation experience. To assess the degree of Presence perceived by users during the tasks, we extracted 12 questions appropriate for our empirical evaluation from the Witmer and Singer questionnaire. The questions are reported in Table 1, aggregated under three factors:

• Involvement (INV),

• Distraction Factor (DF),

• Control Factor (CF).

The answers to this questionnaire were also given on a seven-point Likert scale: from 1 (strongly disagree) to 7 (strongly agree).

Table 1: Witmer and Singer questions

| # | Question | Factor |
|---|----------|--------|
| 1 | Were you involved in the experimental task to the extent that you lost track of time? | INV |
| 2 | How involved were you in the virtual environment experience? | INV |
| 3 | How well could you concentrate on the assigned tasks or required activities rather than on the mechanisms used to perform those tasks or activities? | DF |
| 4 | How much did the control devices interfere with the performance of assigned tasks or with other activities? | DF |
| 5 | How responsive was the environment to action that you initiated (or performed)? | CF |
| 6 | How natural was the mechanism which controlled movement through the environment? | CF |
| 7 | How natural did your interactions with the environment seem? | CF |
| 8 | How proficient in moving and interacting with the virtual environment did you feel at the end of the experience? | CF |
| 9 | Were you able to anticipate what would happen in response to the actions that you performed? | CF |
| 10 | How quickly did you adjust to the virtual environment experience? | CF |
| 11 | How compelling was your sense of moving around inside the virtual environment? | CF |
| 12 | How much did your experiences in the virtual environment seem consistent with your real-world experiences? | CF |

4.3 Experiment Design

In the proposed usability study, participants tried our gestural interfaces in quick succession, engaging in two navigation tasks. After being individually instructed on the Wing and King systems, the users were required to complete the navigation of two geographical paths involving well-known Italian cities:

• SEA: Cagliari-Napoli-Palermo

• LAND: Genova-Roma-Venezia

Both tasks are comparable in terms of distances and difficulty in locating the target cities. However, to avoid biasing the evaluation with the order of tasks or tested applications, we adopted a balanced paired design for the experiment, as suggested in [31]: we divided our users into two groups, and every member of a group started the experiment with the same system. Within each group, half of the participants started with the SEA task and

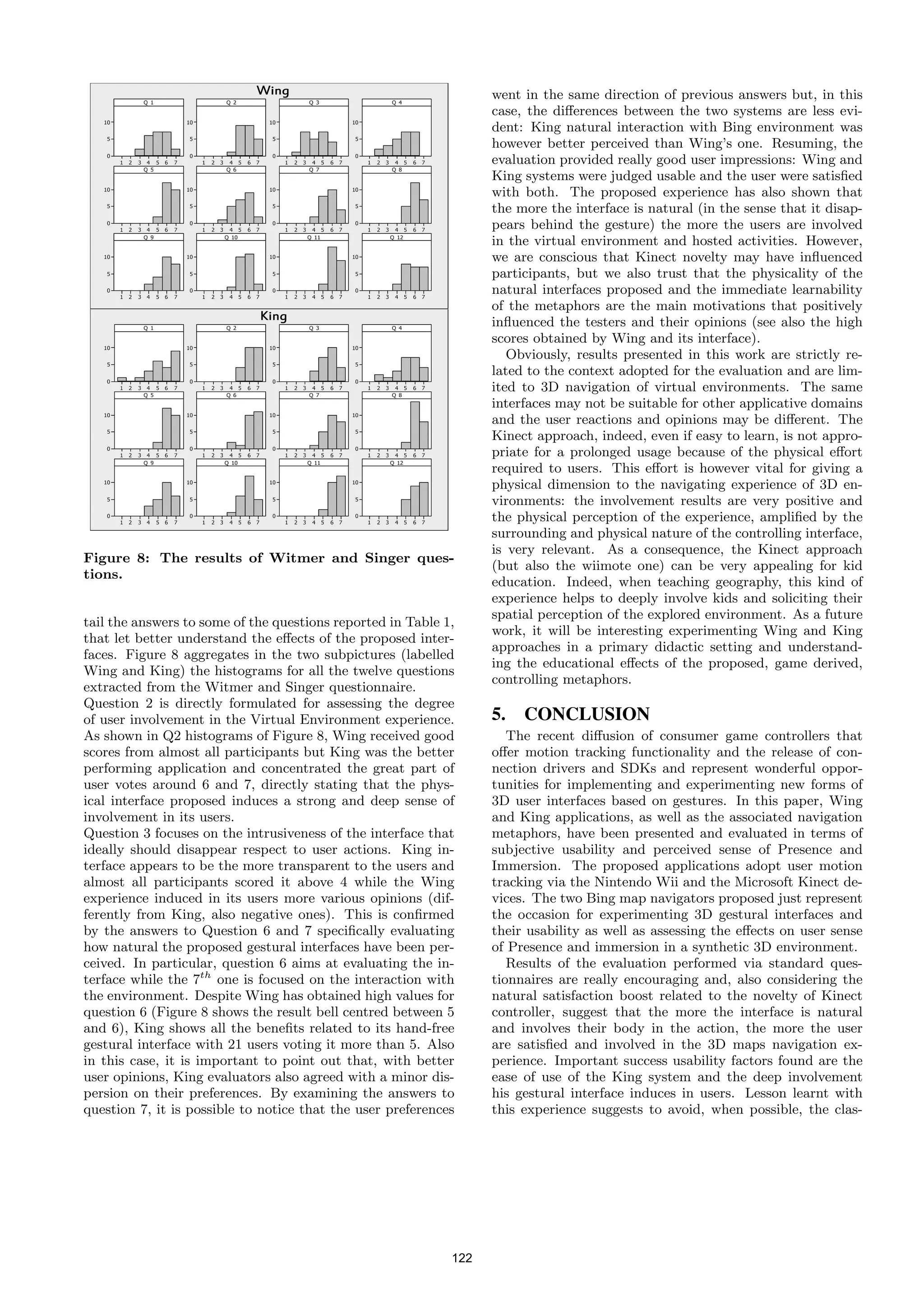

![Figure 6: The results of CSUQ questionnaires. Figure 7: The results of Presence questionnaire.

Table 2: CSUQ categories details Table 3: Witmer and Singer categories details

OVR USE INFO INTERF INV DF CF

µ σ µ σ µ σ µ σ µ σ µ σ µ σ

Wing 5.13 1.08 4.85 1.21 5.17 0.94 5.75 1.33 Wing 5.39 0.83 4.41 1.14 5.87 0.27

King 5.78 0.75 5.89 0.78 5.4 0.82 6.25 0.85 King 5.89 0.69 5.39 0.94 6.14 0.26

the other with the LAND one. After each task, all partici- servation of users during the study suggests the same conclu-

pants filled the ASQ, the CSUQ questionnaire and answered sion: participants have been almost all disappointed at the

the questions from Witmer and Singer questionnaire [30] re- end of their King task showing that they would prefer con-

ported in Table 1. Let us point out that question 4 results tinuing the experience based on bird/aeroplane metaphor.

has been reversed before aggregating the DF category. Once assessed the degree of Usability and perceived System

Usefulness for both Wing and King systems, we extended

4.4 Results the evaluation to user perceptions in terms of Presence and

The first good impressions on the usability of the proposed Involvement in the virtual experience. At this aim, we ex-

systems were collected during the experiment by listening tracted 12 questions from Witmer and Singer questionnaire

at participant comments and observing their behaviours. to integrate ASQ and CSUQ ones. As shown in Table 1, the

These insights were lately confirmed by examining the ques- Presence questionnaire is aggregated in three factors aiming

tionnaires answers. Figure 5 shows the boxplots depicting at assessing how users experience the computer-generated

the ASQ results that gave a preliminary idea about task environment rather than their physical locale. The adoption

difficulties, user preferences and perceived usability of the of a controller based natural interface (Wing) and a hand-

proposed interfaces. The users globally assigned really high free natural one (King) let us understand if, in the context

scores to both systems but the effects of the King feedback of 3D map navigation, physical gestures (and the sensor de-

mechanism (the paper aeroplane) brought higher the INFO vice nature) increase experience likability and involvement

score associated with King with respect to its competitor. In the same direction, the EASE boxes confirm that the tasks performed via the Kinect interface were perceived as easier than the Wing ones, and these categories show the biggest difference between the two systems. The TIME categories also indicate that the two tasks were perceived in the same manner with respect to the time assigned, but the results are always characterised by a slight preference for the King system.

Figure 6 reports the results of the CSUQ aggregated in the four categories suggested by Lewis. The first observation on the data is about dispersion: comparing the two boxplots in Figure 6, it is evident that user perceptions are characterised by a higher variability for Wing scores than for King ones. The latter system was also perceived as better with respect to all four aggregating factors, but exhibits larger differences in the OVR (Overall Usability) and USE (System Usefulness) categories (as shown in Figure 6 and detailed in Table 2): the better performance of King is mainly concentrated in its System Usefulness and influences the overall opinion about the systems. Table 2 summarises, via the µ and σ values, the results of the CSUQ categories. All the considerations deduced from the boxes of Figure 6 (and consequently from the medians) are reflected, as expected, in the values reported in Table 2: King meets with more user enthusiasm than Wing. A direct ob-

deepness. Figure 7 shows the Presence questionnaire results aggregated in boxplots with respect to the previously described factors. The measures of central tendency and spread for the Presence categories are reported in Table 3. Also in the case of Presence and Involvement, Wing opinions show a higher variability than King ones and, accordingly, user impressions are more concordant for the King system than for Wing. What is really remarkable is that, although the Wing system was well received in user evaluations, higher values were obtained by the King experience. As an example, the Involvement factor was rated µ=5.39 for Wing, while King performed better (µ=5.89). Both systems were positively judged in terms of the Control Factor, as shown in the rightmost boxes of both subplots of Figure 7: Table 3 reports µ=6.14 for King and µ=5.87 for Wing. With respect to the Distraction Factor category, the Wing interface (µ=4.41) was perceived as a little less effective than the King one (µ=5.39). This is probably due to the hands-free interface made possible by the adoption of the Kinect sensor: while the Wing user holds the Wiimote, the Microsoft device proved to be really effective in letting the interface disappear behind natural gestures.

With respect to the sense of Presence and Involvement in the Bing Virtual Environment, it can be interesting to further de-

](https://image.slidesharecdn.com/1-120824030429-phpapp02/75/1-6-2048.jpg)

![sic window/icon/mouse interaction for experimenting, obviously for appropriate tasks (e.g., not keyboard intensive), new gestures and new forms of physical commands. As future work, we intend to test the interfaces in a geography teaching context for children, which will probably benefit from the effects of the proposed experiences and interfaces.

6. ACKNOWLEDGMENTS

We would like to thank little Giuseppe for his natural attitude to play and his child's insatiable desire to explore.

7. REFERENCES

[1] ASUS. Wavi xtion. Retrieved on December 2011 from http://event.asus.com/wavi.

[2] J. Blake. Natural User Interfaces in .NET (Early Access Edition). Manning Publications Co., Greenwich, CT, 2010.

[3] L. De Paolis, G. Aloisio, M. Celentano, L. Oliva, and P. Vecchio. Mediaevo project: A serious game for the edutainment. In Computer Research and Development (ICCRD), 2011 3rd International Conference on, volume 4, pages 524–529, march 2011.

[4] Google. Gmail motion beta. Retrieved on December 2011 from http://mail.google.com/mail/help/motion.html.

[5] H. Ip, J. Byrne, S. Cheng, and R. Kwok. The samal model for affective learning: A multidimensional model incorporating the body, mind and emotion in learning. In DMS 2011: The 17th International Conference on Distributed Multimedia Systems, pages 1–6, august 2011.

[6] M. Kamel Boulos, B. Blanchard, C. Walker, J. Montero, A. Tripathy, and R. Gutierrez-Osuna. Web gis in practice x: a microsoft kinect natural user interface for google earth navigation. International Journal of Health Geographics, 10(1):45, 2011.

[7] S. A. Lacolina, A. Soro, and R. Scateni. Natural exploration of 3d models. In Proceedings of the 9th ACM SIGCHI Italian Chapter International Conference on Computer-Human Interaction: Facing Complexity, CHItaly, pages 118–121, New York, NY, USA, 2011. ACM.

[8] J. Lee. Hacking the nintendo wii remote. Pervasive Computing, IEEE, 7(3):39–45, july-sept. 2008.

[9] J. Lewis. Ibm computer usability satisfaction questionnaires: psychometric evaluation and instructions for use. International Journal of Human-Computer Interaction, 7(1):57–78, 1995.

[10] W. Liu. Natural user interface- next mainstream product user interface. In Computer-Aided Industrial Design Conceptual Design (CAIDCD), 2010 IEEE 11th International Conference on, volume 1, pages 203–205, nov. 2010.

[11] Microsoft. Bing maps beta. Retrieved on December 2011 from http://www.bing.com/maps/.

[12] Microsoft. Dance central 2. Retrieved on December 2011 from http://tinyu.me/vO2eN.

[13] Microsoft. Kinect. Retrieved on December 2011 from http://www.xbox.com/en-US/Kinect.

[14] Microsoft. Kinect sport season two. Retrieved on December 2011 from http://tinyu.me/EZFNk.

[15] Microsoft. Visual c#. Retrieved on December 2011 from http://msdn.microsoft.com/en-us/library/kx37x362.aspx.

[16] Microsoft. Kinect sdk for developers. Retrieved on December 2011 from http://kinectforwindows.org/, 2011.

[17] Nintendo. Donkey kong jet race. Retrieved on December 2011 from http://tinyu.me/VdTB7.

[18] Nintendo. Mario kart wii. Retrieved on December 2011 from http://www.mariokart.com/wii/launch/.

[19] Nintendo. Wii remote. Retrieved on December 2011 from http://www.nintendo.com/wii/what-is-wii/#/controls.

[20] I. Passero. Two bing maps controllers based on kinect and wiimote devices. Retrieved on December 2011 from http://youtu.be/ITtd02h5G5w.

[21] B. Peek. Wiimote lib: Managed library for nintendo’s wiimote. Retrieved on December 2011 from http://wiimotelib.codeplex.com/.

[22] B. Santos, B. Prada, H. Ribeiro, P. Dias, S. Silva, and C. Ferreira. Wiimote as an input device in google earth visualization and navigation: A user study comparing two alternatives. In Information Visualisation (IV), 2010 14th International Conference, pages 473–478, july 2010.

[23] K. Sung. Recent videogame console technologies. Computer, 44(2):91–93, feb. 2011.

[24] P. Thai. Using kinect and openni to embody an avatar in second life: Gesture & emotion transference. Retrieved on December 2011 from http://tinyu.me/o2GzQ.

[25] UBISOFT. Fighters uncaged. Retrieved on December 2011 from http://fighters-uncaged.uk.ubi.com/.

[26] Various. Openkinect. Retrieved on December 2011 from http://openkinect.org.

[27] N. Villaroman, D. Rowe, and B. Swan. Teaching natural user interaction using openni and the microsoft kinect sensor. In Proceedings of the 2011 conference on Information technology education, SIGITE ’11, pages 227–232, New York, NY, USA, 2011. ACM.

[28] D. Wigdor and D. Wixon. Brave NUI World: Designing Natural User Interfaces for Touch and Gesture. Morgan Kaufmann, 1 edition, Apr. 2011.

[29] C. A. Wingrave, B. Williamson, P. D. Varcholik, J. Rose, A. Miller, E. Charbonneau, J. Bott, and J. J. LaViola Jr. The wiimote and beyond: Spatially convenient devices for 3d user interfaces. Computer Graphics and Applications, IEEE, 30(2):71–85, march-april 2010.

[30] B. Witmer and M. Singer. Measuring presence in virtual environments: A presence questionnaire. Presence, 7(3):225–240, 1998.

[31] C. Wohlin, P. Runeson, M. Höst, M. C. Ohlsson, B. Regnell, and A. Wesslén. Experimentation in software engineering: an introduction. Kluwer Academic Publishers, Norwell, MA, USA, 2000.

[32] Y. Yang and L. Li. Turn a nintendo wiimote into a handheld computer mouse. Potentials, IEEE, 30(1):12–16, jan.-feb. 2011.

](https://image.slidesharecdn.com/1-120824030429-phpapp02/75/1-8-2048.jpg)