This document provides an introduction and overview of a project on vision-based hand gesture recognition. It discusses the motivation for the project and how hand gestures can provide a more natural human-computer interaction compared to traditional input devices like keyboards and mice. The document outlines the objectives of the project, which are to develop a system that can identify specific hand gestures using a webcam and interpret them to control mouse operations on a computer. It also provides an overview of the organization of the project report and the topics that will be discussed in subsequent chapters, such as the literature review, proposed methodology, results, and conclusions.

![HGR Project Report Page 4

1.3 Gesture Based Applications:

Gesture-based applications are broadly classified into two groups on the basis of their purpose: multidirectional control and a symbolic language.

3D Design: CAD (computer-aided design) is an HCI application that provides a platform for interpreting and manipulating three-dimensional inputs, which can be gestures. Manipulating 3D inputs with a mouse is time-consuming because it involves decomposing a six-degree-of-freedom task into at least three sequential two-degree-of-freedom tasks. The Massachusetts Institute of Technology [3] has developed the 3DRAW technology, which uses a pen embedded in a Polhemus device to track the pen's position and orientation in 3D. A 3Space sensor is embedded in a flat palette representing the plane in which the objects rest. The CAD model moves synchronously with the user's gestures, so objects can be rotated and translated and viewed from all sides as they are created and altered.

Telepresence: Manual operation may be needed in cases such as system failure, emergency or hostile conditions, or inaccessible remote areas, where it is often impossible for human operators to be physically present near the machines [4]. Telepresence is the area of technical intelligence that aims to provide physical operation support by mapping the operator's arm to a robotic arm that carries out the necessary task. For instance, the real-time ROBOGEST system constructed at the University of California, San Diego presents a natural way of controlling an outdoor autonomous vehicle through a language of hand gestures. Prospective applications of telepresence include space and undersea missions, medicine manufacturing, and the maintenance of nuclear power reactors.

Virtual reality: Virtual reality refers to computer-simulated environments that can simulate physical presence in places in the real world as well as in imaginary worlds. Most current virtual reality environments are primarily visual experiences, displayed either on a computer screen or through special stereoscopic displays [6]. Some simulations also include additional sensory information, such as sound through speakers](https://image.slidesharecdn.com/ced375d5-78a6-43be-9712-a616a2febe93-150801100722-lva1-app6891/75/HGR-thesis-4-2048.jpg)

![HGR Project Report Page 24

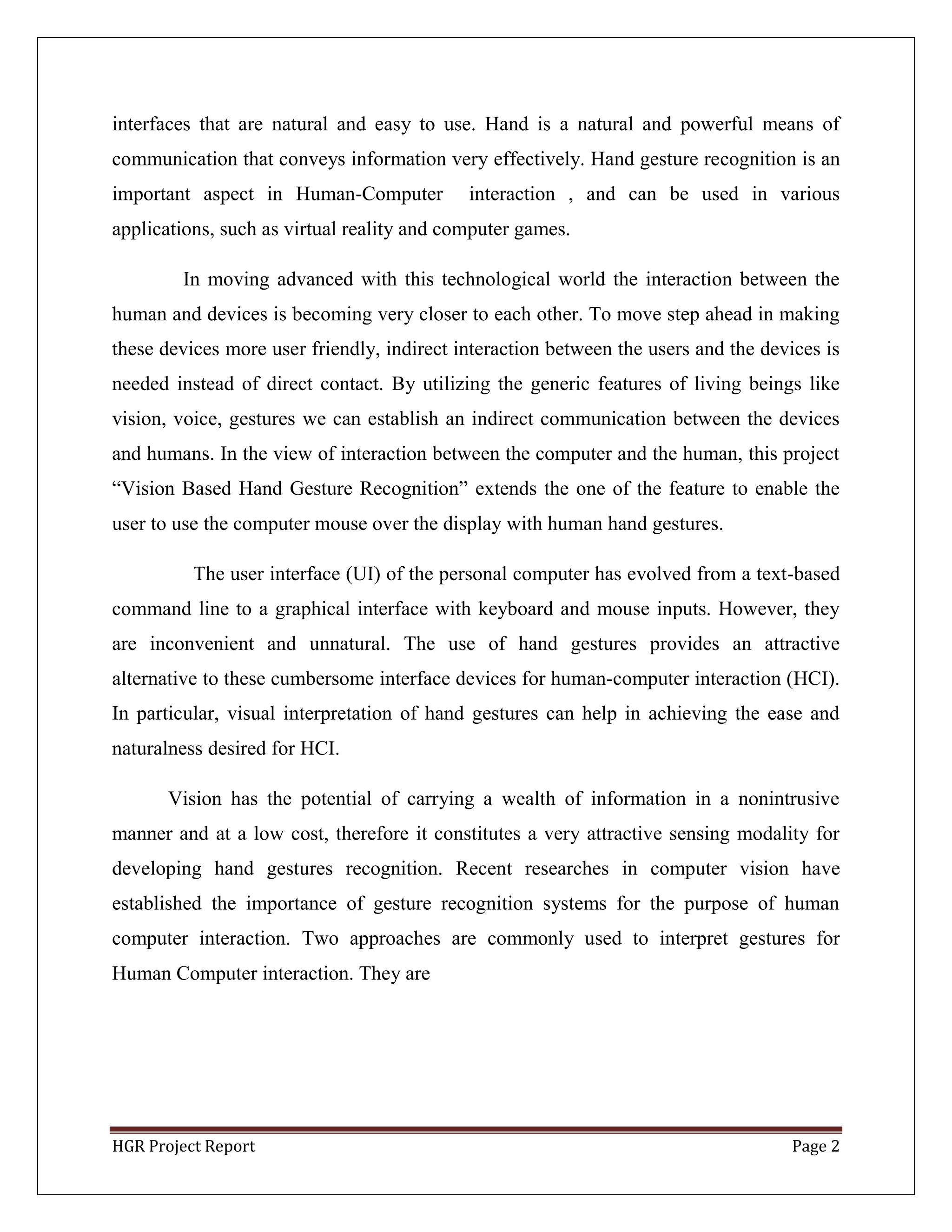

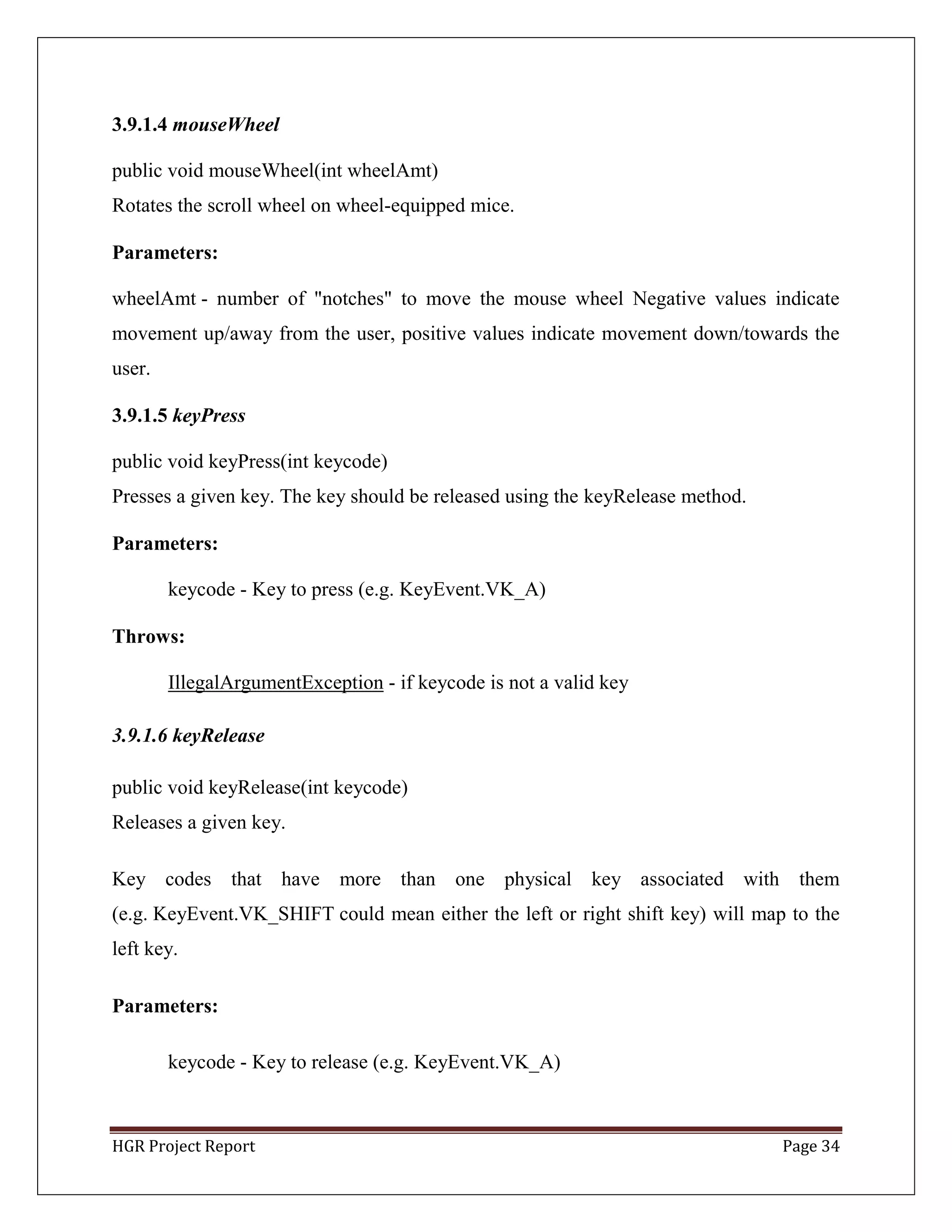

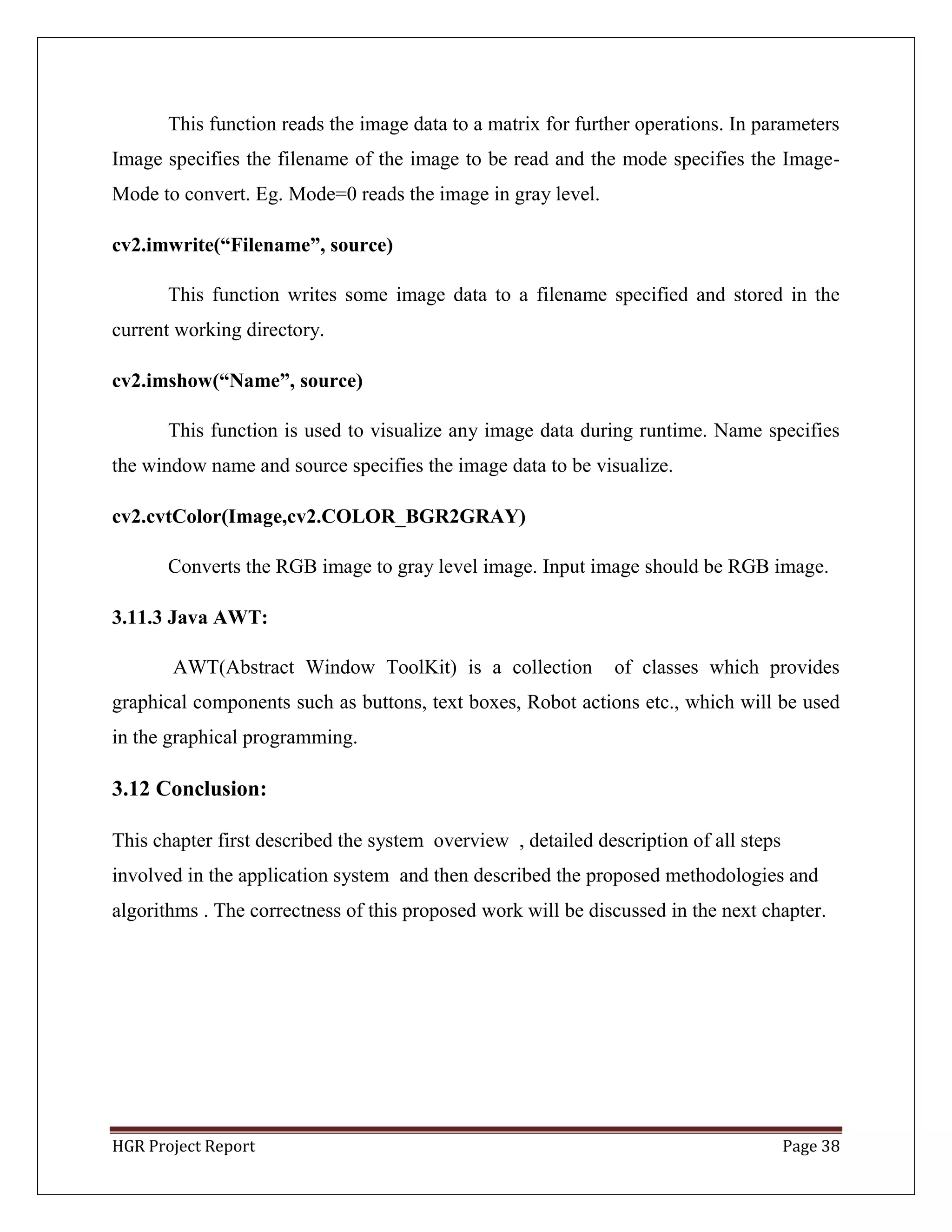

3.4 Color Segmentation:

In color-based thresholding, static values of hand color are used as thresholds. The hand's RGB values are specified as a range, from a minimum RGB value to a maximum RGB value, chosen after analysing the general range of color of human hands. The input image frame is then thresholded against these two values: any pixel within the range is considered a hand pixel and set to 1, and any pixel outside the range is considered a background pixel and set to 0.

Figure 8: Color Segmentation Example

Because this method places no constraints on the background, it is highly prone to noise. It works on general backgrounds provided the background contains no pixels whose color lies within the specified range. Otherwise, extra processing, such as selecting the largest contour (explained later), is required so that the resulting white blobs are not detected as the hand. The threshold values are difficult to select: for some users, the hand color may fall outside the specified range, leaving the system unable to detect that user's hand.

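import cv2
import numpy as np

# img is the input frame, assumed to be already captured from the webcam
lower_hand = np.array([0, 30, 60])     # minimum value per channel
upper_hand = np.array([20, 150, 250])  # maximum value per channel
mask = cv2.inRange(img, lower_hand, upper_hand)](https://image.slidesharecdn.com/ced375d5-78a6-43be-9712-a616a2febe93-150801100722-lva1-app6891/75/HGR-thesis-24-2048.jpg)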
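
The thresholding rule described in this section can be illustrated without OpenCV. The following is a minimal pure-Python sketch, not the project's implementation: the helper name `in_range` and the tiny 2x2 `frame` are hypothetical, and the bounds simply reuse the values from the snippet above. It marks a pixel as 1 only when every channel lies within the inclusive range, as the text describes (`cv2.inRange` applies the same inclusive test but writes 255 instead of 1).

```python
def in_range(image, lower, upper):
    """Return a binary mask: 1 where every channel is within [lower, upper], else 0."""
    mask = []
    for row in image:
        mask_row = []
        for pixel in row:
            # A pixel counts as "hand" only if all of its channels are in range.
            inside = all(lo <= value <= hi
                         for value, lo, hi in zip(pixel, lower, upper))
            mask_row.append(1 if inside else 0)
        mask.append(mask_row)
    return mask


# Bounds copied from the snippet above; the frame is a made-up 2x2 example.
lower_hand = (0, 30, 60)
upper_hand = (20, 150, 250)

frame = [
    [(10, 100, 200), (200, 200, 200)],  # in range, out of range
    [(0, 30, 60), (21, 100, 100)],      # on the lower bound, first channel too high
]

mask = in_range(frame, lower_hand, upper_hand)
print(mask)
```

Pixels that sit exactly on a bound are kept, which is why a background object whose color touches the range shows up as a white blob in the mask.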

![HGR Project Report Page 26

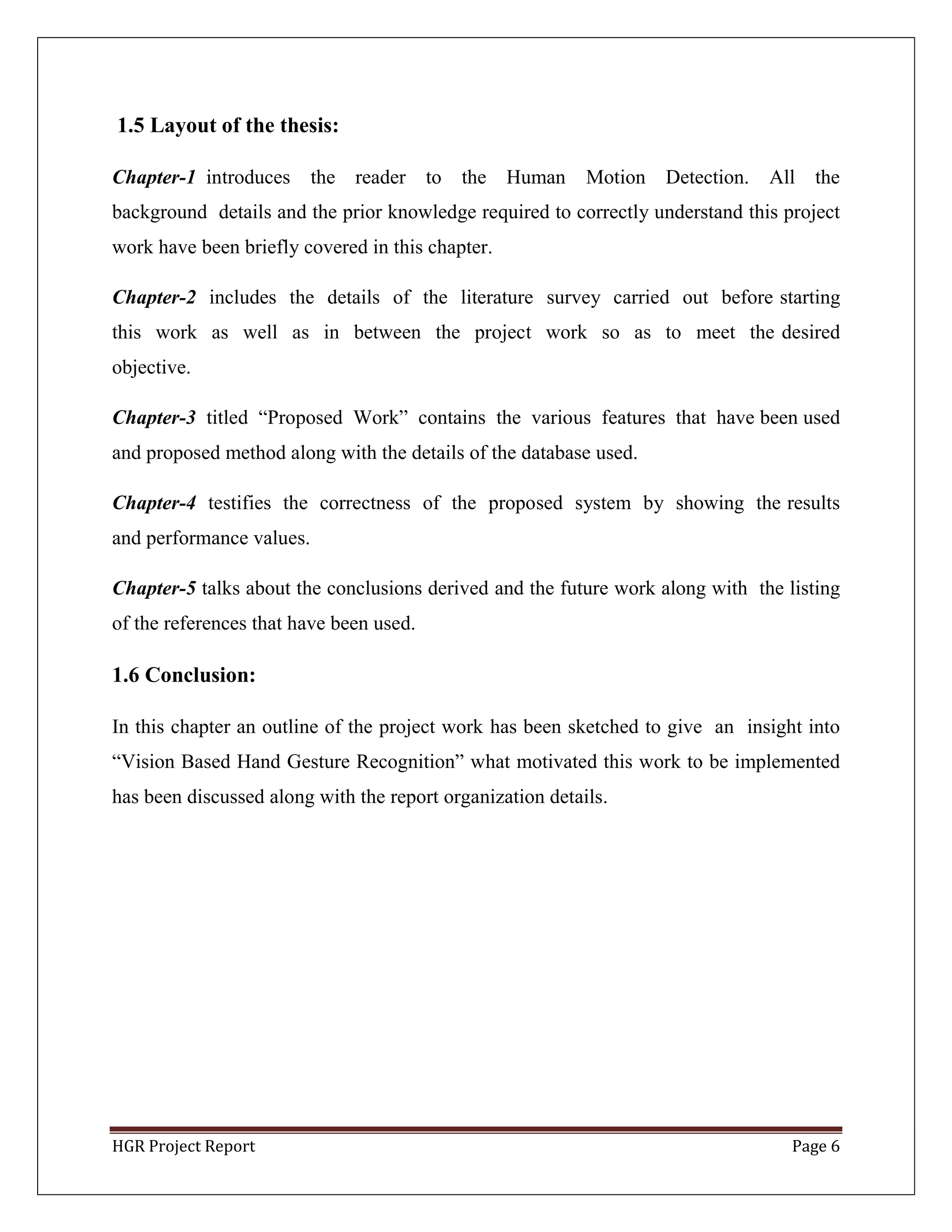

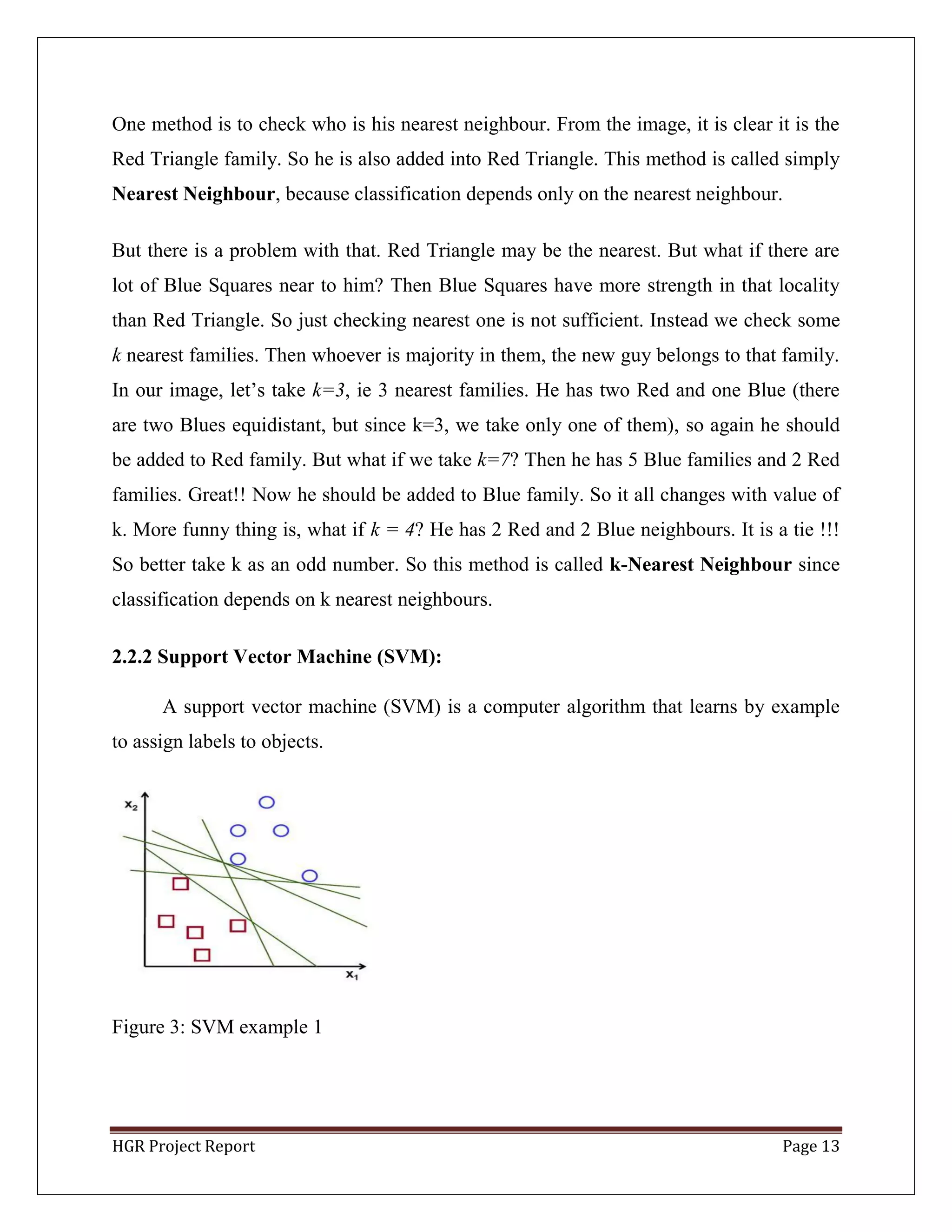

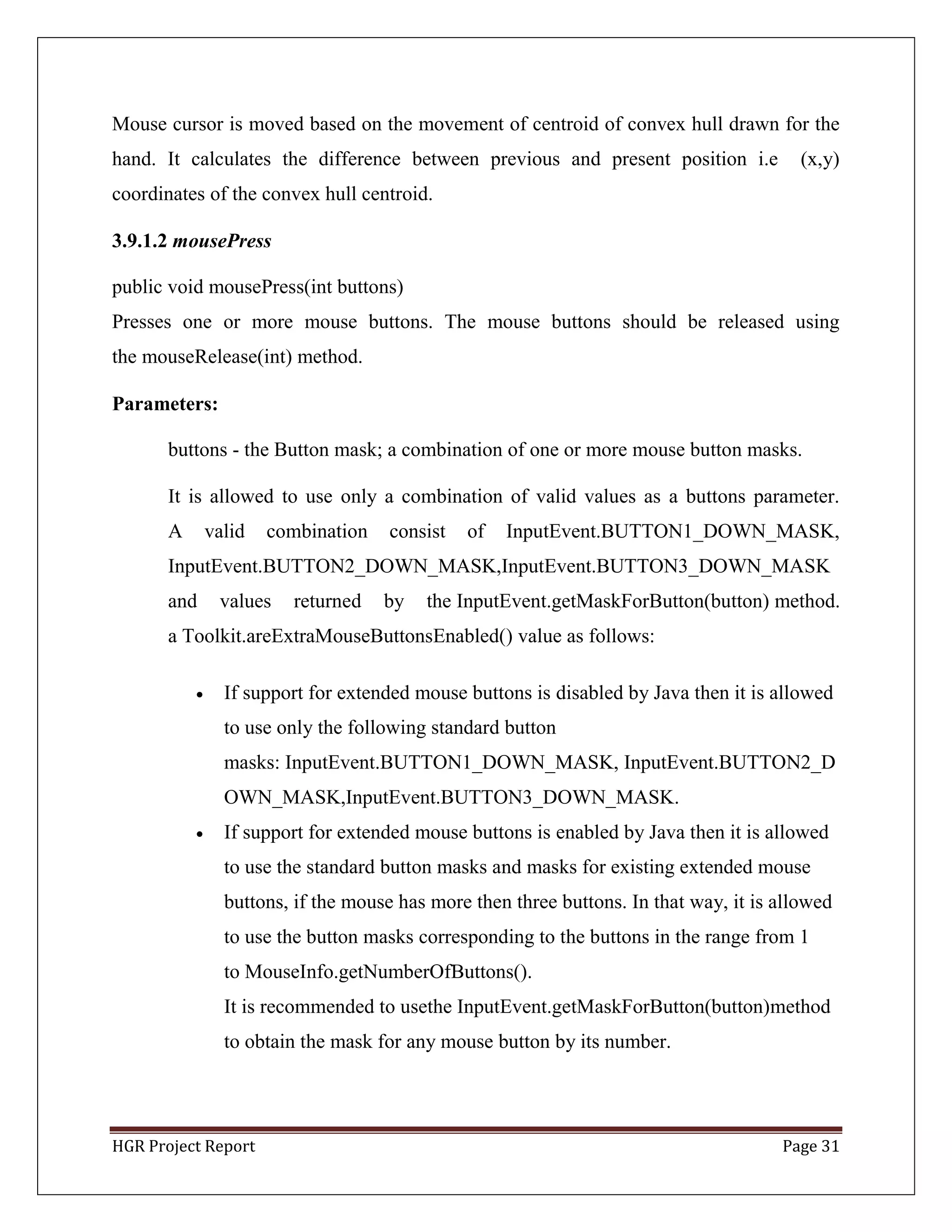

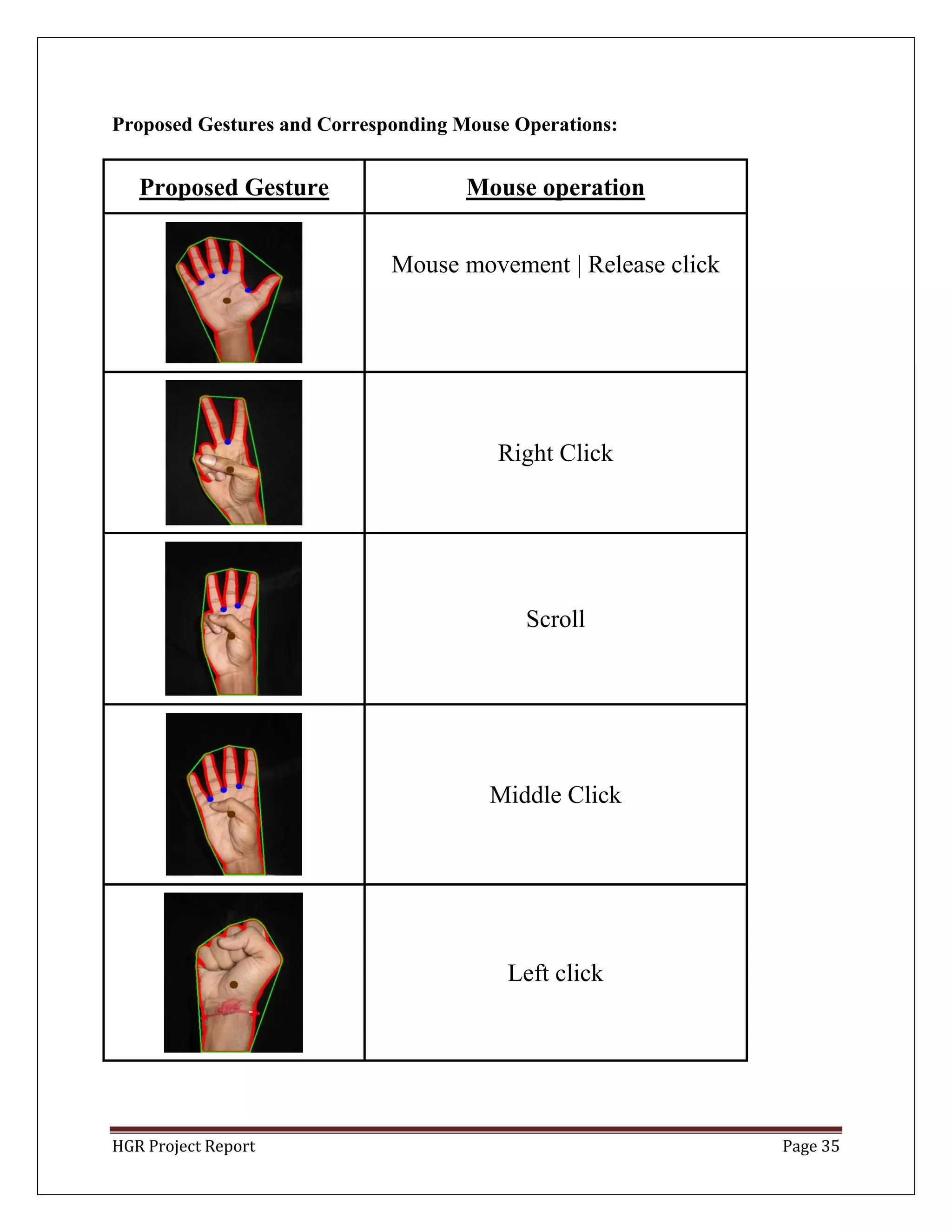

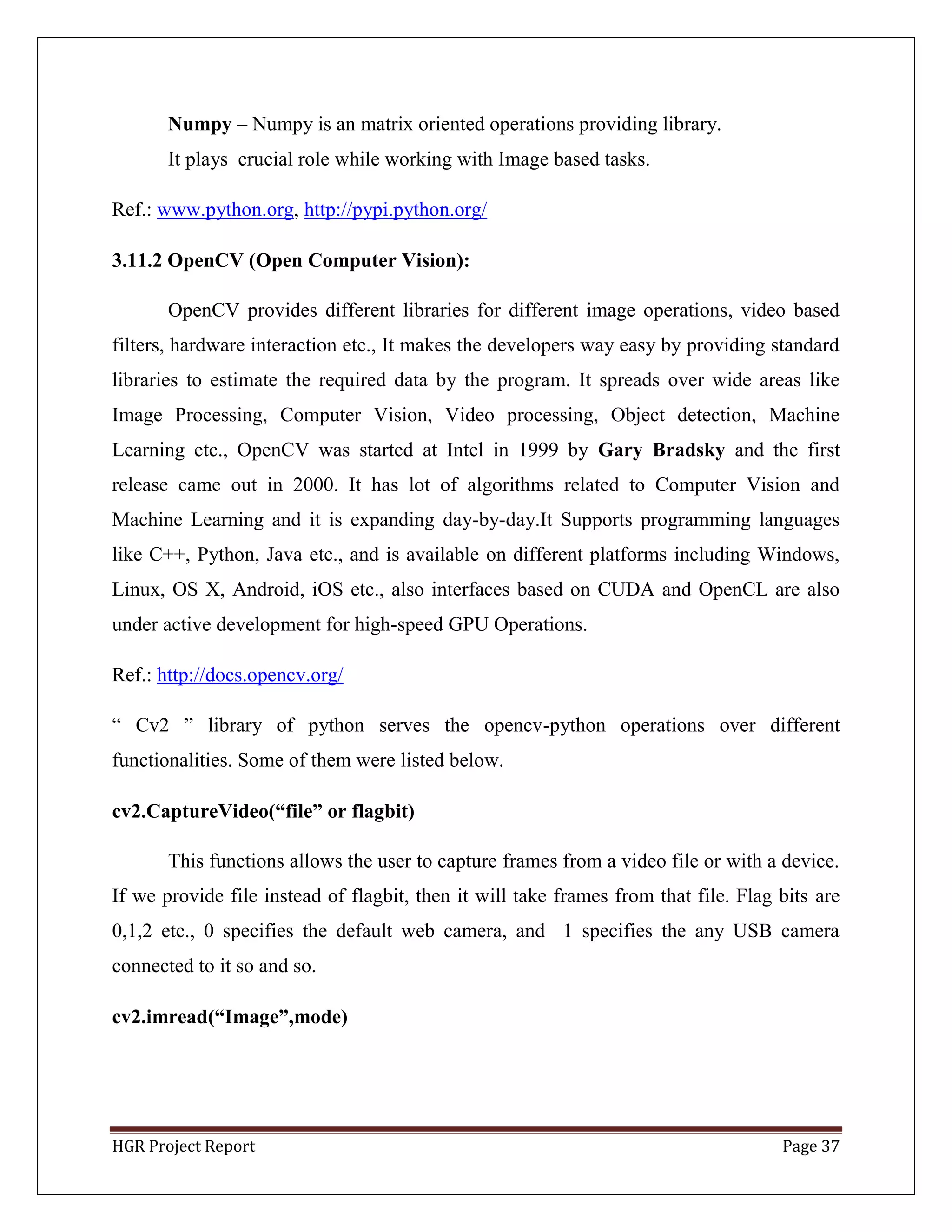

3.6 Convex Hull:

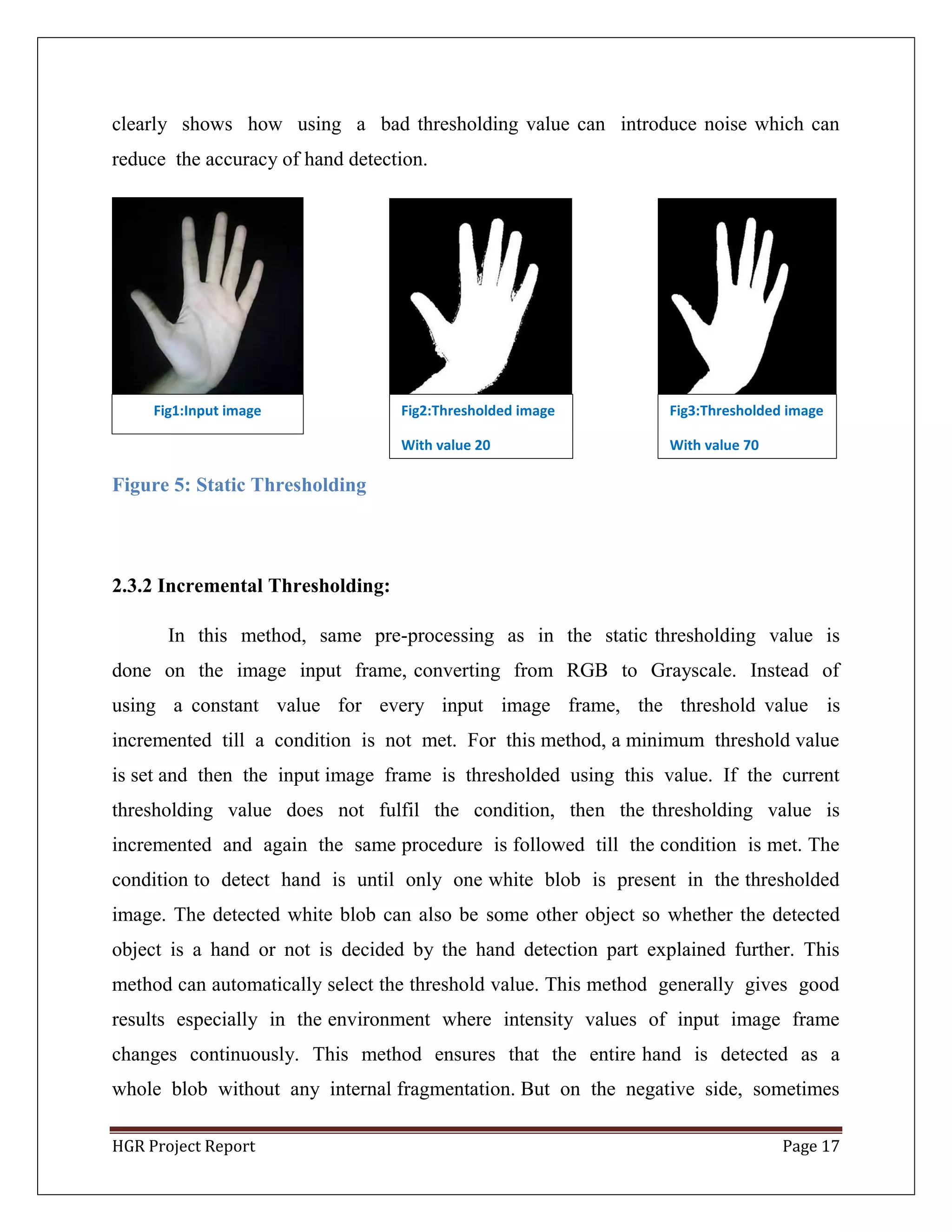

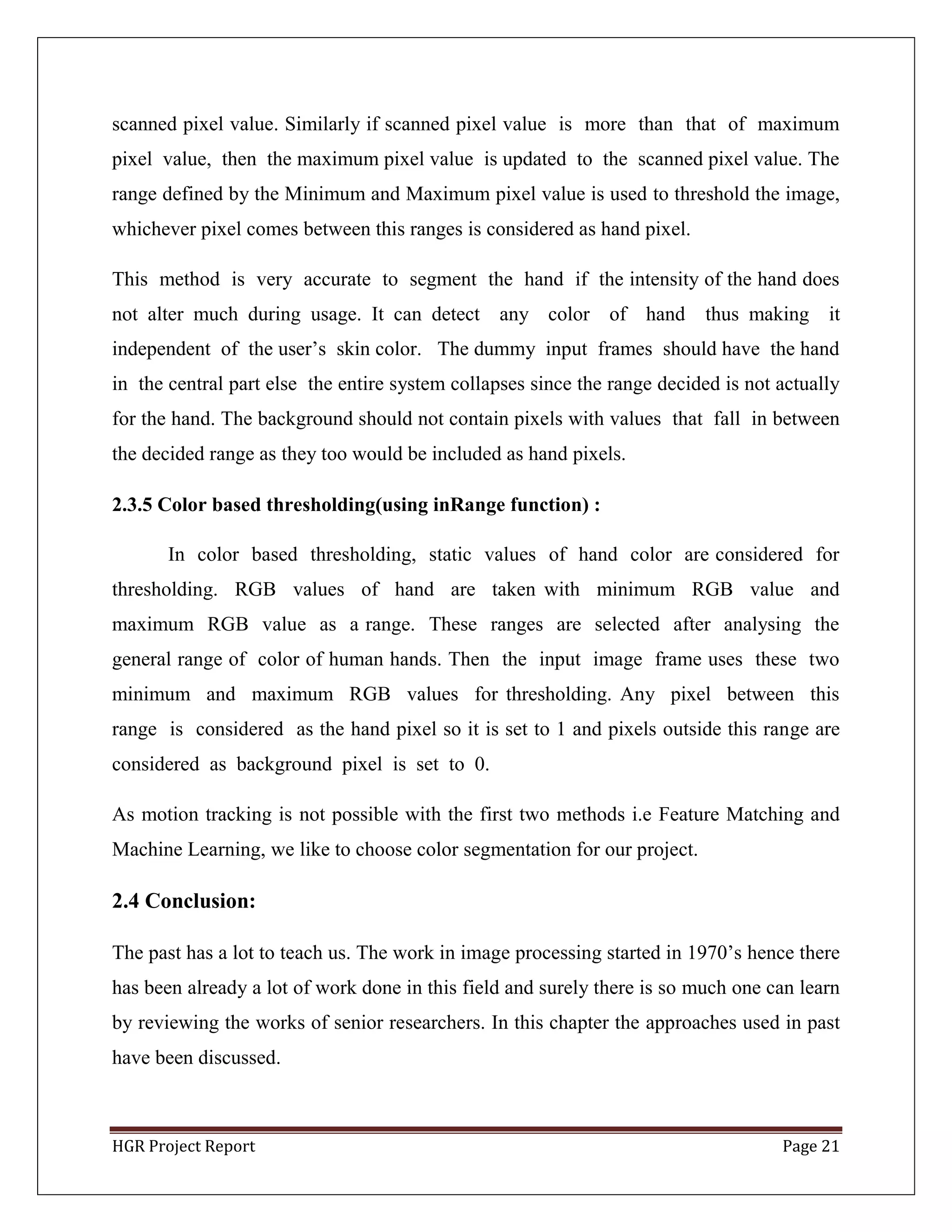

The convex hull of a set of points in Euclidean space is the smallest convex set that contains all of the given points. For example, when the set of points is a bounded subset of the plane, the convex hull can be visualized as the shape formed by a rubber band stretched around the points. The convex hull is drawn around the contour of the hand so that all contour points lie within it, forming an envelope around the hand contour. Figure 10 shows the convex hull formed around the detected hand.

Figure 10: Convex Hull of the Input Image

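hull = cv2.convexHull(points[, hull[, clockwise[, returnPoints]]])

Argument details:

points: the contour we pass in.

hull: the output; normally we omit it.

clockwise: orientation flag. If it is True, the output convex hull is oriented clockwise; otherwise, it is oriented counter-clockwise.

returnPoints: True by default, in which case the coordinates of the hull points are returned; if False, the indices of the contour points that form the hull are returned.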

To draw all the contours in an image (contour index -1 means "all"):

cv2.drawContours(img, contours, -1, (0,255,0), 3)

To draw an individual contour, say the 4th contour (index 3):

cv2.drawContours(img, contours, 3, (0,255,0), 3)

Most of the time, however, the method below is more useful, because the selected contour is held in its own variable:

cnt = contours[3]  # the same 4th contour

cv2.drawContours(img, [cnt], 0, (0,255,0), 3)](https://image.slidesharecdn.com/ced375d5-78a6-43be-9712-a616a2febe93-150801100722-lva1-app6891/75/HGR-thesis-26-2048.jpg)
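
To make the "rubber band" picture of the convex hull concrete, here is a minimal pure-Python sketch using Andrew's monotone chain algorithm. This is a swapped-in illustration: it is not necessarily the algorithm `cv2.convexHull` uses internally, and the function names and sample points are hypothetical. It returns the hull vertices and drops every point that lies inside the envelope.

```python
def cross(o, a, b):
    """Z-component of (a - o) x (b - o); positive for a counter-clockwise turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Return the hull vertices, counter-clockwise when the y-axis points up.

    In image coordinates, where y grows downward, the same vertex order
    appears clockwise on screen.
    """
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    # Build the lower chain, discarding points that do not make a left turn.
    lower = []
    for p in pts:
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)

    # Build the upper chain the same way, walking the points in reverse.
    upper = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)

    # The last point of each chain is the first point of the other one.
    return lower[:-1] + upper[:-1]


# A square outline plus two interior points, standing in for contour points.
contour_points = [(0, 0), (4, 0), (4, 4), (0, 4), (2, 2), (1, 3)]
hull = convex_hull(contour_points)
print(hull)
```

The interior points (2, 2) and (1, 3) do not appear in the result, mirroring how the hull of a hand contour skips the valleys between fingers.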

![HGR Project Report Page 44

REFERENCES:

[1] G. R. S. Murthy, R. S. Jadon. (2009). “A Review of Vision Based Hand Gestures Recognition,” International Journal of Information Technology and Knowledge Management, vol. 2(2), pp. 405-410.

[2] R. Lockton. “Hand Gesture Recognition Using Computer Vision.” http://research.microsoft.com/en-us/um/people/awf/bmvc02/project.pdf

[3] S. Mitra, T. Acharya. “Gesture recognition: a survey,” IEEE Trans Syst Man Cybern Part C Appl Rev 37(3):311–324 (2007).

[4] Fakhreddine Karray, Milad Alemzadeh, Jamil Abou Saleh, Mo Nours Arab. (2008). “Human Computer Interaction: Overview on State of the Art,” International Journal on Smart Sensing and Intelligent Systems, Vol. 1(1).

[5] R. Fergus, P. Perona, and A. Zisserman. Object class recognition by unsupervised scale-invariant learning. In CVPR, volume 2, pages 264–271, 2003.

[6] S. Ullman, M. Vidal-Naquet, and E. Sali. Visual features of intermediate complexity and their use in classification. Nature Neuroscience, 5(7):682–687, 2002.

[7] M. Weber, M. Welling, and P. Perona. Unsupervised learning of models for recognition. In ECCV, Dublin, Ireland, 2000.

[8] D. G. Lowe, “Distinctive image features from scale invariant keypoints,” International Journal of Computer Vision, 2004.

[9] Mikolajczyk, K. 2002. Detection of local features invariant to affine transformations, Ph.D. thesis, Institut National Polytechnique de Grenoble, France.

[10] Pope, A.R., and Lowe, D.G. 2000. Probabilistic models of appearance for 3-D object recognition. International Journal of Computer Vision, 40(2):149-167.

[11] Ke, Y., Sukthankar, R.: PCA-SIFT: A more distinctive representation for local image descriptors. In: CVPR (2). (2004) 506–513.

[12] A. Blake and M. Isard. 3D position, attitude and shape input using video tracking of hands and lips. In Proceedings of SIGGRAPH 94, pages 185–192, 1994.

[13] J. Segen. Gest: a learning computer vision system that recognizes gestures. In Machine Learning IV. Morgan Kaufmann, 1992. Edited by Michalski et al.](https://image.slidesharecdn.com/ced375d5-78a6-43be-9712-a616a2febe93-150801100722-lva1-app6891/75/HGR-thesis-44-2048.jpg)

![HGR Project Report Page 45

[14] J. M. Rehg and T. Kanade. Digiteyes: vision-based human hand tracking. Technical Report CMU-CS-93-220, Carnegie Mellon School of Computer Science, Pittsburgh, PA 15213, 1993.

[15] D. Rubine and P. McAvinney. Programmable finger-tracking instrument controllers. Computer Music Journal, 14(1):26–41, 1990.

[16] Richard Watson, “Gesture recognition techniques,” Technical Report No. TCD-CS-93-11, Trinity College, Department of Computer Science, Dublin, July 1993.

[17] Chan Wah Ng, Surendra Ranganath, “Real-time gesture recognition system and application,” Image Vision Comput, 20(13-14):993-1007, 2002.

[18] Thomas G. Zimmerman, Jaron Lanier, Chuck Blanchard, Steve Bryson, Young Harvill, “A hand gesture interface device,” SIGCHI/GI Proceedings, conference on Human factors in computing systems and graphics interface, p. 189-192, April 05-09, Toronto, Ontario, Canada, 1987.

[19] Lalit Gupta and Suwei Ma, “Gesture-Based Interaction and Communication: Automated Classification of Hand Gesture Contours,” IEEE Transactions on Systems, Man, and Cybernetics, Part C: Applications and Reviews, vol. 31, no. 1, February 2001.](https://image.slidesharecdn.com/ced375d5-78a6-43be-9712-a616a2febe93-150801100722-lva1-app6891/75/HGR-thesis-45-2048.jpg)