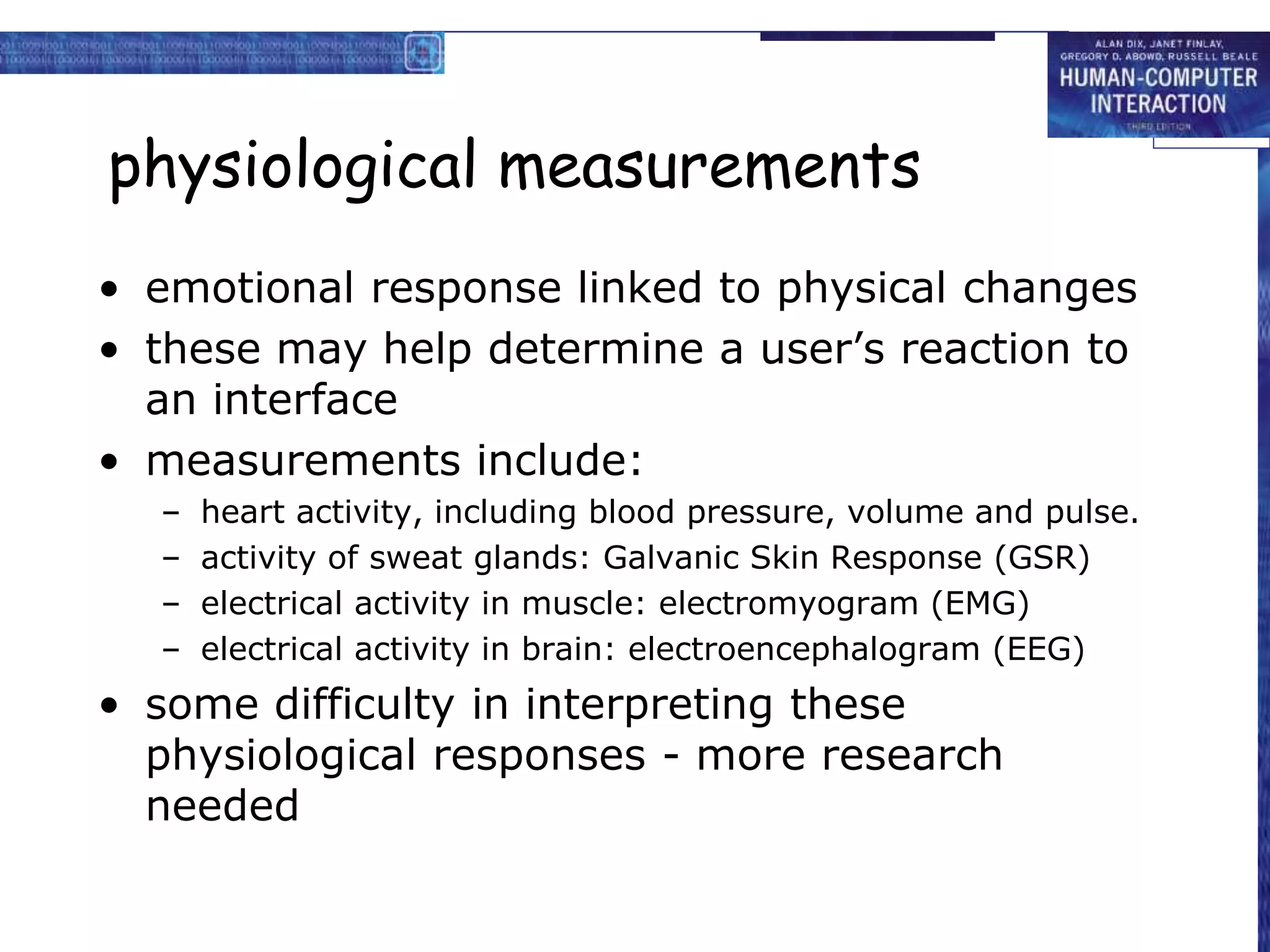

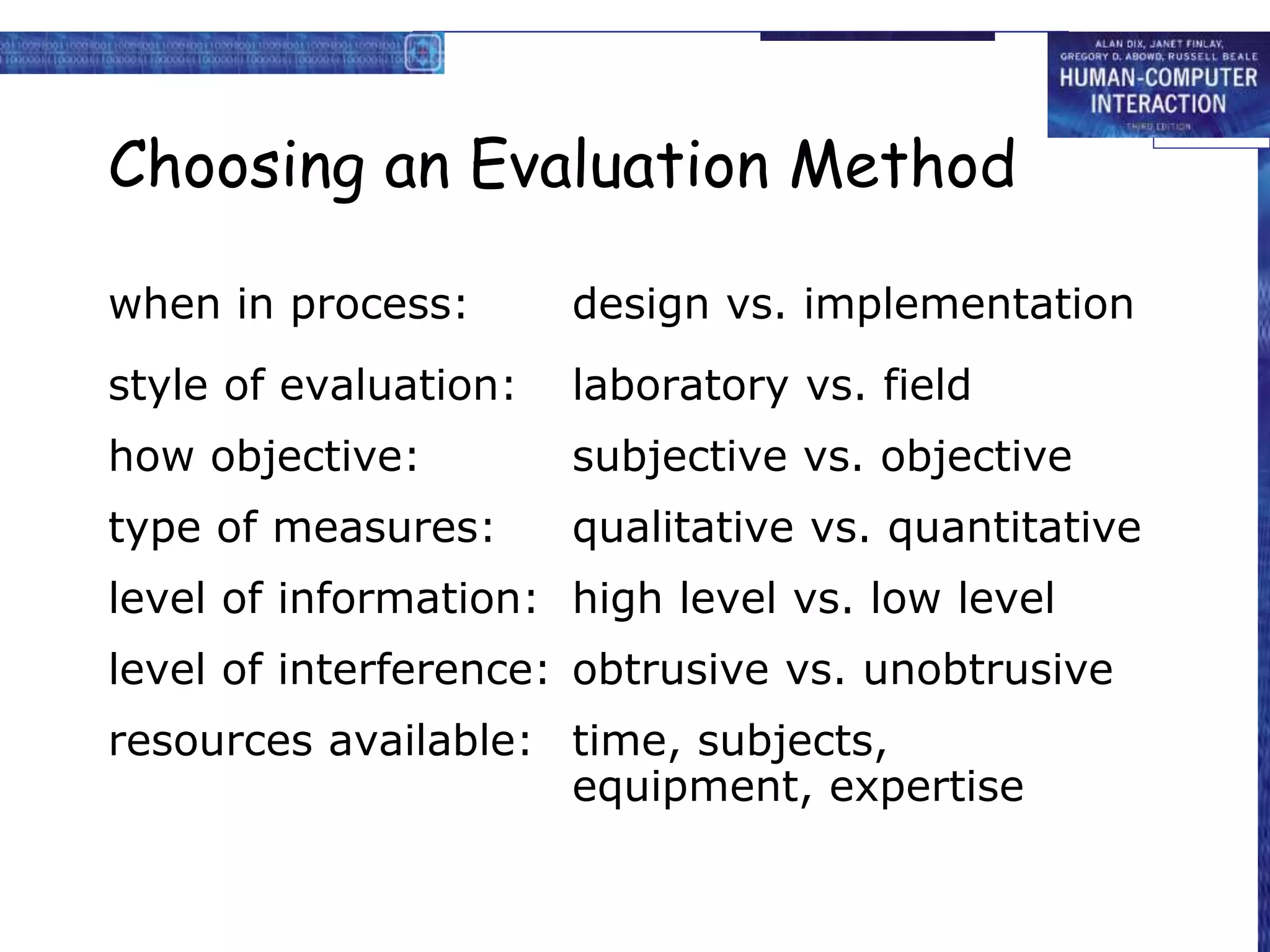

This document surveys techniques for evaluating interactive systems and user interfaces: cognitive walkthroughs, heuristic evaluation, experimental evaluation with users, and field studies. It also covers methods for gathering user data, including think-aloud protocols, cooperative evaluation, interviews, questionnaires, and physiological measures such as eye tracking. The goal of evaluation is to assess a system's functionality, its effect on the user's experience, and any specific problems, systematically and throughout the design process.