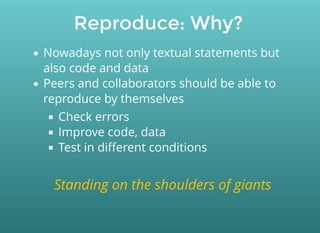

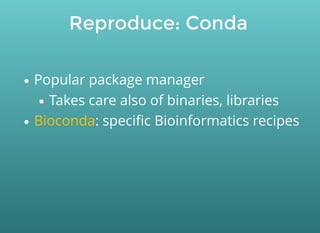

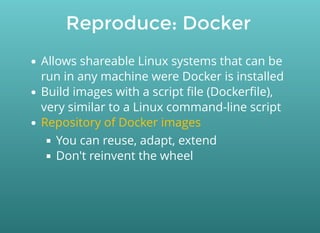

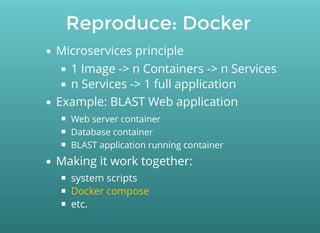

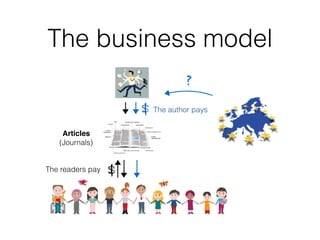

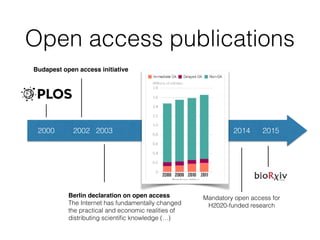

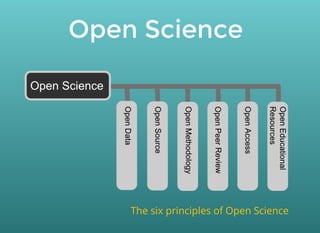

This document discusses open science practices for publishing and sharing research outputs like publications, data, code, and software. It covers topics like open access, documenting work, version control, reproducibility, and using platforms and workflows like Docker, Nextflow, and Galaxy to package and share research objects. The overall message is that applying open science principles of transparency, accessibility, and reproducibility can help researchers collaborate and build on each other's work.

![Tag and track: Where?

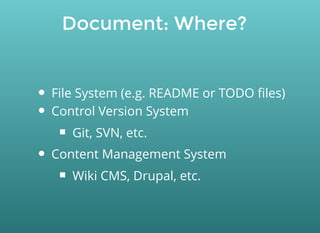

Code, documentation:

Control Version System (Git, SVN, etc.)

Interfaces:

(local installation)

Wiki CMS (e.g. )

Data, files

Plain Git (small files) or

Document Management Systems

Github

Gitlab

[Semantic] MediaWiki

Git with large files](https://image.slidesharecdn.com/oaweek17fosterwebinarguillaumetoni-171024122025/85/Open-Access-Week-2017-Life-Sciences-and-Open-Sciences-worfkflows-and-tools-34-320.jpg)