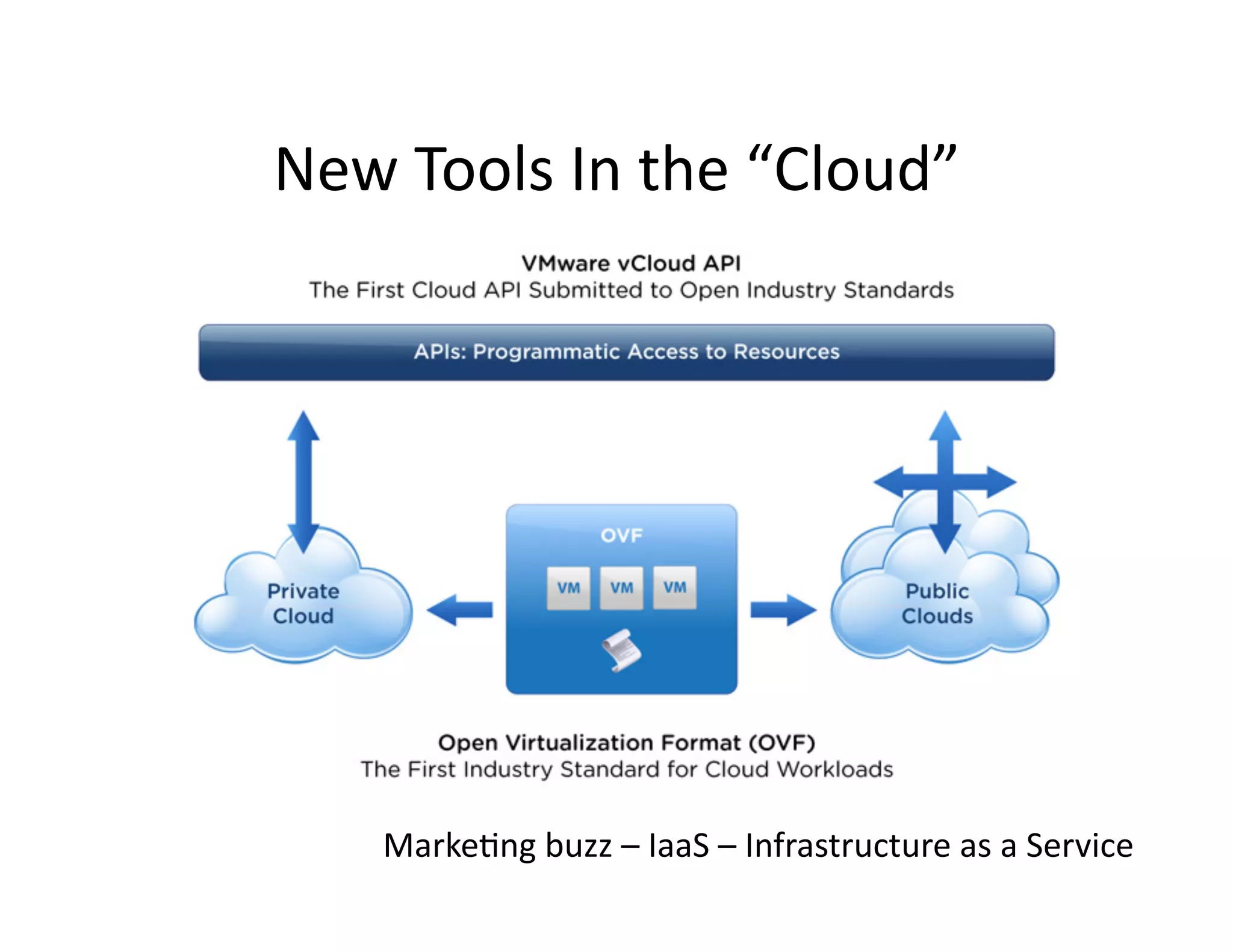

The document discusses the management of stored data. It emphasizes that about 80% of data is rarely accessed while the remaining 20% or so is accessed predictably, which suggests balancing flash and disk storage. It highlights the importance of predictability and efficiency in modern infrastructure, including cloud services and caching strategies. It also notes the significant financial implications of data storage and concludes with a recommendation to invest in flash storage where possible.