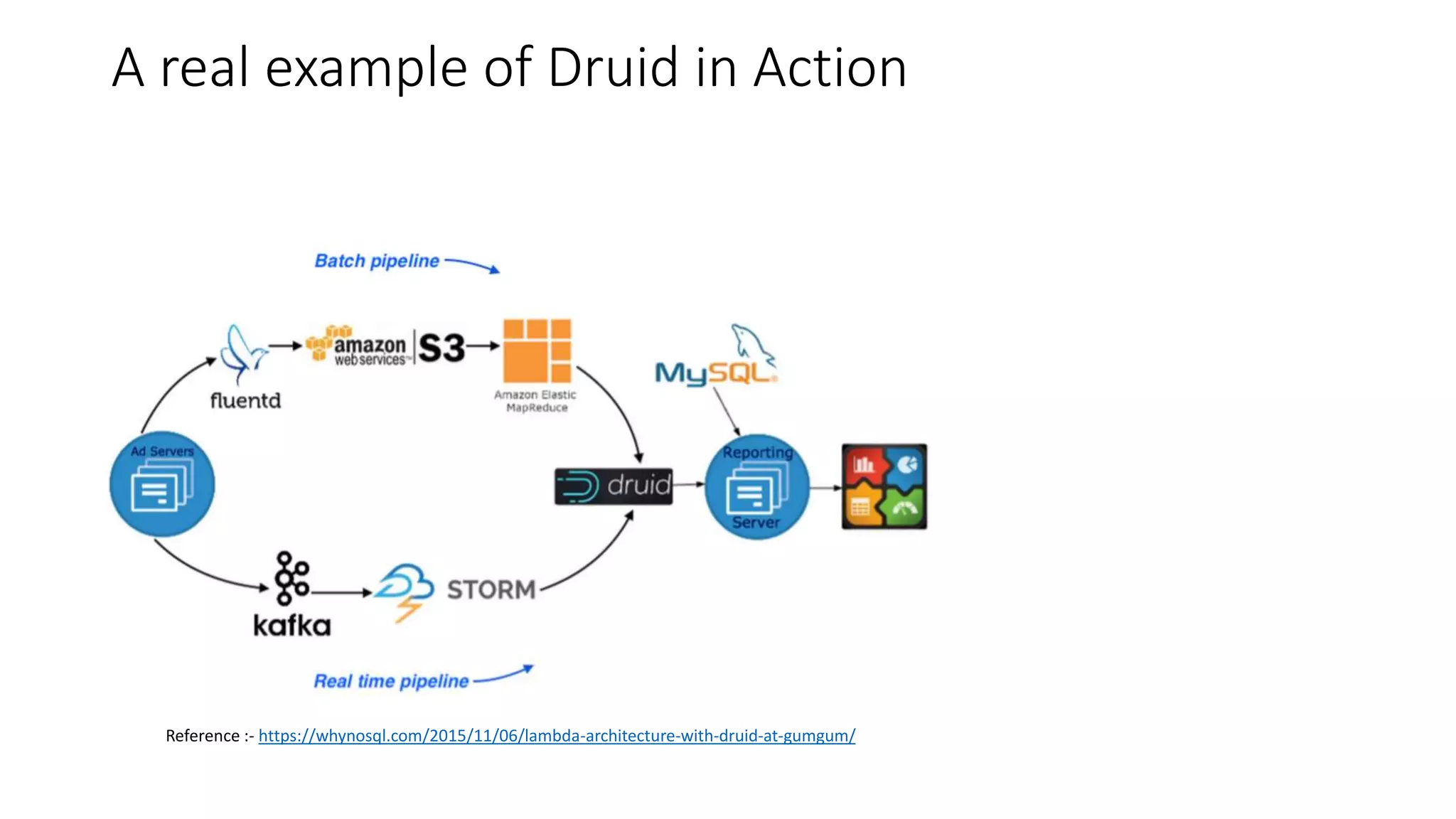

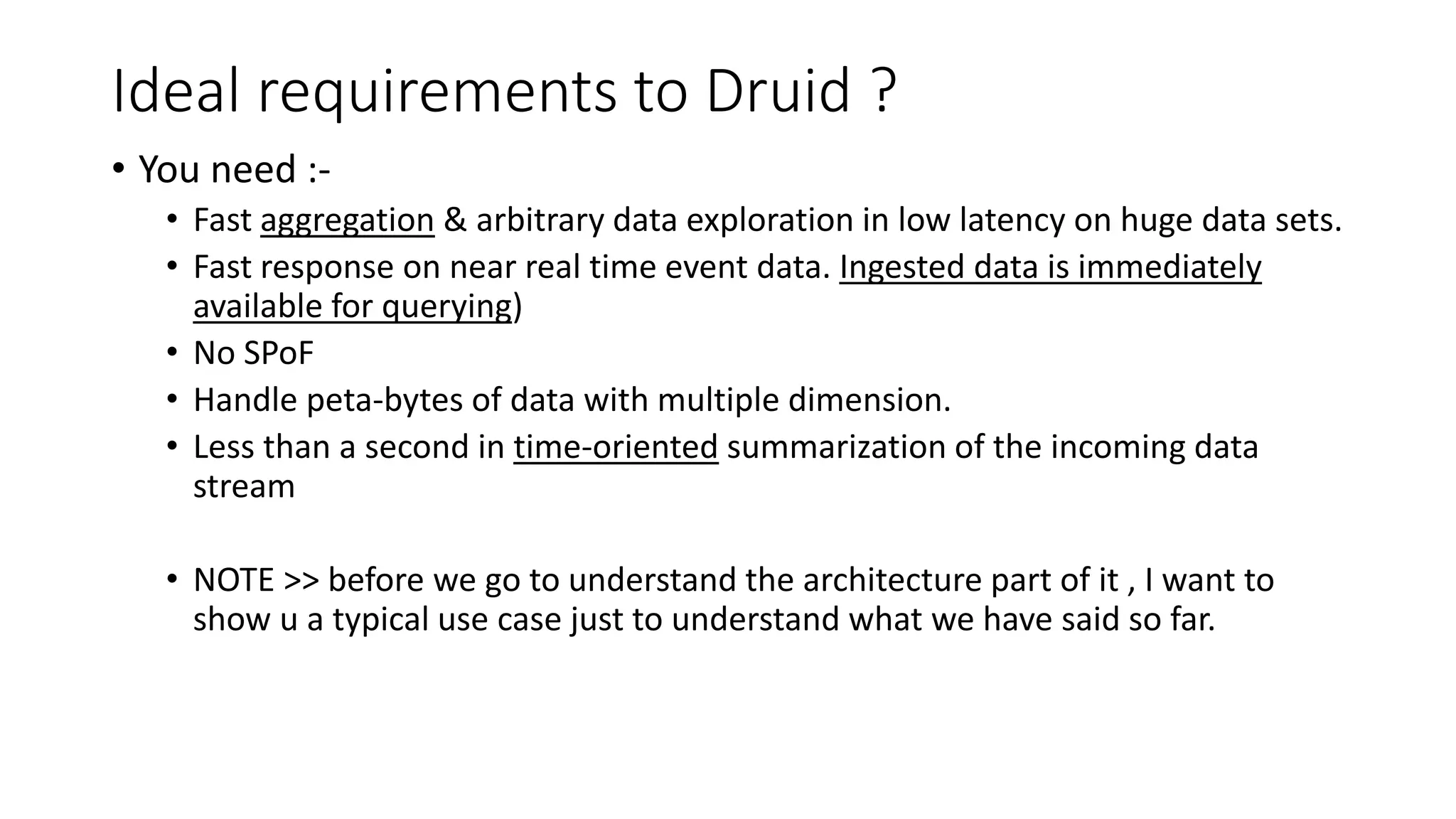

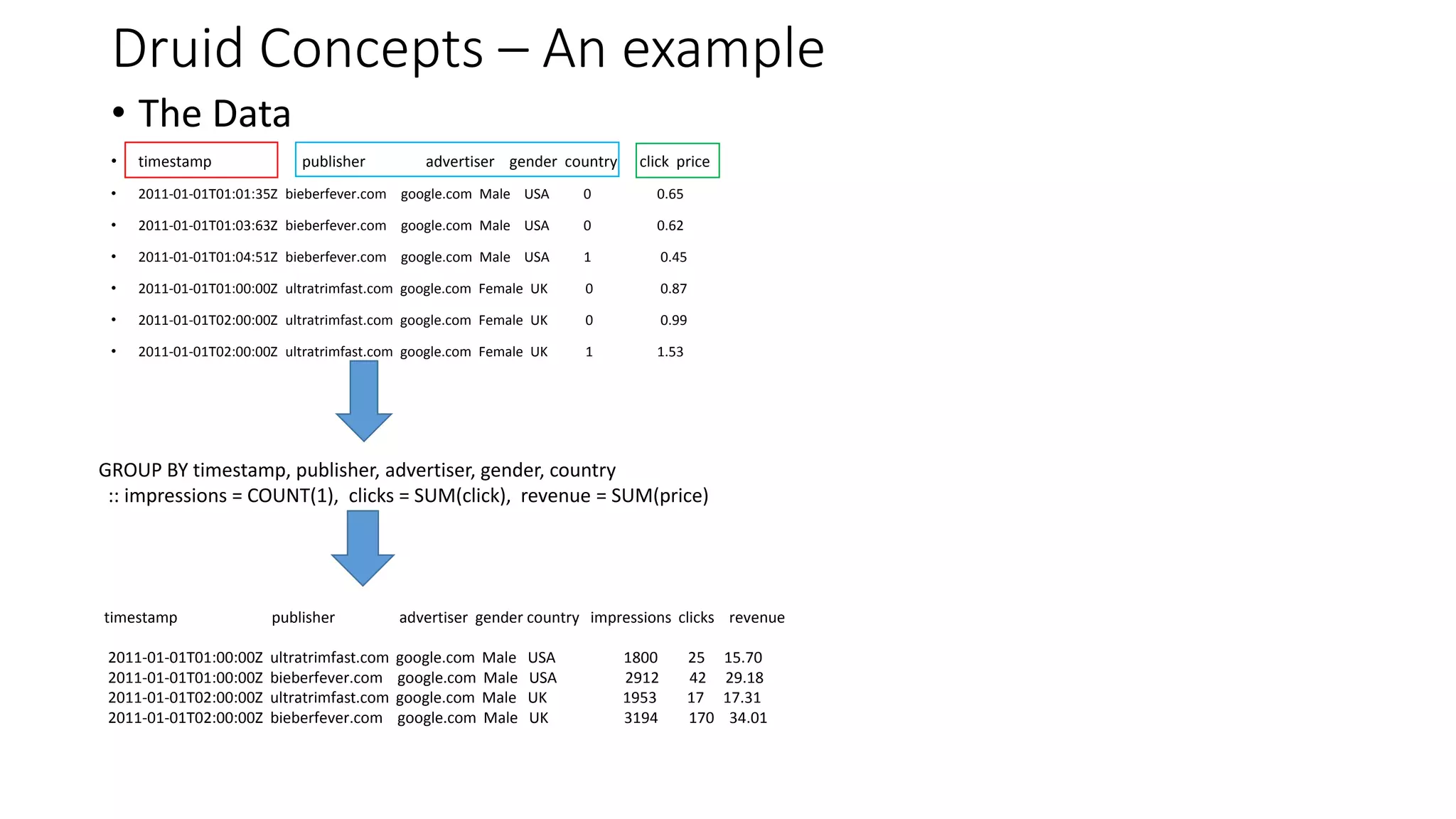

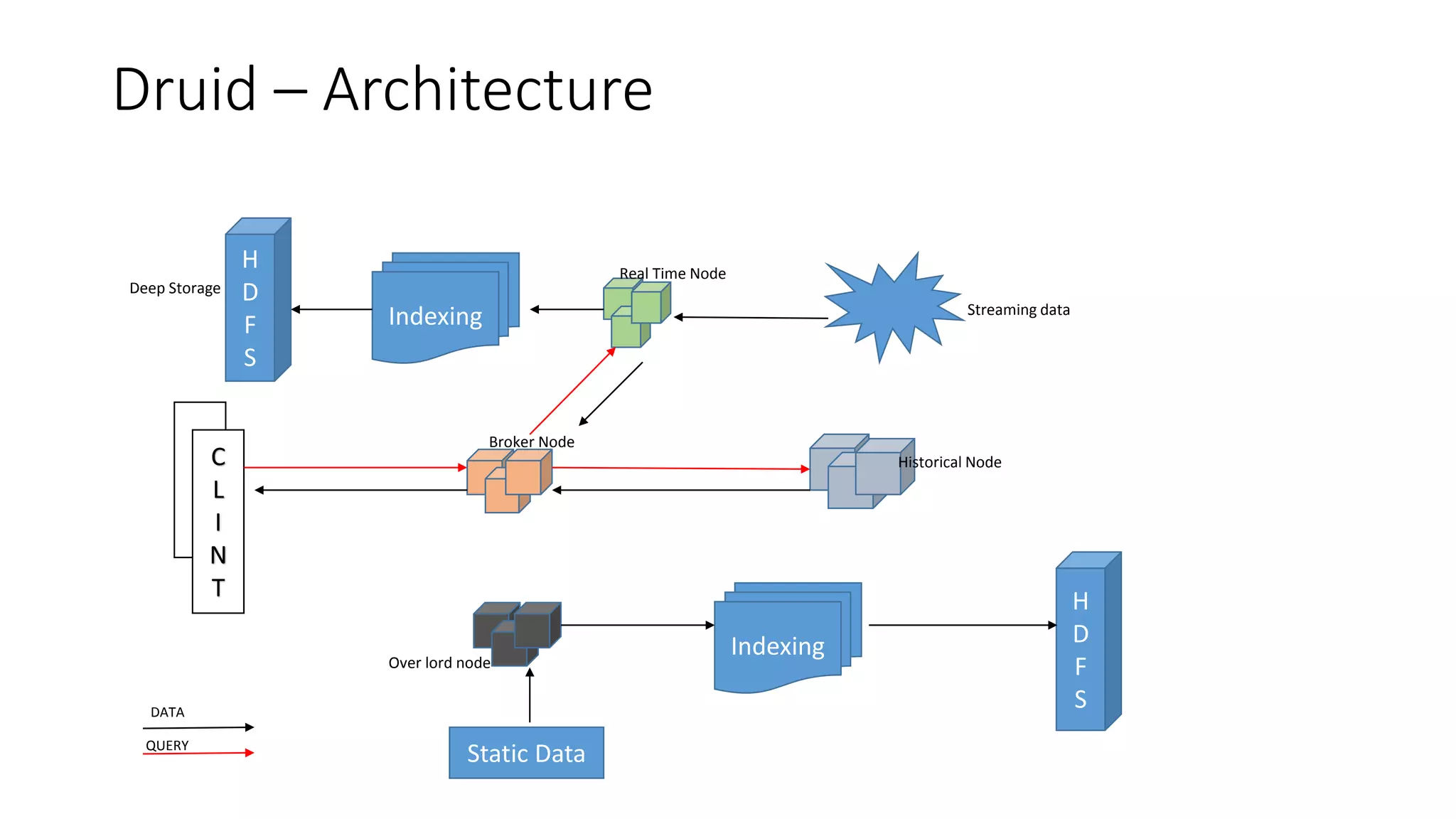

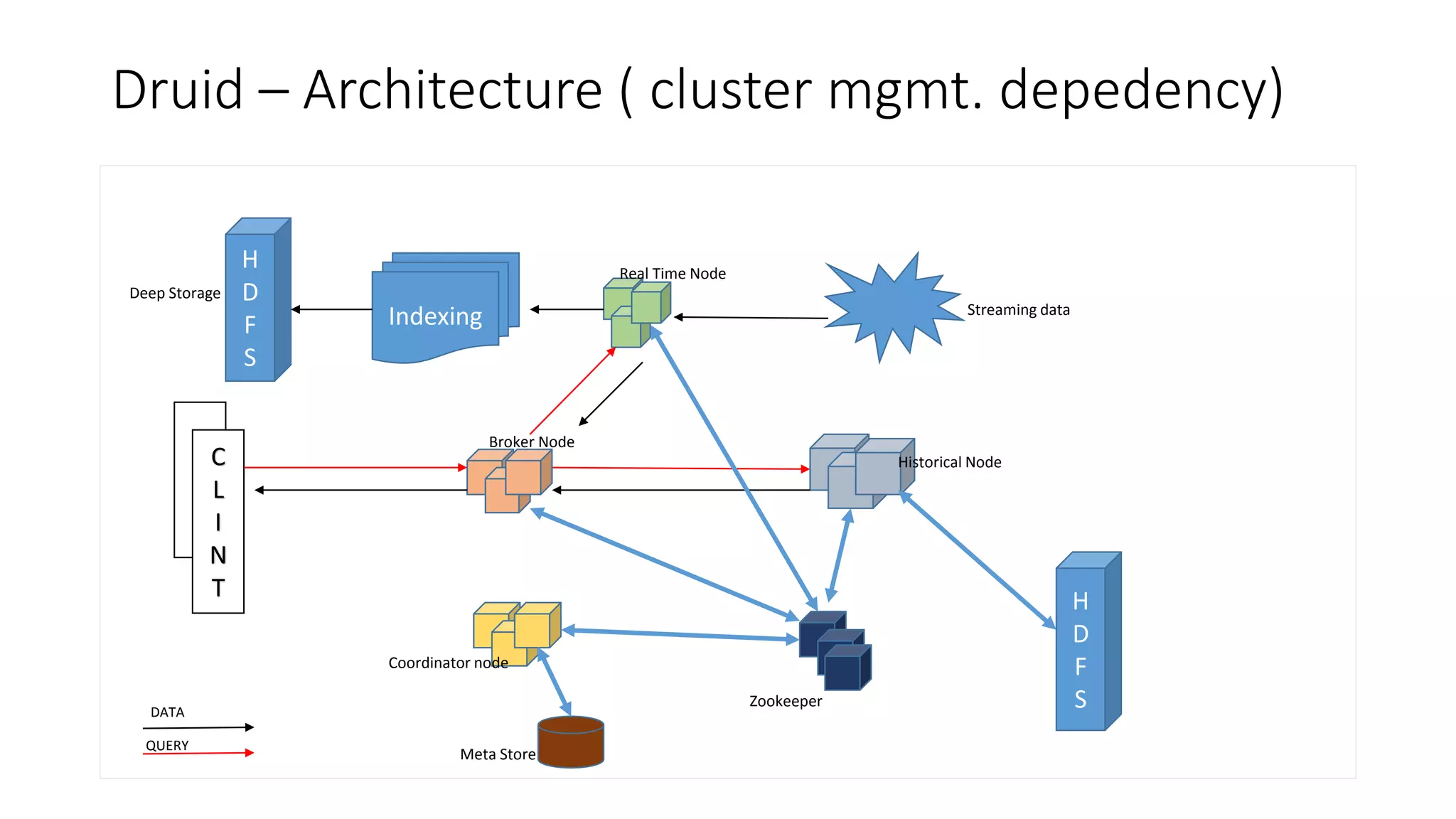

Druid is an open-source, distributed, column-oriented data store designed for low-latency ingestion and fast ad hoc analytics, with strong support for real-time streaming ingestion. It has been adopted by companies such as Metamarkets, Airbnb, Alibaba, Cisco, and eBay for applications involving high-volume event processing and complex queries. Key features include sub-second response times for aggregations, scalability to petabytes of data, and efficient storage and querying.

![Query commands](https://image.slidesharecdn.com/understanding-apache-druid-170714112335/75/Understanding-apache-druid-17-2048.jpg)

Query commands:

- TopN
  - Returns the top N pages by latency, in descending order.
  - `curl -L -H'Content-Type: application/json' -XPOST --data-binary @quickstart/Test/query/pageviewsLatforCount-top-latency-pages.json http://localhost:8082/druid/v2/?pretty`
- Timeseries
  - Returns the total latency, filtered by user = "alice", with `"granularity": "day"` (or `"all"`).
  - `curl -L -H'Content-Type: application/json' -XPOST --data-binary @quickstart/Test/query/pageviewsLatforCount-timeseries-pages.json http://localhost:8082/druid/v2/?pretty`
- groupBy
  - A) Returns latency aggregated by user and url.
  - `curl -L -H'Content-Type: application/json' -XPOST --data-binary @quickstart/Test/query/pageviewsLatforCount-aggregateLatencyGrpByURLUser.json http://localhost:8082/druid/v2/?pretty`
  - B) Returns the page count (i.e., the number of URLs accessed) aggregated by user.
  - `curl -L -H'Content-Type: application/json' -XPOST --data-binary @quickstart/Test/query/pageviewsLatforCount-countURLAccessedGrpByUser.json http://localhost:8082/druid/v2/?pretty`
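The curl commands above post JSON files whose contents are not shown on the slide. As a rough sketch of what such query bodies might look like, the Python below builds one native Druid query of each type and posts it to the same broker endpoint. The datasource name (`pageviews`), the column names (`url`, `user`, `latencyMs`), the interval, and all function names are assumptions made for illustration; they are not taken from the original files.

```python
import json
import urllib.request

# Broker endpoint taken from the curl commands on the slide.
BROKER_URL = "http://localhost:8082/druid/v2/?pretty"

# Assumed for illustration only: the slide shows neither the datasource
# nor its columns, so these names are hypothetical.
DATA_SOURCE = "pageviews"
INTERVALS = ["2017-01-01/2018-01-01"]
LATENCY_SUM = {"type": "doubleSum", "name": "total_latency", "fieldName": "latencyMs"}
ROW_COUNT = {"type": "count", "name": "rows"}  # counts stored rows, which may be rolled up


def top_latency_pages(n=5):
    """TopN: the n urls with the highest summed latency (descending by default)."""
    return {
        "queryType": "topN",
        "dataSource": DATA_SOURCE,
        "intervals": INTERVALS,
        "granularity": "all",
        "dimension": "url",
        "metric": "total_latency",
        "threshold": n,
        "aggregations": [LATENCY_SUM],
    }


def latency_timeseries(user="alice", granularity="day"):
    """Timeseries: summed latency per time bucket, filtered to one user."""
    return {
        "queryType": "timeseries",
        "dataSource": DATA_SOURCE,
        "intervals": INTERVALS,
        "granularity": granularity,  # "all" collapses the result to one bucket
        "filter": {"type": "selector", "dimension": "user", "value": user},
        "aggregations": [LATENCY_SUM],
    }


def group_by(dimensions, aggregation):
    """groupBy: one aggregated row per distinct combination of dimensions."""
    return {
        "queryType": "groupBy",
        "dataSource": DATA_SOURCE,
        "intervals": INTERVALS,
        "granularity": "all",
        "dimensions": list(dimensions),
        "aggregations": [aggregation],
    }


def post_query(query, url=BROKER_URL):
    """POST a query as JSON, mirroring curl -XPOST --data-binary @file.json."""
    request = urllib.request.Request(
        url,
        data=json.dumps(query).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))
```

With a broker running locally, `post_query(group_by(["user", "url"], LATENCY_SUM))` would correspond to groupBy case A and `post_query(group_by(["user"], ROW_COUNT))` to case B. Writing `json.dumps(top_latency_pages())` to a file gives a body that can be passed to curl with `--data-binary @file`.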