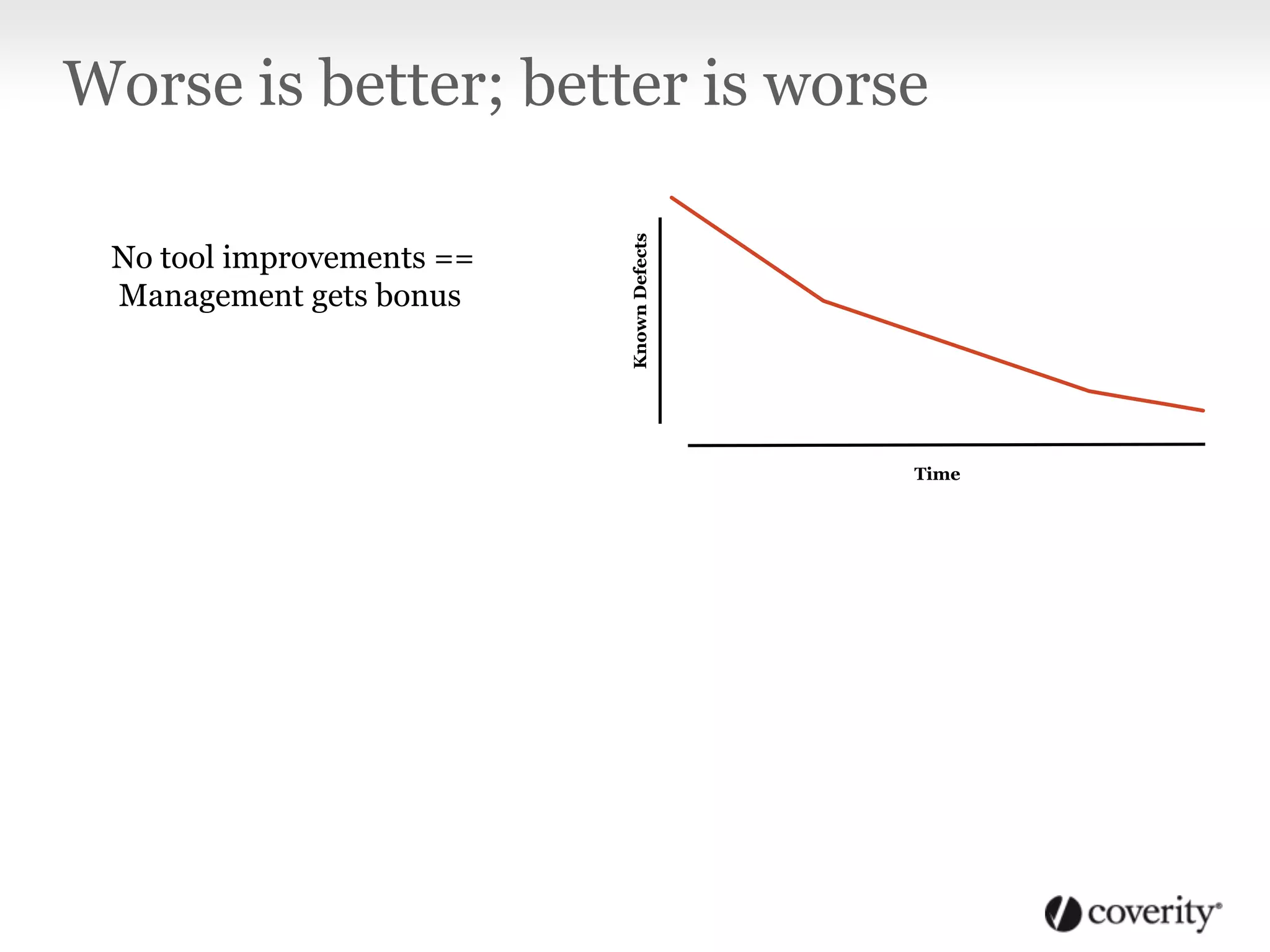

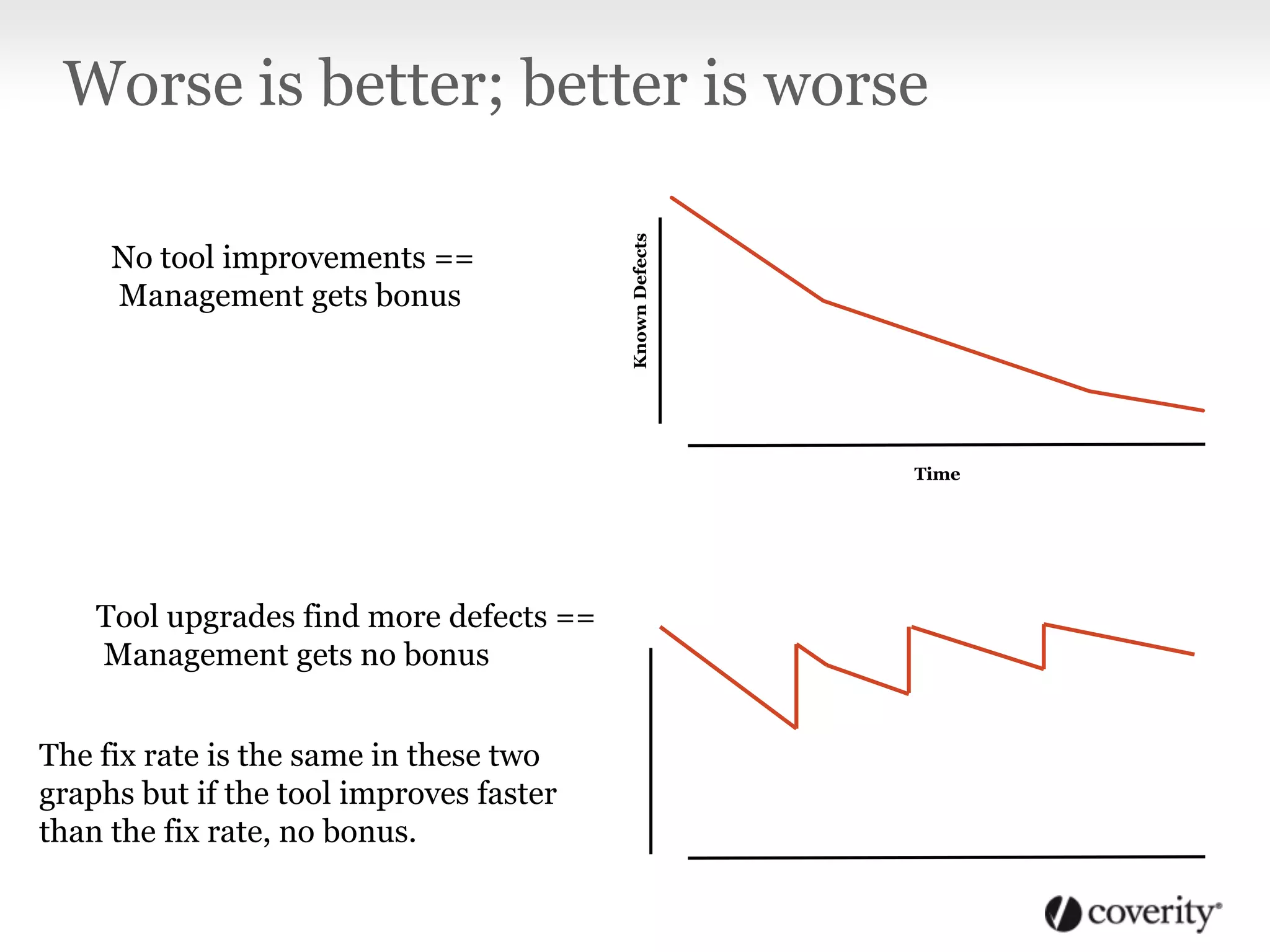

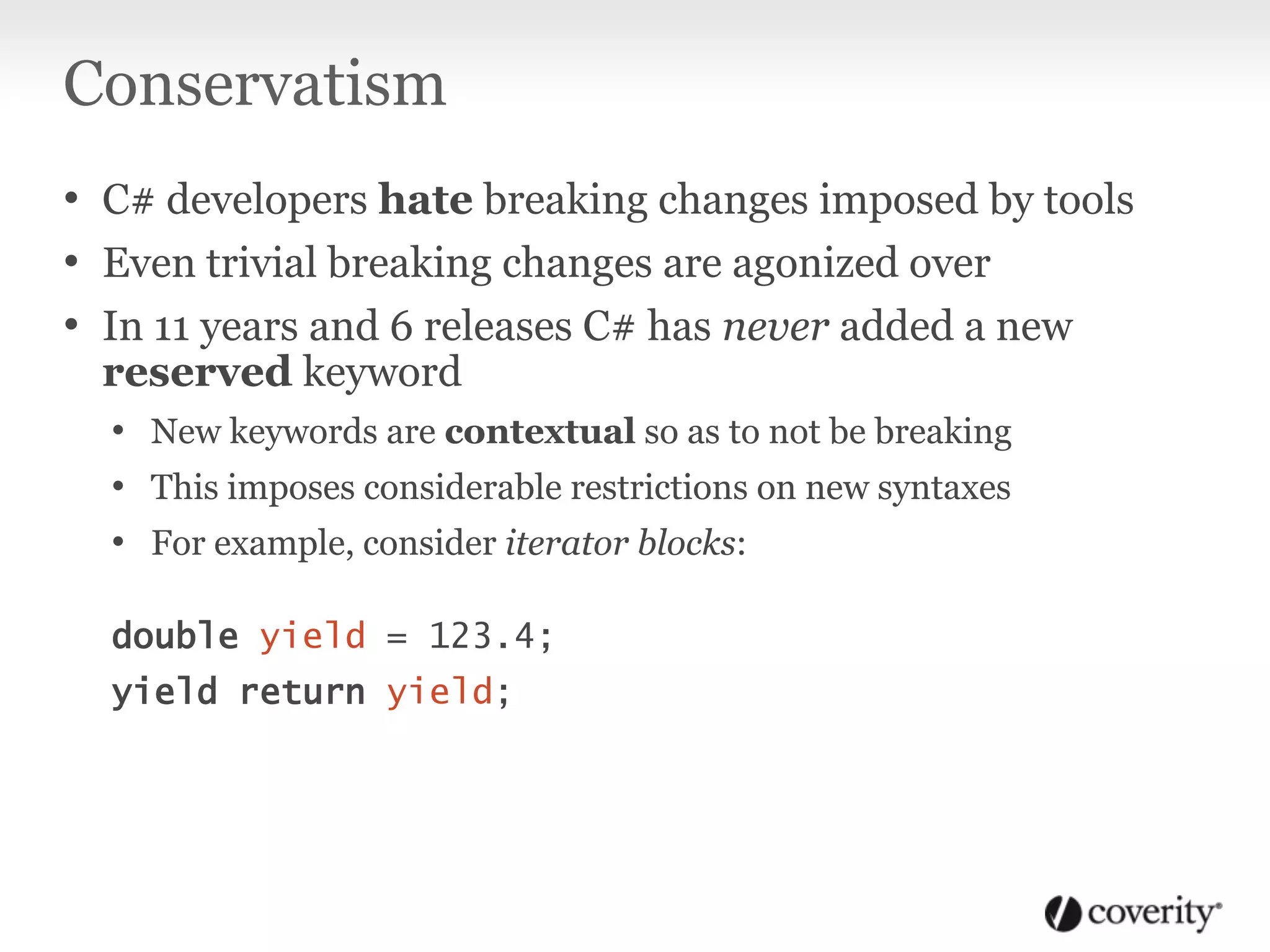

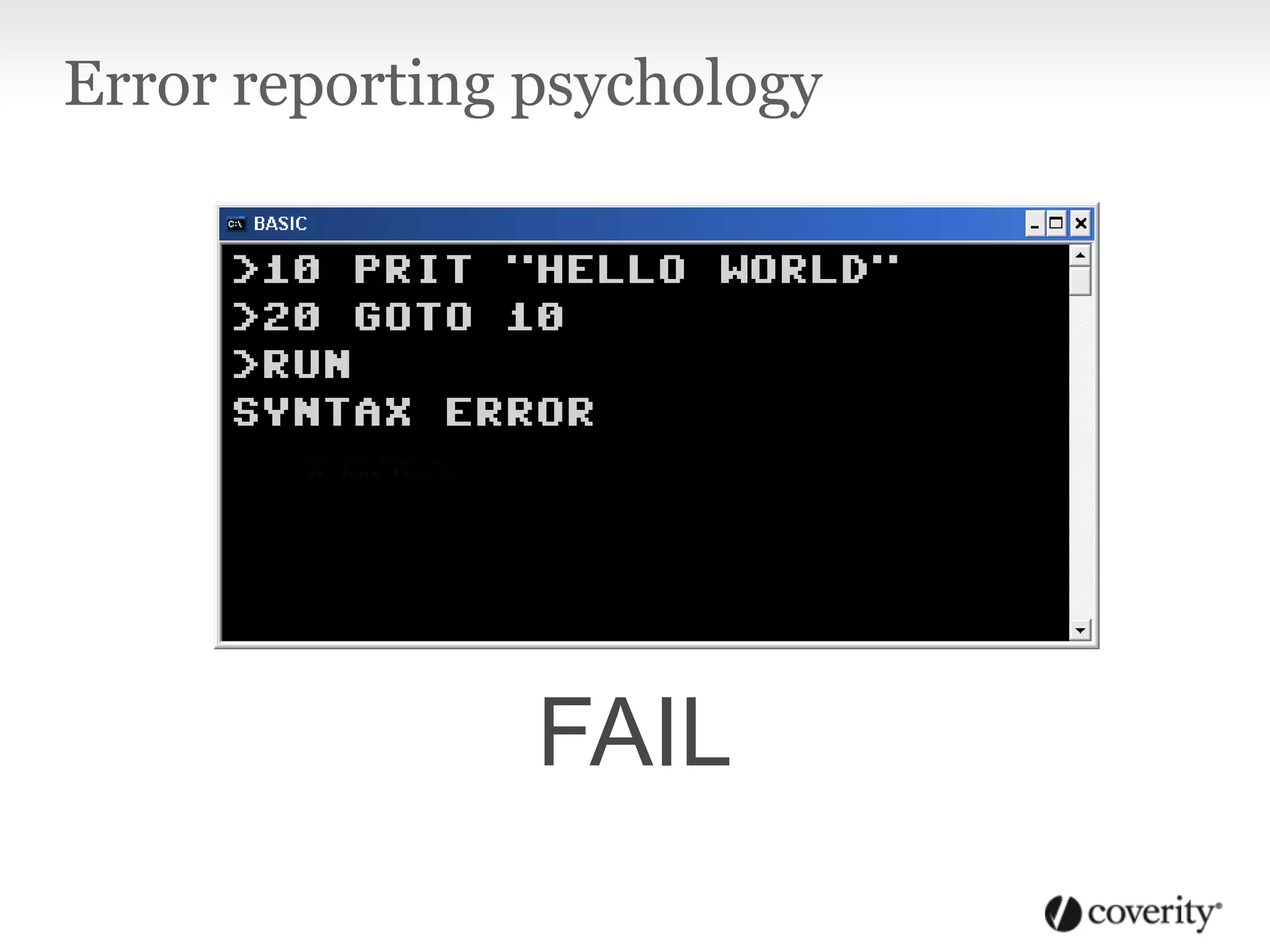

The document discusses the psychological aspects of language design and static analysis for C#, emphasizing how developer characteristics affect tool adoption. It highlights the importance of understandable error messages, developers' resistance to breaking changes, and the need for static analysis tools to align with developer psychology if they are to be used effectively. The conclusion stresses balancing theoretical techniques against practical developer needs for successful tool integration.

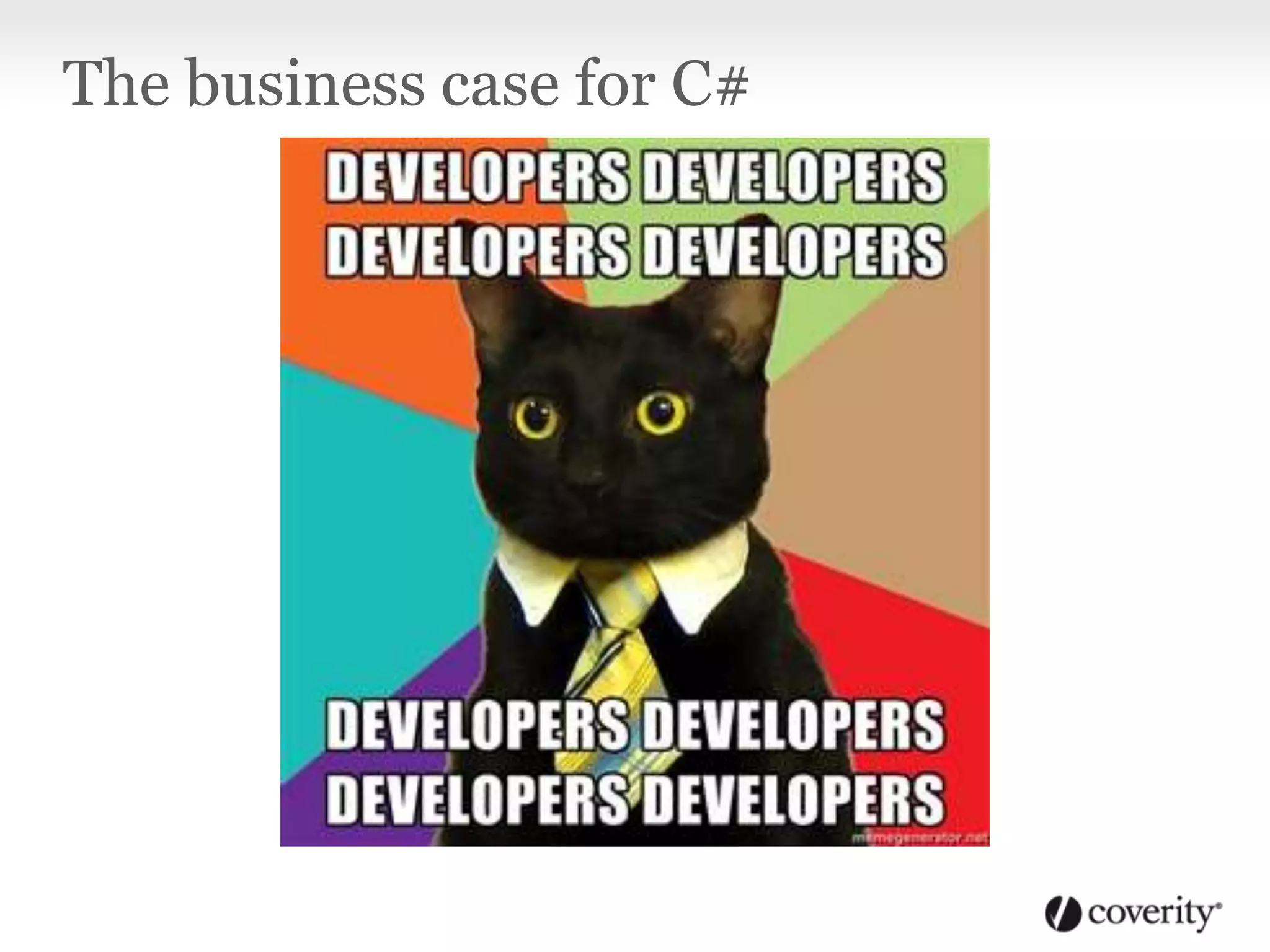

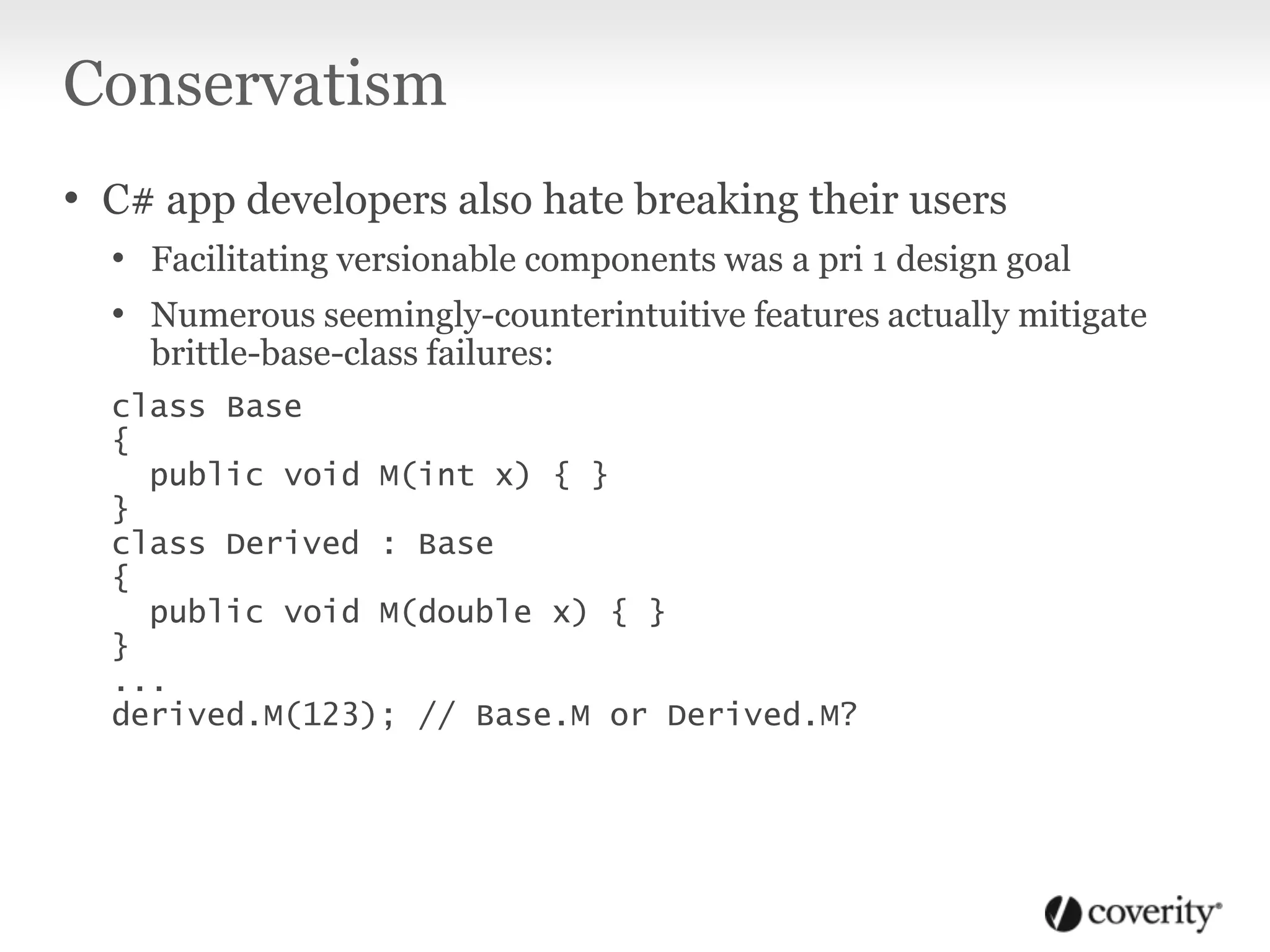

![Displaying good defect messages](https://image.slidesharecdn.com/psychofcsharpanalysis-130708120610-phpapp01/75/The-Psychology-of-C-Analysis-47-2048.jpg)

```csharp
public void GetThing(Type type, bool includeFrobs)
{
    bool isFrob = (type != null) &&
        typeof(IFrob).IsAssignableFrom(type);
    object instance = this.objects[this.name];
    if (instance is IFrob && includeFrobs)
    { [...] }
    else if (type.IsAssignableFrom(instance.GetType()))
    { [...] }
}
```

![Displaying good defect messages](https://image.slidesharecdn.com/psychofcsharpanalysis-130708120610-phpapp01/75/The-Psychology-of-C-Analysis-48-2048.jpg)

```csharp
public void GetThing(Type type, bool includeFrobs)
{
    // Assuming type is null.
    // type != null evaluated to false.
    bool isFrob = (type != null) &&
        typeof(IFrob).IsAssignableFrom(type);
    object instance = this.objects[this.name];
    // instance is IFrob evaluated to true.
    // includeFrobs evaluated to false.
    if (instance is IFrob && includeFrobs)
    { [...] }
    // Dereference after null check:
    // dereferencing type while it is null.
    else if (type.IsAssignableFrom(instance.GetType()))
    { [...] }
}
```
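The annotated slide does not report the defect as a bare line number. It reports a path: the assumption (`type` is null) and each branch outcome the analyzer relied on, each shown next to the source line it concerns. The following is a minimal C# sketch of that presentation step only. It assumes the analysis has already produced the list of path events. `PathEvent` and `DefectRenderer` are hypothetical names invented for illustration and are not the API of any real analyzer. The `>>` marker is an arbitrary choice.

```csharp
using System.Collections.Generic;
using System.Text;

// Hypothetical type for illustration: one step on the path to a defect,
// attached to a 0-based index into the source lines being displayed.
public sealed record PathEvent(int Line, string Message);

// Hypothetical helper for illustration; not the API of any real analyzer.
public static class DefectRenderer
{
    // Returns the source text with each event's message printed directly
    // above the line it annotates. Events on the same line keep their
    // original order; events whose Line is outside sourceLines are dropped.
    public static string Render(IReadOnlyList<string> sourceLines,
                                IEnumerable<PathEvent> events)
    {
        // Group event messages by the source line they annotate.
        var byLine = new Dictionary<int, List<string>>();
        foreach (var e in events)
        {
            if (!byLine.TryGetValue(e.Line, out var messages))
            {
                messages = new List<string>();
                byLine[e.Line] = messages;
            }
            messages.Add(e.Message);
        }

        // Emit each source line, preceded by any events attached to it.
        var sb = new StringBuilder();
        for (int i = 0; i < sourceLines.Count; i++)
        {
            if (byLine.TryGetValue(i, out var notes))
            {
                foreach (var note in notes)
                    sb.Append("    >> ").Append(note).Append('\n');
            }
            sb.Append(sourceLines[i]).Append('\n');
        }
        return sb.ToString();
    }
}
```

Suppose this is given the lines of `GetThing`, with events attached to the `bool isFrob` line, the `if` line and the `else if` line. It would then produce a listing shaped like the slide, with the last event naming the defect. Keeping path events as data separate from their rendering means the same path could also be shown in an IDE or a console report.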