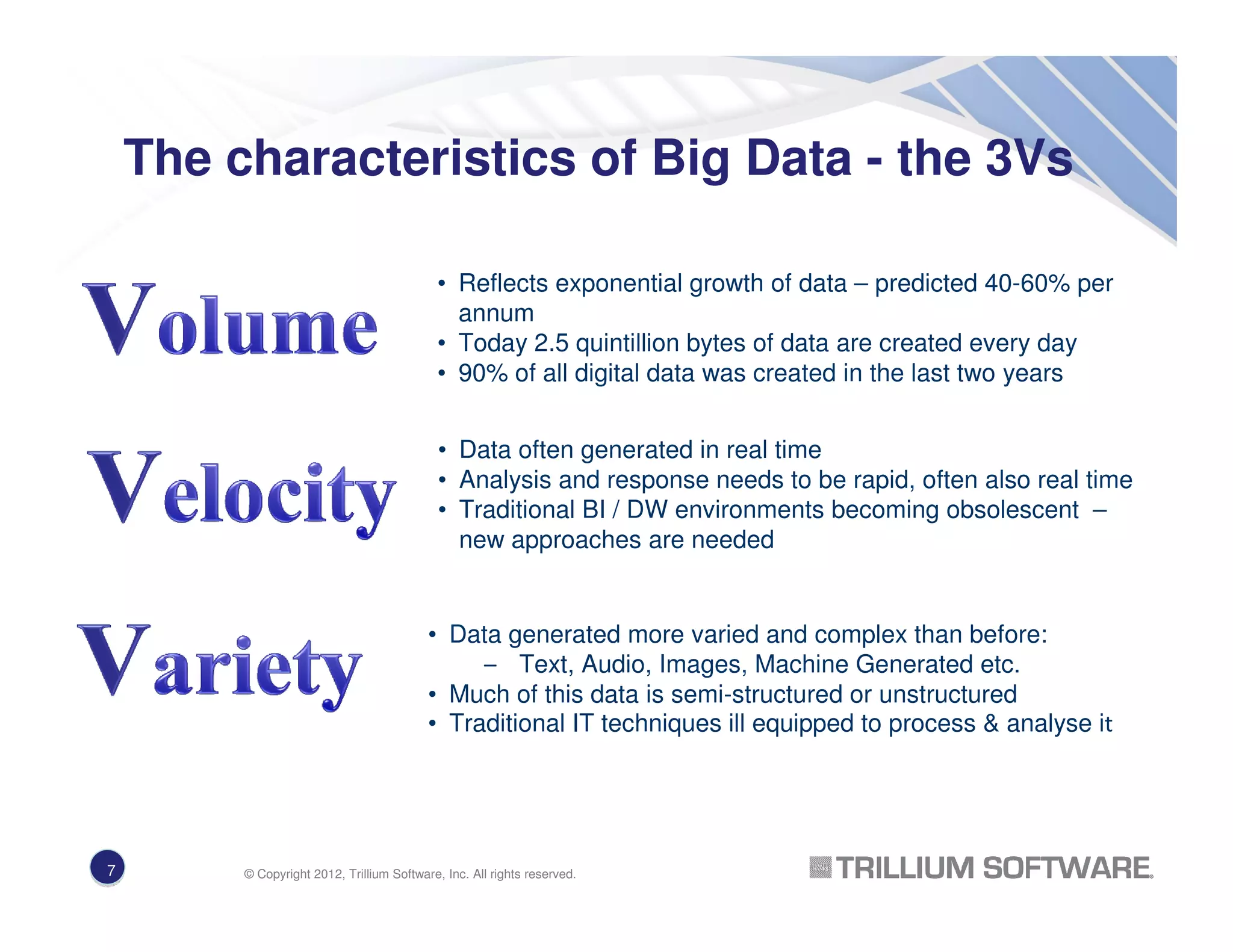

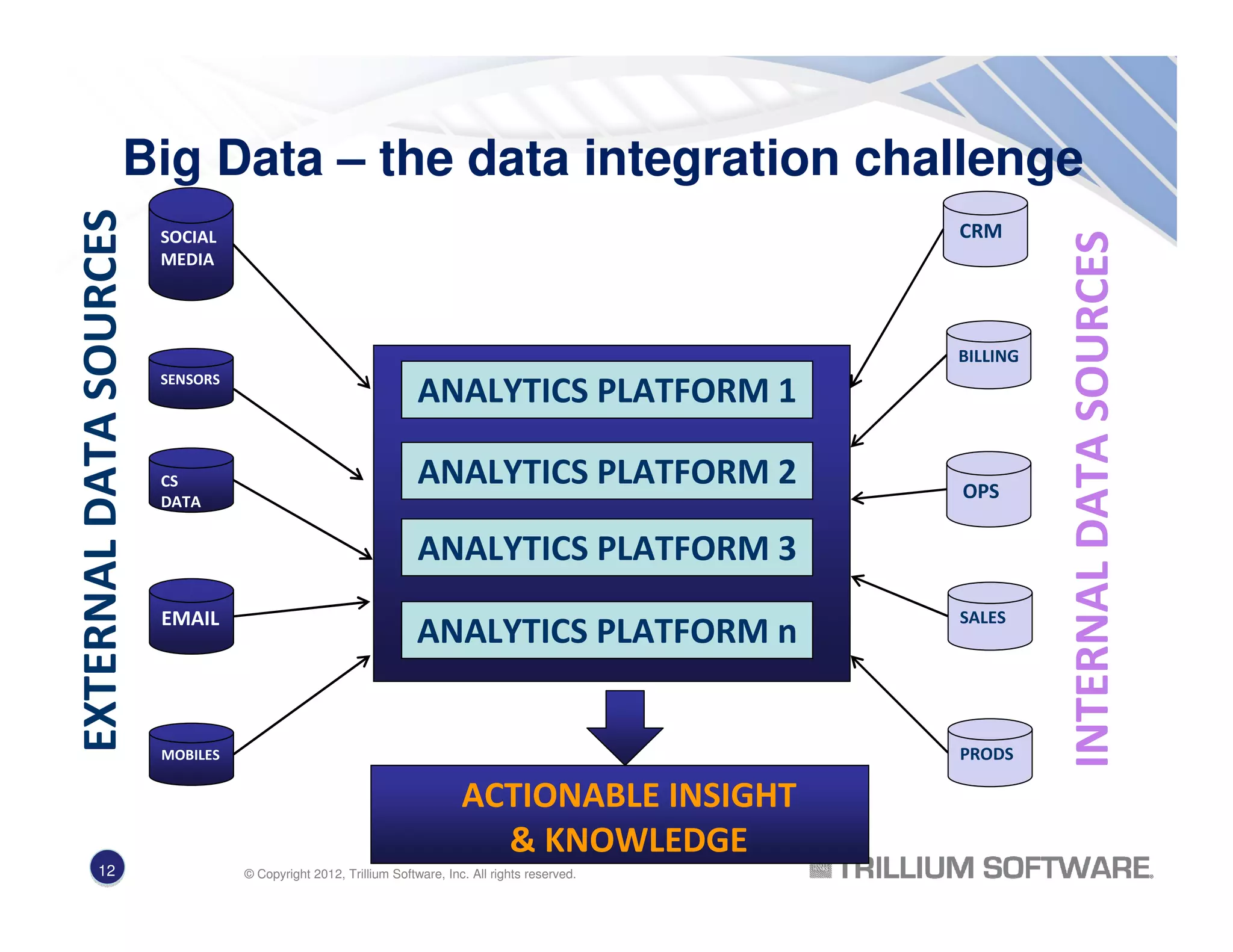

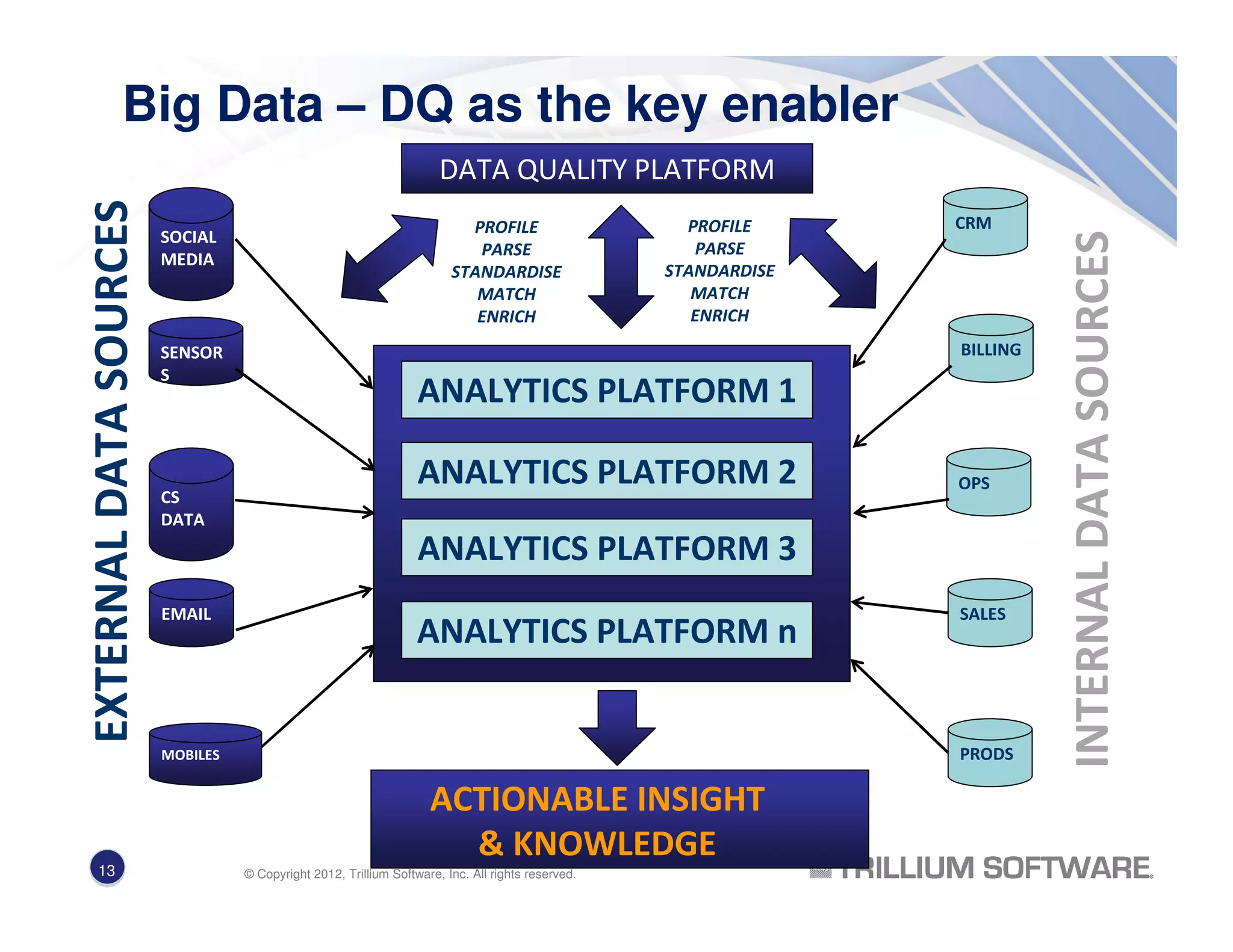

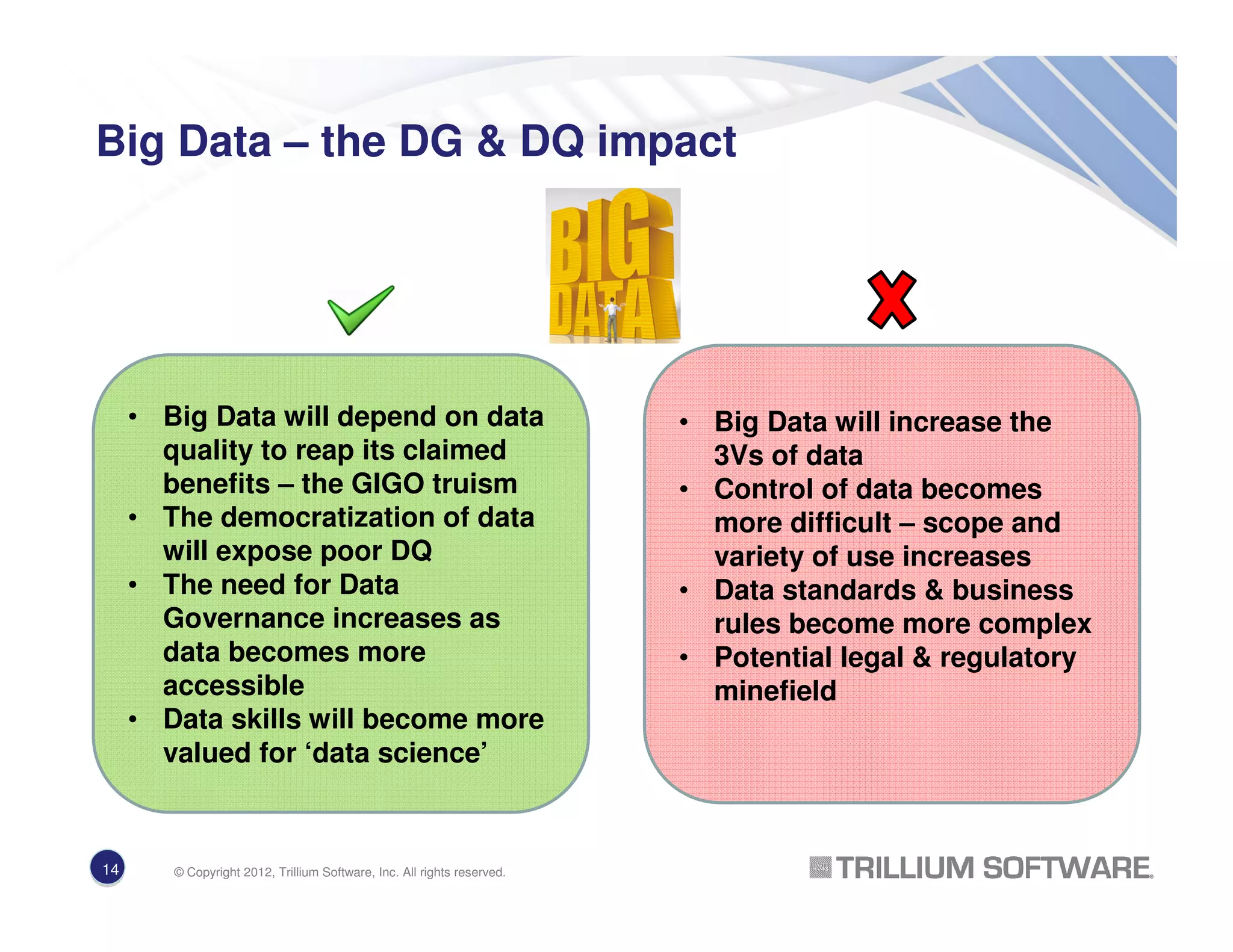

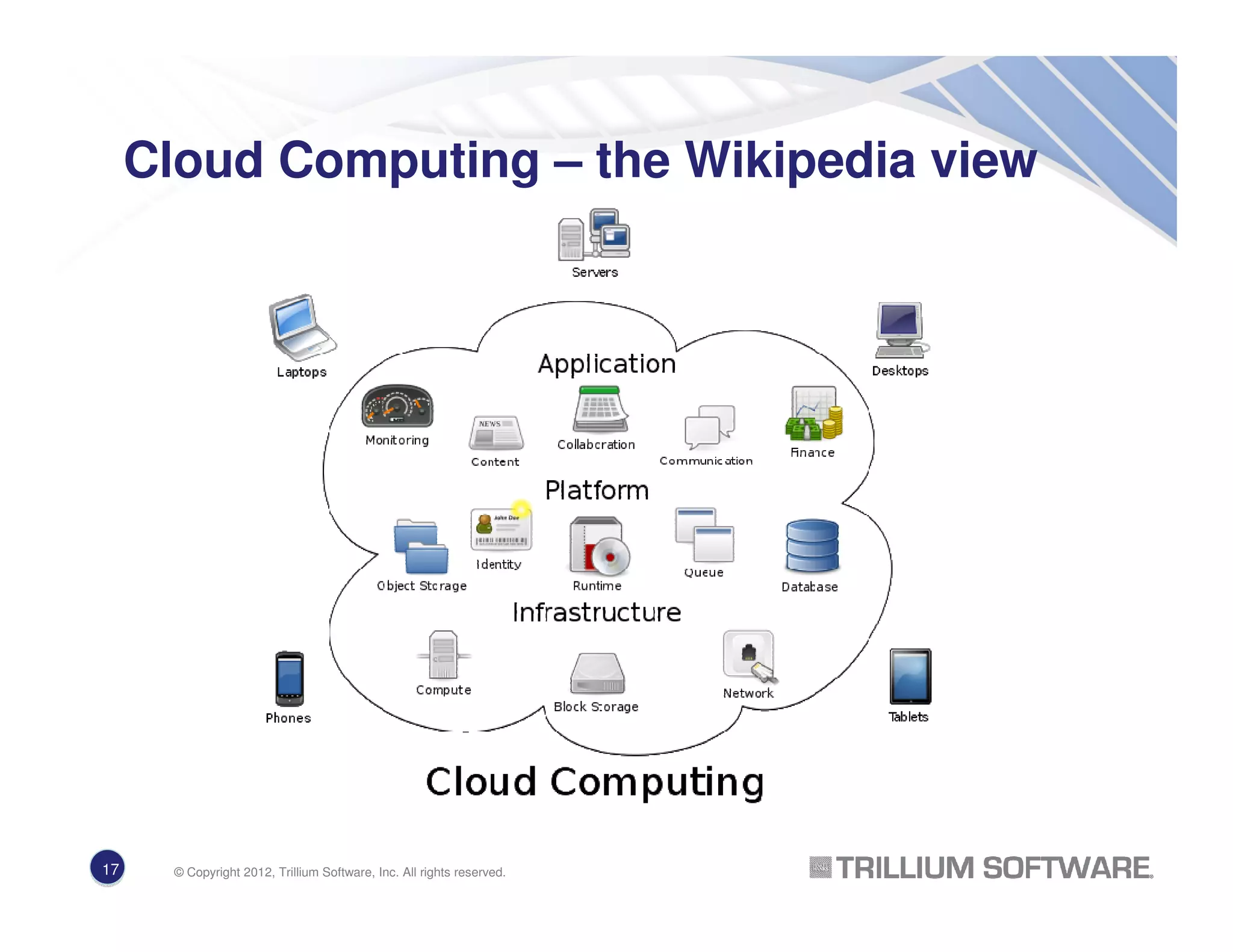

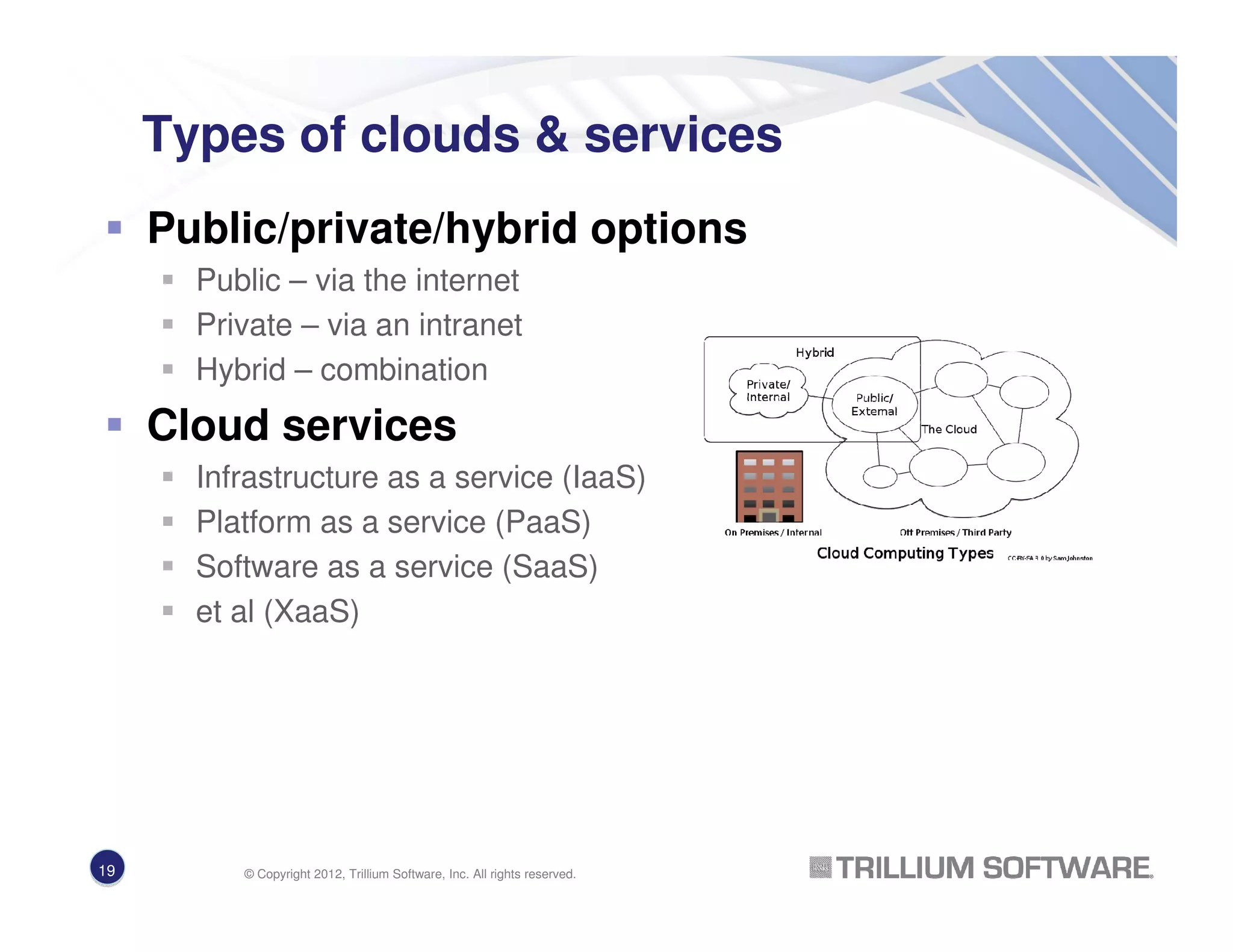

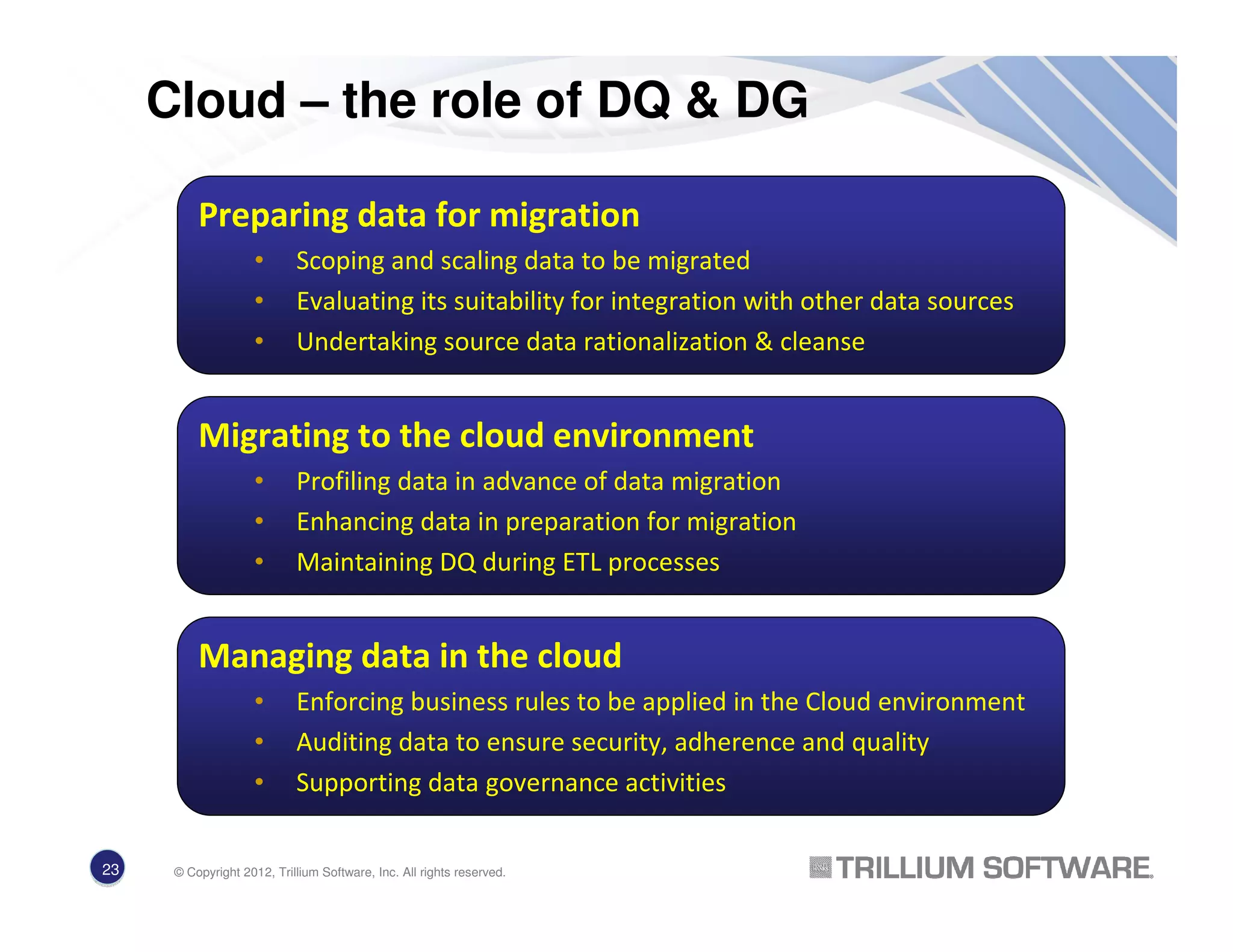

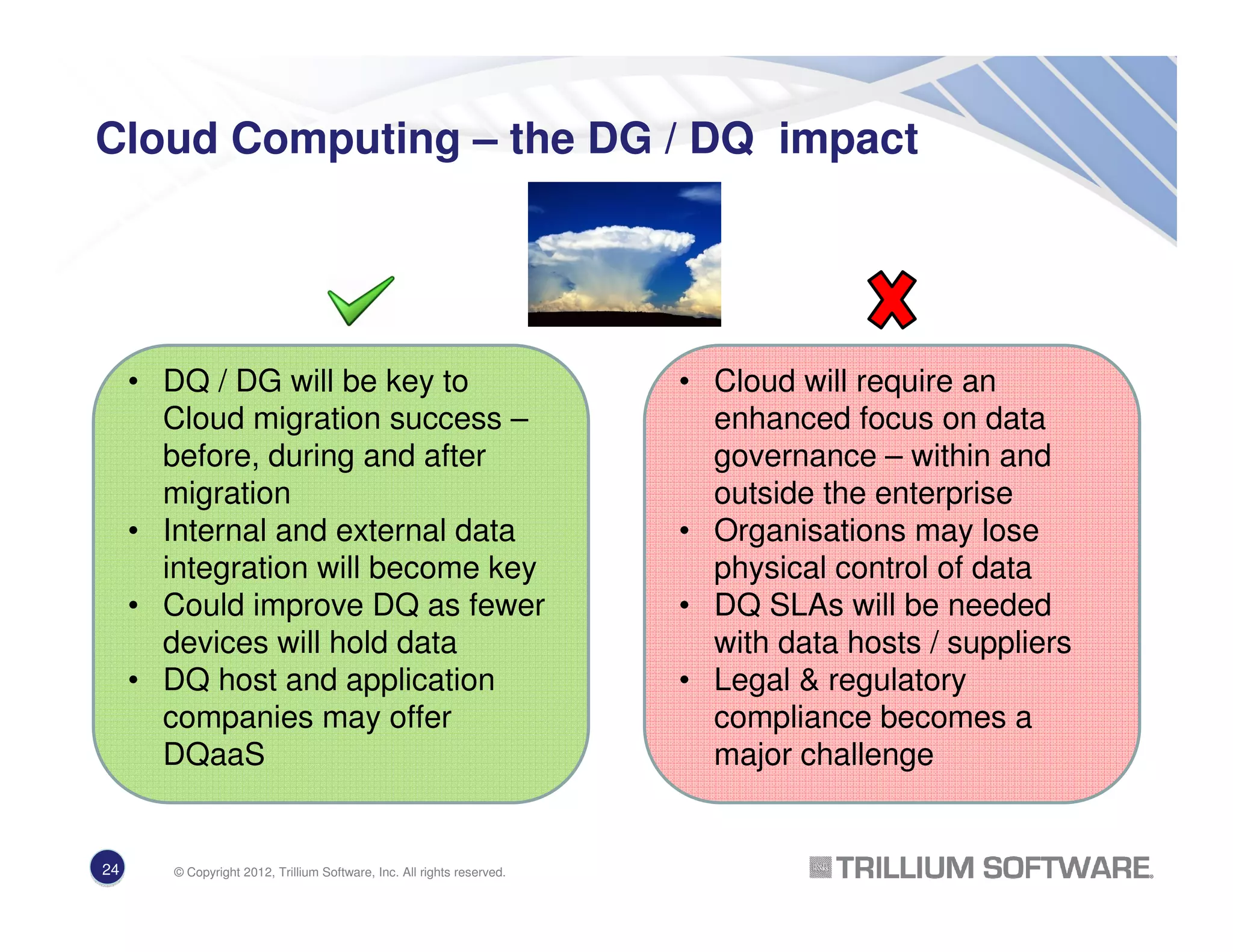

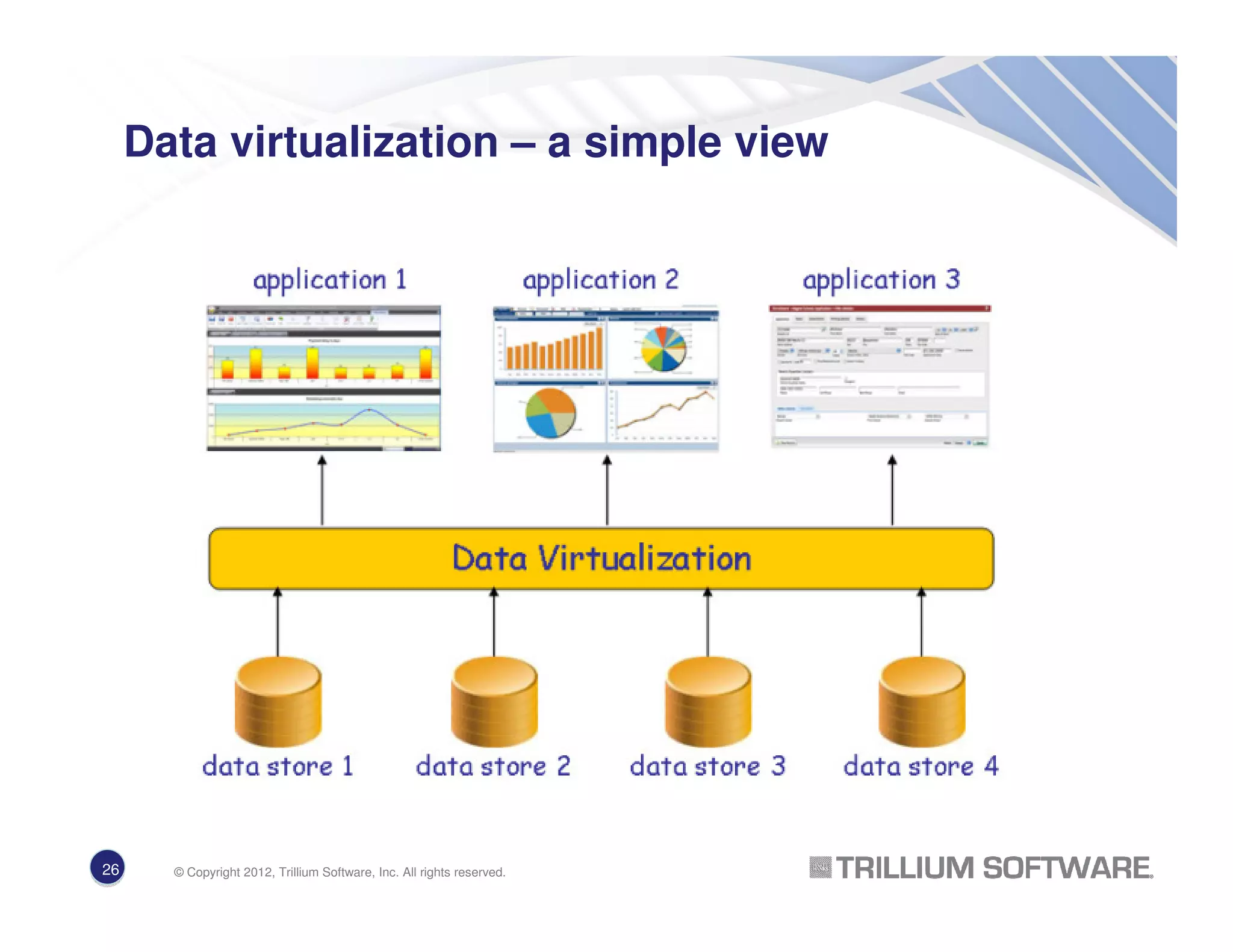

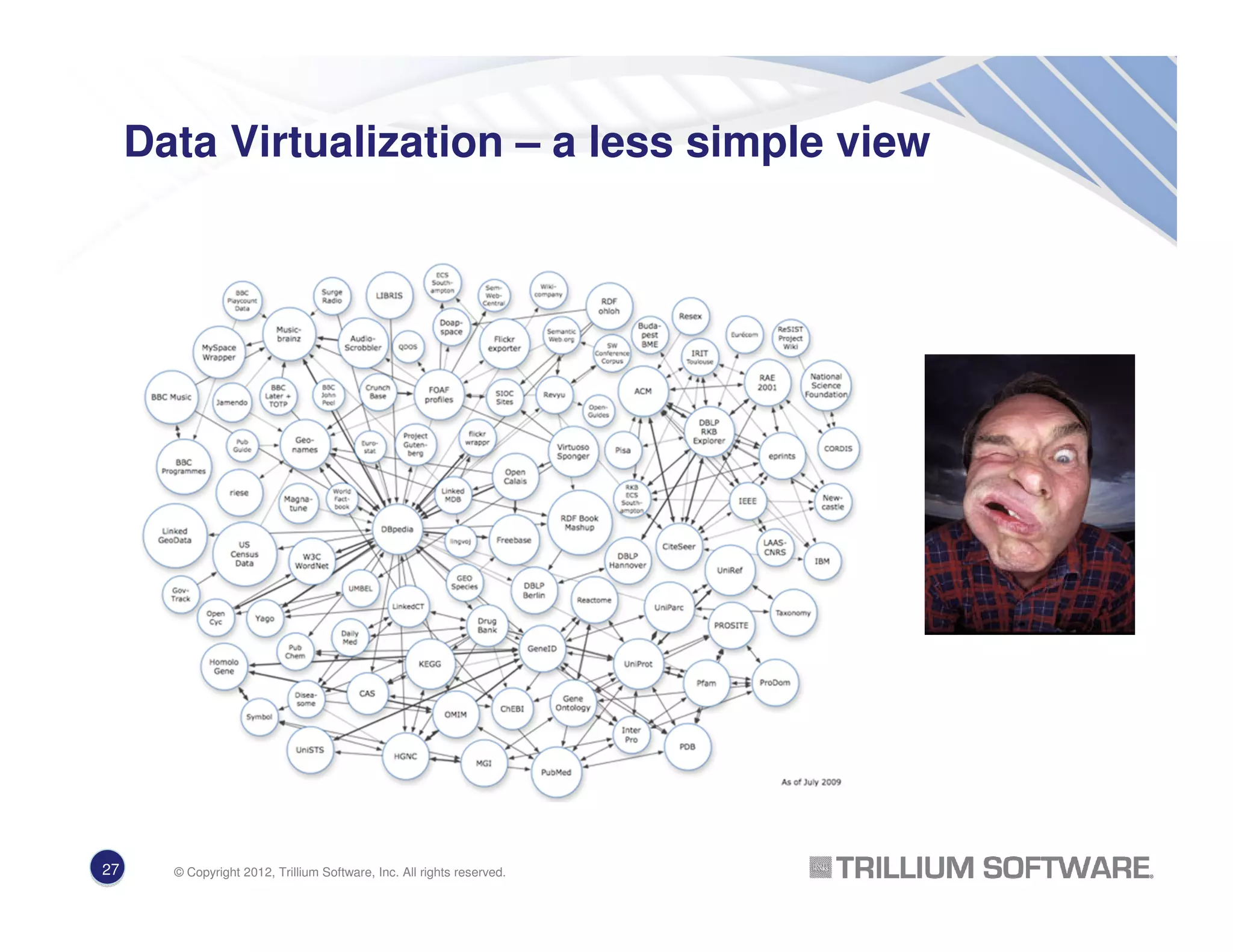

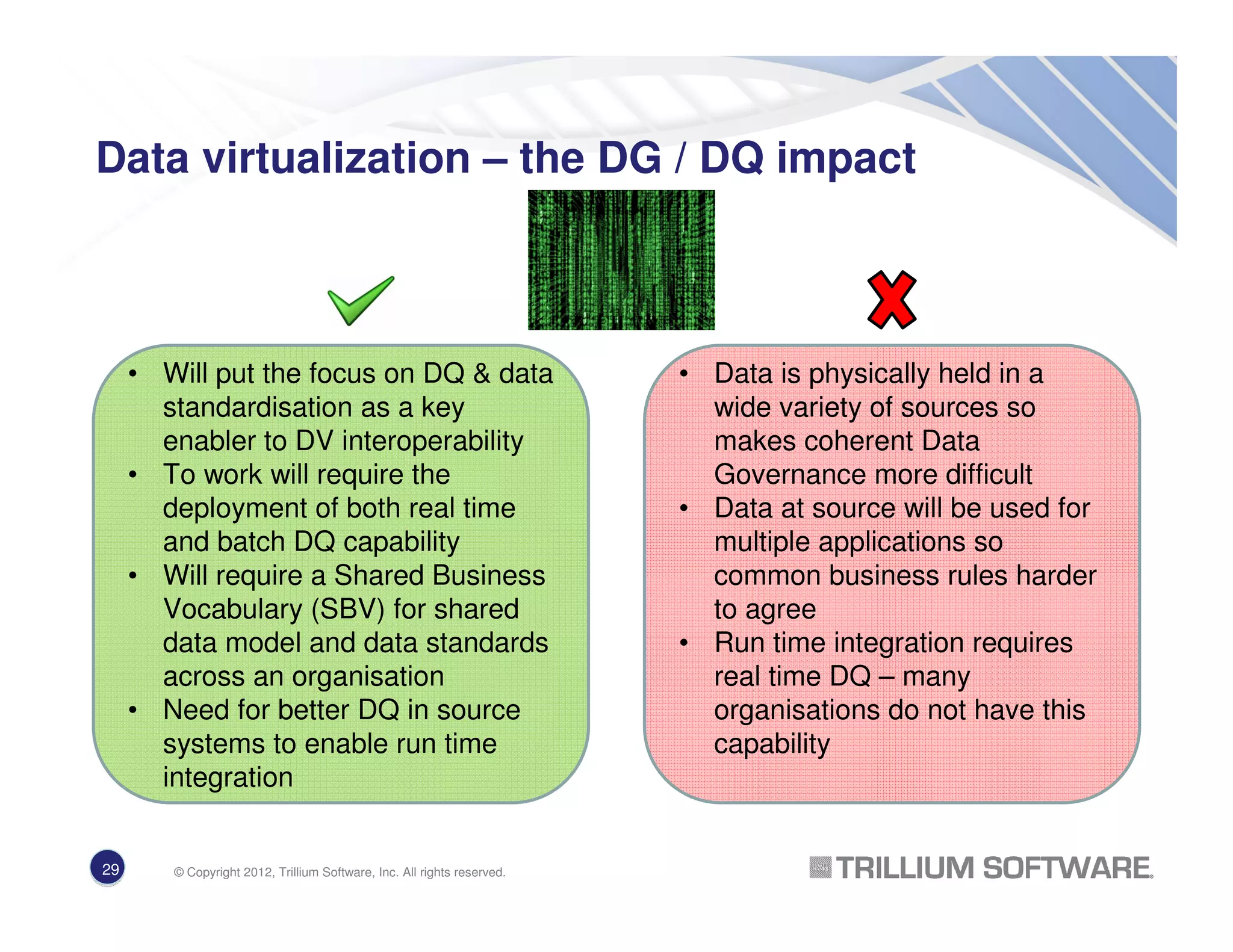

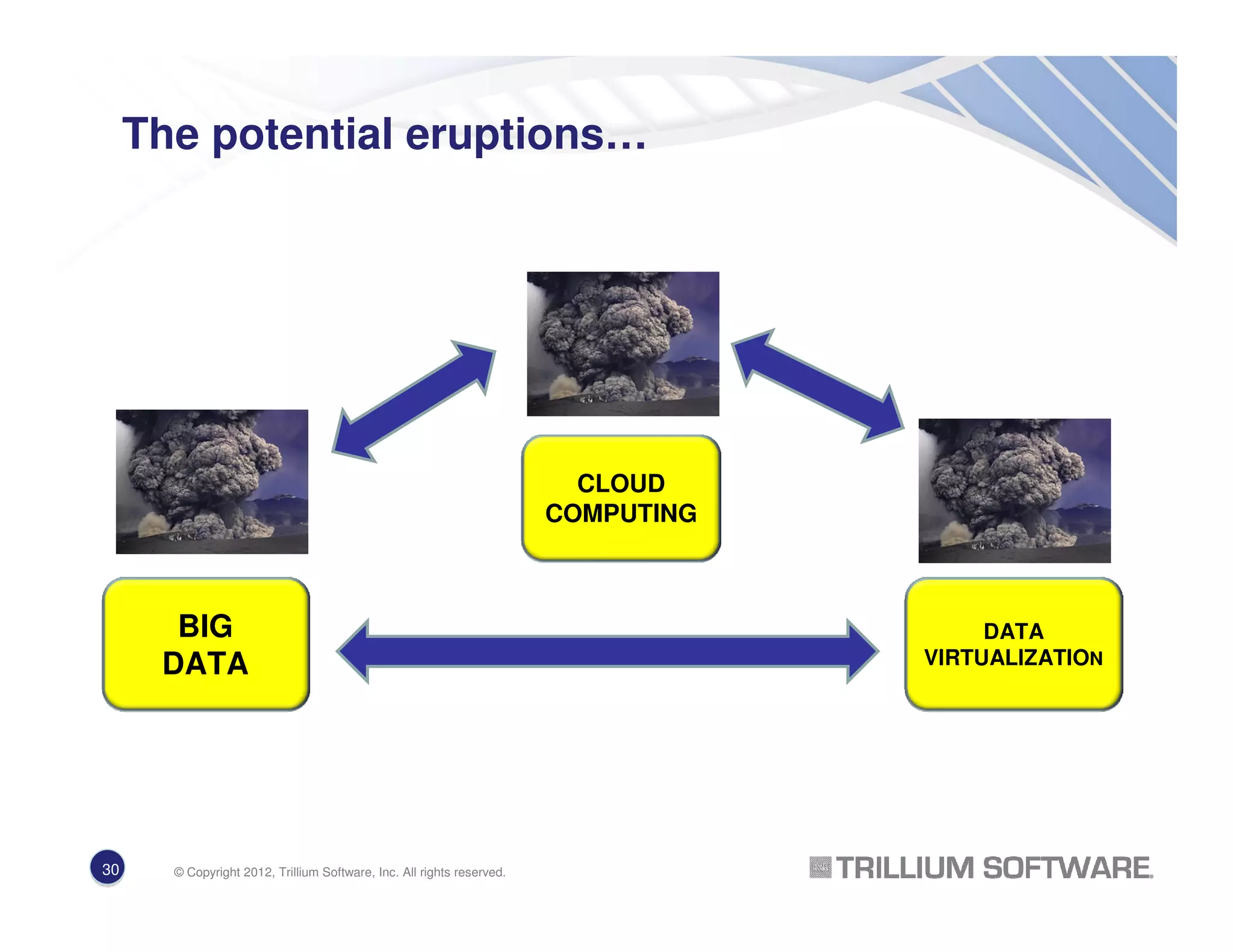

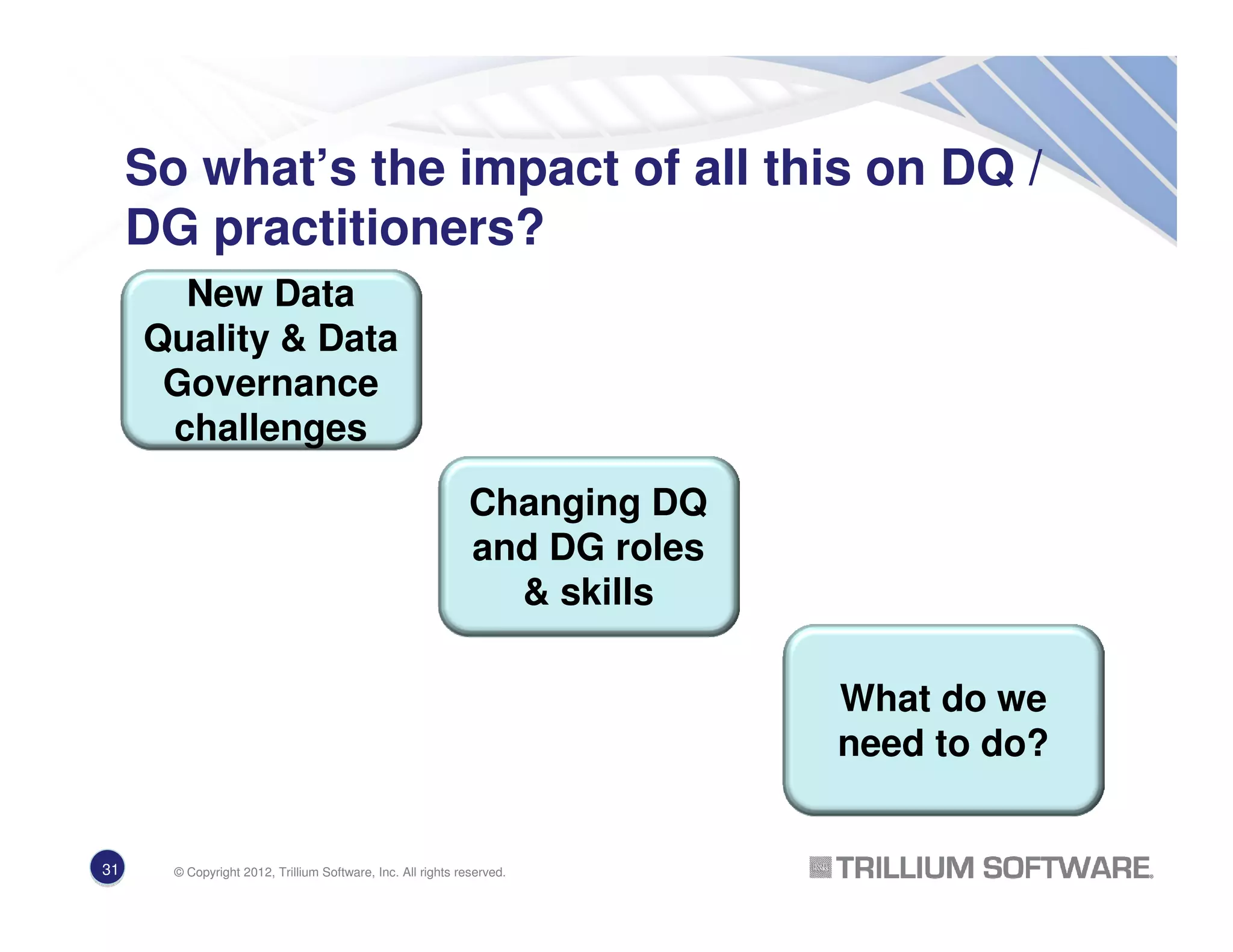

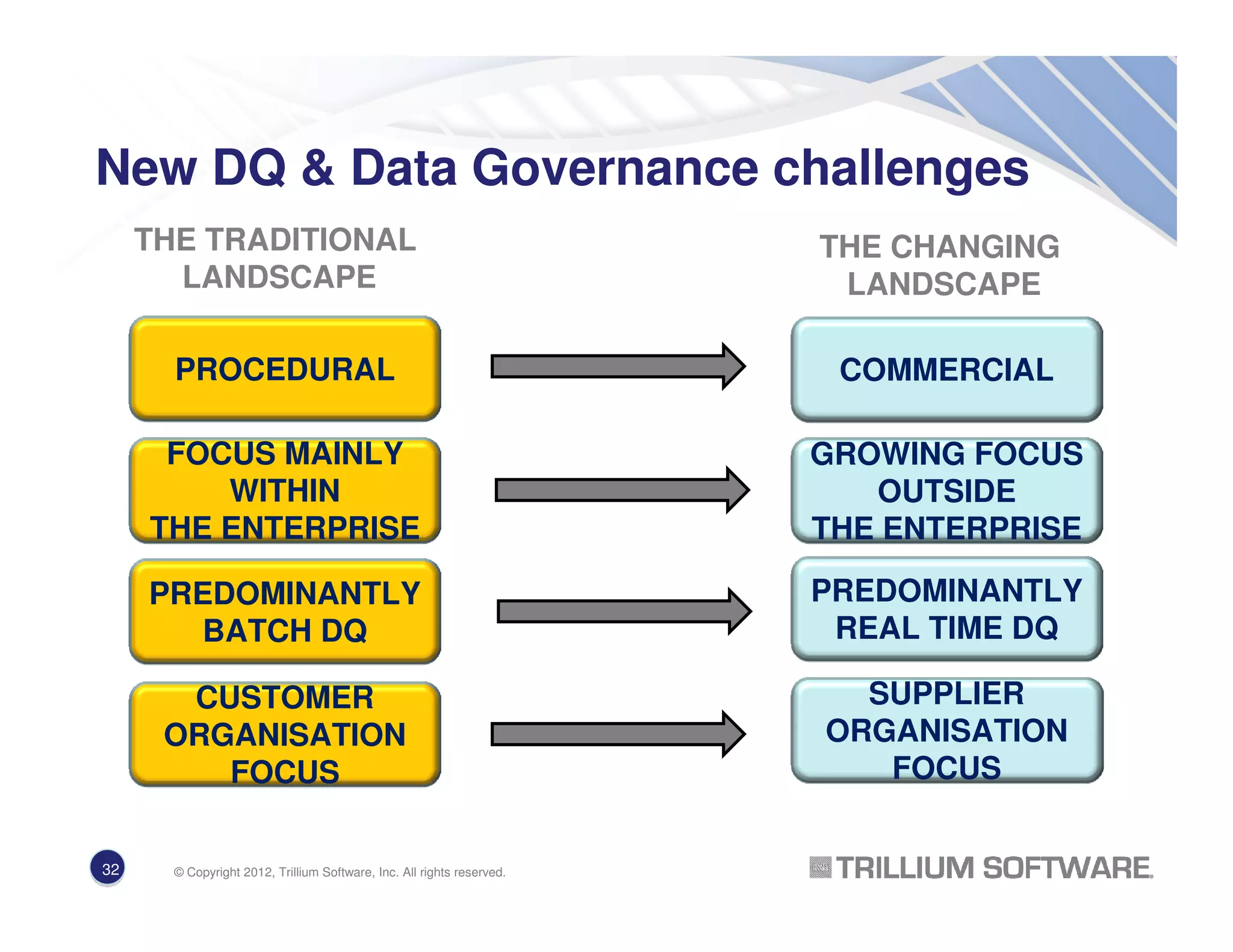

The document discusses how new technologies such as big data, cloud computing, and data virtualization are changing the data quality and data governance landscape. It describes each trend in turn: big data generates and draws on large volumes of data from diverse sources, cloud computing delivers data and analytic services remotely over the internet, and data virtualization creates integrated virtual views of distributed data. It also notes that these approaches increase the need for strong data governance and high-quality data to ensure proper control, integration, and use of data across organizations.