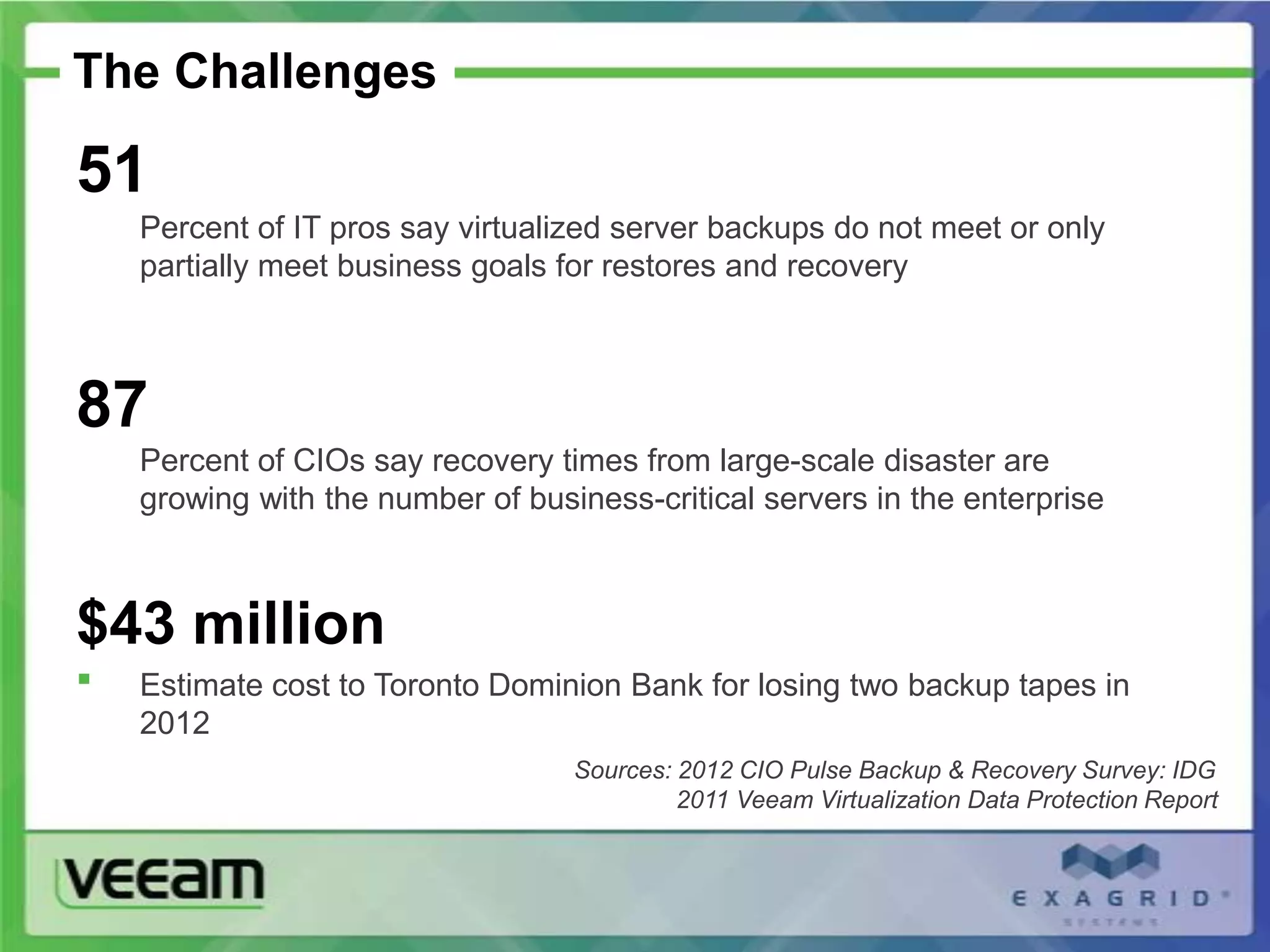

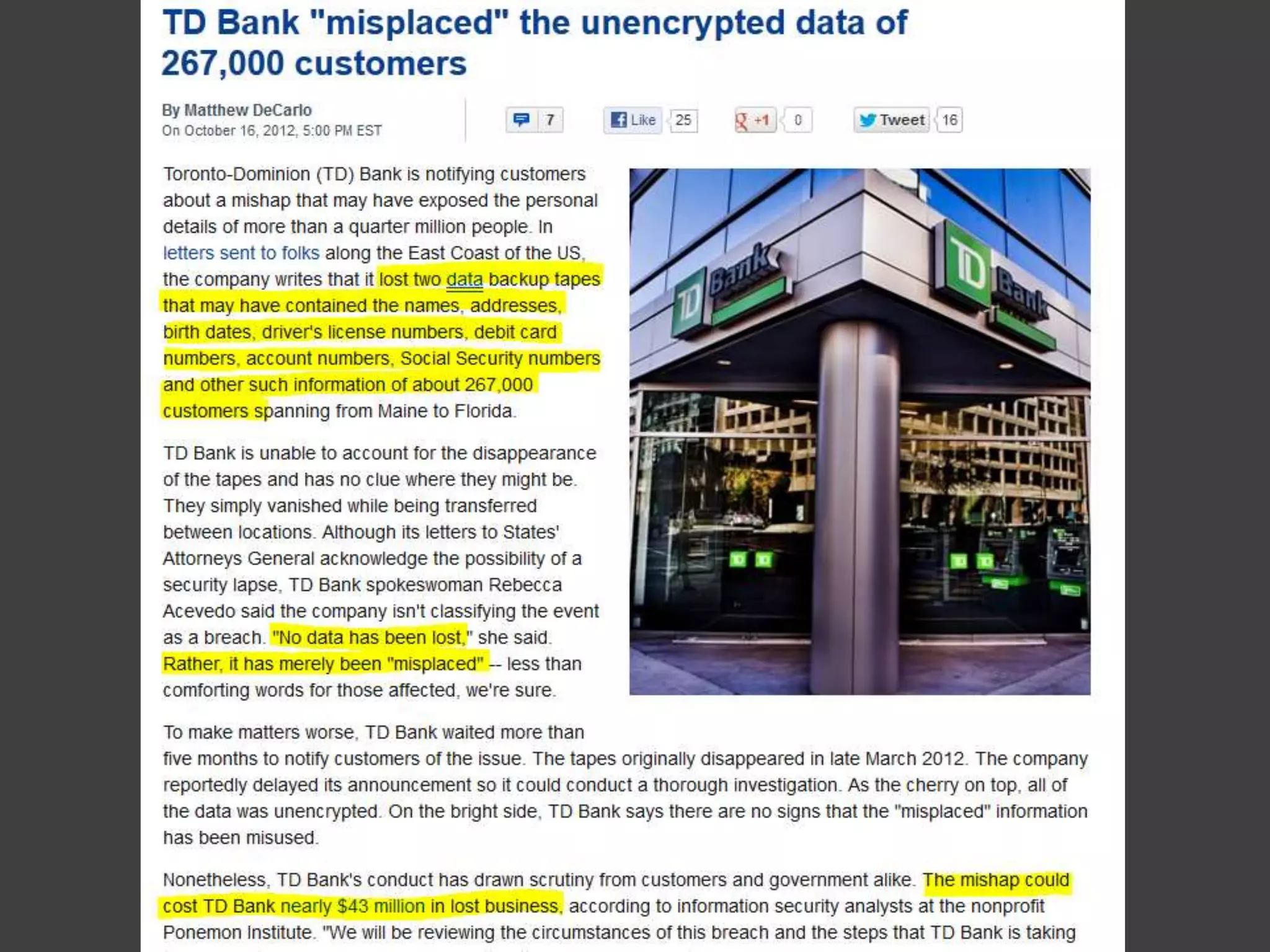

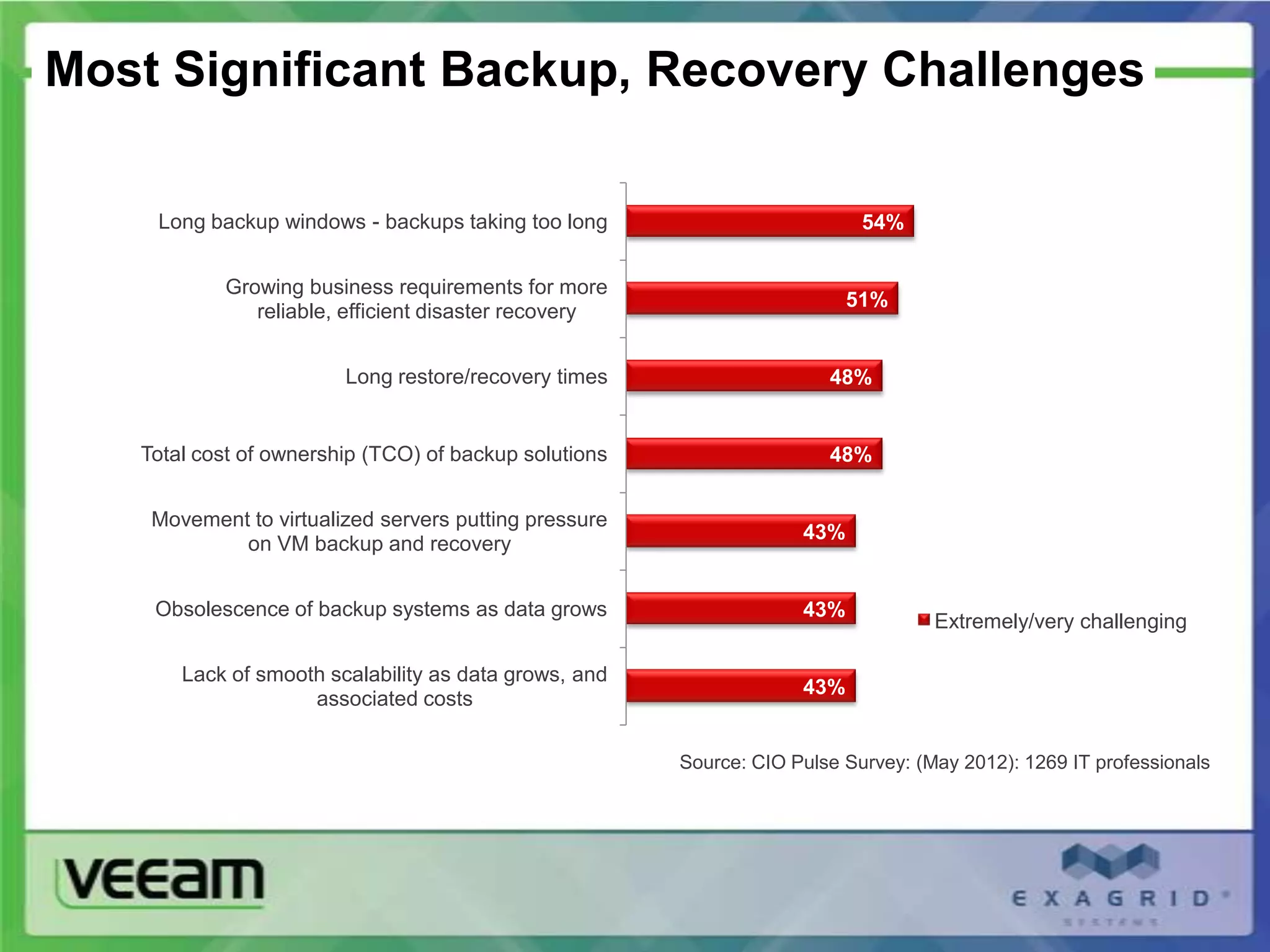

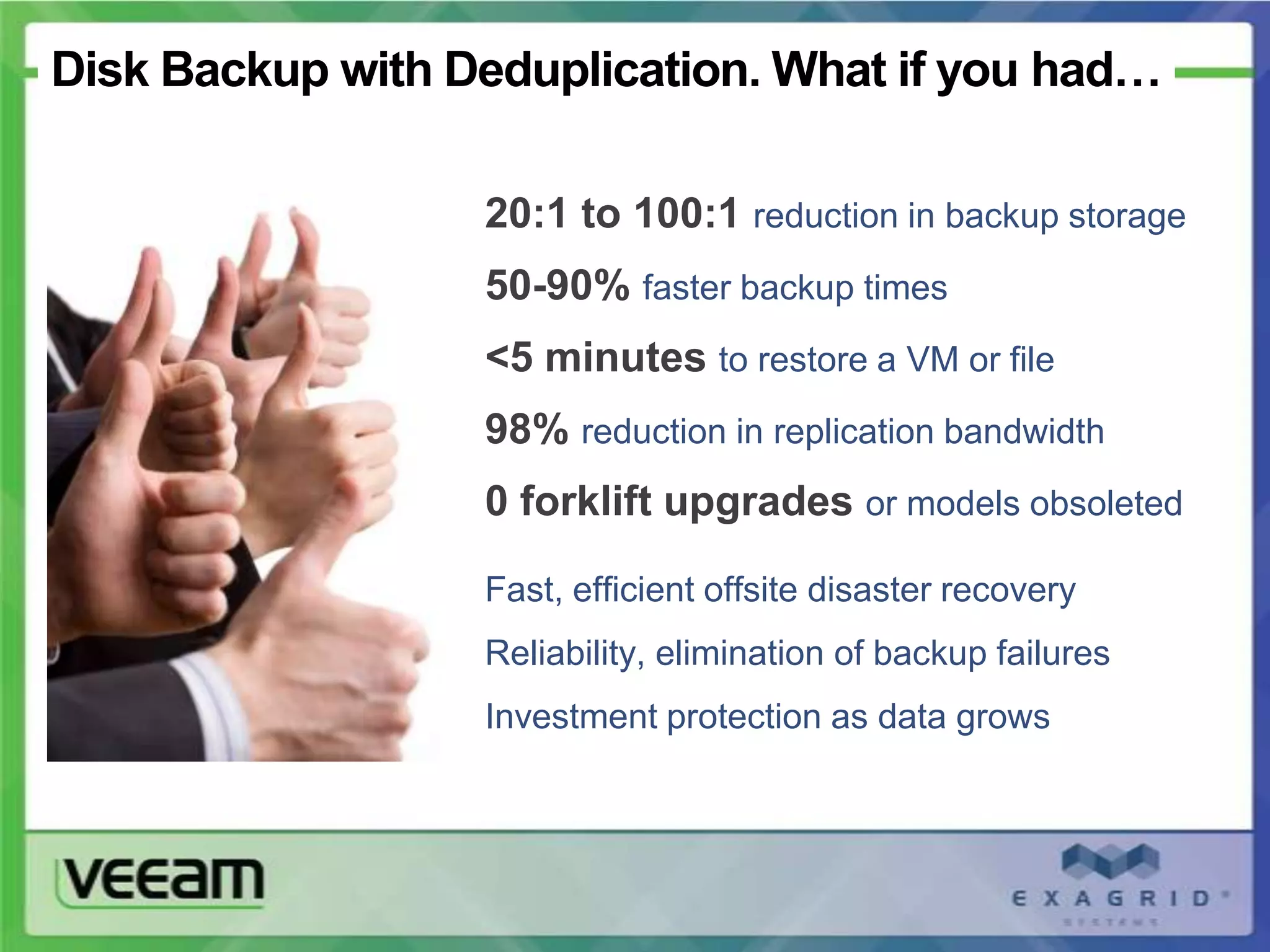

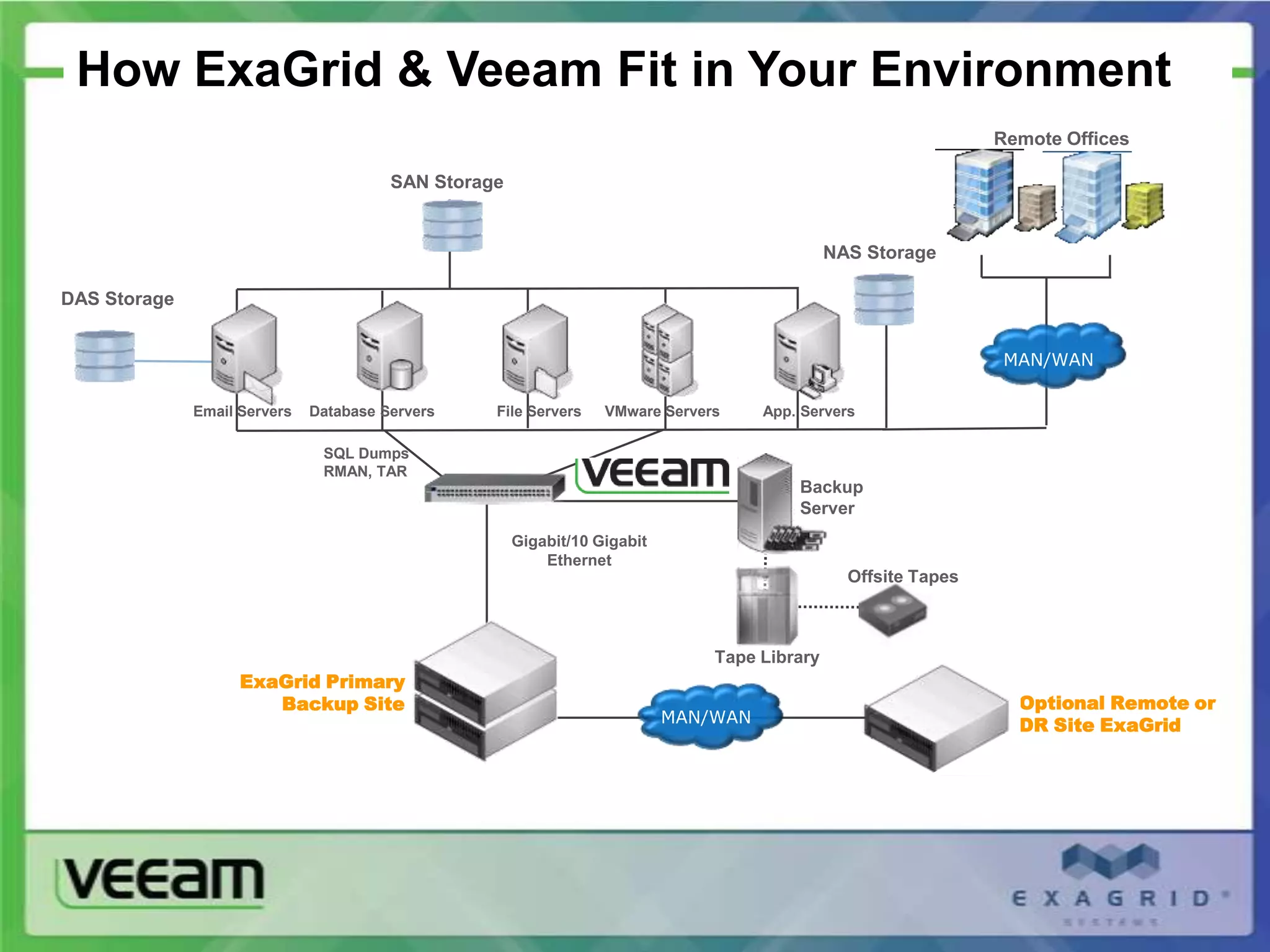

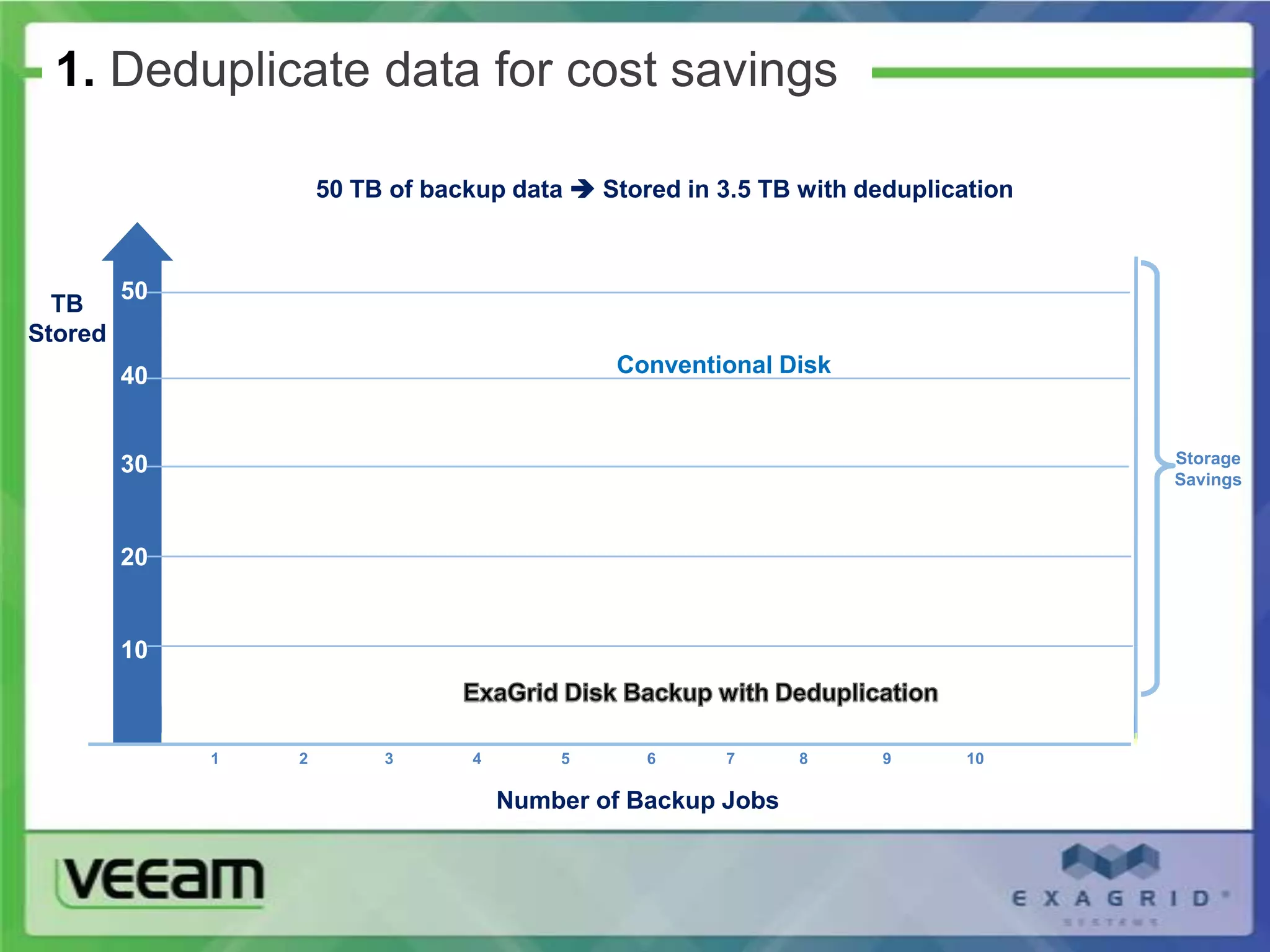

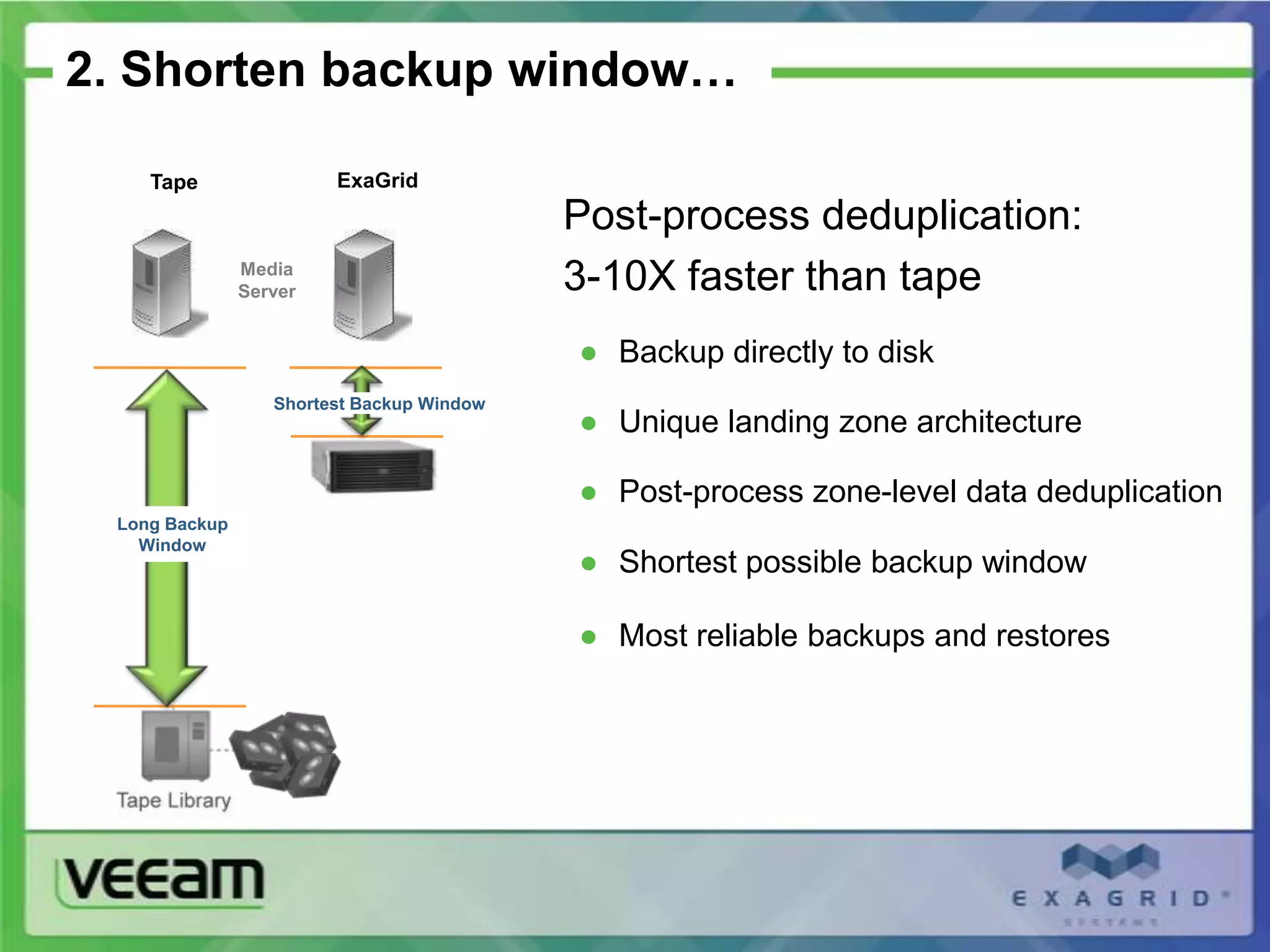

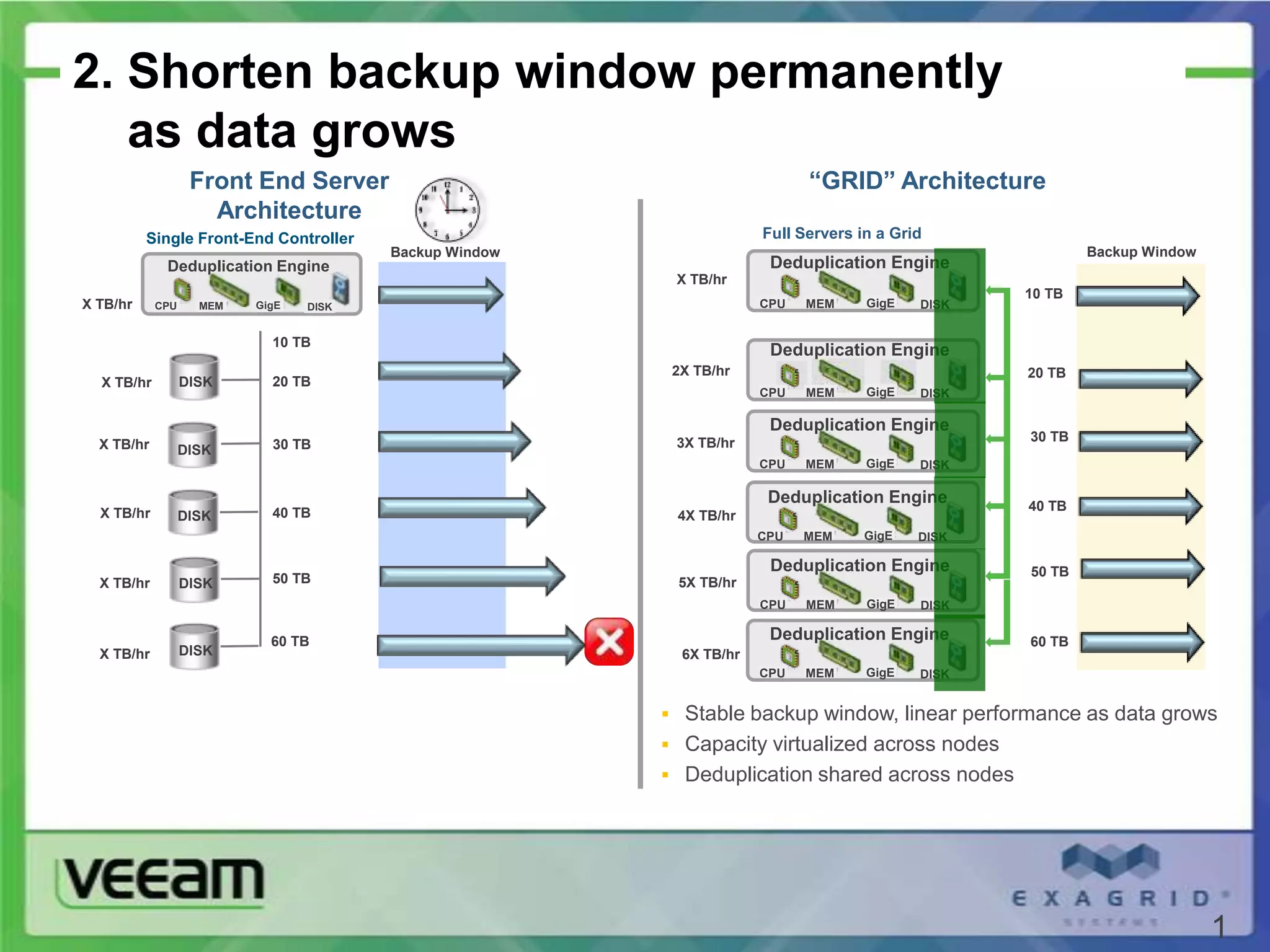

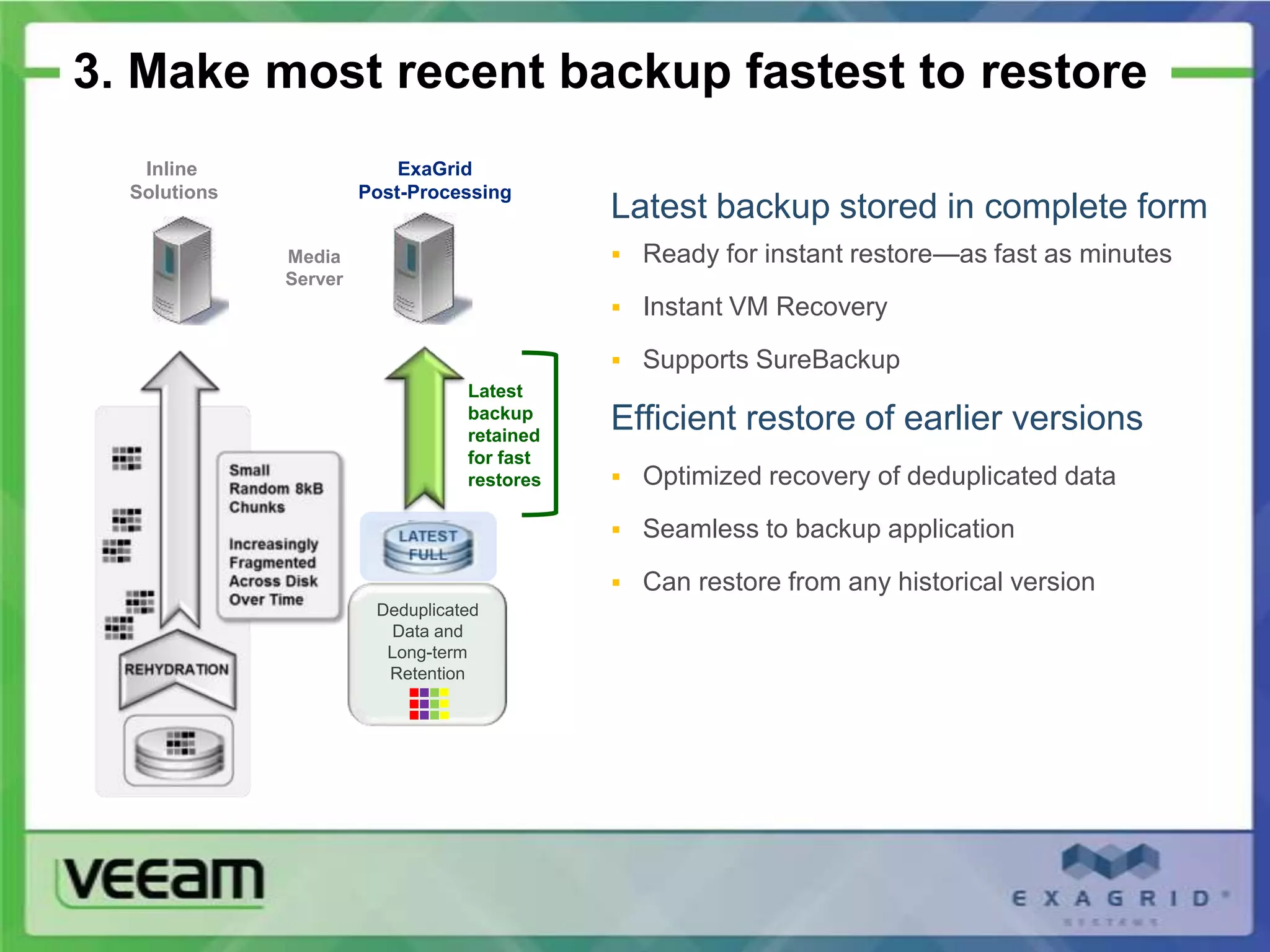

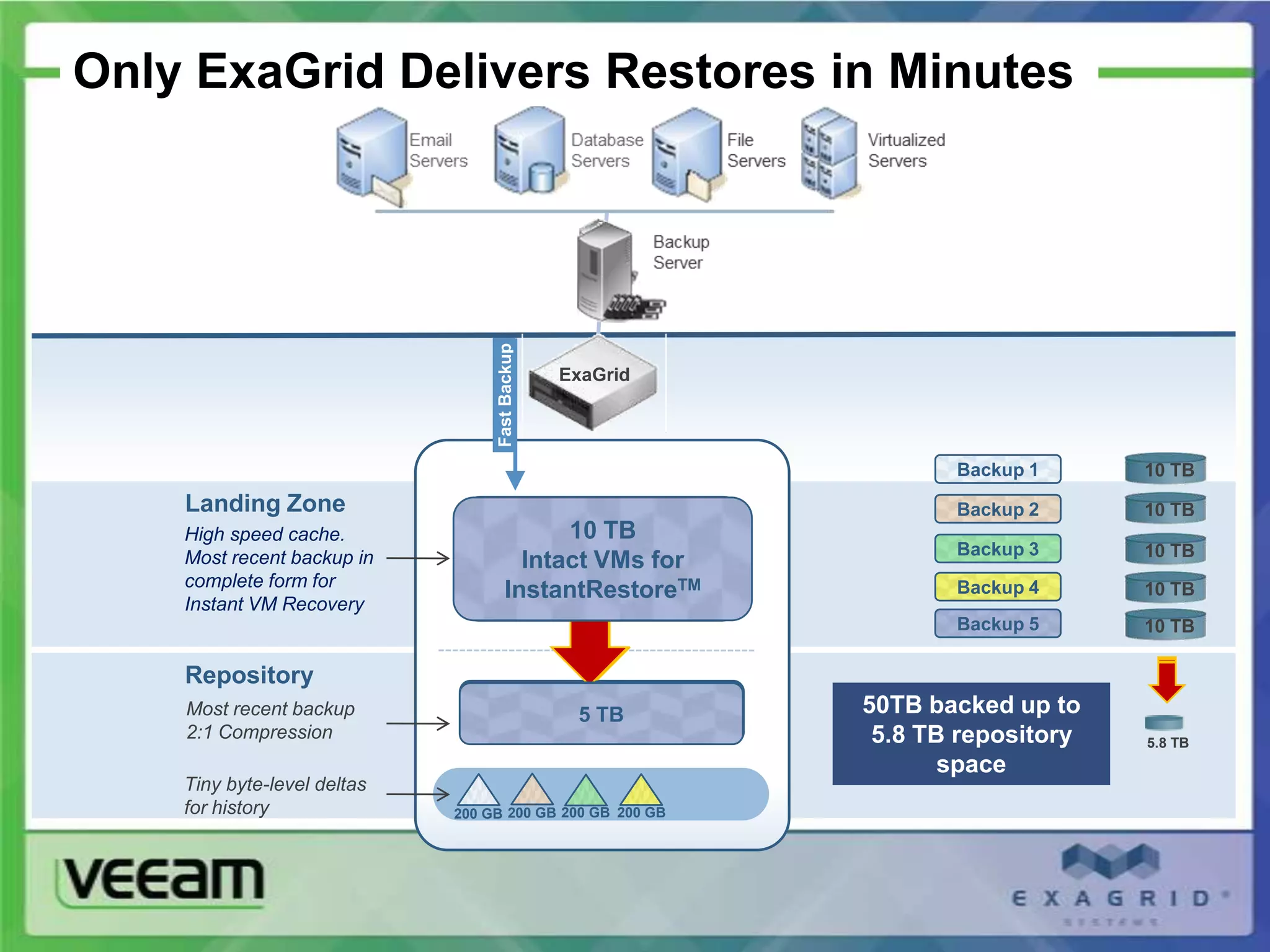

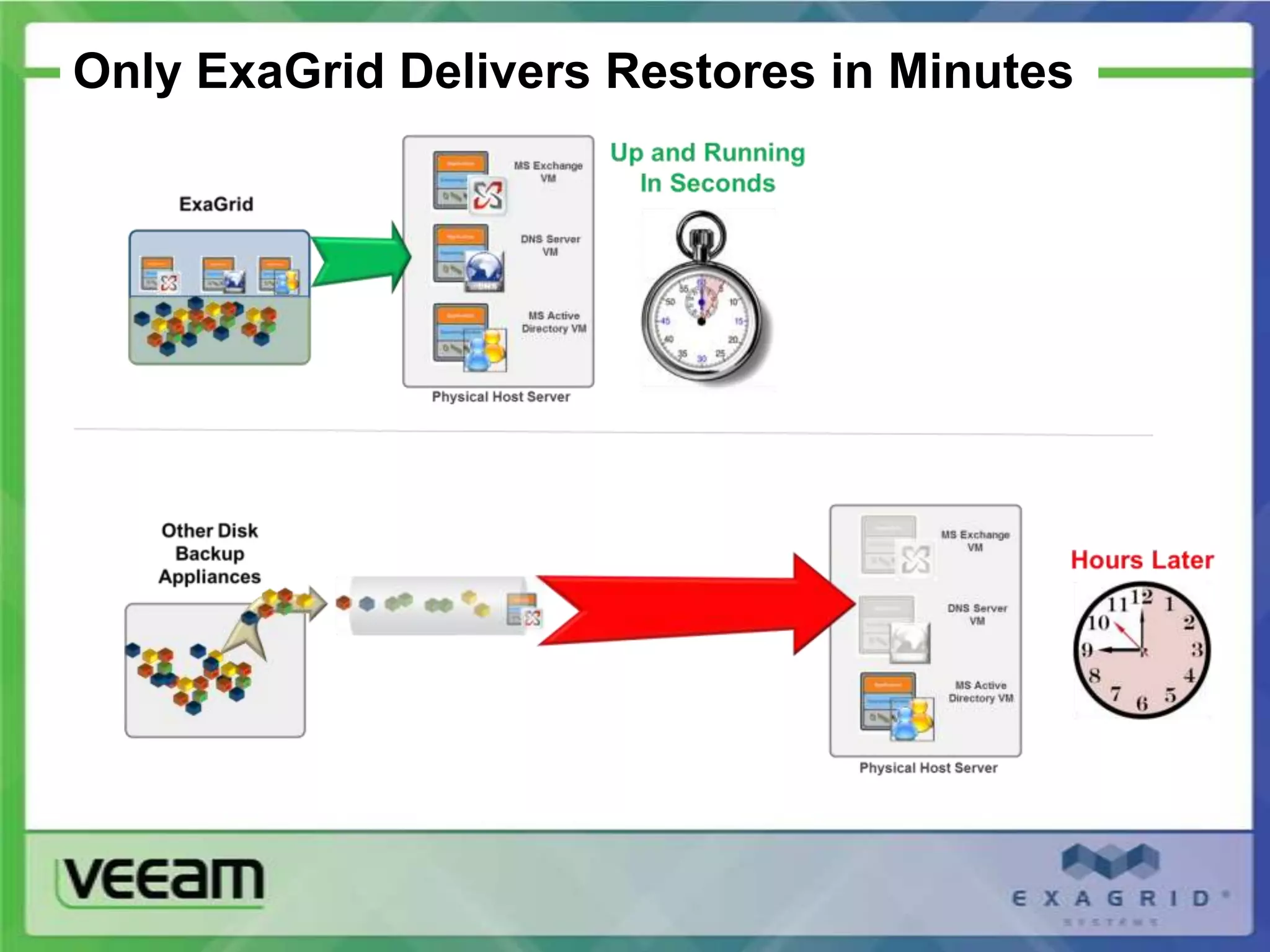

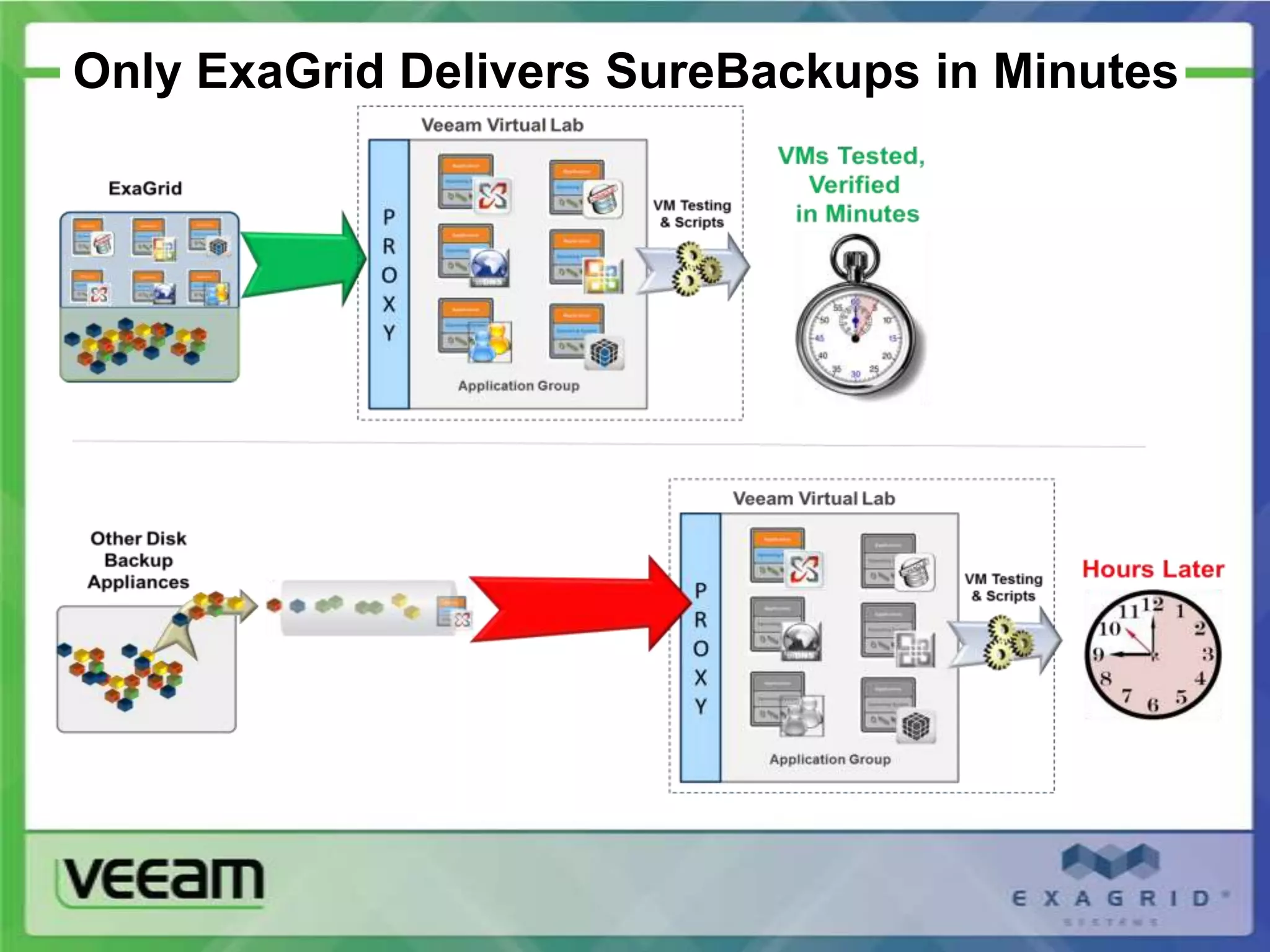

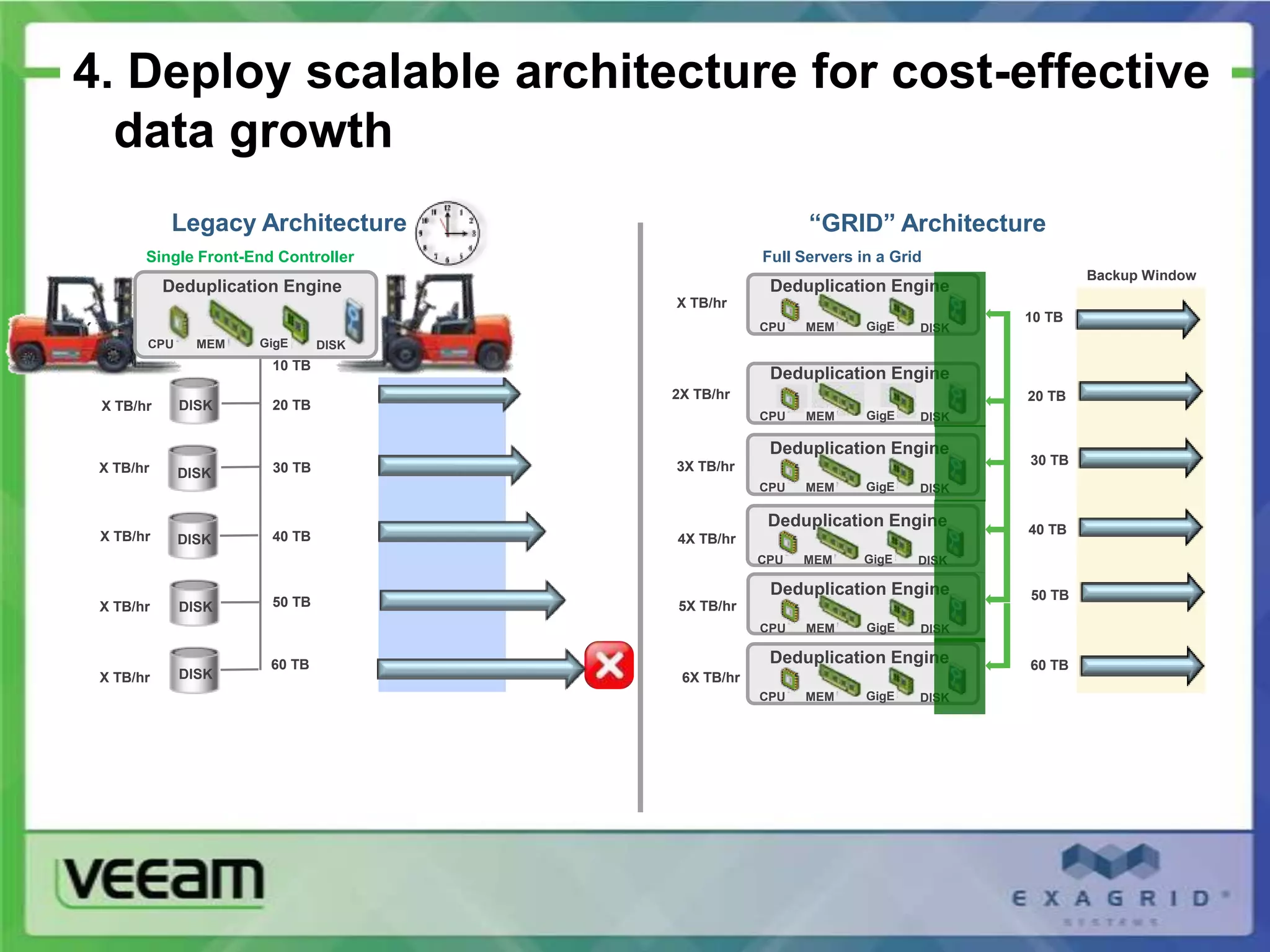

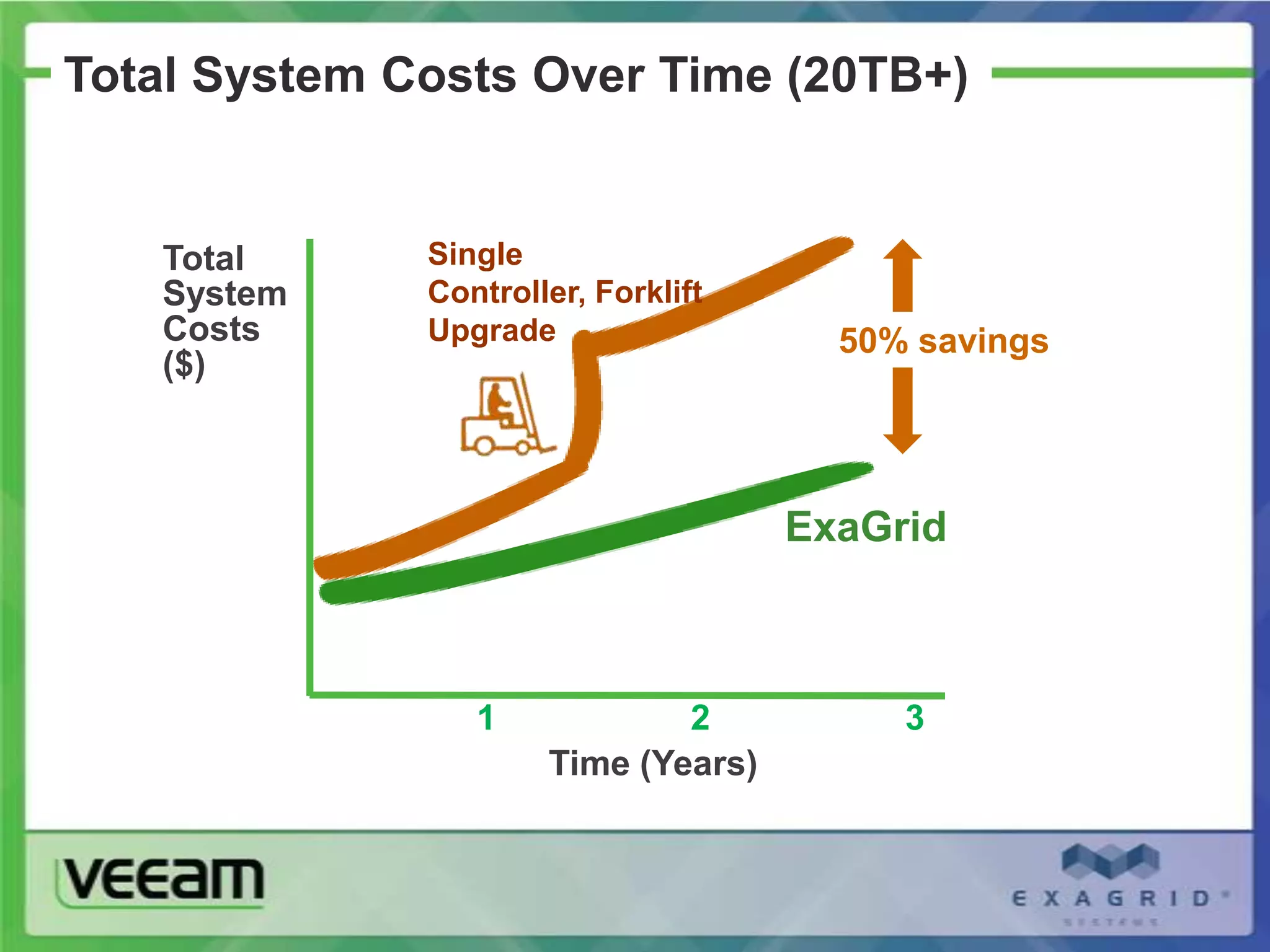

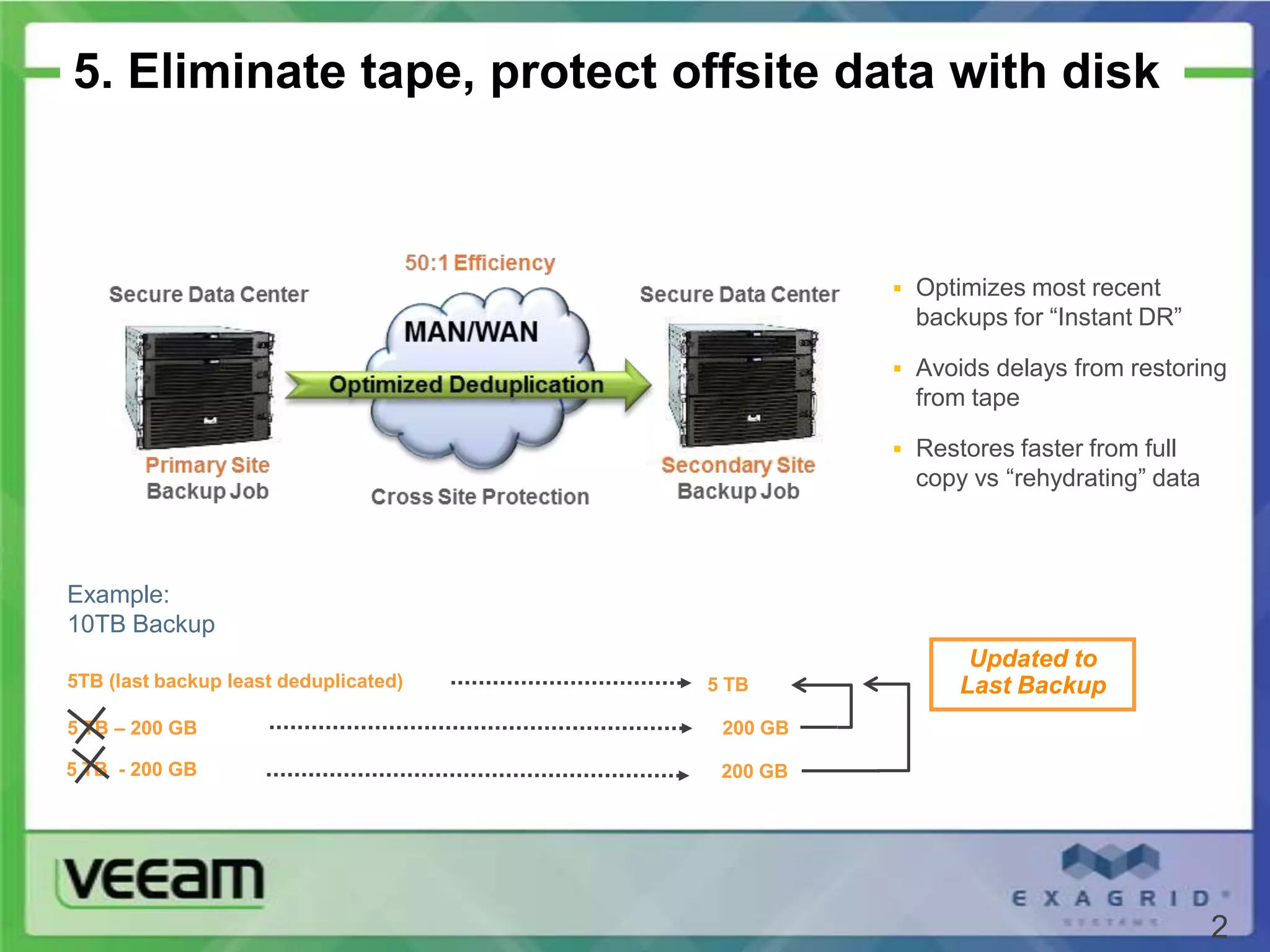

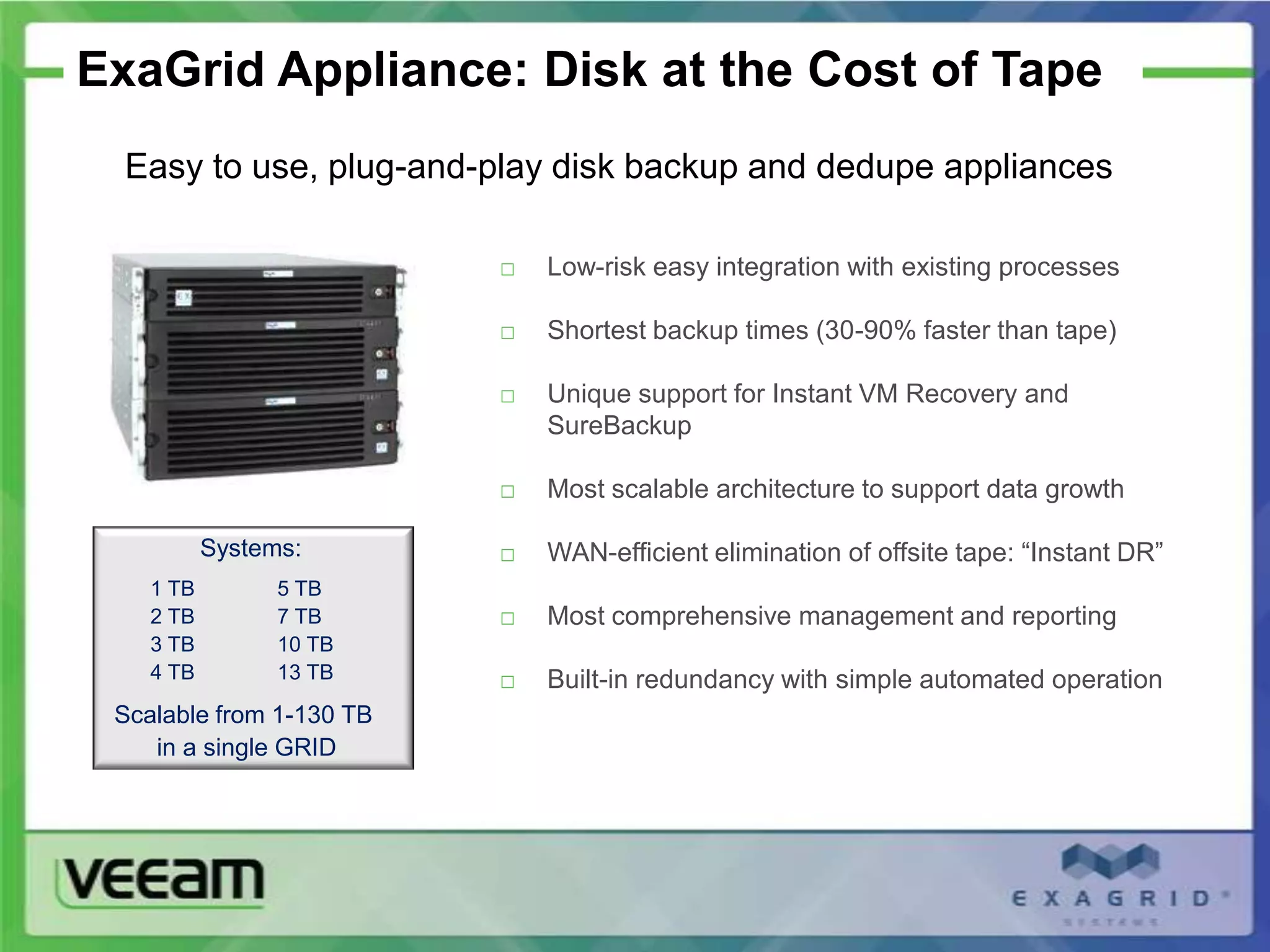

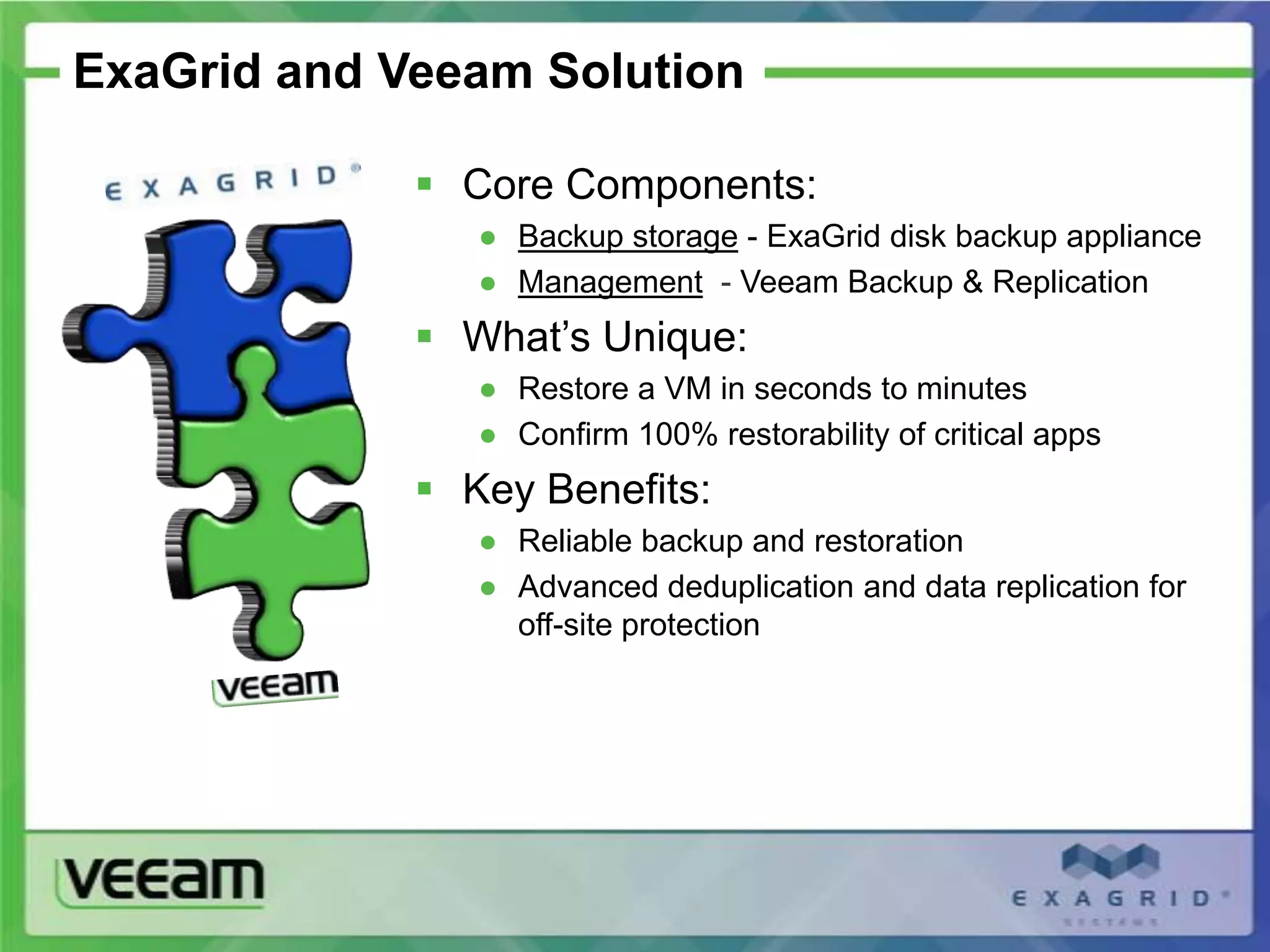

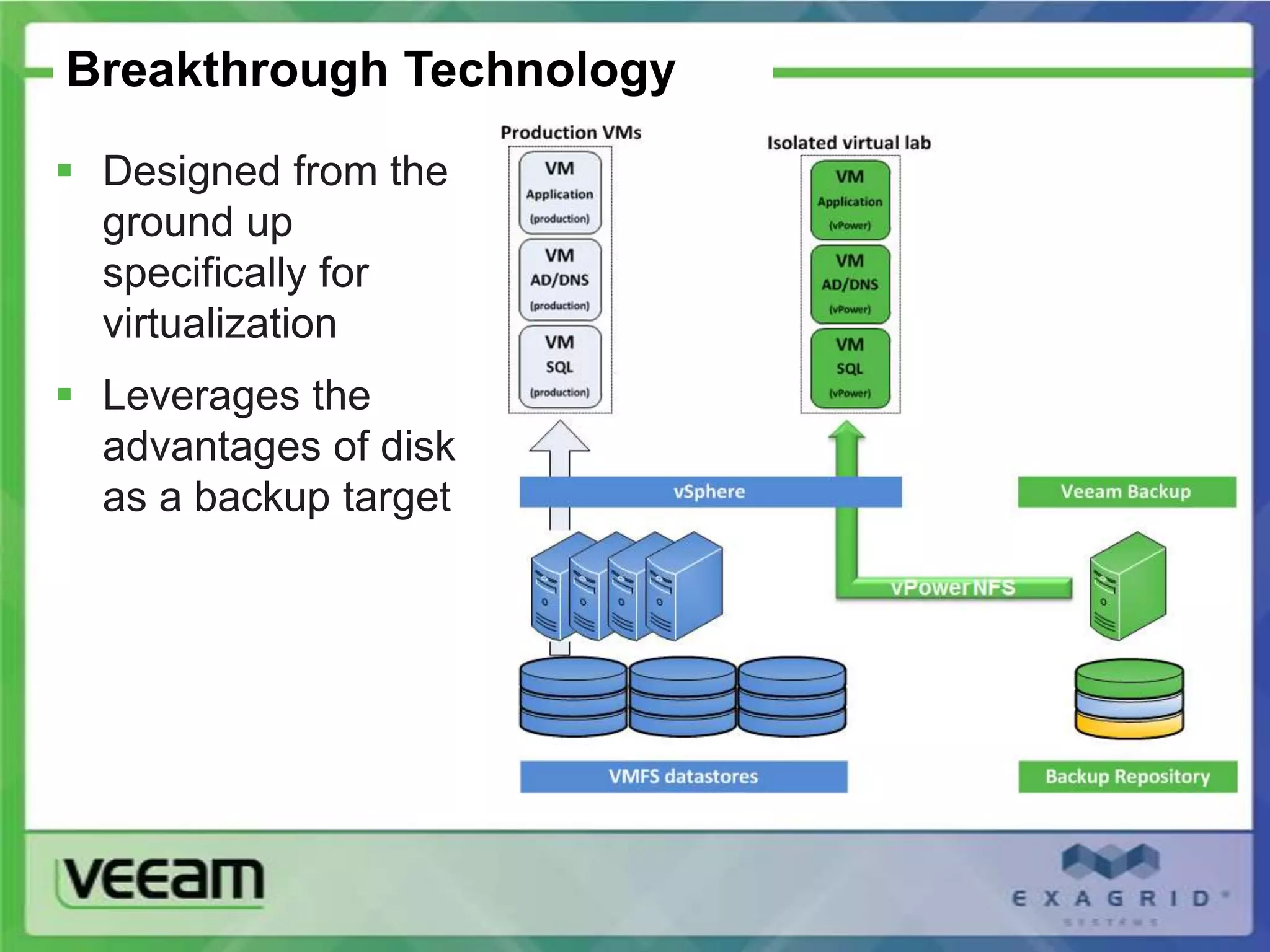

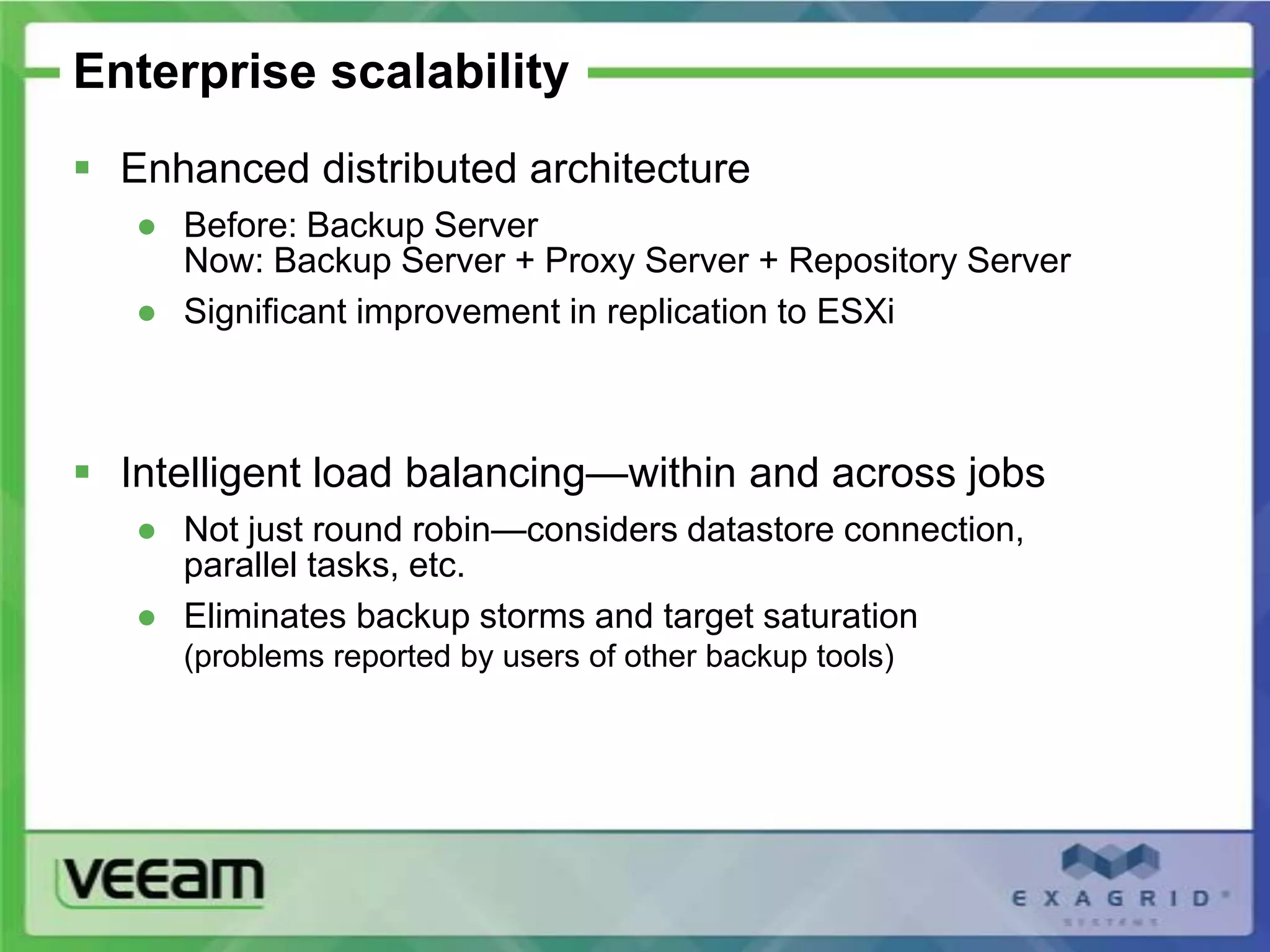

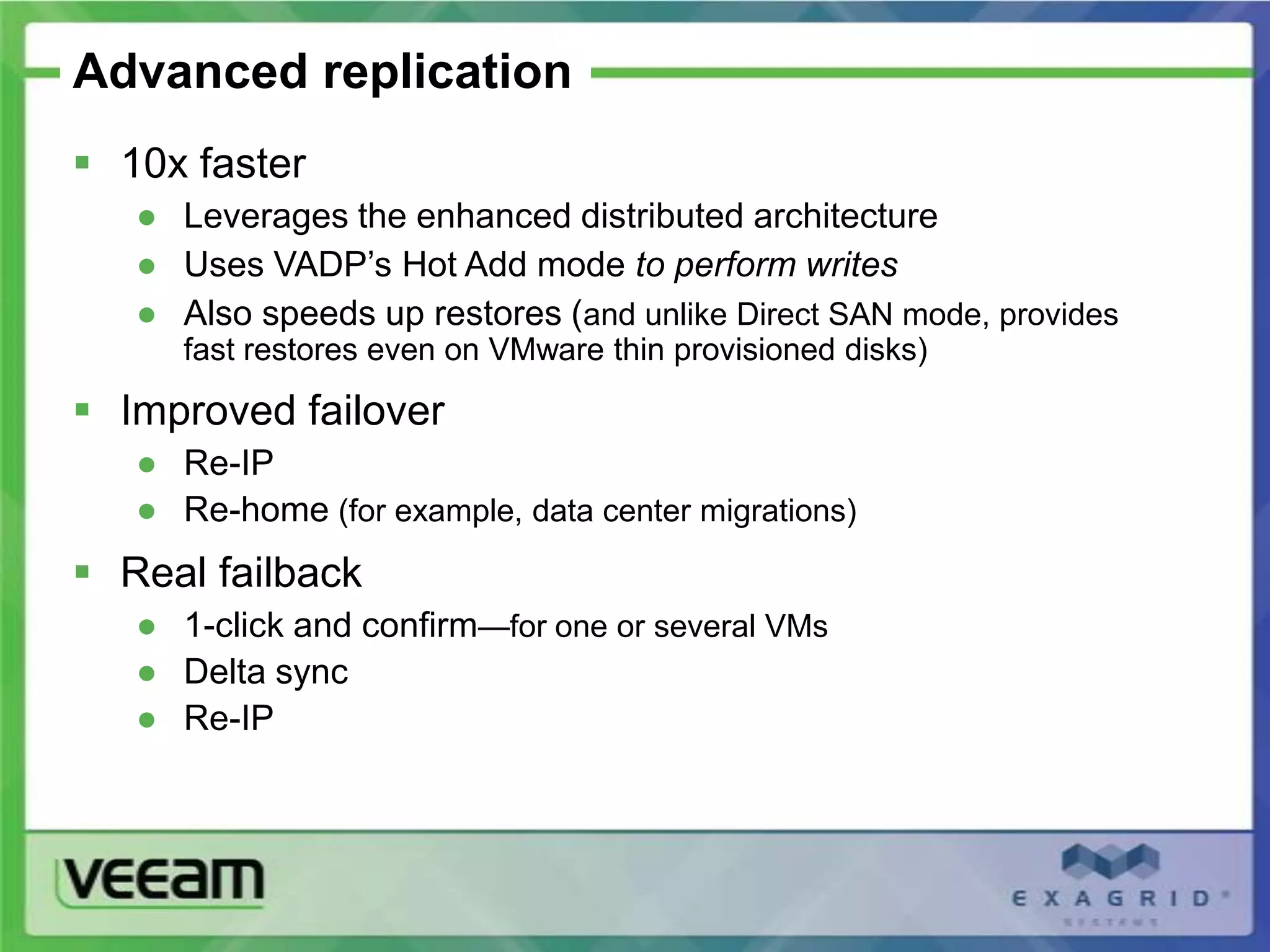

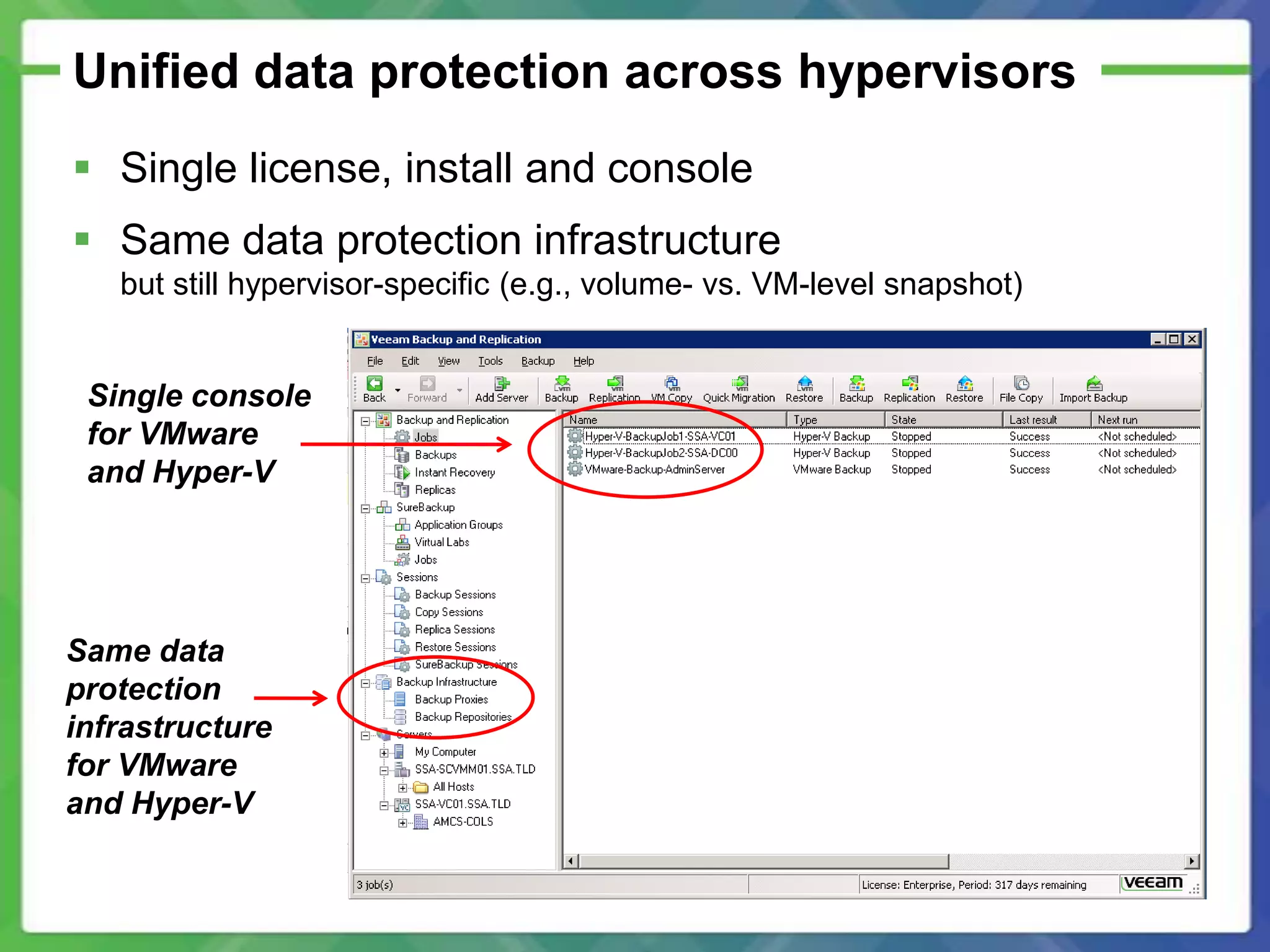

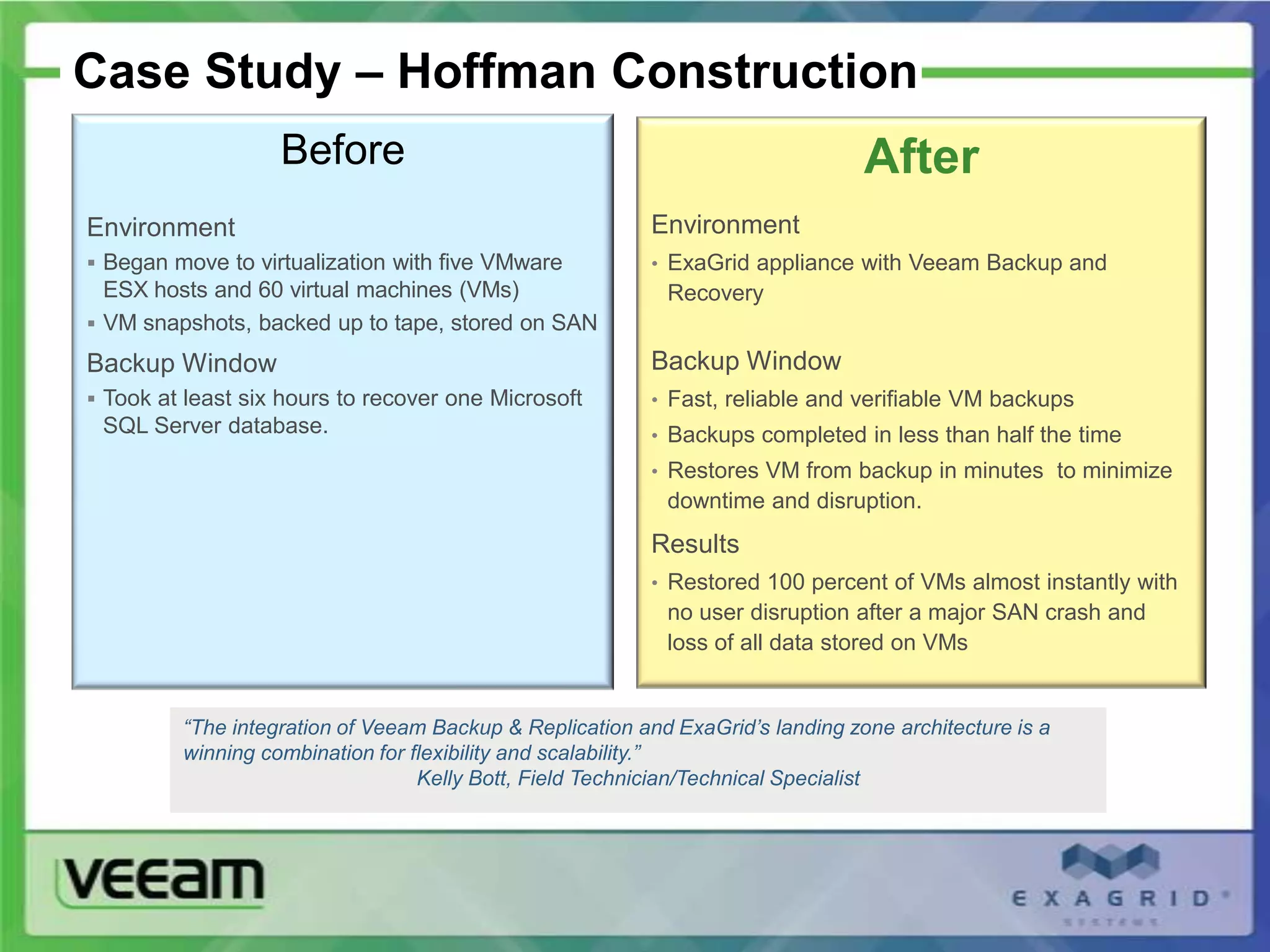

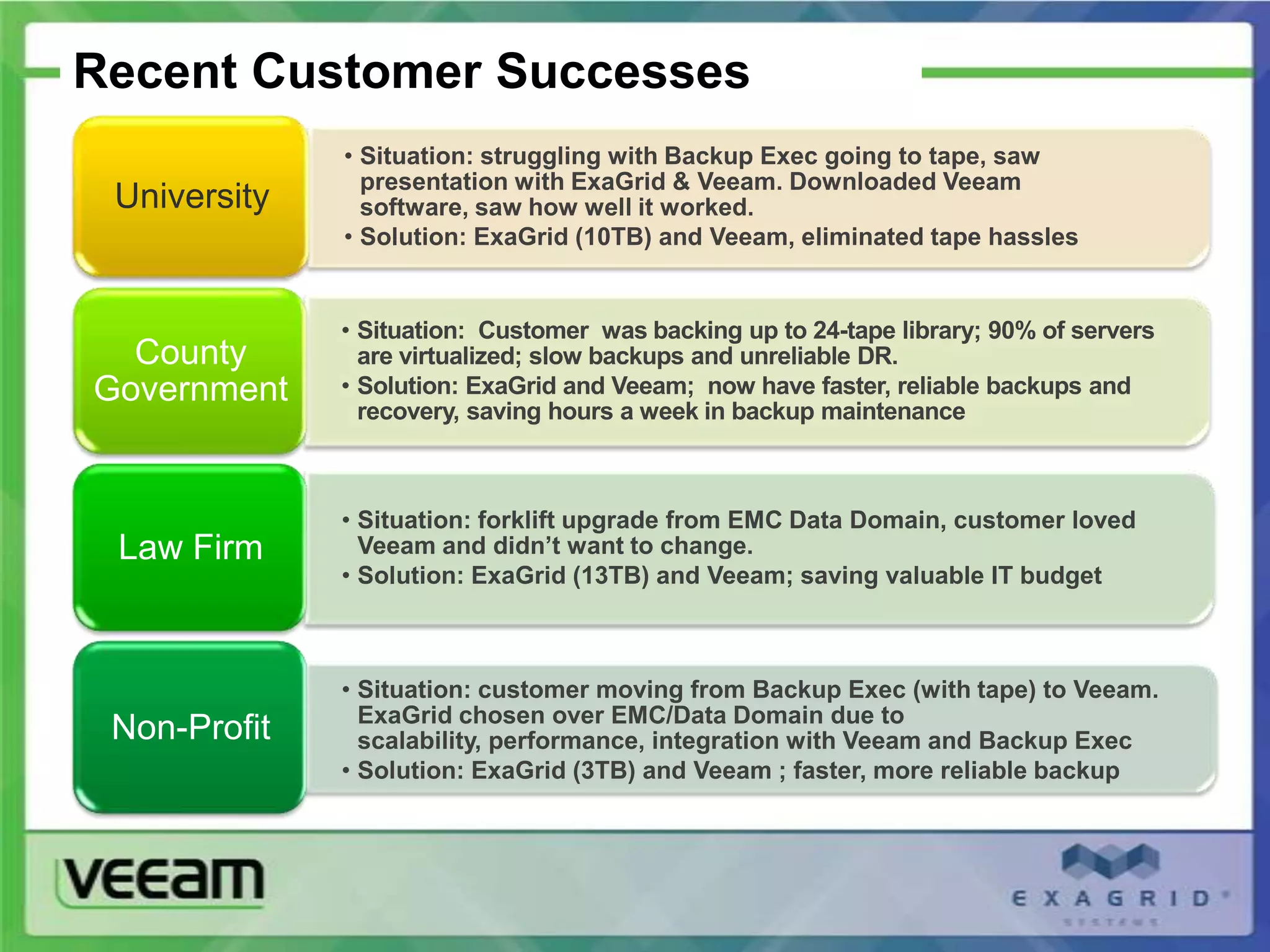

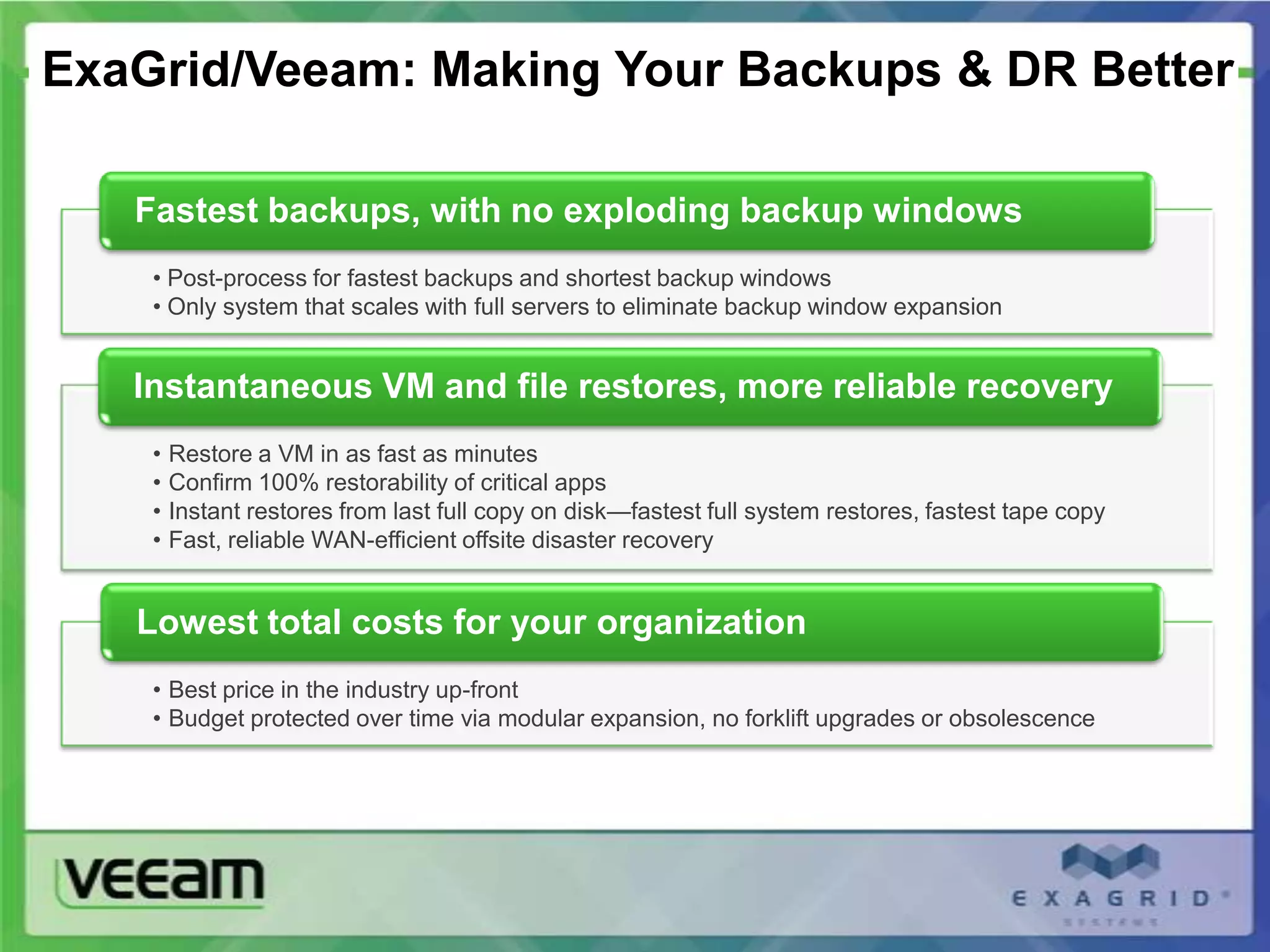

This presentation discusses the challenges of backing up virtualized servers and gives an overview of ExaGrid and Veeam Backup & Replication as an integrated solution. It reports that 51% of IT professionals say their virtual server backups do not fully meet their recovery goals. ExaGrid addresses this with disk-based backup that uses data deduplication, offering faster backups, restores in minutes, and a scalable architecture. Used together with Veeam, it lets customers leverage their virtual environments for reliable backup and recovery. The presentation also gives examples of how ExaGrid and Veeam together have helped customers improve their backup and disaster recovery.