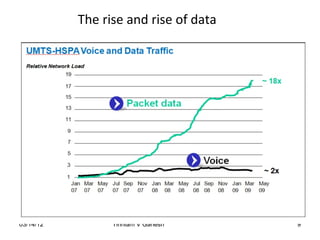

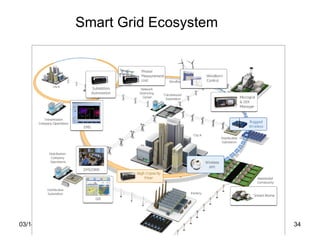

The document discusses several technology trends that it expects to endure, including Long Term Evolution (LTE), smart grids, software-defined networking (SDN), NoSQL, Near Field Communication (NFC), the Internet of Things (IoT) and Machine-to-Machine (M2M) communication, big data, social networks, and cloud computing. It gives a high-level overview of each trend without going into detail on any one of them.