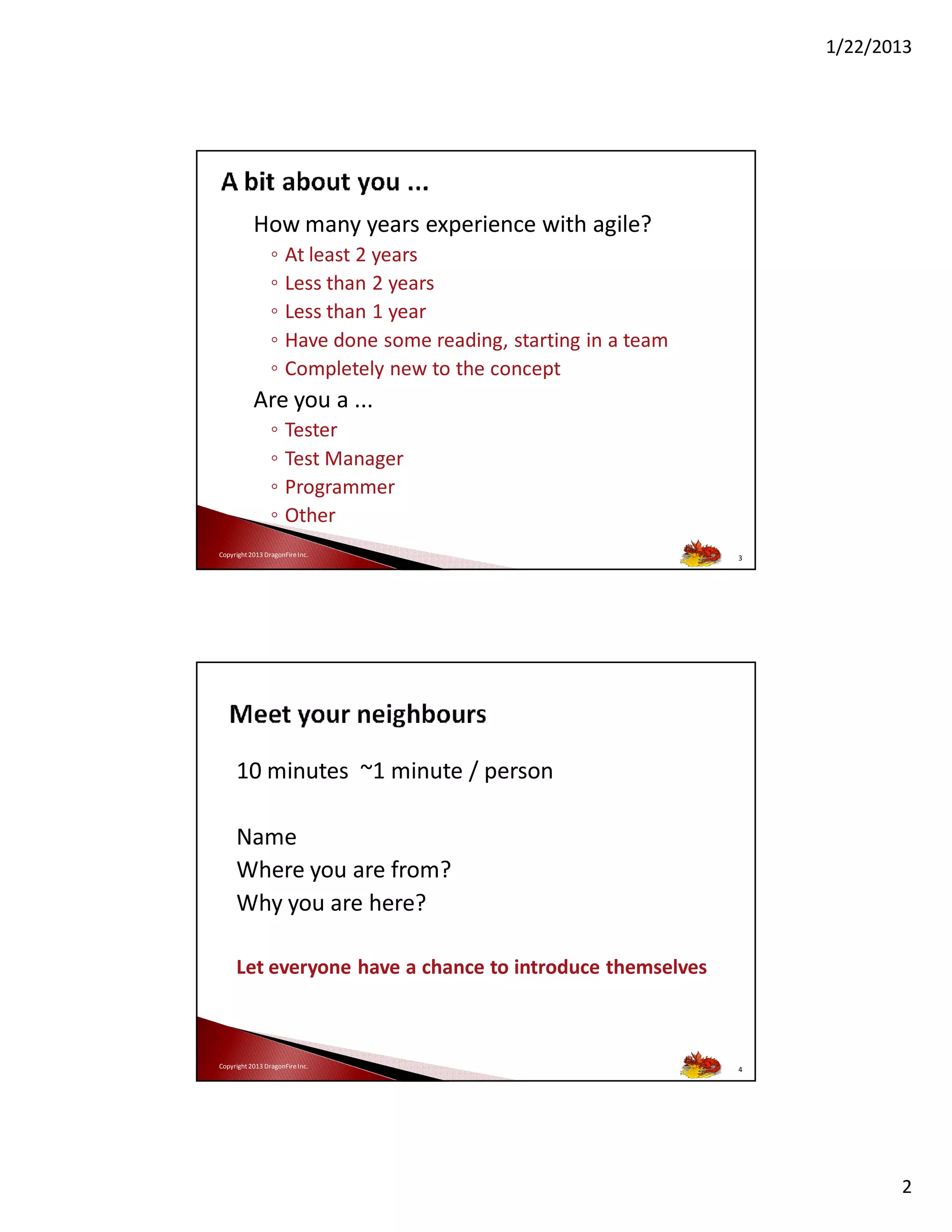

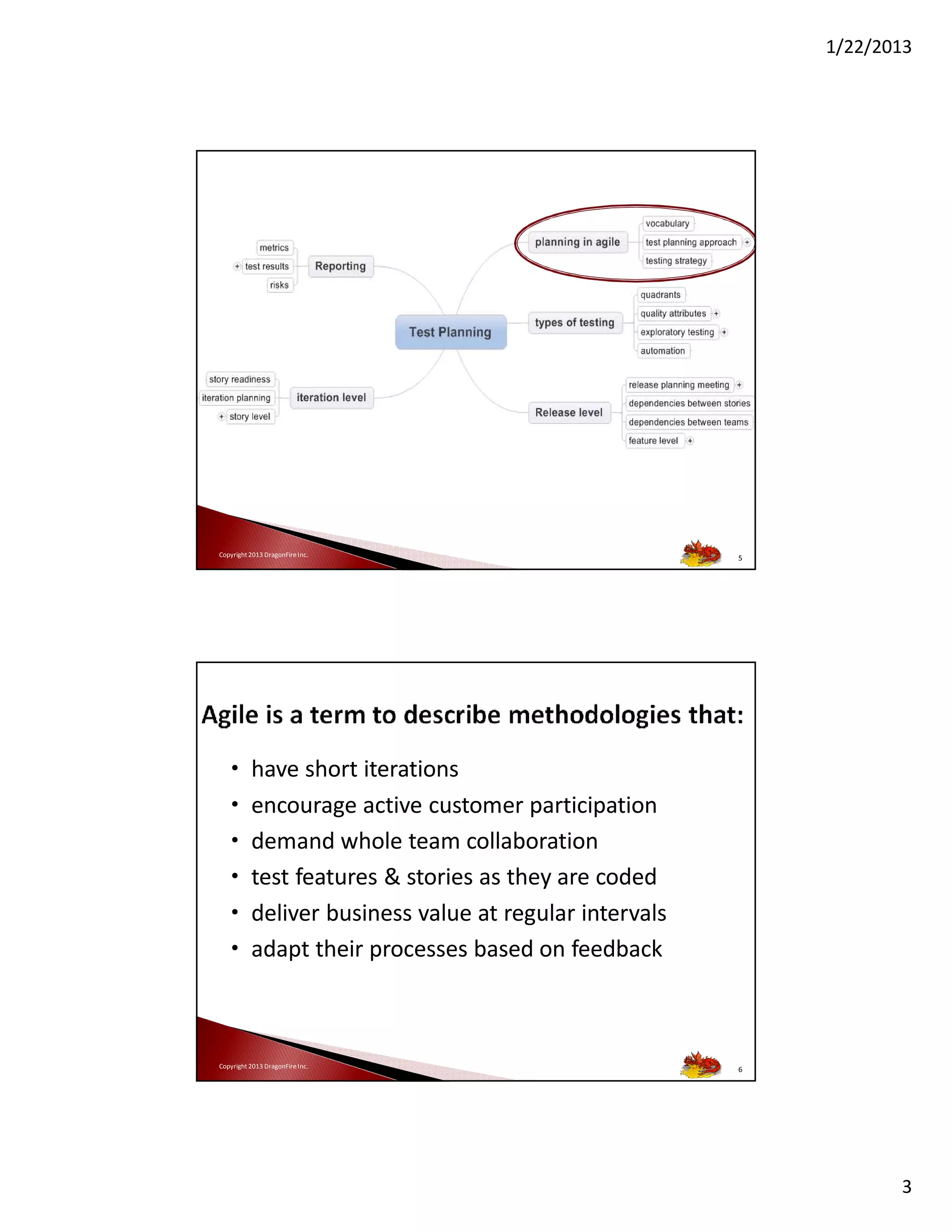

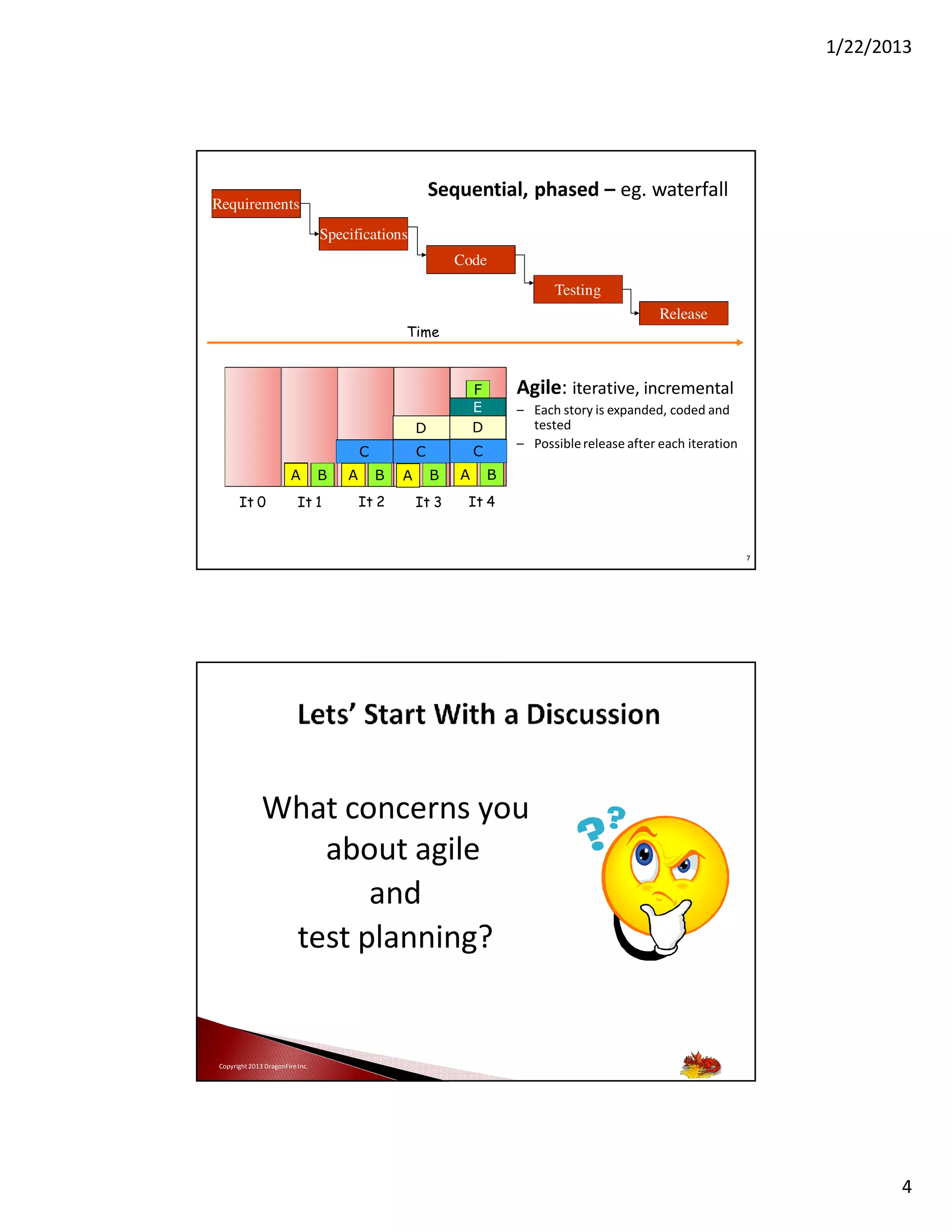

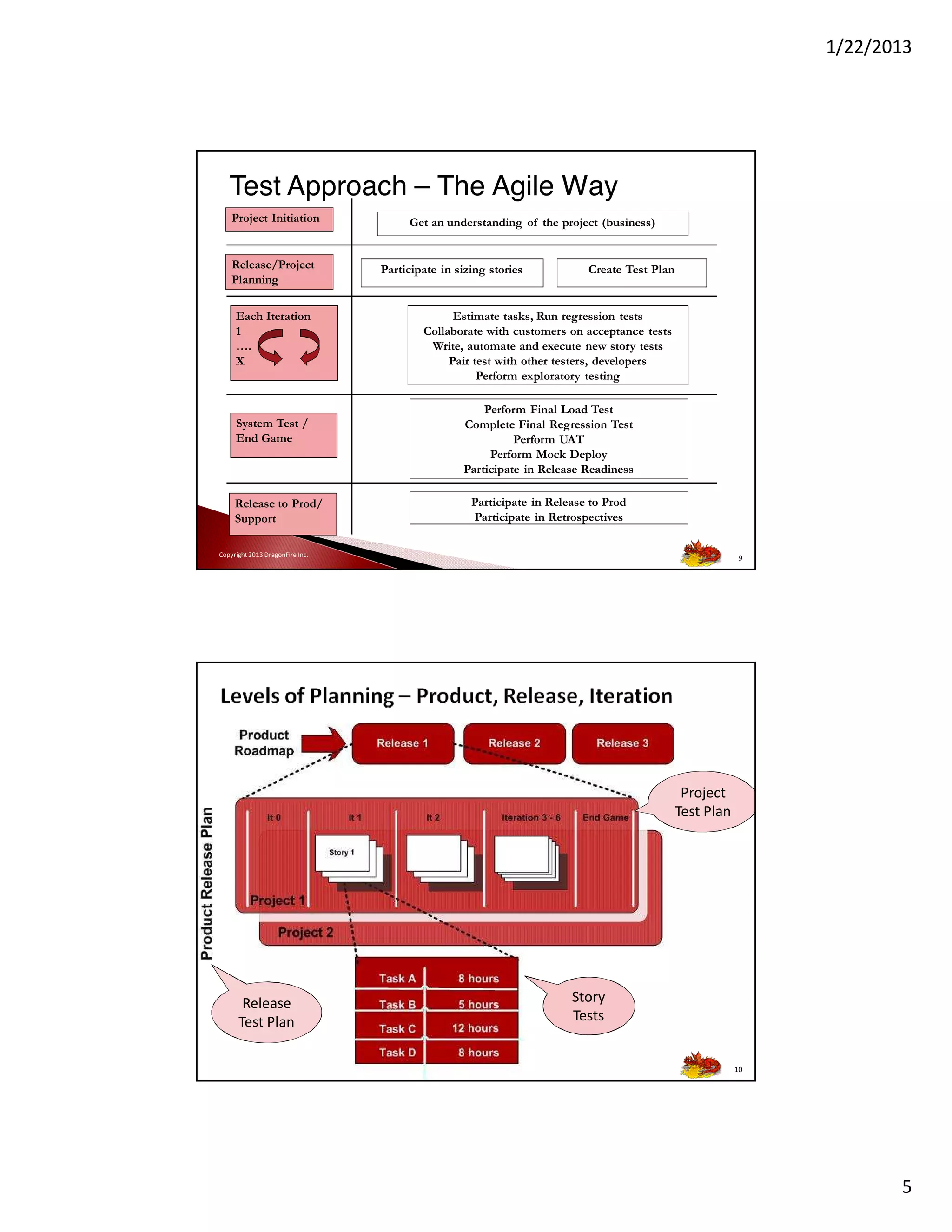

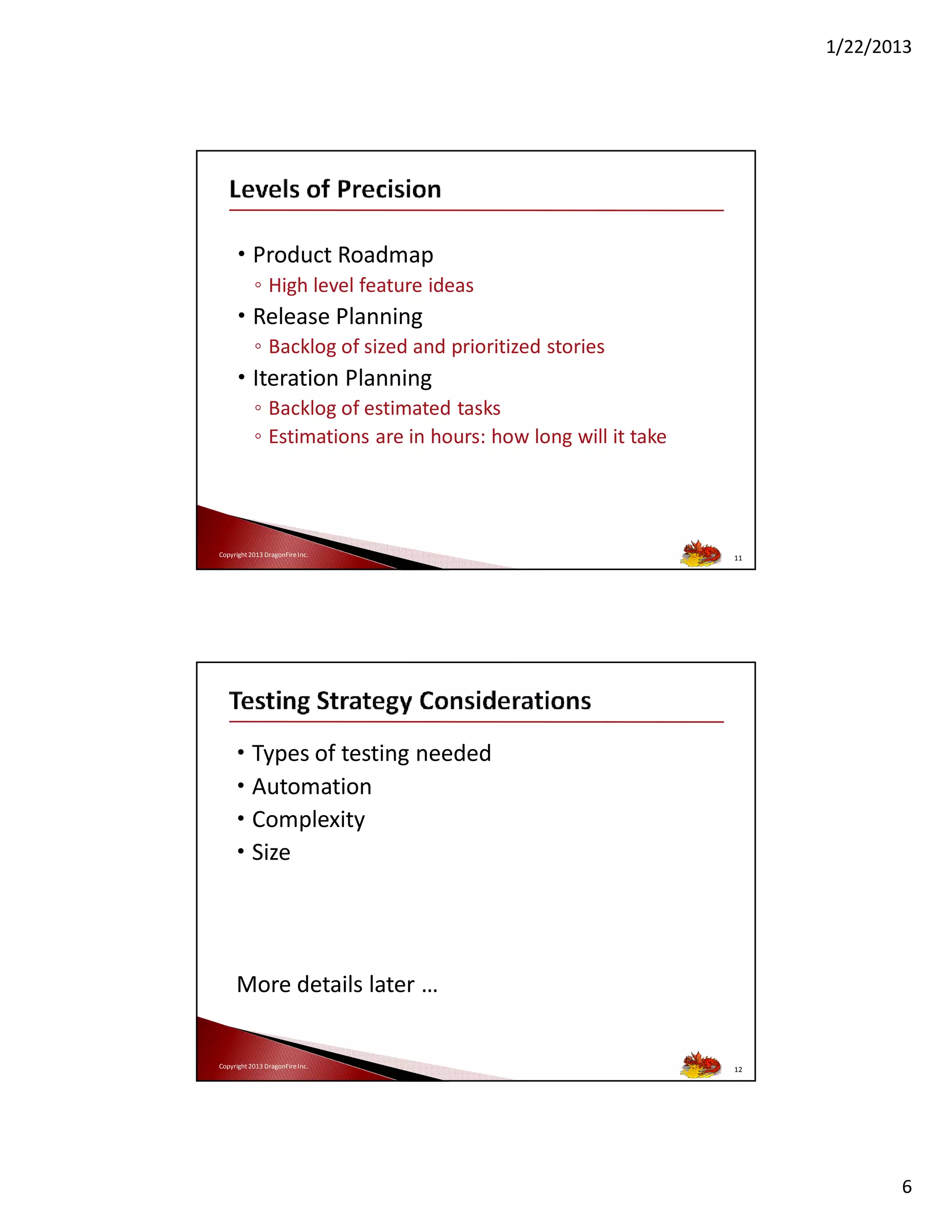

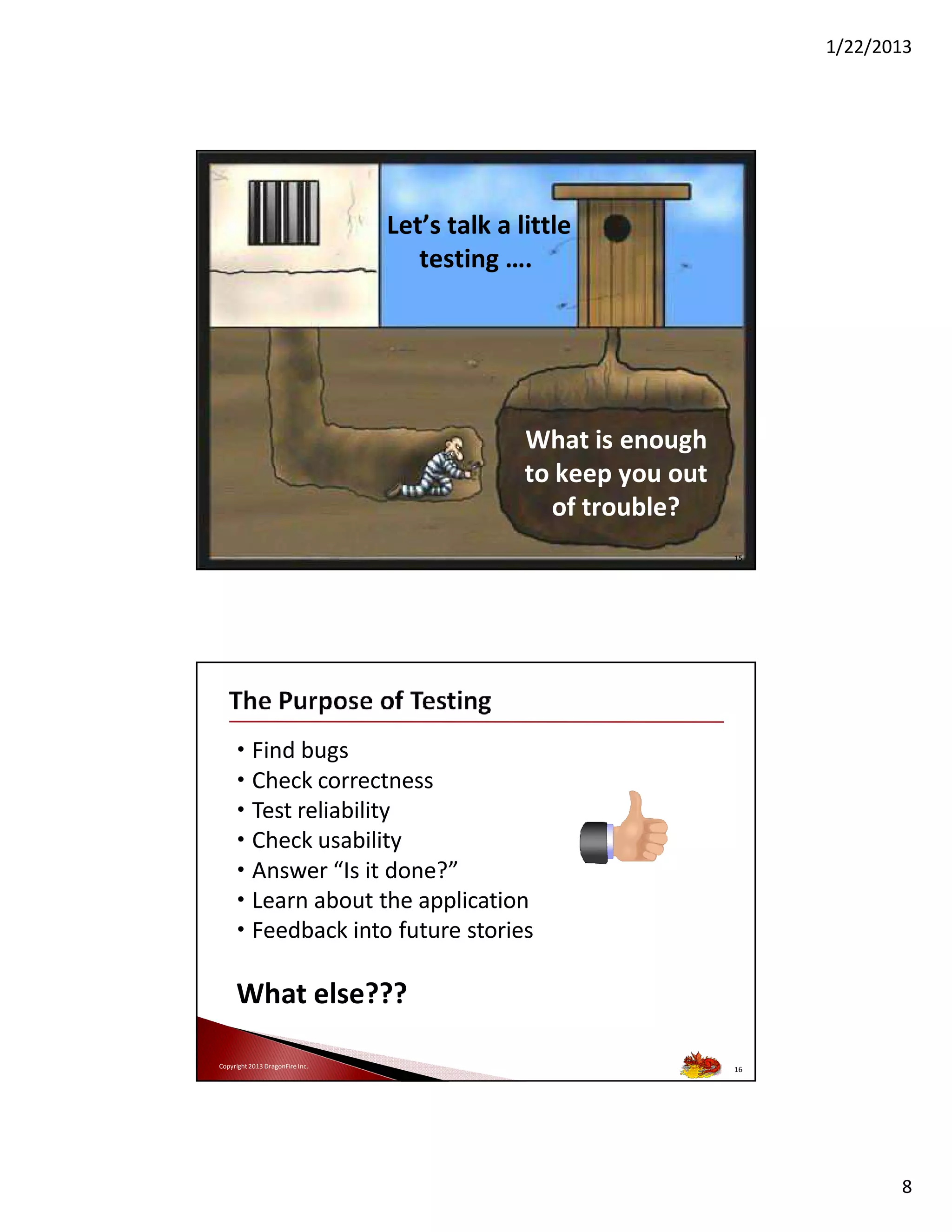

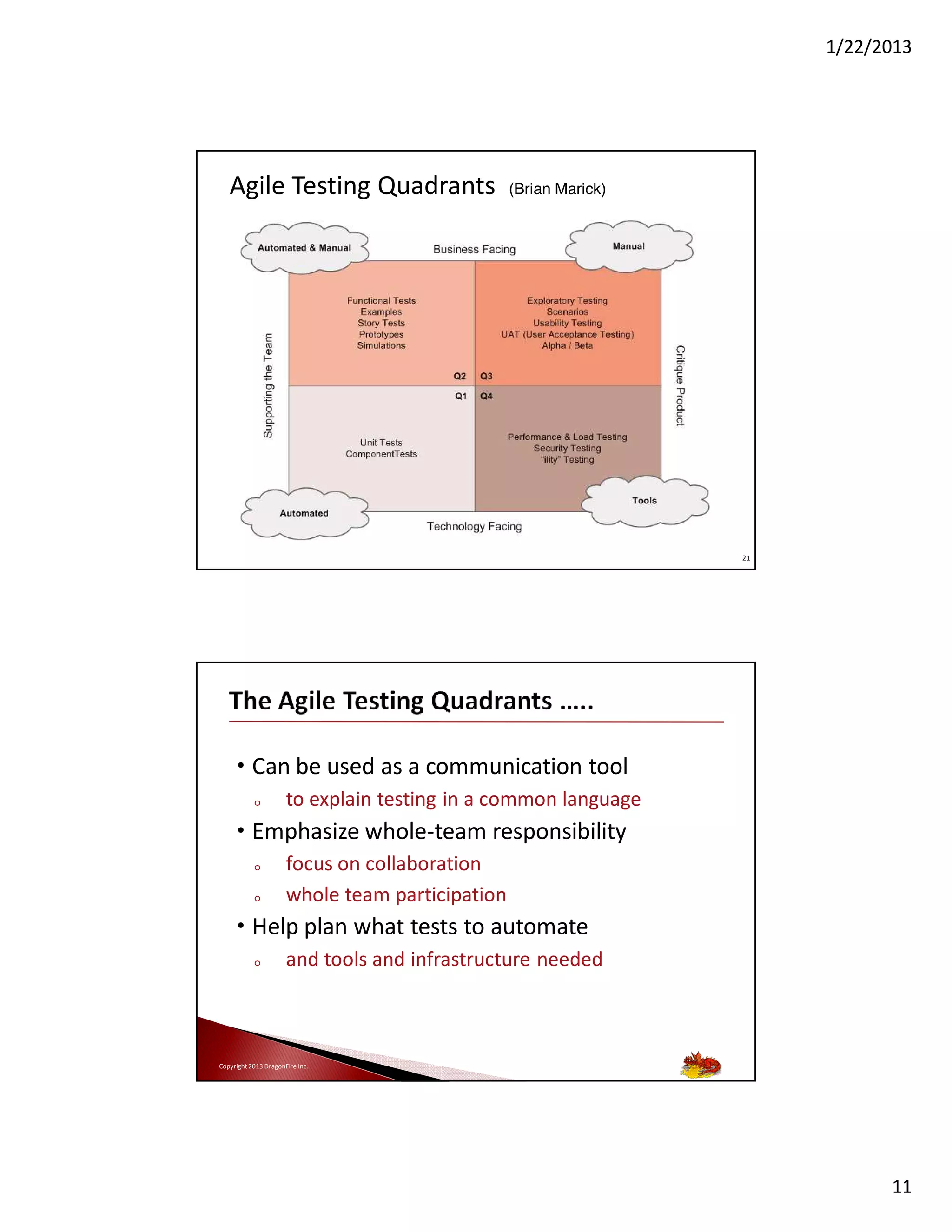

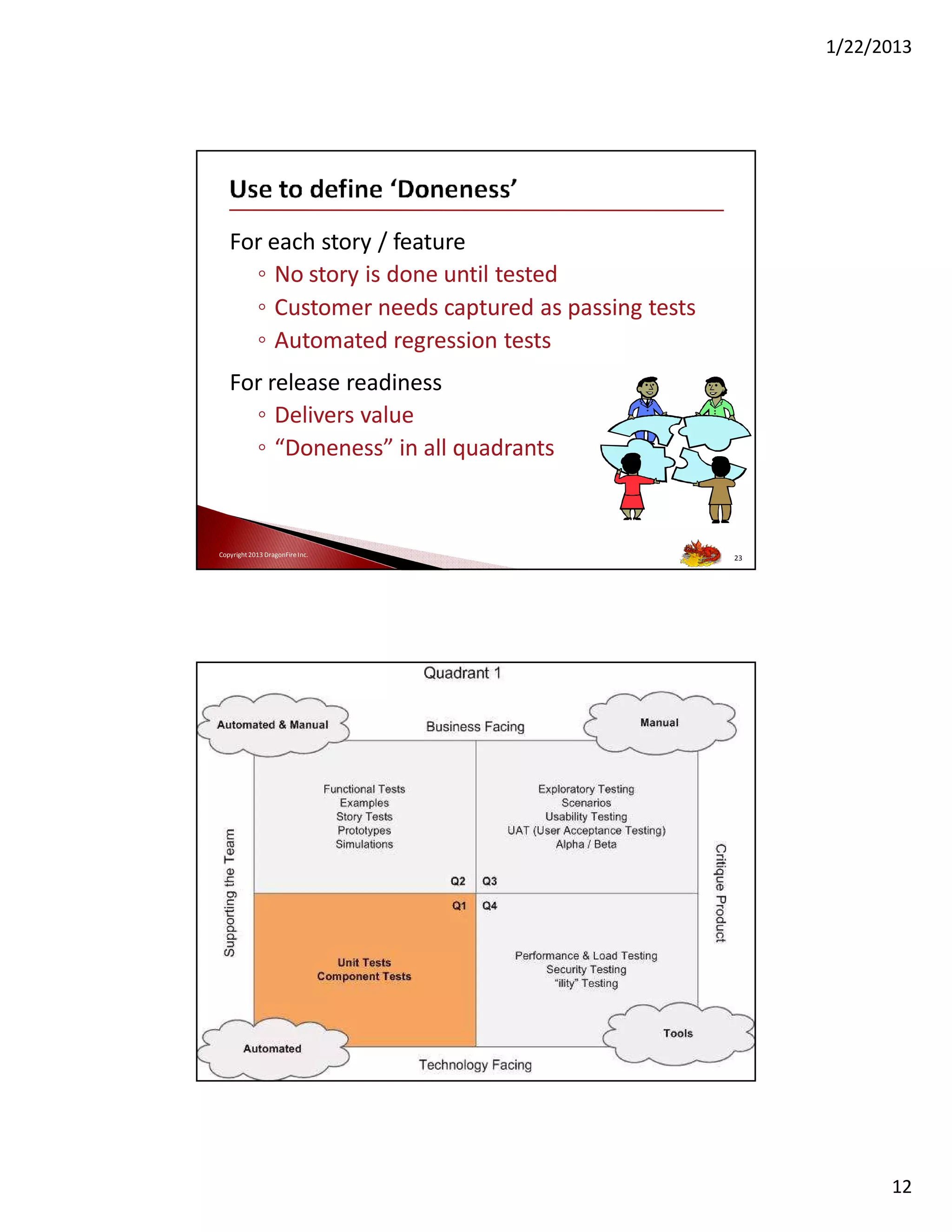

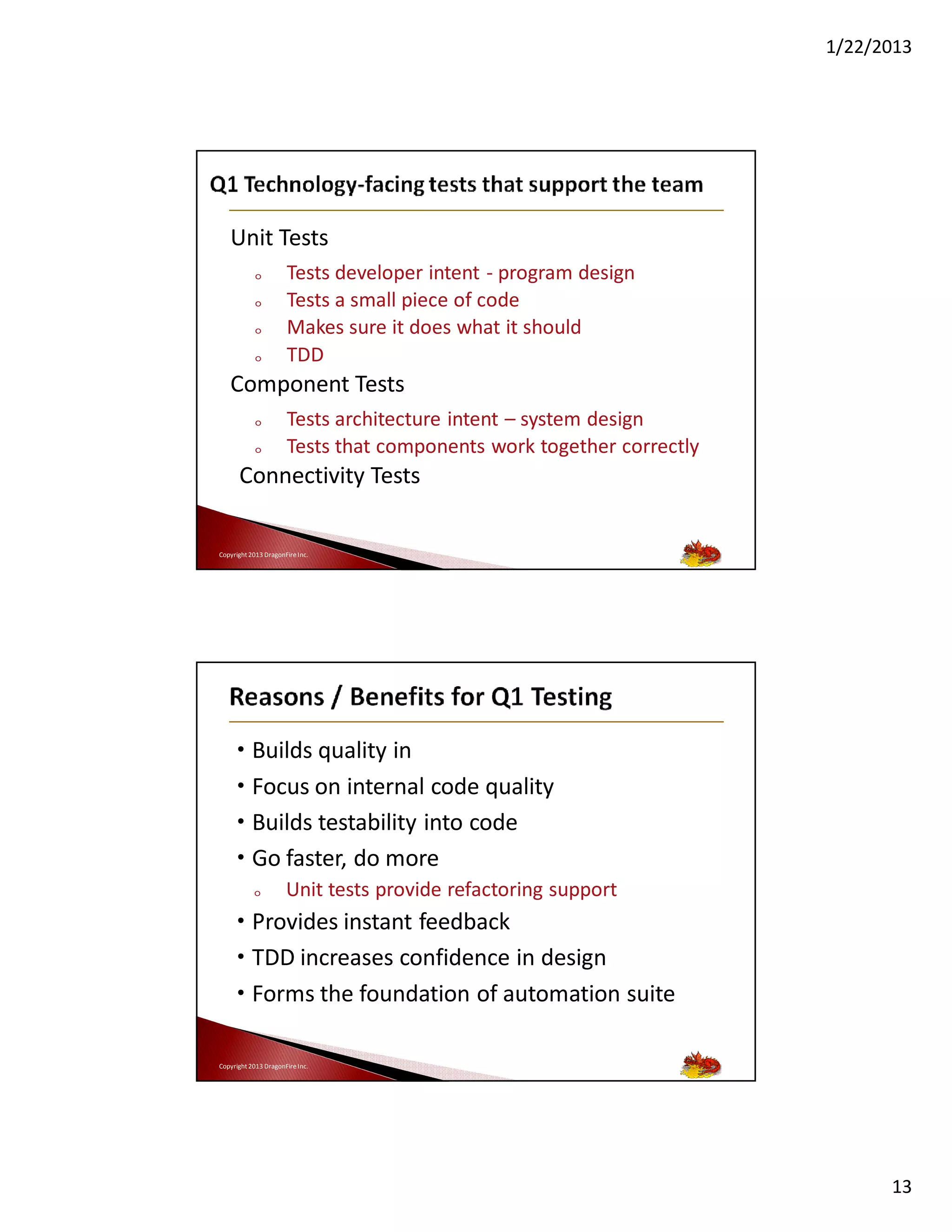

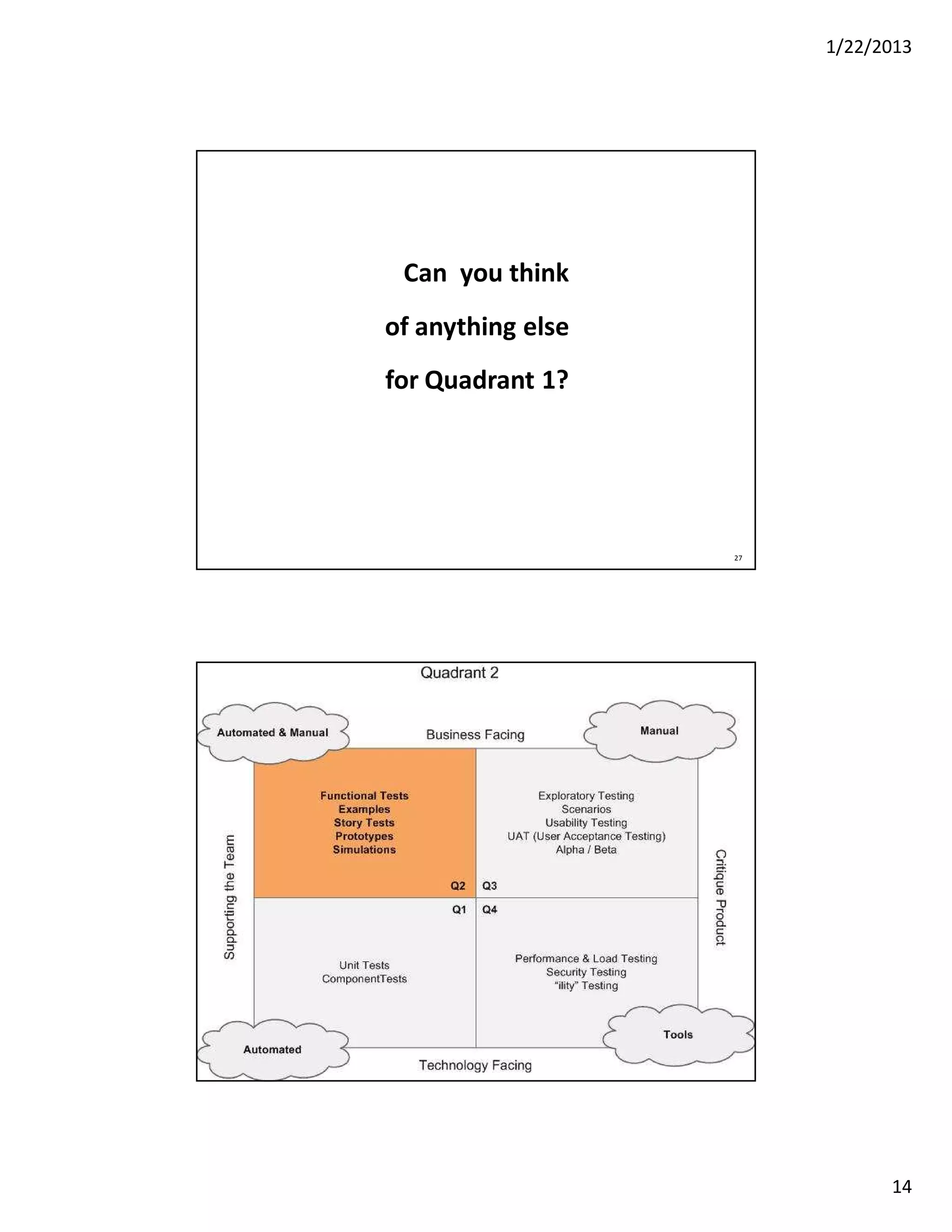

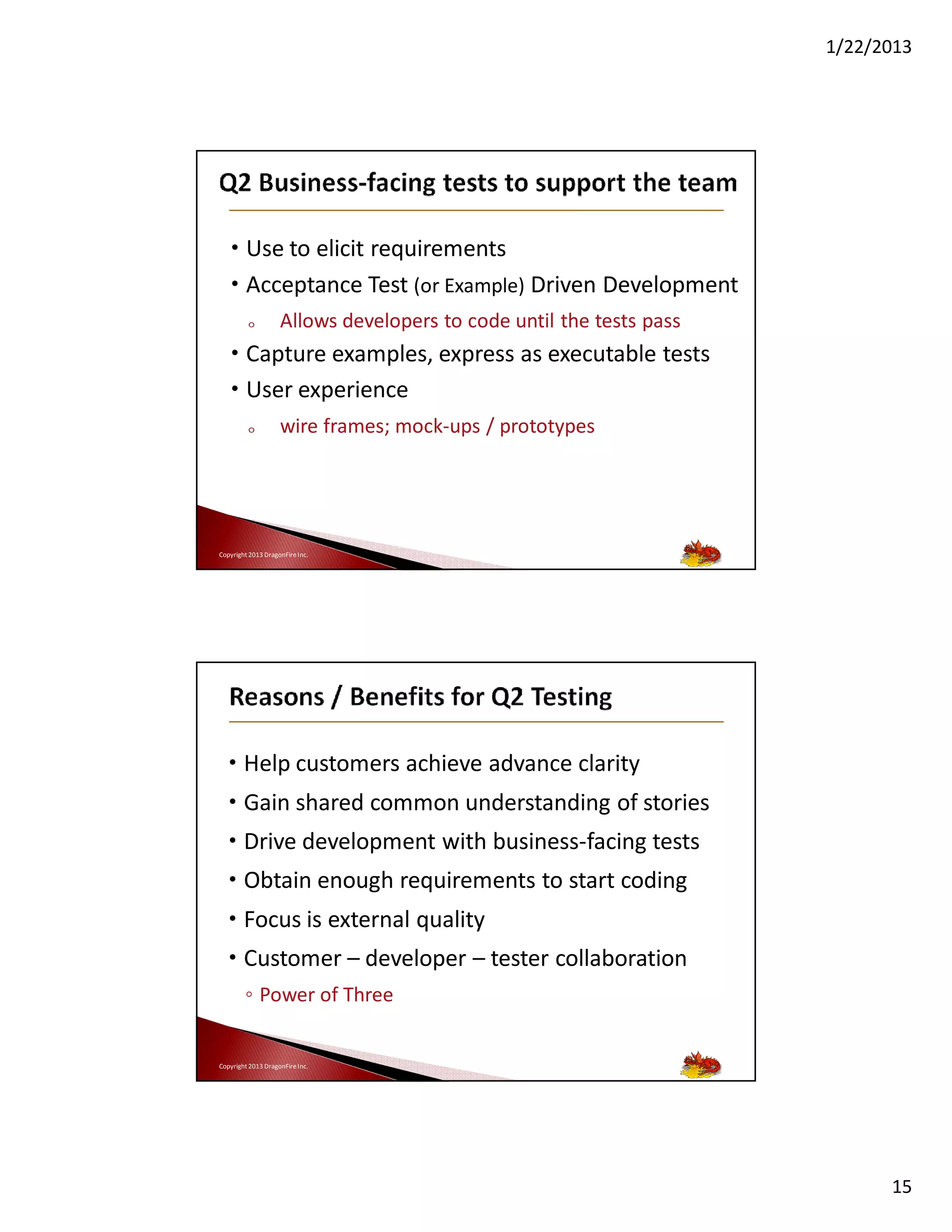

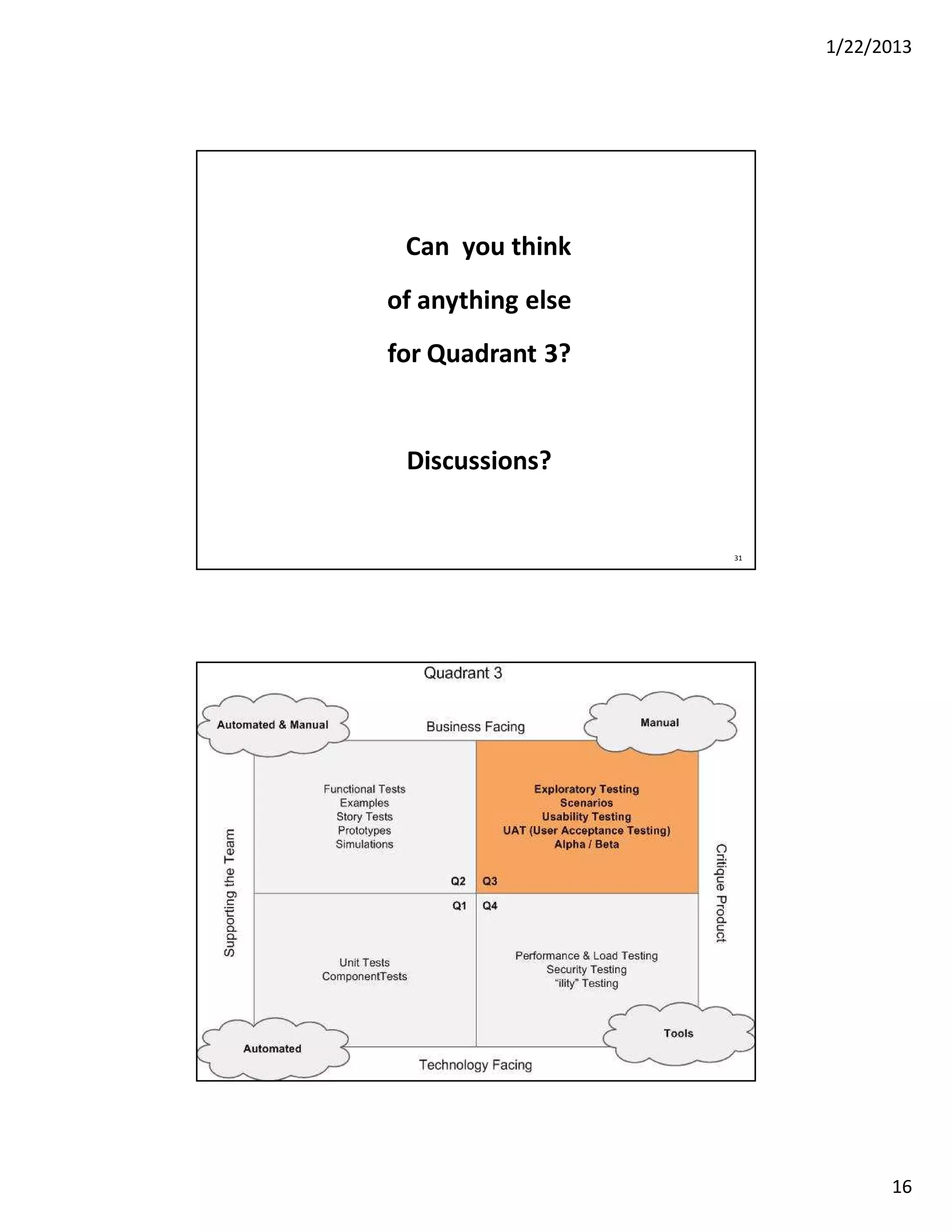

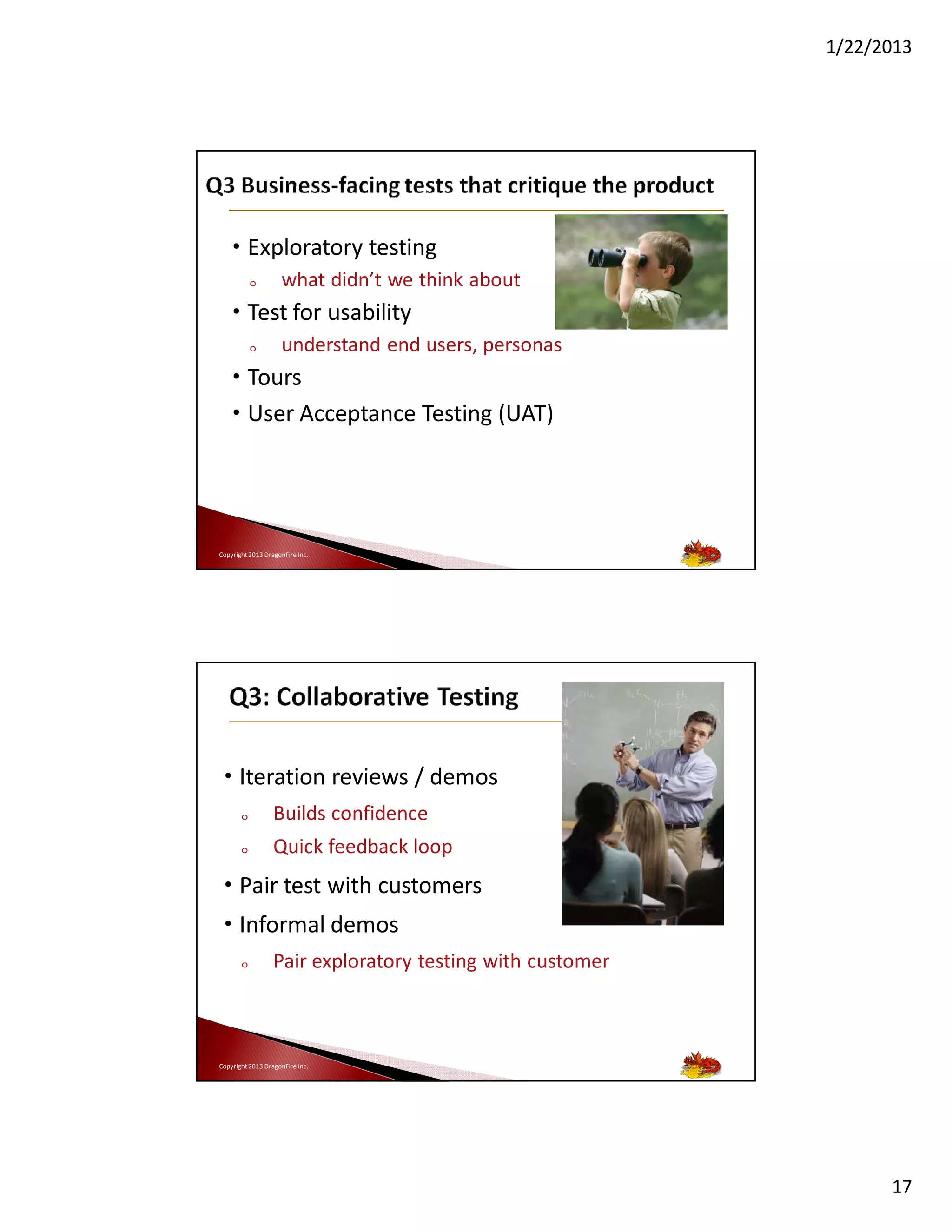

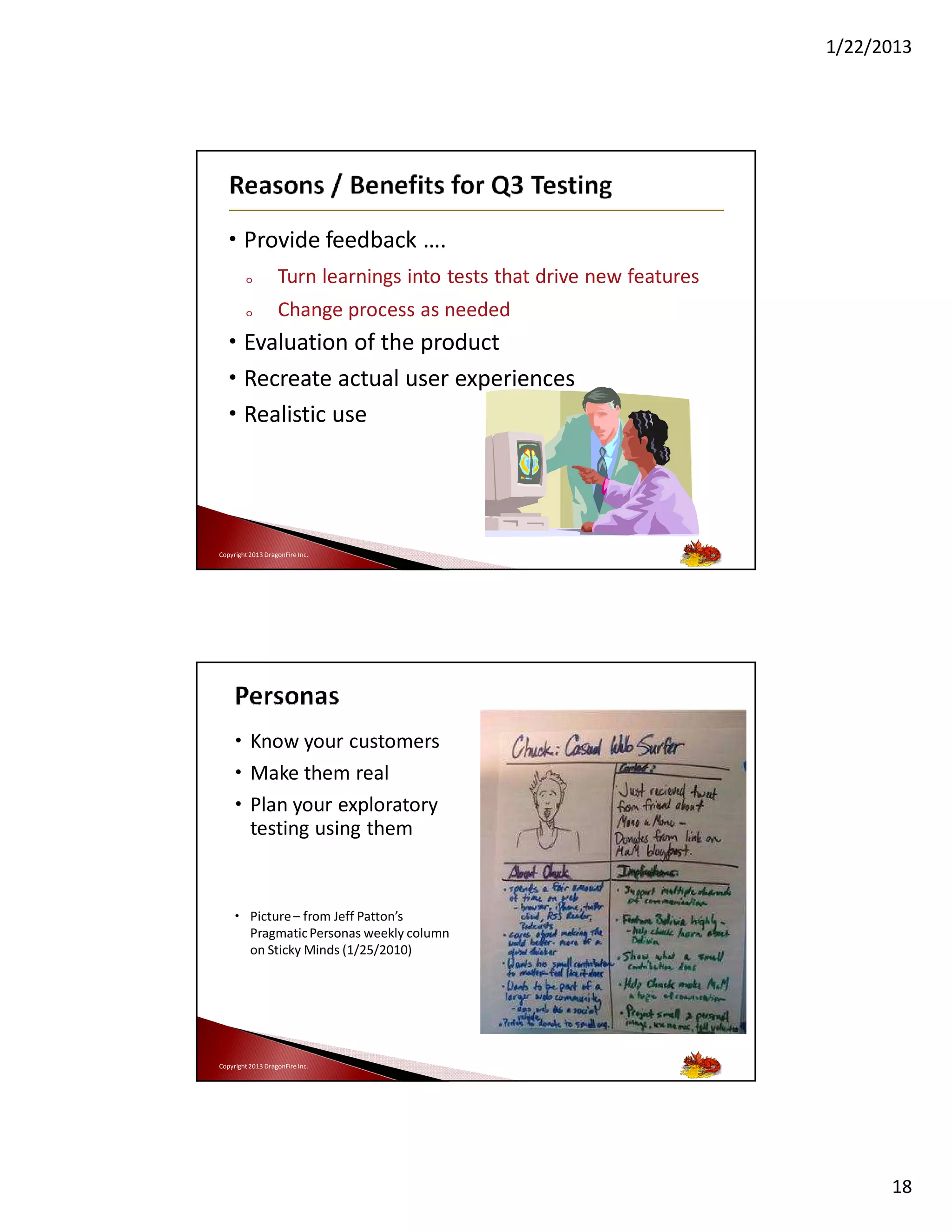

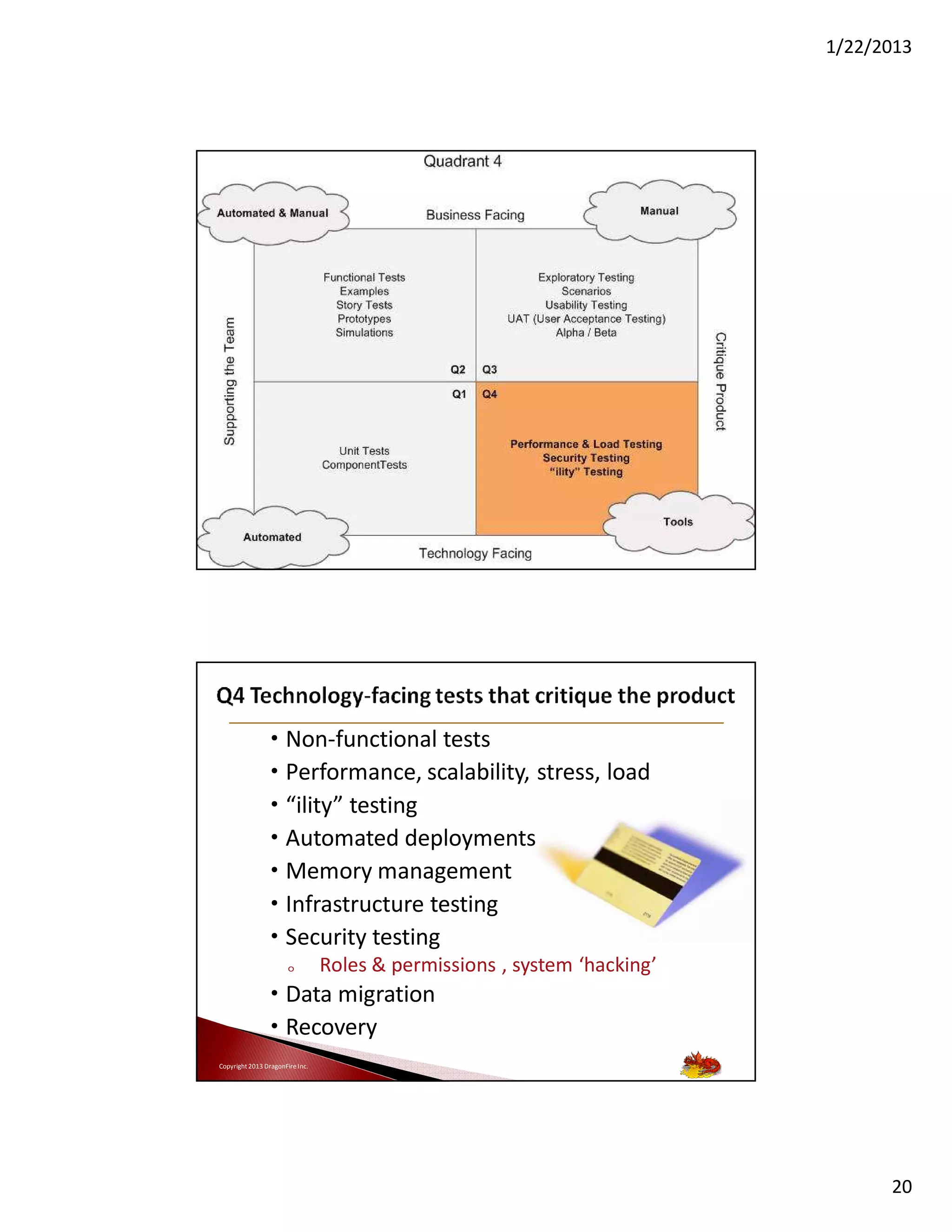

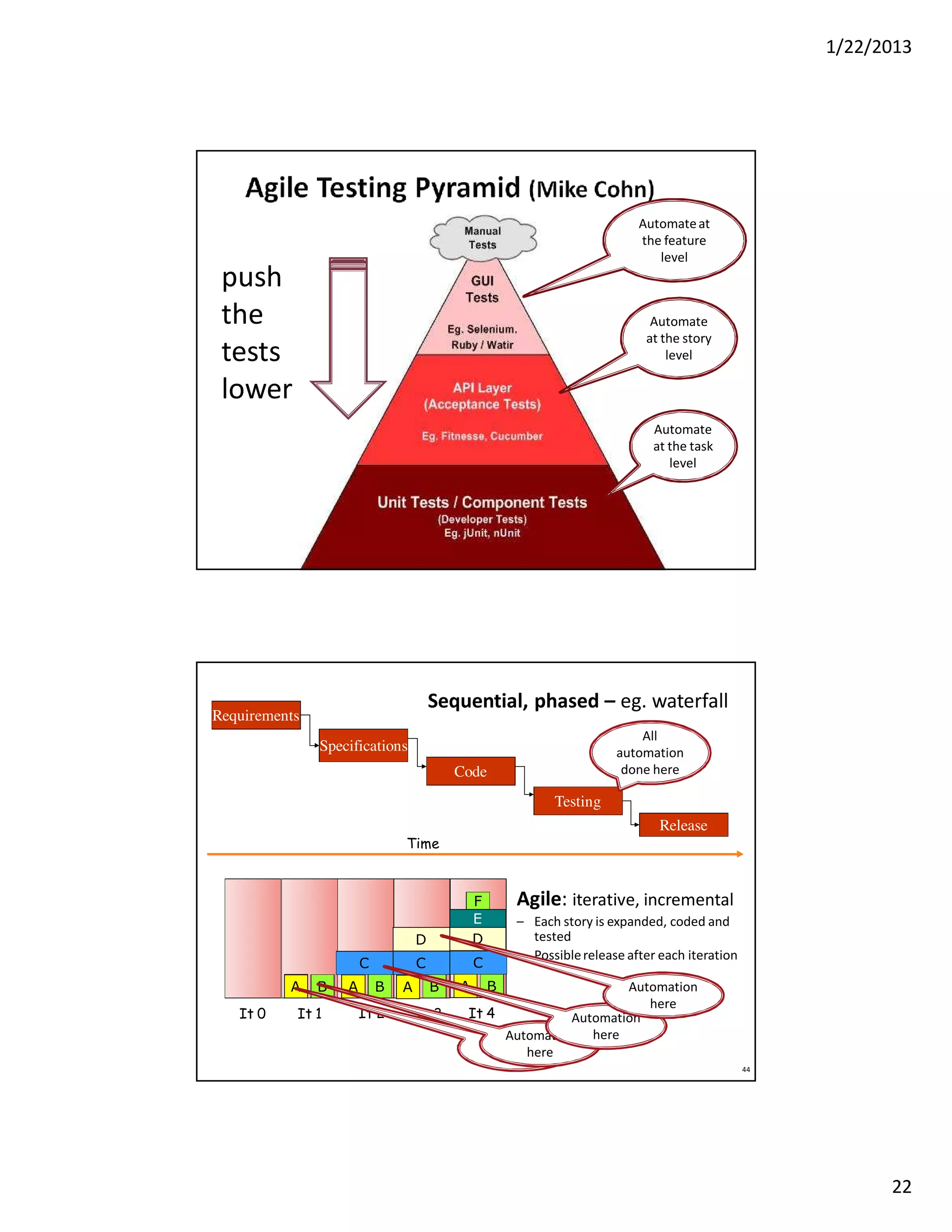

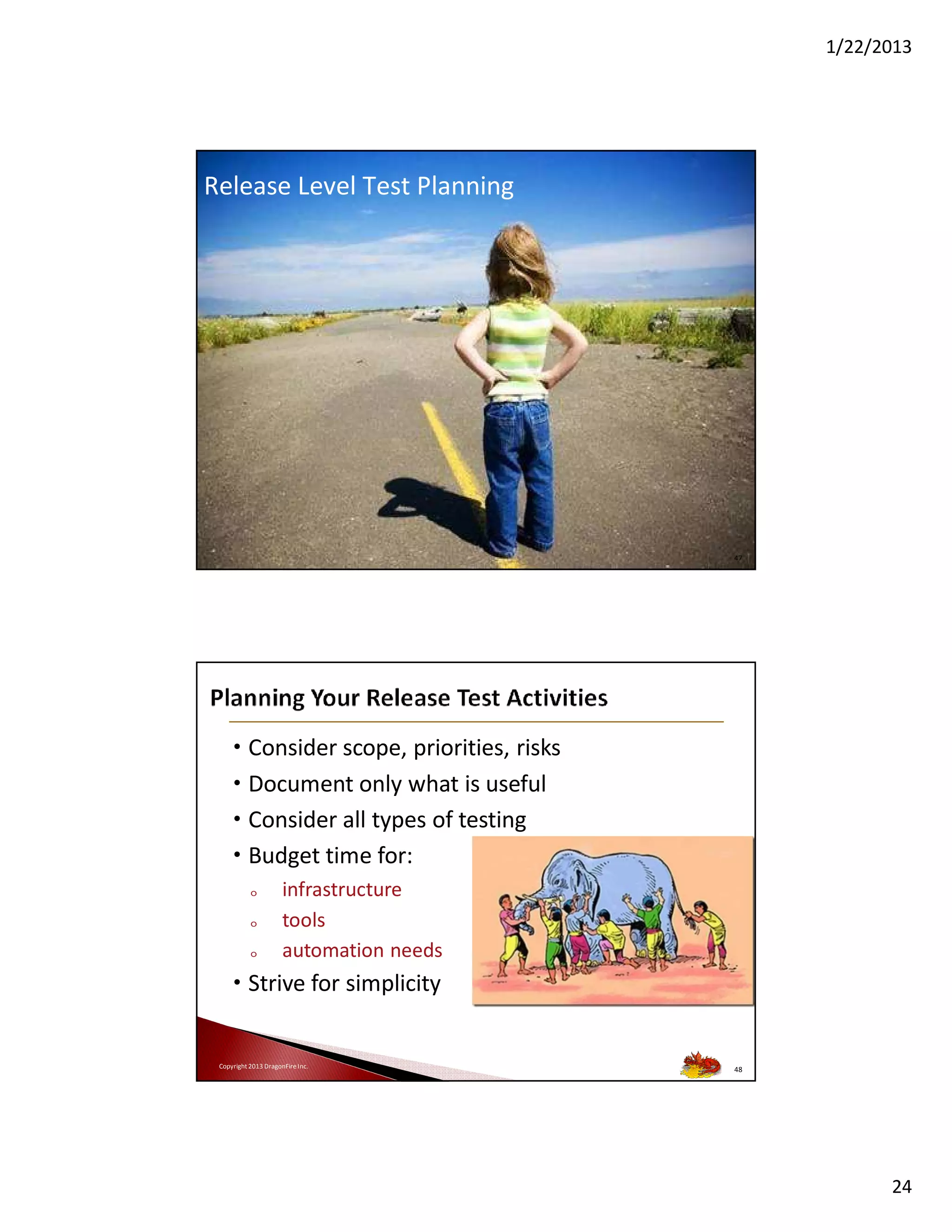

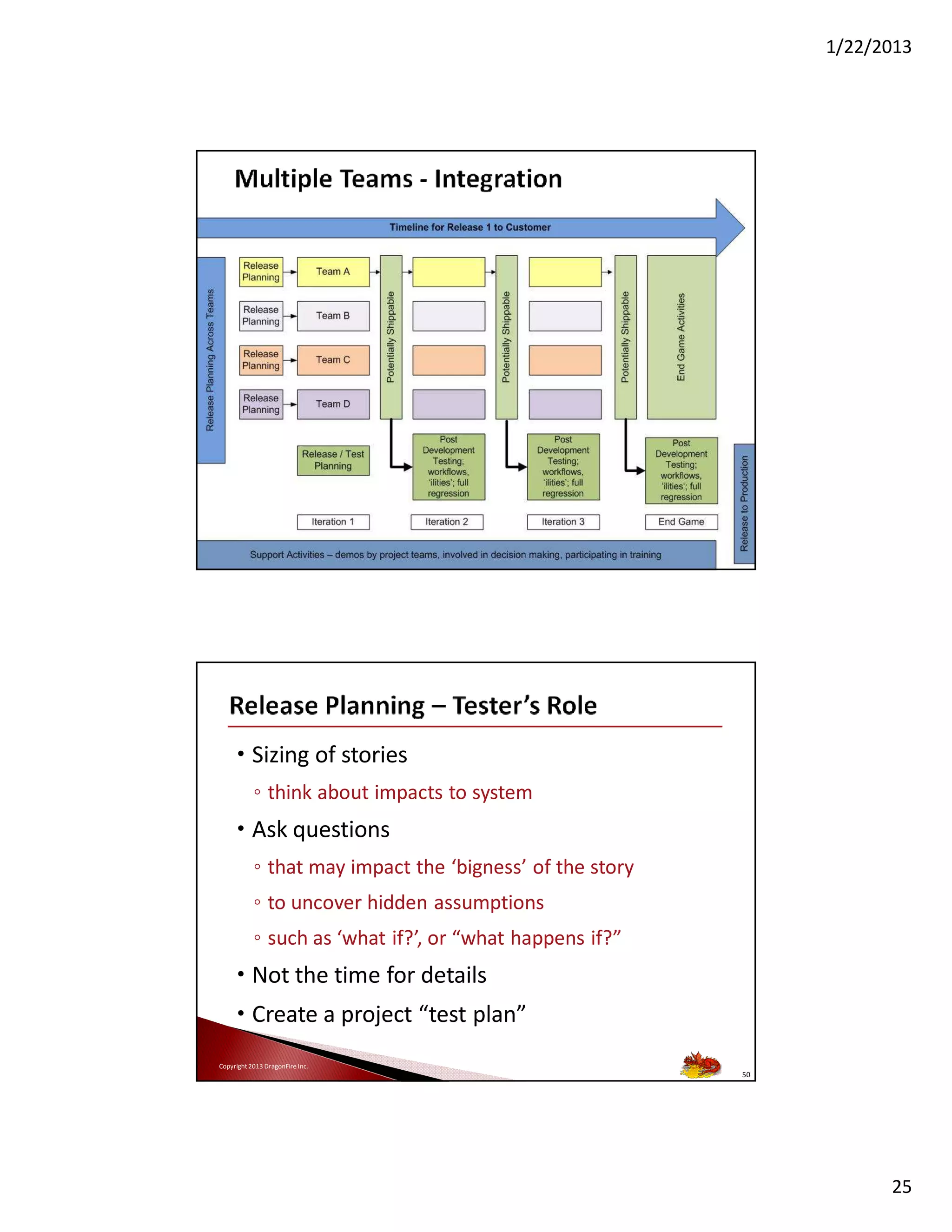

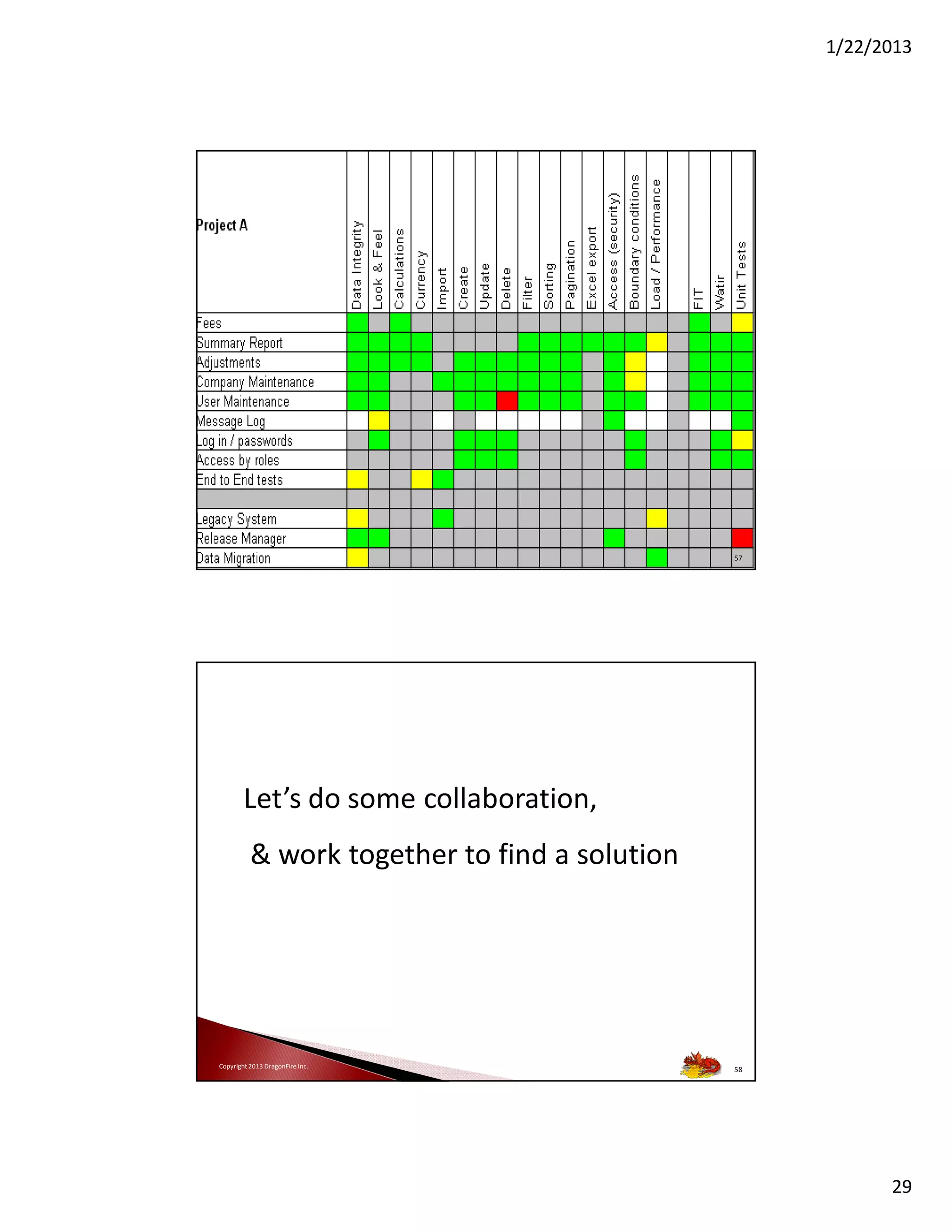

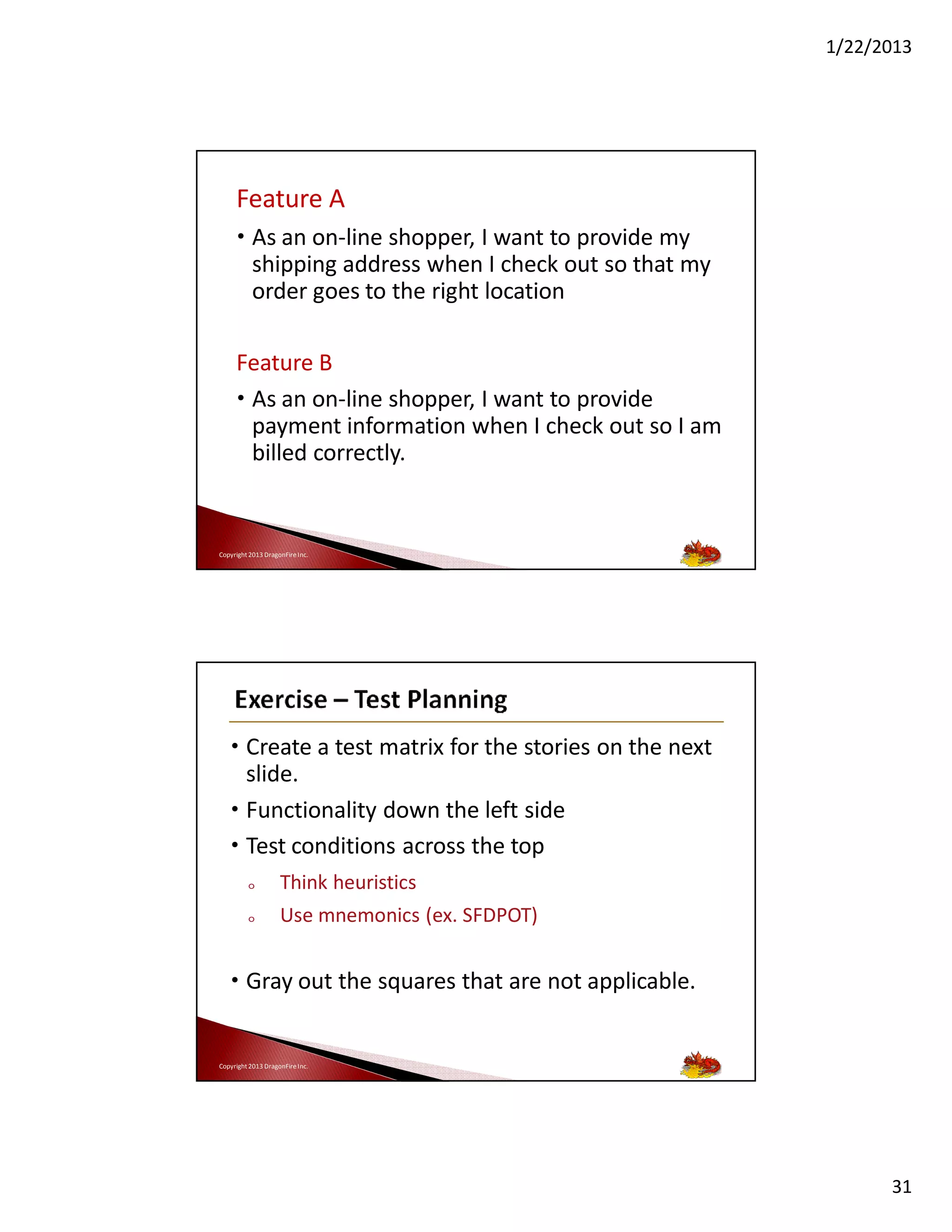

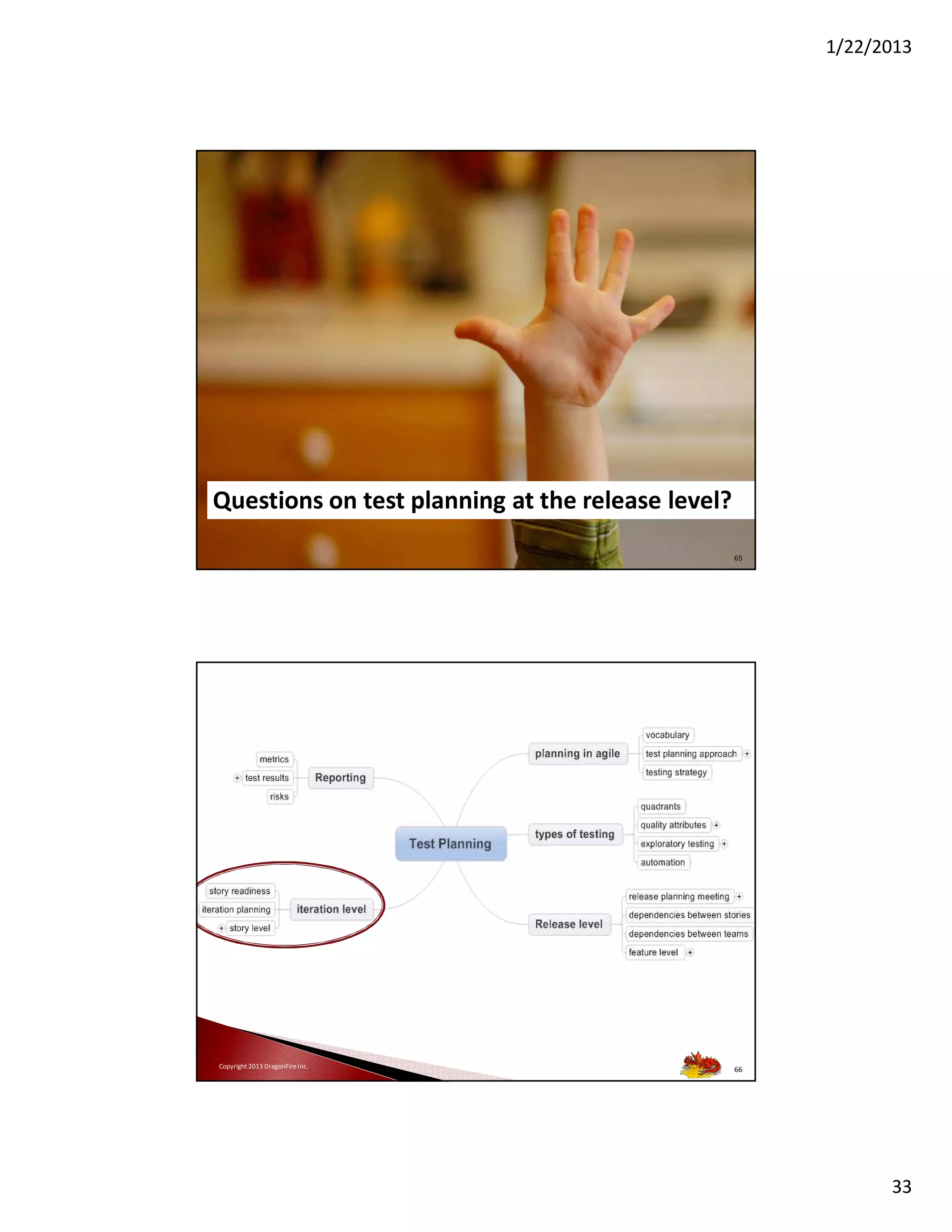

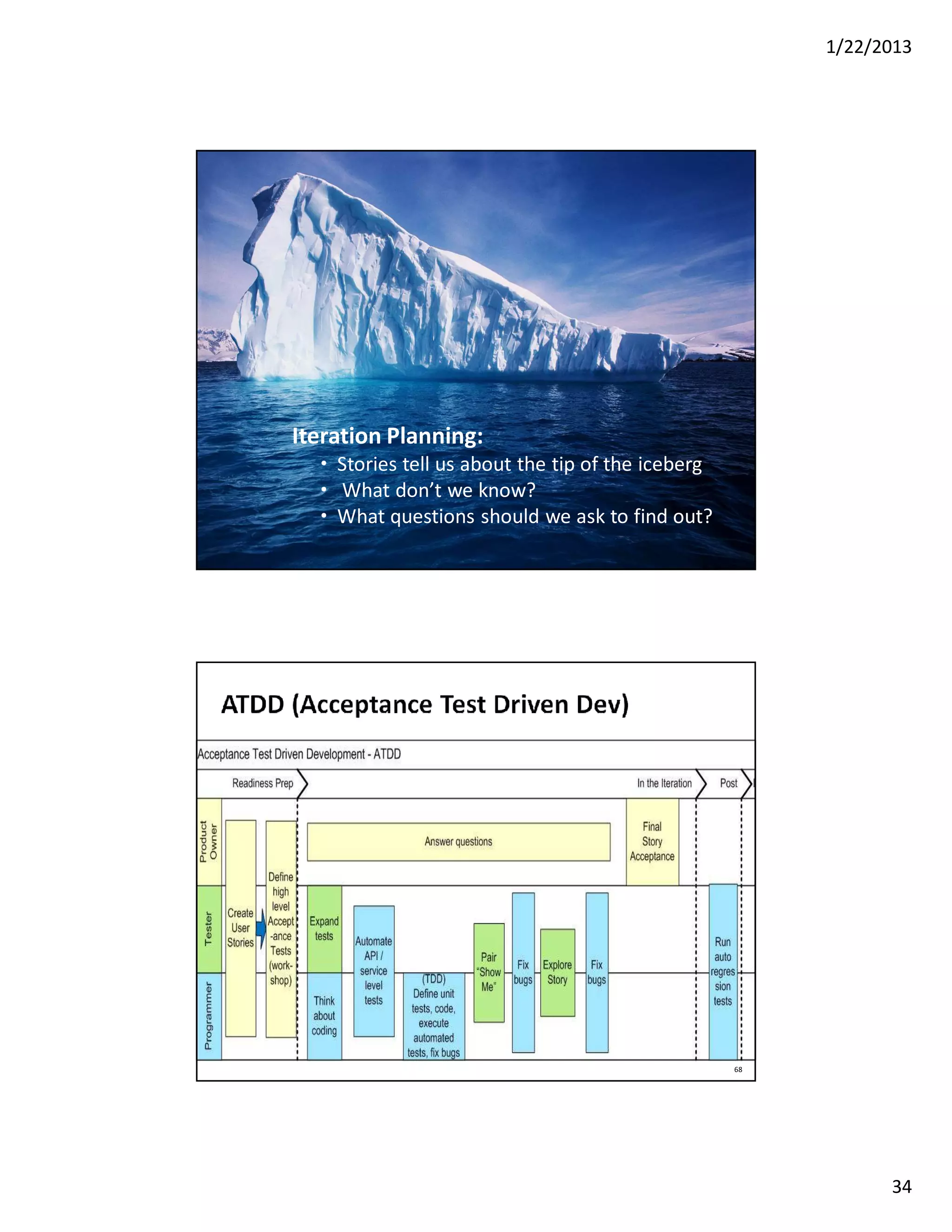

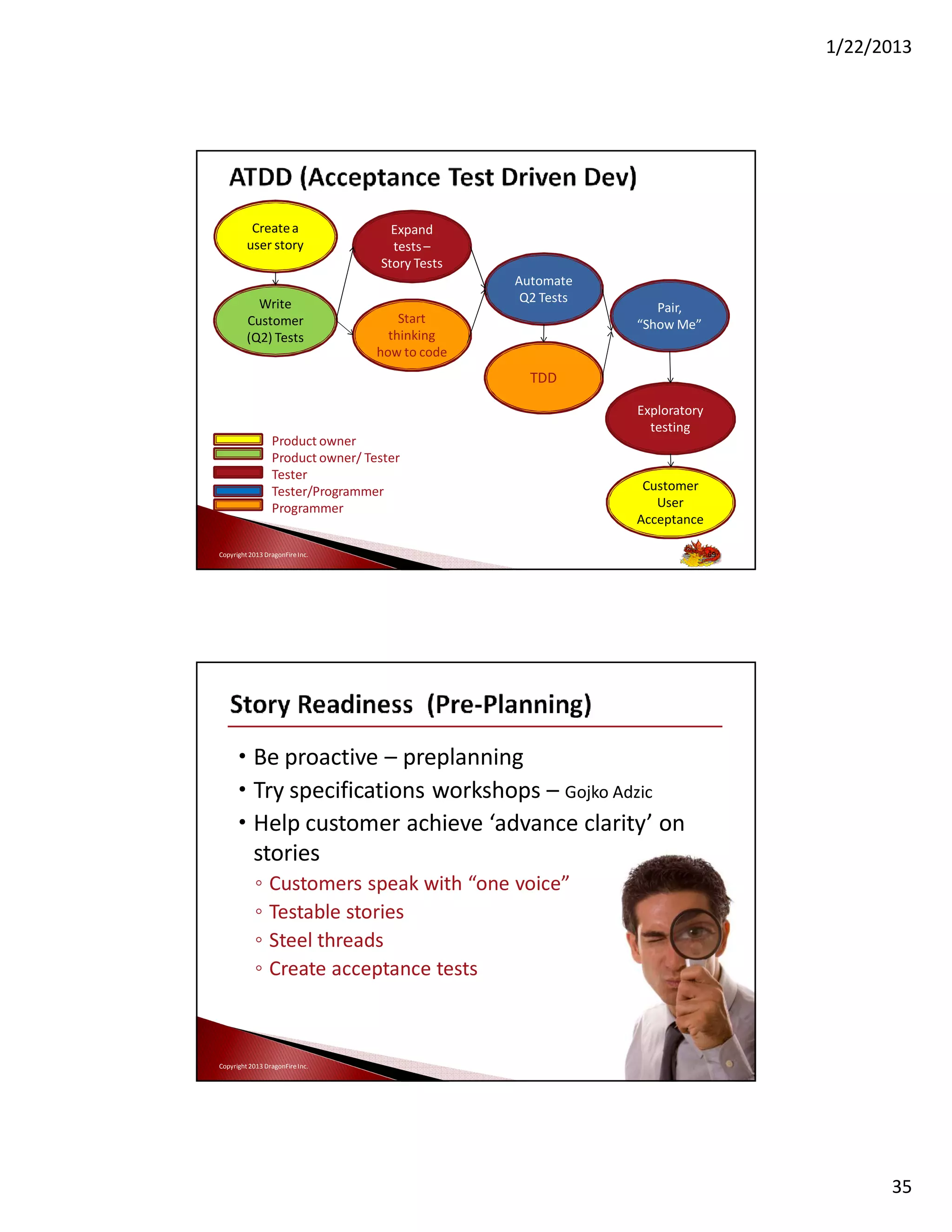

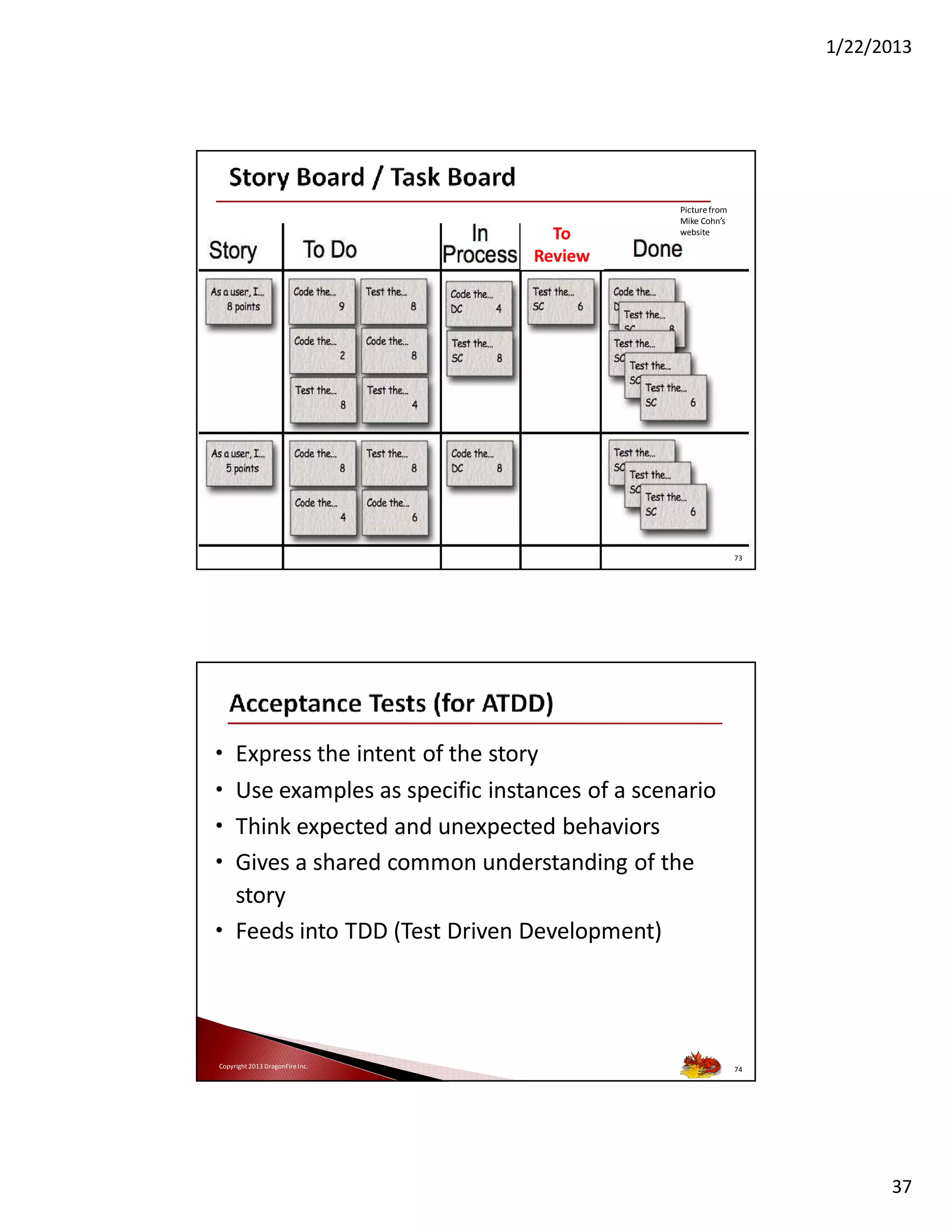

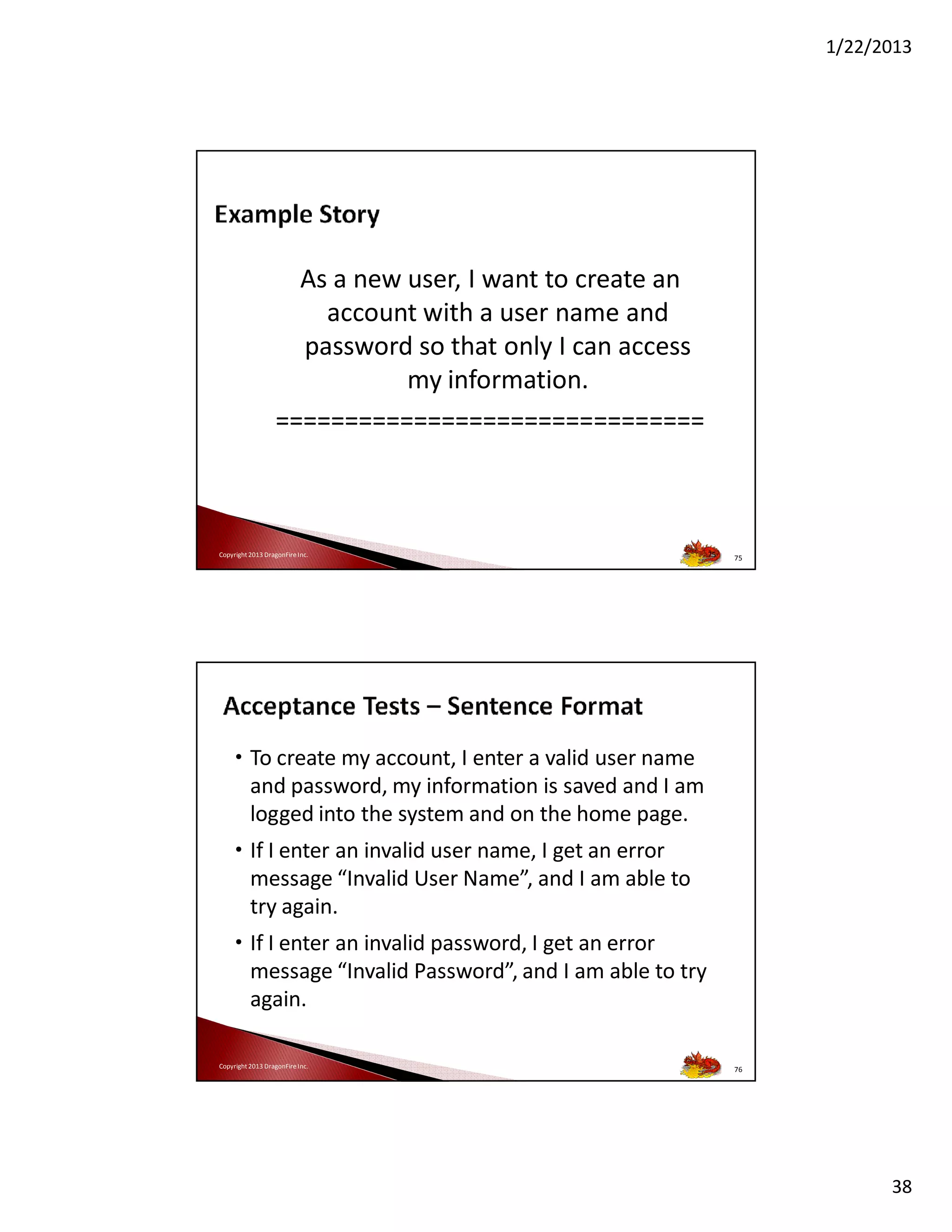

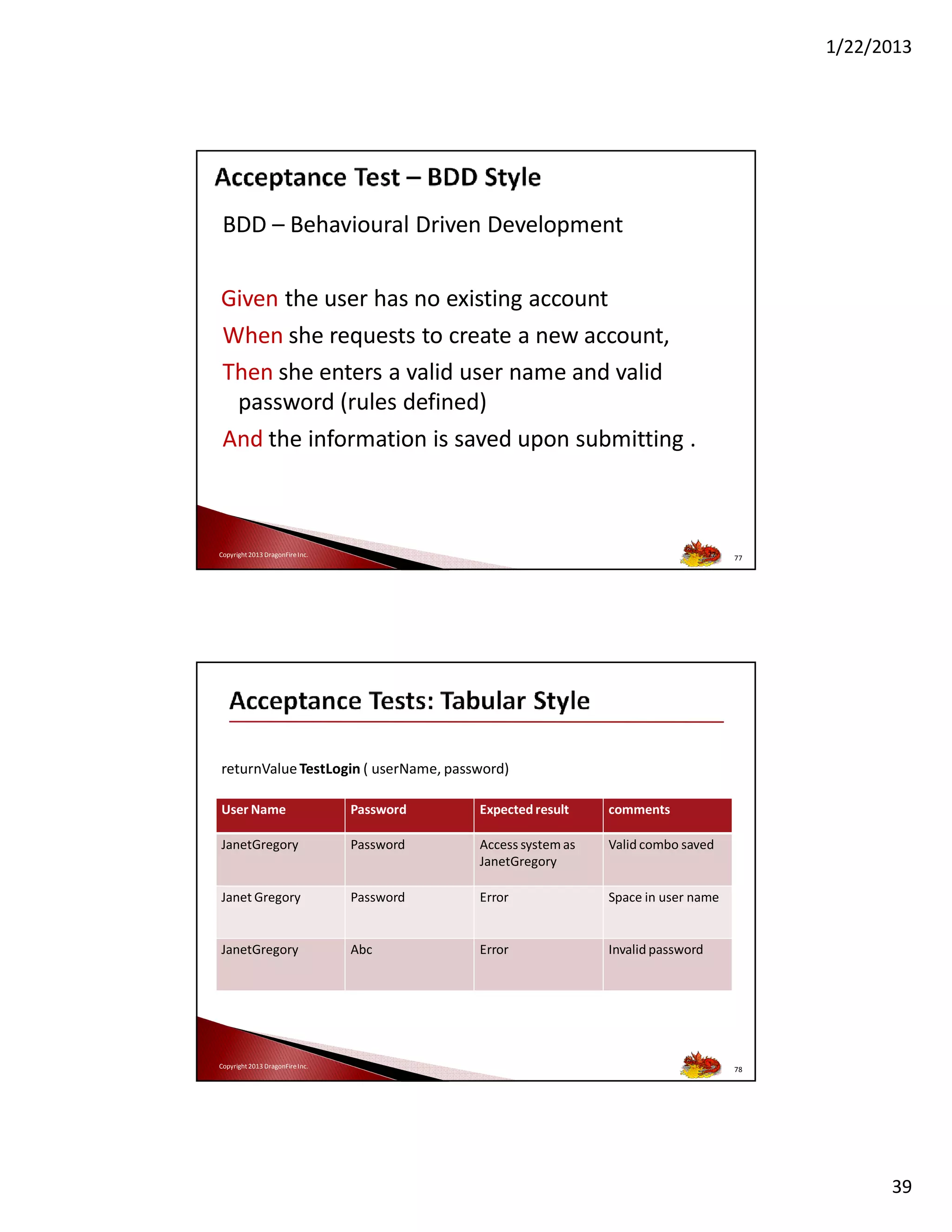

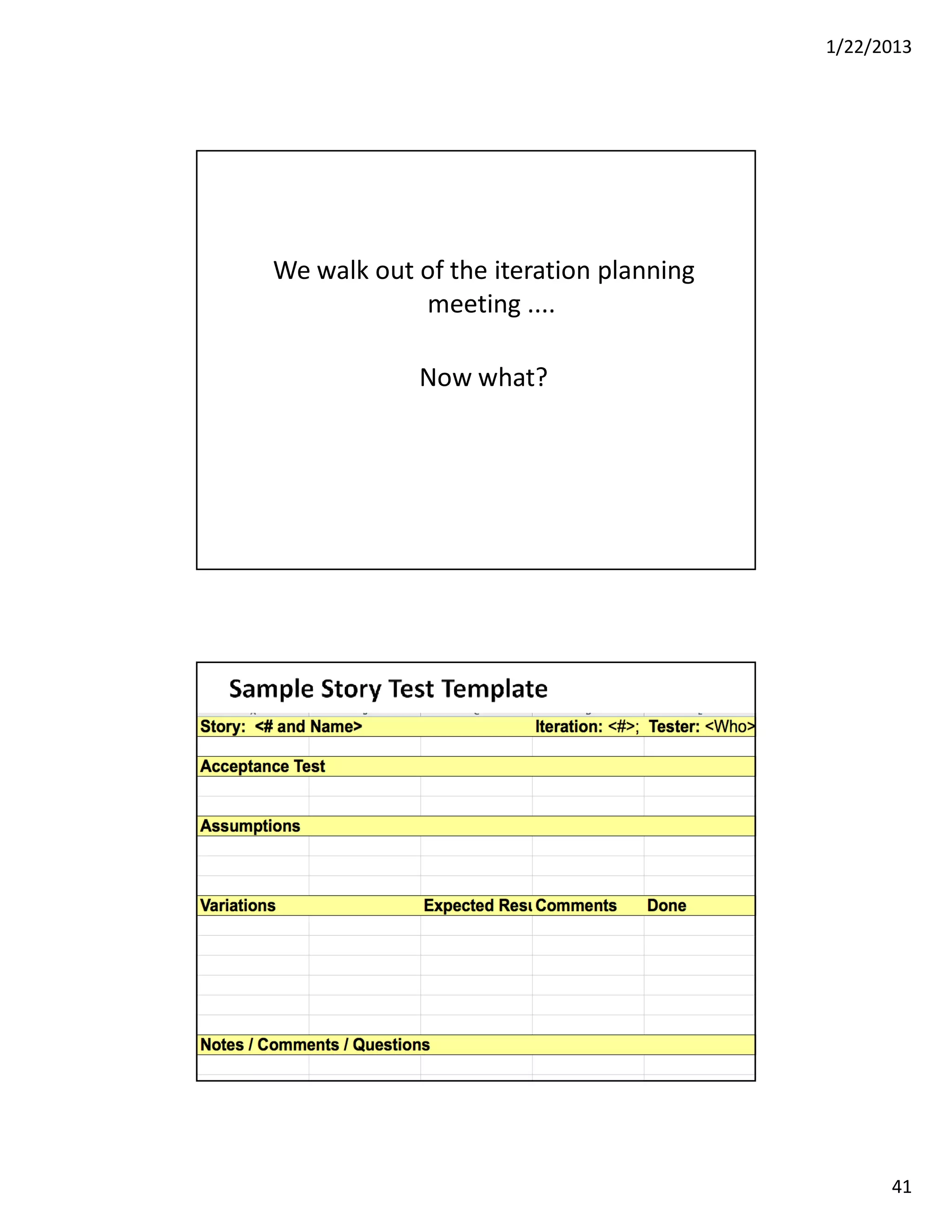

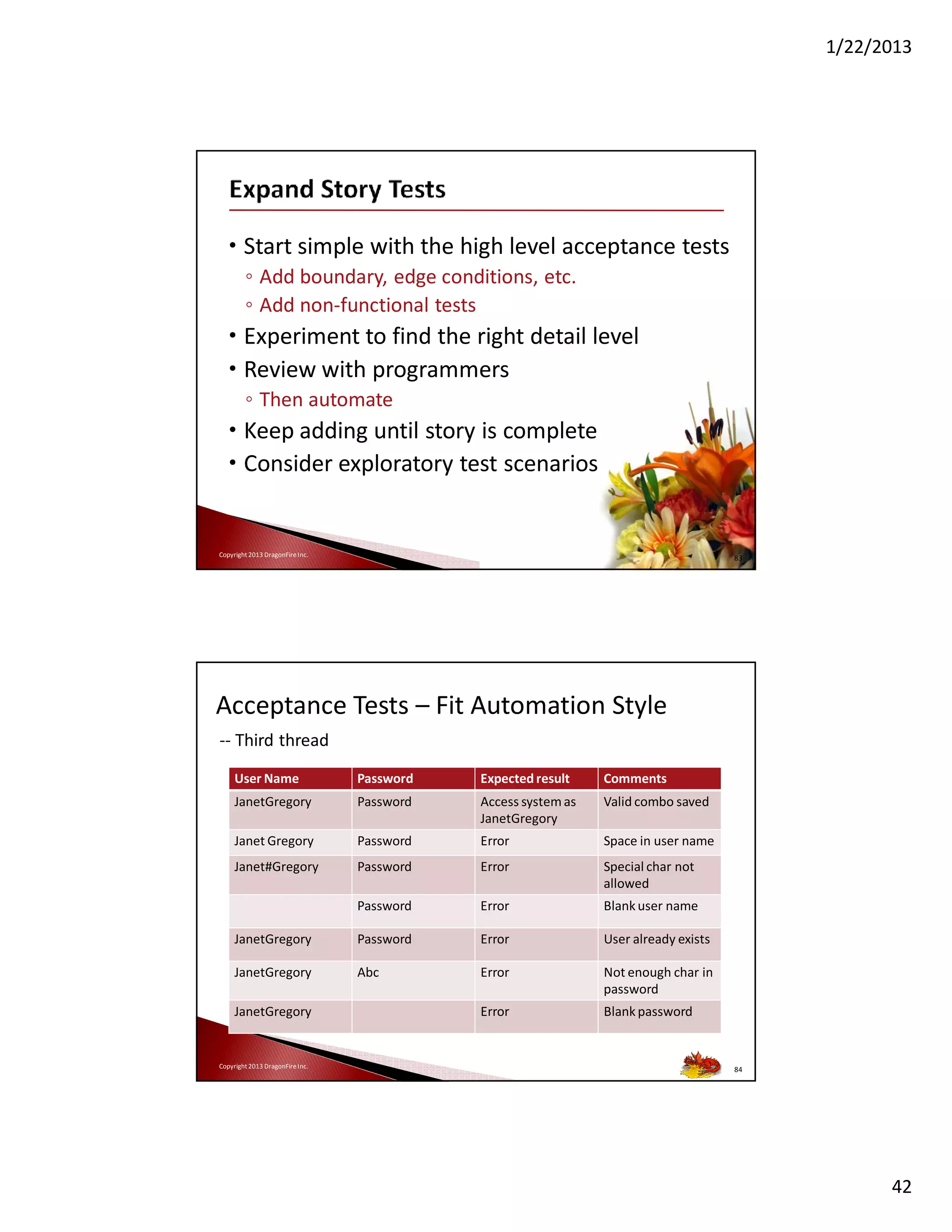

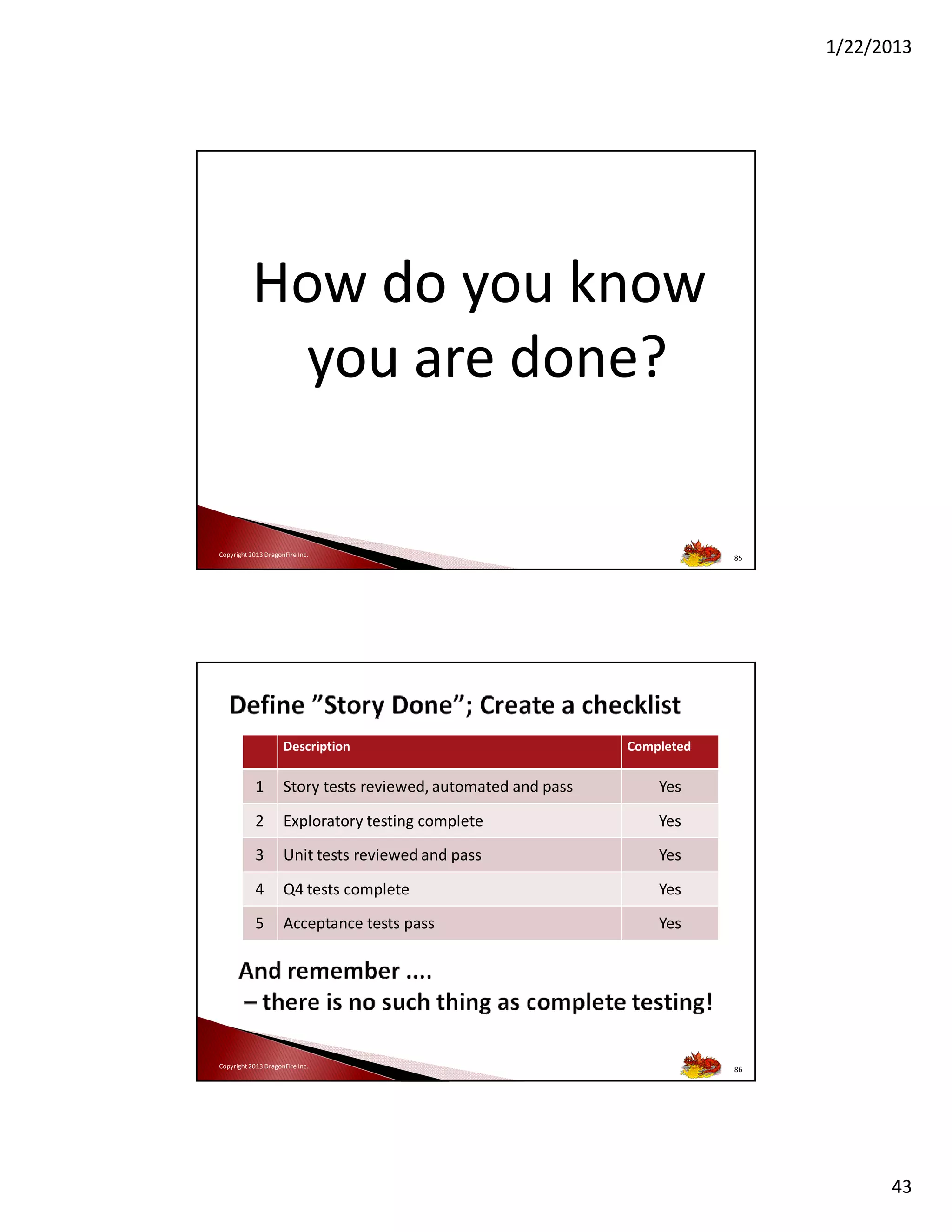

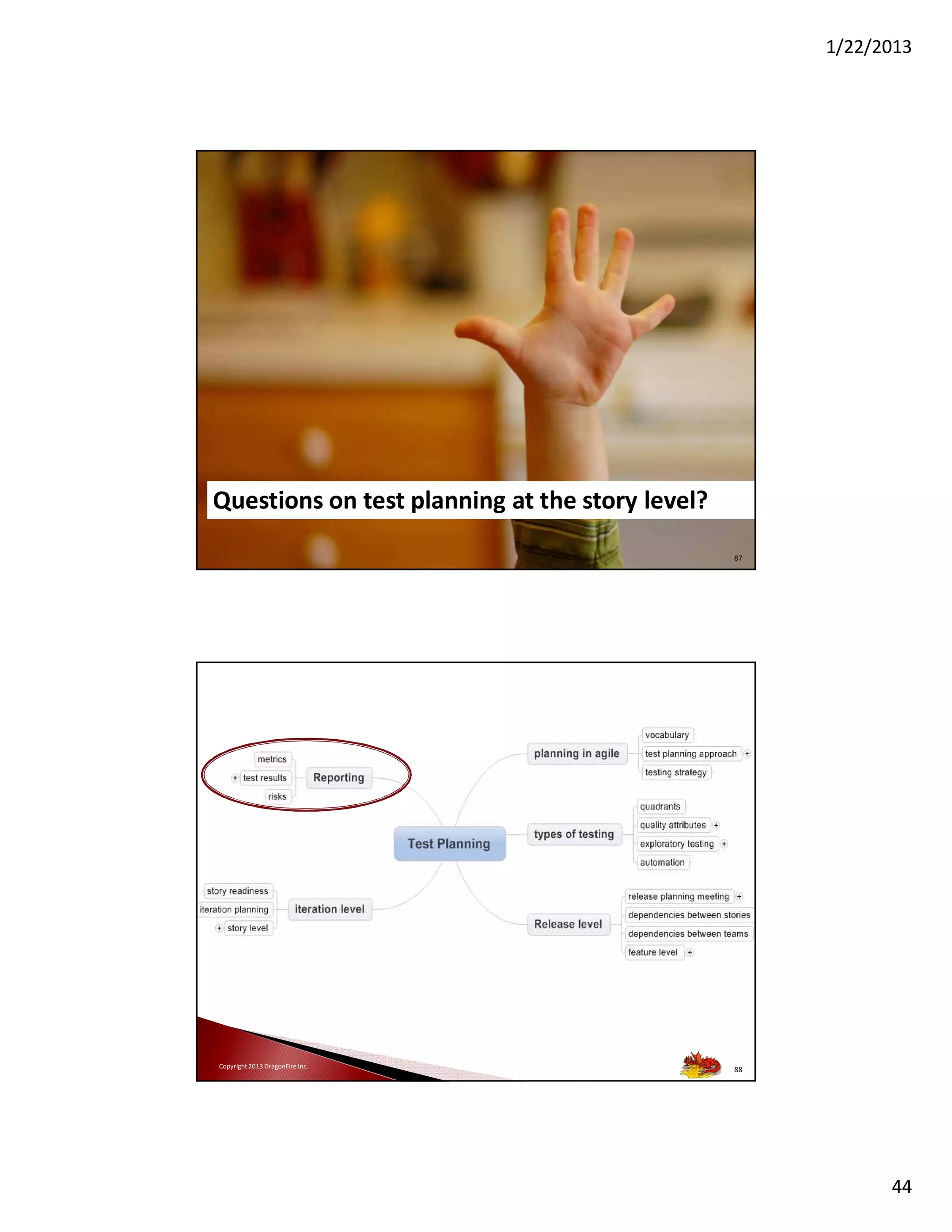

The document discusses agile testing methodologies, emphasizing collaboration among testers, developers, and customers in test planning and execution. It highlights adapting the testing process based on feedback from each iteration, classifying test types with the agile testing quadrants, and integrating acceptance tests to ensure quality throughout development. It also offers guidance on building a structured testing strategy that combines automation and exploratory testing to improve product delivery.