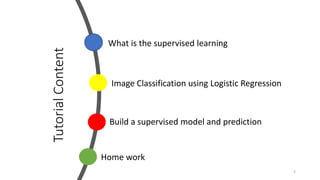

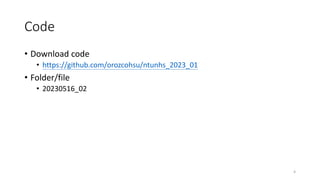

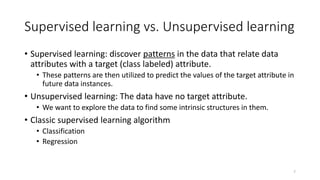

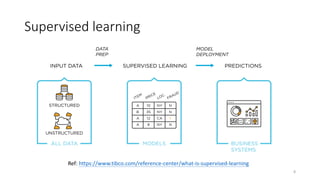

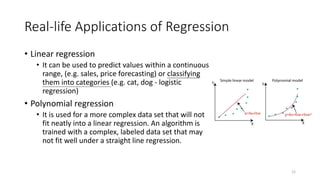

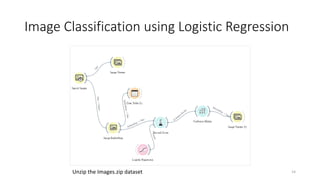

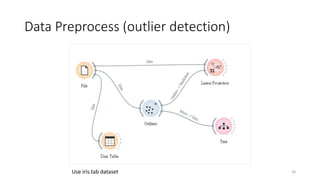

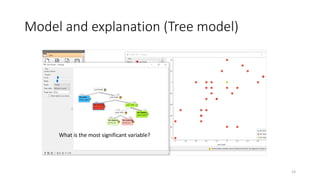

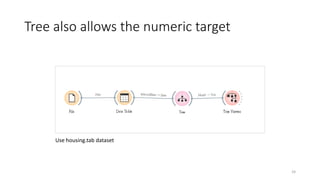

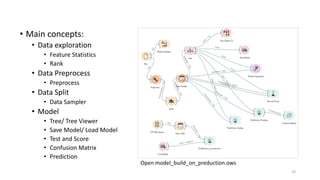

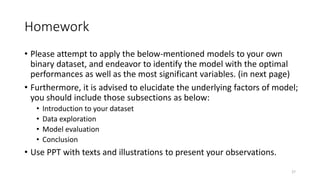

The document outlines the key concepts of supervised learning: its definition, how it differs from unsupervised learning, and its two main tasks, classification and regression. It provides tutorials on image classification using logistic regression and discusses real-life applications such as spam detection and price forecasting. It also includes homework assignments that ask the reader to implement different models on binary datasets, with a focus on evaluating the models and identifying significant variables.
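
To make the logistic-regression image classification mentioned above concrete, here is a minimal sketch in plain Python. It is not the tutorial's own code. It assumes a toy dataset of synthetic 2x2 grayscale "images" (dark vs. bright), flattened to four pixel values each, and trains a binary logistic regression with batch gradient descent. All function names (`make_toy_images`, `train_logistic_regression`, `predict`, `accuracy`) and the hyperparameters are hypothetical choices made for illustration.

```python
import math
import random


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def make_toy_images(n_per_class, seed=0):
    """Build synthetic 2x2 images flattened to 4 pixels (an assumption,
    standing in for a real image dataset). Label 0 = dark, 1 = bright."""
    rng = random.Random(seed)
    images, labels = [], []
    for label, (lo, hi) in enumerate([(0.0, 0.3), (0.7, 1.0)]):
        for _ in range(n_per_class):
            images.append([rng.uniform(lo, hi) for _ in range(4)])
            labels.append(label)
    return images, labels


def train_logistic_regression(X, y, lr=0.5, epochs=500):
    """Fit weights and bias by batch gradient descent on the log loss."""
    n, n_features = len(X), len(X[0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            p = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b)
            err = p - yi  # derivative of the log loss w.r.t. the logit
            for j in range(n_features):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    """Return class 1 if the predicted probability is at least 0.5."""
    p = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
    return 1 if p >= 0.5 else 0


def accuracy(w, b, X, y):
    """Fraction of examples classified correctly."""
    correct = sum(predict(w, b, xi) == yi for xi, yi in zip(X, y))
    return correct / len(X)


# Train on one synthetic sample and evaluate on a separately seeded one.
X_train, y_train = make_toy_images(20, seed=0)
X_test, y_test = make_toy_images(20, seed=1)
w, b = train_logistic_regression(X_train, y_train)
print("train accuracy:", accuracy(w, b, X_train, y_train))
print("test accuracy:", accuracy(w, b, X_test, y_test))
```

Evaluating on a separately generated sample mirrors the model-evaluation focus of the homework. With real images, the same steps apply after flattening each image into a pixel vector, though a library implementation would normally replace the hand-written training loop.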