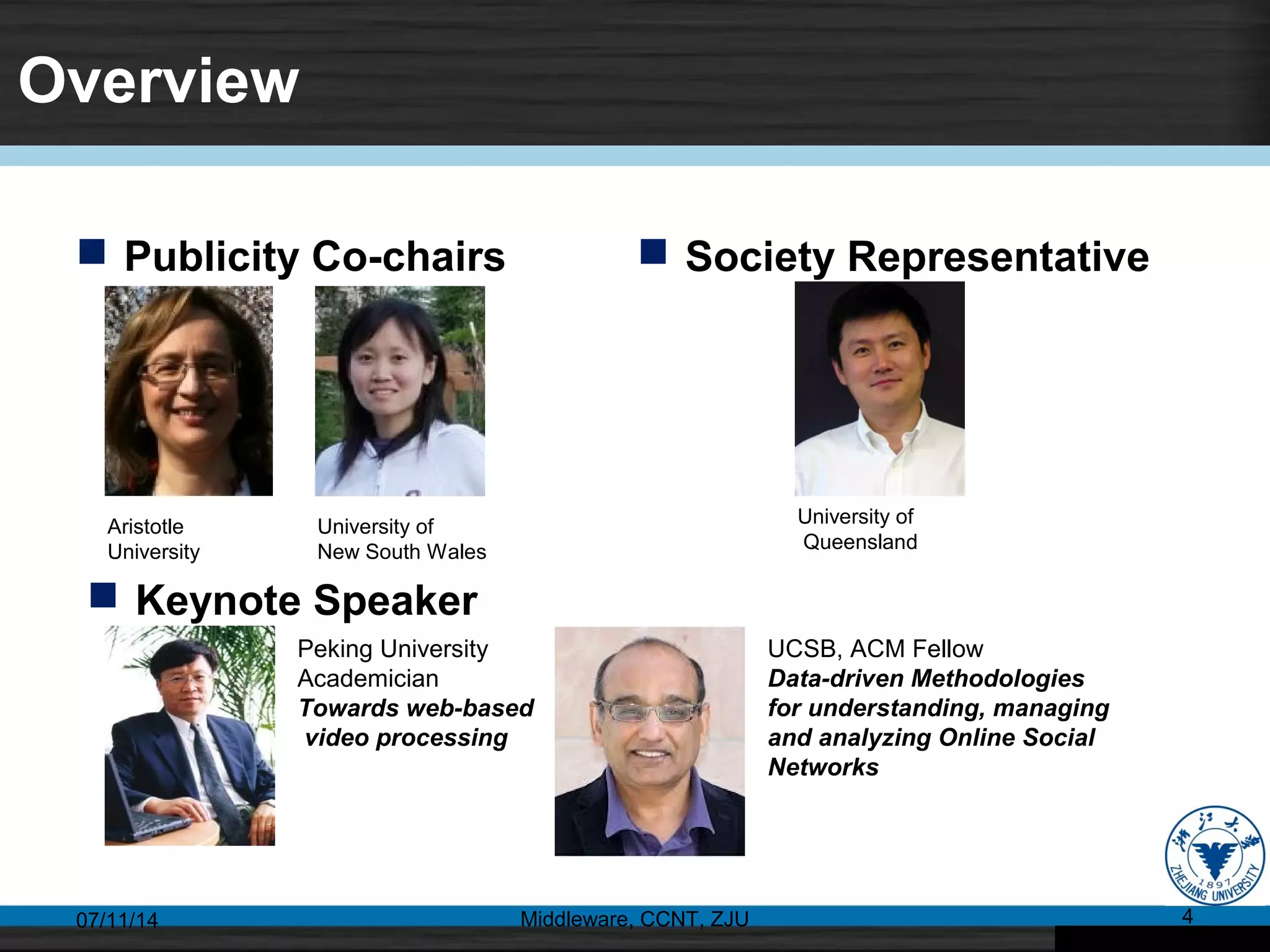

The 14th International Conference on Web Information Systems Engineering (WISE 2013) took place in Nanjing, China, from October 13 to 15, 2013. The program featured 48 research papers, accepted at a rate of 24%, with contributions from 38 countries. Keynotes addressed topics ranging from web-based video processing to big data security, and sessions covered areas such as web mining, recommendation systems, and social networks. The conference also presented new algorithms and frameworks for improving web applications across multiple domains.