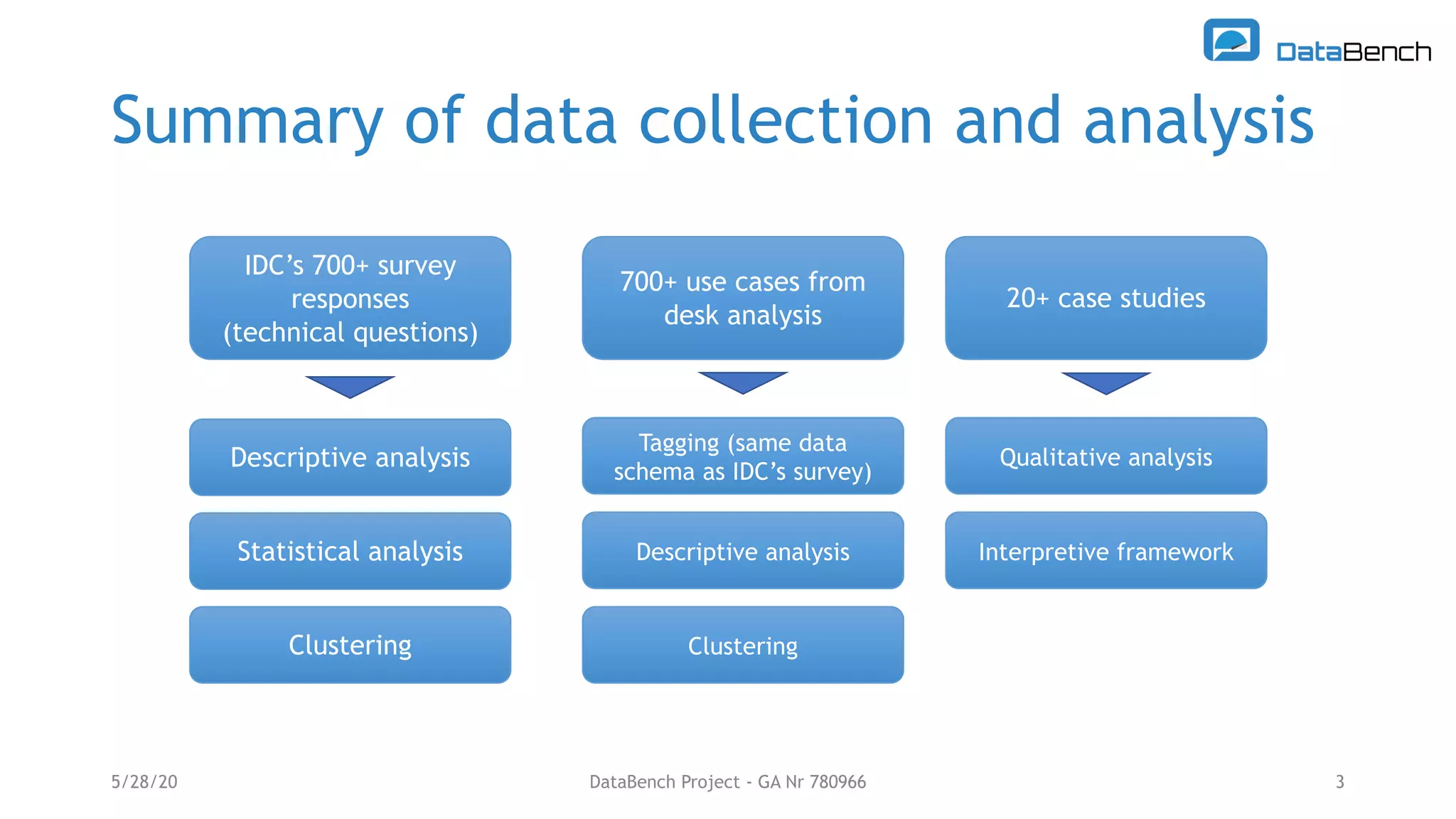

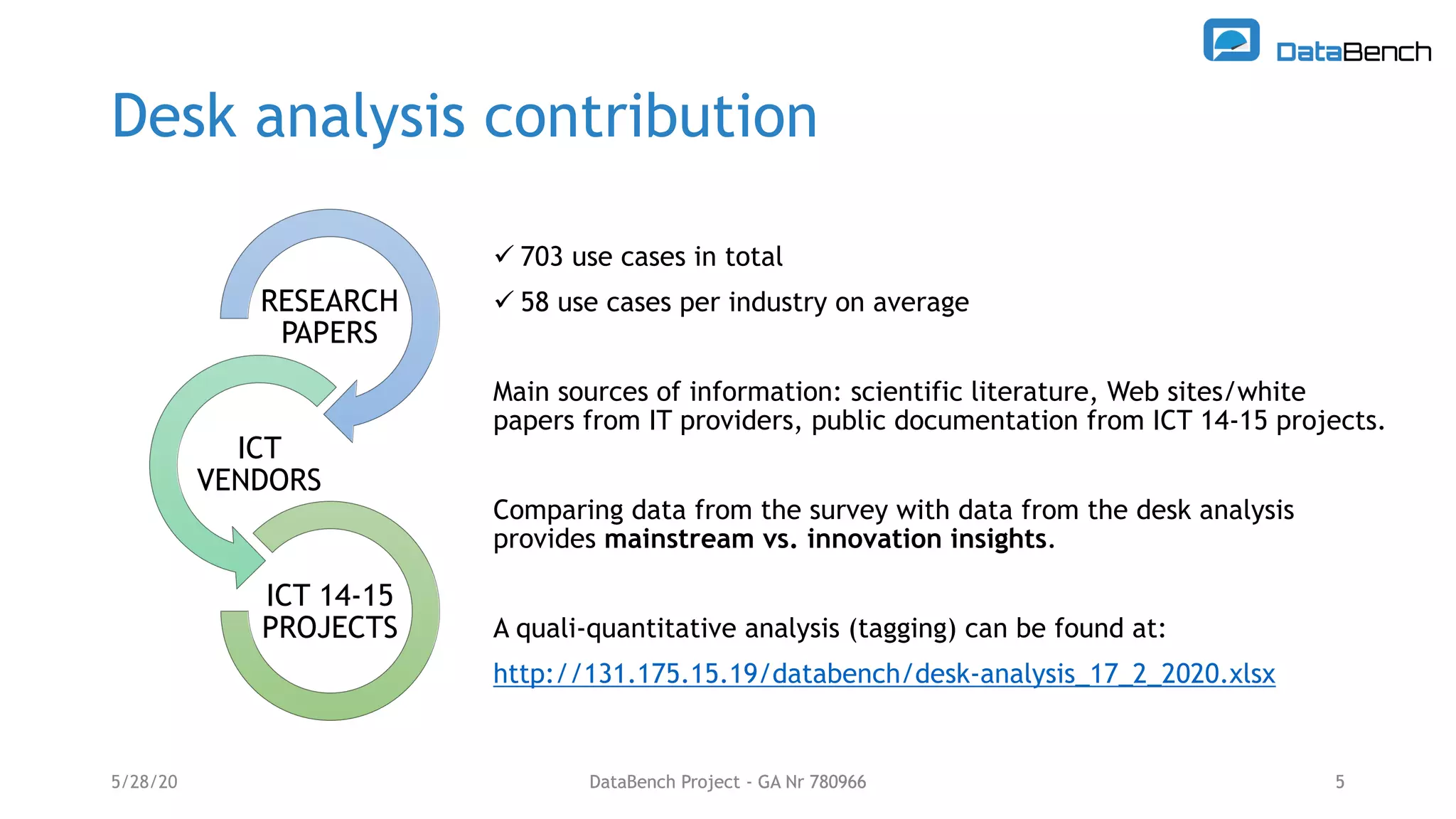

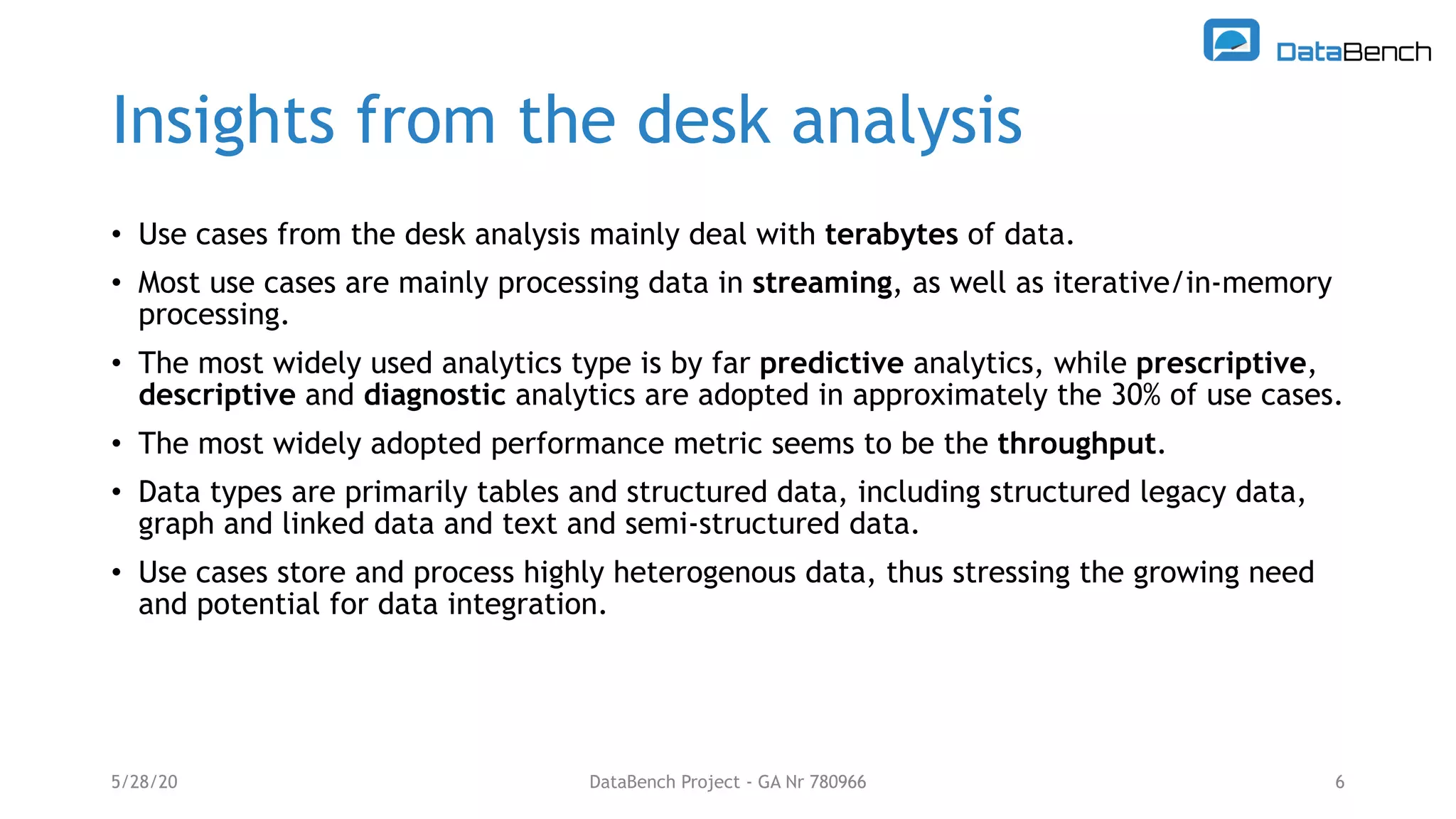

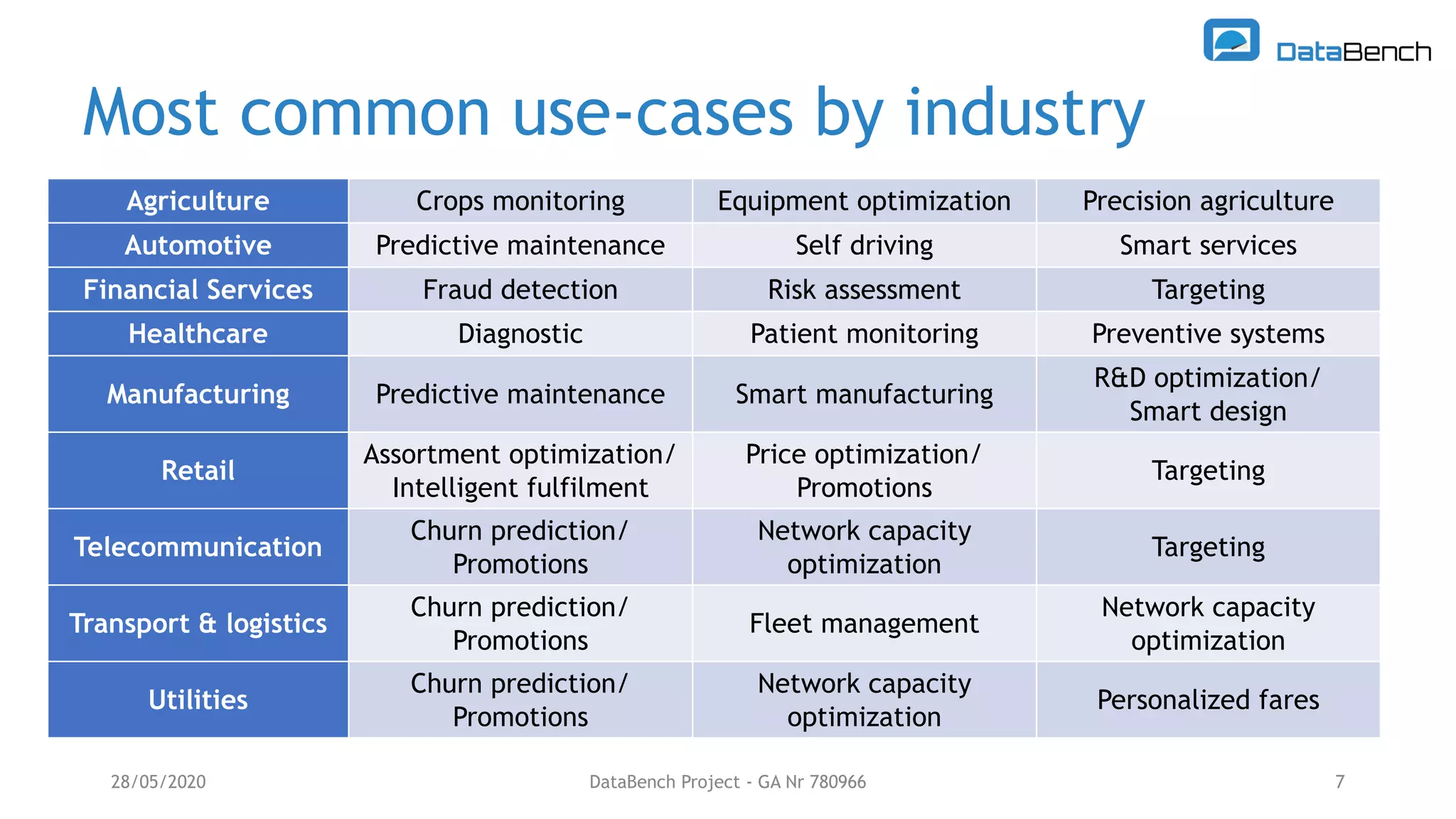

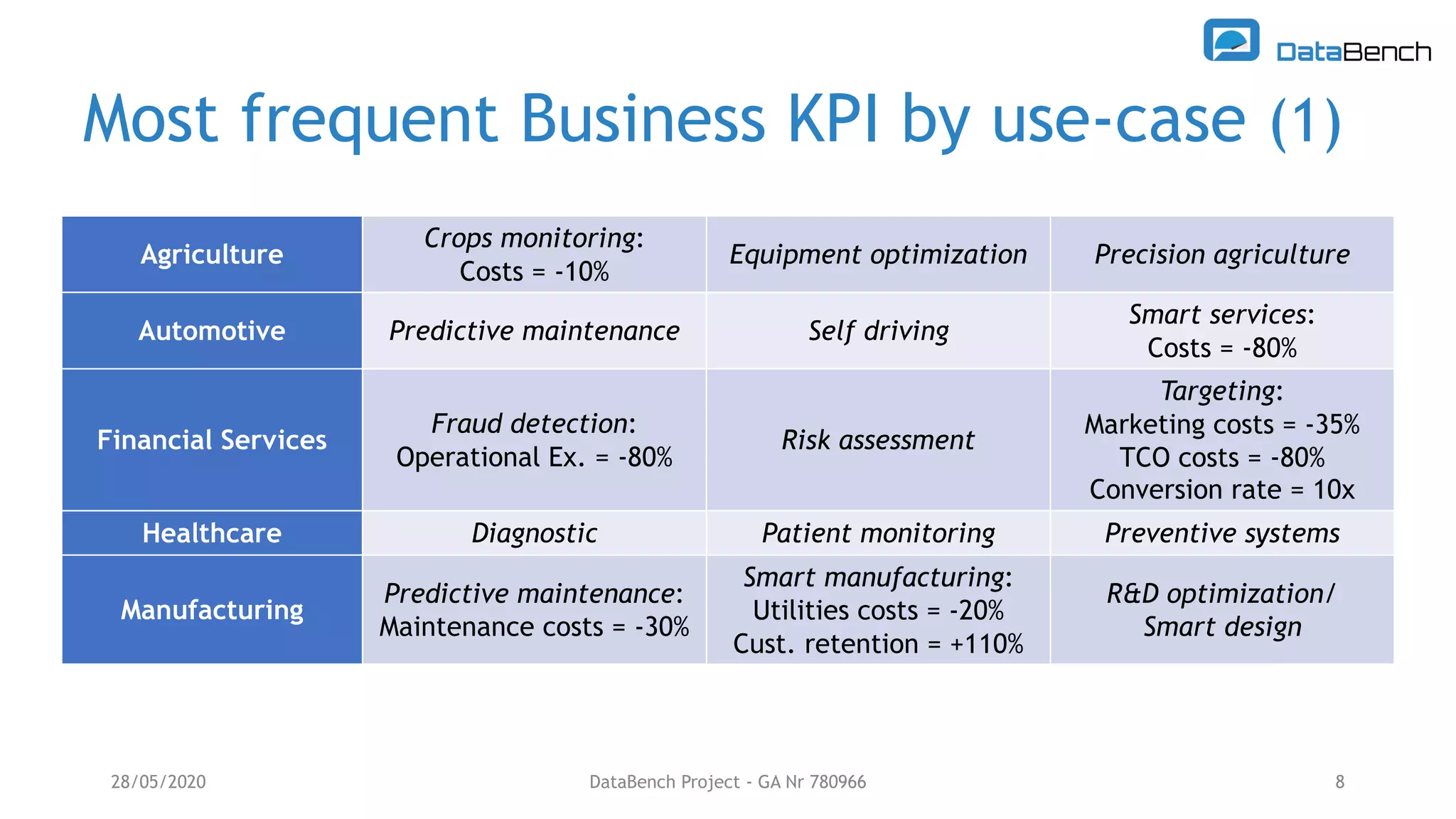

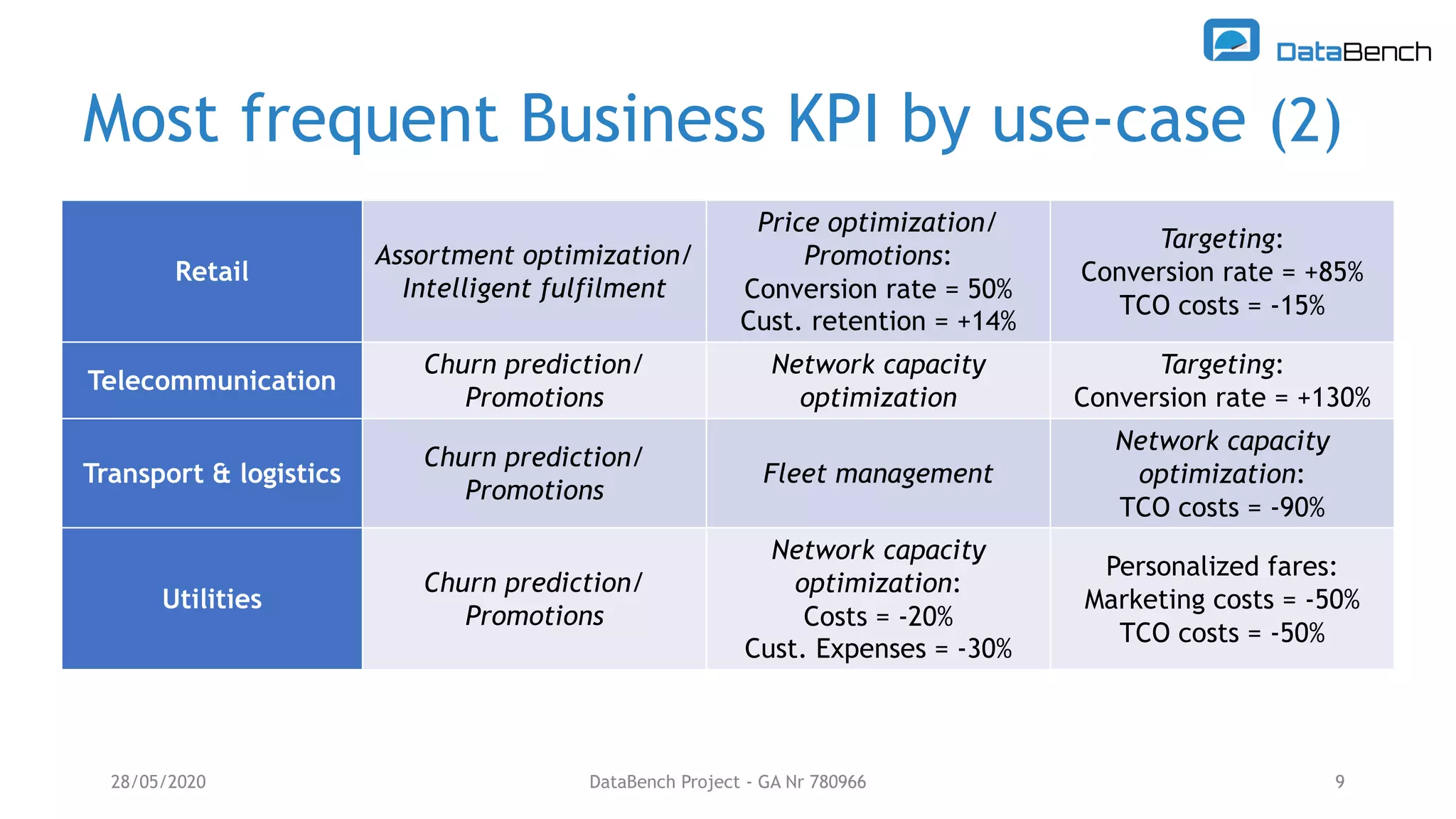

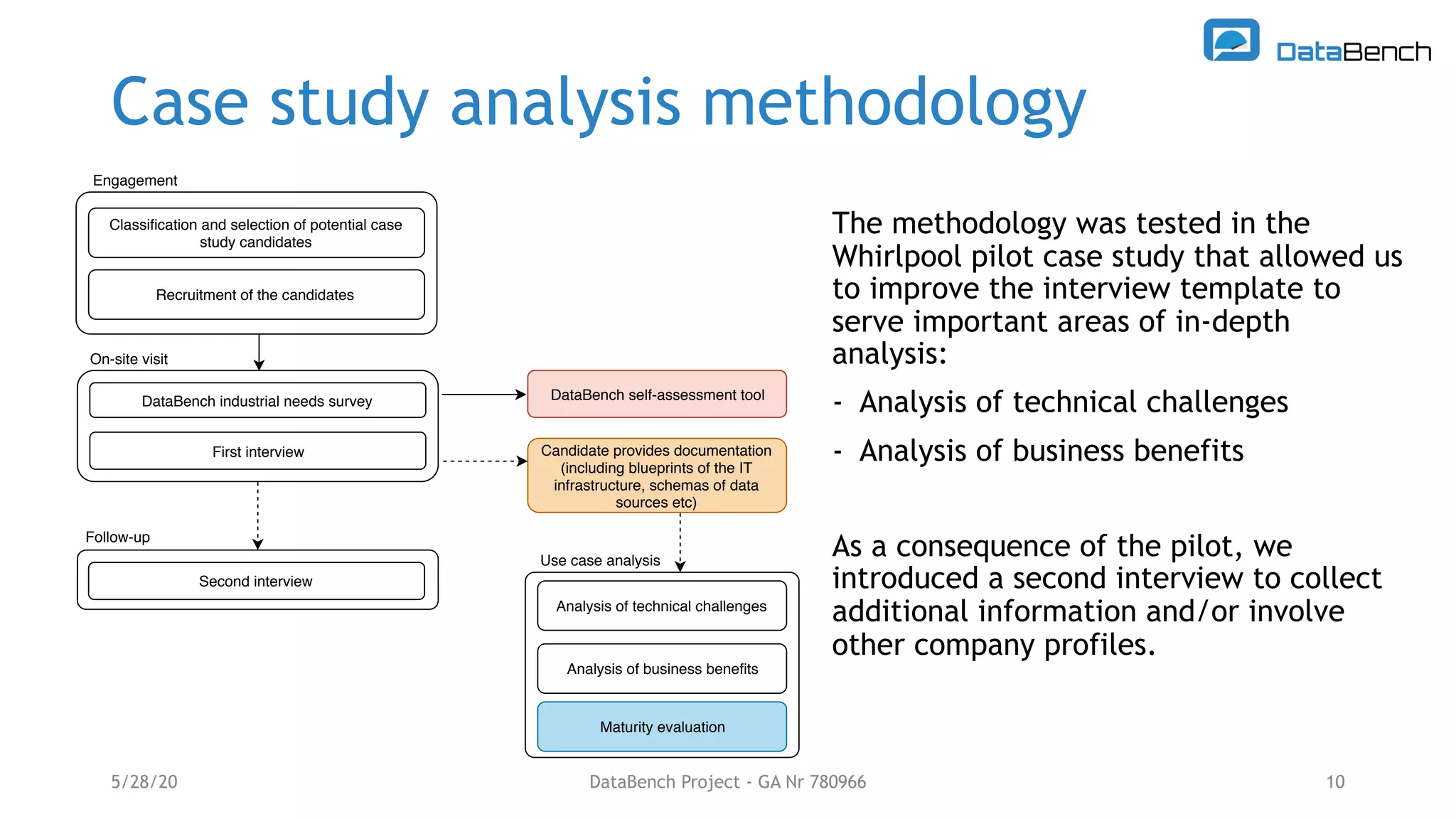

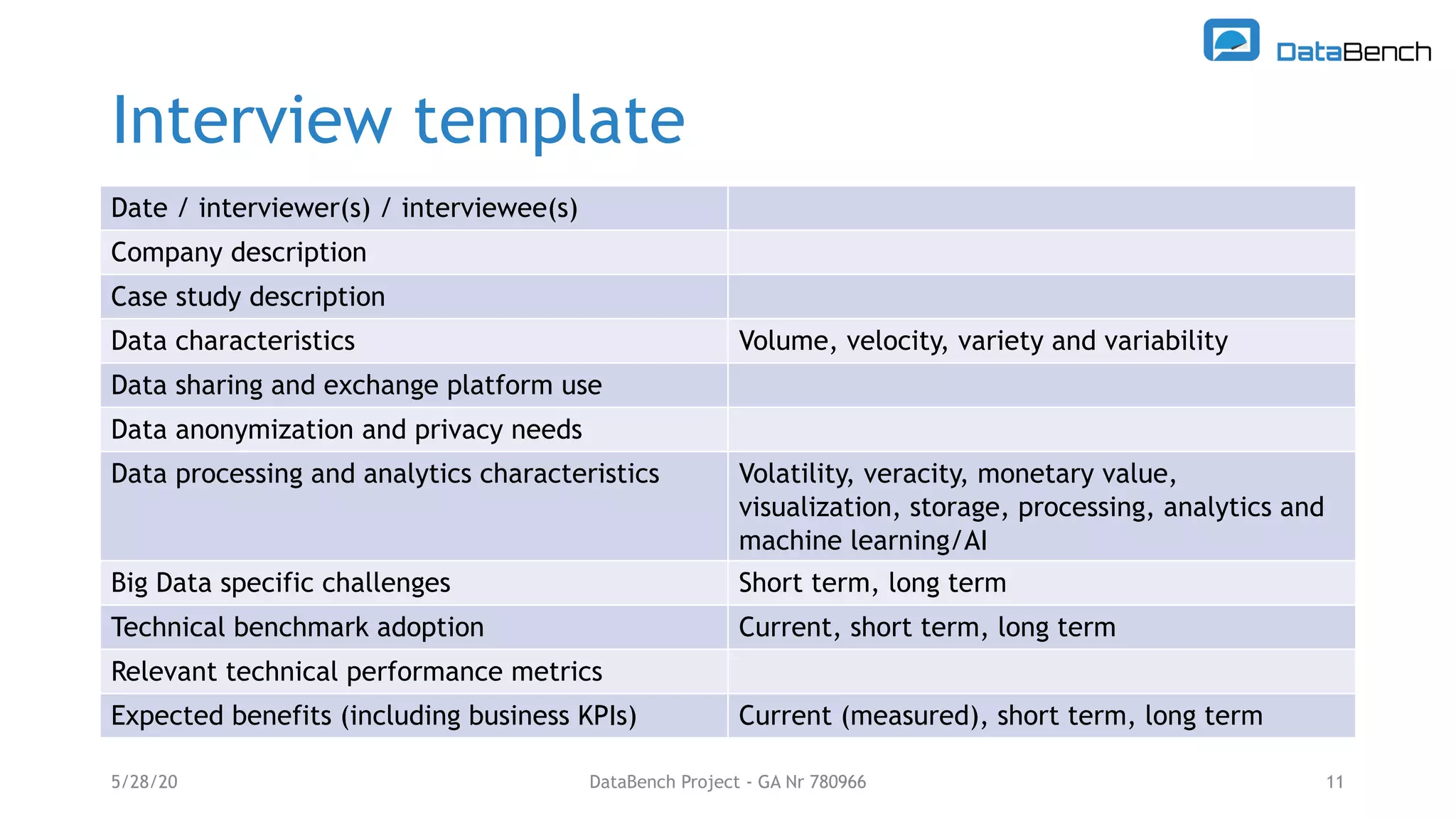

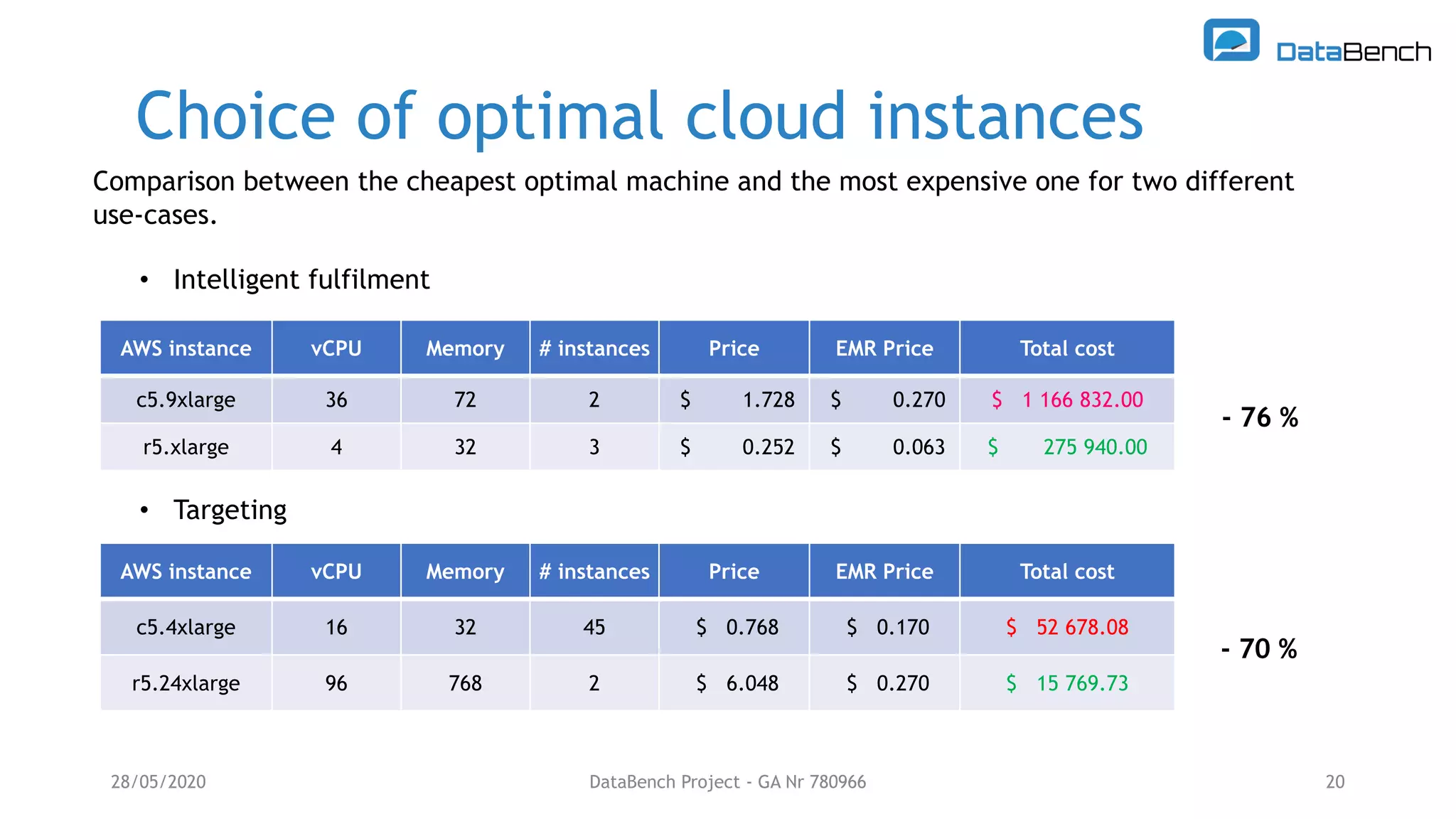

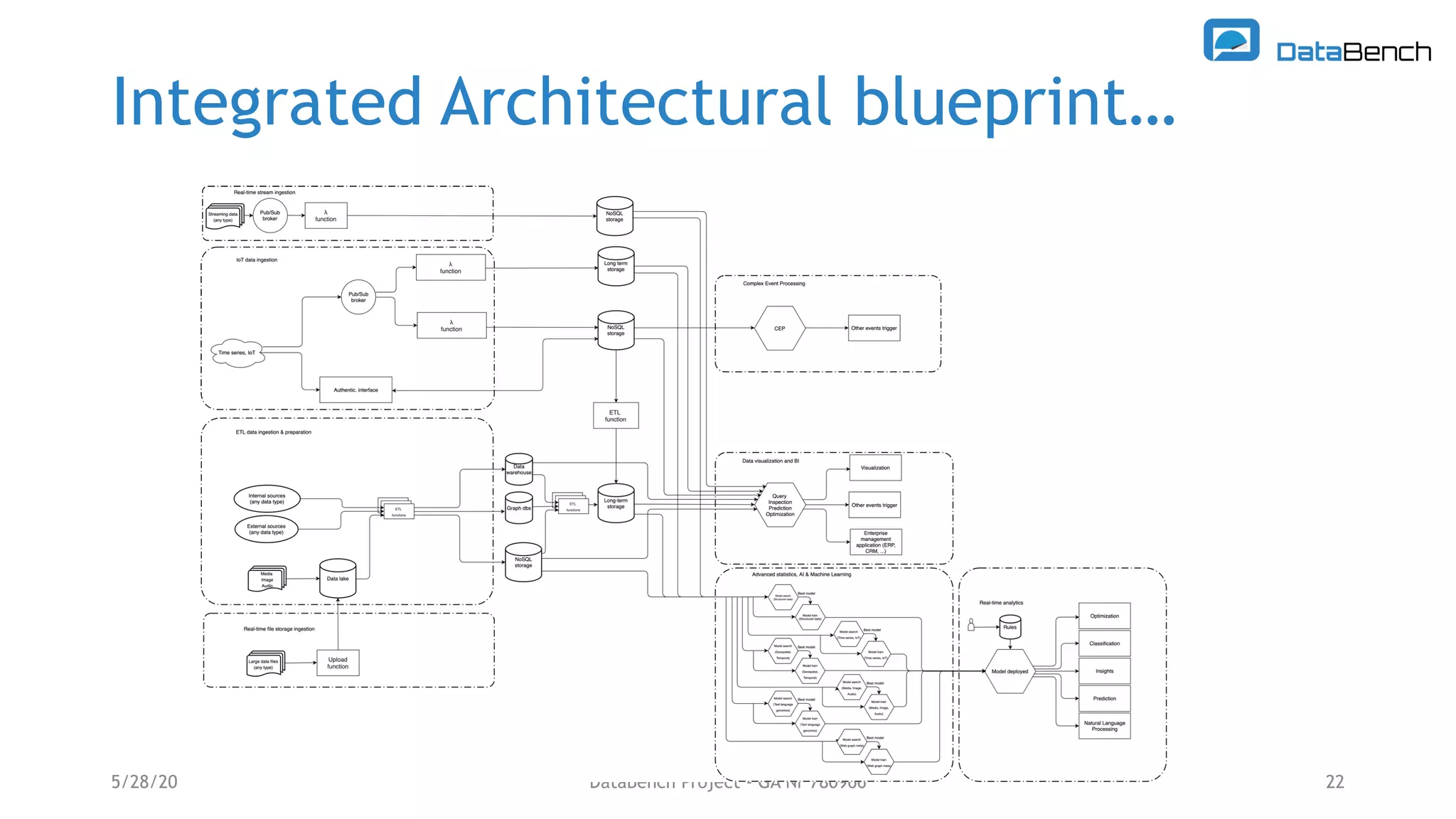

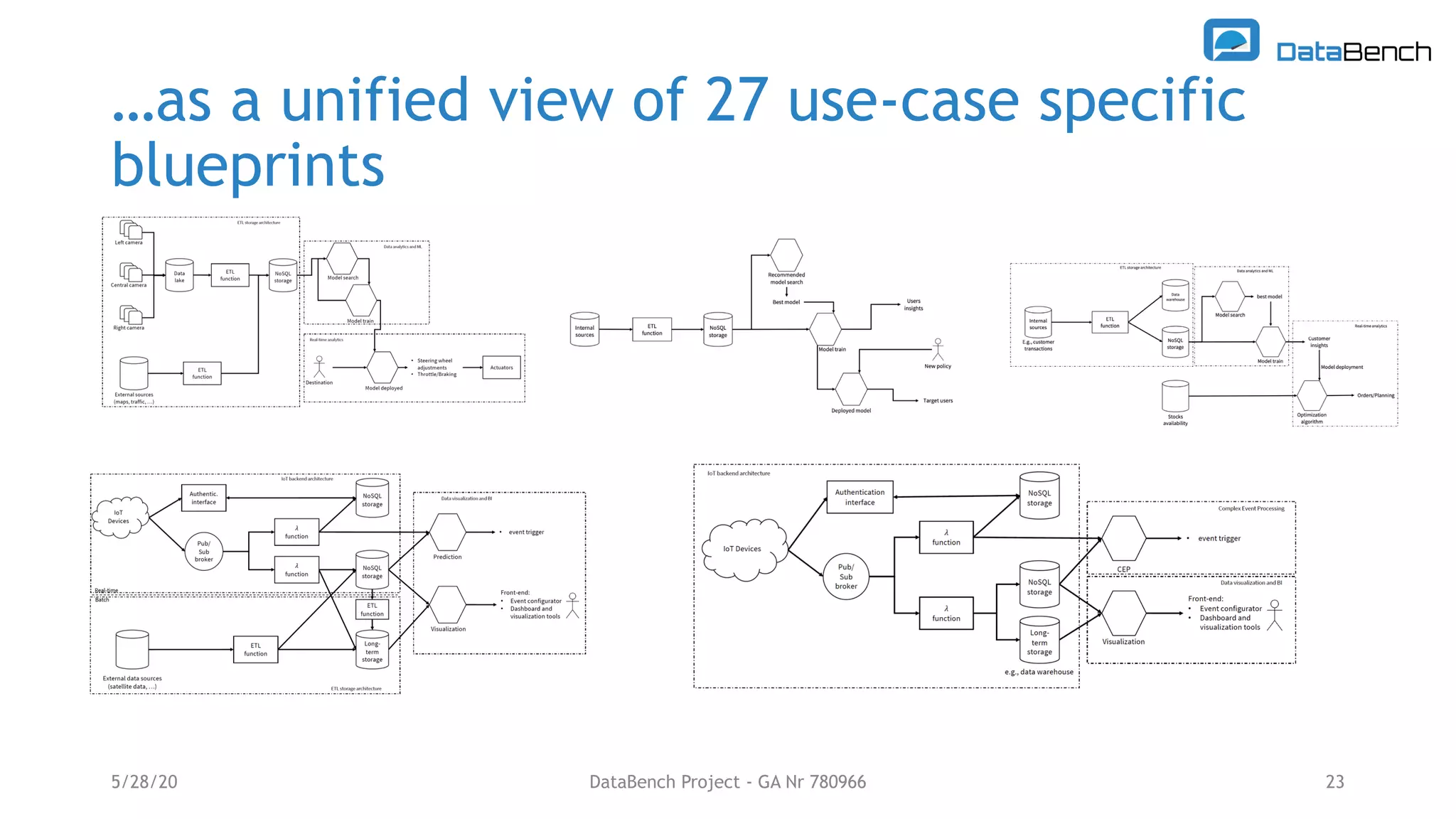

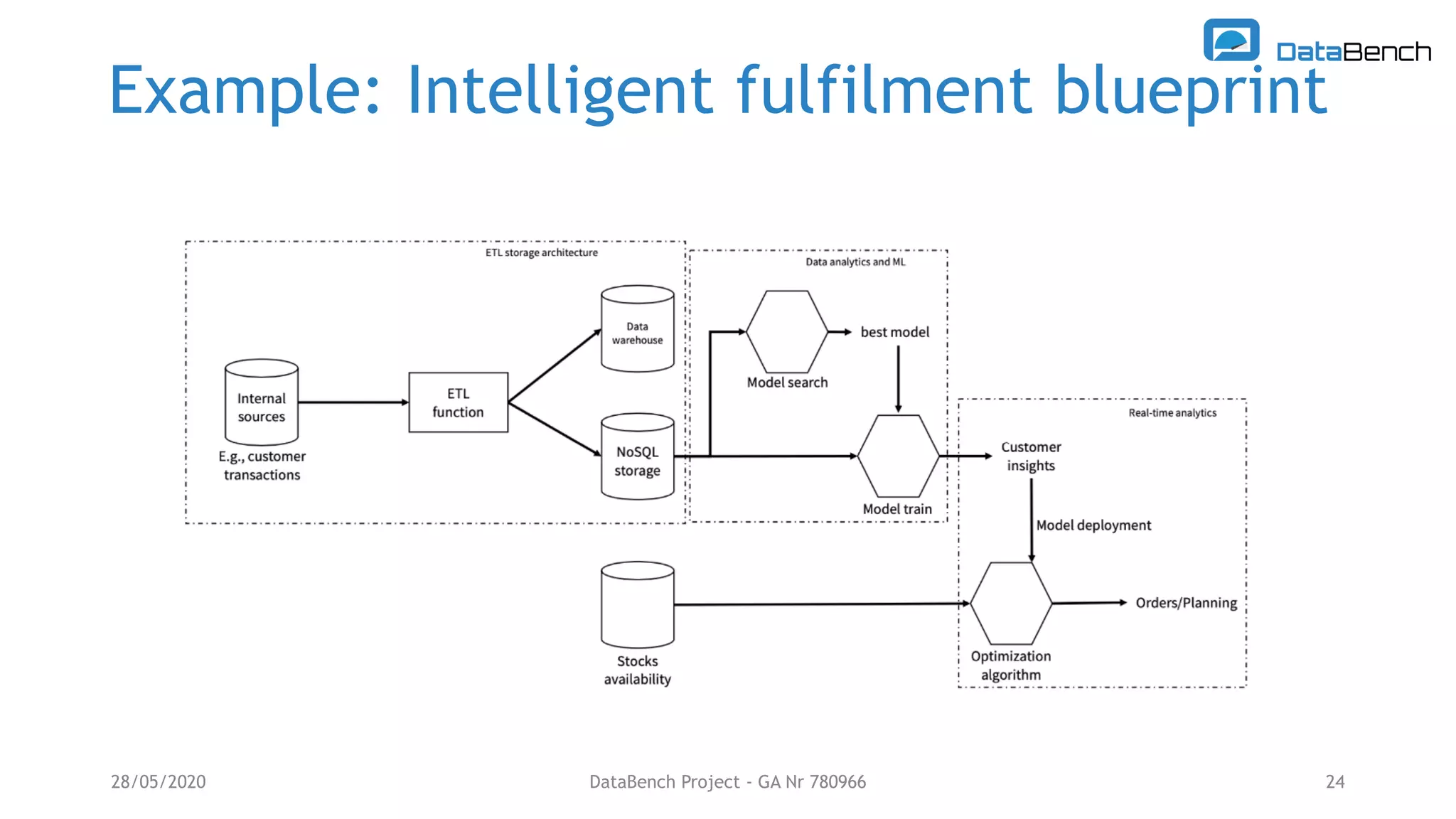

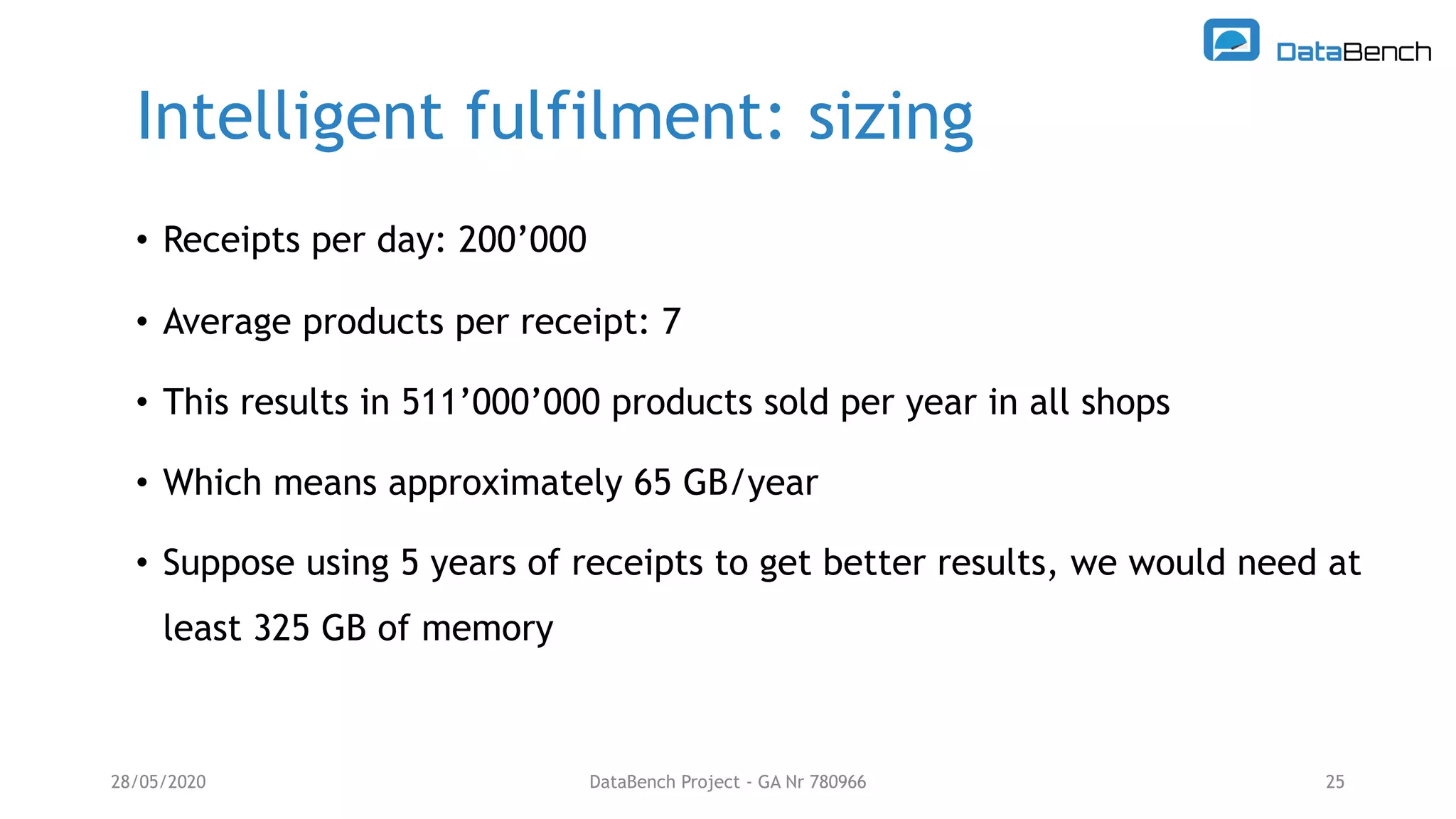

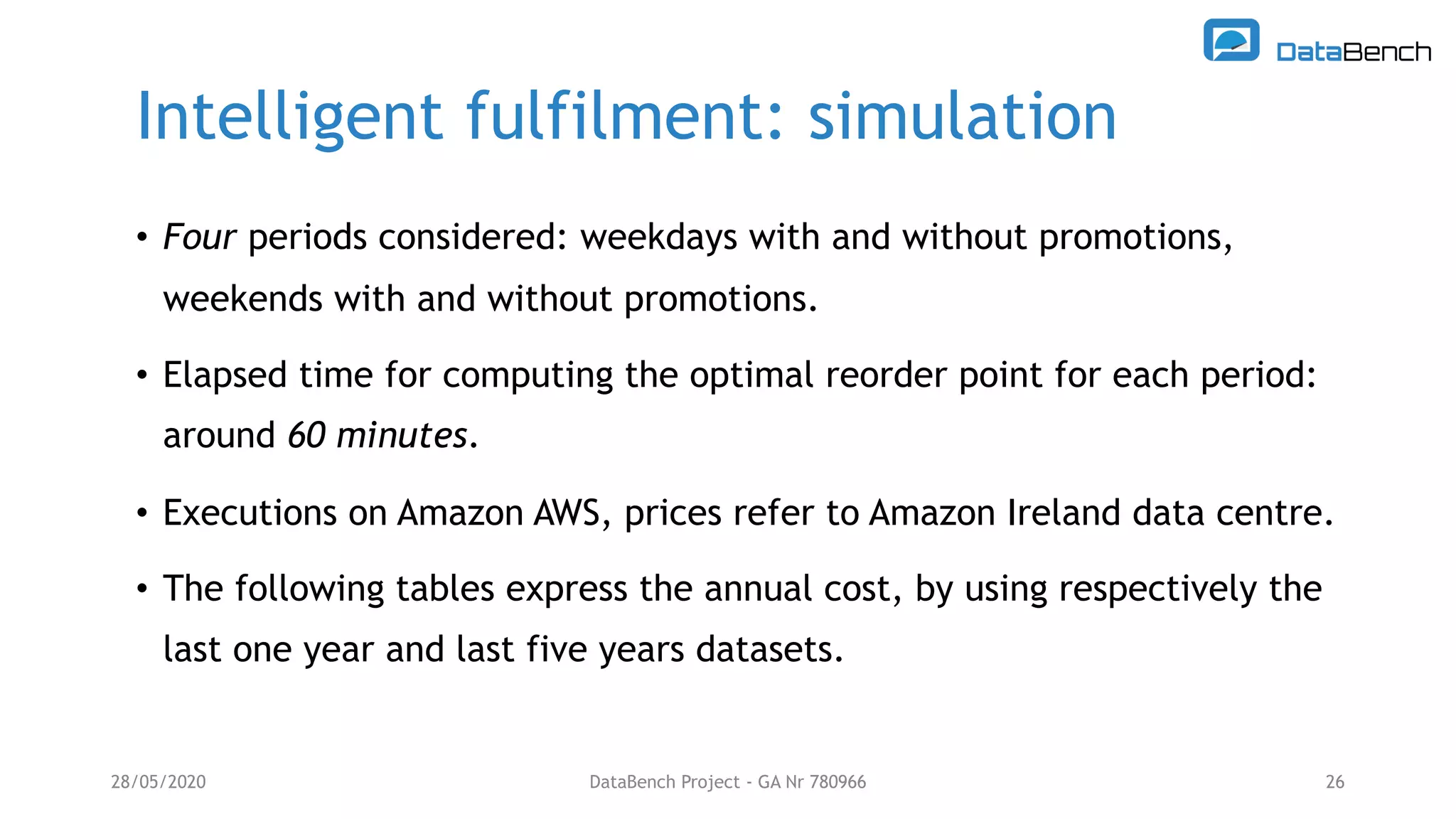

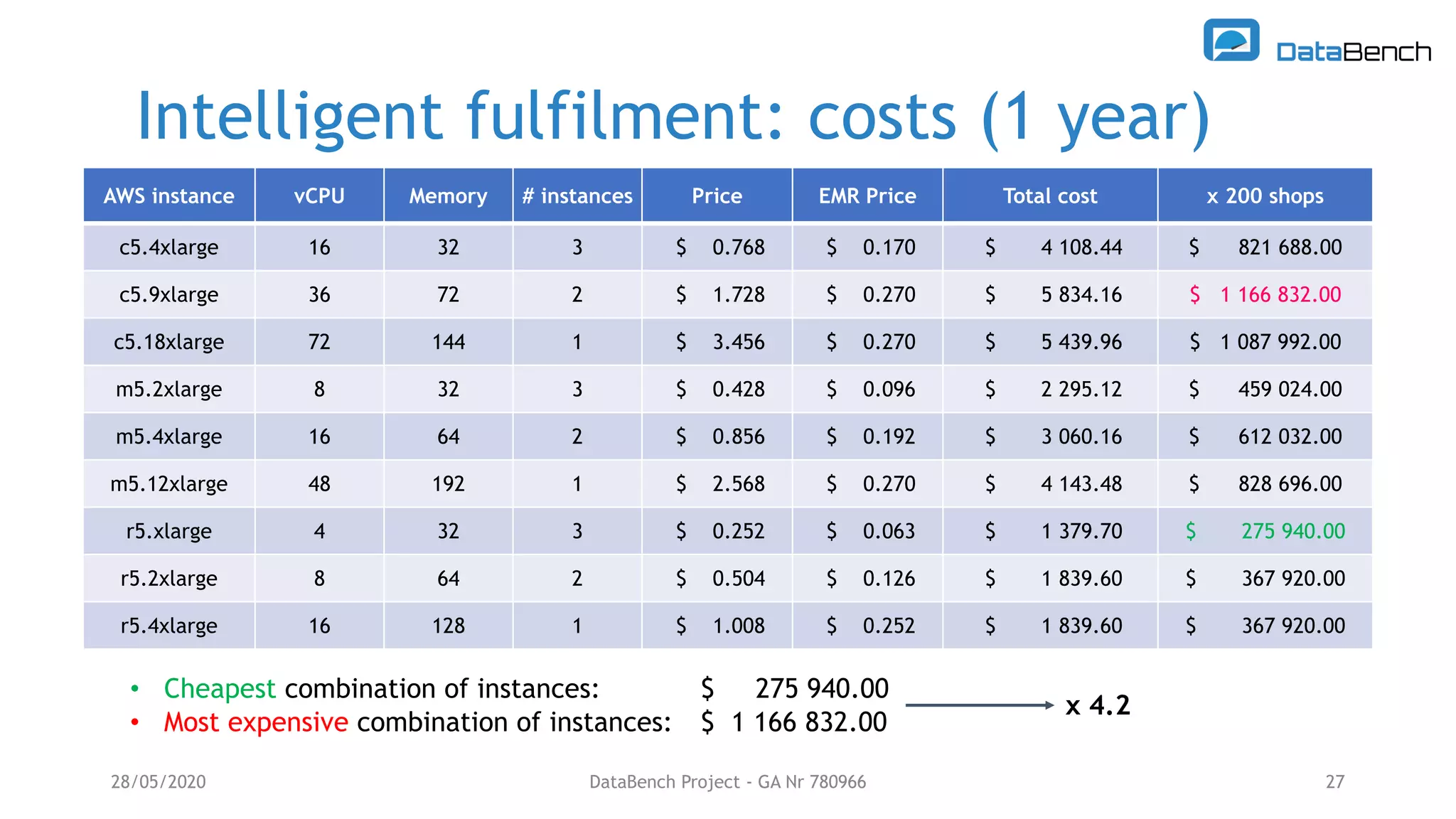

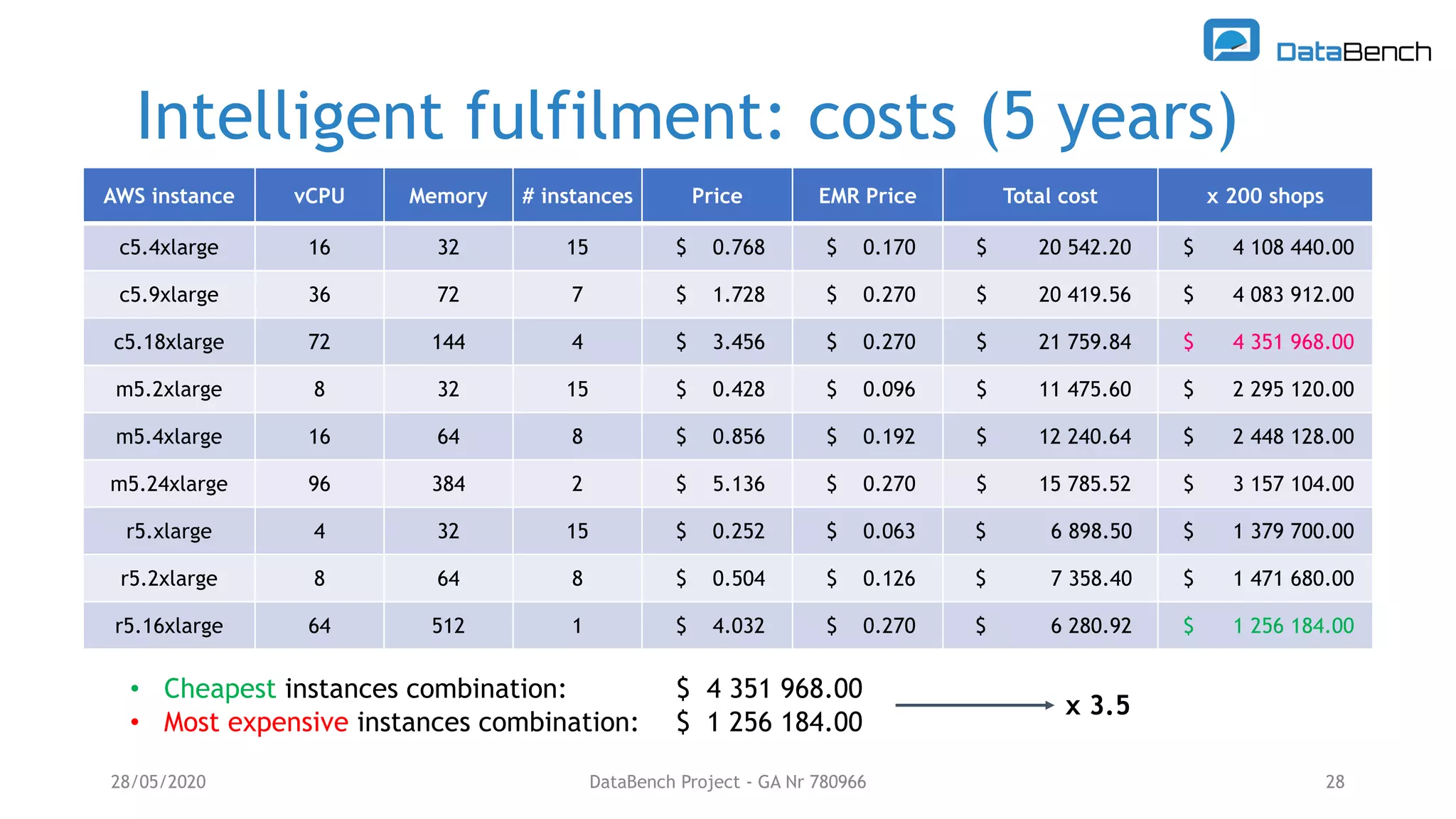

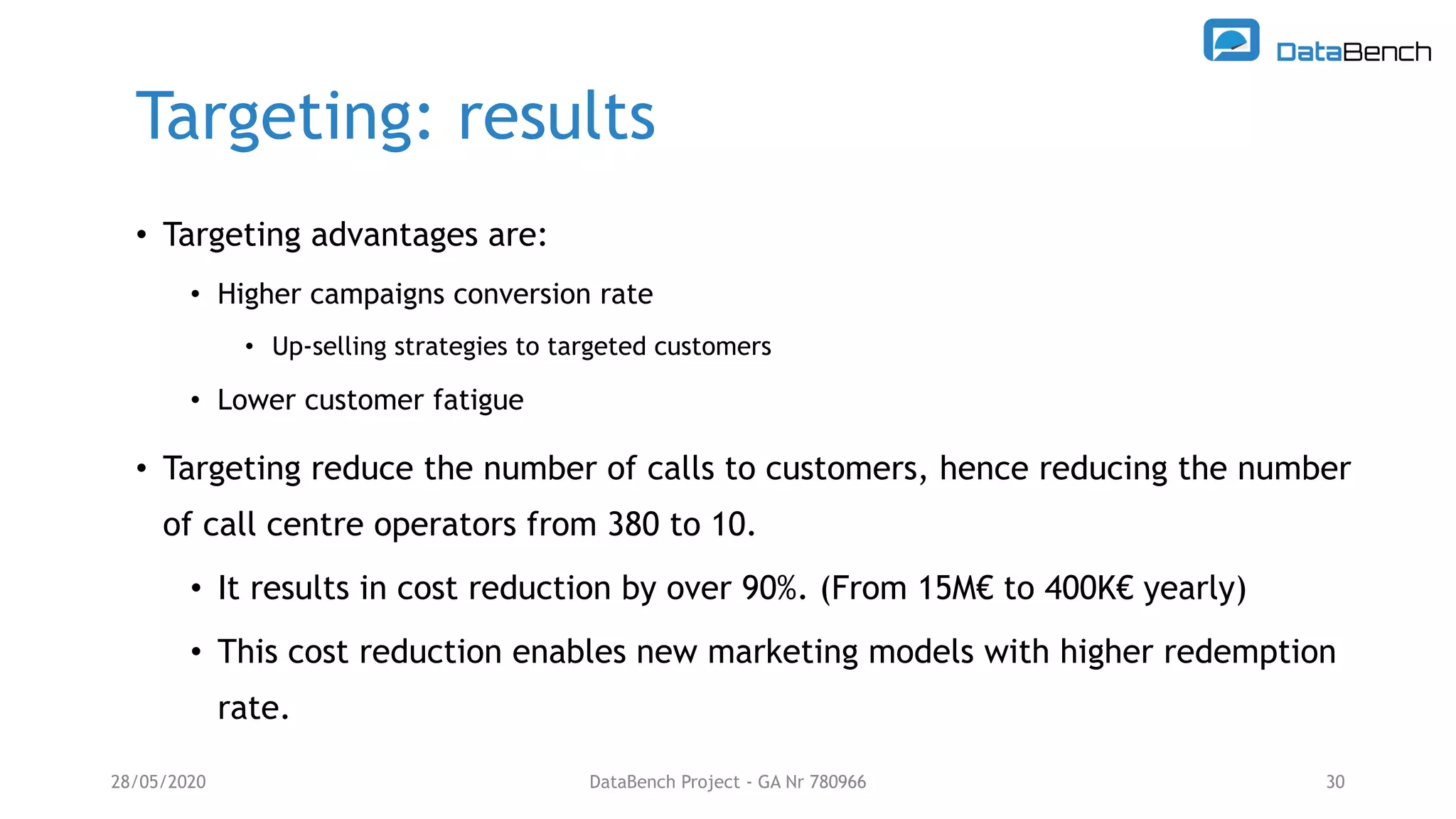

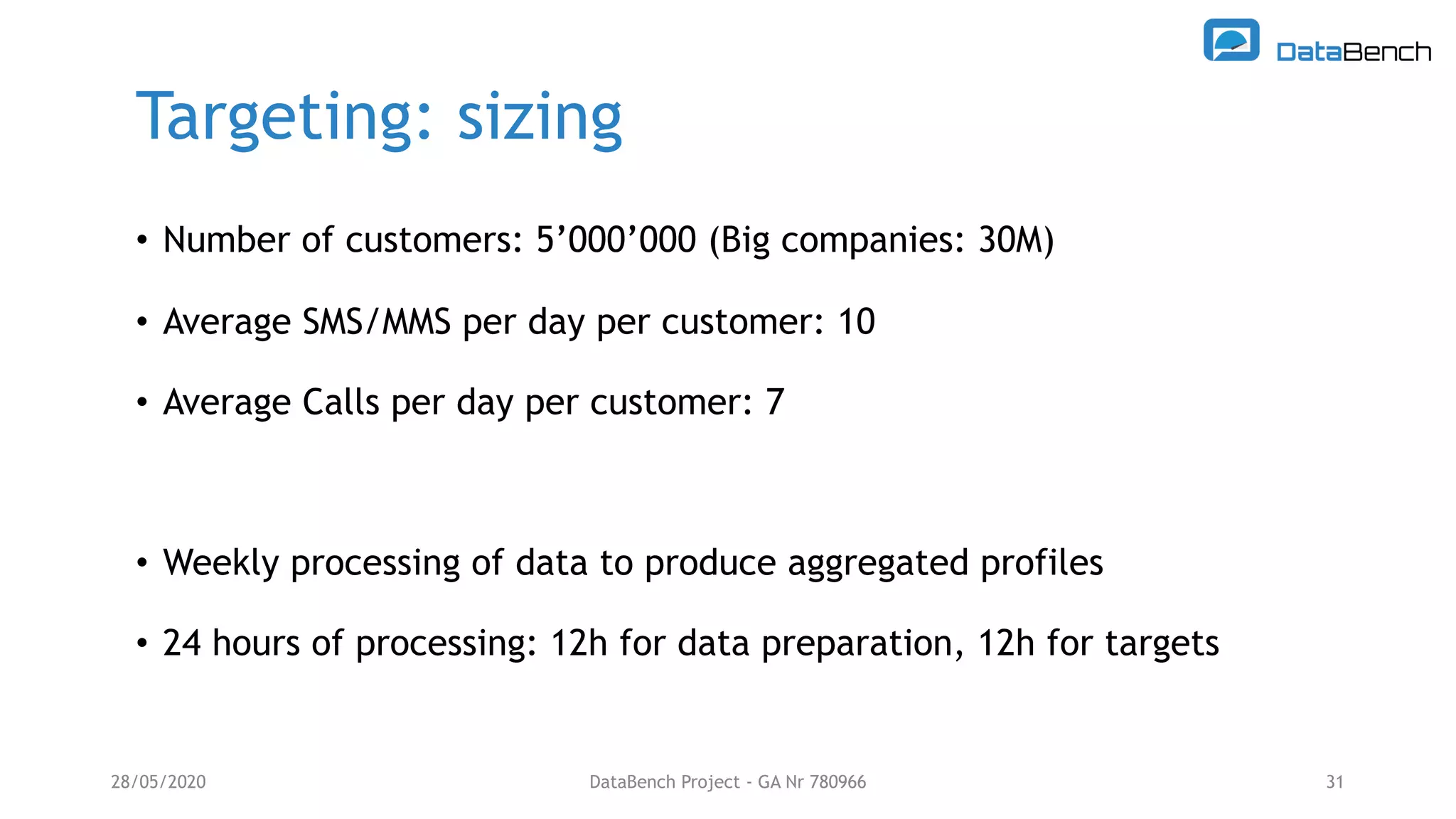

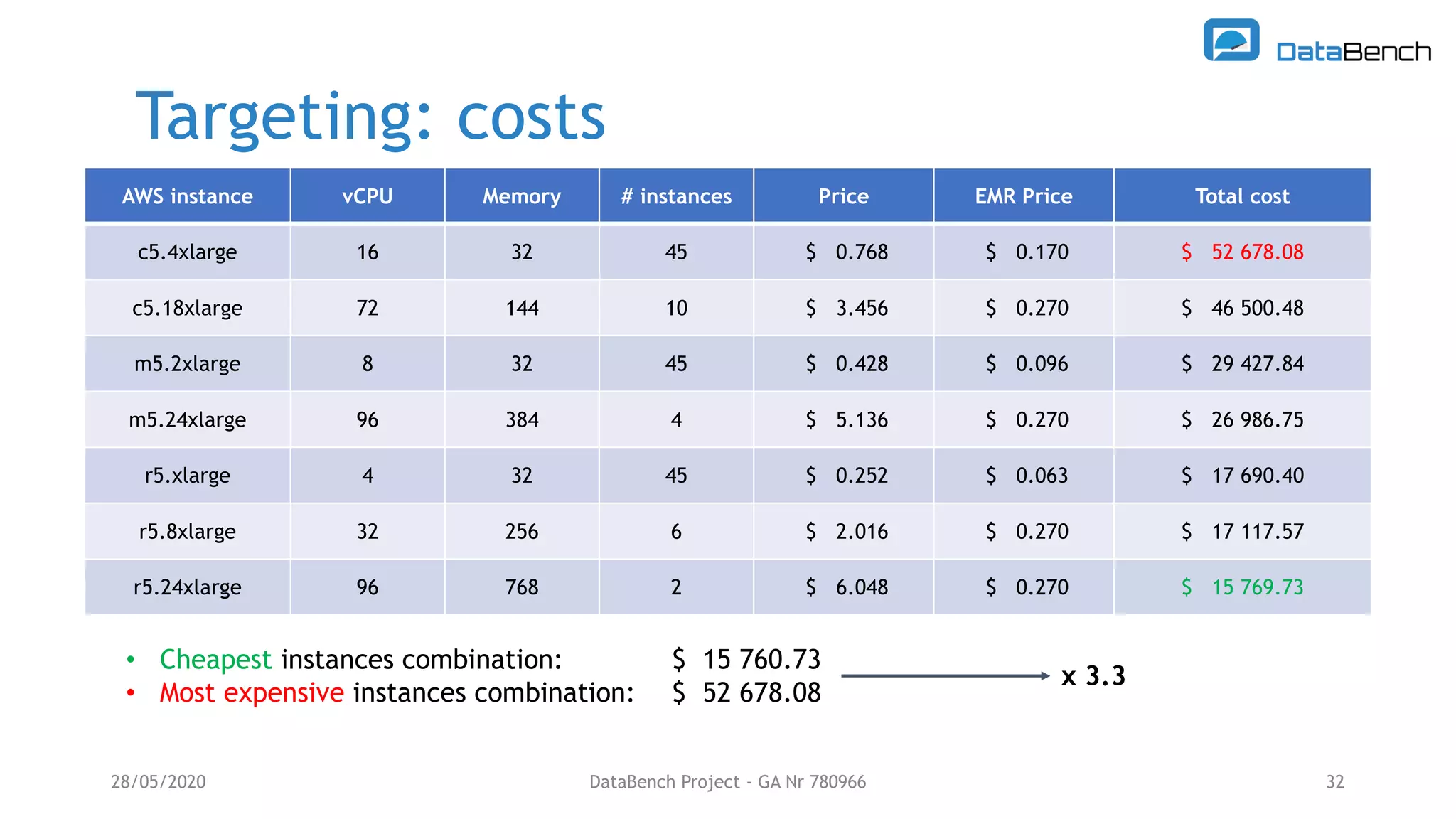

The DataBench project evaluates how big data technologies affect business performance, using industrial benchmarks and case studies. It identifies trends in data handling and analytics, and highlights the importance of data integration and technical benchmarking for informed decision-making. Key findings cover how the use of different analytics types varies and the benefits of cloud solutions, while specific case studies demonstrate successful applications across multiple industries.