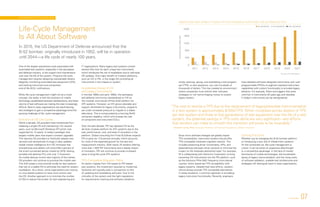

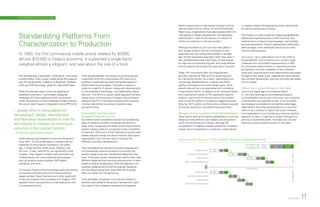

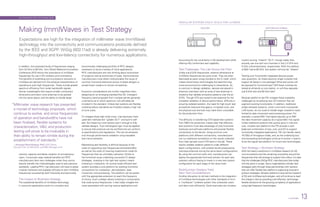

This document provides an overview of trends in automated test and measurement. It describes how semiconductor companies harvest production test data and apply real-time analytics to it to reduce manufacturing test costs. It explains why test management software is becoming more important as test systems must accommodate new programming languages. It also covers how RFIC companies reuse IP and standardize hardware to cut costs and shorten time to market across the product design cycle, from characterization to production.