This document discusses exploring metaphorical data in technical documents. It begins by describing how over 1,800 metaphors were collected from various websites and compiled into a CSV file. These include simple metaphors like "sea of fire" as well as more complex metaphors used by Shakespeare. The document then discusses using regular expressions and the Hadoop framework to analyze technical documents and identify if any of the collected metaphors are present. It summarizes several articles on metaphors, identifying five common types of metaphors used, and discusses rules for identifying metaphors. Finally, it discusses how metaphors are conceptual and how we structure our thinking based on common metaphors like "argument is war" and concepts of time.

![Exploring Metaphorical data on Technical

Documents

Lakshmi Himabindu Jonnalagadda

Department of Computer Science

University of North Carolina

Charlotte – 28262

hjonnala@uncc.edu

Abstract--Metaphor is a figure of speech in which a word or a

phrase is applied to an object to which it is not literally

applicable. A CSV or text file is compiled containing a

reasonable number of metaphors collected from

different websites. A large technical document is then analyzed

to identify whether any of the metaphors collected

earlier are present. Initially, this is implemented using

the Java programming language and regular expressions.

Later on, jobs might be run on Hadoop to identify if technical

documents contain any metaphors that were collected into the

text file.

This report focuses on how exactly metaphors are identified,

problems faced, results obtained and insights gained while doing

this project.

Key words: Metaphors, Shakespeare, Hadoop, technical

documents, regular expressions.

I. INTRODUCTION

Metaphors are collected from various websites on the

Internet. All these metaphors are collated into a CSV file

or a text file so that they can be used for comparison later on.

Over one thousand eight hundred metaphors are collected

into the CSV file, which includes very simple ones like

“Sea of fire

And also long ones like ‘All the world's a stage, and all

the men and women merely players. They have their

exits and their entrances’.

The job interview was a rope ladder dropped from

heaven.

Words are the weapons with which we wound.”[1]

Some of these metaphors are ones we use in our everyday lives,

like ‘Boiling mad’, ‘Breaking News’, ‘Brilliant Idea’, ‘Her

bubbly personality’ etc.

Complex or poetic metaphors are also collected that

Shakespeare used in his Sonnets and in his other works. A few

popular examples of Shakespeare's metaphors are:

“Look, love, what envious streaks

Do lace the severing clouds in yonder East:

Night's candles are burnt out, and jocund day

Stands tiptoe on the misty mountain tops” [5]

“Why art thou yet so fair? Shall I believe

that unsubstantial Death is amorous;

And that the lean abhorrèd monster keeps

Thee here in dark to be his paramour?” [5]

“His two chamberlains Will I with wine and wassail so

convince, That memory, the warder of the brain, Shall

be a fume, and the receipt of reason A limbeck only.”

[5]

“Time travels in divers paces with divers persons . . .

I’ll tell you who Time ambles withal, who Time trots

withal, who Time gallops withal, and who he stands still

withal”[9]

"So, haply slander--Whose whisper o'er the world's

diameter, As level as the cannon to his blank,

Transports his poison'd shot--may miss our name,

And hit the woundless air." [5]

Shakespeare used the rose as a metaphor to symbolize a married

woman, while a rose that is withered on the stem describes a

spinster. Shakespeare used metaphors to describe many more

topics such as life, time, universe, etc. His plays are full of

metaphors mainly related to birds, war, music, food, clothing,

love etc.

Initially, regular expressions are used to check whether any

metaphors saved in our dataset are present in technical documents.

A regular expression is a string used to describe a search pattern.

It can express a search in just one line, whether the program

is written in Java, C, .NET or PHP. Quantifiers are used for

searching patterns. The quantifiers ‘?’, ‘*’ and ‘+’ are the ones used most

often. For example, the regular expression b*c

matches the strings c, bc, bbc, bbbc etc., because ‘*’ matches zero or more

occurrences of the preceding element. ‘+’ matches one or more occurrences, and ‘?’ matches zero

or one occurrence of the element preceding it. A simple example of

how to use regular expressions is:

A regular expression for validating a username will be

something like this: ^[a-z0-9_-]{3,15}$. Here ^ indicates the

start of a line and $ the end of a line. a-z0-9 means that the username can contain

lowercase letters and digits. The underscore and hyphen in the RE

match any underscore or hyphen in the username.

{3,15} says that the username can have a minimum length of 3

characters and a maximum of 15 characters. There are tools like

grep and PowerGREP with which regular expressions can be

applied to files conveniently.
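The two patterns described above can be tried out in a few lines of Java. This is a minimal sketch for illustration only; the class name QuantifierDemo, its helper methods and the sample strings are assumptions made for this example and are not part of the project code.

```java
import java.util.regex.Pattern;

public class QuantifierDemo {
    // b*c: zero or more 'b' characters followed by a single 'c'.
    static final Pattern STAR = Pattern.compile("b*c");

    // Username rule from the text: 3 to 15 characters, each a lowercase
    // letter, a digit, an underscore or a hyphen.
    static final Pattern USERNAME = Pattern.compile("^[a-z0-9_-]{3,15}$");

    // True only if the whole string matches b*c.
    static boolean matchesStar(String s) {
        return STAR.matcher(s).matches();
    }

    // True only if the whole string is a valid username under the rule above.
    static boolean isValidUsername(String s) {
        return USERNAME.matcher(s).matches();
    }

    public static void main(String[] args) {
        for (String s : new String[] {"c", "bc", "bbbc", "bcb"}) {
            System.out.println(s + " -> " + matchesStar(s));
        }
        for (String u : new String[] {"hima_bindu-7", "ab", "Has Space"}) {
            System.out.println(u + " -> " + isValidUsername(u));
        }
    }
}
```

Placing the hyphen last inside the square brackets makes it a literal hyphen rather than a range operator.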

The Hadoop framework is used to run jobs that verify whether any

metaphors are present in technical documents. Hadoop is

an open source framework where we can store and process large](https://image.slidesharecdn.com/70a3ffcd-5ced-43fc-b804-c6716bc9f2cd-161110162233/75/Study_Report-1-2048.jpg)

![amounts of data making use of its distributed environment. It is

highly reliable and scalable.

Natural Language Processing is a growing field, which is related

to Human Computer Interaction and Artificial Intelligence. In

comparing text between two files, we are making use of a few

concepts from Natural Language Processing. [7]

II. SUMMARY OF ARTICLES ON METAPHORS

Much research has been done on finding metaphors

in text automatically using Natural Language Processing

concepts. Automatic processing of metaphors can be divided

into two tasks: metaphor recognition and metaphor interpretation.

Metaphor recognition is distinguishing between literal

and metaphorical expressions in a text document. Metaphor interpretation is

finding the meaning of the metaphor. Metaphors are discussed from

four views:

a) Comparison View

b) Interpretation View

c) Conceptual View

d) Selectional Restrictions View

Fass made the first attempt to identify metaphors

automatically in a text document. He developed a system to

distinguish between literals, metaphors and anomalies and also

to interpret metaphors; this was done in a step-by-step process.

Results: Five types of metaphors were identified during the research

process.

a) Metaphors of Space: Many metaphors are drawn from the

area of space. The most frequent metaphor was “field”, followed

by “area”. Other metaphors in this category are

“byways”, “regions” etc. Researchers refer to their

work as part of a particular “field” or “area” [6]

b) Metaphors of Travel: The word found most often in

metaphors under this category is “Steps”. Other words

found are “Track”, “Path”, and “Journey”, along with words like

“flow”, “Sprint”, “Wading”, and “Embark”, which

indicate movement. This kind of analogy gives the

reader the idea of exploration, of opening up new

areas of investigation, of setting off into the distance

to discover new information. It suggests a sense of

movement in research: that research

requires a considerable amount of activity to bring it

to fruition, and that nothing is found by sitting still, only

by moving into the unknown.

c) Metaphors of Action: A large number of words are commonly

found in metaphors under this category, such as

“Working”, “Delve”, “Reap”, and “Combing”,

which refer to some action involved in conducting

research.

d) Metaphors of Body: A number of words found in

metaphors are related to the human body and animal bodies,

for example “Body”, “Corpus”, “Grasp”, and

“Infancy”. It is found that such metaphors

are not limited to a specific field, but are spread across

various areas.

e) Metaphors of Ordeal: Words related to ordeal are used

in metaphors under this category, such as “Struggle”, “Fighting”,

“Crushed”, “Drown”, and “Inflict”.
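The five categories above can be held in a small lookup table. The sketch below is only an illustration: the class name MetaphorCategories and the method categoriesOf are hypothetical, the keyword lists contain just the example words quoted above, and a keyword hit marks a candidate only, since it does not show that the word is actually used metaphorically.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

public class MetaphorCategories {
    // Category name -> example keywords quoted in the list above (lowercased).
    static final Map<String, List<String>> CATEGORIES = new LinkedHashMap<>();
    static {
        CATEGORIES.put("Space", Arrays.asList("field", "area", "byways", "regions"));
        CATEGORIES.put("Travel", Arrays.asList("steps", "track", "path", "journey",
                "flow", "sprint", "wading", "embark"));
        CATEGORIES.put("Action", Arrays.asList("working", "delve", "reap", "combing"));
        CATEGORIES.put("Body", Arrays.asList("body", "corpus", "grasp", "infancy"));
        CATEGORIES.put("Ordeal", Arrays.asList("struggle", "fighting", "crushed",
                "drown", "inflict"));
    }

    // Returns the categories whose keywords occur as whole words in the sentence.
    static Set<String> categoriesOf(String sentence) {
        // Lowercase the sentence and split it on anything that is not a letter.
        Set<String> words = new HashSet<>(
                Arrays.asList(sentence.toLowerCase().split("[^a-z]+")));
        Set<String> found = new LinkedHashSet<>();
        for (Map.Entry<String, List<String>> entry : CATEGORIES.entrySet()) {
            for (String keyword : entry.getValue()) {
                if (words.contains(keyword)) {
                    found.add(entry.getKey());
                    break; // one hit is enough to record the category
                }
            }
        }
        return found;
    }

    public static void main(String[] args) {
        System.out.println(categoriesOf("My field of research is still in its infancy."));
        System.out.println(categoriesOf("He had to struggle along the path."));
    }
}
```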

A. The Comparison of Metaphorical Concepts:

“The comparison of metaphorical concepts accounts for a

number of different actions and experiences. Barkfelt (2003) for

example in her study on metaphors of depression, found in

autobiographical writings, works out that some authors

experienced their illness as a light-dark contrast ("die Welt wird

zunehmend grau" - "the world is becoming increasingly grey");

others described their depression as an "Überfall" ("attack"),

which hits them unexpectedly and "niederwirft" ("knocked them

down"). The comparison of the two metaphorical concepts

points to different experiences of the illness, which manifests

itself at different speeds. The use of metaphor in terms of light

dark gives the perception of a transition, thus allowing room for

maneuvers, which is not possible when depression is perceived

as an "attack." In the latter, on the other hand, the illness is more

clearly defined as a personal and dangerous enemy than in the

first metaphorical concept. From this, Barkfelt derives a number

of different options for linguistic or therapeutic intervention. Put

more generally: The comparison of metaphorical concepts with

the models of actions they contain allows certain conclusions to

be drawn. However, these conclusions are only possible if the

context is understood fully. Barkfelt is only able to draw such

conclusions because she is able to recognize the various

implications, due to her competence in the field as a therapist, of

the metaphorical concept of depression – beyond any specialist

manual, which might simplify the process of coming to these

conclusions but which cannot produce them”.

B. Limits to the Use of Metaphor:

“In examining the question: "What is the expression-shortening,

knowledge preventing content of the metaphors used?" it is

possible to work out the "hiding" elements, the ideological and

cognitive deficits of a metaphorical concept. Which aspects does

this use of metaphor conceal? Again, making use of the

container image, it is not able to represent temporal aspects; one

is either "dicht" ("shut") or "nicht dicht" ("not shut"). The

"Verlauf" ("passing") of time is better described in the use of the

path metaphor ("im Leben weiter kommen" - "to make headway

in life", "to get ahead", to make "progress"): The image of the

container is not able to do this. Another example that we are

familiar with is the image of the "Großwetterlage" ("general

weather conditions"); used by the media to describe the

economic situation. The metamorphosis of market movements

into nature disguises the fact that one is dealing with a man-

made phenomenon. In nearly all cases, this use of metaphor can

form the basis of a discussion of advantages and disadvantages.](https://image.slidesharecdn.com/70a3ffcd-5ced-43fc-b804-c6716bc9f2cd-161110162233/75/Study_Report-2-2048.jpg)

![The deficits and resources of a metaphorical concept can be

reconstructed for the three stages in the usual individual, sub-

cultural and cultural use of metaphor. Naturally, the process of

assessment, in being able to see one aspect of a metaphor as

"highlighting" and another as "hiding," requires a subjectivity

that is able to draw on a culture that has been lived in and is

understood. It is therefore dependent on the discriminatory

ability of the person undertaking the interpretation”. [7]

C. Rules to Identify Metaphors:

Rules to identify metaphors are listed as follows:

a) “A word or phrase, strictly-speaking, can be understood

beyond the literal meaning in context of what is being

said; and

b) The literal meaning stems from an area of physical or

cultural experience (source area)

c) Which, however, is - in this context - transferred to a

second, often abstract, area (target area)”. [7]

The following examples of metaphors and their explanations are

taken from the paper titled “Systematic Metaphor Analysis as a

Method of Qualitative Research” by Rudolf Schmitt.

EXAMPLE 1:

“You just don’t experience problems like that as being so

weighty (‘gewichtig’) when you are drunk.”

“It is easier (‘leichter’) to get into a conversation with people

when you’re no longer sober.”

“It was simply less burdensome (‘unbeschwerter’) after the

second beer.”

All three quotations are related to various states of drunkenness,

which is also the target area in a current investigation entitled

Which Experiences and Expectations are related to Alcohol

Consumption?

The common source area can be formulated in terms of a

“burden”, “effort”, and “weight” – the most suitable term will

become evident upon the discovery of further metaphors.

Thus, I would offer the following as an initial formulation of the

metaphorical concept:

“Drunkenness makes difficulties easier to bear”. [7]

EXAMPLE 2:

“They met (‘getroffen’) there and got into an argument.”

“He tried to find (‘finden’) a way to reach him” (“Zugang zu

ihm”).

It could be argued that to meet (“treffen”) and find (“finden”)

require space to take place... But in this case it is a very strained

construction of a source area, which uses “space” in its most

abstract quality (i.e., it’s somehow simply being present).

Speaking metaphorically, it is an attempt to give a skinhead a

perm. Therefore, we have no common source area, no

metaphorical model here, even if the target area (interaction) is

the same. [7]

EXAMPLE 3:

“He got out of his way” (“aus dem Weg gegangen”).

“He is making progress (‘Fortschritte’) with his therapy.”

The same source area (path metaphor) but no common target

area. First interaction then individual development, therefore not

suitable for grouping in a common model. [7]

EXAMPLE 4:

“She bubbled over with life” (‘gesprudelt vor Leben’).

“She effervesced (‘gesprüht’) as she told her story.”

“And then the dams burst (‘Dämme gebrochen’) as she told her

story and wept.”

We are able to ascribe the metaphors to the same source area

(moving liquid) and the same target area (emotional exchange).

The corresponding titles might be:

Emotional vitality is running water.

Emotional vitality is overflowing water.

Emotional vitality is pressurized liquid.

A decision for one of these titles cannot yet be made; they are

provisional constructions. [7]

Experience shows that it is also too early to formulate a title

based on just three metaphors. We may well find further

metaphors to add to the image of the bursting dams.

It is true, though hardly noticed by many, that all of us speak in

metaphors quite often. Few people realize that we even live by

metaphors. George Lakoff compiled a large list of

metaphors based on different aspects of life. According to

him, metaphors shape and refine our way of thinking, make us

more imaginative and help us structure our thoughts in a

better way. An example taken from an online

source is quoted here: “Thinking of marriage as a "contract agreement,"

for example, leads to one set of expectations, while thinking of it

as "teamplay," "a negotiated settlement," "Russian roulette," "an

indissoluble merger," or "a religious sacrament" will carry

different sets of expectations. When a government thinks of its

enemies as "turkeys" or "clowns" it does not take them as serious

threats, but if they are "pawns" in the hands of the communists,

they are taken seriously indeed.” [8]

D. Concepts and metaphors we live by:

Most people think that they neither need nor use metaphors in

their daily lives, but George Lakoff says that, according to his

studies, metaphors are an important part of everyone’s lives even

if they fail to notice it. In his article, Lakoff takes an example to

explain how metaphors are thought of as concepts and how they

are used in everyday life. The conceptual metaphor “Argument is

War” is taken as the example. [8] This conceptual metaphor is used in different

forms and expressions by many of us. Those are:

“Your claims are indefensible.

He attacked every weak point in my argument.

His criticisms were right on target.

I demolished his argument.

I've never won an argument with him.

You disagree? Okay, shoot!

If you use that strategy, he'll wipe you out.](https://image.slidesharecdn.com/70a3ffcd-5ced-43fc-b804-c6716bc9f2cd-161110162233/75/Study_Report-3-2048.jpg)

![ He shot down all of my arguments.” [8]

Here, the “Argument is war” metaphor is one that we live by; to

be clearer, it means that the metaphor structures the actions we

perform in arguing. People may also hold different views

about arguments, or may not consider the above statements to be

arguments at all. This is what makes it a conceptual metaphor.

“The essence of metaphor is understanding and experiencing

one kind of thing in terms of another” [8]

How ‘Time’ is used in metaphors is illustrated with

simple English sentences. A few metaphors taken from

Lakoff’s list of metaphors are quoted here.

“You're wasting my time.

This gadget will save you hours.

I don't have the time to give you.

How do you spend your time these days?

That flat tire cost me an hour.

I've invested a lot of time in her.

I don't have enough time to spare for that.

You're running out of time.

You need to budget your time.

Put aside some time for ping-pong.

Is that worth your while?

Do you have much time left?

He's living on borrowed time.

You don't use your time profitably.

I lost a lot of time when I got sick.

Thank you for your time.

She spends her time unwisely.

The diversion should buy him some time.

Time is money

Time heals all wounds.

Time will make you forget.

Time had made her look old.

Time had not been kind to him.

The ravages of time”. [1] [8]

A significantly more subtle instance of how a metaphorical

idea can conceal a part of our experience can be seen in what

Michael Reddy has called the "conduit metaphor." Reddy

observes that our language about language is structured roughly

by the following metaphor. Conduit metaphors are those in

which an idea is put into words and sent to a person. Michael

Reddy documented many metaphors in this category during his

research. A few examples taken from his work are:

“It's hard to get that idea across to him.

I gave you that idea.

Your reasons came through to us.

It's difficult to put my ideas into words.

When you have a good idea, try to capture it

immediately in words.

Try to pack more thought into fewer words.

You can't simply stuff ideas into a sentence any old

way.

The meaning is right there in the words.

Don't force your meanings into the wrong words.

His words carry little meaning.

The introduction has a great deal of thought content.

Your words seem hollow.

The sentence is without meaning.

The idea is buried in terribly dense paragraphs”.[8]

There are also orientational metaphors, where emotions or words

are described using directions/orientations. For example: Happy

is up and sad is down. Here the direction up is used to denote

happiness as it has a positive meaning. Similarly, the direction down

is used to show sadness. A few examples taken from George

Lakoff’s papers and other works are:

“I'm feeling up.

That boosted my spirits.

My spirits rose.

You’re in high spirits.

Thinking about her always gives me a lift.

I'm feeling down.

He's really low these days.

My spirits sank.” [8]

In these examples, up has a positive meaning whereas down

has a negative meaning. Similar examples, in which up

stands for more and down for less, are:

The number of books printed each year keeps going up.

His draft number is high.

My income rose last year.

The amount of artistic activity in this state has gone

down in the past year.

The number of errors he made is incredibly low.

His income fell last year.

If you're too hot, turn the heat down. [8]

III. PROJECT DESCRIPTION

Metaphors are collected into a CSV or text file from various

sources. A program is written in the Java programming language

using the Matcher and Pattern classes of java.util.regex. The Matcher

class has a matches() method that matches the complete input

against a pattern, and a find() method that is used to find multiple

occurrences of a regular expression in a text. The Pattern class

represents a compiled regular expression. Checking a text

against such a pattern is also called pattern

matching. Pattern.matches() can be used to see whether a text matches

the regular expression (pattern). Pattern.compile() can also be

used to create a Pattern object that is reused to check several texts against the same

regular expression. This is useful when multiple files are to be

compared.
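The difference between matching a whole input and finding occurrences inside it can be seen in a short example. This is a minimal sketch, not the project code: the class name PatternMatcherDemo, the helper findAll and the sample text are assumptions, and Pattern.quote and Pattern.CASE_INSENSITIVE are added here so that a metaphor is treated as literal text regardless of capitalization.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class PatternMatcherDemo {
    // Returns the start offset of every occurrence of the literal phrase in the text.
    static List<Integer> findAll(String phrase, String text) {
        // Pattern.quote makes characters such as '.' or '?' match literally.
        Pattern pattern = Pattern.compile(Pattern.quote(phrase), Pattern.CASE_INSENSITIVE);
        Matcher matcher = pattern.matcher(text);
        List<Integer> starts = new ArrayList<>();
        // find() moves to the next occurrence each time it is called.
        while (matcher.find()) {
            starts.add(matcher.start());
        }
        return starts;
    }

    public static void main(String[] args) {
        String text = "Time is money. He said time is money again.";
        // Pattern.matches succeeds only if the entire input matches the pattern.
        System.out.println(Pattern.matches("Time is money", text));
        System.out.println(Pattern.matches(".*time is money.*", text));
        // Compiling once and calling find() repeatedly locates each occurrence.
        System.out.println(findAll("time is money", text));
    }
}
```

For this project find() is the relevant call, because a metaphor normally occupies only part of a sentence.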

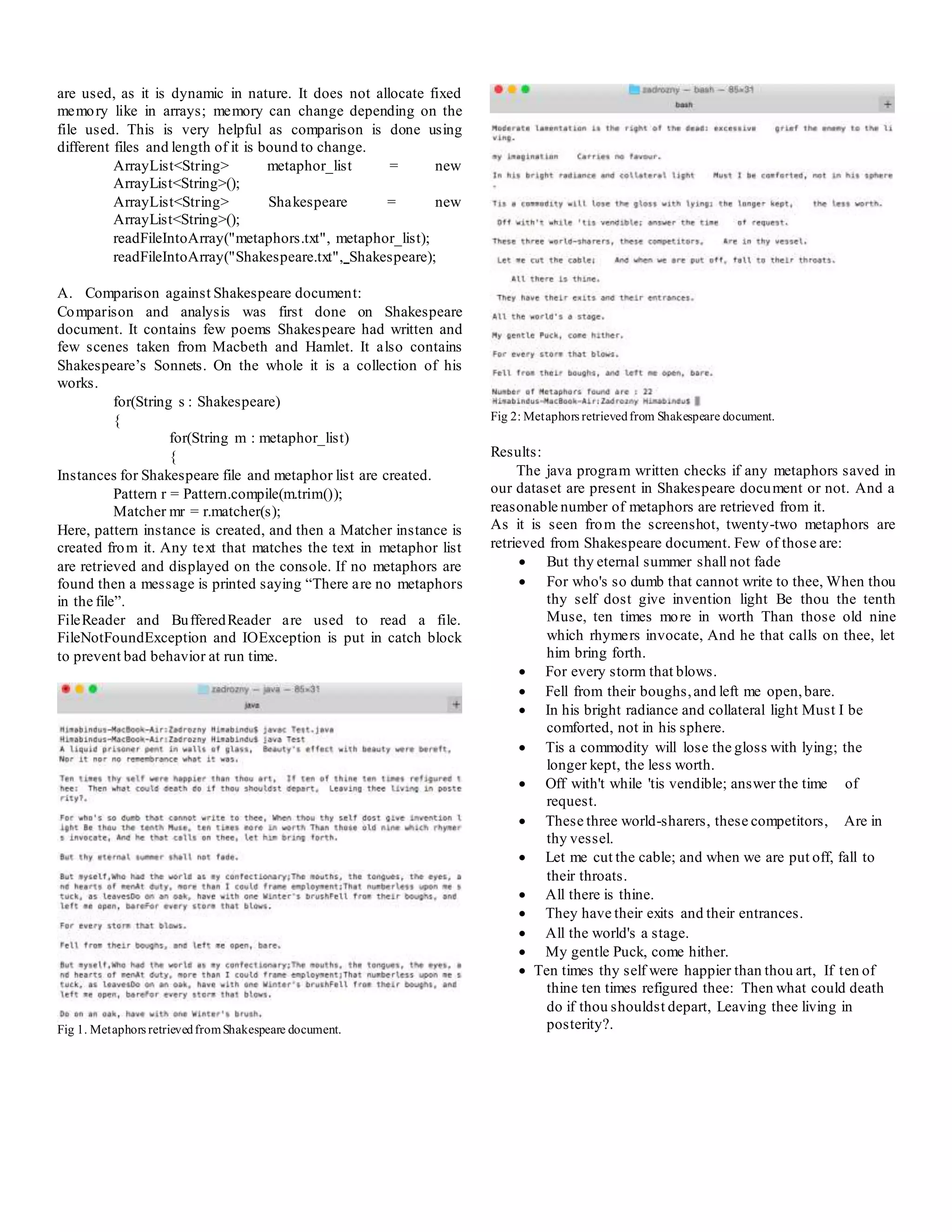

The metaphors file is read into an ArrayList, and the

technical document is likewise read into an ArrayList. Here ArrayLists](https://image.slidesharecdn.com/70a3ffcd-5ced-43fc-b804-c6716bc9f2cd-161110162233/75/Study_Report-4-2048.jpg)

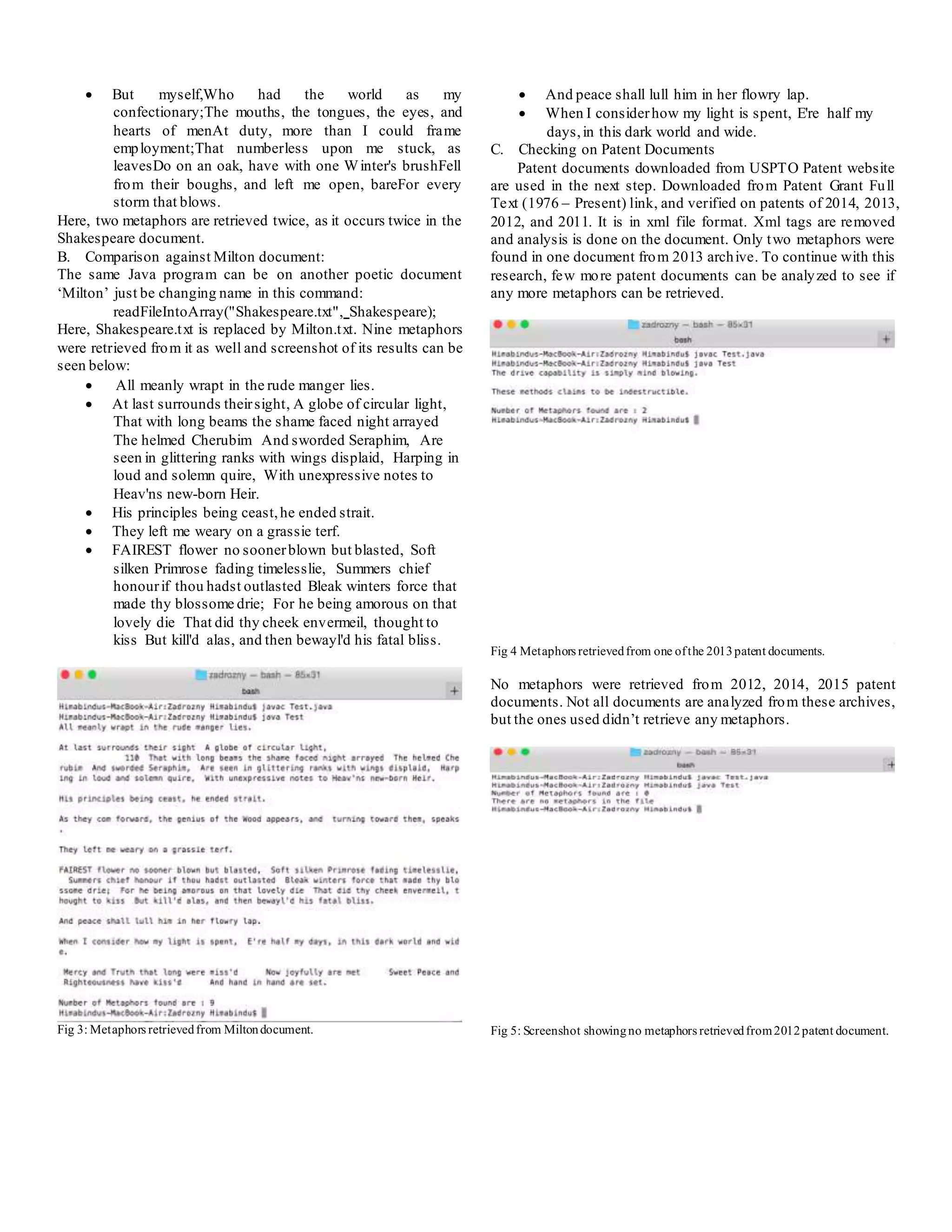

![IV. WORKING IN HADOOP

Firstly, Hadoop has to be installed on the machine.

Requirements to install Hadoop on Mac OS X are:

a) Java Version 1.6 or later

b) Ruby, Homebrew

c) SSH and Remote Login

Homebrew installs Hadoop 2.3. The command to install

Hadoop using brew is $ brew install hadoop. Homebrew is a

package manager that installs and uninstalls software. This tool

is of great help as it makes Hadoop installation easier.

Homebrew installs a single node cluster. Commands used to run

Hadoop locally on the machine are:

$ ./start-dfs.sh

$ ./start-yarn.sh

$ jps lists the running Java processes; it is used to check which Hadoop daemons (nodes) are running

and to verify whether Hadoop is running.

Access to Hadoop on the university server has to be granted. After it

is granted, check whether all the nodes are running.

Technical documents like USPTO patent files are to be

checked for metaphors against our dataset. For further analysis,

poetic documents like the works of Shakespeare and Milton can also be

checked for metaphors.
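The work such a Hadoop job would perform can be outlined in plain Java before any cluster is involved. The sketch below does not use the Hadoop API and is not the report's implementation; the class MapReduceSketch and its methods map, reduce and run are hypothetical names that only imitate the two phases: a map step that emits a (metaphor, 1) pair for each metaphor found in a line, and a reduce step that sums the pairs per metaphor.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class MapReduceSketch {
    // "Map" step: for one line, emit (metaphor, 1) for each metaphor it contains.
    // A metaphor is emitted at most once per line, even if it occurs twice in it.
    static List<Map.Entry<String, Integer>> map(String line, List<String> metaphors) {
        List<Map.Entry<String, Integer>> out = new ArrayList<>();
        String lower = line.toLowerCase();
        for (String m : metaphors) {
            if (lower.contains(m.toLowerCase())) {
                out.add(new SimpleEntry<String, Integer>(m, 1));
            }
        }
        return out;
    }

    // "Reduce" step: sum the counts emitted for each metaphor.
    static Map<String, Integer> reduce(List<Map.Entry<String, Integer>> pairs) {
        Map<String, Integer> totals = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            totals.merge(pair.getKey(), pair.getValue(), Integer::sum);
        }
        return totals;
    }

    // Runs the map step on every line, then reduces all emitted pairs.
    static Map<String, Integer> run(List<String> lines, List<String> metaphors) {
        List<Map.Entry<String, Integer>> emitted = new ArrayList<>();
        for (String line : lines) {
            emitted.addAll(map(line, metaphors));
        }
        return reduce(emitted);
    }

    public static void main(String[] args) {
        List<String> lines = new ArrayList<>();
        lines.add("Time is money, they say.");
        lines.add("A sea of fire spread.");
        lines.add("Remember: time is money.");
        List<String> metaphors = new ArrayList<>();
        metaphors.add("time is money");
        metaphors.add("sea of fire");
        metaphors.add("boiling mad");
        System.out.println(run(lines, metaphors));
    }
}
```

On a real cluster the map step would run in parallel on different blocks of the document, which is where the distributed environment helps with very large patent files.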

V. CONCLUSION

Metaphors are expressions that are used to describe a thing,

object, situation etc. Metaphors can be divided into various

categories. For this project, documents were compared against a

couple of thousand metaphors. Around twenty-two metaphors

were retrieved from the Shakespeare document, nine from the Milton document

and very few from one patent document. The whole

project is done in the Java programming language, using

regular expressions.

VI. FUTURE SCOPE

The dataset of metaphors used here is small when compared to the

ocean of metaphors that are available. The dataset can be

modified and compared against various other technical and

poetic documents. The time taken by the program to retrieve results

is somewhat high and could be reduced in the future.
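One possible way to reduce the running time, offered here as an assumption rather than a measured result, is to compile each metaphor into a Pattern once, before the documents are scanned, instead of compiling it again for every sentence. The class PrecompiledSearch and its methods below are hypothetical names used only for this sketch.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Pattern;

public class PrecompiledSearch {
    // Compiles every non-empty metaphor once, as literal text.
    static List<Pattern> compileAll(List<String> metaphors) {
        List<Pattern> patterns = new ArrayList<>();
        for (String m : metaphors) {
            String phrase = m.trim();
            if (!phrase.isEmpty()) {
                patterns.add(Pattern.compile(Pattern.quote(phrase)));
            }
        }
        return patterns;
    }

    // Counts the (sentence, metaphor) pairs in which the metaphor occurs.
    static int countMatches(List<String> sentences, List<Pattern> patterns) {
        int count = 0;
        for (String sentence : sentences) {
            for (Pattern pattern : patterns) {
                // Only a lightweight Matcher is created inside the loops.
                if (pattern.matcher(sentence).find()) {
                    count++;
                }
            }
        }
        return count;
    }

    public static void main(String[] args) {
        List<String> metaphors = new ArrayList<>();
        metaphors.add("boiling mad");
        metaphors.add("sea of fire");
        metaphors.add("brilliant idea");
        List<String> sentences = new ArrayList<>();
        sentences.add("He was boiling mad.");
        sentences.add("A brilliant idea, a sea of fire.");
        System.out.println(countMatches(sentences, compileAll(metaphors)));
    }
}
```

With about 1,800 metaphors, this replaces one compilation per sentence per metaphor with one compilation per metaphor overall.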

REFERENCES

[1] Index of /lakoff/metaphors, retrieved from

http://www.lang.osaka-u.ac.jp/~sugimoto/MasterMetaphorList/metaphors/

[2] Metaphor examples, E reading worksheets, retrieved from

http://www.ereadingworksheets.com/figurative-language/figurative-language-examples/metaphor-examples/

[3] Metaphor examples, retrieved from

http://examples.yourdictionary.com/metaphor-examples.html

[4] Metaphor Definition, Literary Devices, retrieved from

http://literarydevices.net/metaphor/

[5] Shakespeare's Metaphors, A compilation of Shakespeare's

most powerful metaphors by Shakespearean scholar Henry

Norman Hudson. Available:

http://www.shakespeare-online.com/biography/metaphorlist.html

[6] Pitcher, Rod. "The metaphors that research students live

by." The qualitative report 18.36 (2013): 1-8.

[7] Schmitt, Rudolf. "Systematic metaphor analysis as a

method of qualitative research." The Qualitative Report 10.2

(2005): 358-394.

[8] The Systematicity of Metaphorical Concepts, retrieved from

http://theliterarylink.com/metaphors.html

[9] Henry V, William Shakespeare, retrieved from

http://www.schillerinstitut.dk/metafor240312.pdf

[10] 10 Java Regular expressions you should know, by mkyong,

retrieved from

http://www.mkyong.com/regular-expressions/10-java-regular-expression-examples-you-should-know/

[11] USPTO Patent Grant Full Text, retrieved from

https://www.google.com/googlebooks/uspto-patents-grants-text.html#2013

[12] 200 short and sweet metaphor examples, retrieved from

http://literarydevices.net/a-huge-list-of-short-metaphor-examples/

[13] Shakespeare's Metaphors and Similes, from Shakespeare:

His Life, Art, and Characters, Volume I. New York: Ginn and

Co. Available:

http://www.shakespeare-online.com/biography/imagery.html

[14] Shakespeare’s Sonnets, by William Shakespeare,

retrieved from

http://www.sparknotes.com/shakespeare/shakesonnets/section4.rhtml

[15] Shakespeare’s most popular quotes by Shakespeare,

retrieved from

http://www.shakespearemag.com/summer03/dozen.asp

[16] Meta, Milton and Metaphor: Models of Subjective

Experience, by Penny Tompkins and James Lawley, First

published in Rapport, journal of the Association for NLP (UK),

Issue 36, August 1996. Available:](https://image.slidesharecdn.com/70a3ffcd-5ced-43fc-b804-c6716bc9f2cd-161110162233/75/Study_Report-7-2048.jpg)

![http://www.cleanlanguage.co.uk/articles/articles/2/1/Meta-Milton-Metaphor-Models-of-Subjective-Experience/Page1.html

[17] Metaphorically Speaking: Unlocking the meaning of

Shakespeare’s metaphors. Retrieved from

http://teacher.scholastic.com/lessonrepro/reproducibles/profbooks/shakespeare.pdf

[18] Regular Expression Language - Quick Reference, retrieved

from https://msdn.microsoft.com/en-us/library/az24scfc(v=vs.110).aspx

[19] Java Regex - Pattern (java.util.regex.Pattern), retrieved

from http://tutorials.jenkov.com/java-regex/pattern.html

[20] Methods of the Pattern Class, retrieved from

https://docs.oracle.com/javase/tutorial/essential/regex/pattern.html

[21] Java Regex - Matcher (java.util.regex.Matcher), retrieved

from http://tutorials.jenkov.com/java-regex/matcher.html

APPENDIX

Source Code:

import java.io.BufferedReader;
import java.io.FileNotFoundException;
import java.io.FileReader;
import java.io.IOException;
import java.util.ArrayList;
import java.util.StringTokenizer;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class Test {

    public static void main(String[] args) {
        ArrayList<String> metaphor_list = new ArrayList<String>();
        ArrayList<String> Shakespeare = new ArrayList<String>();
        readFileIntoArray("metaphors.txt", metaphor_list);
        readFileIntoArray("Shakespeare.txt", Shakespeare);
        int j = 0; // number of (sentence, metaphor) matches found
        for (String s : Shakespeare) {
            for (String m : metaphor_list) {
                // Drop the "." appended by readFileIntoArray and skip empty entries.
                String phrase = m.trim();
                if (phrase.endsWith(".")) {
                    phrase = phrase.substring(0, phrase.length() - 1).trim();
                }
                if (phrase.isEmpty()) {
                    continue;
                }
                // Quote the metaphor so characters such as '?' or '(' are matched literally.
                Pattern r = Pattern.compile(Pattern.quote(phrase));
                Matcher mr = r.matcher(s);
                if (mr.find()) {
                    System.out.println(m);
                    System.out.printf("%n");
                    j++;
                }
            }
        }
        System.out.println("Number of Metaphors found are : " + j);
        if (j == 0)
            System.out.println("There are no metaphors in the file");
    }

    // Reads a file and splits its text into sentences at each ".", adding them to the list.
    public static void readFileIntoArray(String fileName, ArrayList<String> list) {
        String line = null;
        StringBuilder builder = new StringBuilder();
        // try-with-resources closes the reader even if reading fails.
        try (BufferedReader bufferedReader = new BufferedReader(new FileReader(fileName))) {
            while ((line = bufferedReader.readLine()) != null) {
                // Keep a space between lines so that the last word of one line
                // does not fuse with the first word of the next.
                builder.append(line).append(' ');
            }
        }
        catch (FileNotFoundException ex) {
            System.out.println("Unable to open file '" + fileName + "'");
        }
        catch (IOException ex) {
            System.out.println("Error reading file '" + fileName + "'");
        }
        catch (OutOfMemoryError ex) {
            System.out.println("File occupying too much space, unable to open '" + fileName + "'");
        }
        StringTokenizer stringTokenizer = new StringTokenizer(builder.toString(), ".");
        while (stringTokenizer.hasMoreTokens()) {
            list.add(stringTokenizer.nextToken() + ".");
        }
    }

}](https://image.slidesharecdn.com/70a3ffcd-5ced-43fc-b804-c6716bc9f2cd-161110162233/75/Study_Report-8-2048.jpg)