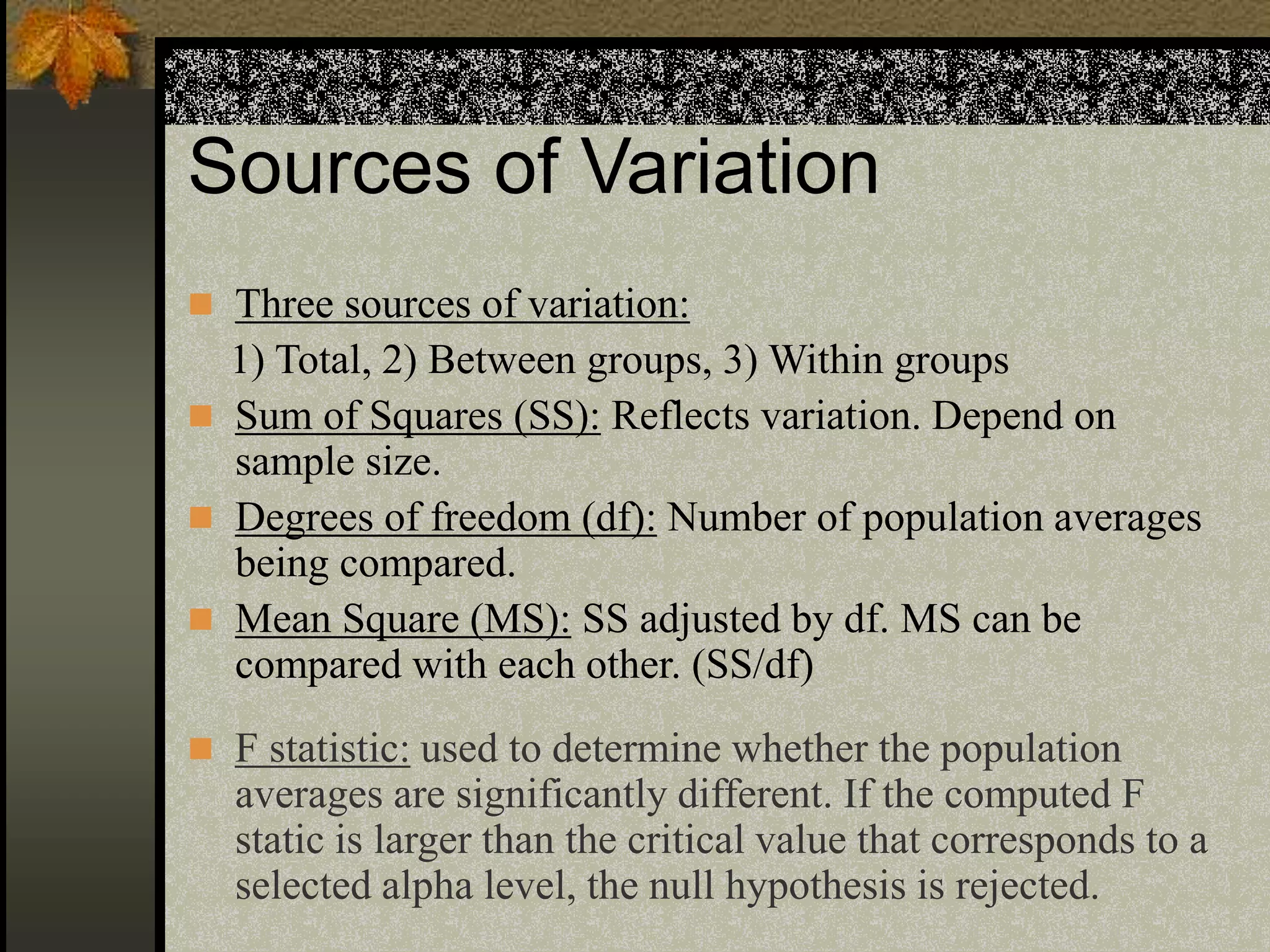

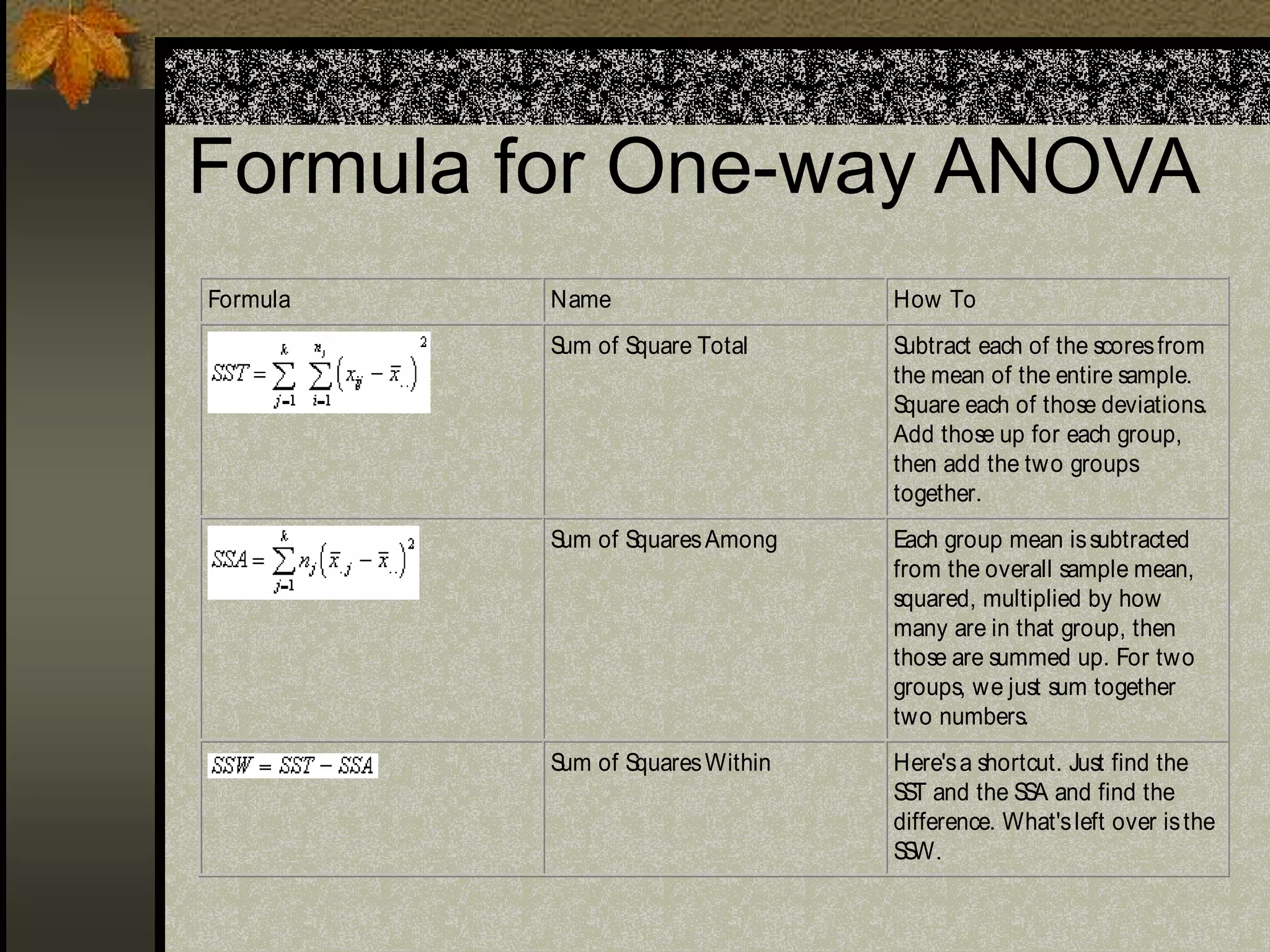

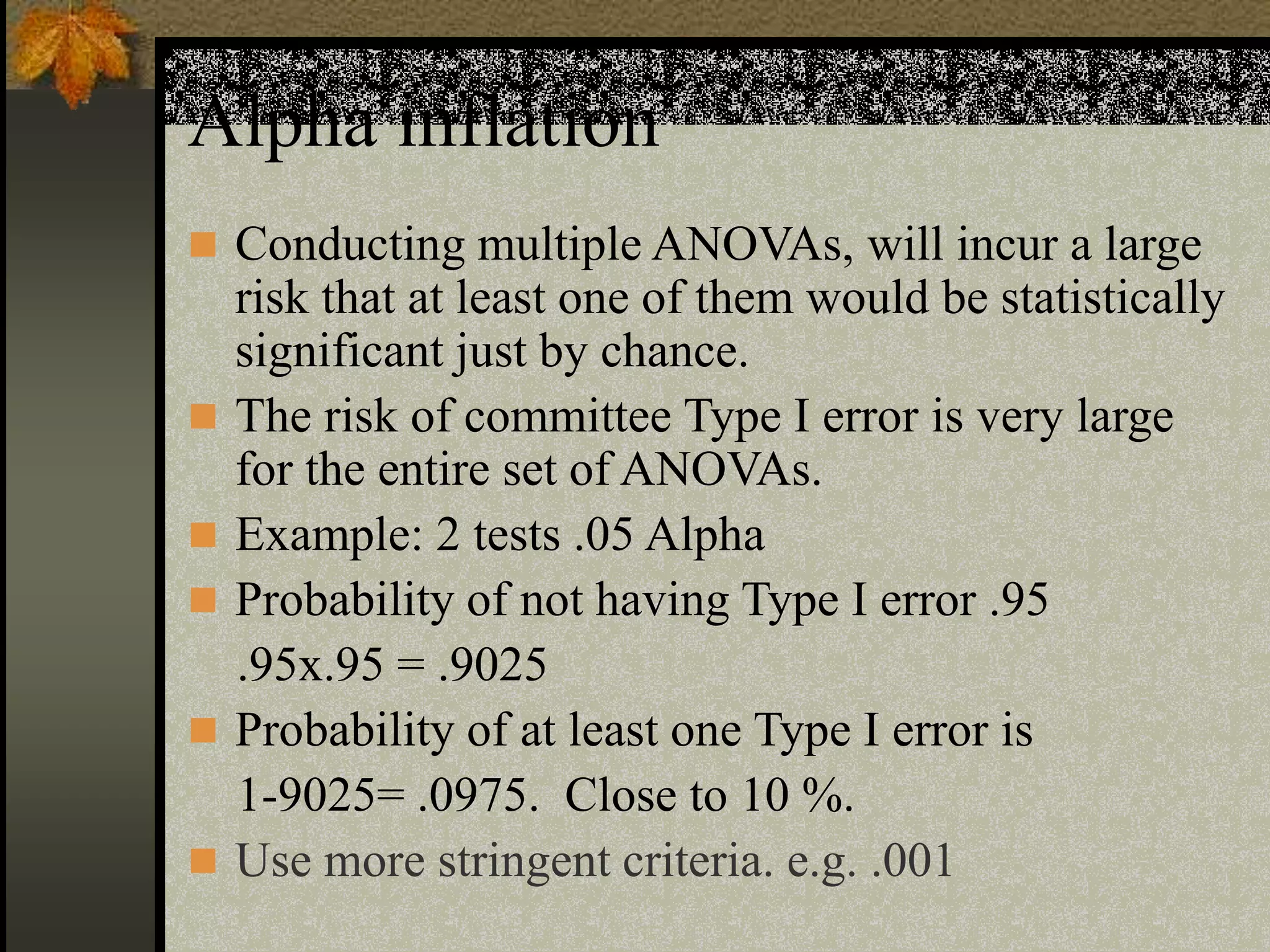

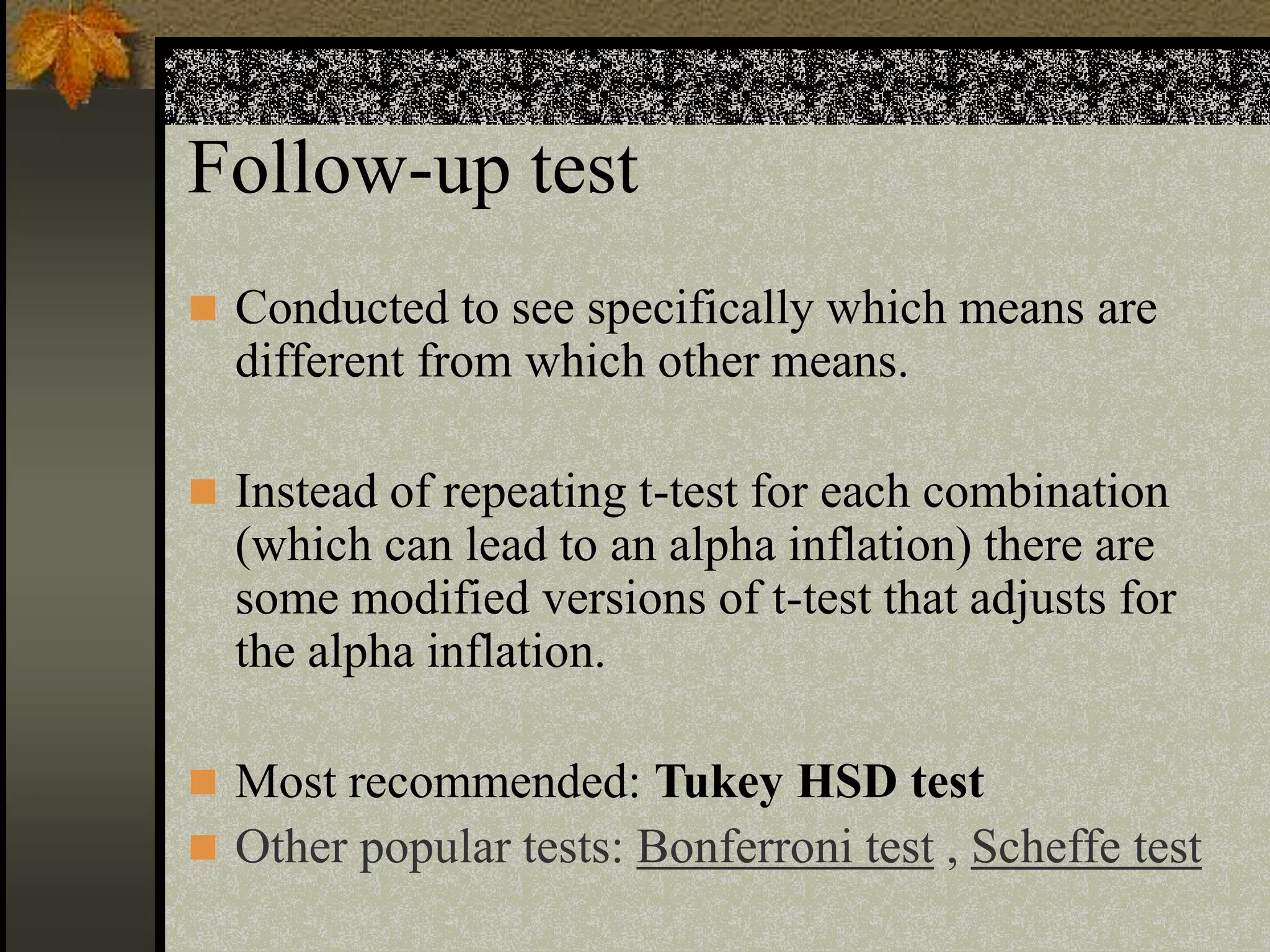

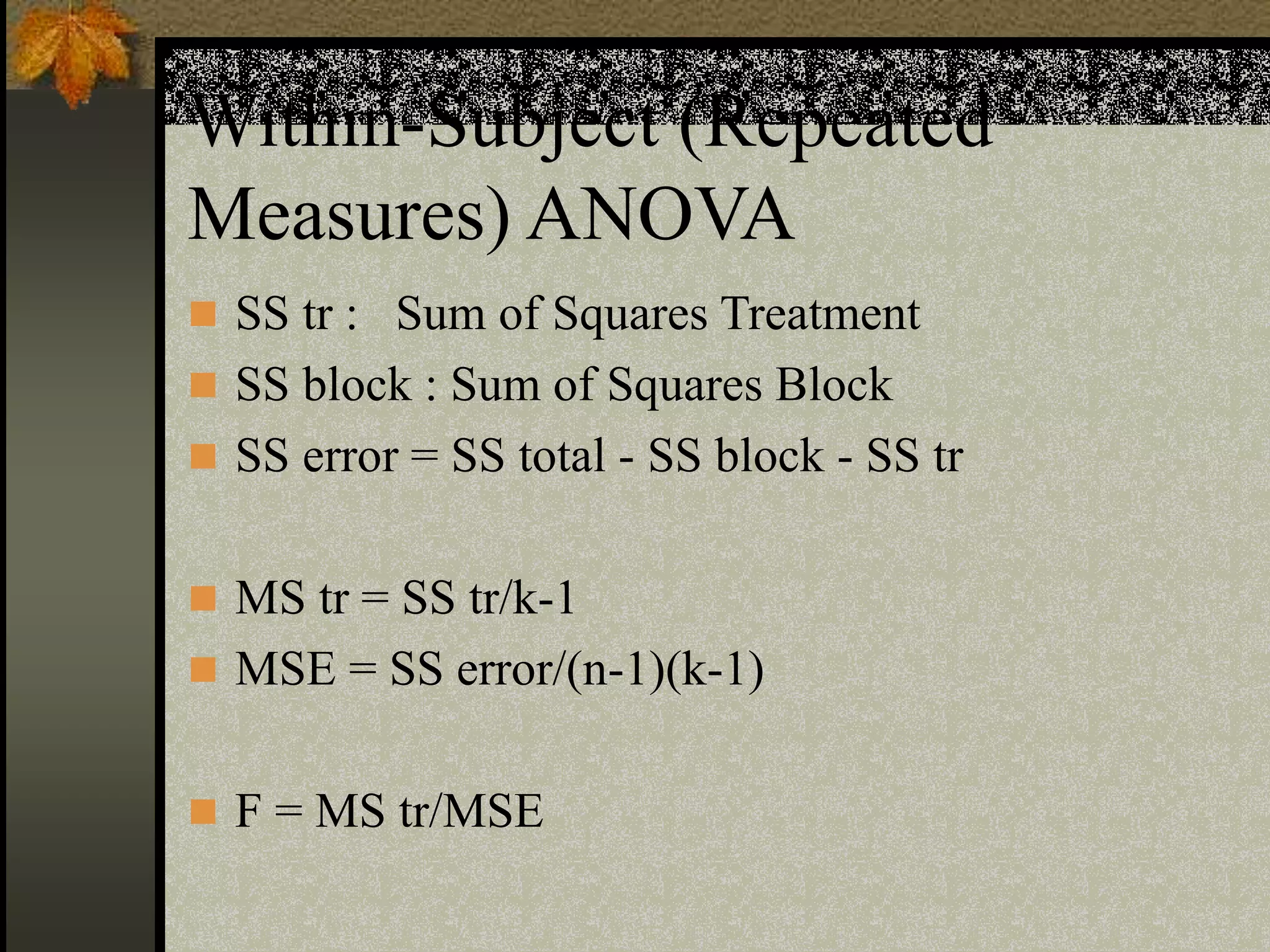

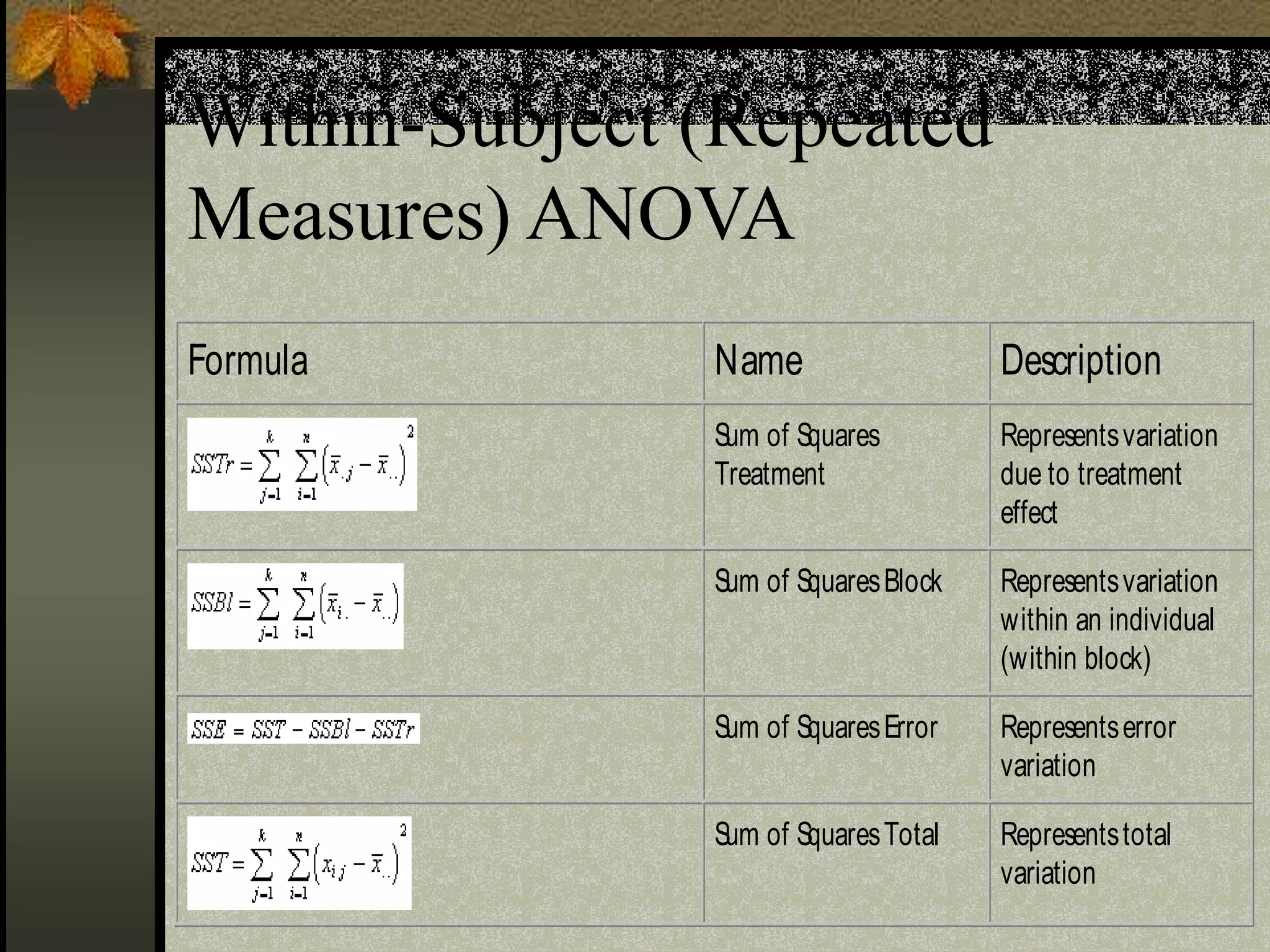

This document gives an overview of t-tests and analysis of variance (ANOVA). It defines three types of t-test: one-sample, independent-samples, and paired-samples. It also covers one-way ANOVA, within-subjects ANOVA, and factorial ANOVA, including main effects and interaction effects. Key concepts such as confidence intervals, significance tests, and the assumption of normality are discussed, and examples illustrate how to set up null and alternative hypotheses and interpret statistical significance.
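
As a rough illustration of the tests named above, the following sketch computes the test statistics with the Python standard library only. The function names and the sample numbers are hypothetical and are not taken from the document. The independent-samples version assumes equal variances (the pooled Student's form). Turning a statistic into a p-value additionally needs the t or F distribution, which is left out here.

```python
import math
from statistics import mean, stdev


def one_sample_t(sample, mu0):
    """t statistic for H0: population mean == mu0 (df = n - 1)."""
    n = len(sample)
    return (mean(sample) - mu0) / (stdev(sample) / math.sqrt(n))


def paired_t(before, after):
    """Paired-samples t: a one-sample test of the pairwise differences against 0."""
    diffs = [a - b for a, b in zip(after, before)]
    return one_sample_t(diffs, 0.0)


def independent_t(x, y):
    """Independent-samples t with pooled variance (df = n1 + n2 - 2)."""
    n1, n2 = len(x), len(y)
    pooled_var = ((n1 - 1) * stdev(x) ** 2 + (n2 - 1) * stdev(y) ** 2) / (n1 + n2 - 2)
    return (mean(x) - mean(y)) / math.sqrt(pooled_var * (1 / n1 + 1 / n2))


def one_way_anova_f(*groups):
    """One-way ANOVA F: between-group mean square over within-group mean square."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = mean([v for g in groups for v in g])
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((v - mean(g)) ** 2 for g in groups for v in g)
    return (ss_between / (k - 1)) / (ss_within / (n_total - k))


if __name__ == "__main__":
    # Made-up data, for illustration only.
    group_a = [1, 2, 3]
    group_b = [4, 5, 6]
    print("one-sample t:", one_sample_t([2, 4, 6], mu0=2))
    print("paired t:", paired_t(before=group_a, after=[2, 4, 6]))
    print("independent t:", independent_t(group_a, group_b))
    print("one-way ANOVA F:", one_way_anova_f(group_a, group_b))
```

With exactly two groups, the one-way ANOVA F statistic equals the square of the pooled independent-samples t statistic, which is a convenient sanity check on both functions.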