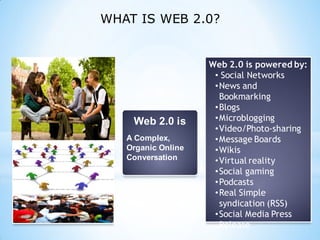

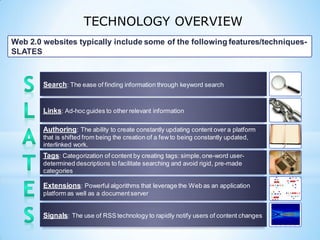

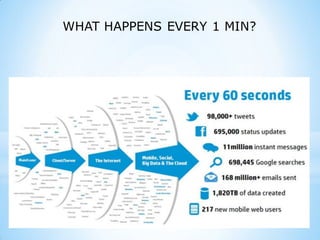

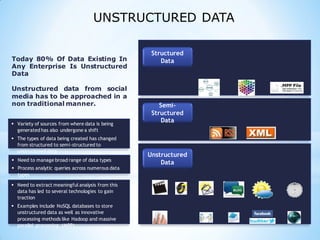

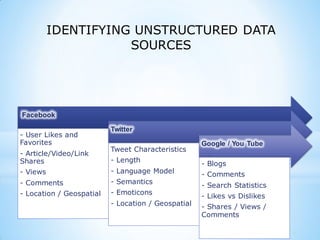

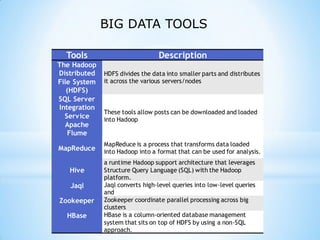

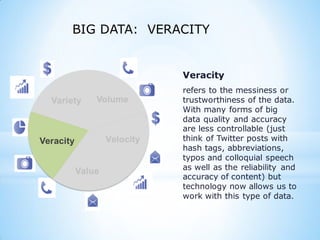

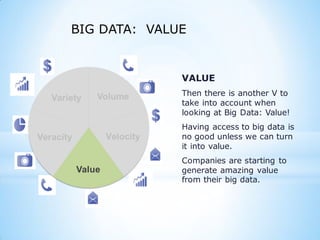

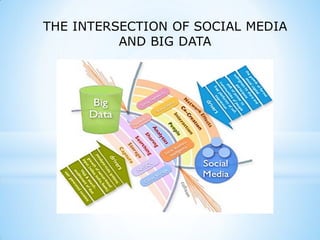

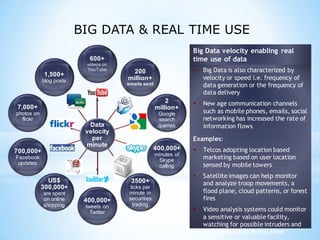

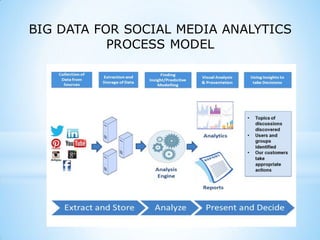

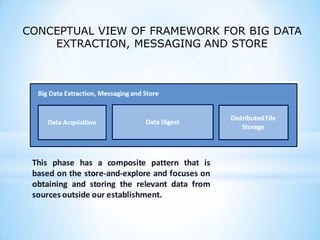

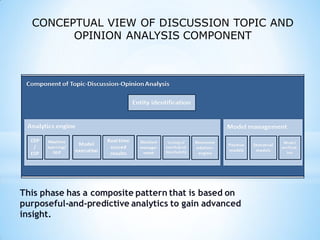

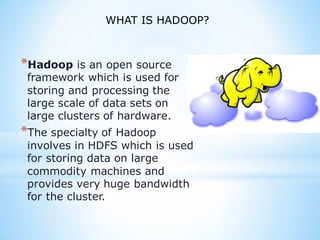

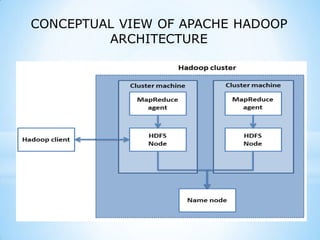

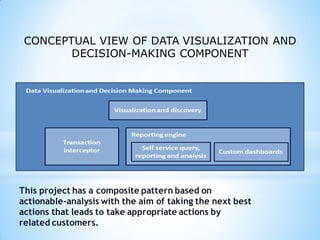

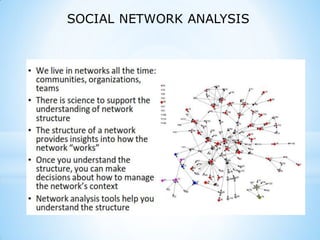

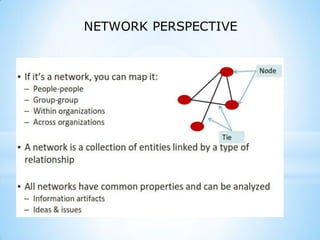

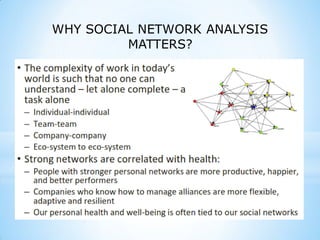

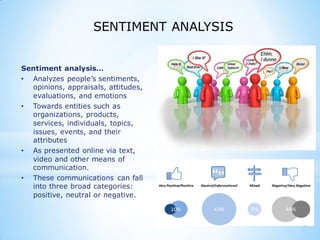

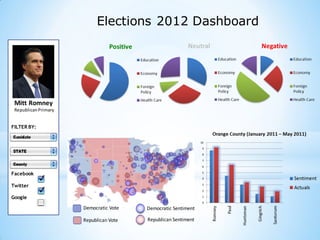

This document provides an overview of social media and big data analytics. It covers key concepts such as Web 2.0, social media platforms, and the five characteristics of big data: volume, velocity, variety, veracity, and value. It also discusses how social media data can be extracted and analyzed using big data tools such as Hadoop and techniques such as social network analysis and sentiment analysis, and it gives examples of analyzing social media data at scale to gain insights and support informed decisions.
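The two analysis techniques named in the summary can be illustrated at toy scale. The sketch below is not taken from the slides: the posts, user names, and word lists are hypothetical, and it uses only the Python standard library rather than Hadoop. It scores each post with a simple word-count lexicon (a very rough form of sentiment analysis) and counts how often each user is mentioned (in-degree, one of the simplest social network measures).

```python
from collections import Counter

# Hypothetical toy data: (author, mentioned_user, text) triples.
POSTS = [
    ("ana", "ben", "love the new release, great work"),
    ("ben", "cat", "terrible outage again, bad day"),
    ("cat", "ben", "great support, happy now"),
    ("dan", "ben", "slow app"),
]

# Hypothetical mini-lexicon; real systems use much larger lexicons or trained models.
POSITIVE = {"love", "great", "happy"}
NEGATIVE = {"terrible", "bad", "slow"}


def sentiment_score(text):
    """Return (number of positive words) - (number of negative words)."""
    words = [w.strip(".,!?").lower() for w in text.split()]
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def mention_in_degree(posts):
    """Count how often each user is mentioned (in-degree in the mention graph)."""
    return Counter(target for _, target, _ in posts)


scores = [sentiment_score(text) for _, _, text in POSTS]
in_degree = mention_in_degree(POSTS)

print(scores)                    # per-post sentiment scores
print(in_degree.most_common(1))  # most-mentioned user
```

At real scale, the same per-post scoring and per-user counting would typically be expressed as map and reduce steps over a distributed dataset, which is where tools like Hadoop come in.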