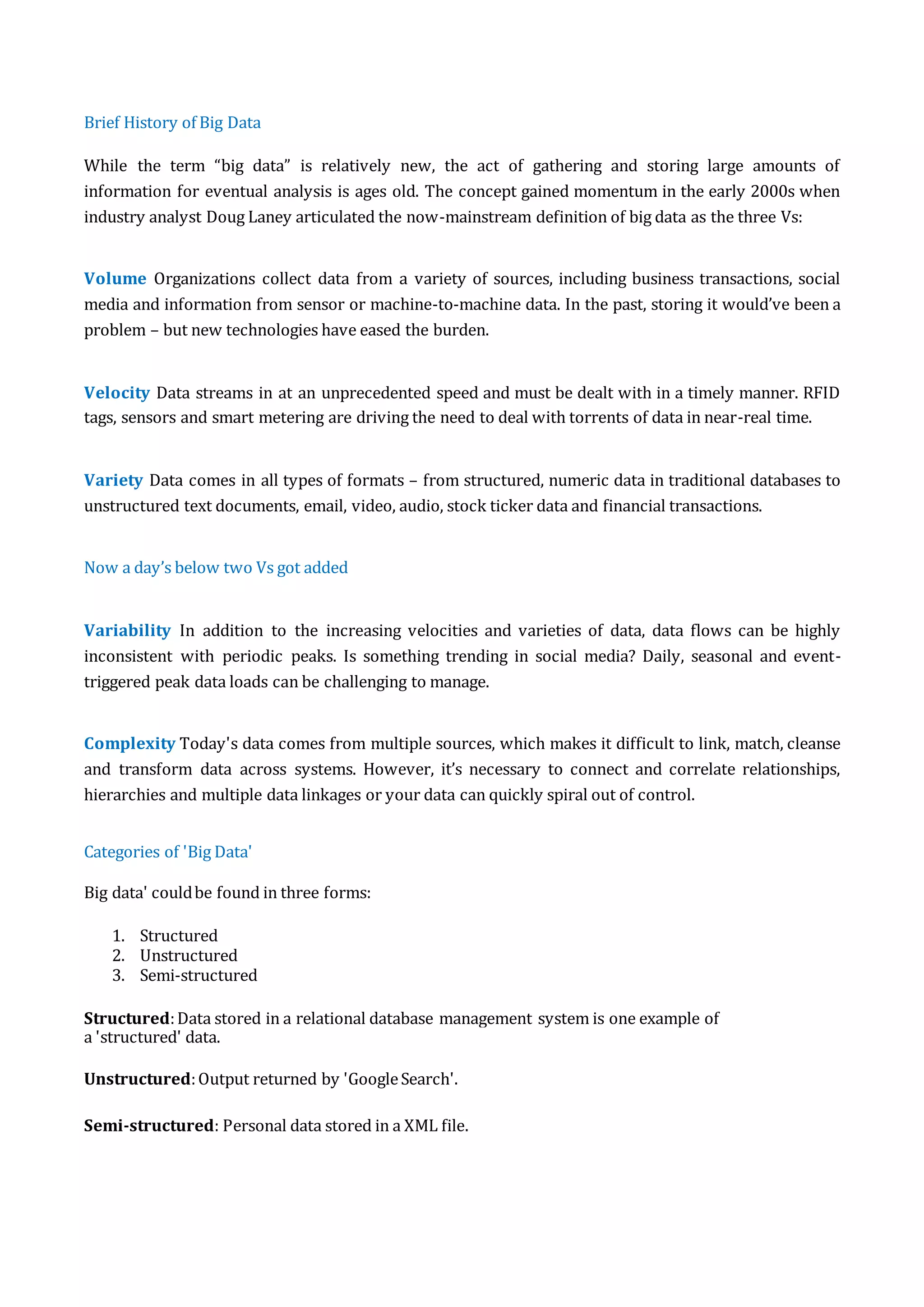

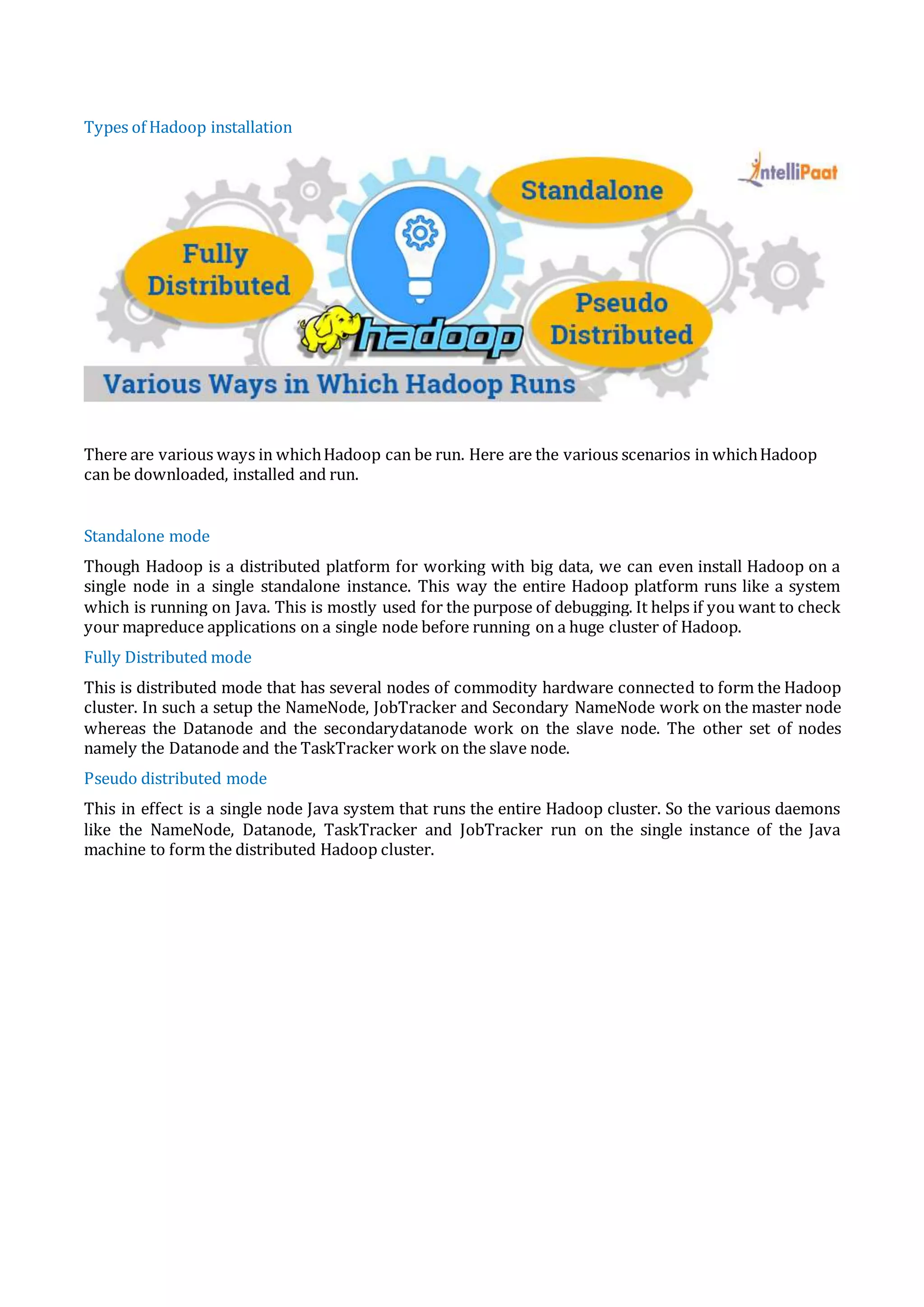

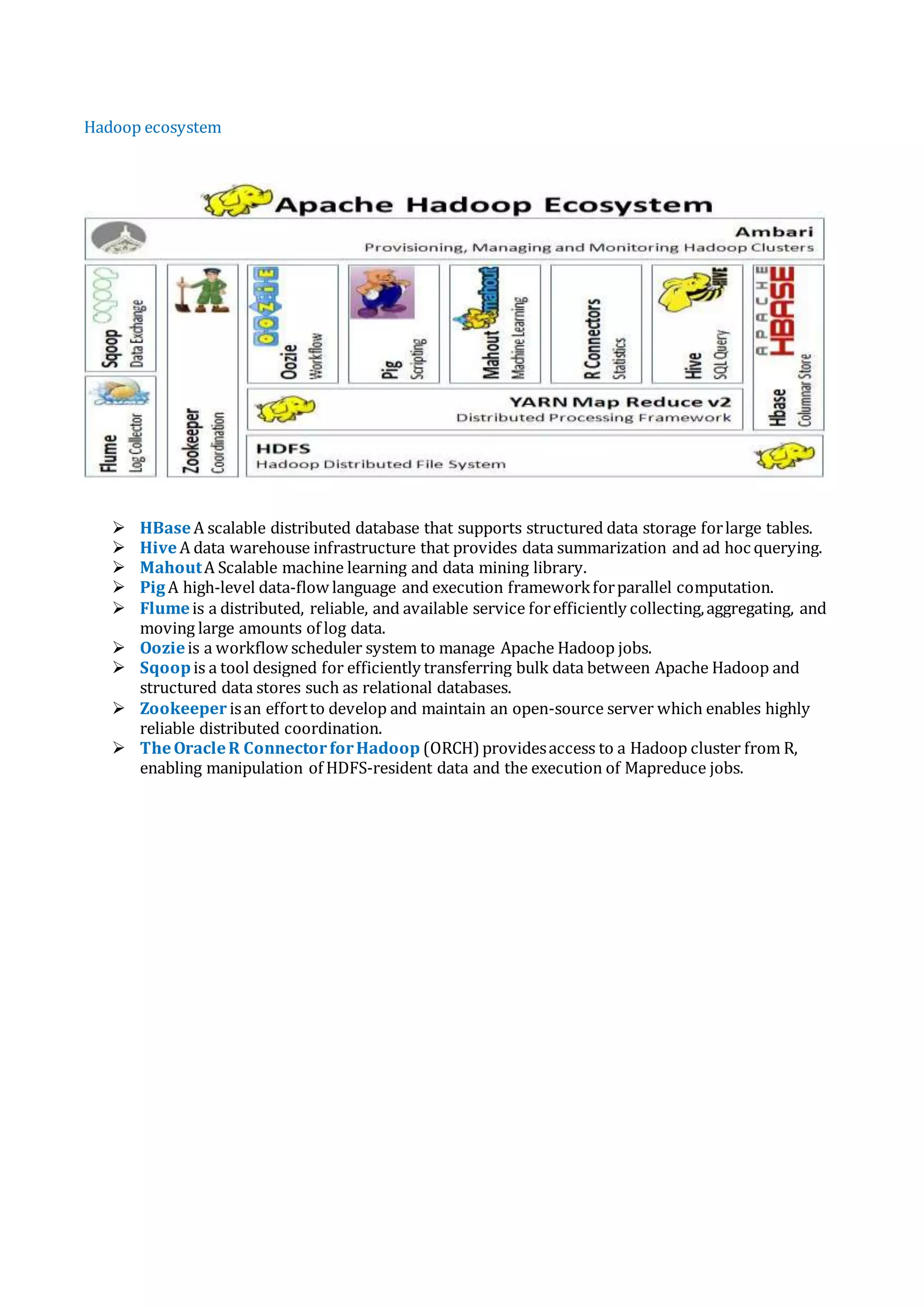

Big data refers to the vast volumes of structured and unstructured data generated every day, at a scale that traditional data management tools struggle to process efficiently. When analyzed with high-powered analytics, it helps businesses reduce costs and time, develop new products, and make better-informed decisions. Hadoop is an open-source framework for storing and processing such data reliably. It distributes files across a cluster of commodity machines with the Hadoop Distributed File System (HDFS) and processes them in parallel with MapReduce. Its scalability, fault tolerance, and flexibility make it well suited to managing the complexities of big data.
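Hadoop's classic processing model, MapReduce, works in three stages:

- a map step emits key/value pairs from each input record;
- a shuffle step groups those pairs by key;
- a reduce step aggregates each group into a result.

The following is a minimal, single-process Python sketch of that flow for a word count. It only illustrates the model and is not Hadoop's Java API. The function names (`map_phase`, `shuffle`, `reduce_phase`, `word_count`) are hypothetical, and in a real cluster these stages run in parallel across many machines.

```python
from collections import defaultdict
from typing import Iterable, Iterator


def map_phase(line: str) -> Iterator[tuple[str, int]]:
    # Hypothetical mapper: emit (word, 1) for every word in one input record.
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[tuple[str, int]]) -> dict[str, list[int]]:
    # Group intermediate values by key, as the framework does between map and reduce.
    groups: dict[str, list[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: list[int]) -> tuple[str, int]:
    # Hypothetical reducer: combine all values for one key into a single count.
    return key, sum(values)


def word_count(lines: Iterable[str]) -> dict[str, int]:
    # Run map over every line, shuffle the pairs, then reduce each key group.
    intermediate = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(intermediate)
    return dict(reduce_phase(k, v) for k, v in sorted(grouped.items()))


if __name__ == "__main__":
    docs = ["big data needs big tools", "Hadoop handles big data"]
    print(word_count(docs))
    # {'big': 3, 'data': 2, 'hadoop': 1, 'handles': 1, 'needs': 1, 'tools': 1}
```

Because the map and reduce functions only see one record or one key group at a time, the framework can split the input across nodes and rerun a failed task elsewhere. This is where the scalability and fault tolerance described above come from.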