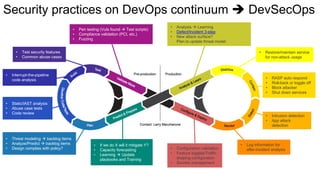

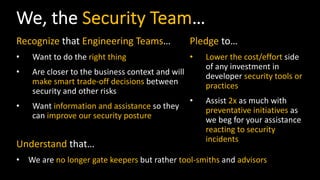

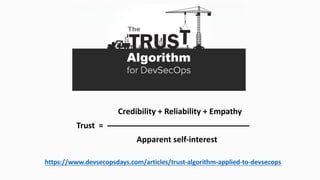

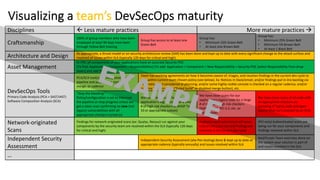

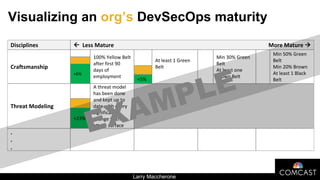

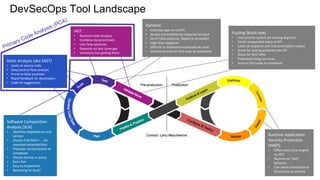

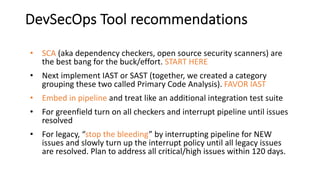

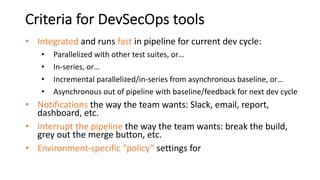

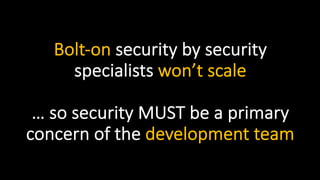

This document proposes a three-part framework for adopting DevSecOps practices and the accompanying culture change. The first part is to win over developers by building trust between the security and development teams. The second part is to make security practices easy for developers to understand and implement. Visual tools are proposed to show maturity levels and guide progress. The third part is to give management transparency into adoption status through regular reporting. The document also recommends easy-to-use security tools integrated into the development pipeline, starting with software composition analysis and then integrating static and interactive application security testing.

![Dev[Sec]Ops is… empowered engineering teams taking ownership of how their product performs in production [including security] (LinkedIn.com/in/LarryMaccherone)](https://image.slidesharecdn.com/whitesourcewebinar5-180508162816/85/Security-at-the-Speed-of-Software-Development-5-320.jpg)