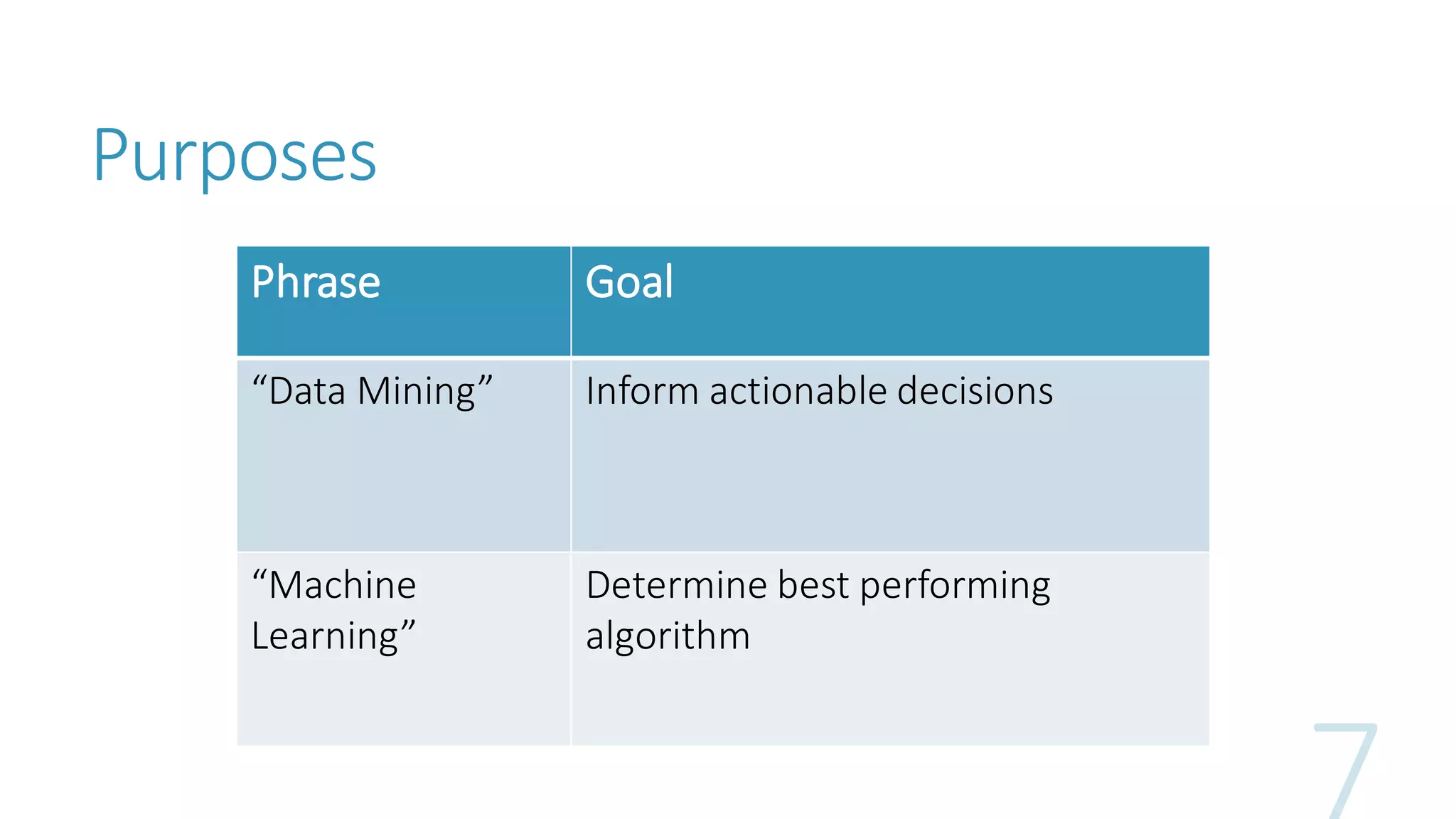

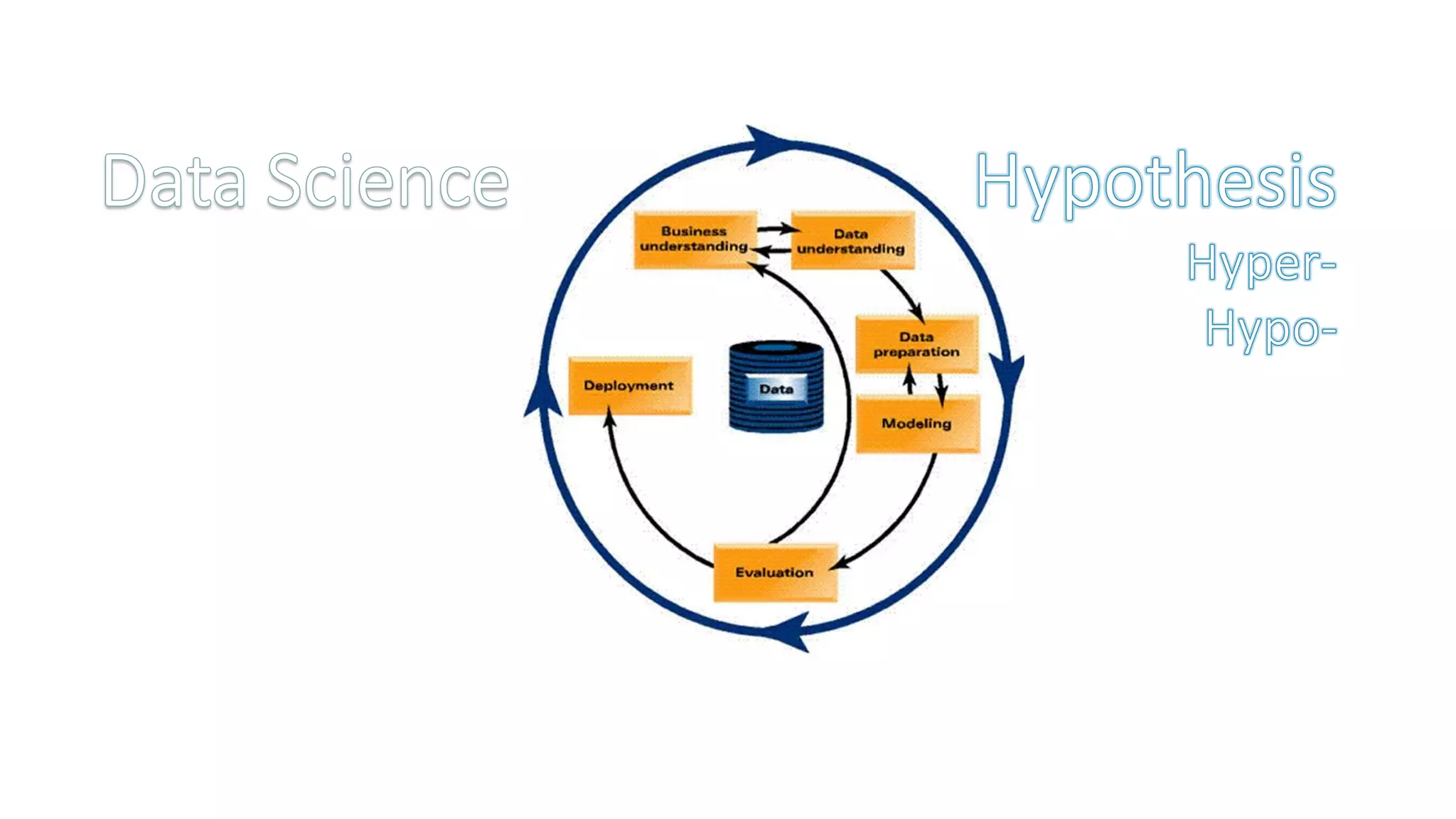

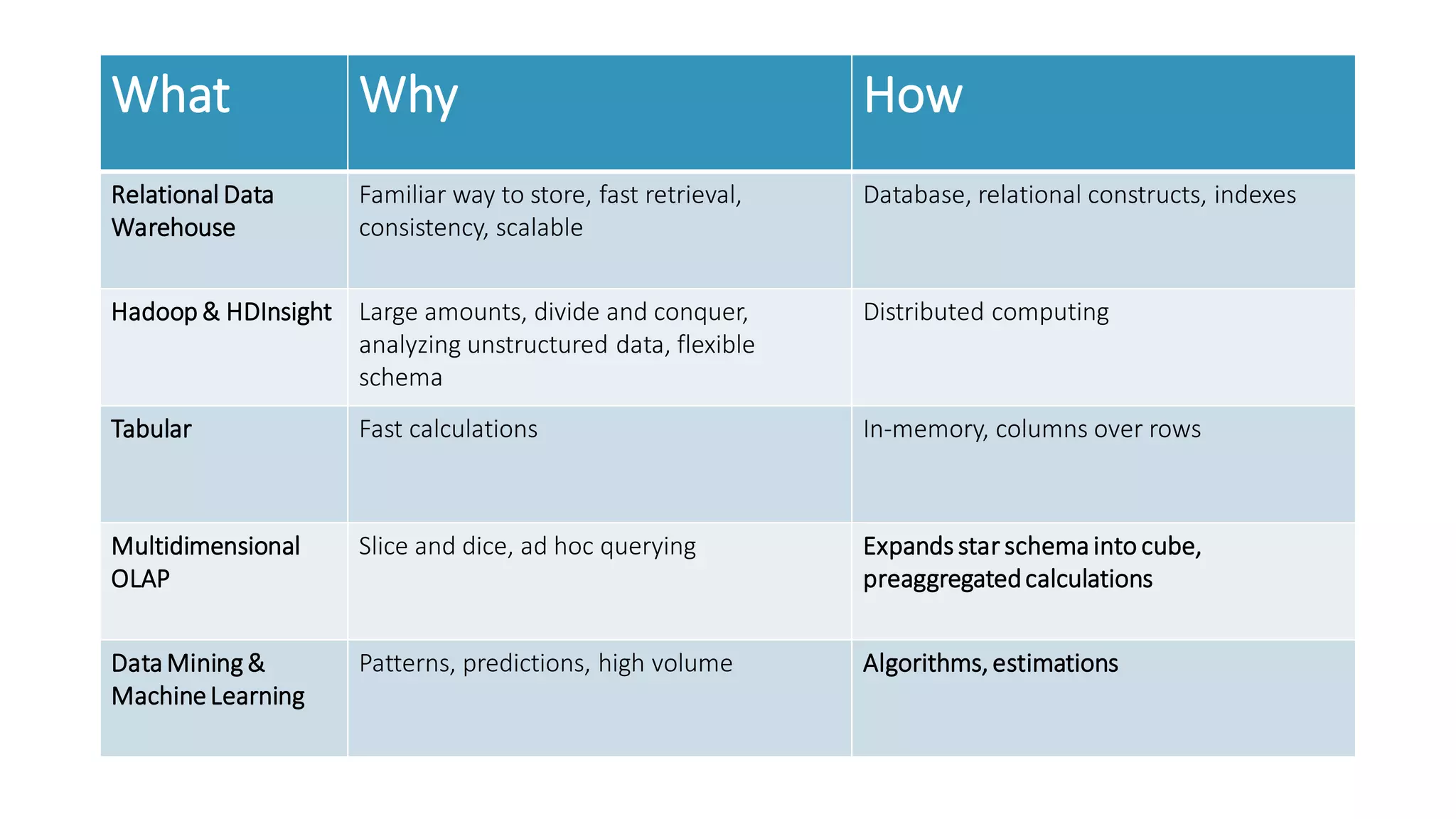

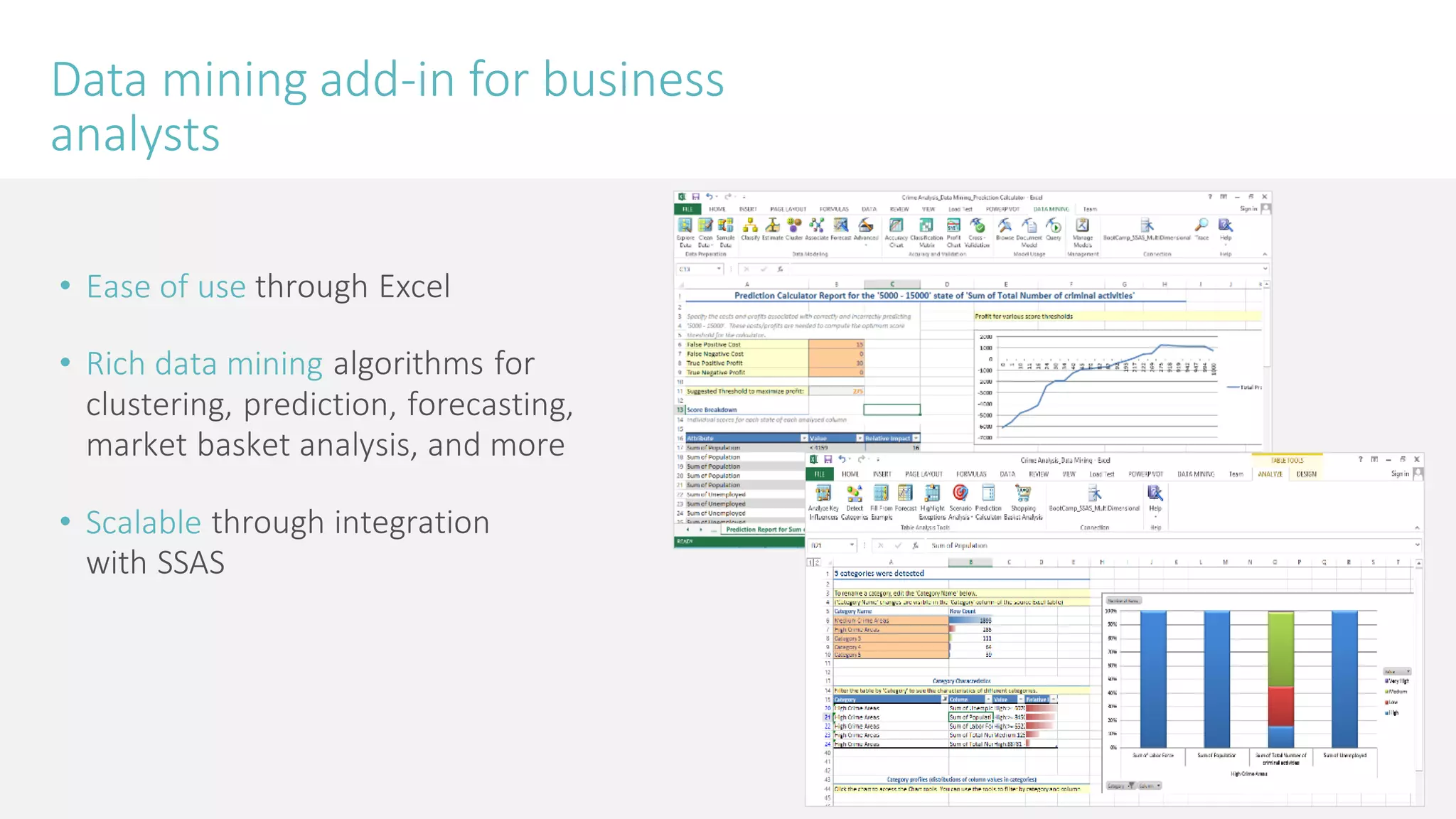

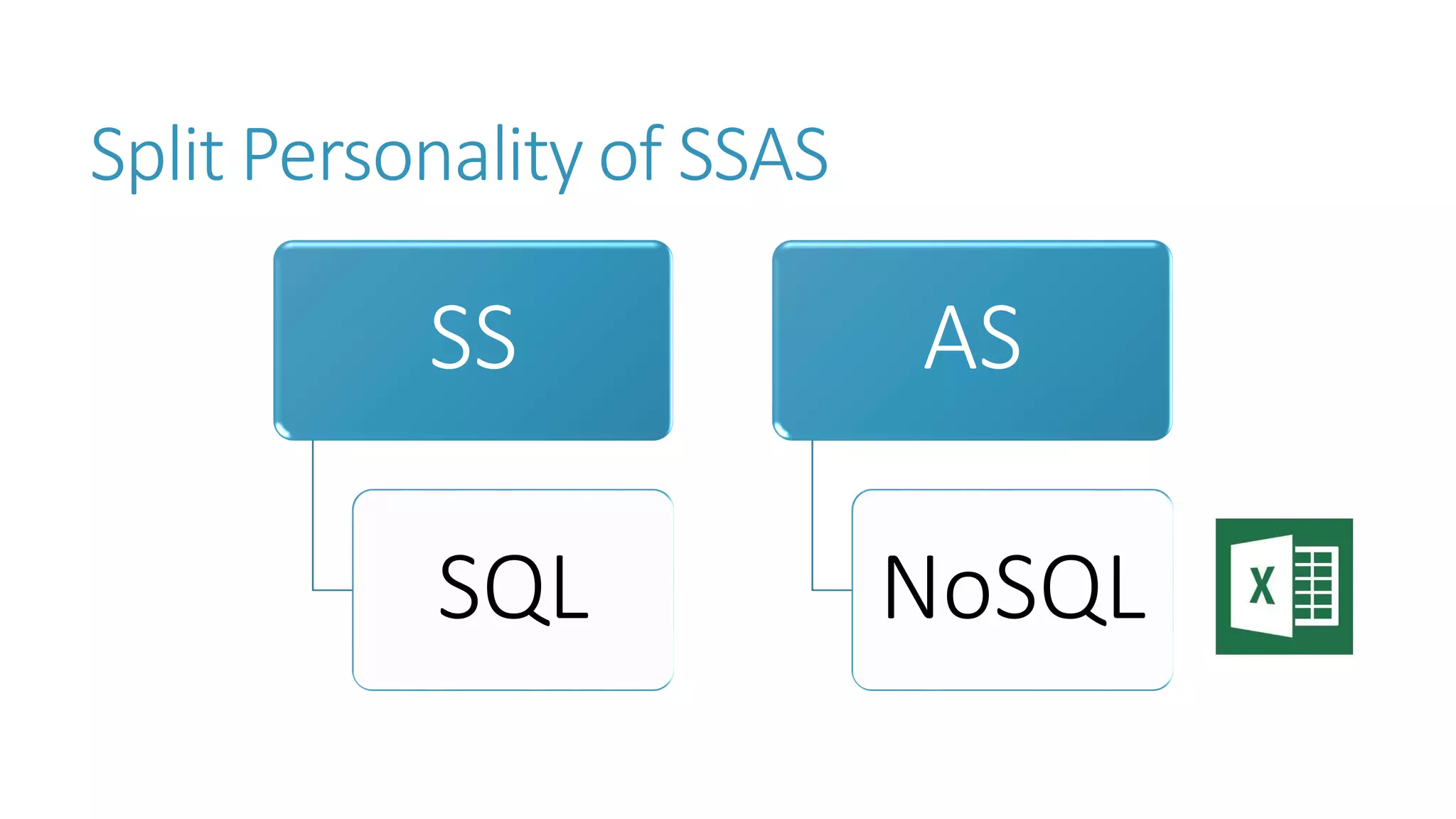

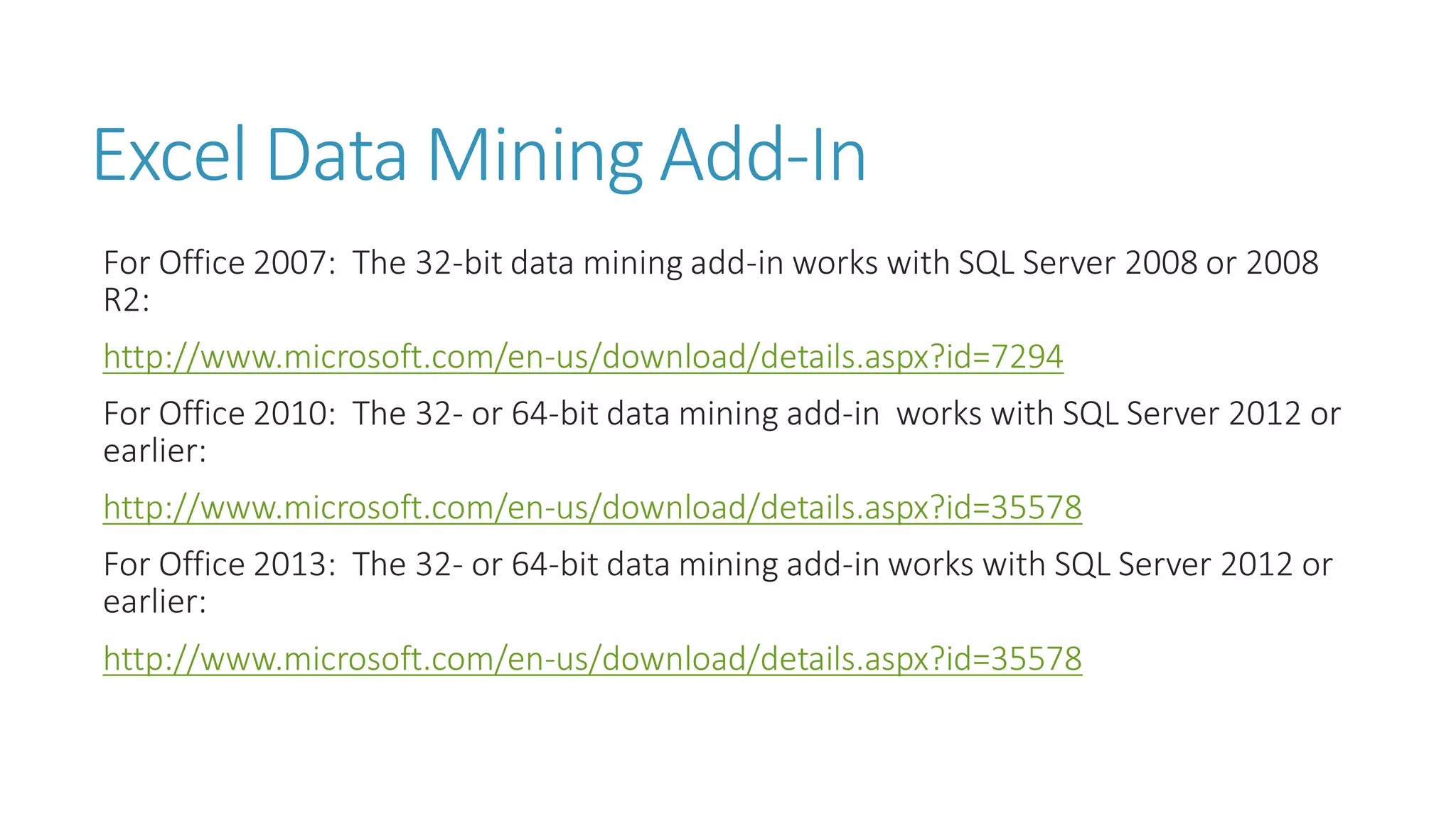

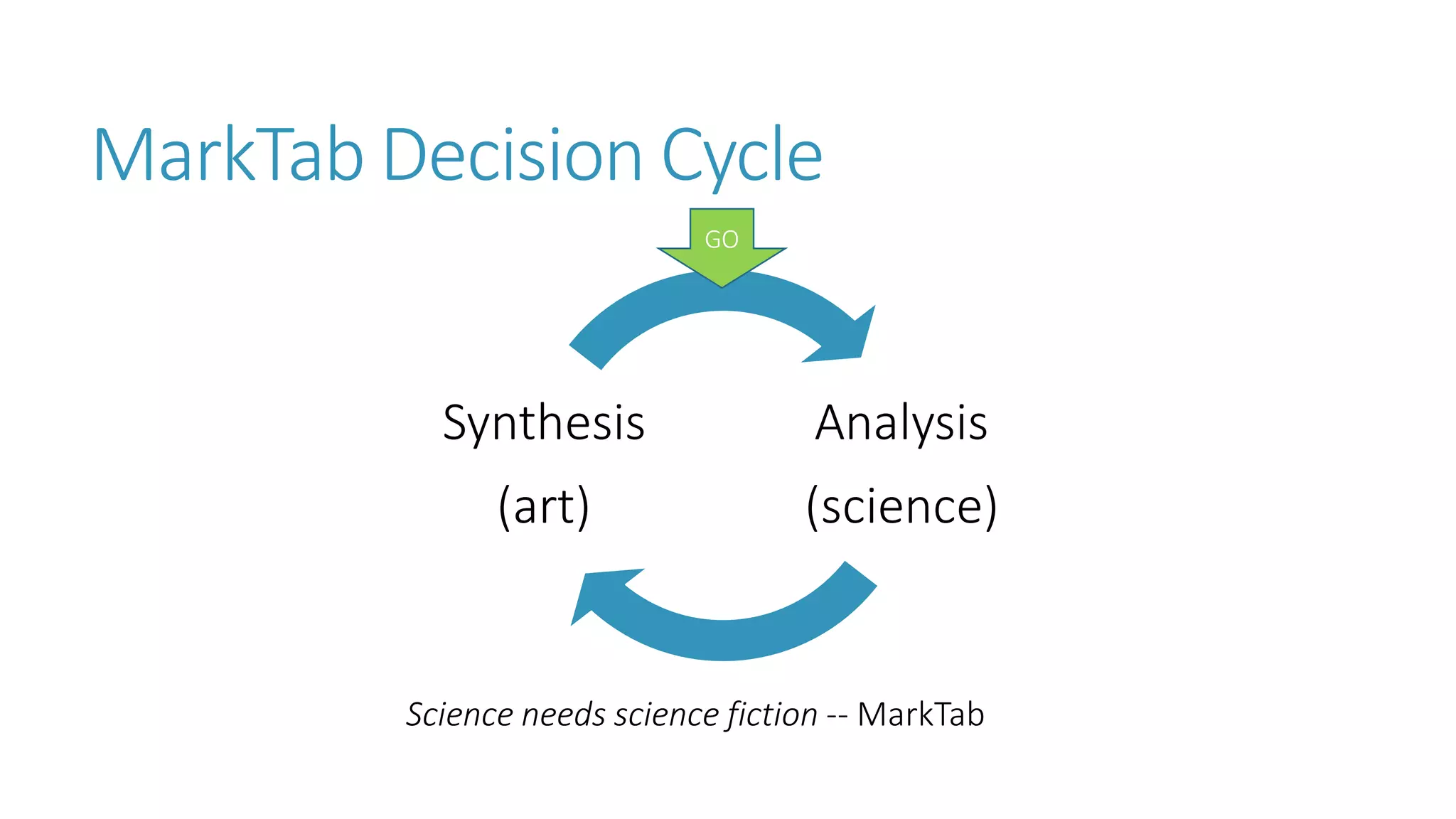

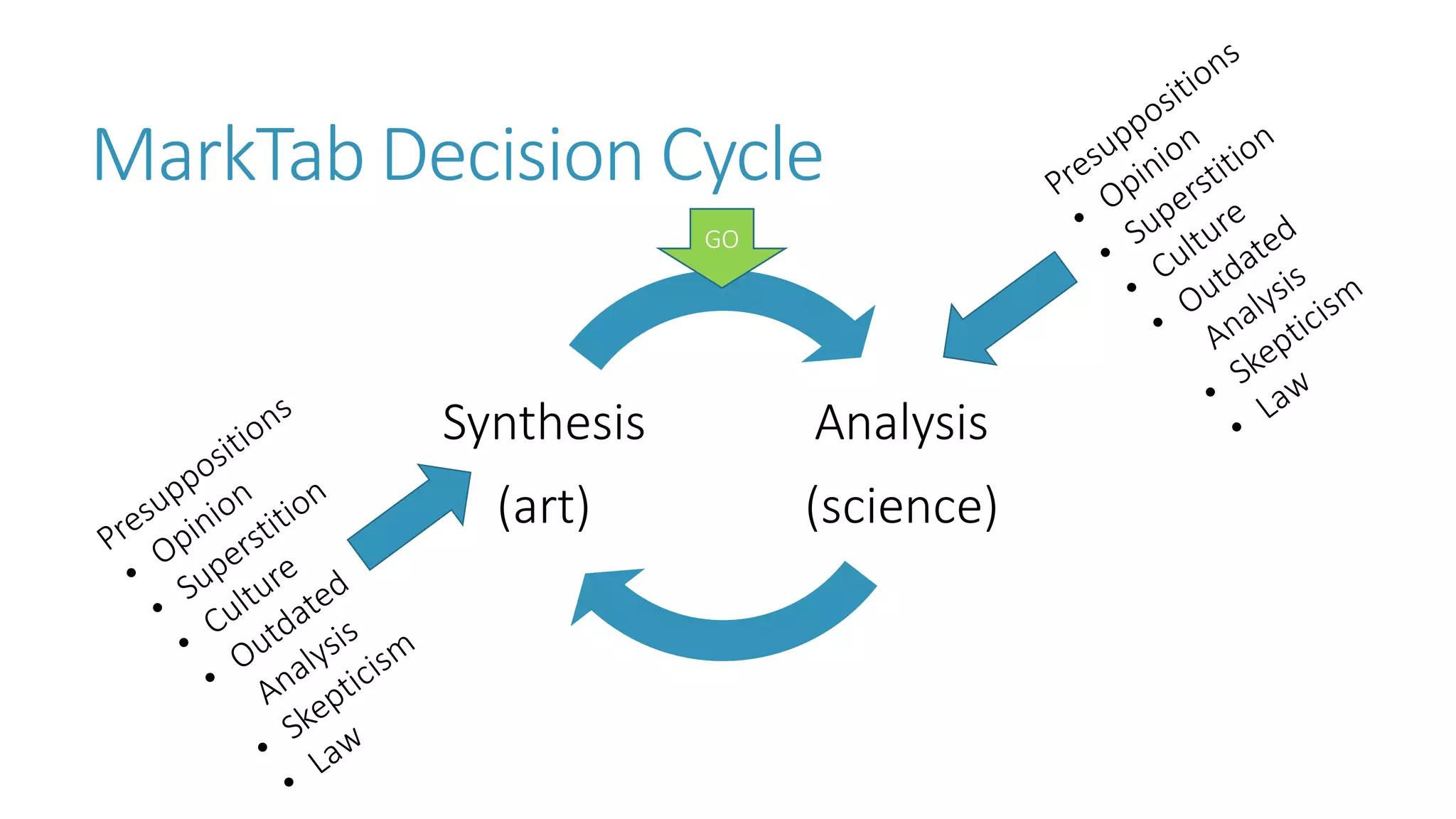

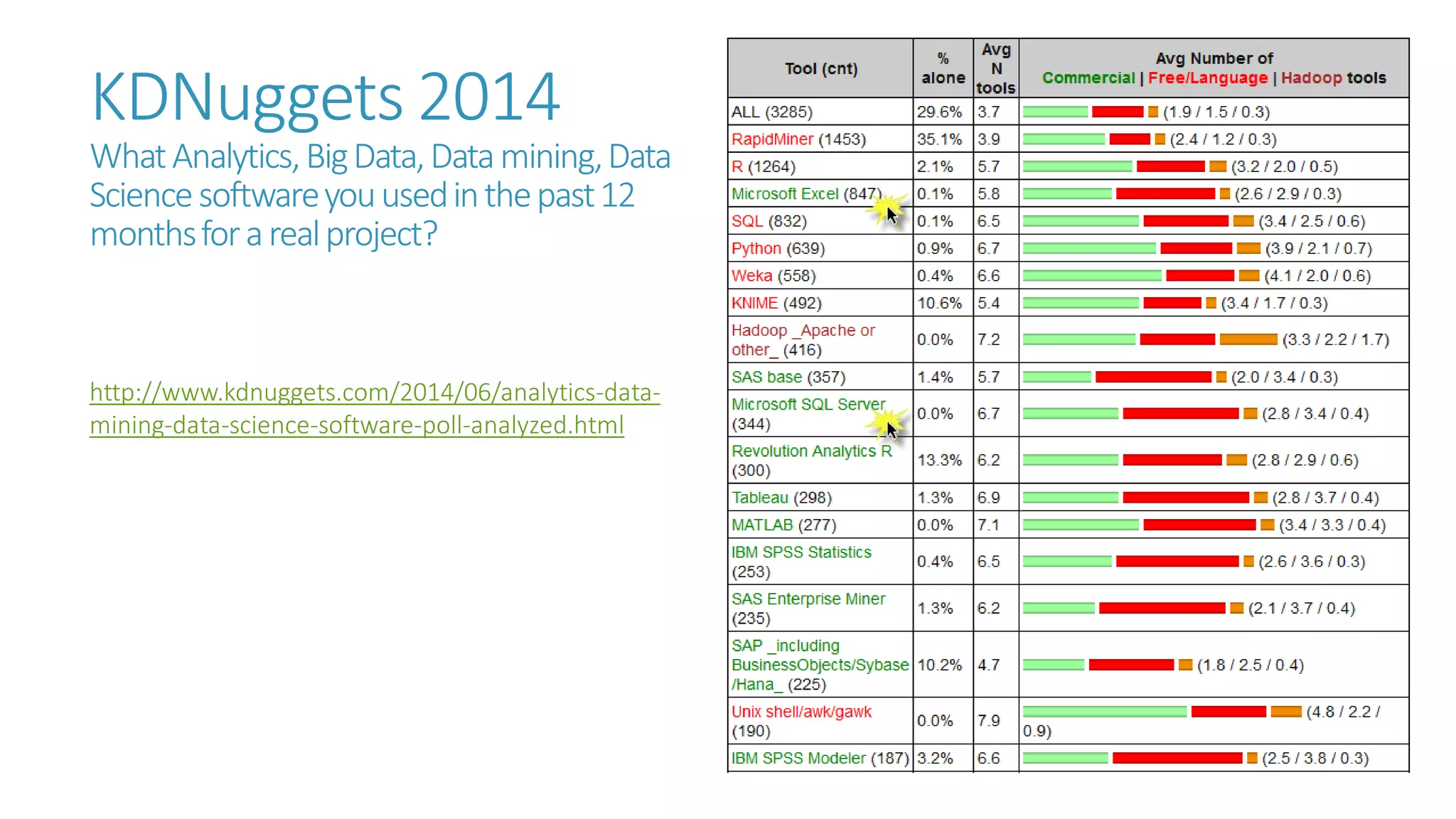

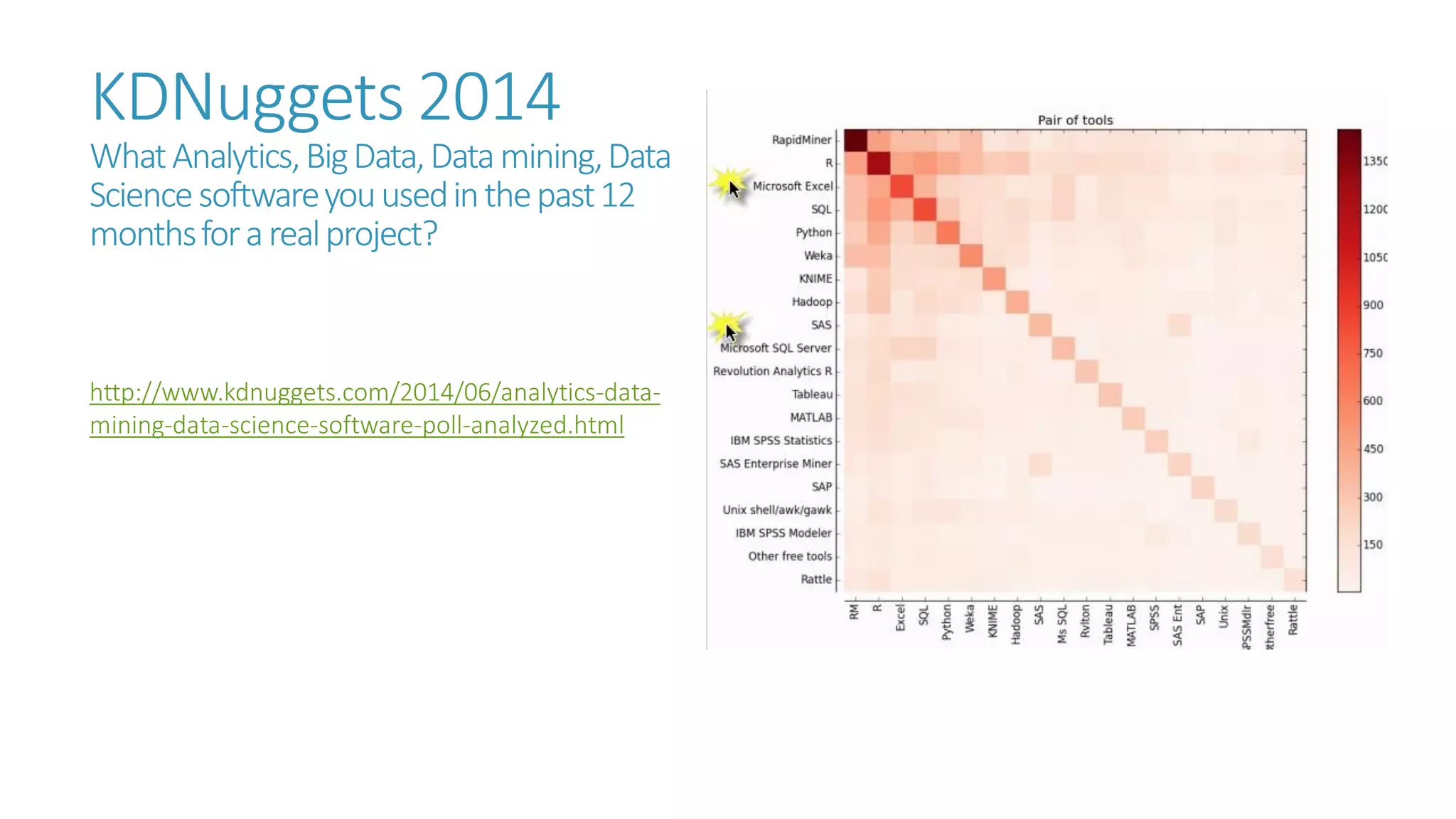

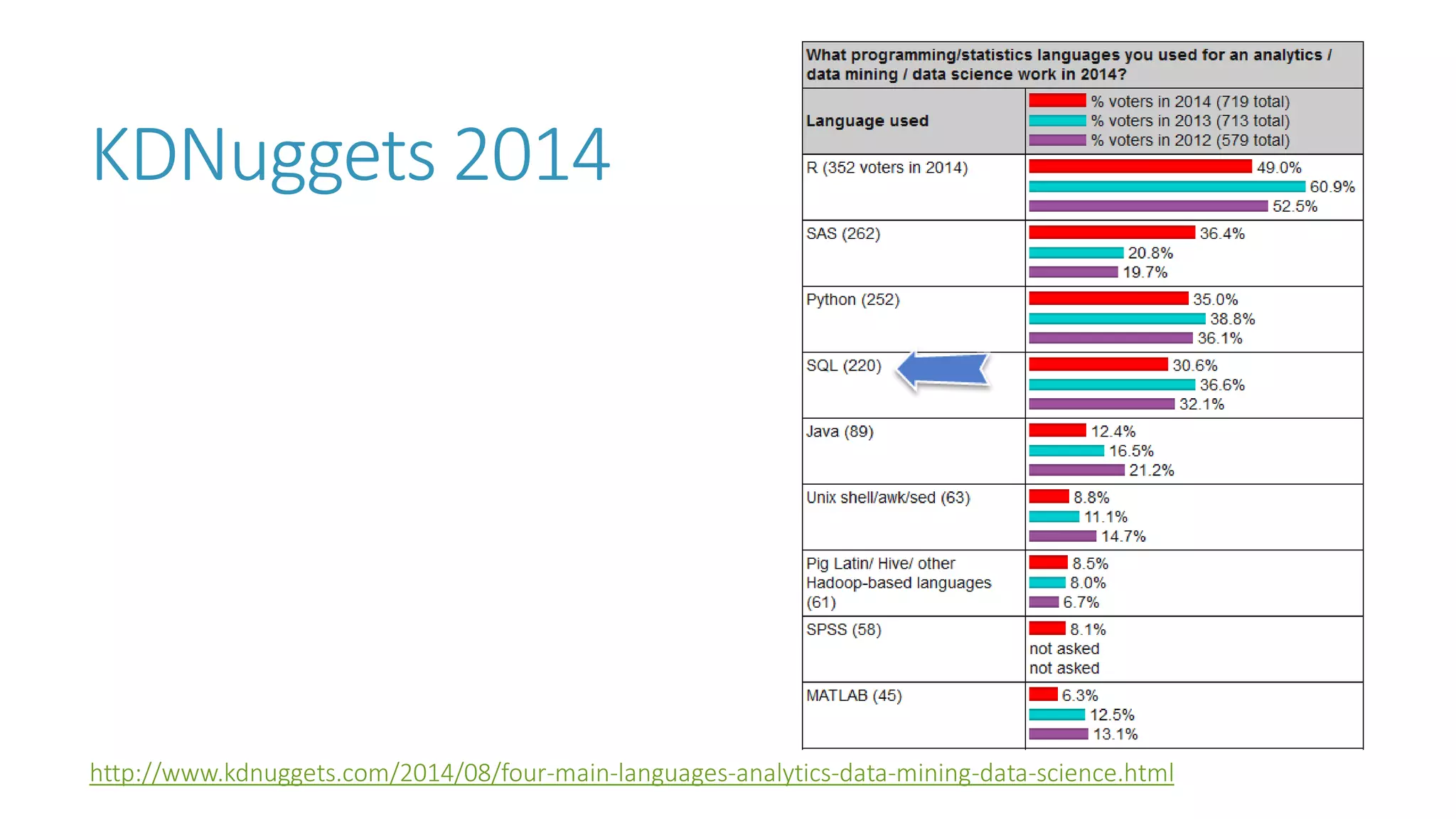

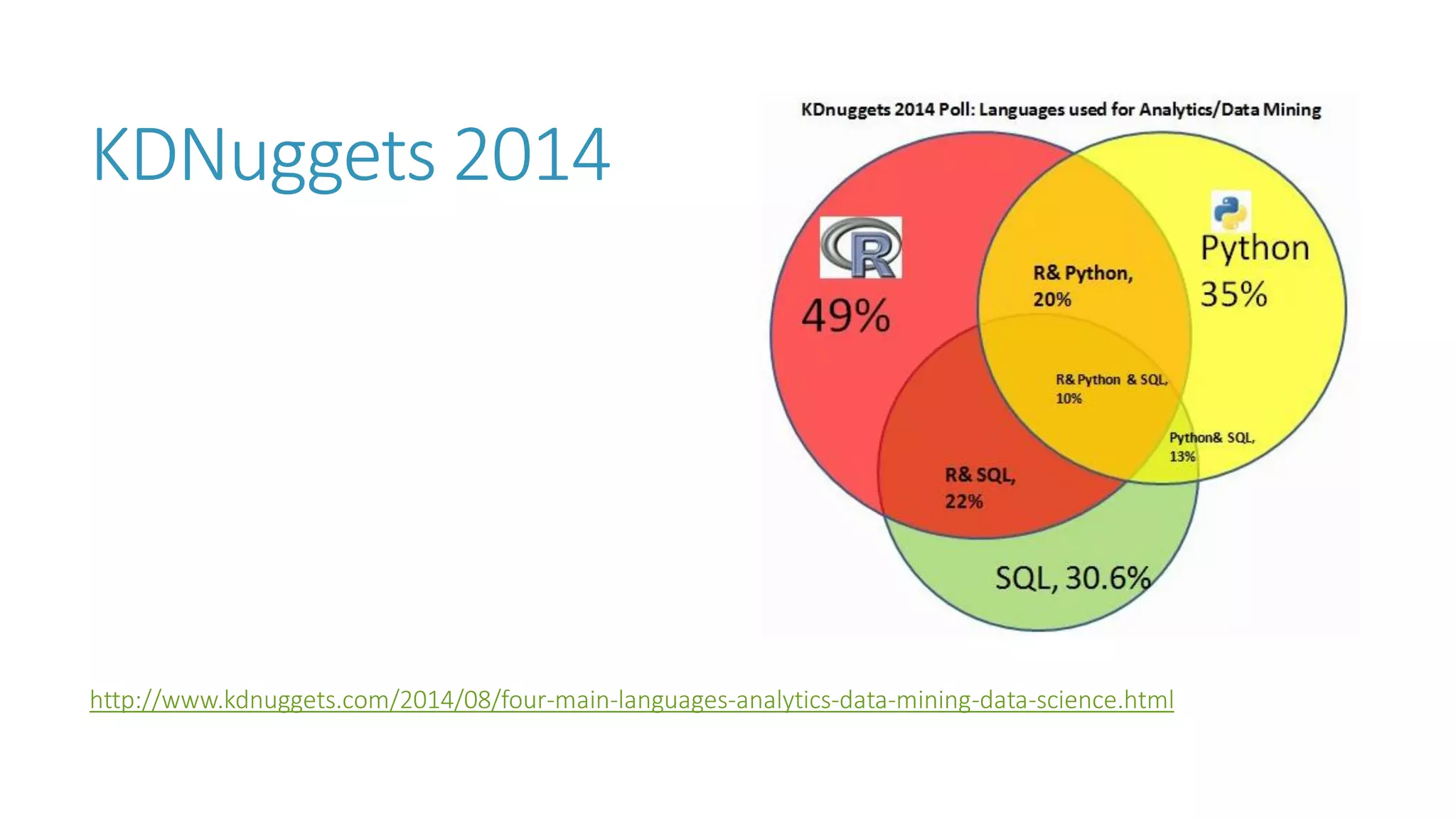

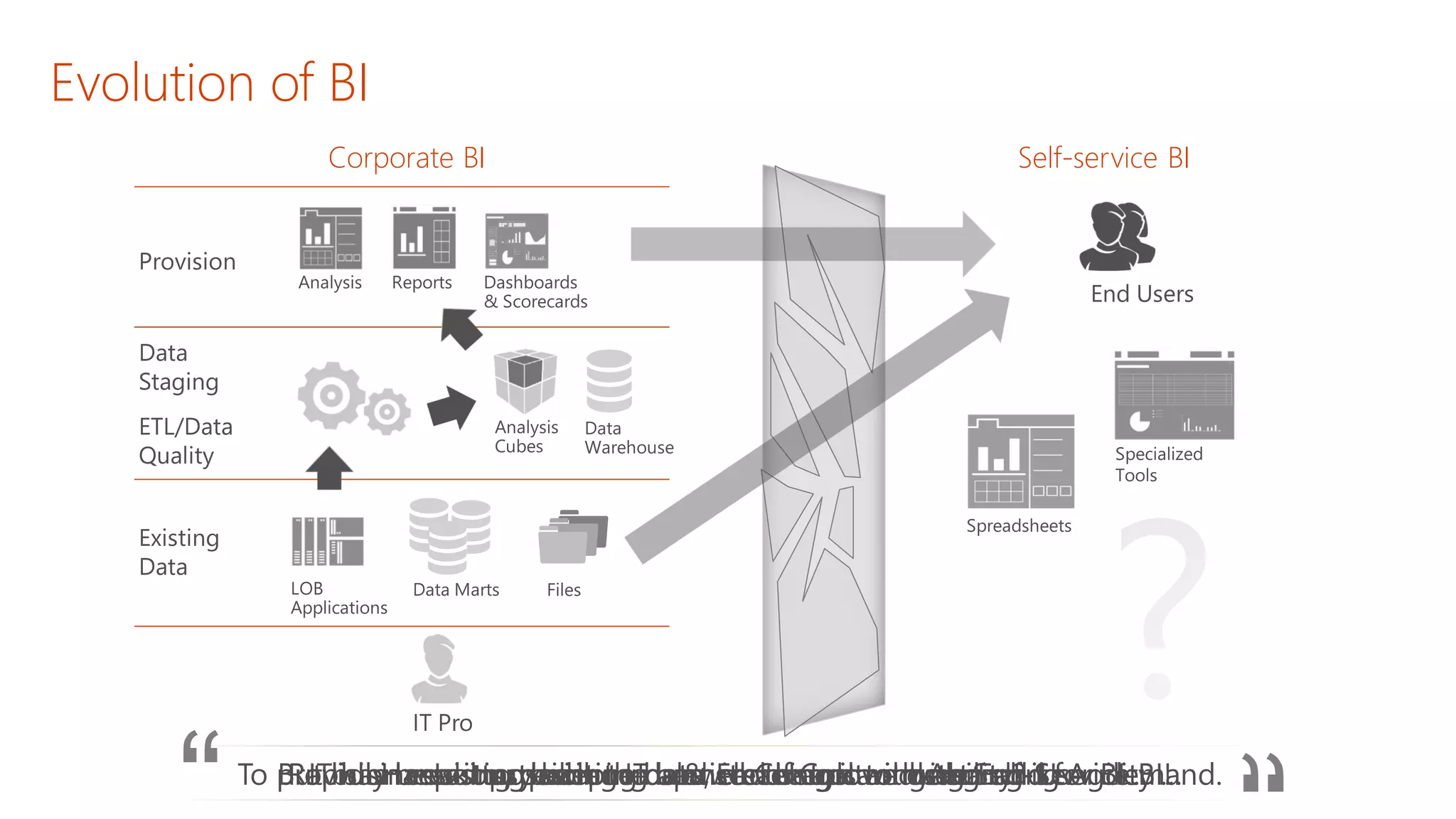

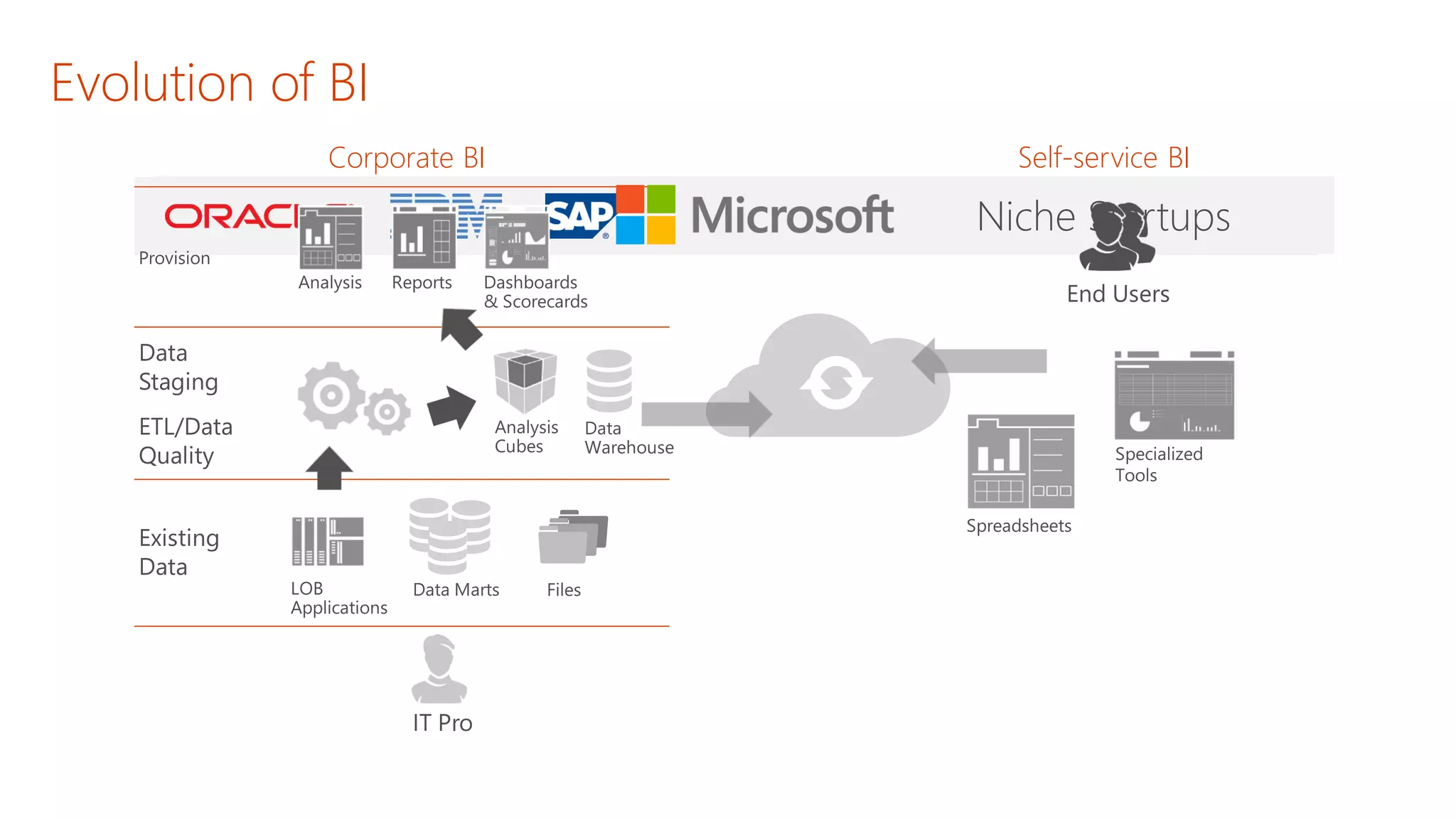

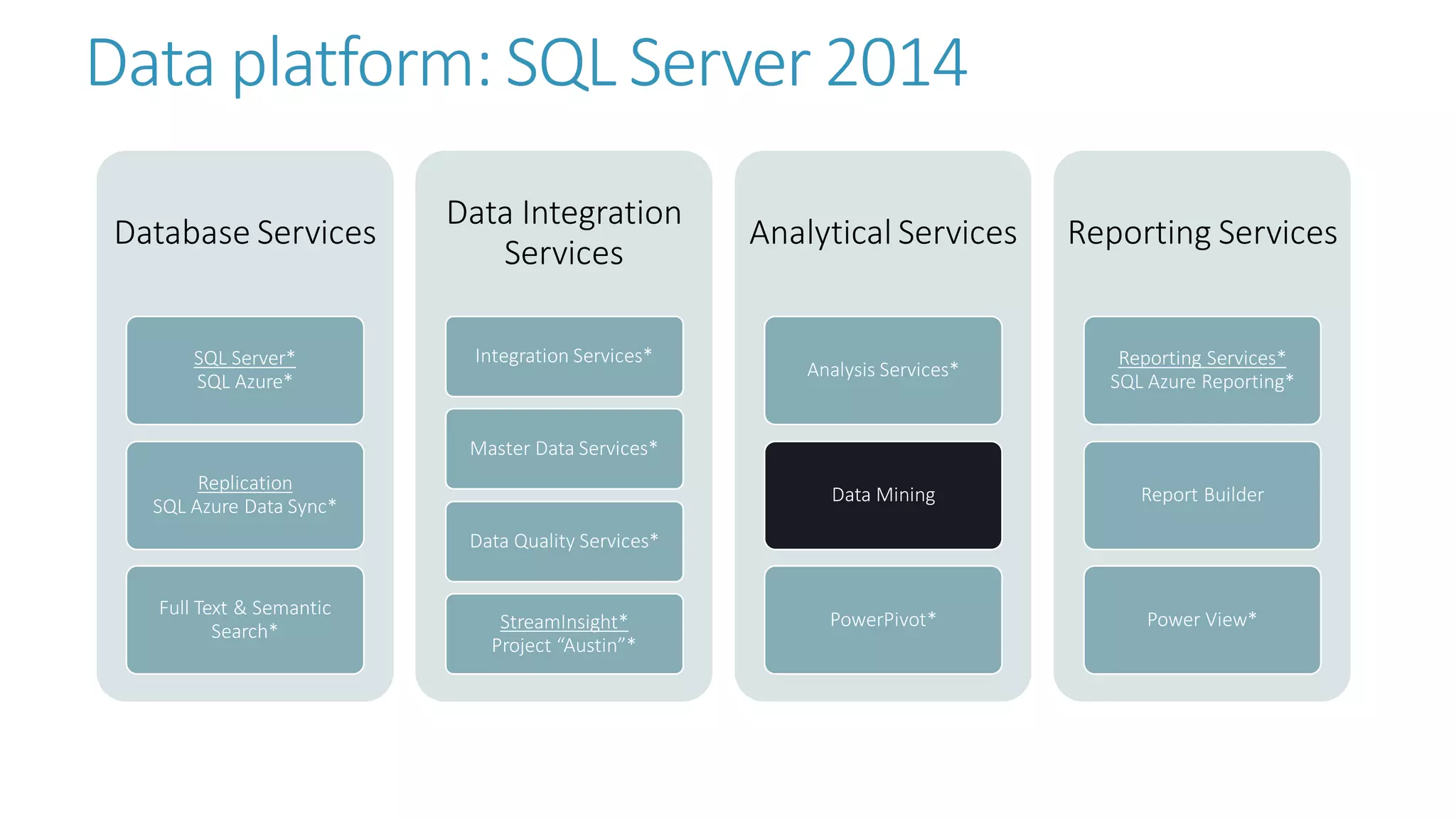

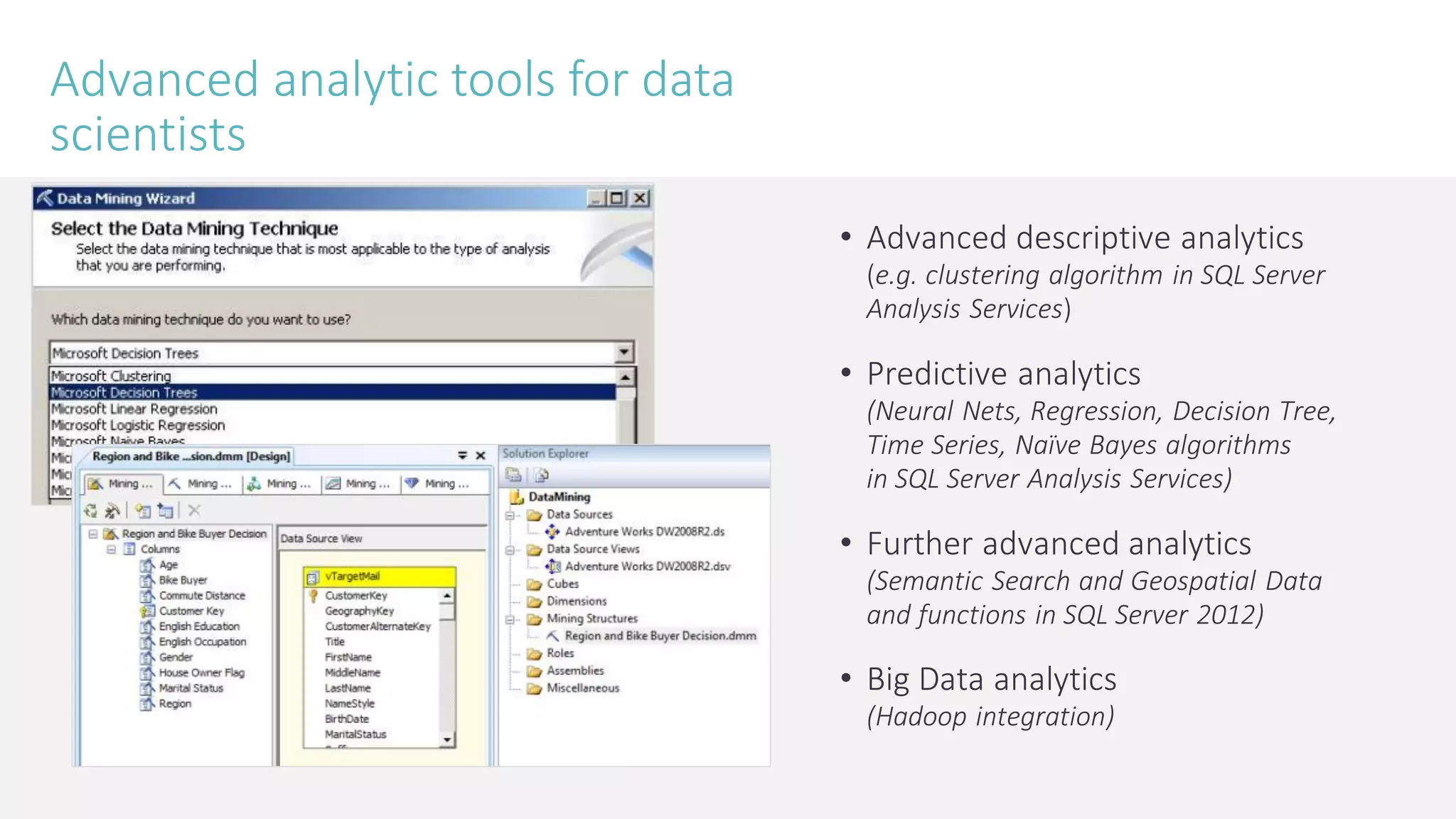

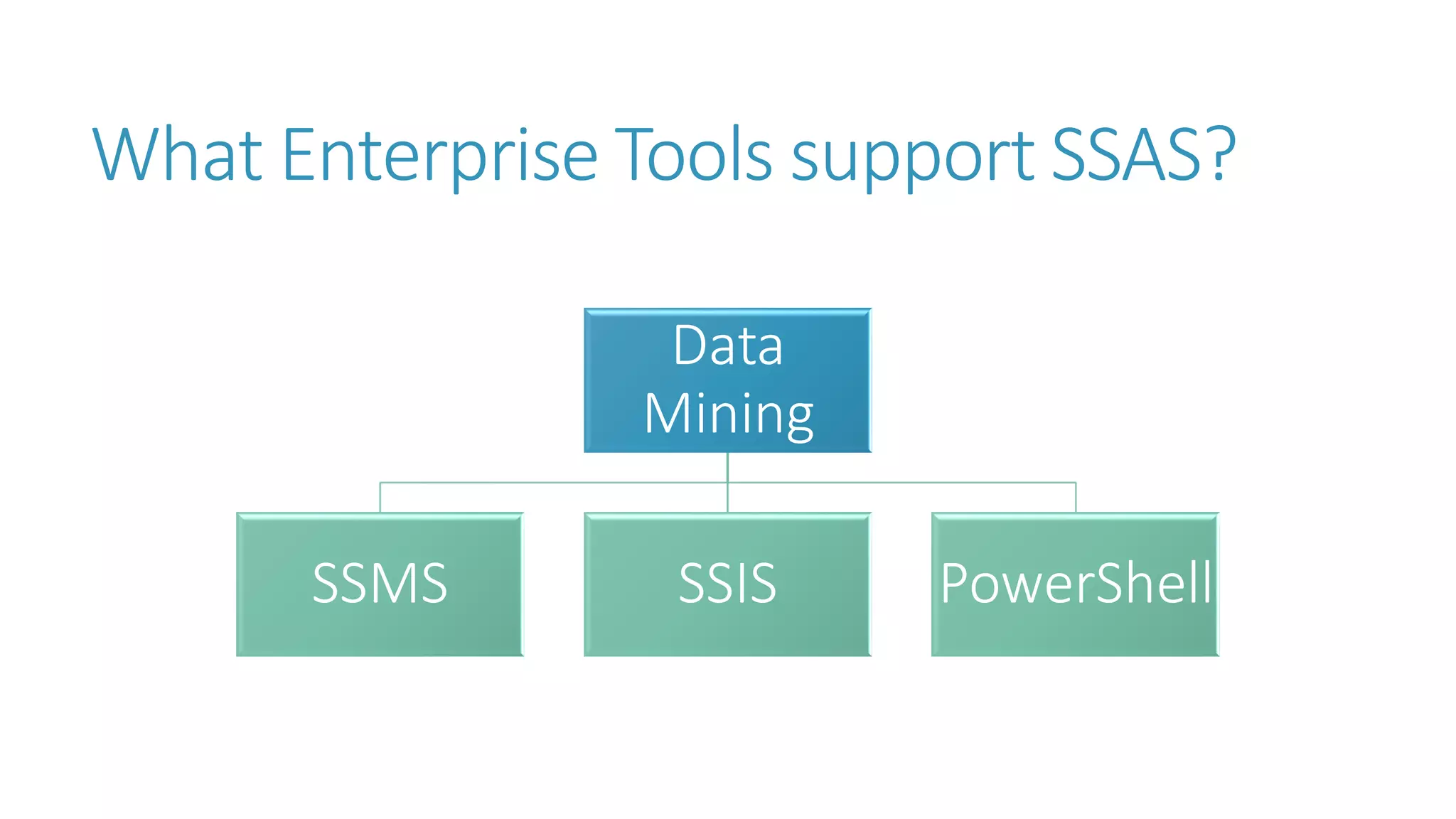

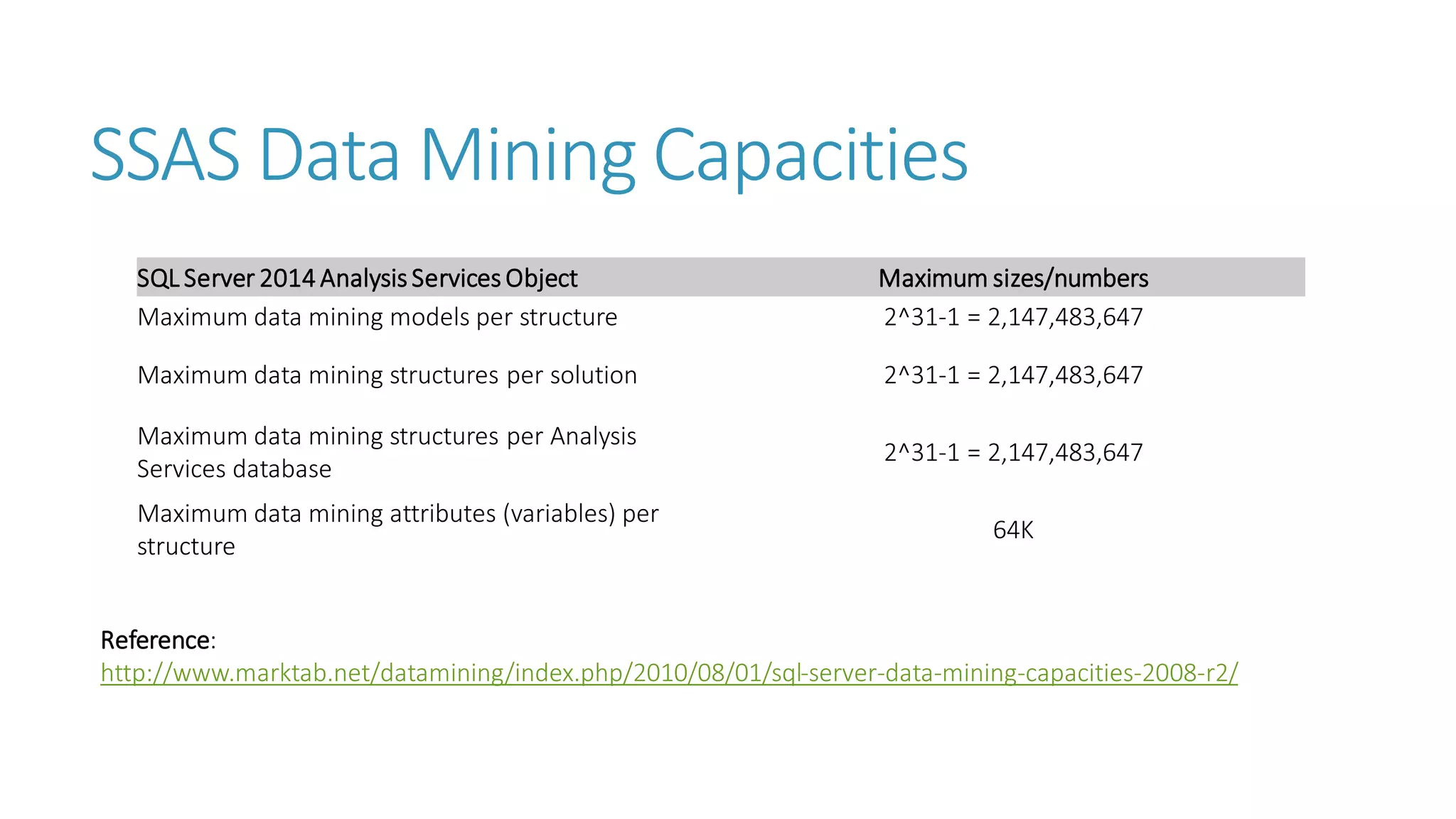

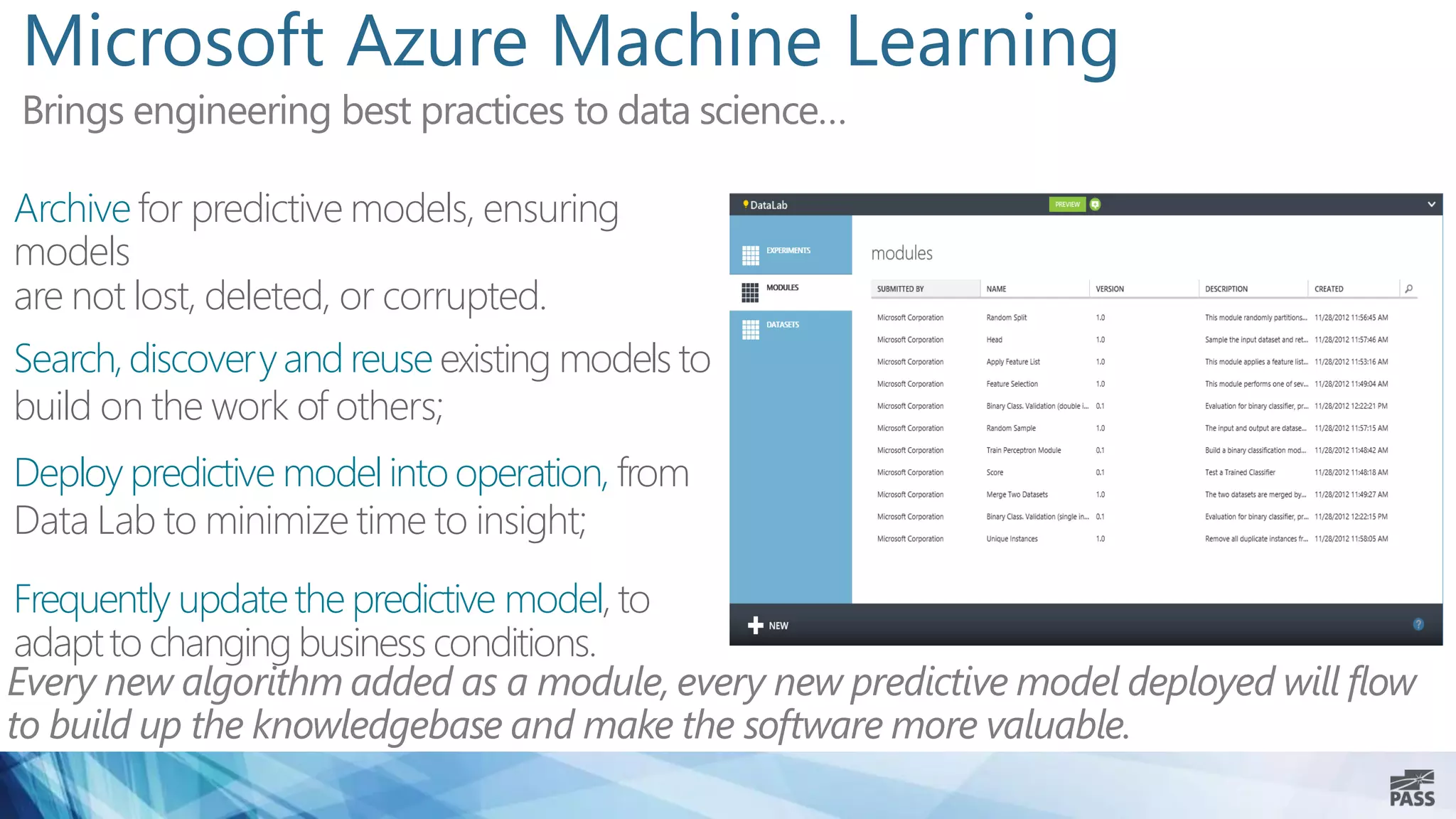

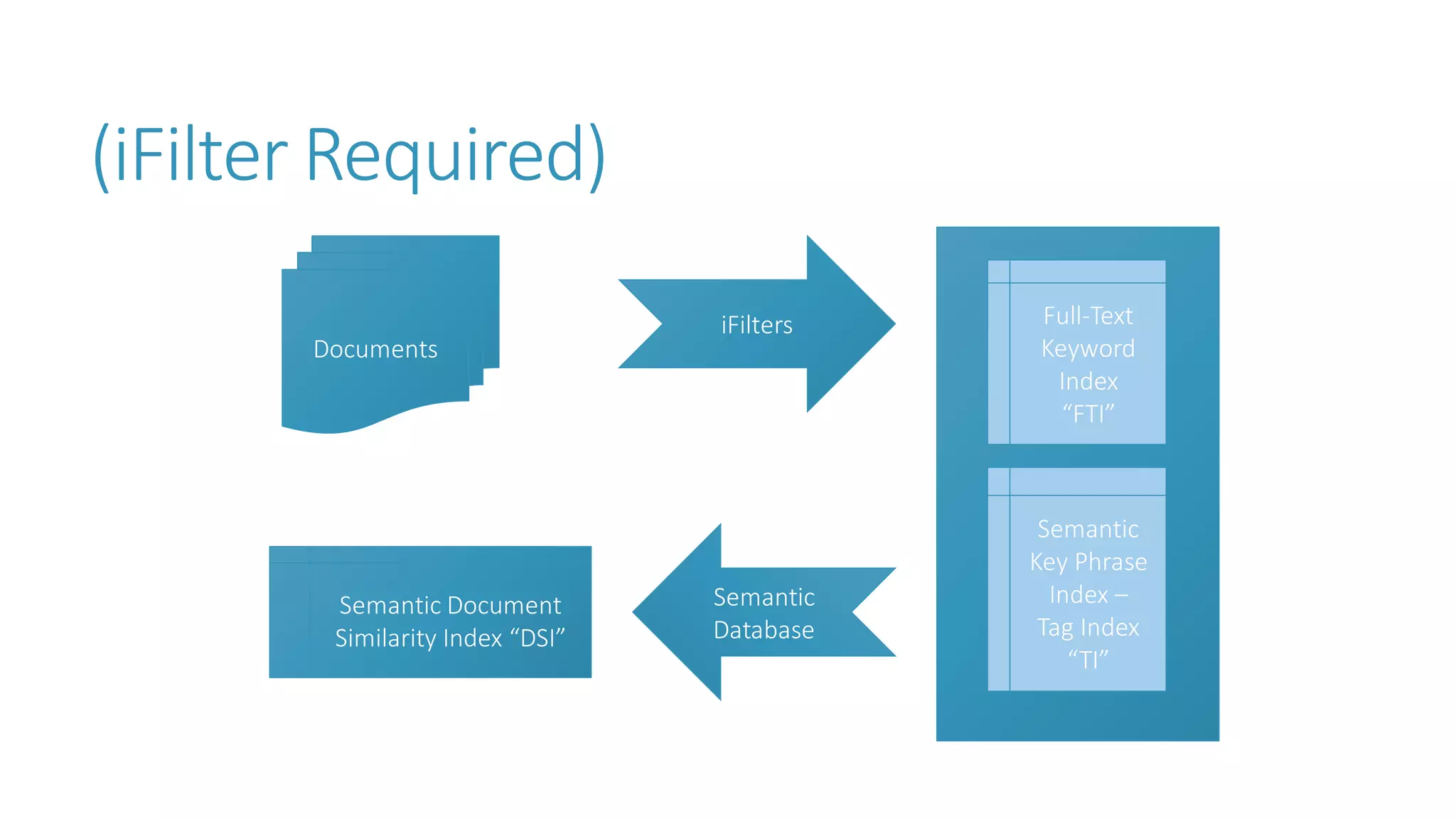

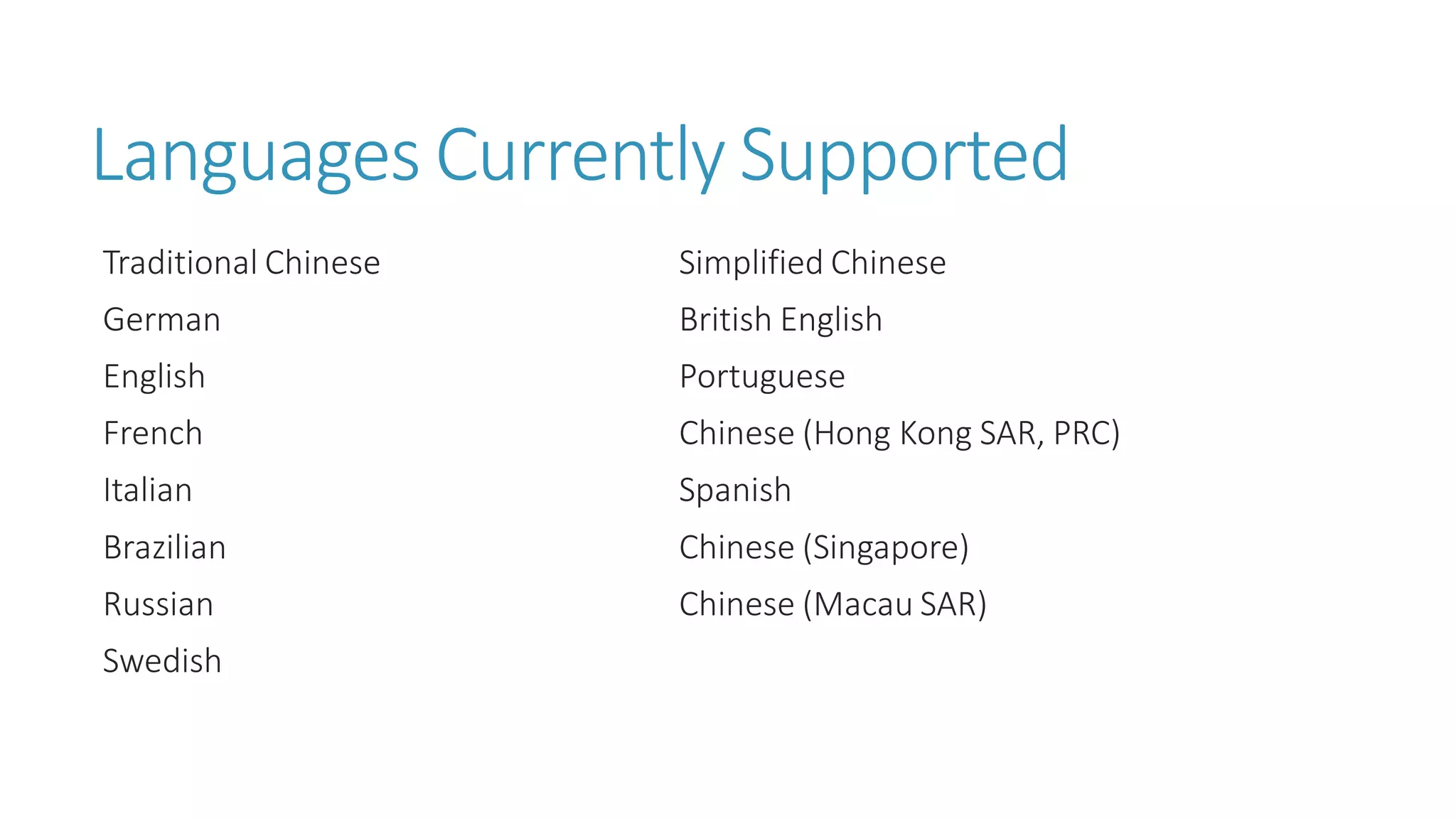

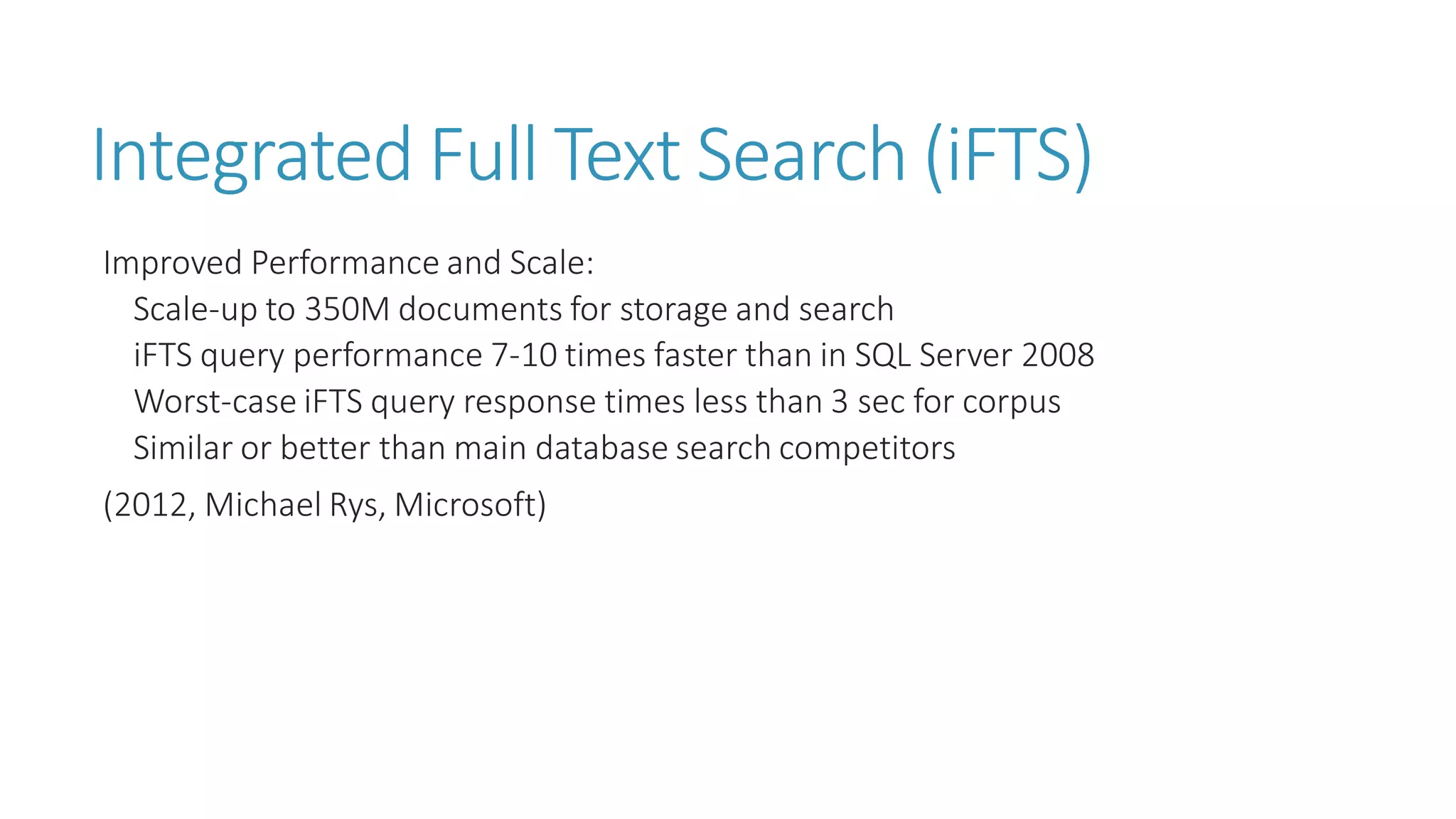

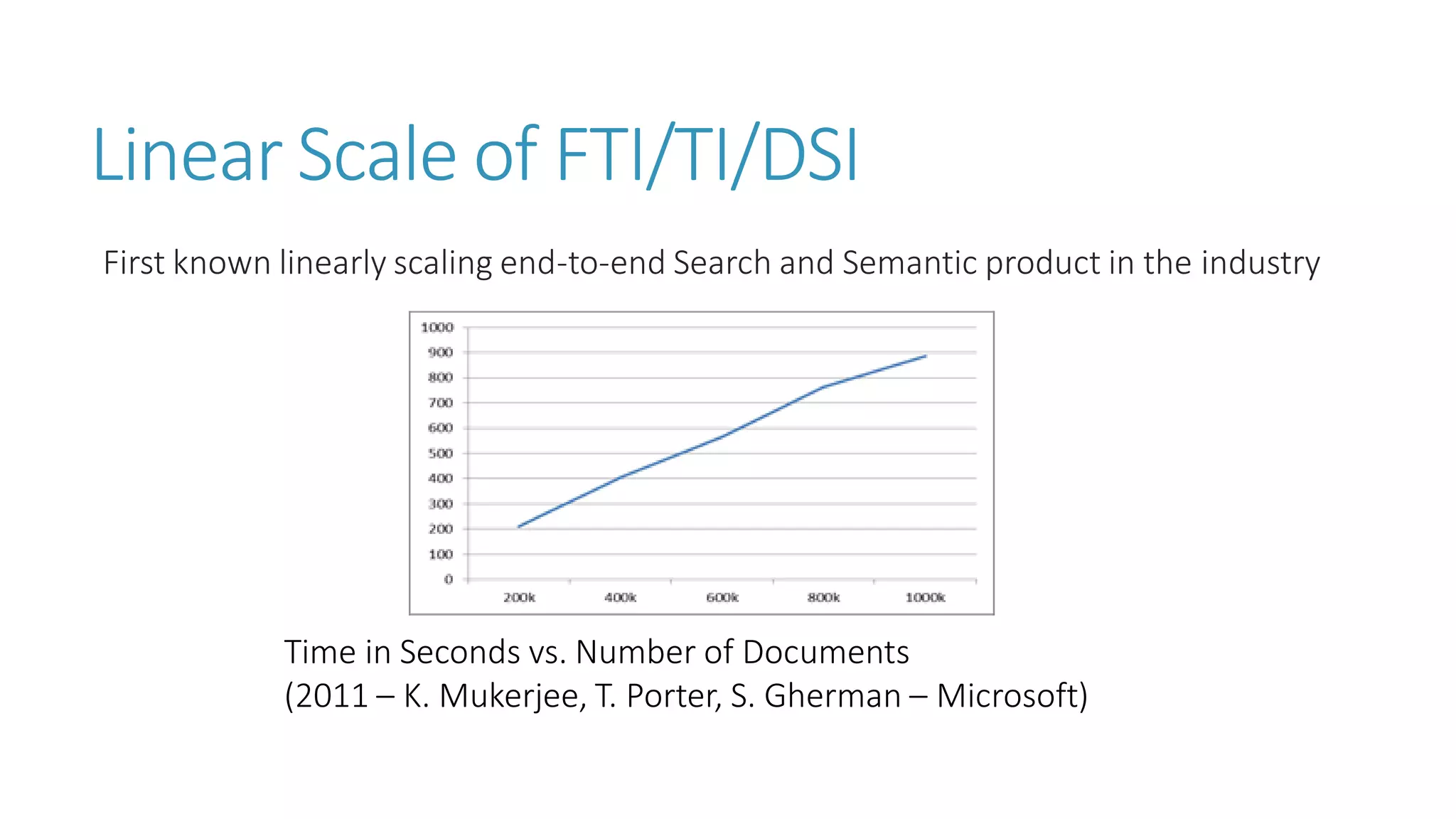

The document discusses the fundamentals and applications of data mining and machine learning, emphasizing their role in discovering patterns and supporting informed business decisions with technologies such as SQL Server and Azure. It highlights tools such as the Excel data mining add-ins and notes Microsoft’s analytics and data mining solutions within its SQL Server offerings. Key insights include the data mining capabilities of SQL Server 2014, the integration of data science practices, and the potential of semantic search for handling large datasets.