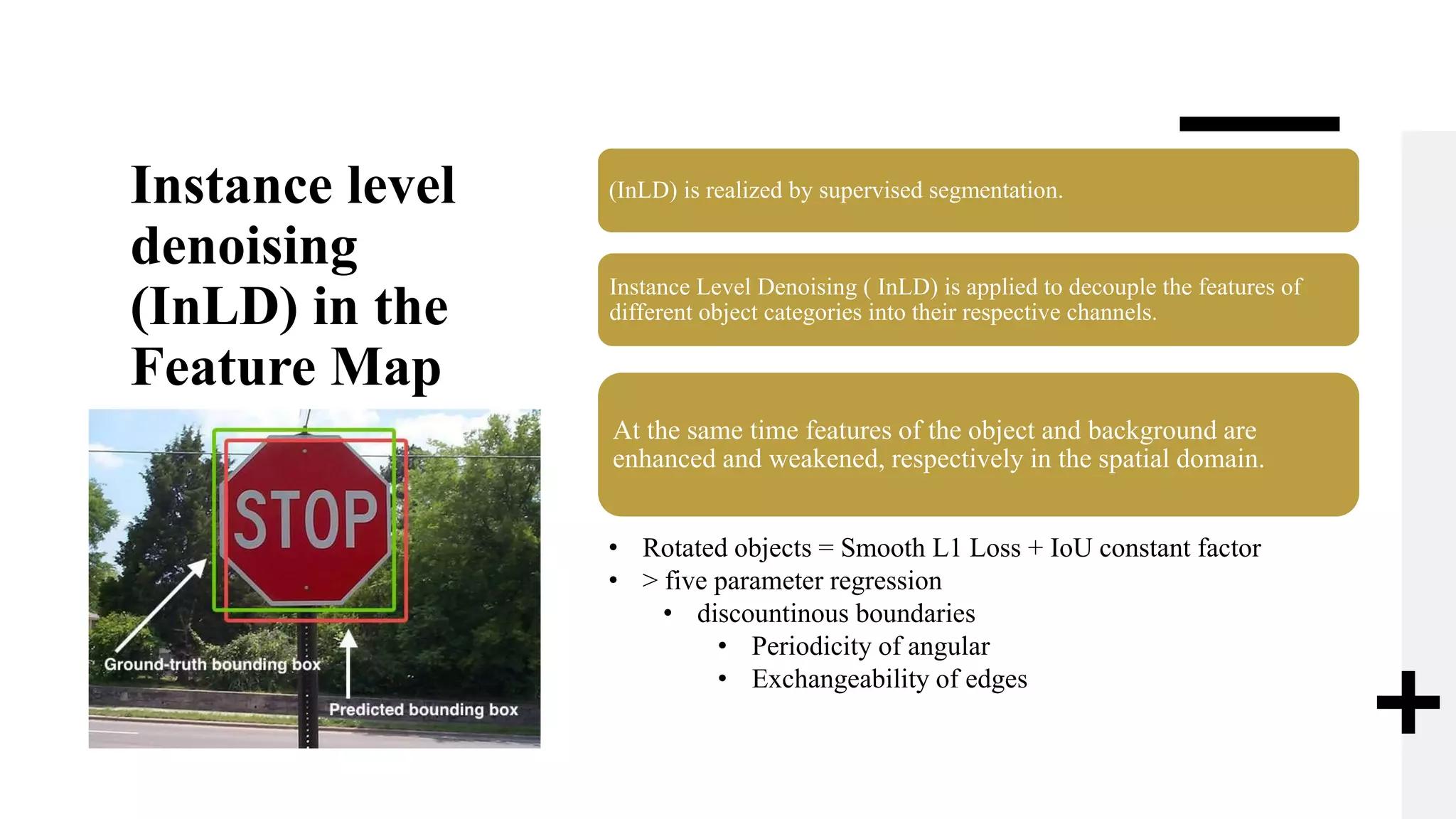

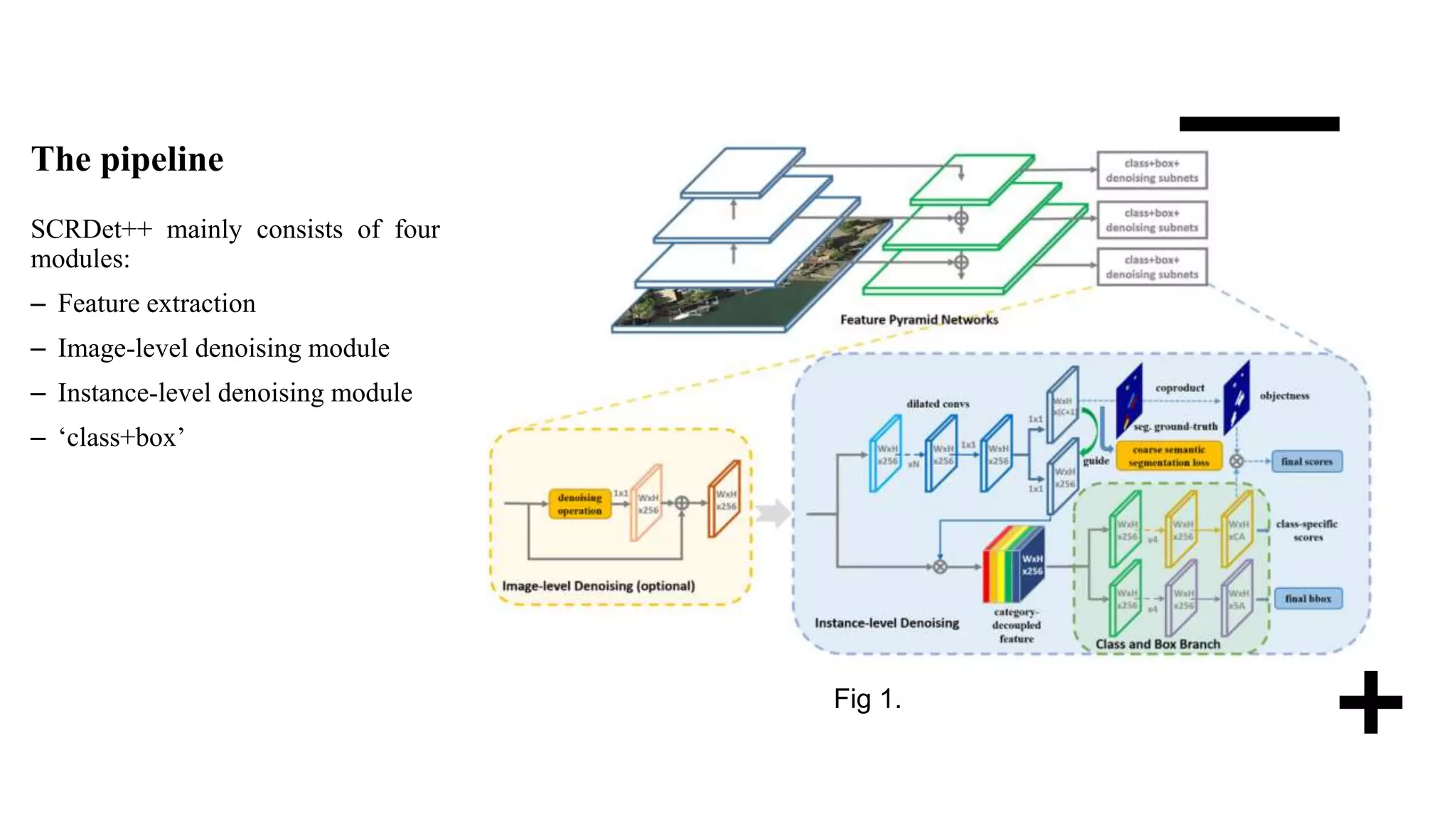

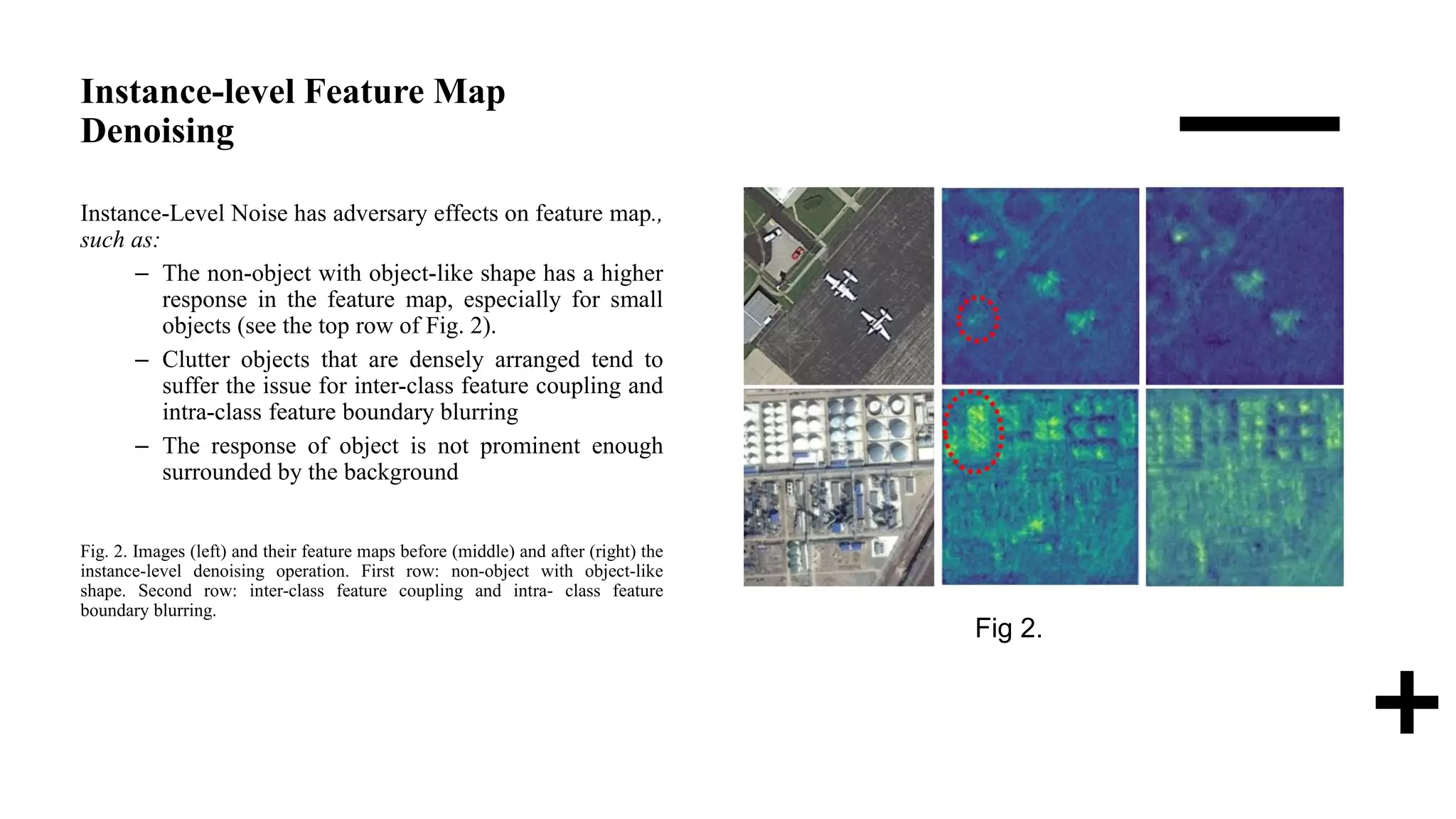

The document discusses an advanced framework called SCRDet++ for detecting small, cluttered, and rotated objects in images through instance-level feature denoising and improved rotation loss, addressing limitations of current detectors. It details a four-module architecture incorporating image-level and instance-level denoising to enhance detection performance, particularly for small and rotated objects, and summarizes experimental results demonstrating superior accuracy over existing methods. The study also compares various datasets and emphasizes the effectiveness of the proposed techniques in achieving state-of-the-art performance.

![Mathematical foundation to remove

instance level noise

– Reweight the convolutional response maps [10].

– Important parts > uninformative ones

Fig 3.

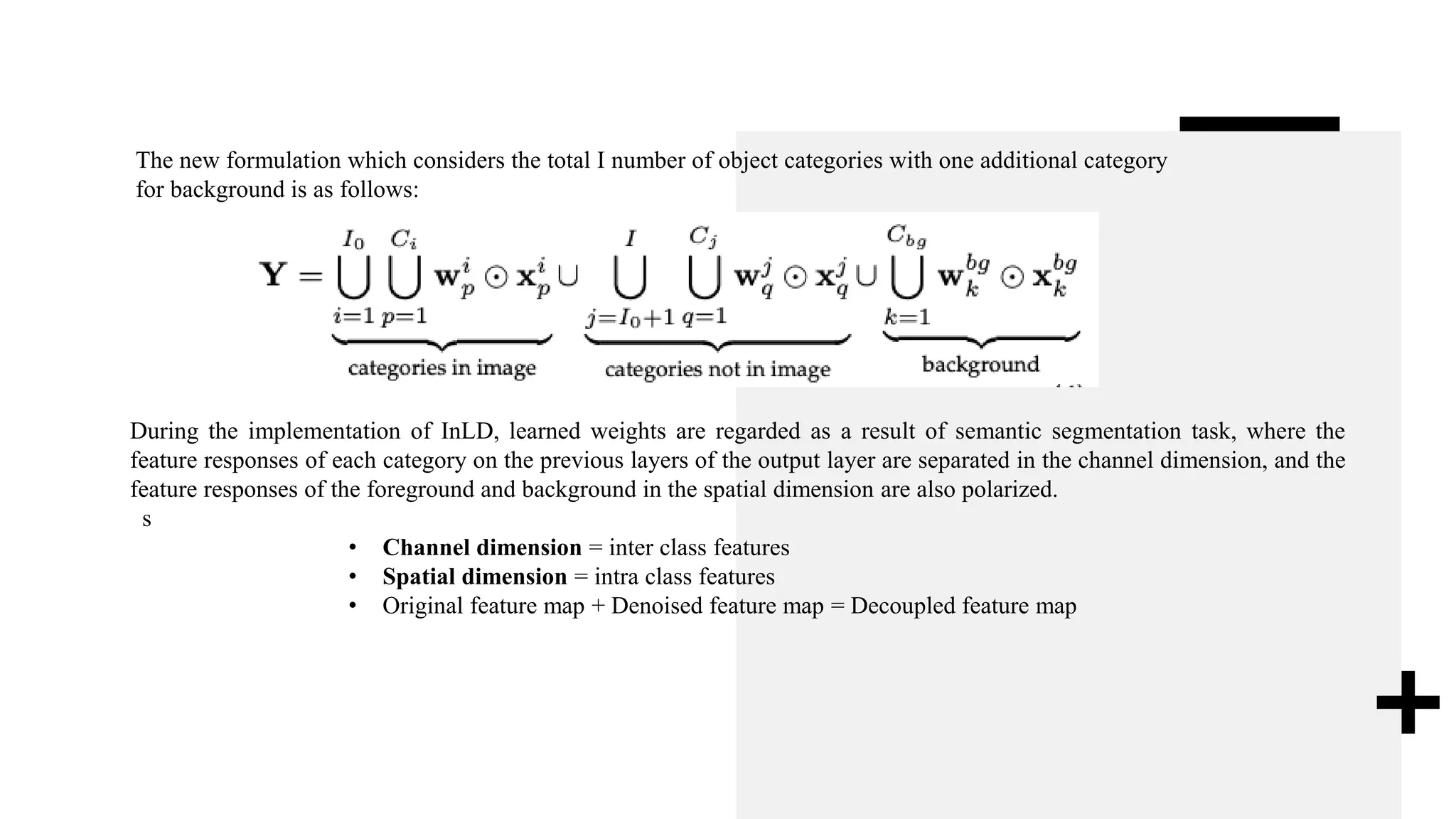

- X, Y ∈ R^(C ×H ×W) are two feature maps of input image

- A(X) is an attention function

- ⊙ is the element-wise product

- Ws ∈ R^H×W and Wc ∈ R^C denote the spatial weight and

channel weight

- Wci indicates the weight of the i-th channel

- U, concatenation operation for connecting tensor among the

feature map](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-9-2048.jpg)

![Datasets

DOTA DIOR UCAS-AOD BSTLD S2 TLD

• 2806 Aerial

images

• 15 object classes

• 188,282

instances

• 23,463 Aerial

images

• 20 object classes

• 190,288

instances

• 1510 Aerial

images

• 2 object classes

• 14,596 instances

• 13,427 camera

images

• Few instances of

many categories

• 5,786 images

• 5 object

categories

• 14,130 instances

In addition to the above datasets, they also use natural image dataset COCO [8] and scene text dataset

ICDAR2015 [28] for further evaluation.](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-13-2048.jpg)

![Experiment

Server with a GeForce RTX 2080 Ti and 11G memory.

– Initialization by ResNet50 [14] by default.

– The weight decay and momentum for all experiments are set 0.0001 and 0.9, respectively.

– A Momentum Optimizer was employed over 8 GPUs with a total of 8 images per minibatch.

– Standard evaluation protocol of COCO, while for other datasets, the anchors of RetinaNet-based

method were used with seven aspect ratios {1, 1/2, 2, 1/3, 3, 5, 1/5} and three scales {20 , 21/3 ,

22/3 }.

– For rotating anchor-based method (RetinaNet-R), the angle is set by an arithmetic progression

from −90◦ to −15◦ with an interval of 15 degrees.](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-14-2048.jpg)

![Effect of Instance-Level Denoising

– Improved accuracy

– Effect of IoU-Smooth L1 Loss

– Eliminates the boundary effects of the angle,

– Model easily regresses the object coordinates.

– The new loss improves three detectors’(RetinaNet-R [4],SCRDet [3], FPN [15] ) accuracy to 69.83%, 68.65% and

76.20%, respectively.

– Effect of Data Augmentation and Backbone.

– Used ResNet101

– Improvement from 69.81% → 72.98%.

– Final performance of the model was improved from 72.98% to 74.41% by using ResNet152 as backbone.](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-15-2048.jpg)

![– InLD with the state-of-the-art algorithms on

two datasets DOTA [16] and DIOR [17]

outperforms all other models and achieves

the best performance, 76.56% and 76.81%

respectively.

– Methods achieve the best performance,

76.56% and 76.81% respectively 77.80%

and 75.11% mAP on FPN and RetinaNet

based methods.

– Table.1 illustrates the comparison of

performance on UCAS-AOD dataset.

– Method achieves 96.95% for OBB task and

is the best out of all the existing published

methods.

Method mAP Plane Car

YOLOv2 [18] 87.90 96.60 79.20

R-DFPN [12] 89.20 95.90 82.50

DRBox [19] 89.95 94.90 85.00

S2 ARN [20] 94.90 97.60 92.20

RetinaNet-H

[4]

95.47 97.34 93.60

ICN [21] 95.67 - -

FADet [22] 95.71 98.69 92.72

R3 Det [4] 96.17 98.20 94.14

SCRDet++ (R3

Det-based)

96.95 98.93 94.97

TABLE: 1 Performance by accuracy (%) on UCAS-AOD dataset.

Results:](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-16-2048.jpg)

![References:

[1] S.M.Azimi,E.Vig,R.Bahmanyar,M.Ko ̈rner,andP.Reinartz,“To- wards multi-class object detection in unconstrained remote sens- ing imagery,” in Asian Conference on

Computer Vision. Springer, 2018, pp. 150–165.

[2] J. Ding, N. Xue, Y. Long, G.-S. Xia, and Q. Lu, “Learning roi transformer for oriented object detection in aerial images,” in Proceedings of the IEEE Conference on Computer

Vision and Pattern Recognition (CVPR), June 2019.

[3] X. Yang, J. Yang, J. Yan, Y. Zhang, T. Zhang, Z. Guo, X. Sun, and K. Fu, “Scrdet: Towards more robust detection for small, cluttered and rotated objects,” in Proceedings of the

IEEE International Conference on Computer Vision (ICCV), October 2019.

[4] X. Yang, Q. Liu, J. Yan, and A. Li, “R3det: Refined single-stage detector with feature refinement for rotating object,” arXiv preprint arXiv:1908.05612, 2019.

[5] W. Qian, X. Yang, S. Peng, Y. Guo, and C. Yan, “Learn- ing modulated loss for rotated object detection,” arXiv preprint arXiv:1911.08299, 2019.

[6] Y. Xu, M. Fu, Q. Wang, Y. Wang, K. Chen, G.-S. Xia, and X. Bai, “Gliding vertex on the horizontal bounding box for multi-oriented object detection,” IEEE Transactions on

Pattern Analysis and Machine Intelligence, 2020.

[7] H.Wei,L.Zhou,Y.Zhang,H.Li,R.Guo,andH.Wang,“Oriented objects as pairs of middle lines,” arXiv preprint arXiv:1912.10694, 2019.

[8] Z. Xiao, L. Qian, W. Shao, X. Tan, and K. Wang, “Axis learning for orientated objects detection in aerial images,” Remote Sensing, vol. 12, no. 6, p. 908, 2020.

[9] X. Wang, R. Girshick, A. Gupta, and K. He, “Non-local neural networks,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018,

pp. 7794–7803.

[10] J. Hu, L. Shen, and G. Sun, “Squeeze-and-excitation networks,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018, pp.

7132–7141.

[11] X. Yang, Q. Liu, J. Yan, and A. Li, “R3det: Refined single-stage detector with feature refi

[12] X. Yang, H. Sun, K. Fu, J. Yang, X. Sun, M. Yan, and Z. Guo, “Automatic ship detection in remote sensing images from google earth of complex scenes based on multiscale

rotation dense feature pyramid networks,” Remote Sensing, vol. 10, no. 1, p. 132, 2018.](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-17-2048.jpg)

![[13] X. Yang, H. Sun, X. Sun, M. Yan, Z. Guo, and K. Fu, “Position detection and direction prediction for arbitrary-oriented ships via multitask

rotation region convolutional neural network,” IEEE Access, vol. 6, pp. 50 839–50 849, 2018.

[14] K. He, X. Zhang, S. Ren, and J. Sun, “Deep residual learning for image recognition,” in Proceedings of the IEEE Conference on Computer

Vision and Pattern Recognition (CVPR), 2016, pp. 770–778.

[15 ] T.-Y. Lin, P. Dolla ́r, R. B. Girshick, K. He, B. Hariharan, and S. J. Belongie, “Feature pyramid networks for object detection.” in

Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), vol. 1, no. 2, 2017, p. 4.

[16] G.-S. Xia, X. Bai, J. Ding, Z. Zhu, S. Belongie, J. Luo, M. Datcu, M. Pelillo, and L. Zhang, “Dota: A large-scale dataset for object detection

in aerial images,” in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2018.

[17] K. Li, G. Wan, G. Cheng, L. Meng, and J. Han, “Object detection in optical remote sensing images: A survey and a new benchmark,” ISPRS

Journal of Photogrammetry and Remote Sensing, vol. 159, pp. 296–307, 2020.

[18] J. Redmon and A. Farhadi, “Yolo9000: better, faster, stronger,” in Proceedings of the IEEE Conference on Computer Vision and Pattern

Recognition (CVPR), 2017, pp. 7263–7271.

[19] L. Liu, Z. Pan, and B. Lei, “Learning a rotation invariant detector with rotatable bounding box,” arXiv preprint arXiv:1711.09405, 2017.

[20] S. Bao, X. Zhong, R. Zhu, X. Zhang, Z. Li, and M. Li, “Single shot anchor refinement network for oriented object detection in optical remote

sensing imagery,” IEEE Access, vol. 7, pp. 87150–87161, 2019.

[21] S.M.Azimi,E.Vig,R.Bahmanyar,M.Ko ̈rner,andP.Reinartz,“To- wards multi-class object detection in unconstrained remote sens- ing

imagery,” in Asian Conference on Computer Vision. Springer, 2018, pp. 150–165.

[22] C. Li, C. Xu, Z. Cui, D. Wang, T. Zhang, and J. Yang, “Feature- attentioned object detection in remote sensing imagery,” in 2019 IEEE

International Conference on Image Processing (ICIP). IEEE, 2019, pp. 3886–3890.](https://image.slidesharecdn.com/scrdetanalysis-220218175216/75/Scrdet-analysis-18-2048.jpg)