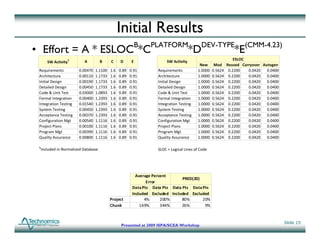

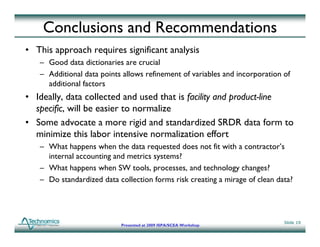

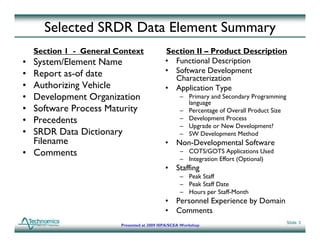

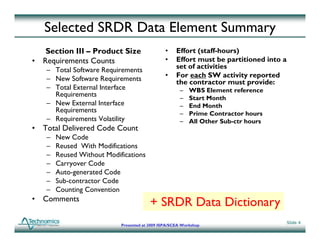

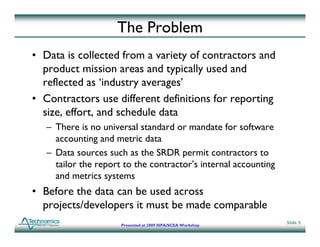

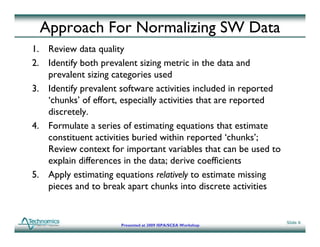

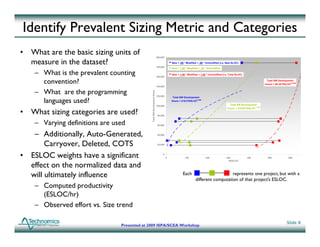

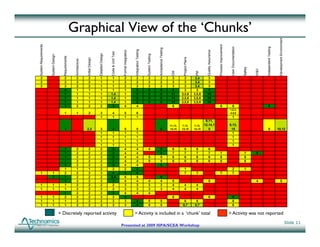

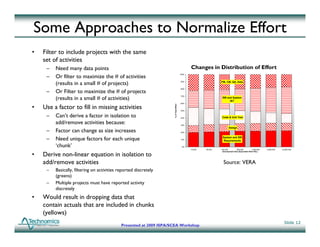

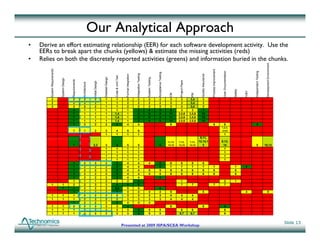

The document discusses the normalization of software data for the Army's software database, emphasizing the need for consistent reporting standards among contractors. It outlines the steps to ensure data comparability, including data quality reviews and the formulation of estimating equations to break down reported efforts into discrete activities. The presentation highlights the challenges in aggregating diverse contractor data and the importance of establishing common metrics for software development efforts.

![General Formulation of Approach

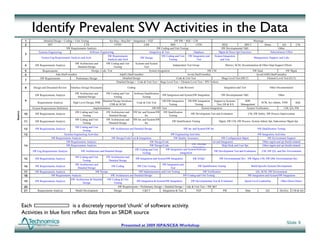

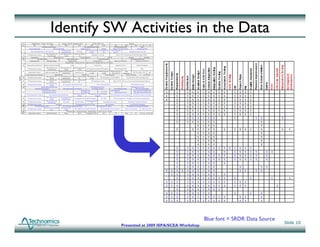

• Each ‘chunk’ consists of a set of estimated activities

$$\hat{E}_{CH} = \sum_{i \in \text{chunk}} \hat{E}_i$$

• Total estimated project effort consists of a set of estimated chunks

$$\hat{E}_T = \sum_{CH} \hat{E}_{CH}$$

where

$E_T$ = Total Reported Project Effort

$\hat{E}_T$ = Total Estimated Project Effort

$\hat{E}_{CH}$ = Estimated Chunk Effort

$\hat{E}_i$ = Estimated effort of activity $i$

• Each effort estimating relationship (EER) provides an estimate for one activity

$$\hat{E}_i = A_i \left[ k_i \, (\mathrm{ESLOC}_i)^{b_i} \cdot (\mathrm{EAF}_1)^{\mathrm{Platform}} \cdot (\mathrm{EAF}_2)^{\mathrm{Dev\_Type}} \cdot (\mathrm{EAF}_3)^{\mathrm{CMM}} \cdot (\mathrm{EAF}_4)^{\mathrm{SystemEffect}} \right]$$

where

$A_i$ = 1 if activity $i$ is included in total effort ($E_T$); else $A_i$ = 0

$\mathrm{ESLOC}_i$ = Equivalent New Lines of Code for activity $i$

Platform = 1 if platform is Air; else Platform = 0

Developer Type (Dev_Type) = 1 if single developer or subcontractor; else Developer Type = 0

CMM = CMM Level of Developer - Avg CMM Level of Database

SystemEffect = 1 if system is part of larger development project; else SystemEffect = 0

• ESLOC is specified uniquely for each activity

$$\mathrm{ESLOC}_i = \mathrm{New} \cdot CF_i + \sum_{j=1}^{m} \mathrm{Not\_New}_j \cdot CF_i \cdot w_{ij}$$

where

$CF_i$ = Counting Convention Factor

$w_{ij}$ = Equivalent new fractional cost

$j$ = SLOC categories that are considered "Not New"

Challenges Encountered

• Not enough data points: 211 unknowns, but only 168 d.o.f. in the data

• ‘Partial’ programs drove analysis to illogical results

• Required significant tailoring

– Reduced SLOC categories

– Common ESLOC weights

– Common D.O.S. for groups of activities

• Use constrained optimization to solve for coefficients that minimize residuals of estimated effort at both the project level and chunk level

Slide 14

Presented at 2009 ISPA/SCEA Workshop](https://image.slidesharecdn.com/scea2009gallo-1253279925643-phpapp03/85/Initial-Results-Building-a-Normalized-Software-Database-Using-SRDRs-14-320.jpg)
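The formulation on slide 14 can be illustrated with a minimal Python sketch. It is not the authors' implementation. It only evaluates the ESLOC and EER formulas and rolls activities up into chunks and a project total. The `Activity` class, the function names, the dictionary keys, and every numeric value are hypothetical and chosen for easy arithmetic. Treating the four EAF values as shared across activities is one reading of the slide, which shows them without an activity subscript. The coefficient fitting by constrained optimization is not shown.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Activity:
    """Inputs for one activity's EER. All names and values are illustrative."""
    included: bool                   # A_i: True if the activity is part of E_T
    k: float                         # k_i: multiplicative coefficient
    b: float                         # b_i: exponent on ESLOC_i
    cf: float                        # CF_i: counting convention factor
    new_sloc: float                  # "New" SLOC
    not_new_sloc: Dict[str, float]   # SLOC per "Not New" category j
    weights: Dict[str, float]        # w_ij: equivalent new fractional cost


def esloc(act: Activity) -> float:
    """ESLOC_i = New * CF_i + sum_j Not_New_j * CF_i * w_ij."""
    not_new = sum(
        sloc * act.cf * act.weights[category]
        for category, sloc in act.not_new_sloc.items()
    )
    return act.new_sloc * act.cf + not_new


def activity_effort(act: Activity, eaf: Dict[str, float],
                    drivers: Dict[str, float]) -> float:
    """E_i = A_i * k_i * ESLOC_i^b_i * product of EAF_n^driver_n."""
    if not act.included:             # A_i = 0 removes the activity entirely
        return 0.0
    effort = act.k * esloc(act) ** act.b
    for name, factor in eaf.items():
        effort *= factor ** drivers[name]
    return effort


def chunk_effort(acts: List[Activity], eaf: Dict[str, float],
                 drivers: Dict[str, float]) -> float:
    """Estimated chunk effort: sum of its activities' estimated efforts."""
    return sum(activity_effort(a, eaf, drivers) for a in acts)


def project_effort(chunks: List[List[Activity]], eaf: Dict[str, float],
                   drivers: Dict[str, float]) -> float:
    """Total estimated project effort: sum of the estimated chunk efforts."""
    return sum(chunk_effort(c, eaf, drivers) for c in chunks)


if __name__ == "__main__":
    # Hypothetical numbers, not taken from the SRDR database.
    design = Activity(True, k=2.0, b=1.0, cf=1.0, new_sloc=1000.0,
                      not_new_sloc={"reused": 2000.0}, weights={"reused": 0.25})
    skipped = Activity(False, k=3.0, b=1.0, cf=1.0, new_sloc=500.0,
                       not_new_sloc={}, weights={})
    eaf = {"platform": 1.5, "dev_type": 1.2, "cmm": 0.9, "system_effect": 1.1}
    drivers = {"platform": 1, "dev_type": 0, "cmm": 0, "system_effect": 0}
    print(esloc(design))                                   # 1500.0
    print(project_effort([[design, skipped]], eaf, drivers))  # 4500.0
```

In this example only the Platform driver is switched on, so the single included activity contributes 2.0 × 1500 × 1.5 = 4500 effort units, and the excluded activity contributes nothing. Fitting `k`, `b`, the weights, and the EAF values would require minimizing residuals at both the chunk level and the project level, which this sketch does not attempt.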