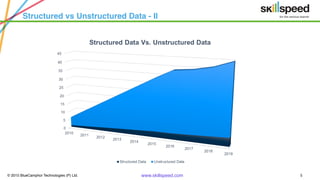

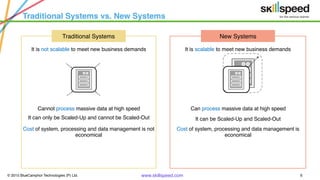

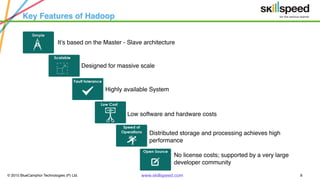

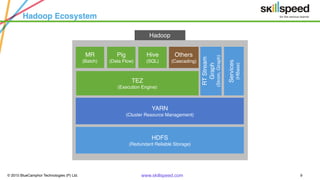

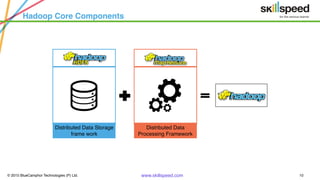

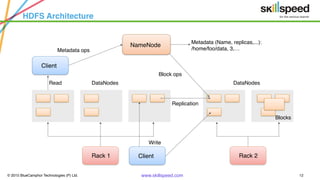

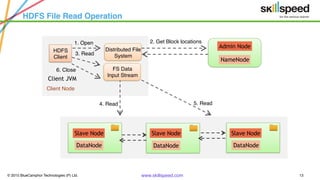

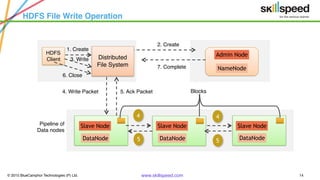

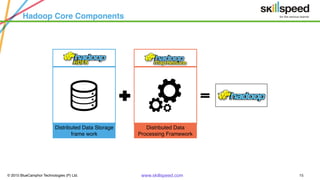

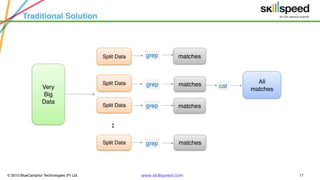

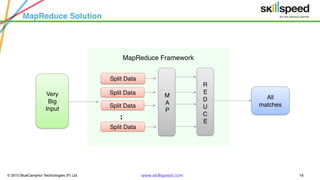

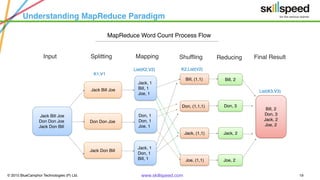

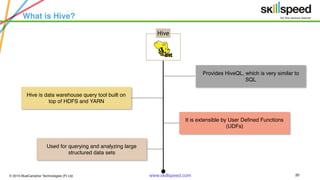

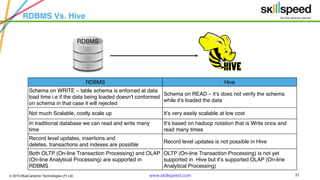

This document gives an overview of Hadoop and related big data technologies. It begins by defining big data and explaining why traditional systems are inadequate for it. It then introduces Hadoop as a framework for distributed storage and processing of large datasets. Hadoop's two key components, HDFS for storage and MapReduce for processing, are described at a high level. The HDFS architecture and its read and write operations are outlined, and the MapReduce paradigm is summarized along with an example word count job. Finally, Hive is introduced as a data warehouse tool built on Hadoop that provides SQL-like queries over large datasets.
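
The word count job mentioned above can be illustrated with a minimal, single-process sketch of the map, shuffle, and reduce phases. This is not Hadoop code and does not use the Hadoop API: the function names `map_phase`, `shuffle`, `reduce_phase`, and `word_count` are hypothetical, and the in-memory grouping only stands in for the distributed shuffle that Hadoop performs between mappers and reducers.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Map step: emit a (word, 1) pair for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle step (simulated in memory): group all values by key."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(word: str, counts: List[int]) -> Tuple[str, int]:
    """Reduce step: sum the counts collected for one word."""
    return word, sum(counts)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    """Run map, shuffle, and reduce over the input lines in one process."""
    pairs = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(pairs)
    return dict(reduce_phase(word, counts) for word, counts in grouped.items())


if __name__ == "__main__":
    print(word_count(["the cat", "the dog"]))
```

In a real cluster, each mapper would process one input split stored in HDFS and the framework would route every key to a single reducer; the sketch keeps only the data flow so the three phases are easy to see.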