Embed presentation

Download to read offline

![Deliverables

According to the proposal described, the

project will deliver the results in two ways:

● Extension for OpenRefine 2.6.x, bundling

together the implemented features

[Product, Open Source, APL 2.0]

● Screencast introducing the tool

developed and performing a practical

demonstration [Report, Public, CC 4.0]](https://image.slidesharecdn.com/redfine-proposalforthefusepoolopencallfordevelopers-140411014049-phpapp01/85/Redfine-4-320.jpg)

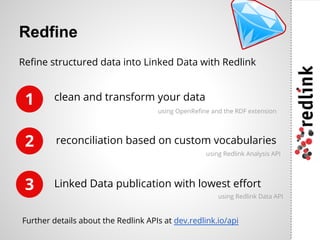

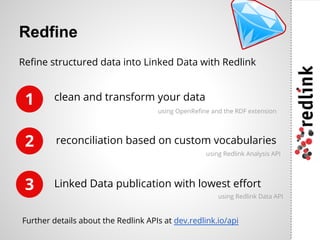

Redlink GmbH is an Austrian startup offering cloud services focused on content analysis, linked data publishing, and semantic search, founded by contributors to notable open-source projects. The initiative aims to create an extension for OpenRefine and provide a screencast demonstrating its features. Developers are invited to participate in the project, which highlights tools for data transformation and publication.

![Deliverables

According to the proposal described, the

project will deliver the results in two ways:

● Extension for OpenRefine 2.6.x, bundling

together the implemented features

[Product, Open Source, APL 2.0]

● Screencast introducing the tool

developed and performing a practical

demonstration [Report, Public, CC 4.0]](https://image.slidesharecdn.com/redfine-proposalforthefusepoolopencallfordevelopers-140411014049-phpapp01/85/Redfine-4-320.jpg)