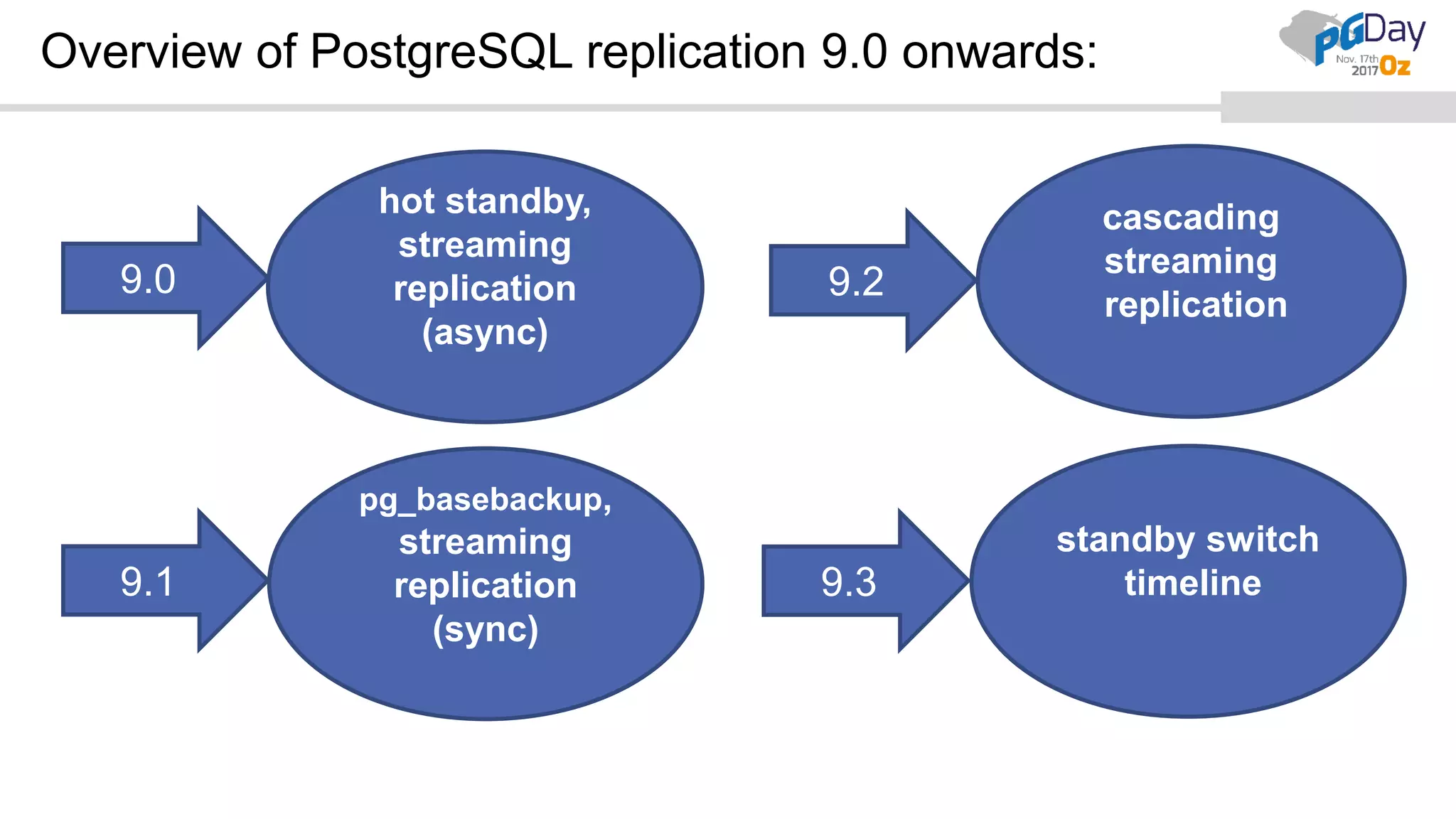

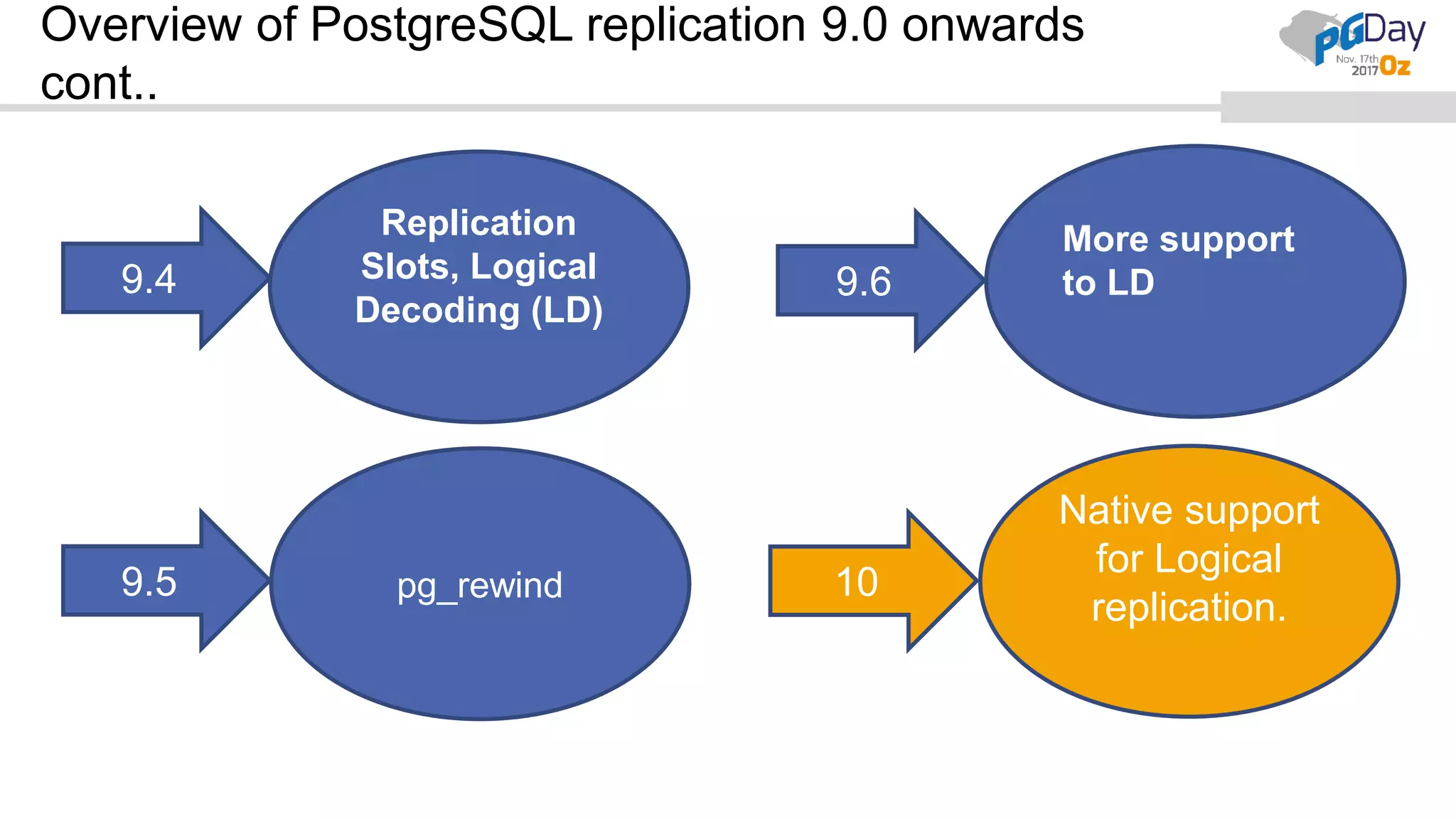

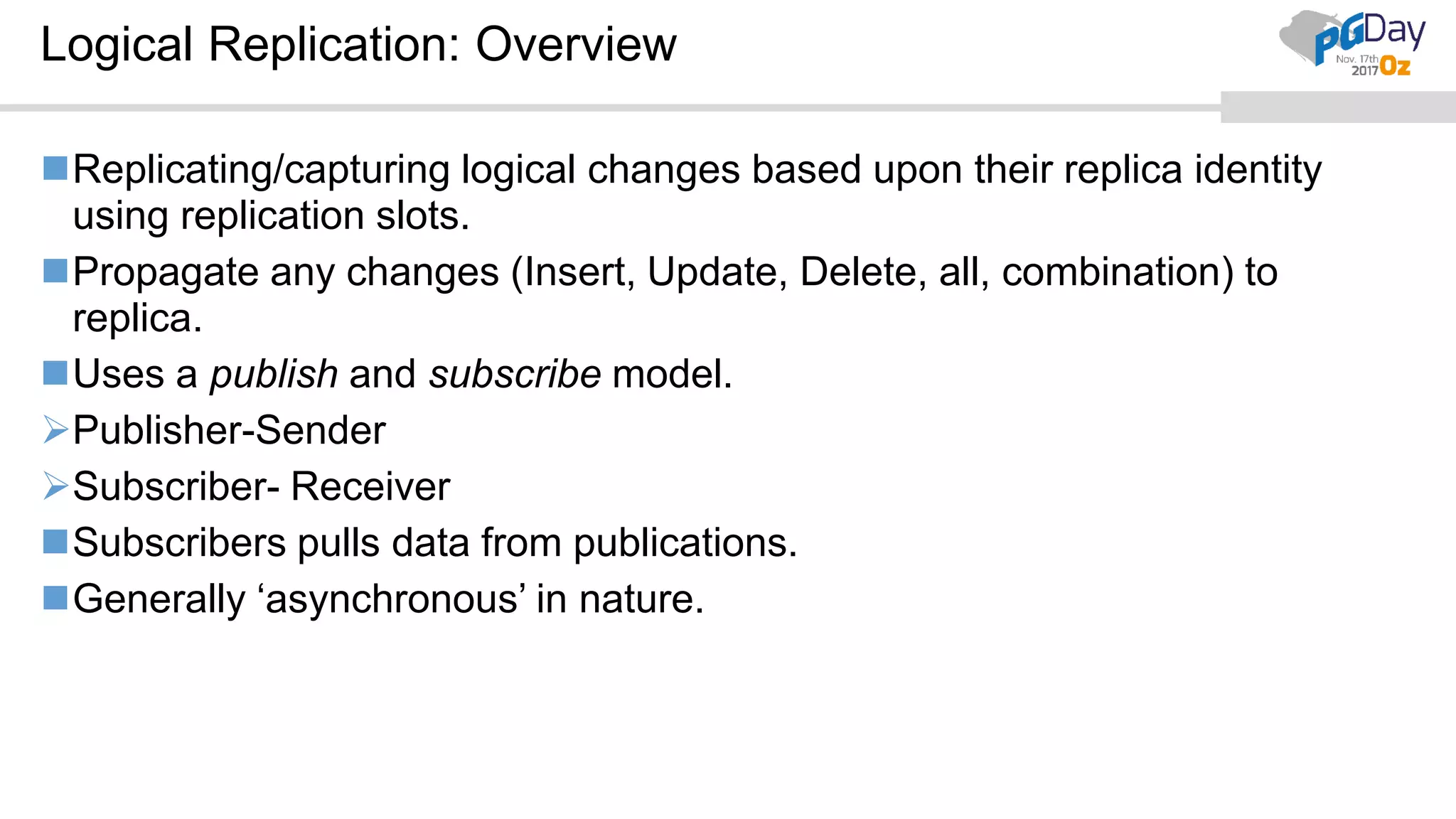

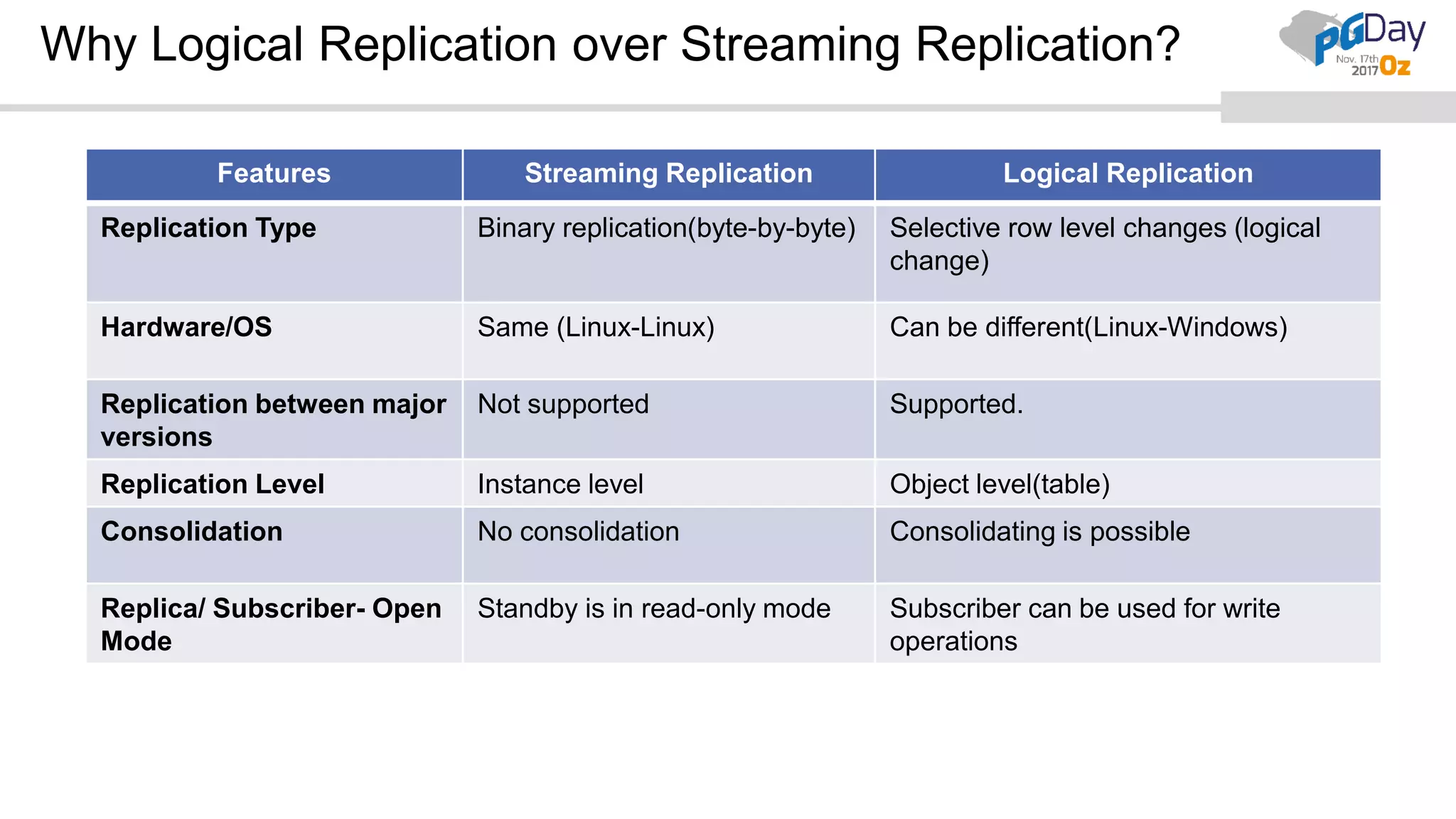

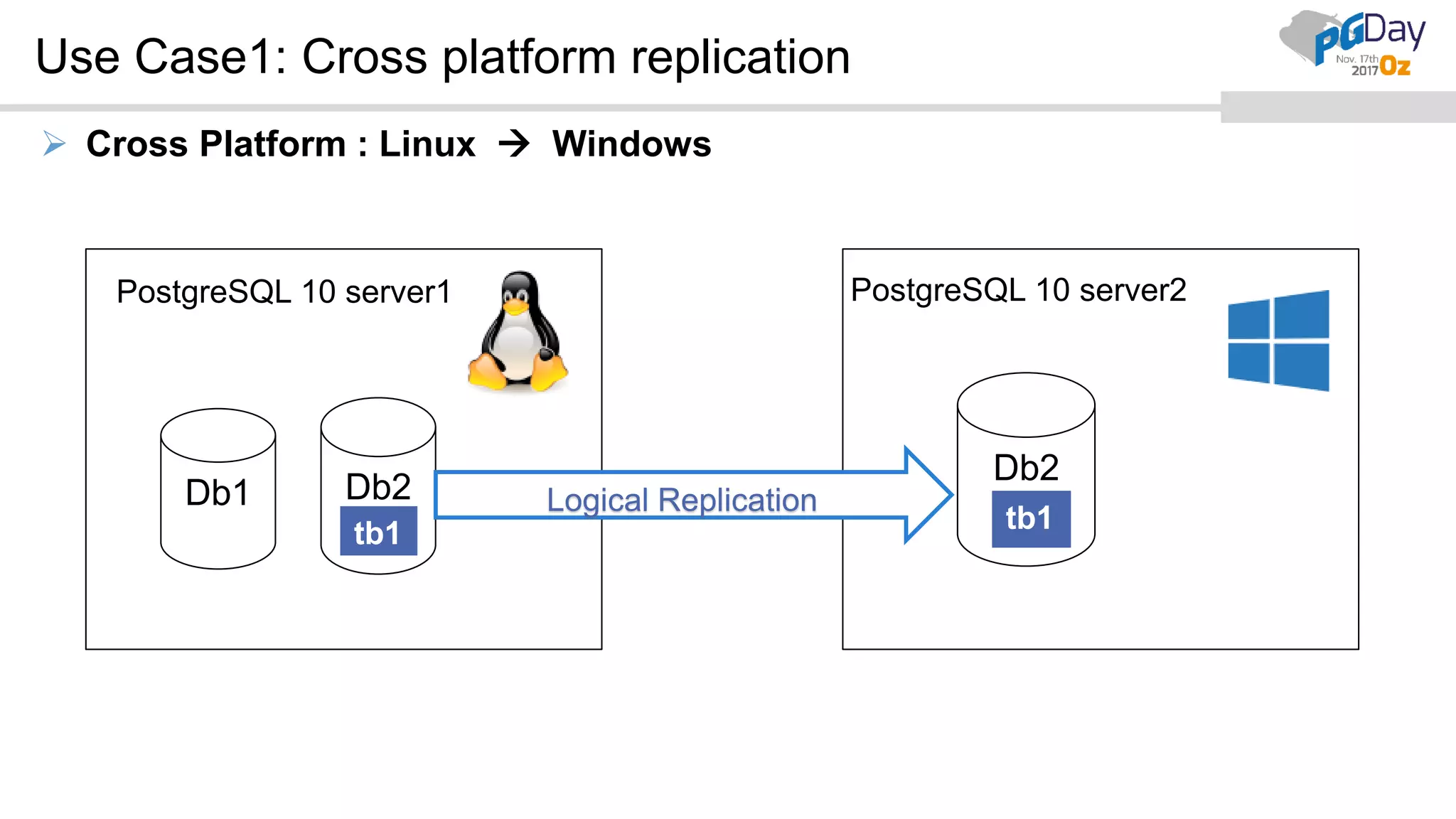

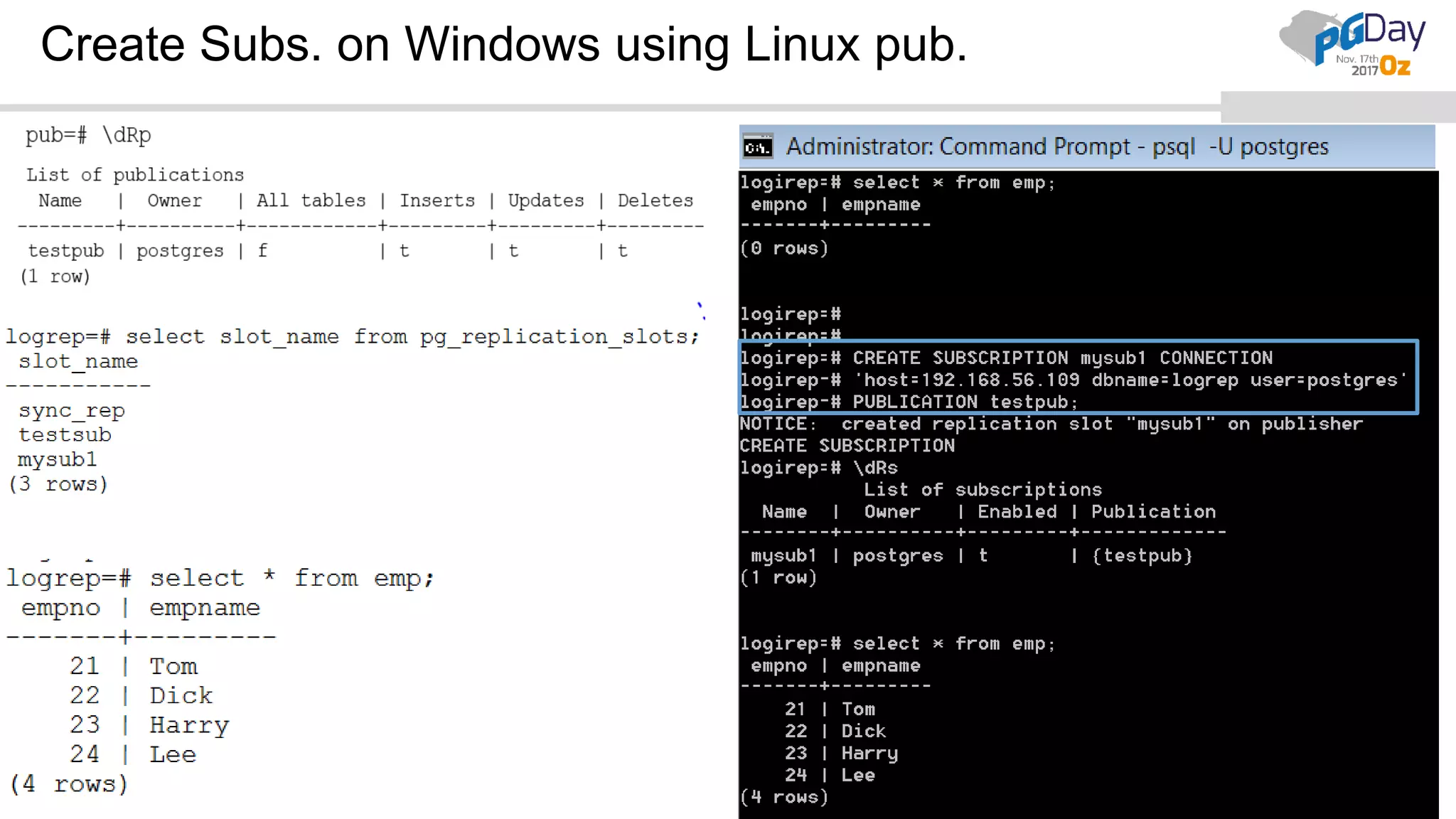

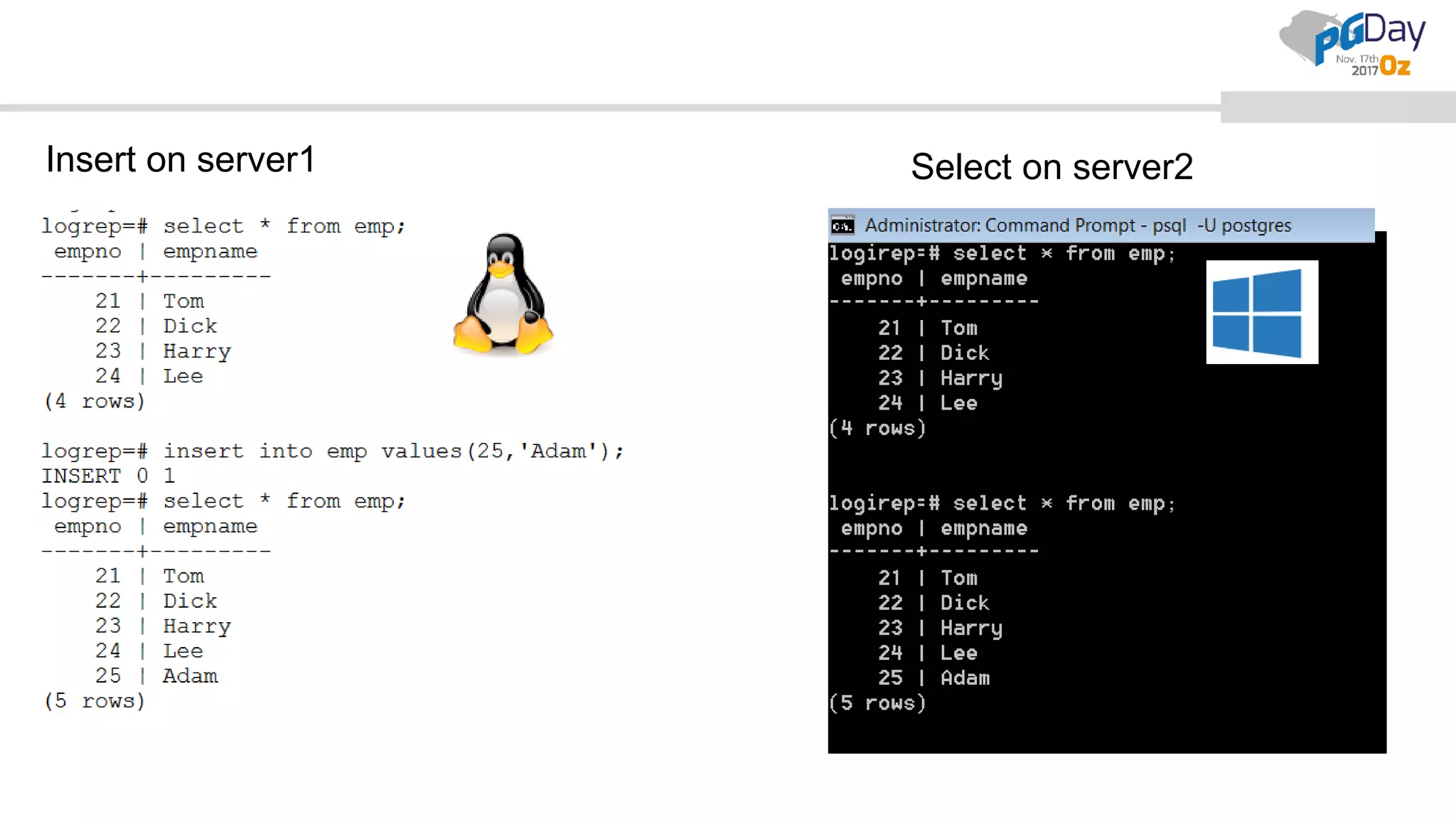

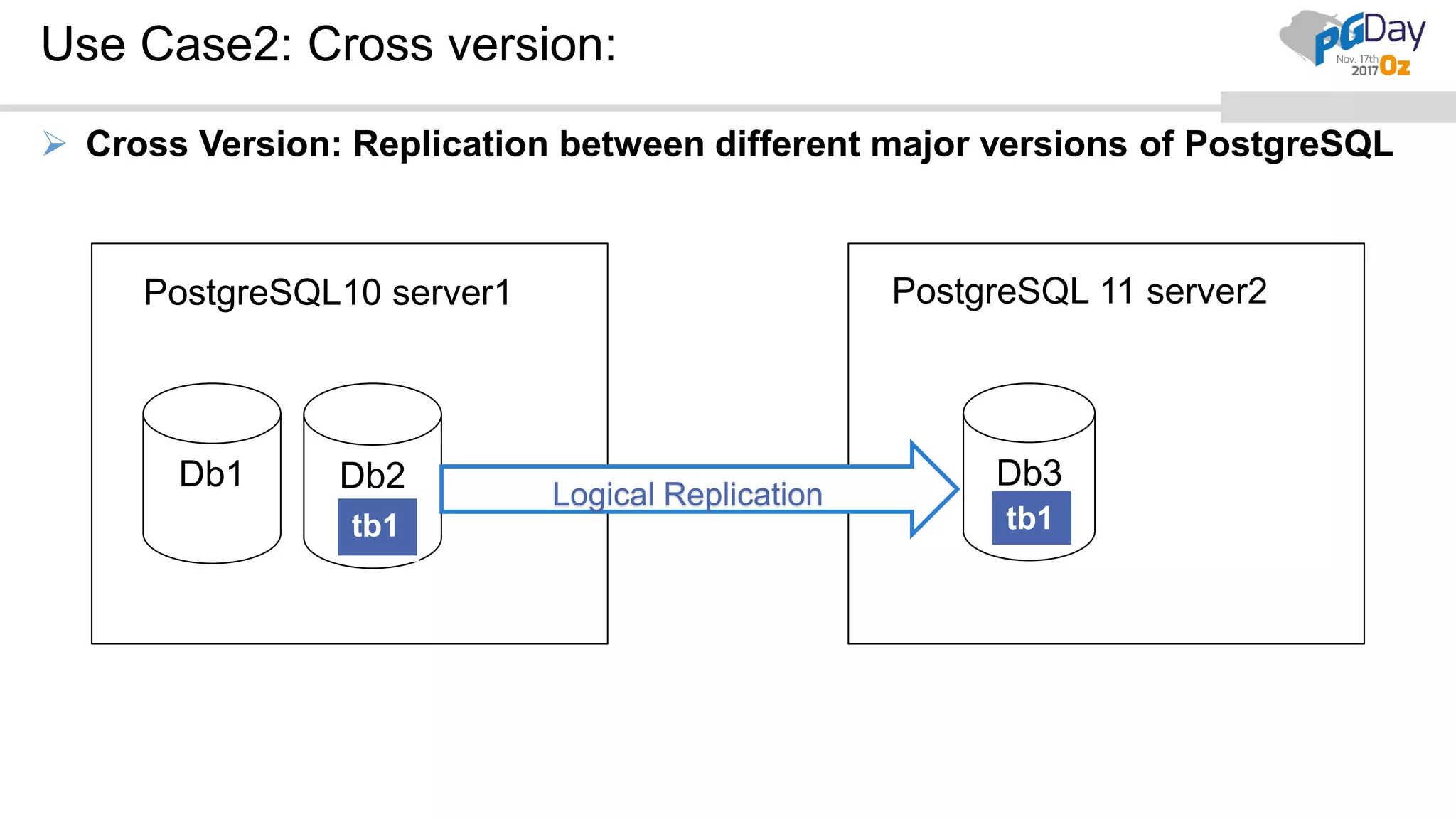

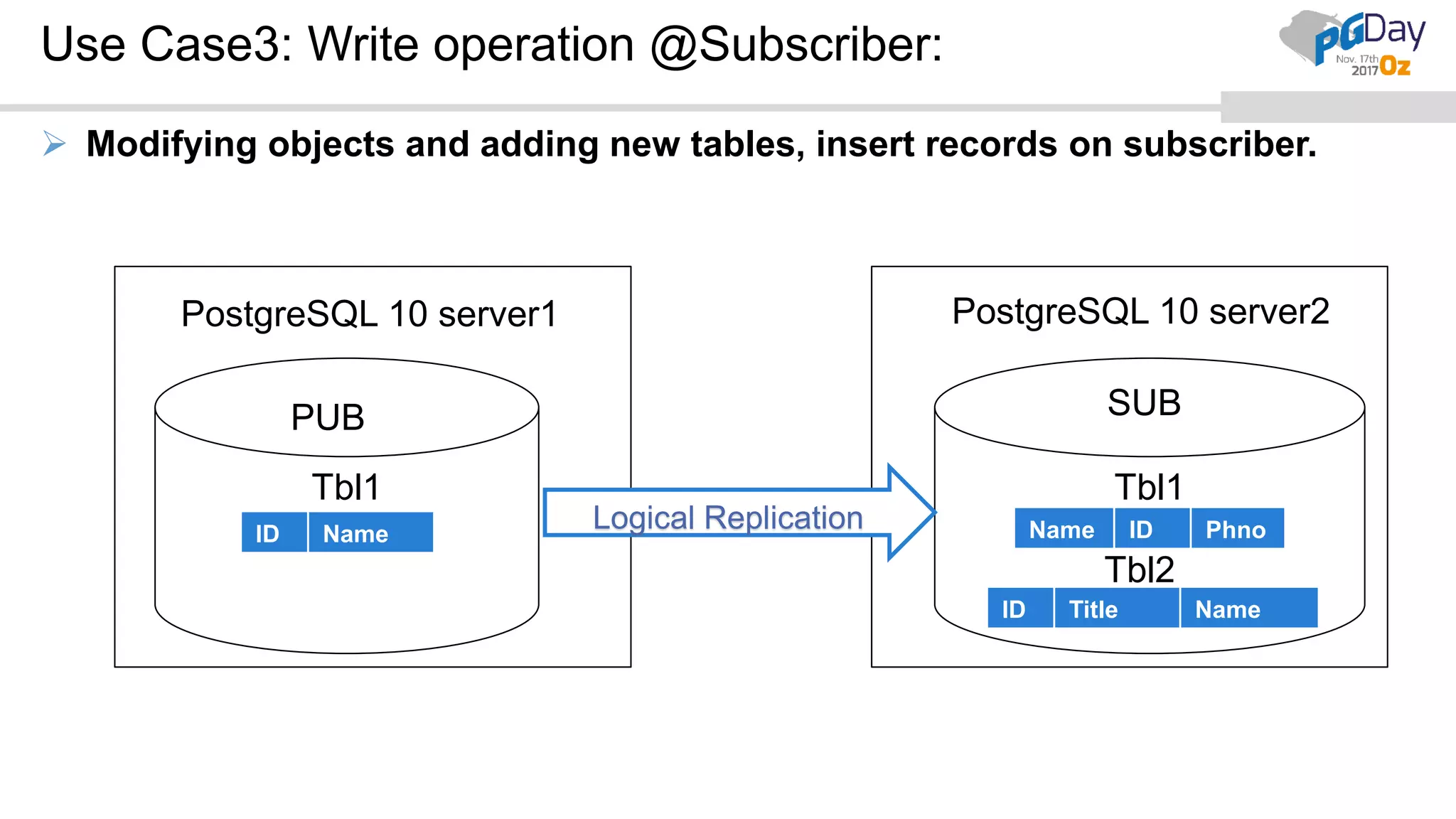

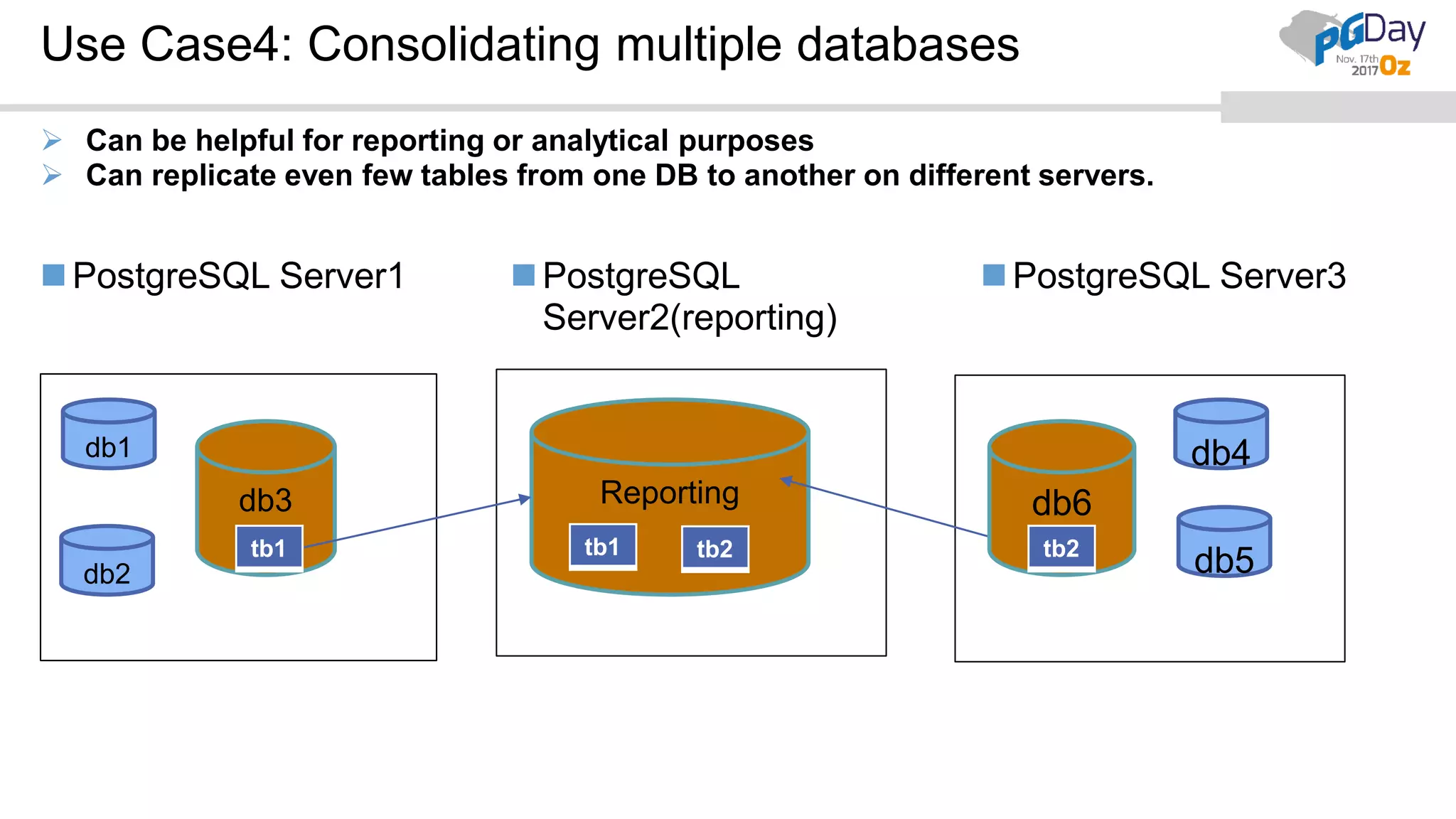

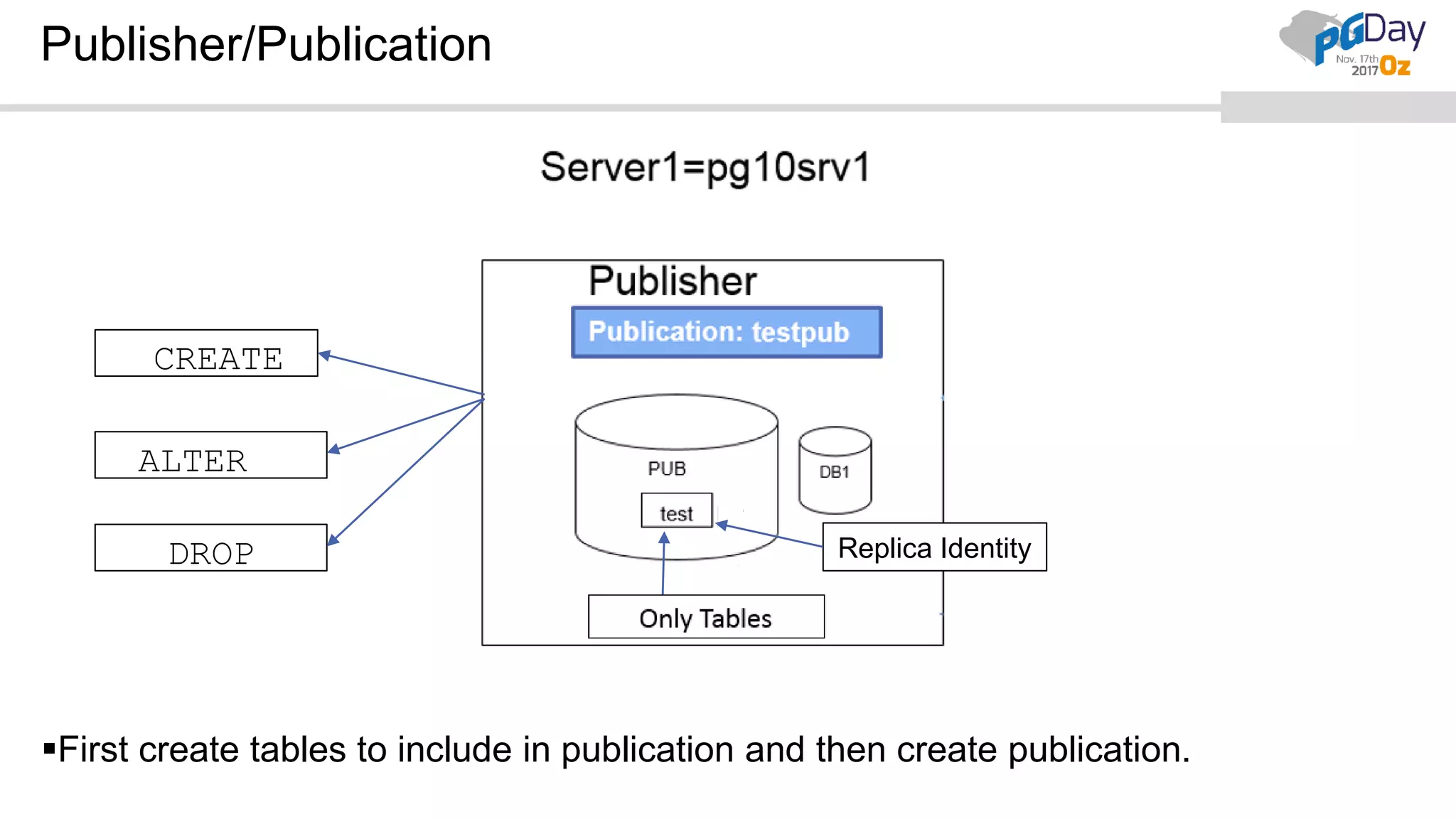

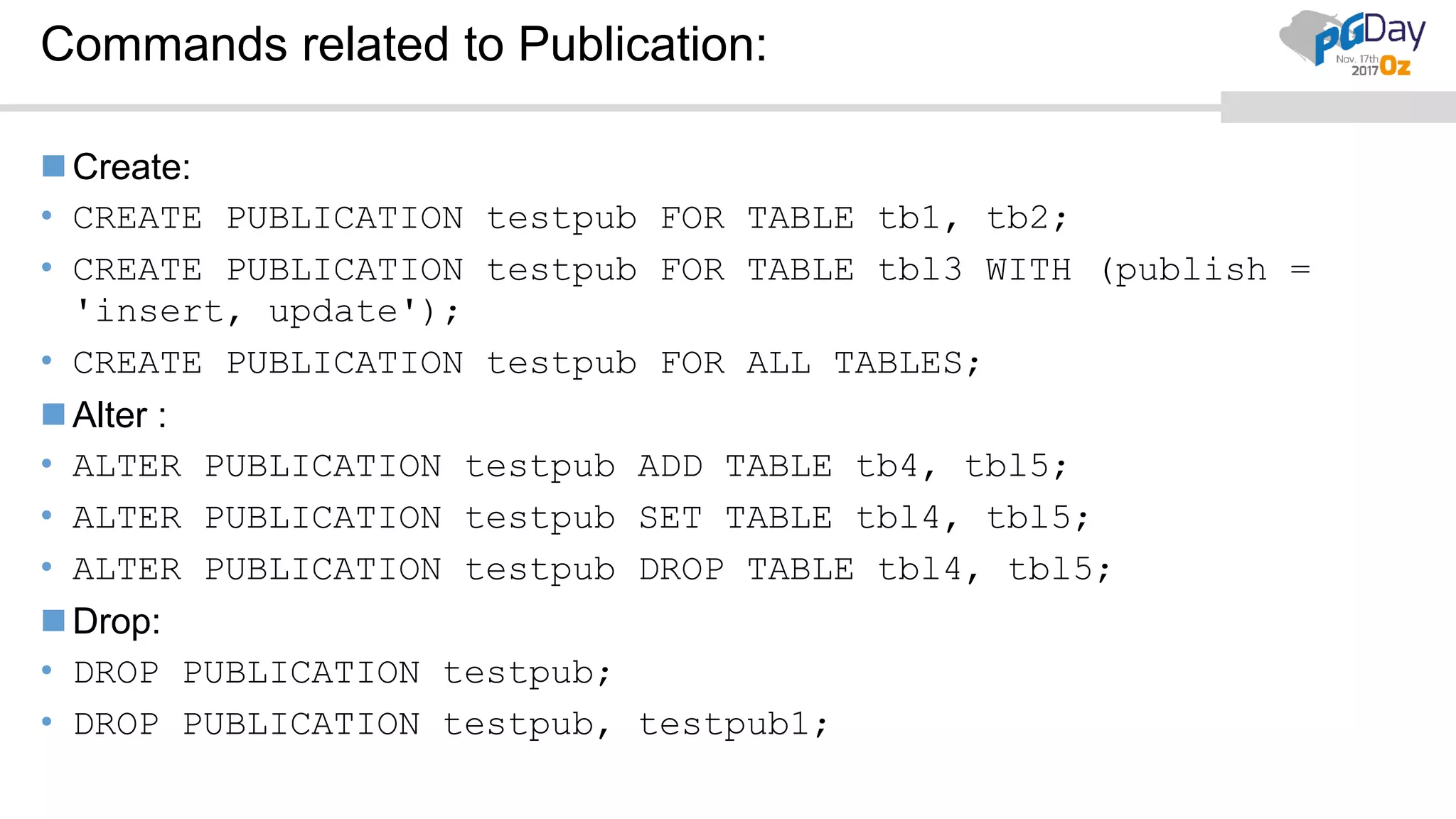

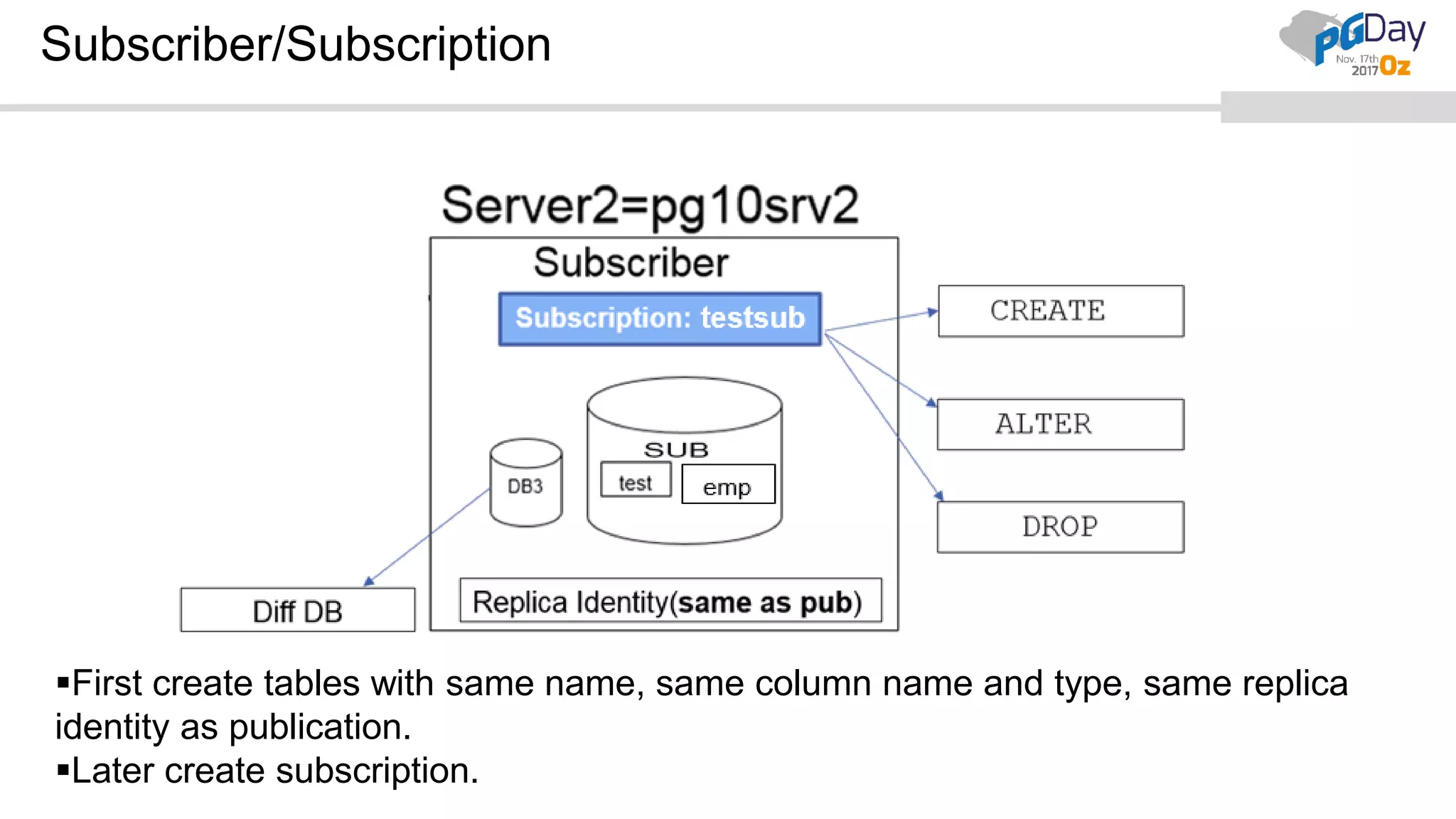

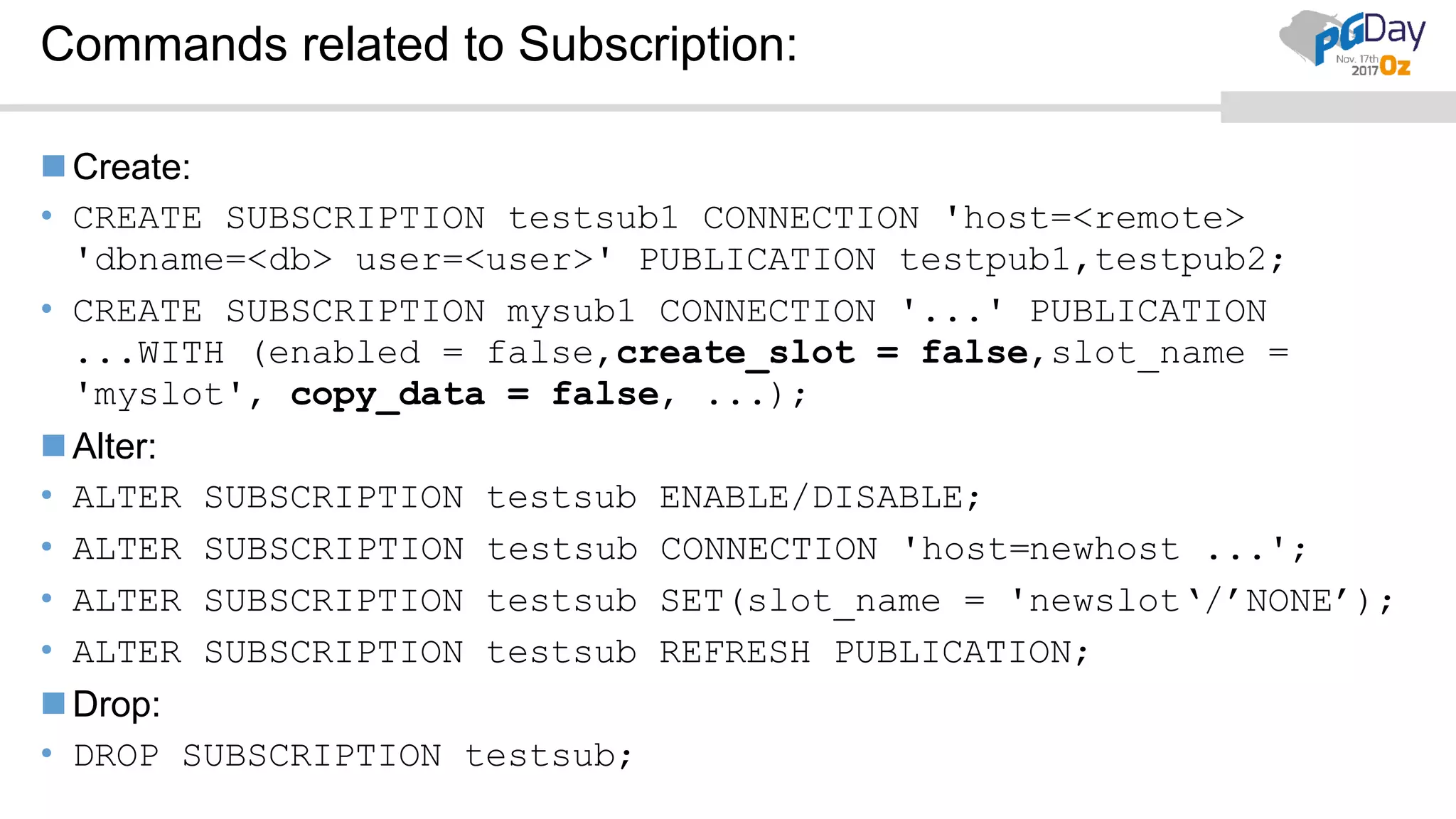

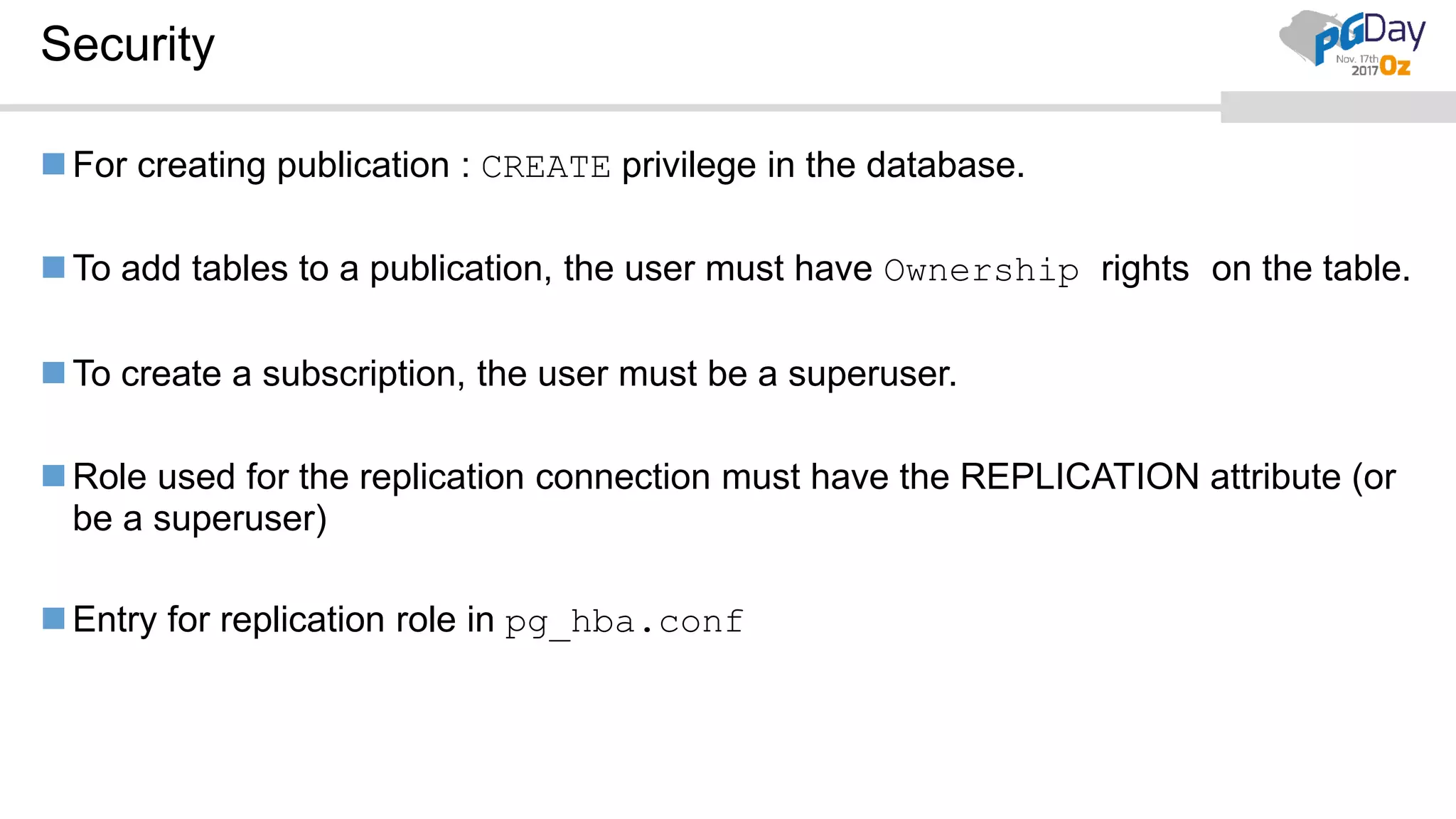

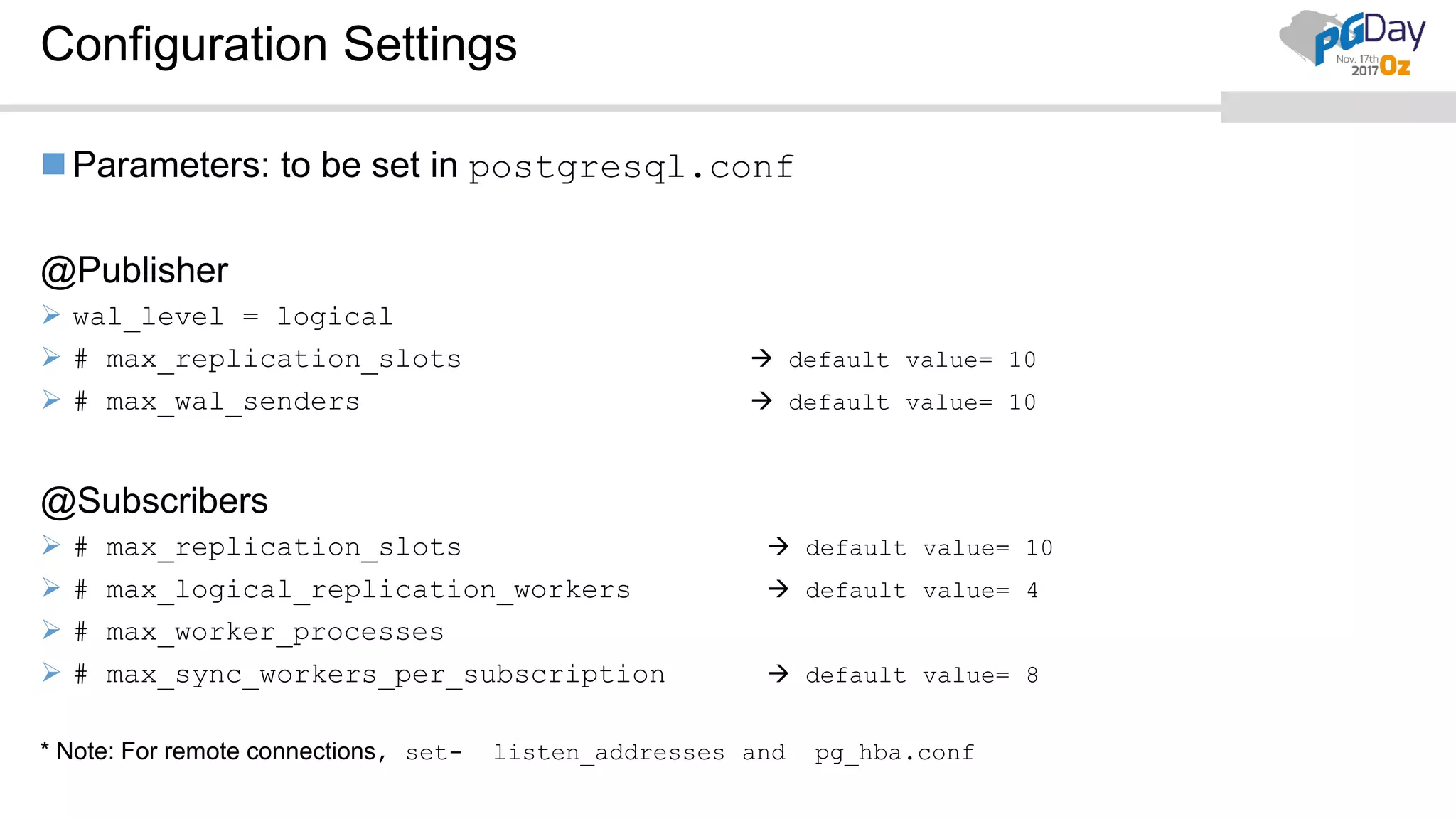

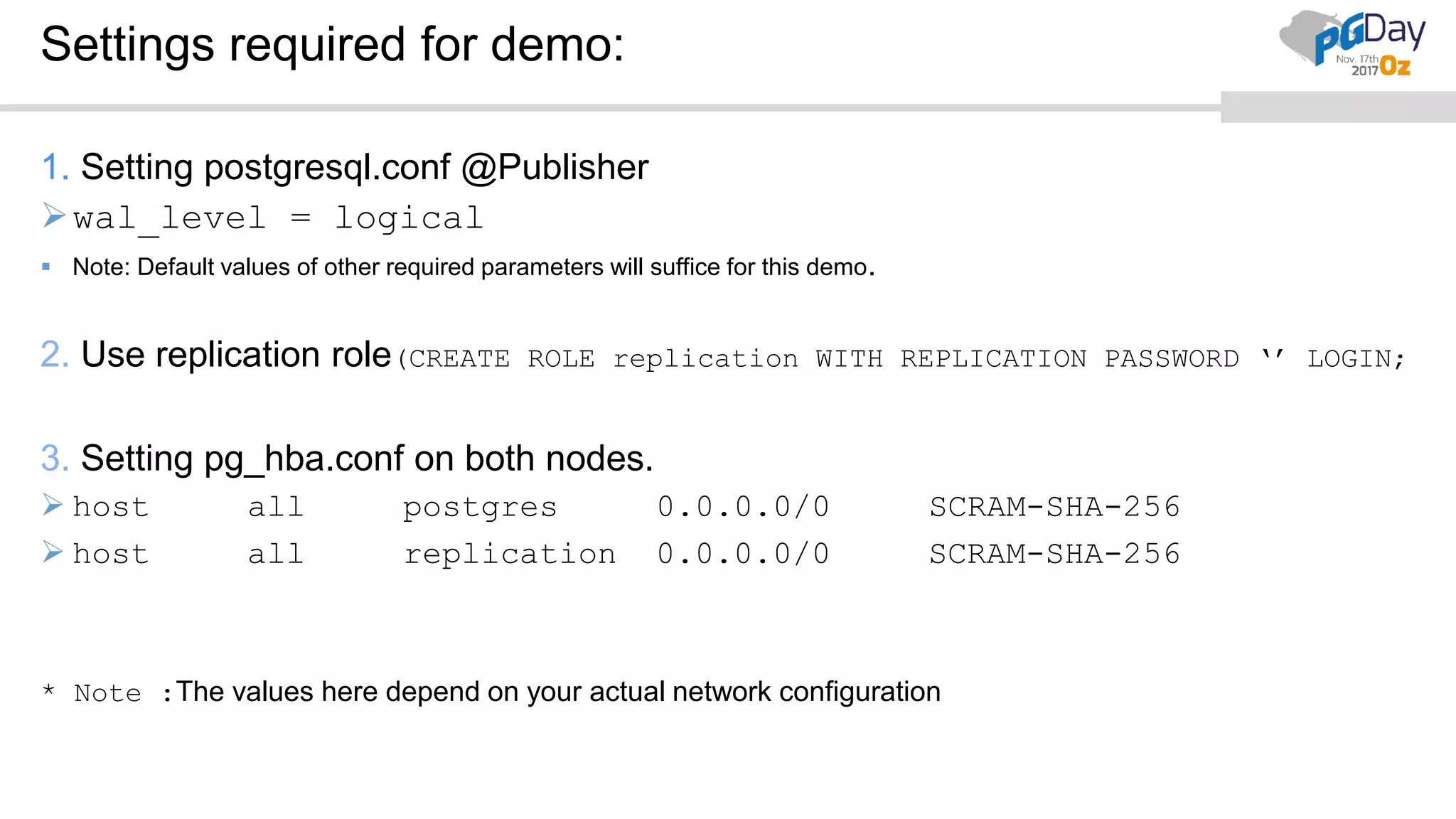

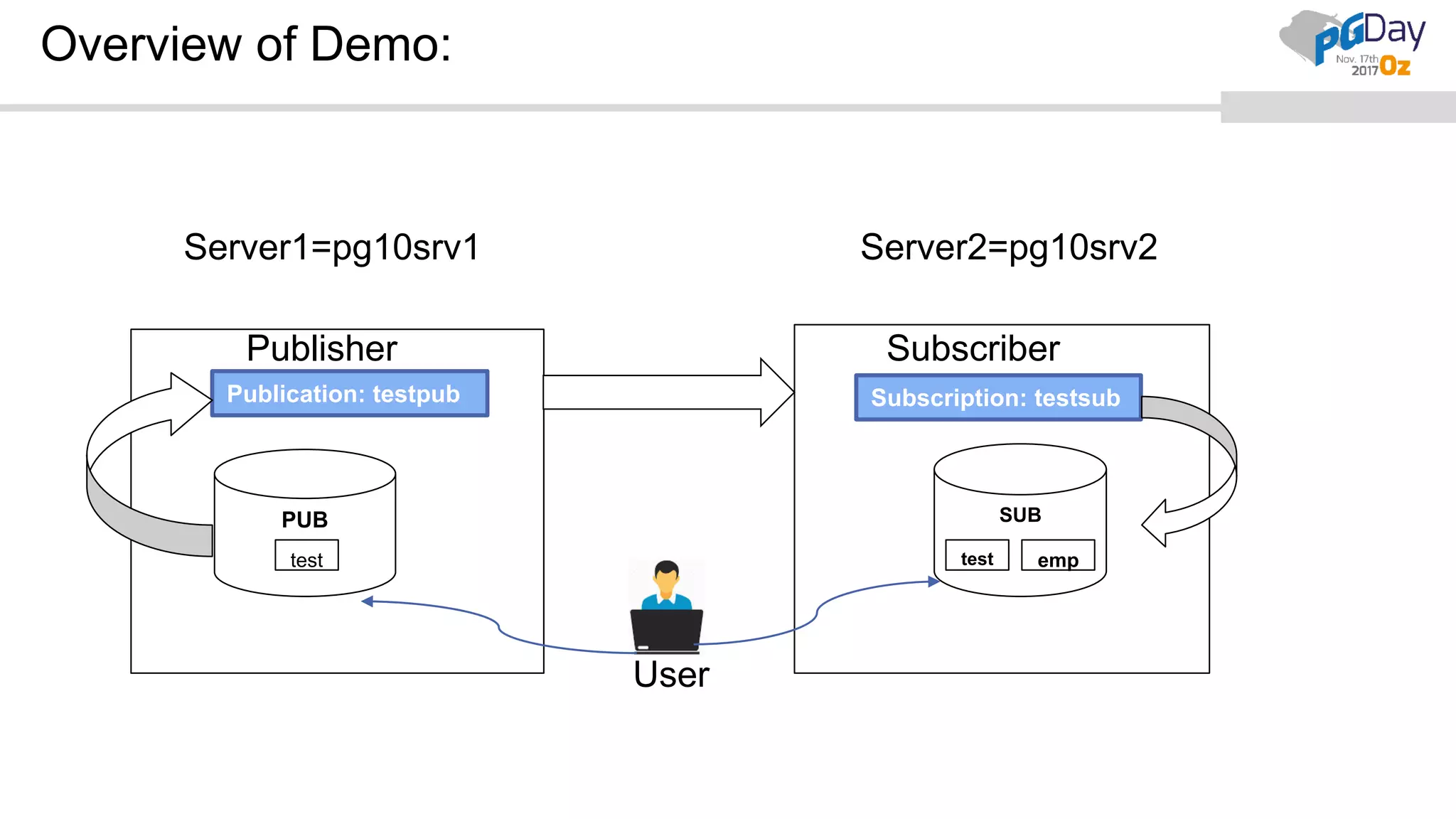

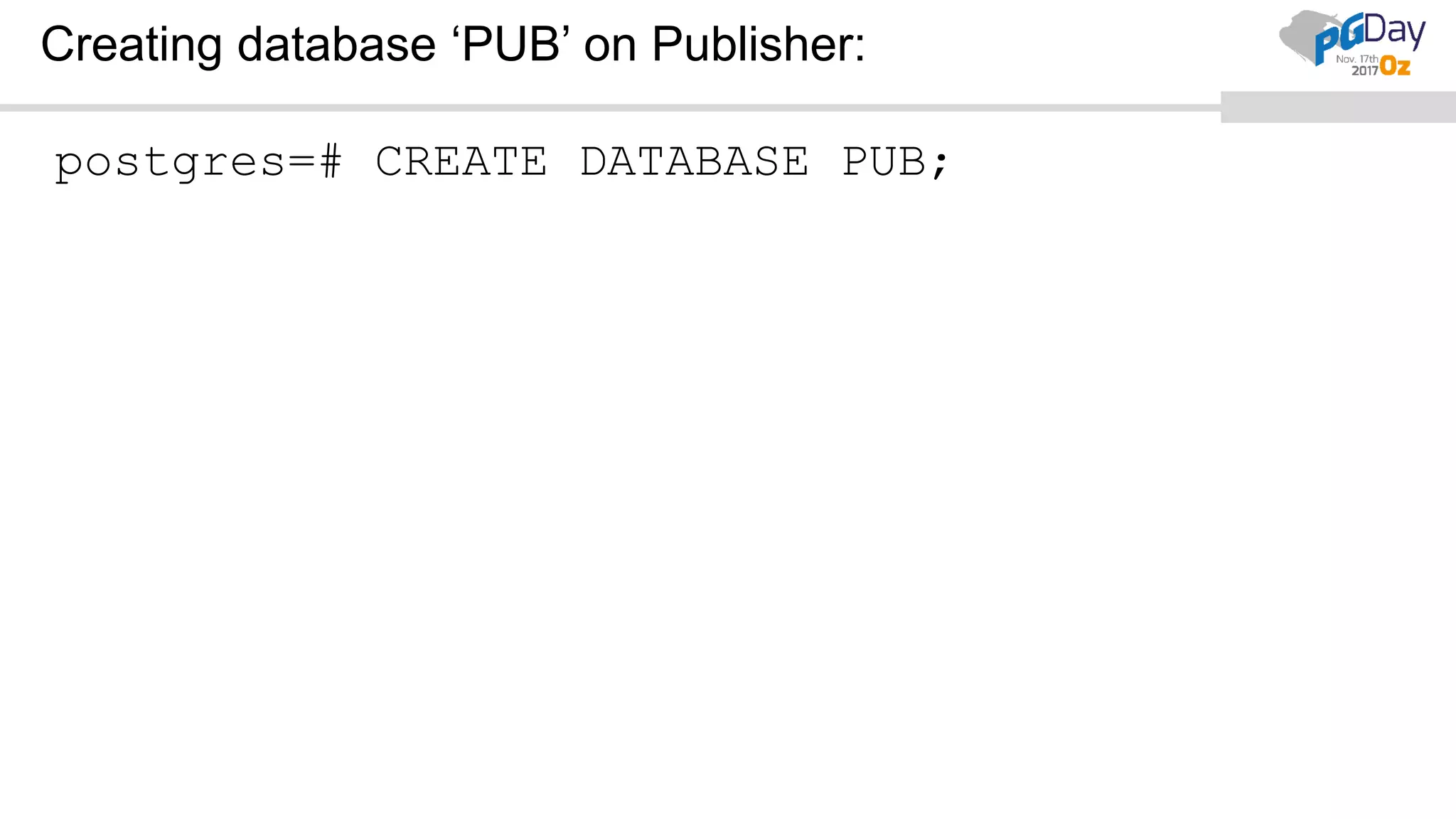

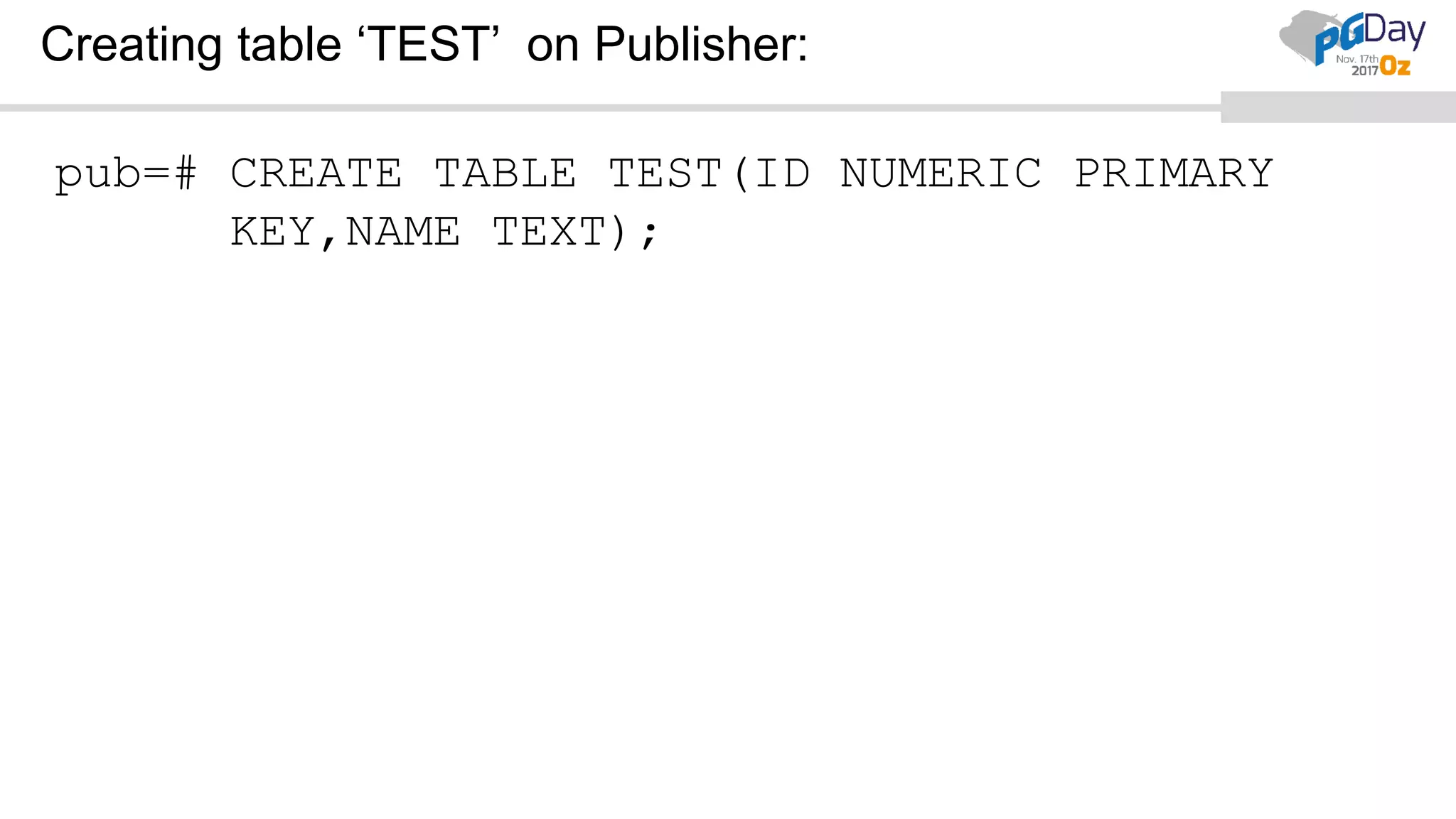

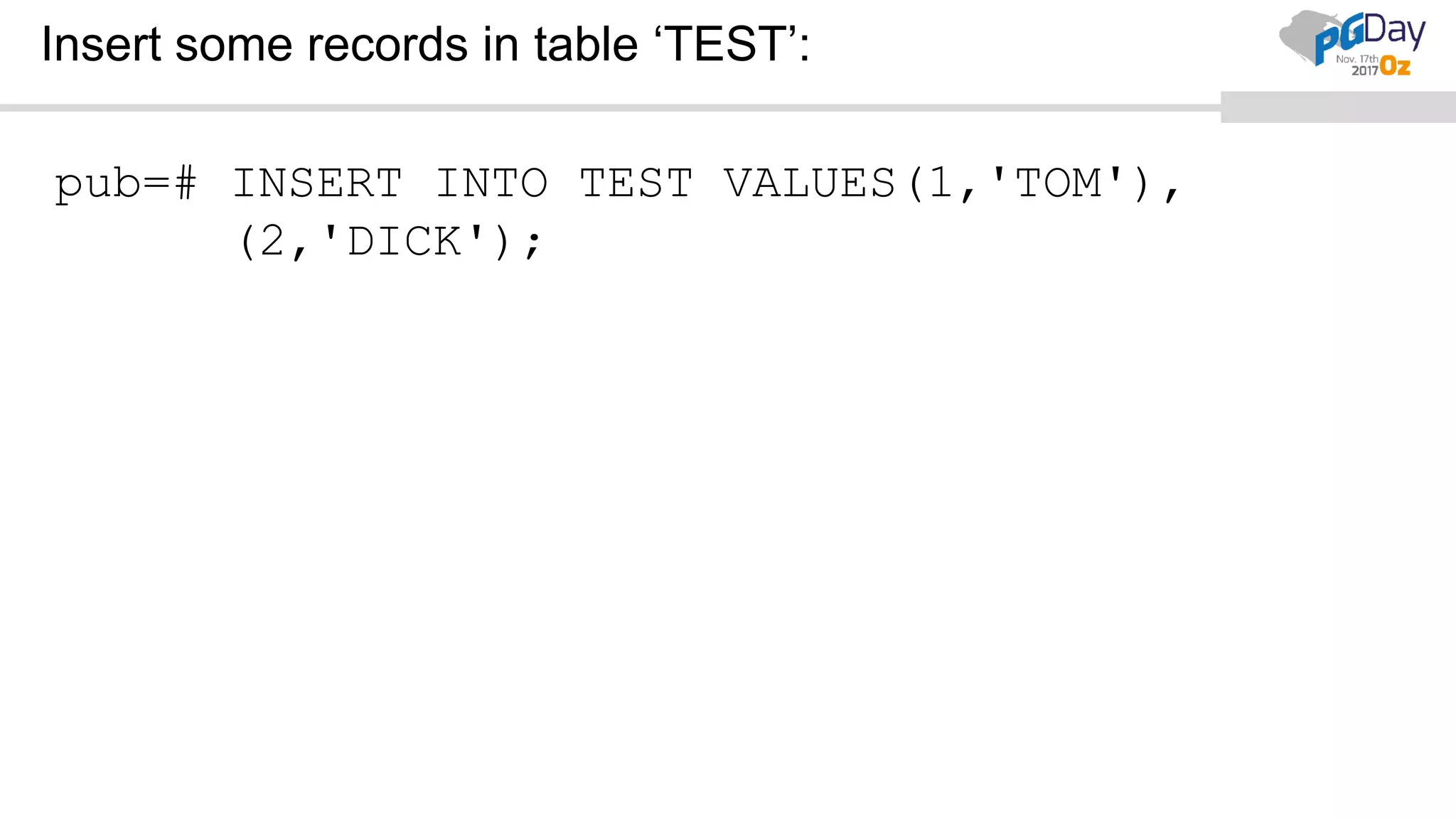

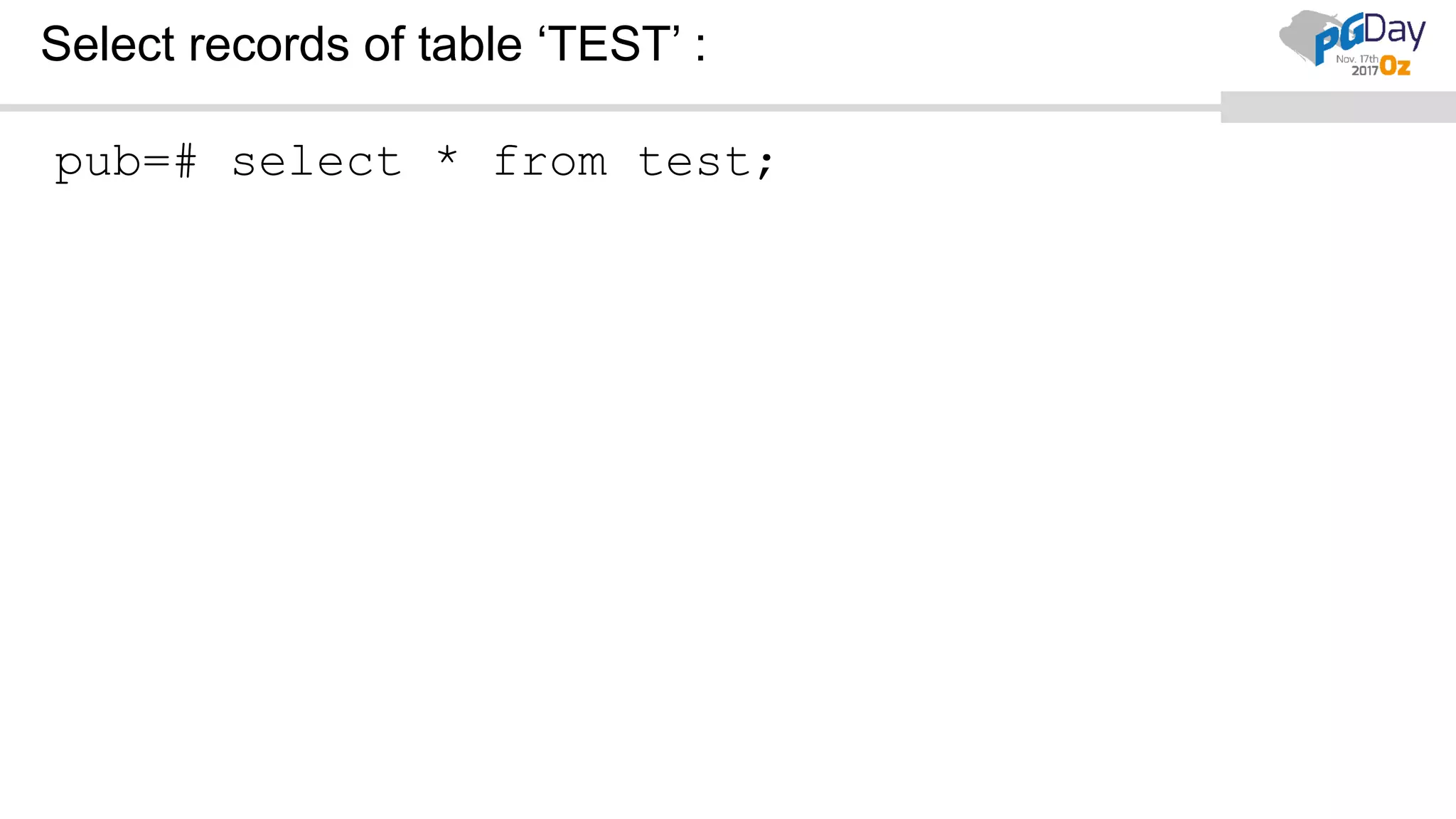

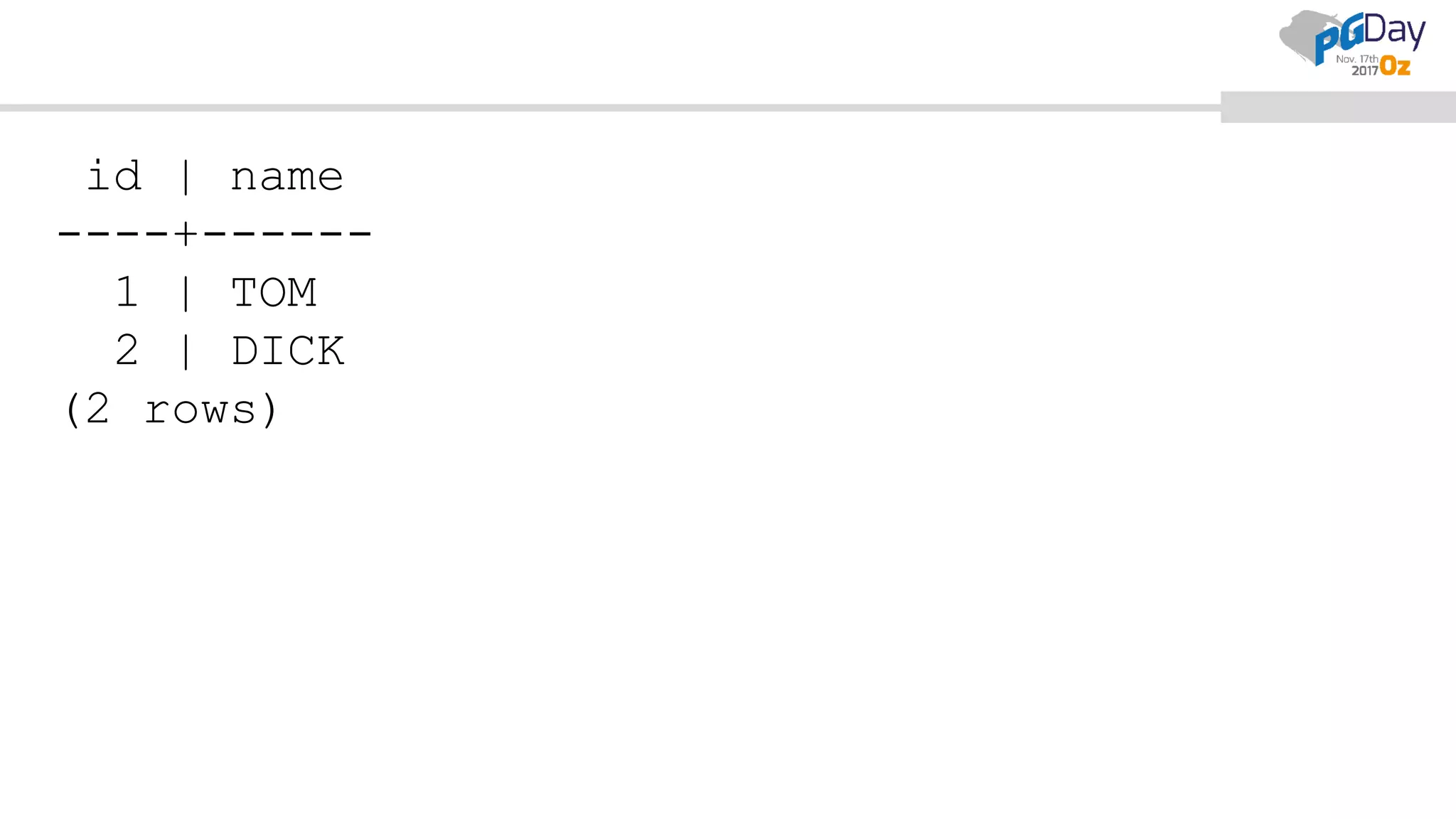

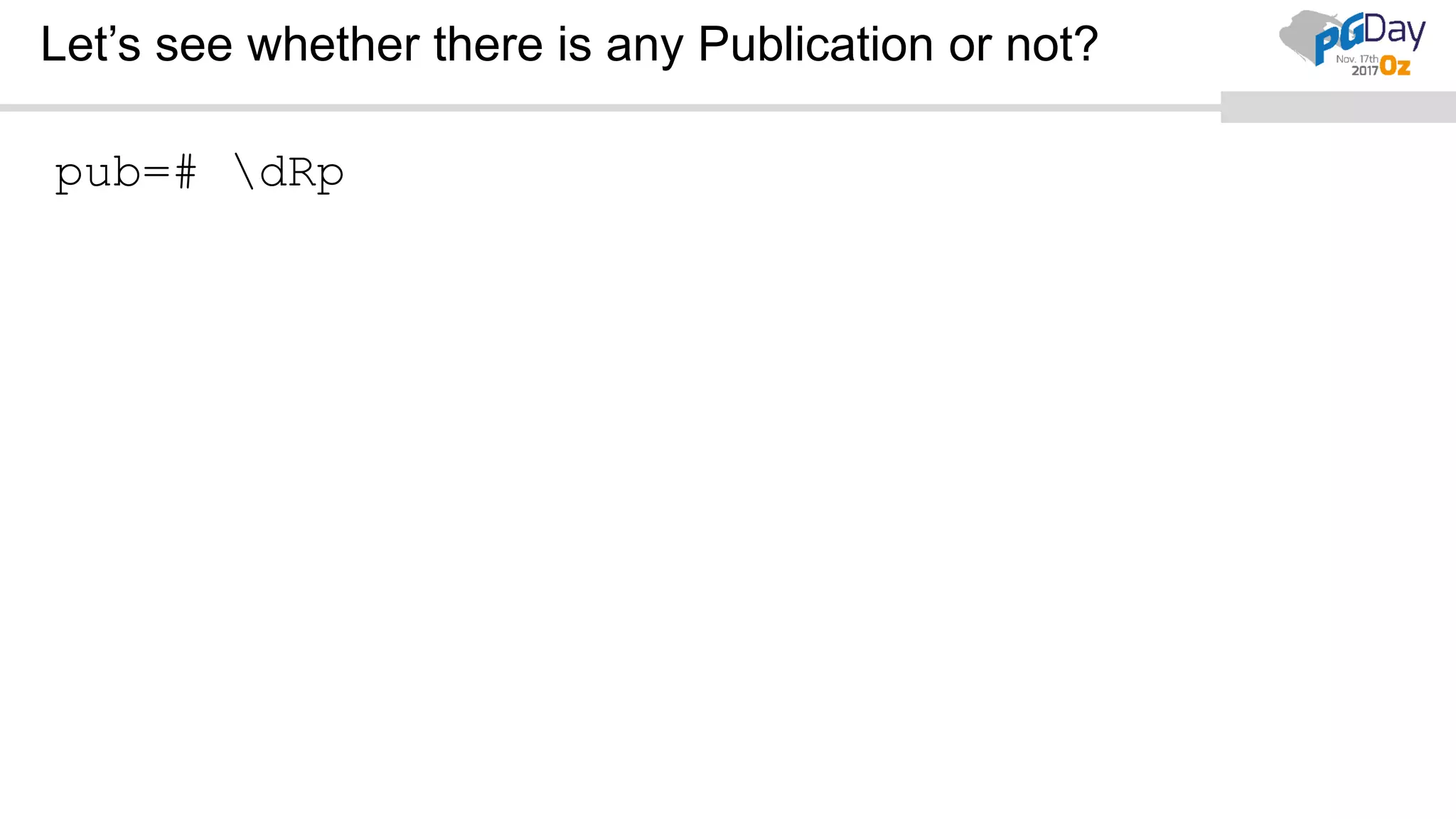

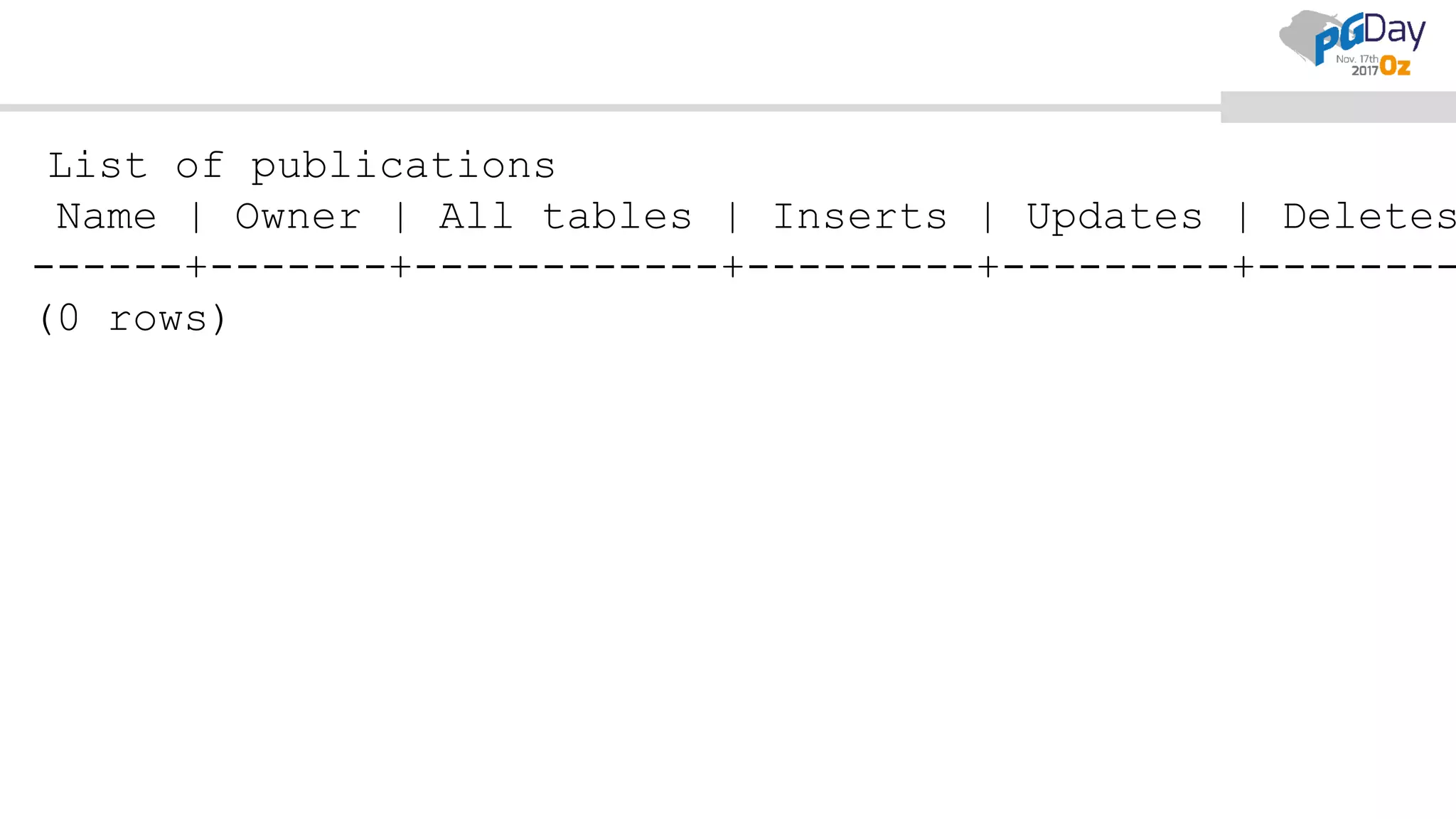

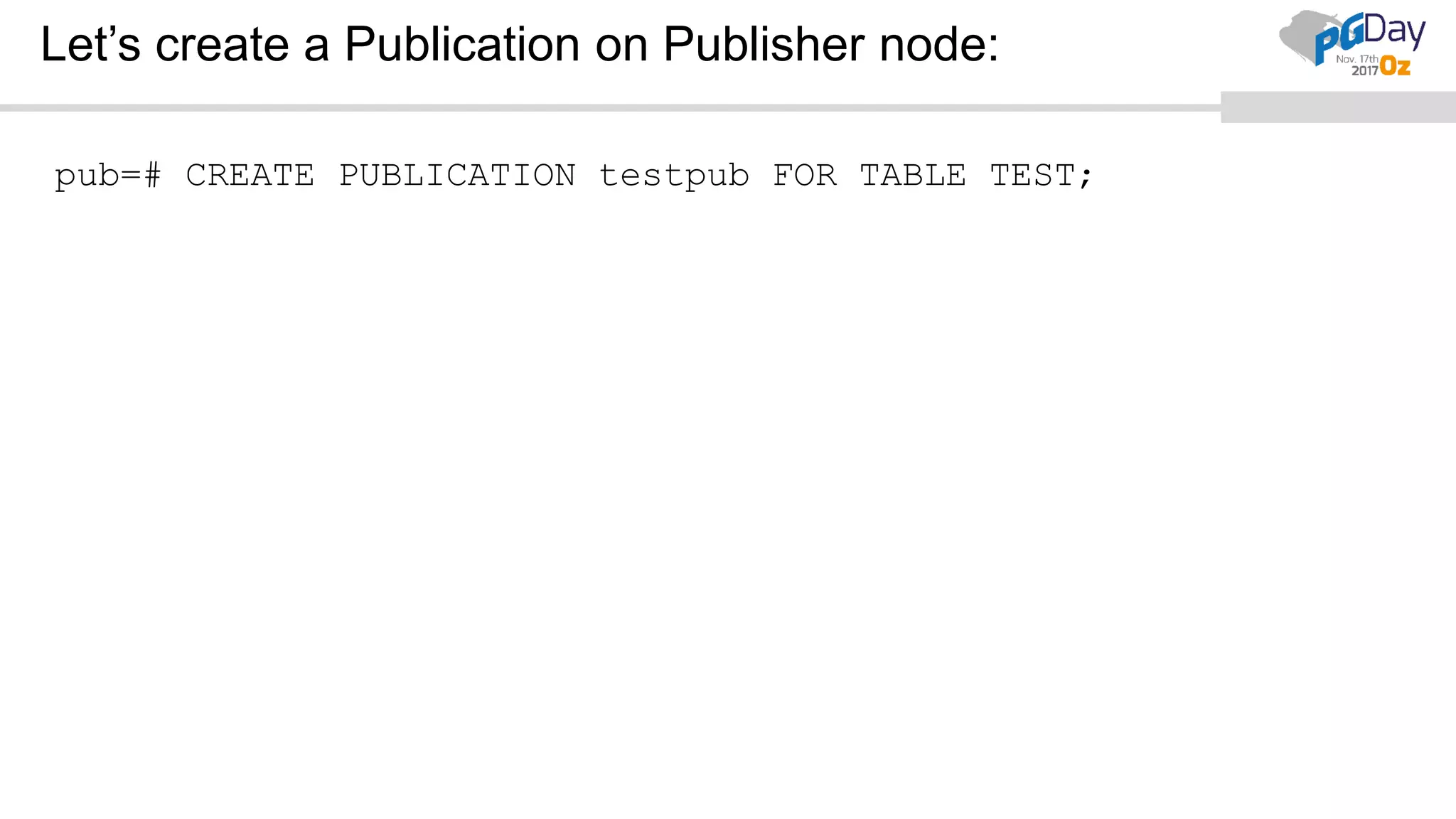

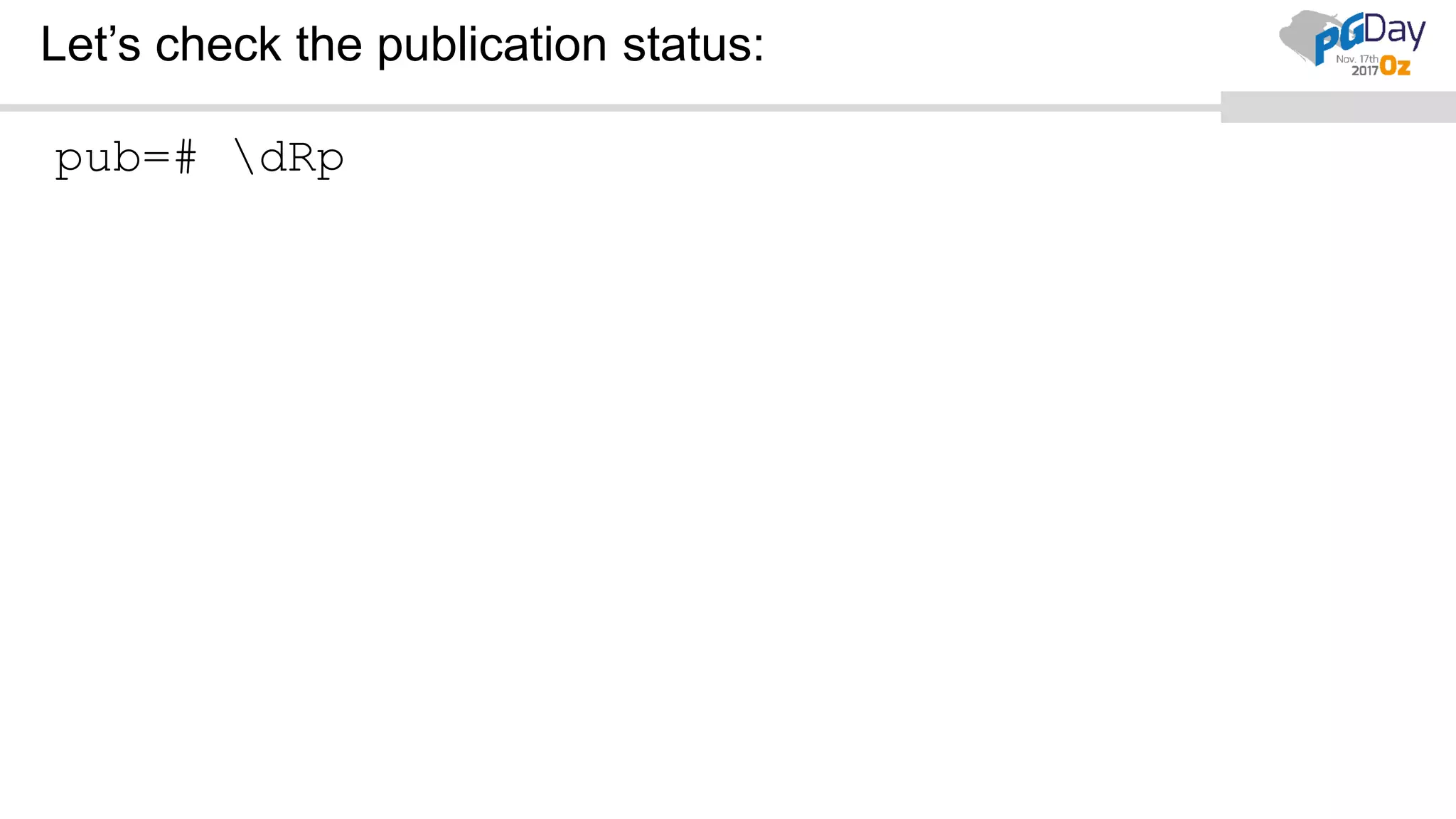

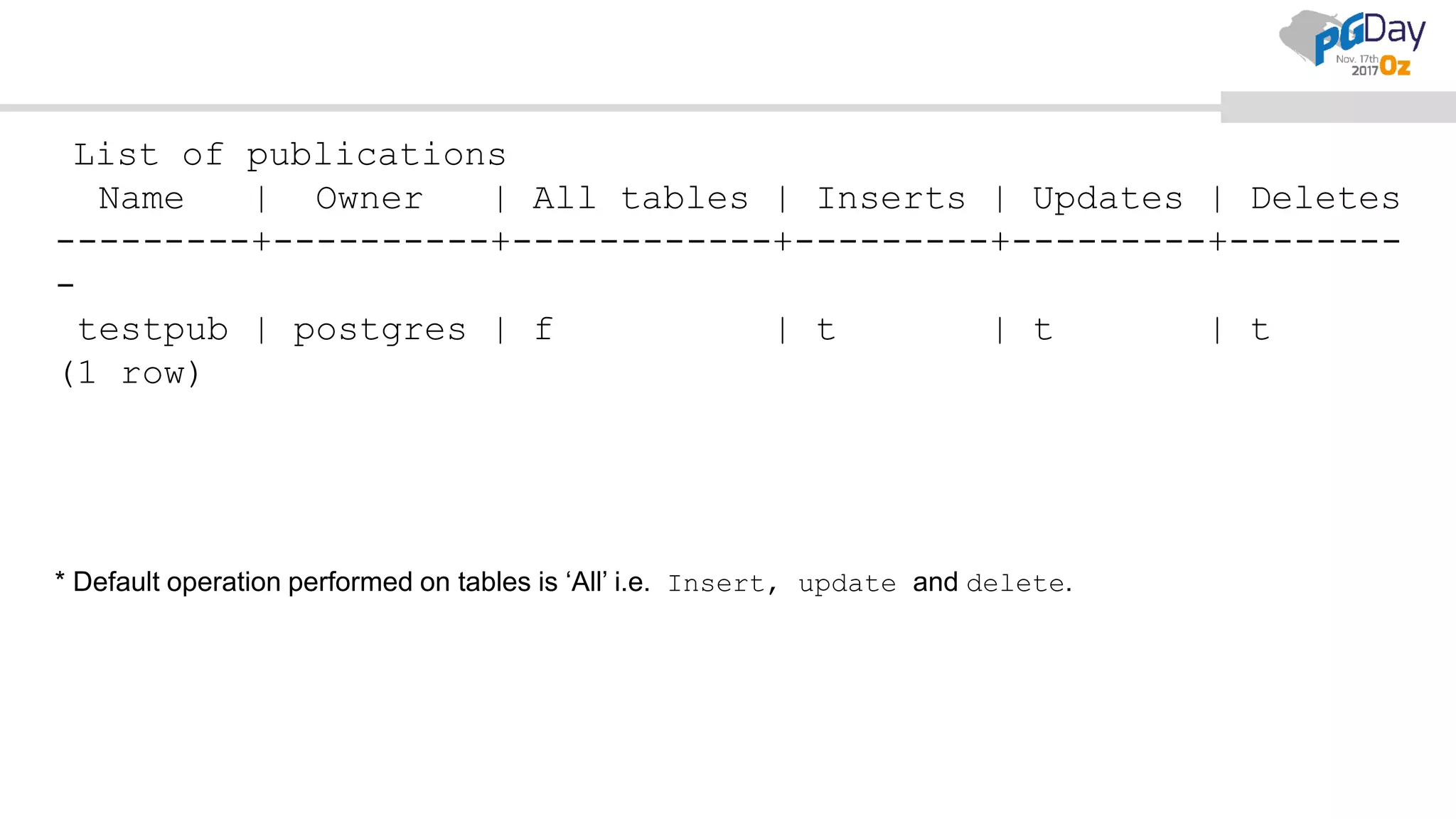

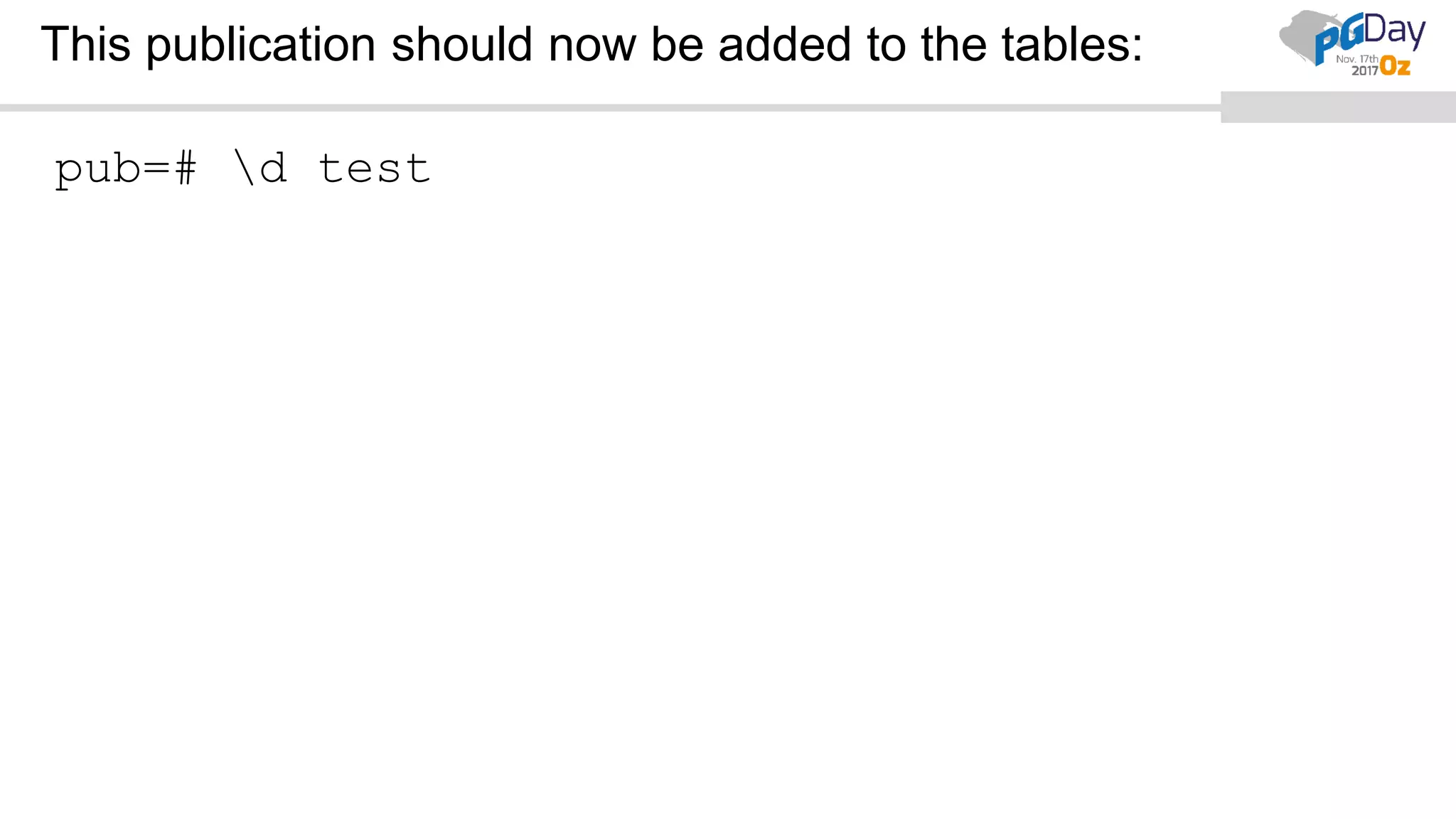

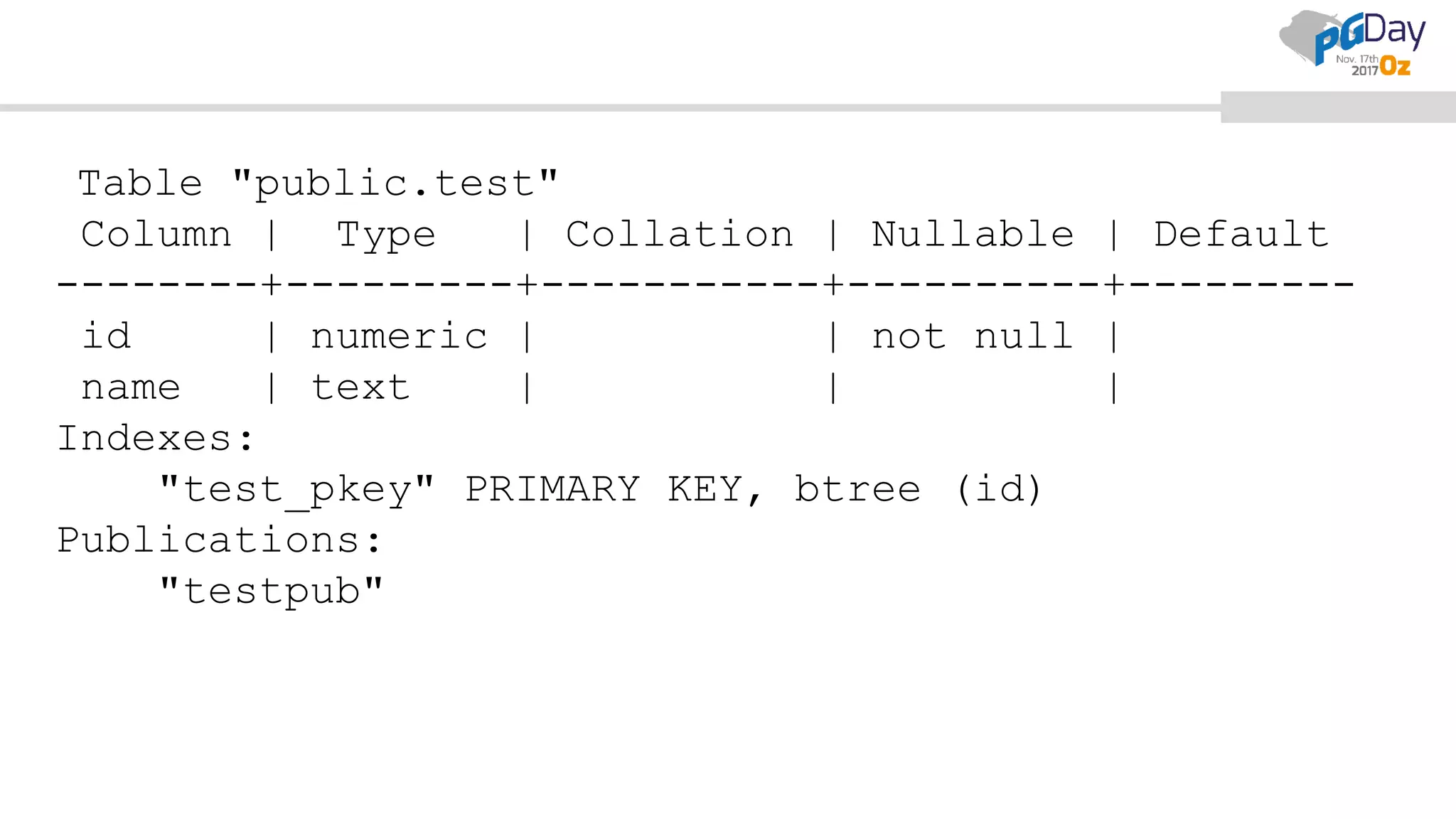

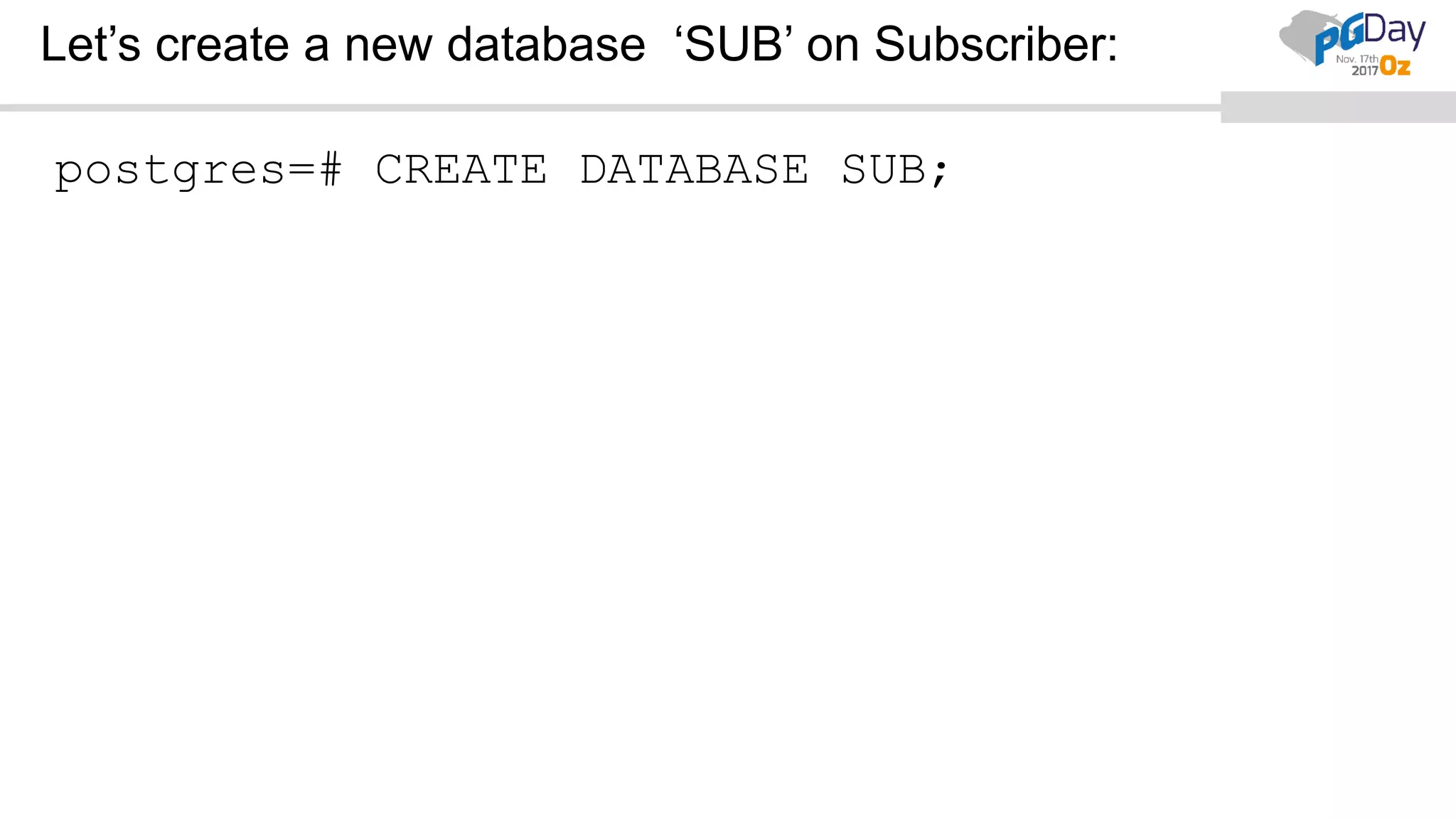

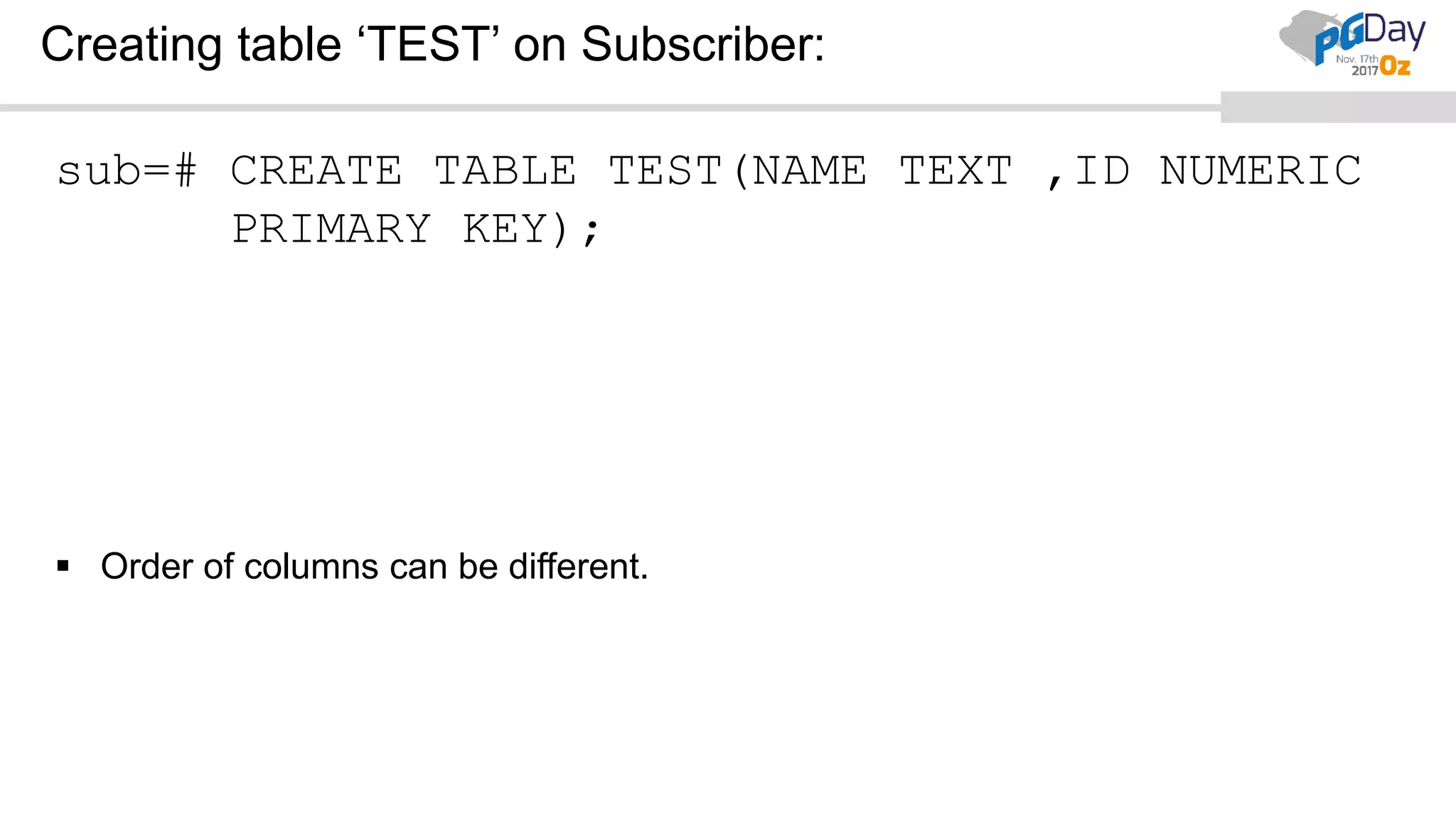

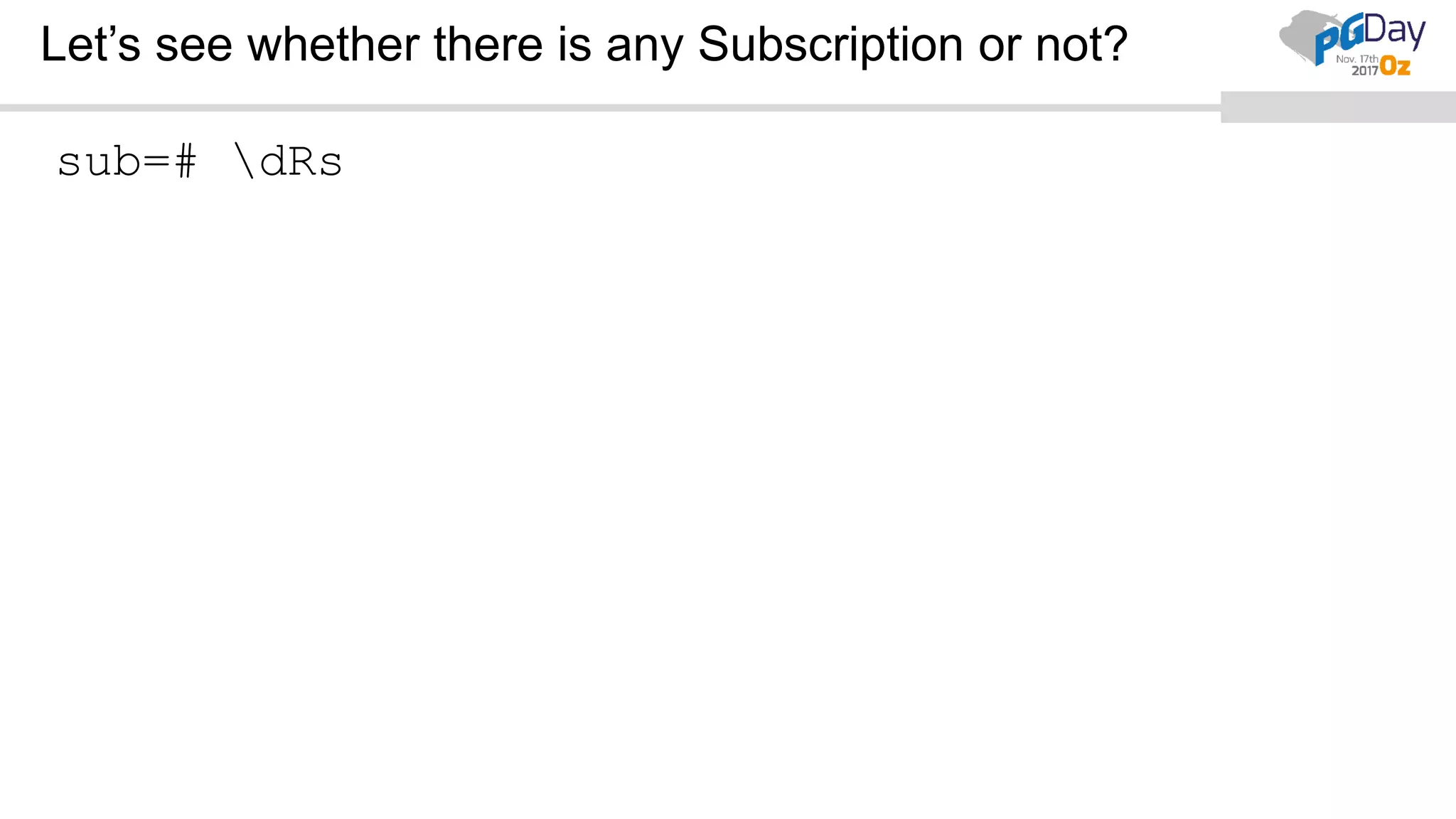

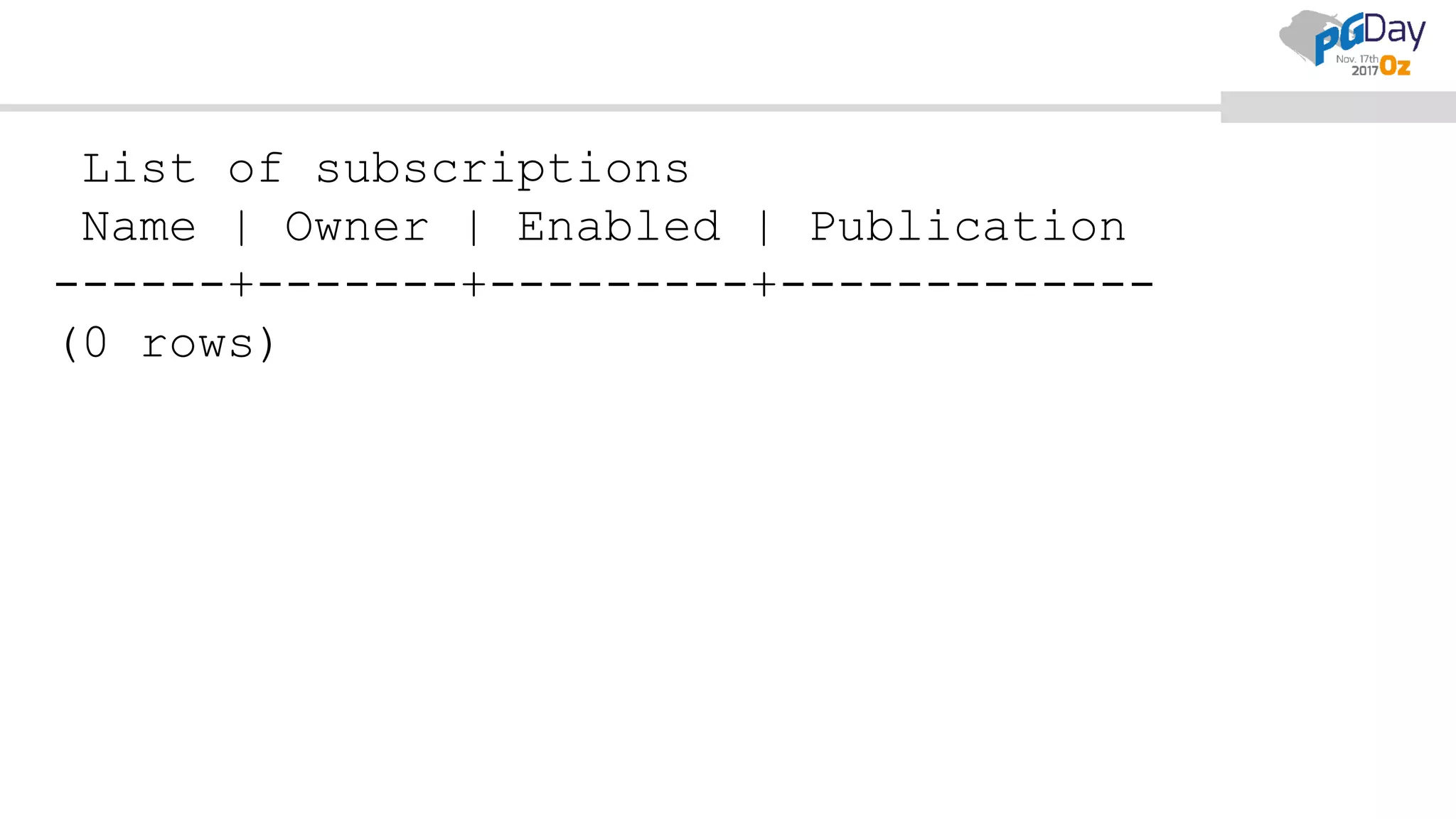

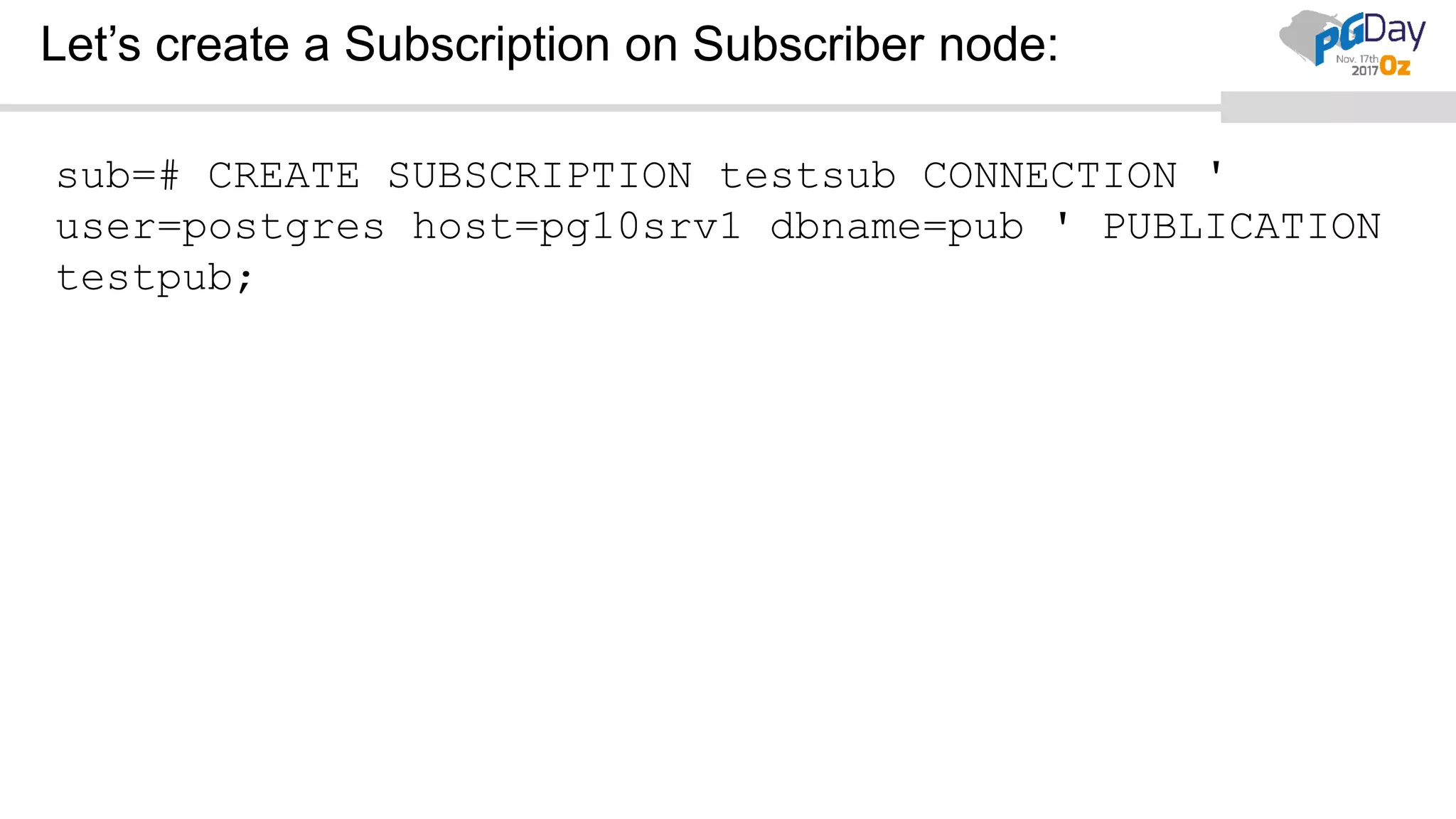

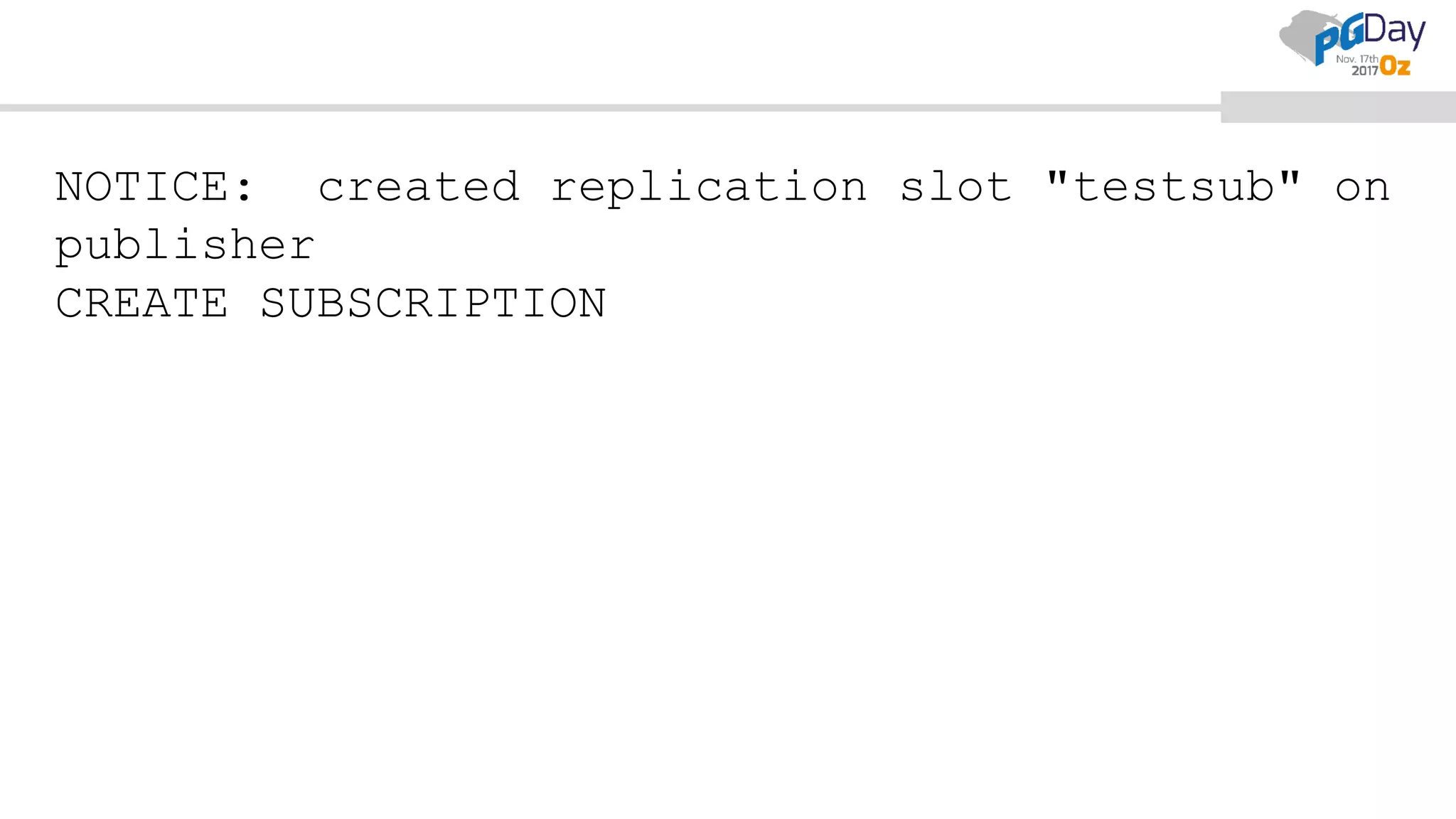

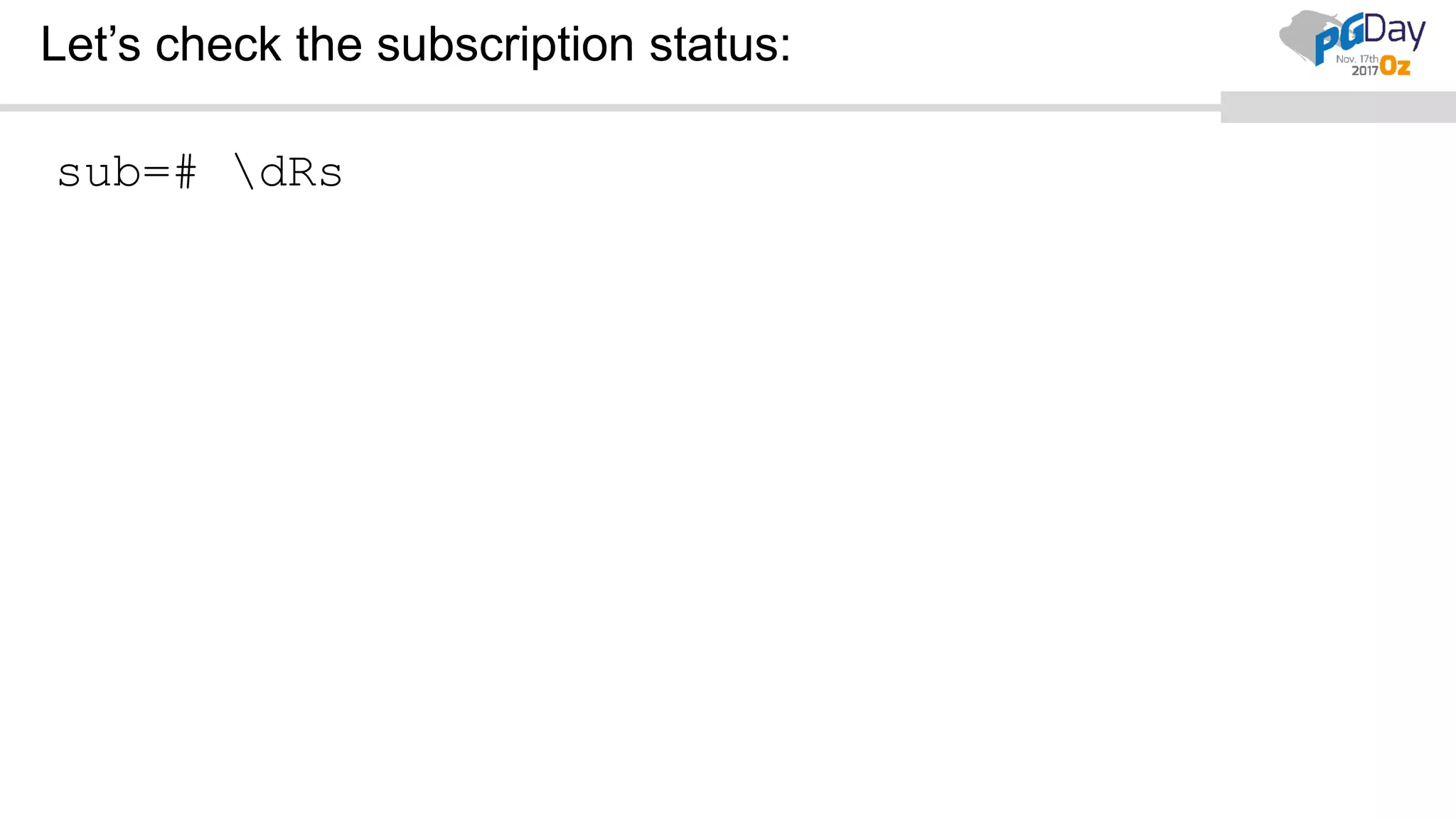

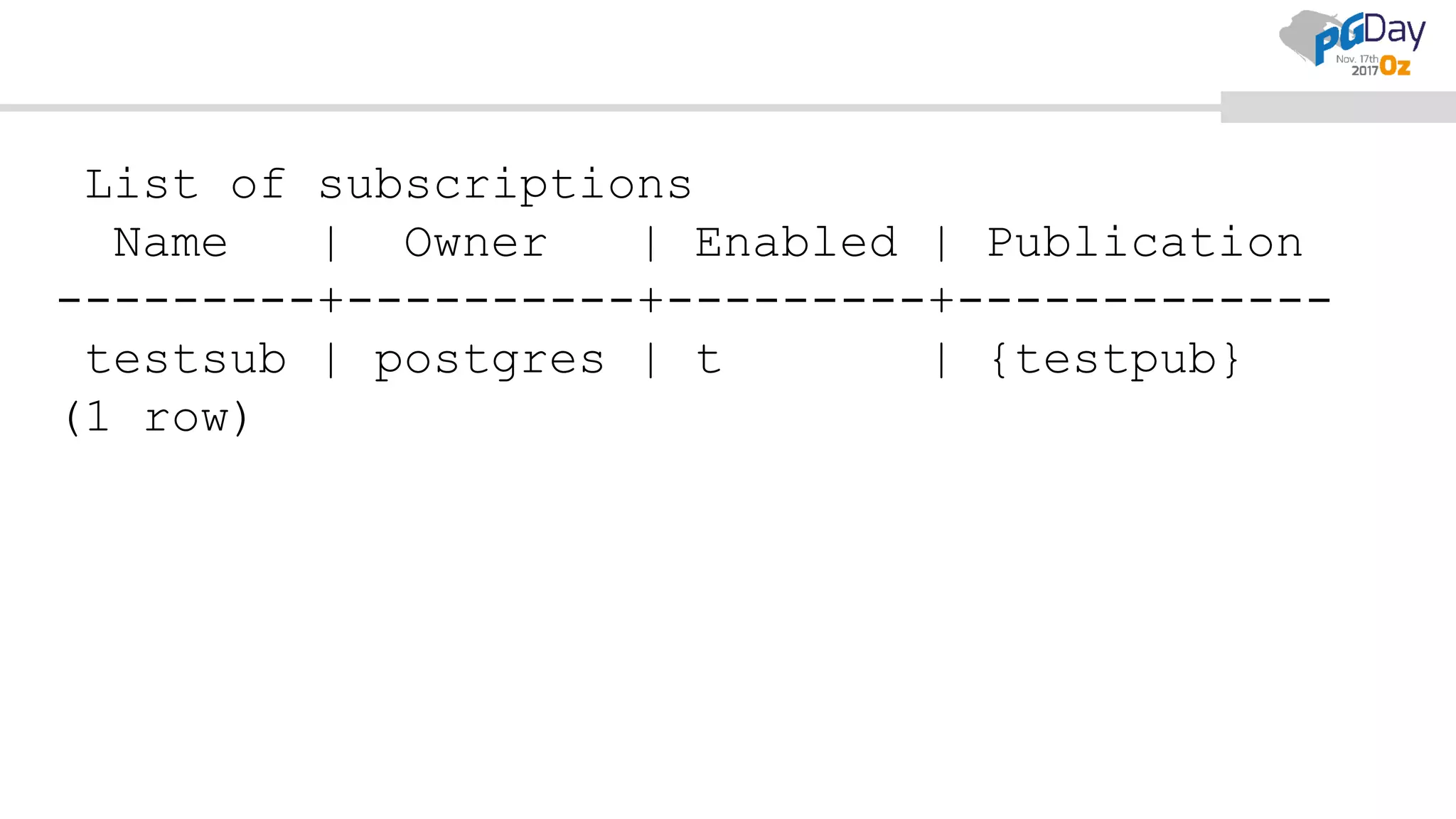

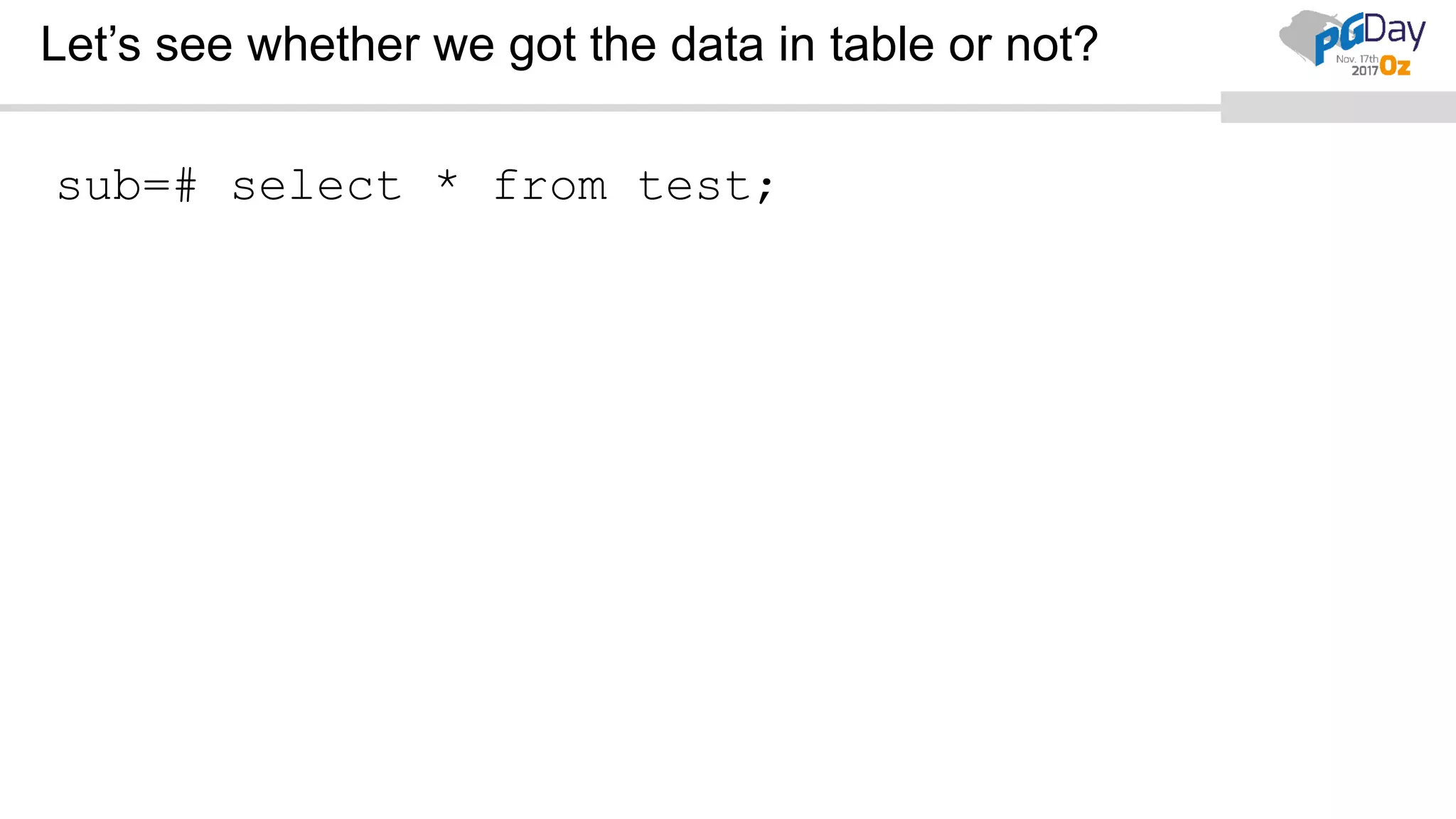

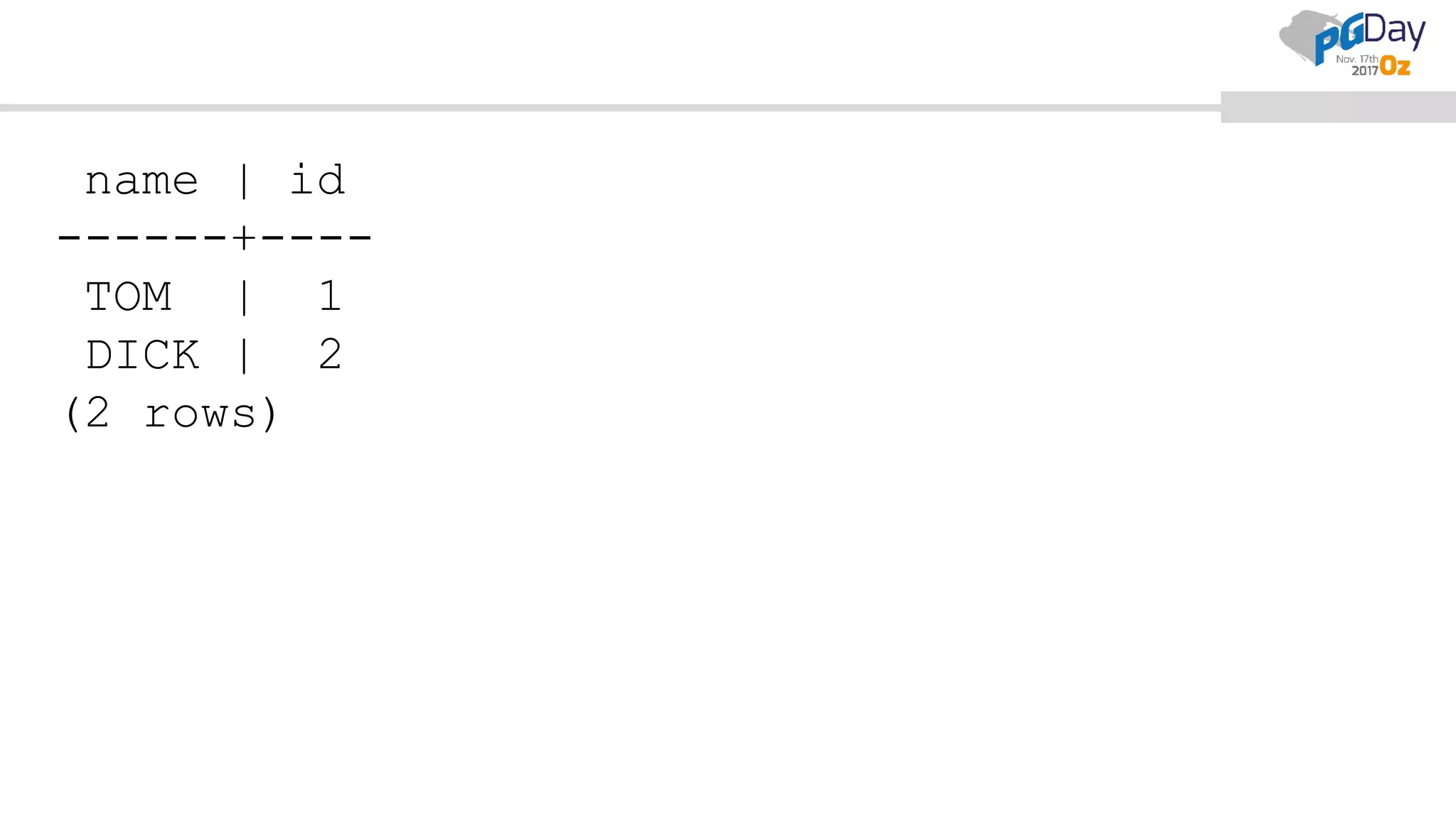

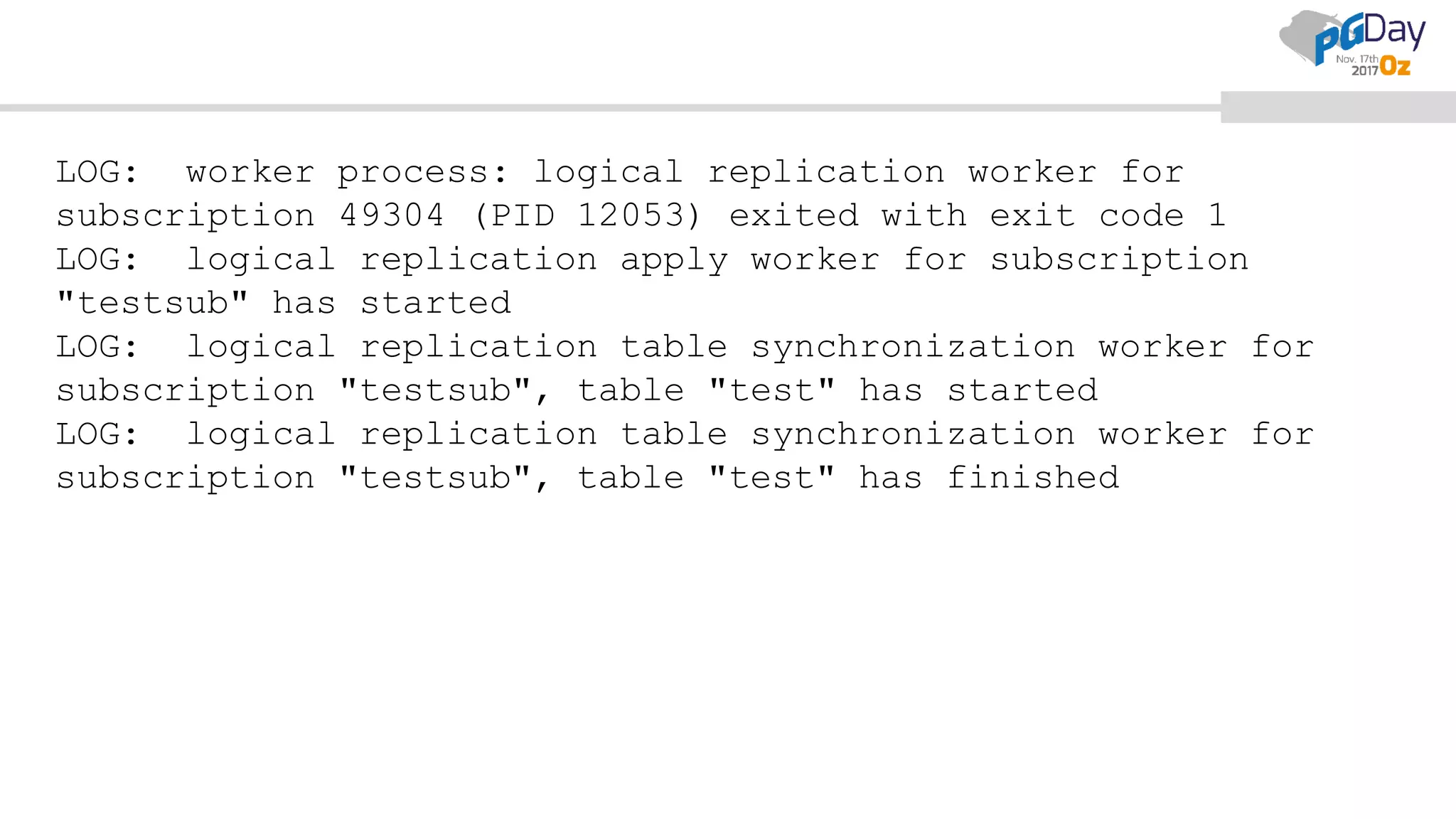

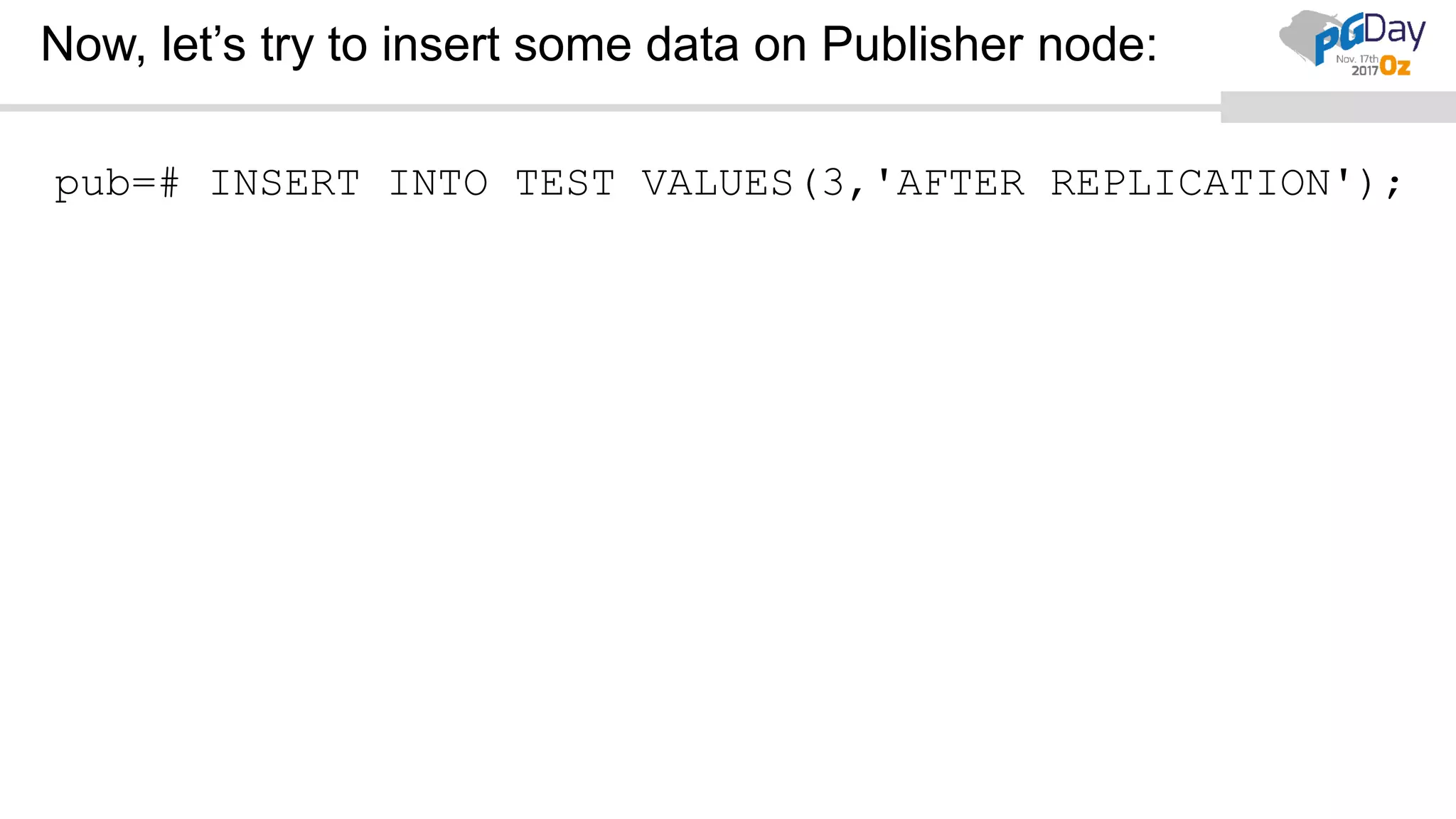

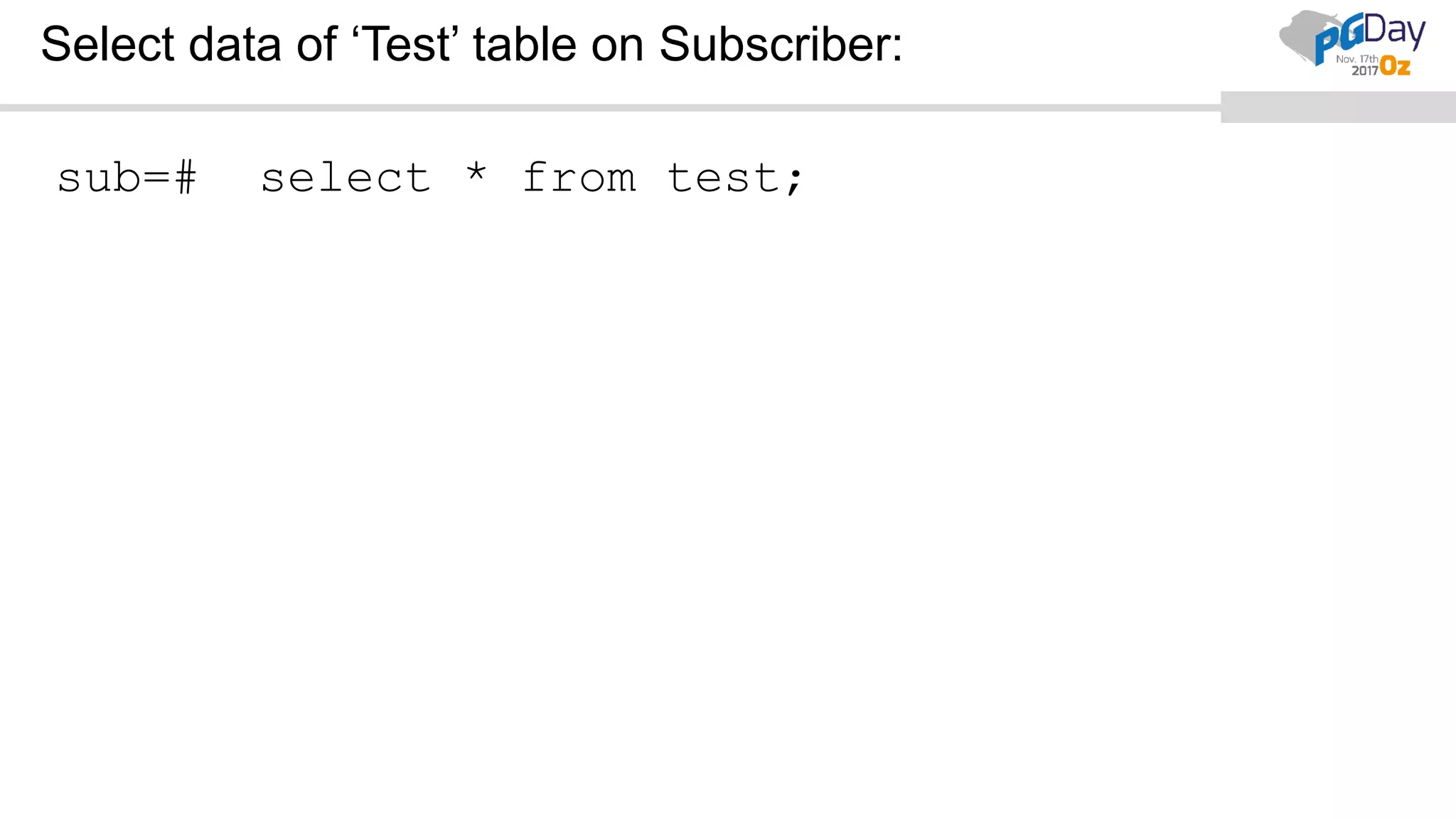

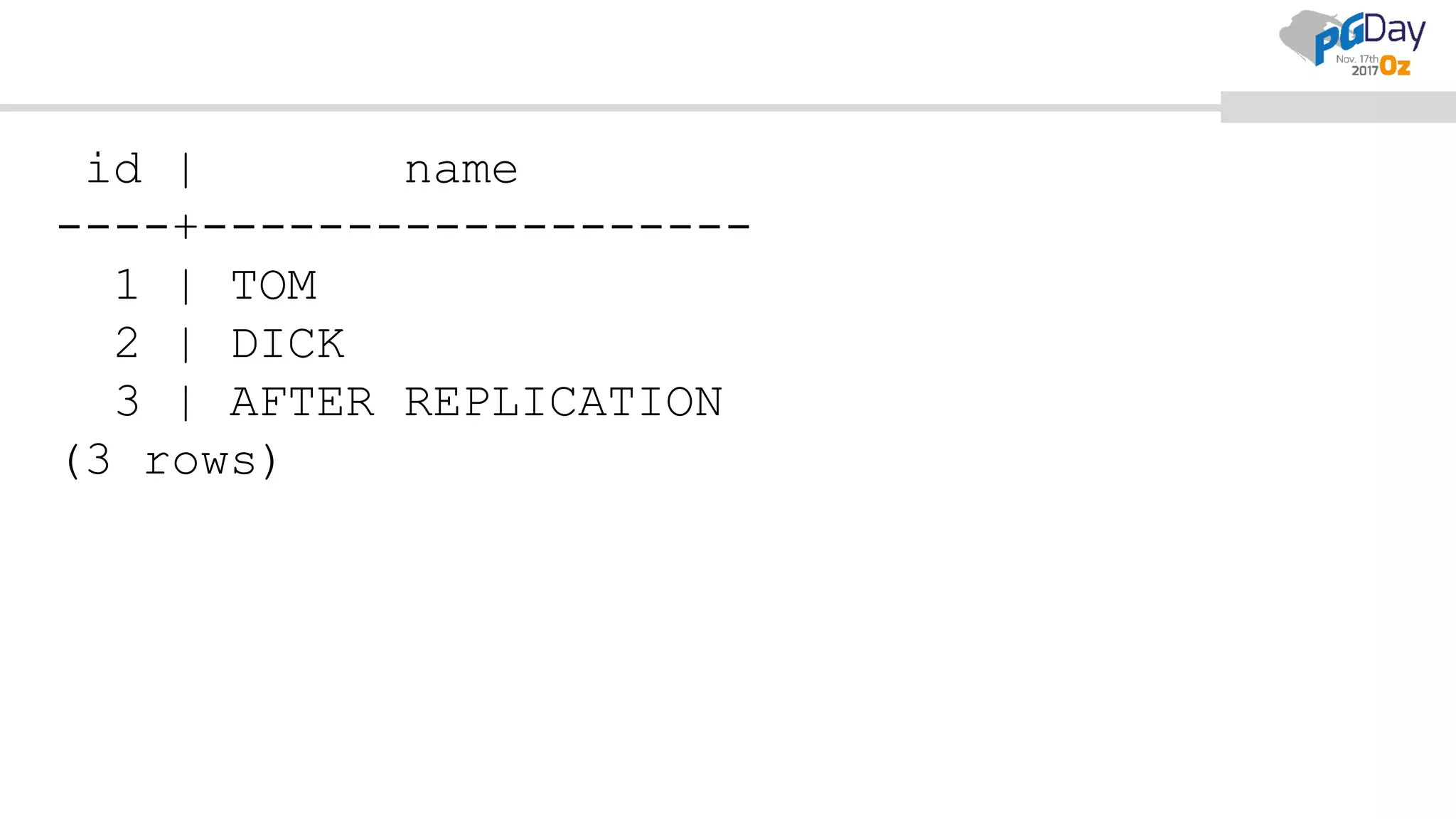

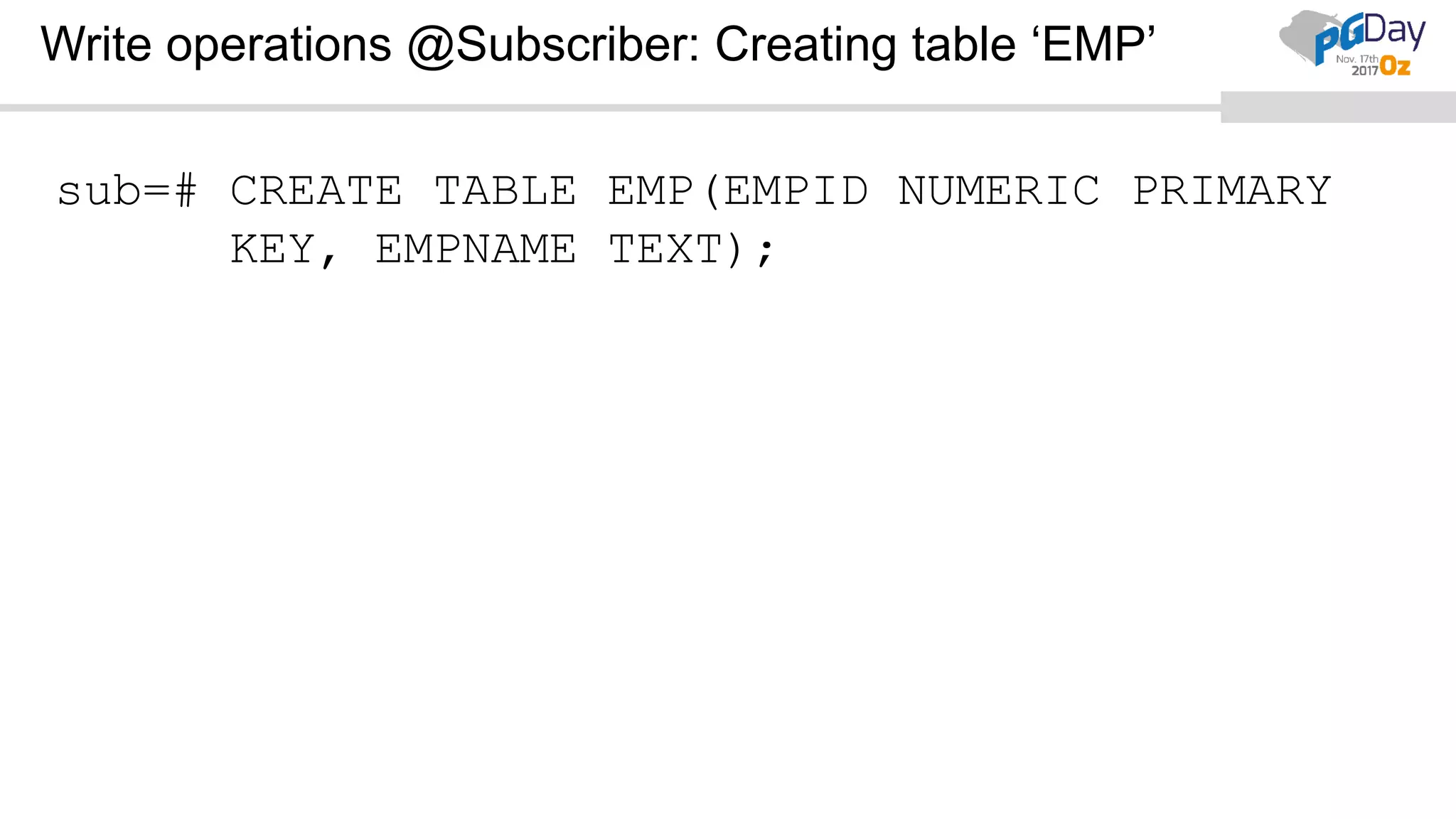

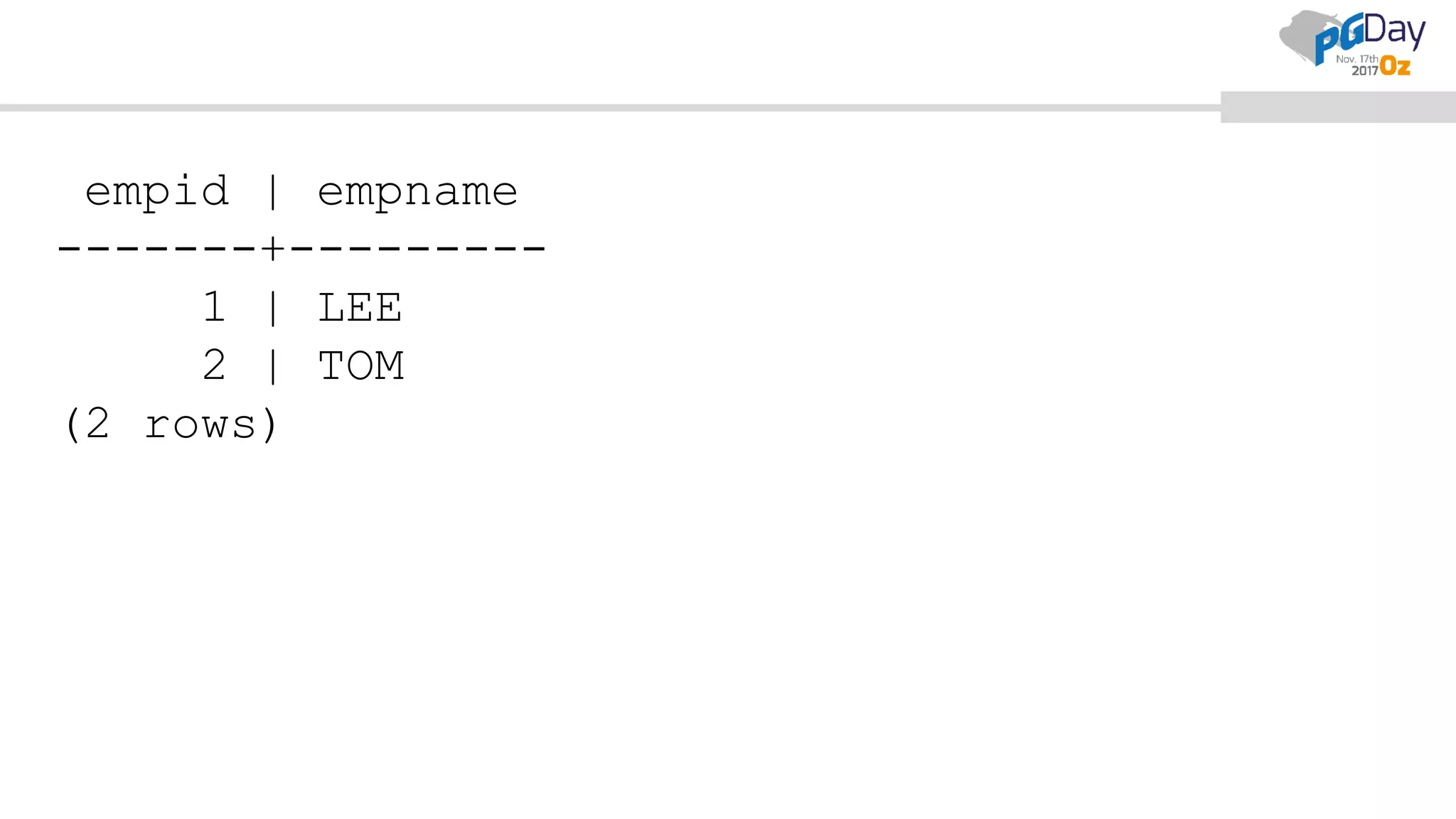

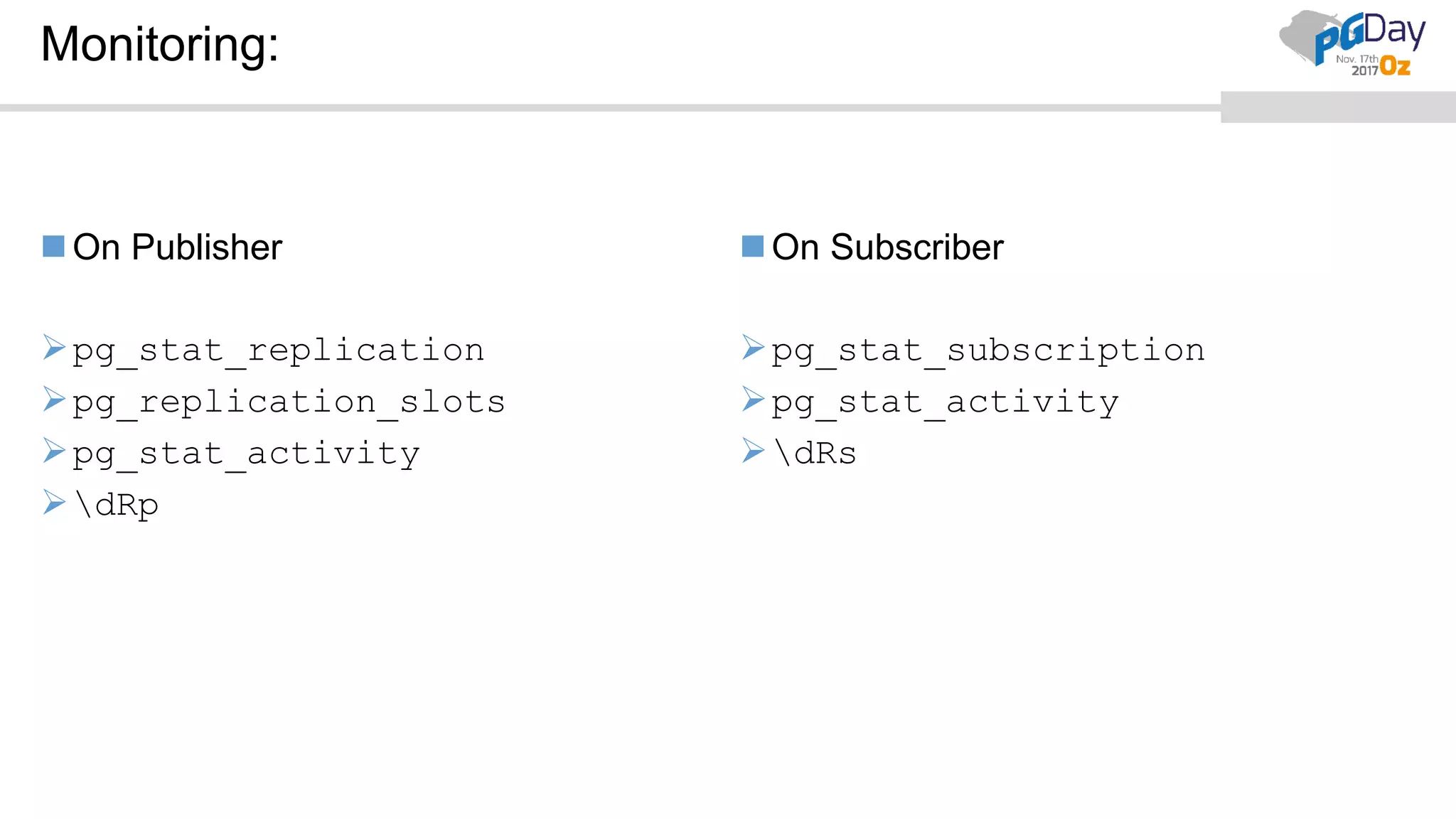

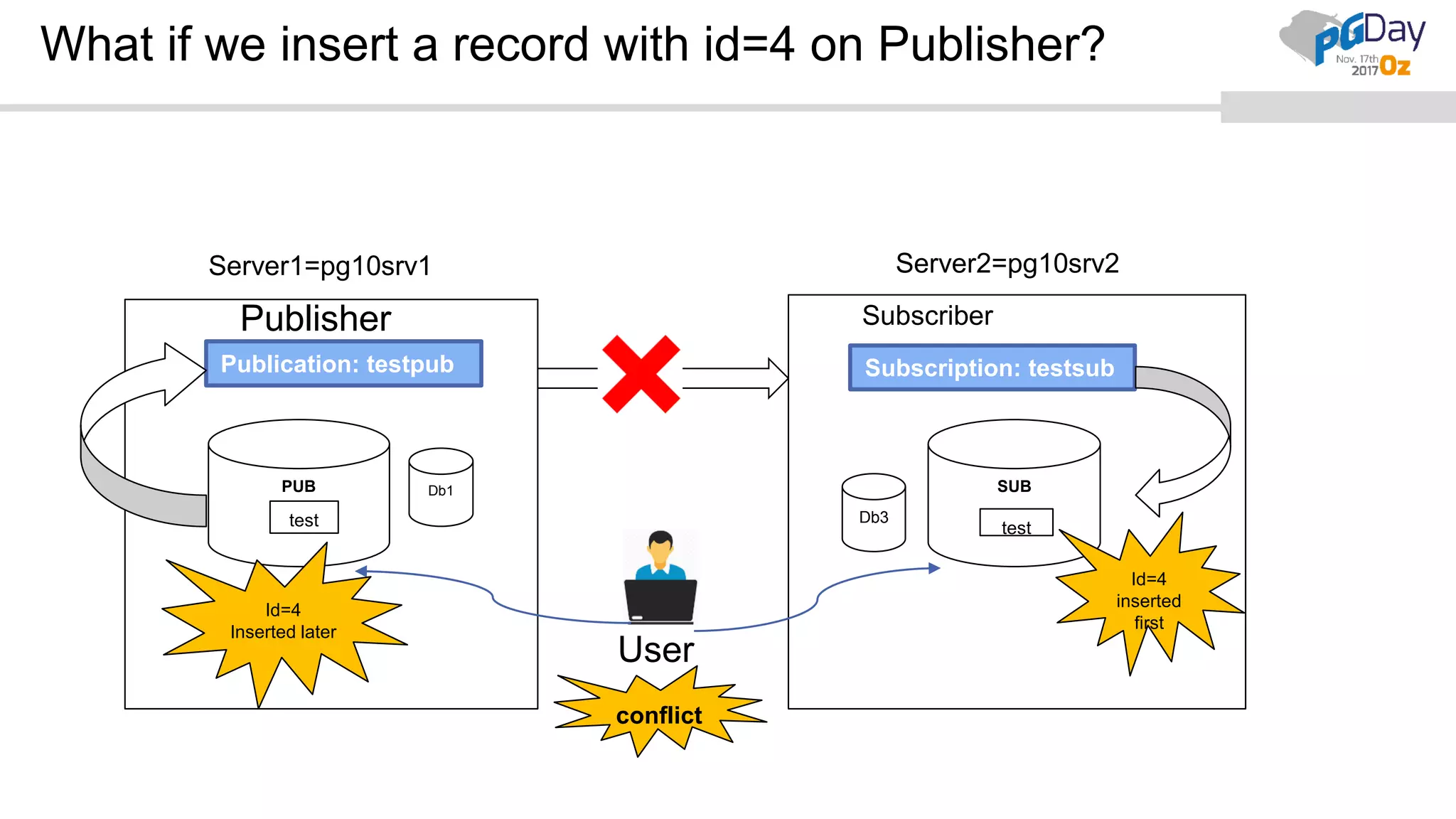

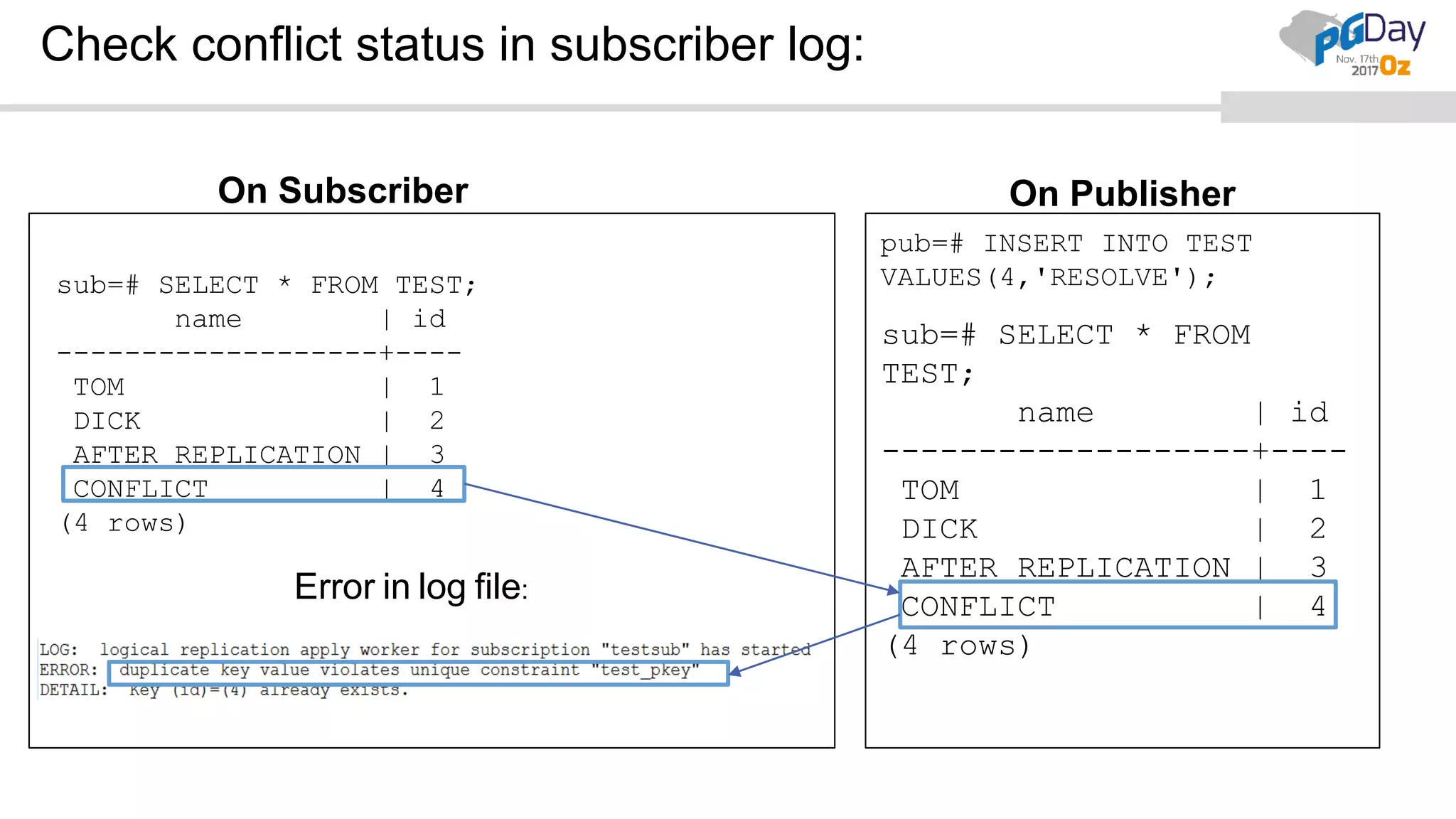

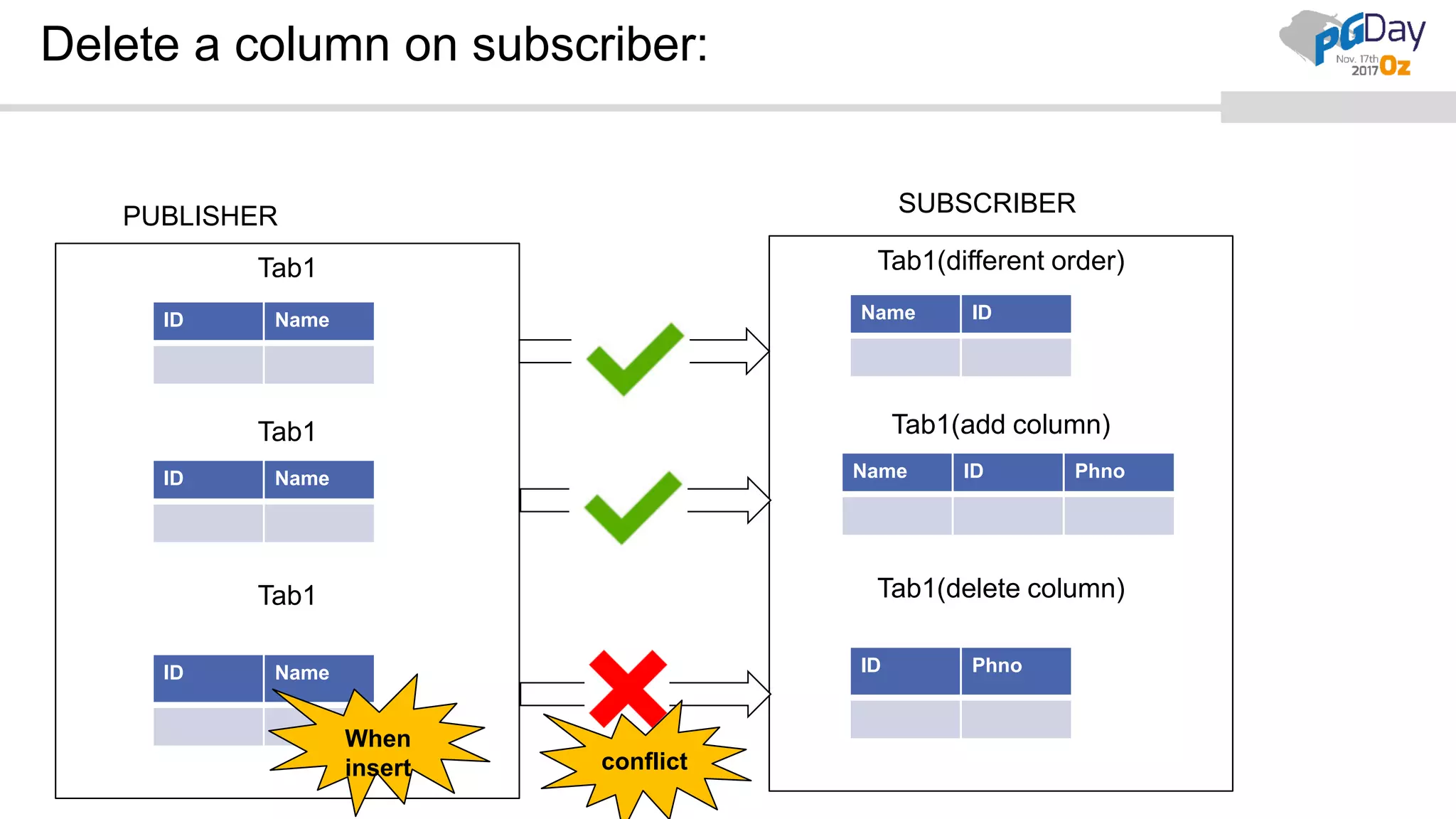

This document provides an overview of logical replication in PostgreSQL 10. It reviews the history of PostgreSQL replication and explains the key concepts of logical replication: publications, subscriptions, and replication slots. It presents several use cases, such as replicating across platforms and across PostgreSQL versions, and performing write operations on the subscriber. The document also covers configuration settings, a quick setup demonstration, monitoring, conflict resolution, and the limitations of logical replication.