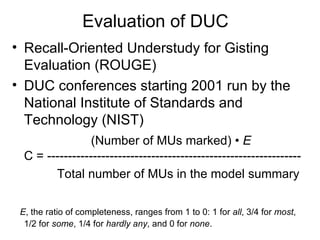

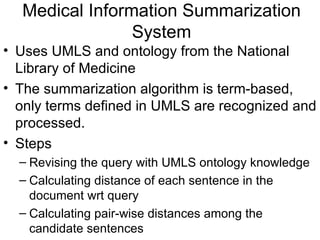

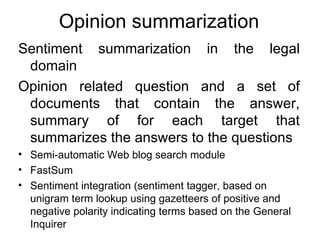

The document covers query-based summarization: it defines the task, sets out the evaluation criteria, and surveys different approaches. The main approaches discussed are document graphs that relate sentences to one another, linguistic analysis techniques, machine learning methods such as support vector machines, and domain-specific systems for tasks such as medical summarization and opinion summarization. Evaluation is typically done with ROUGE, which scores a generated summary by its recall against a model (reference) summary.
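To make the recall-based evaluation concrete, here is a minimal sketch of ROUGE-N recall: the fraction of the model summary's n-grams that also appear in the generated summary. It is not taken from the document. The function names `ngrams` and `rouge_n_recall` are hypothetical, and the lowercase, whitespace-split tokenization is a simplifying assumption; the official ROUGE toolkit adds options such as stemming and stopword removal.

```python
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams (as tuples) in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n_recall(candidate, reference, n=1):
    """Illustrative ROUGE-N recall: clipped n-gram overlap / reference n-grams.

    Assumption: tokens are lowercase whitespace-separated words.
    """
    cand = ngrams(candidate.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    total = sum(ref.values())
    if total == 0:
        return 0.0  # no reference n-grams, so recall is defined here as 0
    # Each reference n-gram is matched at most as often as it occurs in the candidate.
    overlap = sum(min(count, cand[gram]) for gram, count in ref.items())
    return overlap / total


if __name__ == "__main__":
    model_summary = "the cat sat on the mat"
    system_summary = "the cat lay on the mat"
    print(rouge_n_recall(system_summary, model_summary, n=1))
    print(rouge_n_recall(system_summary, model_summary, n=2))
```

In this toy pair, five of the six reference unigrams and three of the five reference bigrams are matched, so the unigram recall is 5/6 and the bigram recall is 3/5. When several model summaries are available, a common choice is to score against each and aggregate the results.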