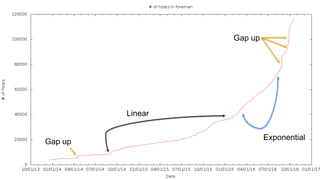

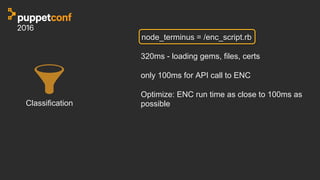

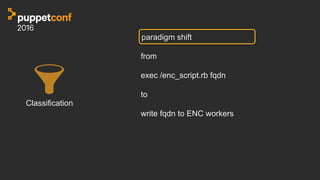

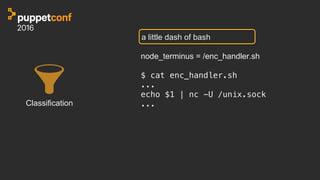

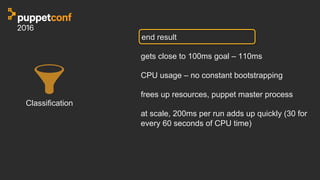

The document discusses multi-tenant Puppet automation and the performance and scalability challenges of managing infrastructure at scale. It highlights performance optimizations, particularly reductions in catalog compilation and report processing times. It also addresses the need for responsive Puppet runs triggered by infrastructure events, emphasizing modern tooling and a structured approach to orchestration.

![File monitoring:
{"file_paths": {
  "homes": ["/root/.ssh/%%", "/home/%/.ssh/%%"],
  "binaries": ["/usr/bin/%%", "/sbin/%%"],
  "etc": ["/etc/%%"],
  "tmp": ["/tmp/%%"]
}}](https://image.slidesharecdn.com/track4frijawed-161102170445/85/PuppetConf-2016-Multi-Tenant-Puppet-at-Scale-John-Jawed-eBay-Inc-32-320.jpg)
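The file-monitoring configuration above groups watched paths into named categories (`homes`, `binaries`, `etc`, `tmp`). Its `%` and `%%` wildcards resemble osquery's file integrity monitoring syntax, where `%` matches one directory level and `%%` matches recursively. That reading is an assumption, because the slide does not name the tool.

Below is a minimal Python sketch under that assumption. It maps a changed path back to the category whose pattern covers it. The names `FILE_PATHS`, `pattern_matches`, and `category_for` are hypothetical. The sketch treats `%` only as a whole path component, so partial wildcards such as `%.conf` are not handled.

```python
"""Sketch: find which file-monitoring category covers a changed path.

Assumed wildcard rules (osquery-style, not confirmed by the slide):
  '%'  matches exactly one path component,
  '%%' matches one or more components below its prefix (last component only).
"""
from typing import Dict, List, Optional

# Hypothetical in-memory copy of the slide's file_paths configuration.
FILE_PATHS: Dict[str, List[str]] = {
    "homes": ["/root/.ssh/%%", "/home/%/.ssh/%%"],
    "binaries": ["/usr/bin/%%", "/sbin/%%"],
    "etc": ["/etc/%%"],
    "tmp": ["/tmp/%%"],
}


def pattern_matches(pattern: str, path: str) -> bool:
    """Return True if `path` matches `pattern` under the assumed rules."""
    pat_parts = pattern.strip("/").split("/")
    path_parts = path.strip("/").split("/")
    for i, part in enumerate(pat_parts):
        if part == "%%":
            # Recursive wildcard: the path must have at least one component
            # below the literal prefix (so "/etc" itself is not matched).
            return len(path_parts) > i
        if i >= len(path_parts):
            return False  # path is shorter than the pattern
        if part != "%" and part != path_parts[i]:
            return False  # literal component differs
    # Without '%%', the path must have exactly as many components as the pattern.
    return len(pat_parts) == len(path_parts)


def category_for(path: str, config: Dict[str, List[str]] = FILE_PATHS) -> Optional[str]:
    """Return the first category with a matching pattern, or None."""
    for category, patterns in config.items():
        if any(pattern_matches(p, path) for p in patterns):
            return category
    return None


if __name__ == "__main__":
    for changed in ["/home/alice/.ssh/authorized_keys",
                    "/etc/puppetlabs/puppet/puppet.conf",
                    "/var/log/syslog"]:
        print(changed, "->", category_for(changed))
```

Matching works component by component, so `%` cannot cross a `/` boundary. In this sketch, `/home/alice/docs/.ssh/x` is therefore not treated as a `homes` file.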