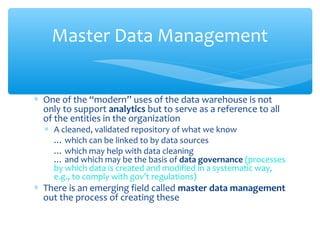

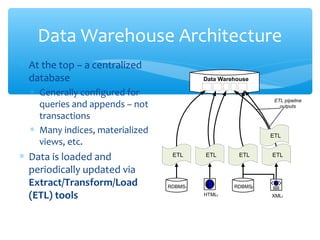

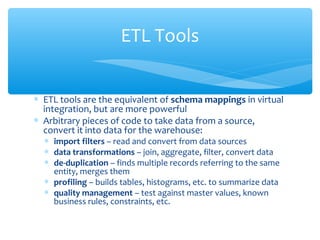

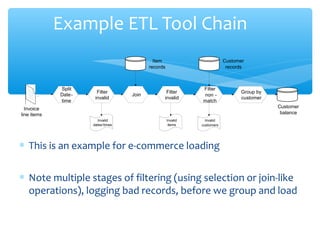

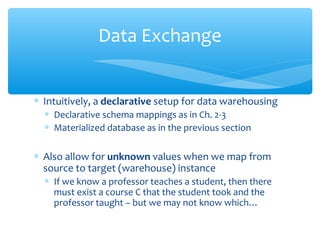

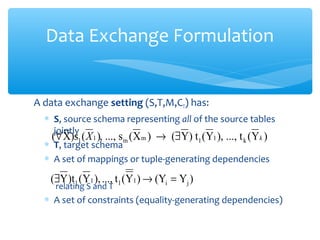

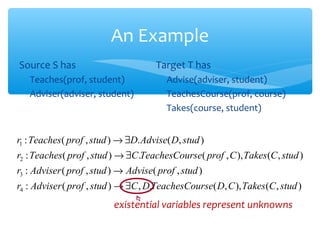

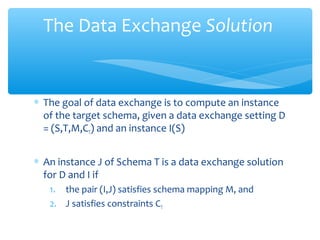

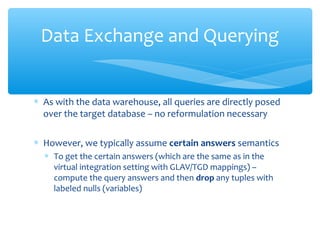

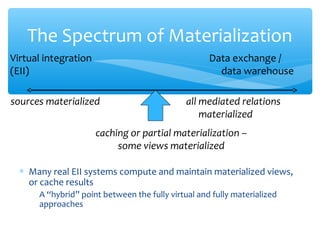

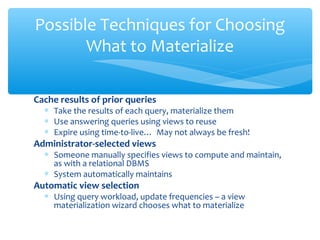

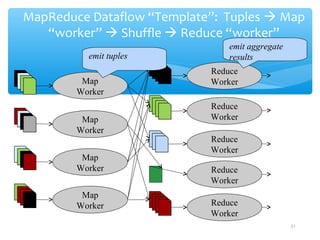

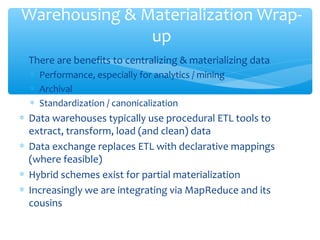

The document discusses techniques for virtual data integration and transformation, as well as the role of data warehouses in master data management. It emphasizes the benefits of a centralized database for performance and data governance, and details how ETL tools are used to extract, transform, and load data. It also explores the evolving landscape of data integration with hybrid approaches, including the use of MapReduce for querying large datasets.