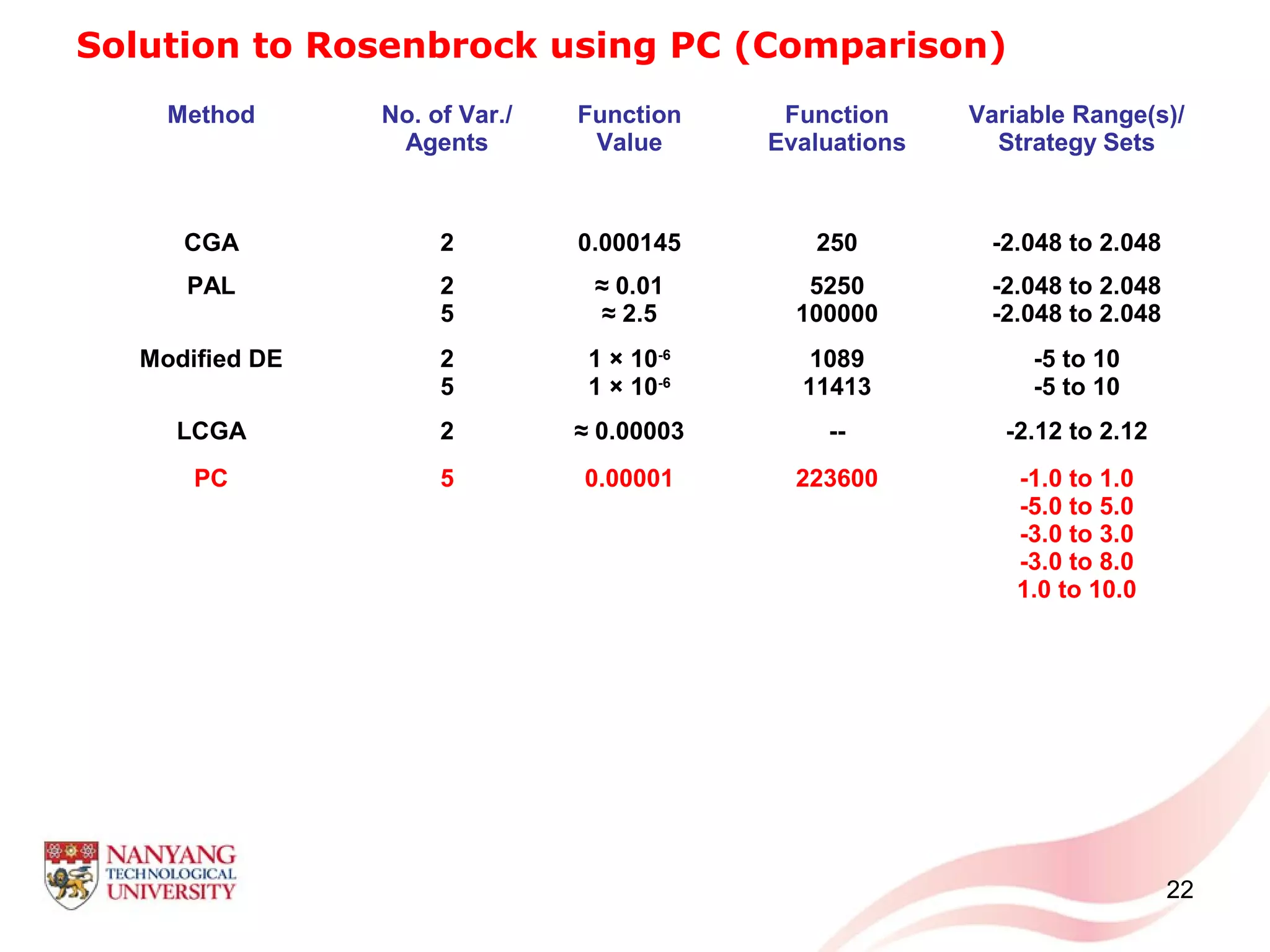

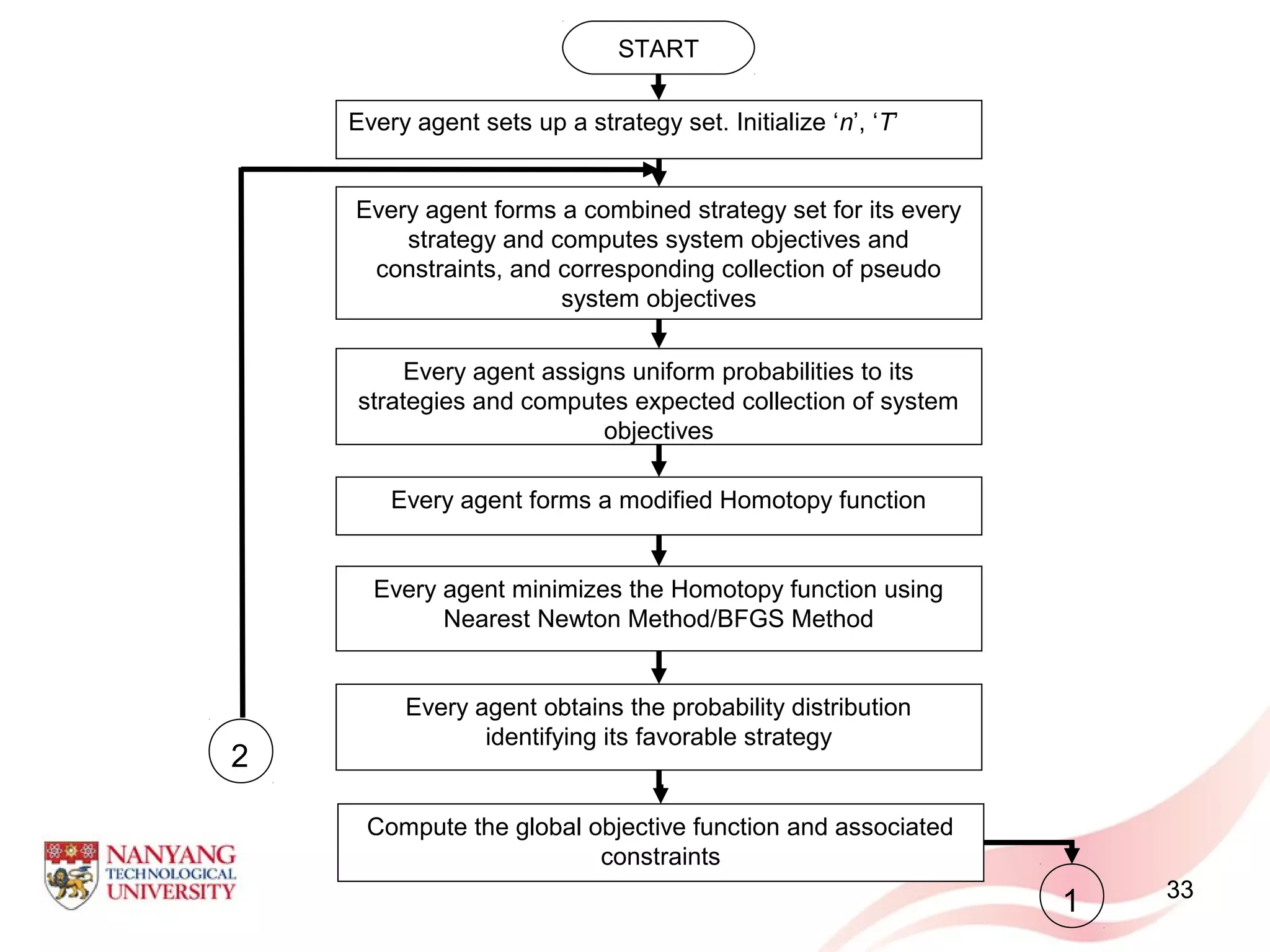

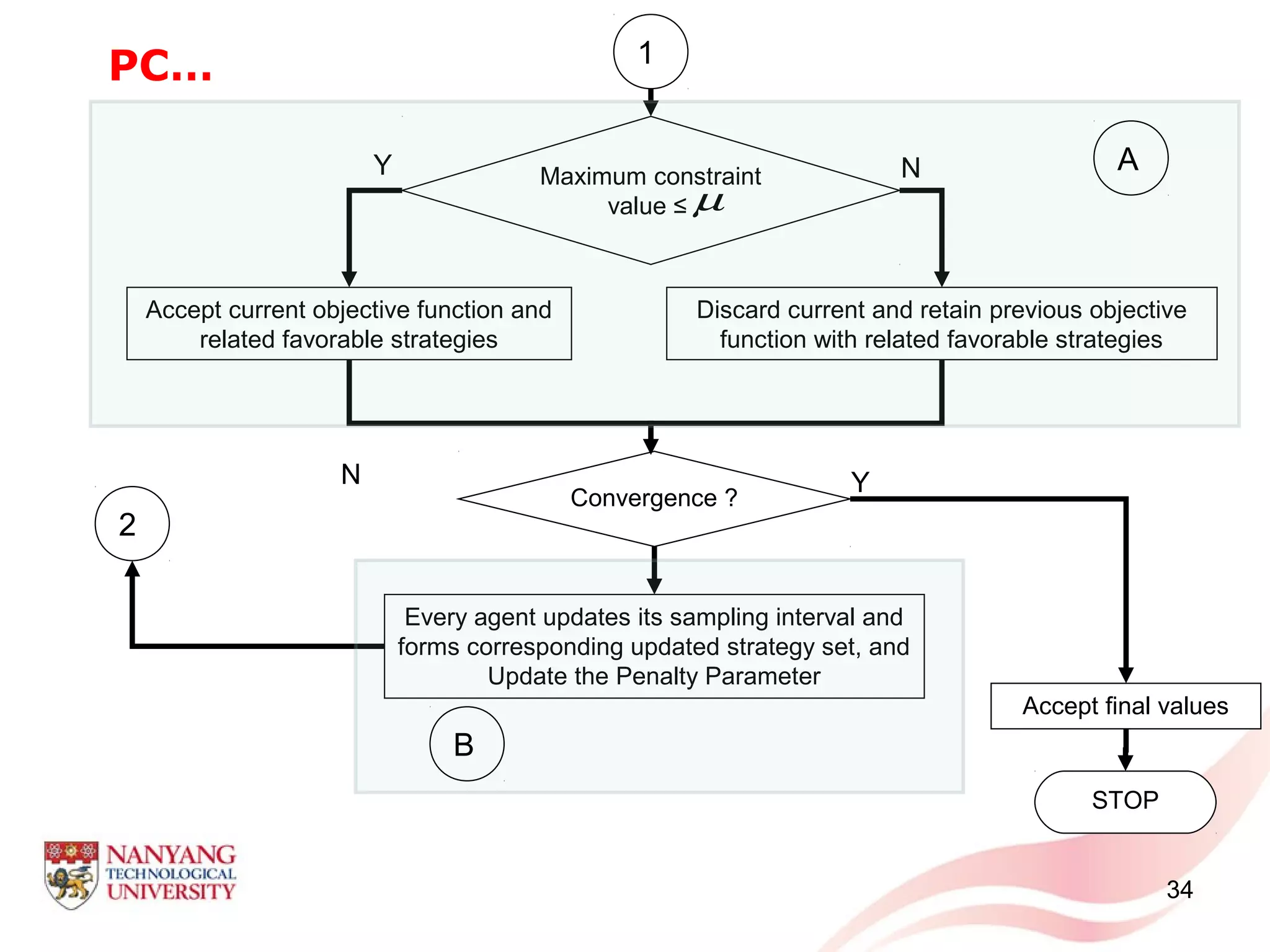

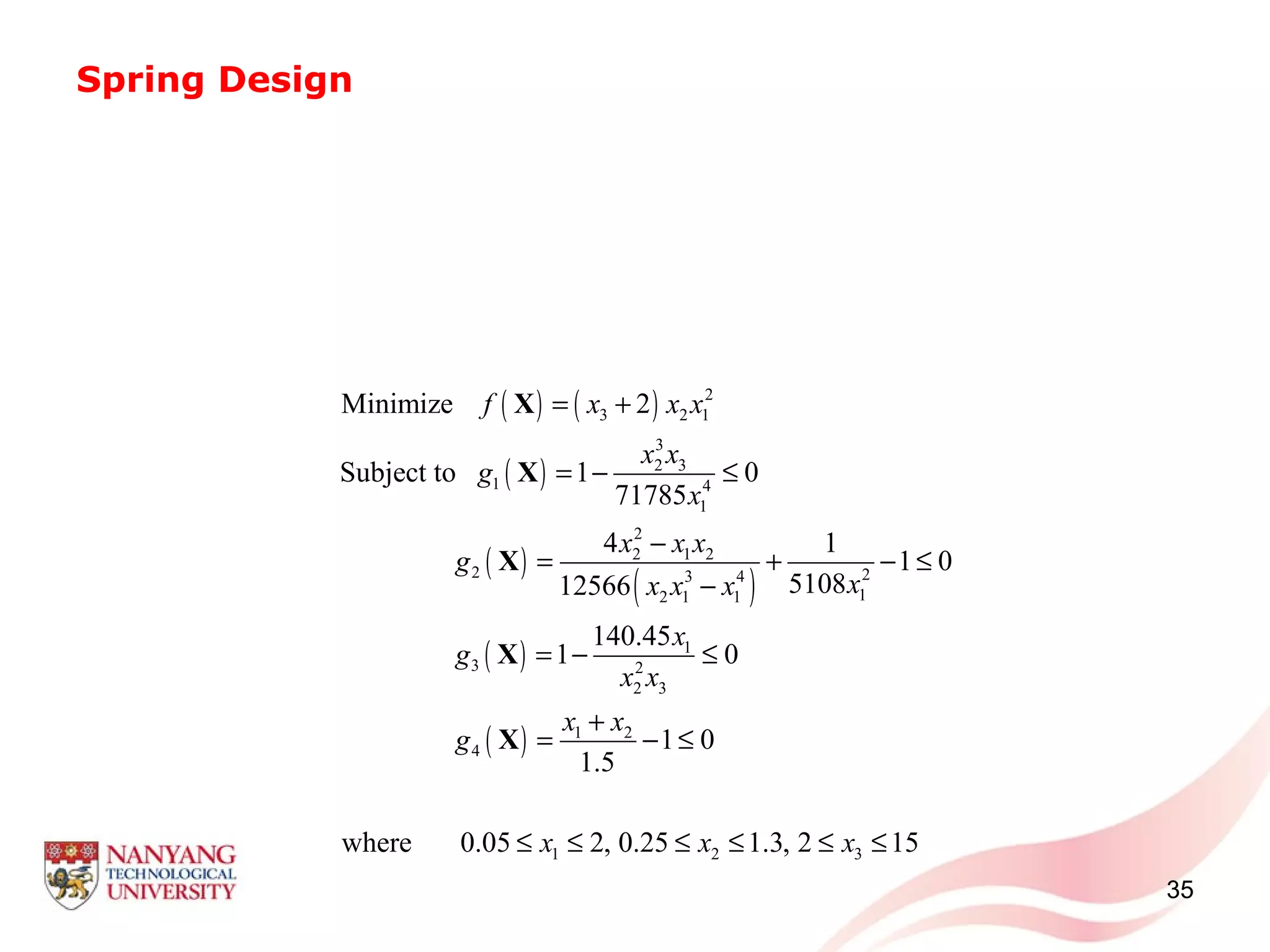

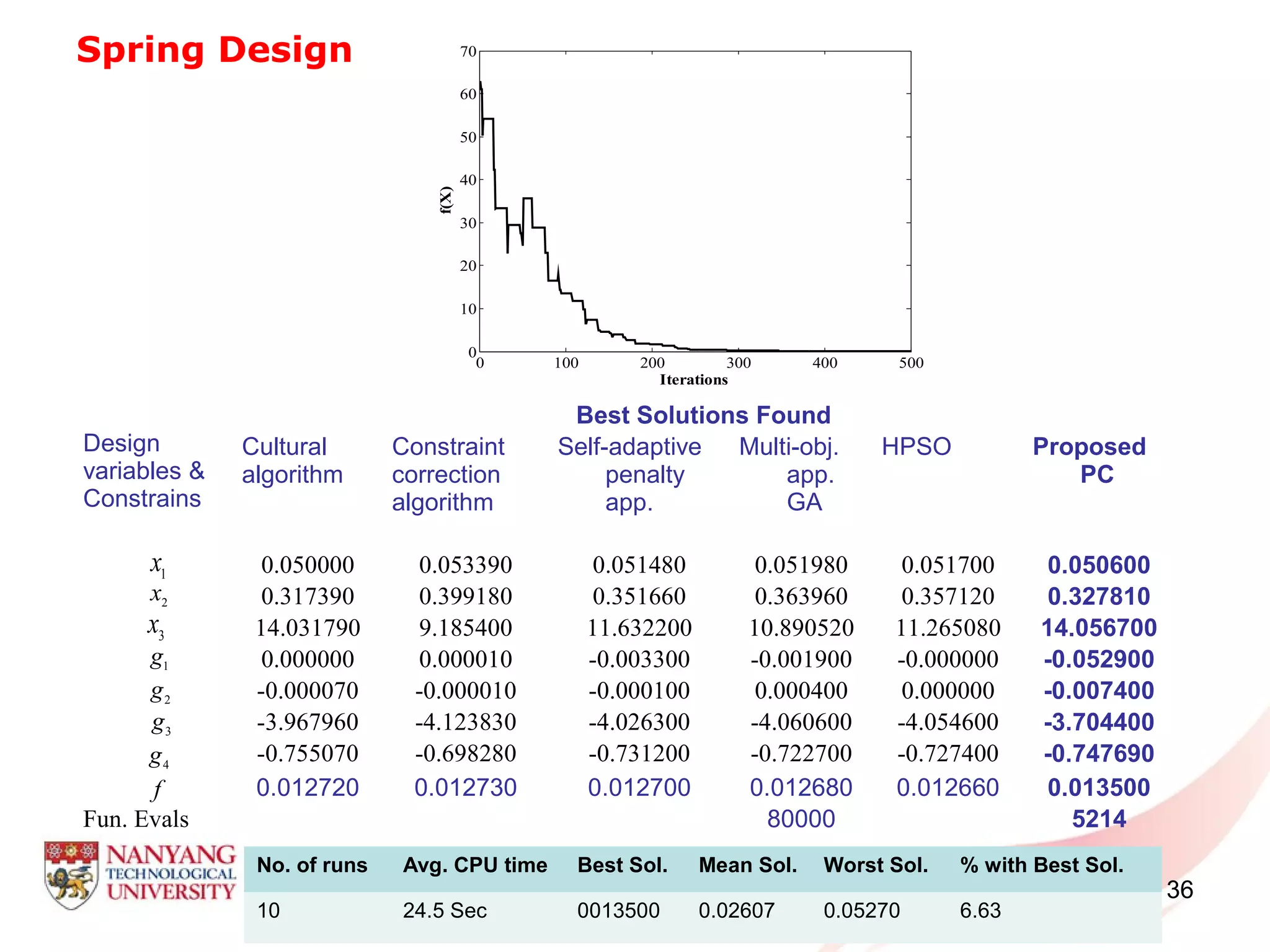

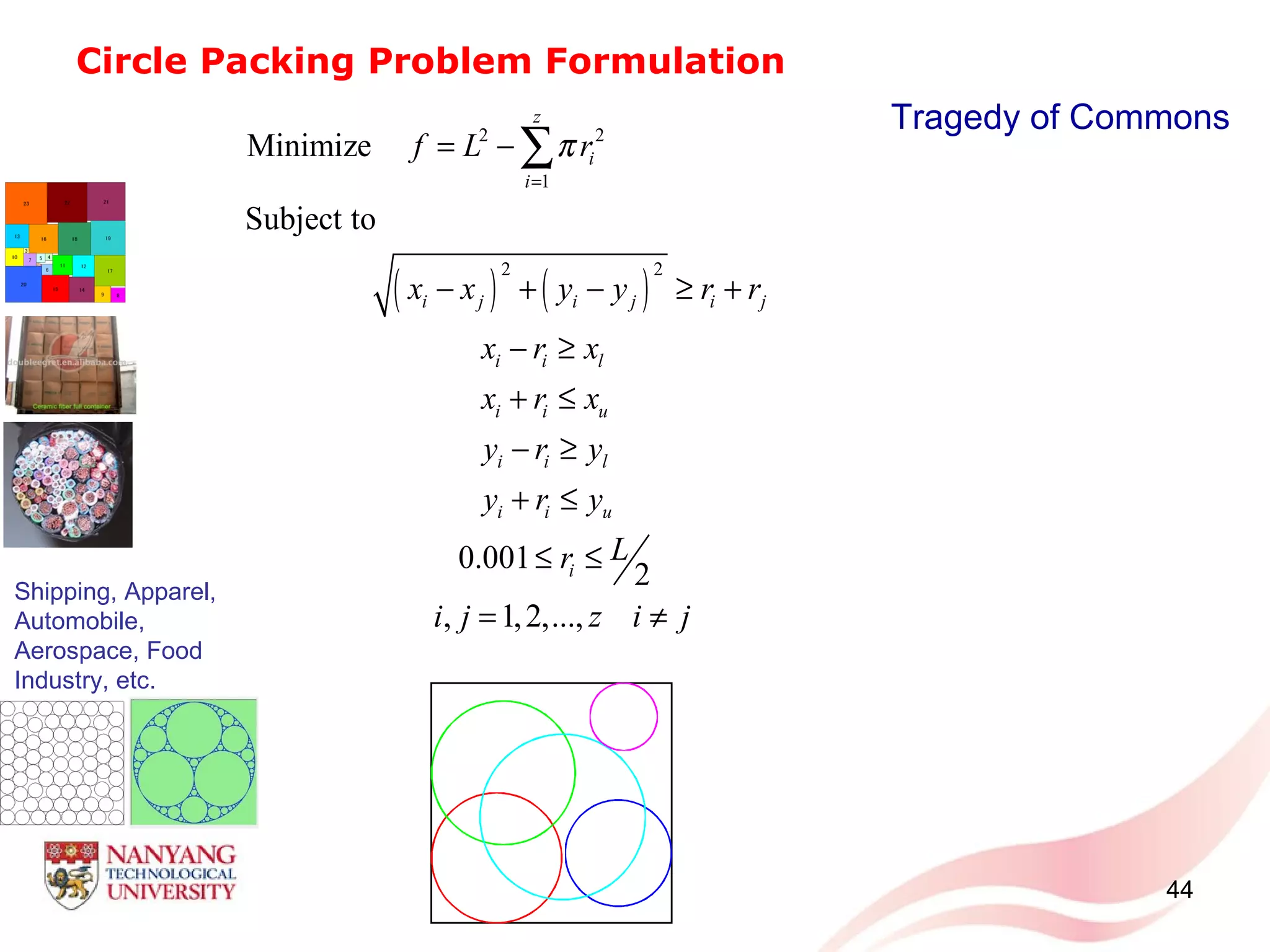

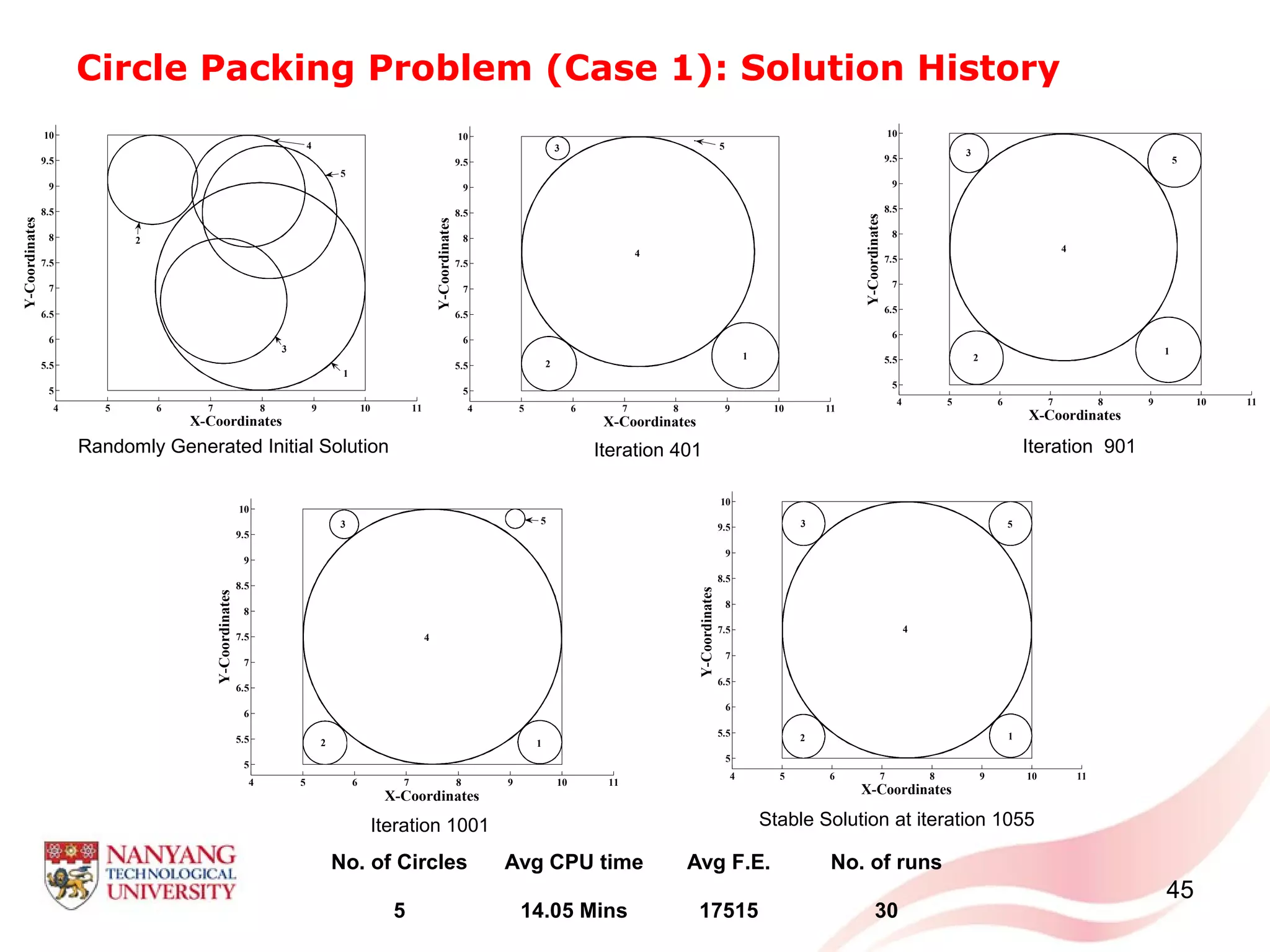

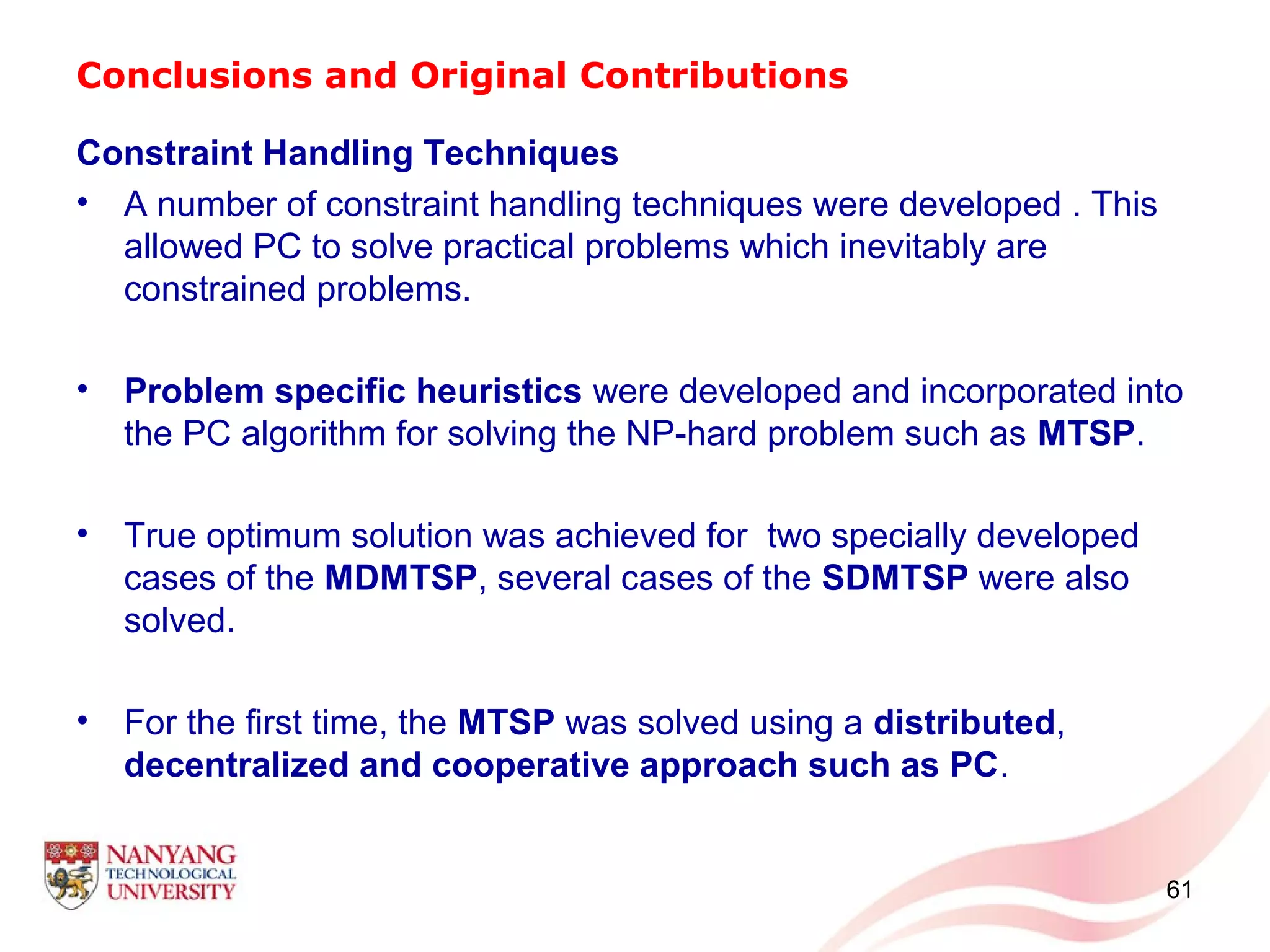

The document presents an overview of probability collectives (PC), a distributed optimization approach for solving complex problems modeled as multi-agent systems. In PC, the variables are treated as agents that iteratively sample strategies and update their probability distributions so as to cooperatively minimize a global objective function. PC draws on concepts from game theory, statistical physics and optimization. It can handle continuous, discrete and mixed-variable problems in a scalable way and is robust to agent failures. Constraint-handling techniques are developed to apply PC to constrained optimization problems.

![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-12-2048.jpg)

• Consider a general unconstrained problem (in the minimization sense) comprising $N$ variables:

$$G = f(\mathbf{X}) = f(X_1, X_2, \ldots, X_i, \ldots, X_{N-1}, X_N)$$

• The variables are treated as agents (players) of a game that is played iteratively.

• Initially, every agent $i$ is given a sampling interval (space) $\Psi_i \in \left[\Psi_i^{lower}, \Psi_i^{upper}\right]$.

• Every agent $i$ randomly samples $m_i$ strategies from within its sampling interval $\Psi_i$:

$$\mathbf{X}_i = \left\{X_i^{[1]}, X_i^{[2]}, \ldots, X_i^{[r]}, \ldots, X_i^{[m_i]}\right\}, \quad i = 1, 2, \ldots, N, \quad m_1 = m_2 = \cdots = m_i = \cdots = m_{N-1} = m_N$$
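The sampling step can be sketched in Python as below. This is a minimal illustration, assuming uniform random sampling within each interval (the slide only says "randomly"); the function name `sample_strategies` and the example intervals are hypothetical.

```python
import random


def sample_strategies(intervals, m, seed=None):
    """Return X, where X[i] holds the m strategies sampled by agent i.

    intervals: list of (lower, upper) pairs, one sampling interval Psi_i per agent.
    m: strategies per agent (the slide sets m_1 = m_2 = ... = m_N).
    Uniform sampling is an assumption; the slide only says "randomly".
    """
    rng = random.Random(seed)
    return [[rng.uniform(lower, upper) for _ in range(m)] for lower, upper in intervals]


# Three agents with different sampling intervals, five strategies each.
X = sample_strategies([(-1.0, 1.0), (-5.0, 5.0), (-3.0, 3.0)], m=5, seed=0)
```

Passing a seed makes a run reproducible; each agent's strategies stay inside its own interval.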

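![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-13-2048.jpg)

Every agent holds its set of sampled strategies:

$$\mathbf{X}_1 = \left\{X_1^{[1]}, X_1^{[2]}, \ldots, X_1^{[m_1]}\right\}, \;\ldots,\; \mathbf{X}_i = \left\{X_i^{[1]}, X_i^{[2]}, \ldots, X_i^{[m_i]}\right\}, \;\ldots,\; \mathbf{X}_N = \left\{X_N^{[1]}, X_N^{[2]}, \ldots, X_N^{[m_N]}\right\}$$

Agent $i$ selects its first strategy $X_i^{[1]}$ and also samples randomly from the other agents' strategies, forming the combined strategy set

$$\mathbf{Y}_i^{[1]} = \left\{X_1^{[?]}, X_2^{[?]}, \ldots, X_i^{[1]}, \ldots, X_{N-1}^{[?]}, X_N^{[?]}\right\} \Rightarrow G\left(\mathbf{Y}_i^{[1]}\right)$$

where $[?]$ marks a randomly selected strategy of the corresponding agent. Repeating this for each of its strategies gives

$$\begin{aligned}
\mathbf{Y}_i^{[2]} &= \left\{X_1^{[?]}, X_2^{[?]}, \ldots, X_i^{[2]}, \ldots, X_N^{[?]}\right\} \Rightarrow G\left(\mathbf{Y}_i^{[2]}\right) \\
\mathbf{Y}_i^{[3]} &= \left\{X_1^{[?]}, X_2^{[?]}, \ldots, X_i^{[3]}, \ldots, X_N^{[?]}\right\} \Rightarrow G\left(\mathbf{Y}_i^{[3]}\right) \\
&\;\;\vdots \\
\mathbf{Y}_i^{[r]} &= \left\{X_1^{[?]}, X_2^{[?]}, \ldots, X_i^{[r]}, \ldots, X_N^{[?]}\right\} \Rightarrow G\left(\mathbf{Y}_i^{[r]}\right) \\
&\;\;\vdots \\
\mathbf{Y}_i^{[m_i]} &= \left\{X_1^{[?]}, X_2^{[?]}, \ldots, X_i^{[m_i]}, \ldots, X_N^{[?]}\right\} \Rightarrow G\left(\mathbf{Y}_i^{[m_i]}\right)
\end{aligned}$$

so that agent $i$ obtains the collection of system objectives $\sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right)$.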
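Forming the combined strategy sets $\mathbf{Y}_i^{[r]}$ and the collection $\sum_r G(\mathbf{Y}_i^{[r]})$ can be sketched as follows. This is a minimal illustration: the random picks for the other agents are assumed uniform, and `sphere` is a hypothetical stand-in for the system objective $G$.

```python
import random


def combined_sets(X, i, rng):
    """Build Y_i^[1..m_i]: agent i plays its own r-th strategy while every
    other agent contributes one randomly chosen strategy (the [?] entries)."""
    sets = []
    for r in range(len(X[i])):
        y = [rng.choice(strategies) for strategies in X]  # random pick per agent
        y[i] = X[i][r]                                    # agent i uses X_i^[r]
        sets.append(y)
    return sets


def collection(G, X, i, rng):
    """Sum of the system objectives G(Y_i^[r]) over agent i's m_i strategies."""
    return sum(G(y) for y in combined_sets(X, i, rng))


def sphere(y):
    """Hypothetical system objective used only for illustration."""
    return sum(v * v for v in y)


X = [[0.0, 1.0], [2.0, 2.0], [3.0, 3.0]]
total = collection(sphere, X, 0, random.Random(1))  # 13.0 + 14.0 = 27.0
```

Because agents 2 and 3 each hold identical strategies here, the result does not depend on the random picks, which makes the example easy to check by hand.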

![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-14-2048.jpg)

• The ultimate goal of every agent $i$ is to identify the strategy value that contributes the most towards minimizing the sum (collection) of these system objectives, i.e. $\sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right)$.

• This collection may have many local minima.

• Directly minimizing $\sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right)$ may require excessive computational effort.

• Homotopy method: the function is modified by converting it into another topological space, constructing a related and easier function $f(\mathbf{X}_i)$. This forms the homotopy function:

$$J_i\left(q(\mathbf{X}_i), T\right) = \sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right) - T f(\mathbf{X}_i), \quad T \in [0, \infty)$$

![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-15-2048.jpg)

• Analogy to the Helmholtz free energy: the homotopy function

$$J_i\left(q(\mathbf{X}_i), T\right) = \sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right) - T f(\mathbf{X}_i), \quad T \in [0, \infty)$$

has the same form as

$$L = D - T S$$

where $L$ is the energy available to do work, $D$ is the internal energy and $TS$ is the spontaneous (random) energy.

One way to reach thermal equilibrium, and hence minimize the energy available to do work, is to minimize the internal energy through an annealing schedule, i.e. to drop the temperature of the system stepwise from $T = T_{initial}$ to $T = T_{final}$ or $T \to 0$, achieving equilibrium at every step.
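A stepwise cooling schedule of this kind can be sketched as a Python generator. The geometric update $T \leftarrow T - \alpha_T T$ and the parameter name `alpha_T` are assumptions for illustration; the slide only states that the temperature is dropped stepwise from $T_{initial}$ towards $T_{final}$ (or 0), with equilibrium reached at each step.

```python
def annealing_schedule(T_initial, T_final, alpha_T):
    """Yield T_initial, then successively lower temperatures while T > T_final.

    alpha_T (0 < alpha_T < 1) is a hypothetical cooling rate. In PC, the
    homotopy function would be minimised at each temperature (the "equilibrium"
    step) before cooling further.
    """
    if not 0.0 < alpha_T < 1.0:
        raise ValueError("alpha_T must lie in (0, 1)")
    if T_final <= 0.0:
        raise ValueError("T_final must be positive for the loop to terminate")
    T = T_initial
    while T > T_final:
        yield T
        T -= alpha_T * T  # stepwise (geometric) drop in temperature


temps = list(annealing_schedule(1.0, 0.1, 0.5))  # [1.0, 0.5, 0.25, 0.125]
```

A positive `T_final` is required because a geometric schedule only approaches 0 asymptotically.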

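![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-16-2048.jpg)

• Deterministic annealing suggests converting the variables into random real-valued probabilities, which turns the collection $\sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right)$ into the expected collection $\sum_{r=1}^{m_i} E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right)$. The homotopy function

$$J_i\left(q(\mathbf{X}_i), T\right) = \sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right) - T f(\mathbf{X}_i), \quad T \in [0, \infty)$$

therefore becomes

$$J_i\left(q(\mathbf{X}_i), T\right) = \sum_{r=1}^{m_i} E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right) - T S_i, \quad T \in [0, \infty)$$

with $S_i = -\sum_{r=1}^{m_i} q\left(X_i^{[r]}\right) \log_2 q\left(X_i^{[r]}\right)$, i.e.

$$J_i\left(q(\mathbf{X}_i), T\right) = \sum_{r=1}^{m_i} E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right) - T\left(-\sum_{r=1}^{m_i} q\left(X_i^{[r]}\right) \log_2 q\left(X_i^{[r]}\right)\right), \quad T \in [0, \infty)$$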
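The deterministic-annealing form of the homotopy function can be evaluated directly. The Python sketch below assumes the expected system objectives $E(G(\mathbf{Y}_i^{[r]}))$ are already available as a list; the function names are hypothetical.

```python
import math


def entropy(q):
    """S_i = -sum_r q(X_i^[r]) * log2 q(X_i^[r]); zero probabilities contribute 0."""
    return -sum(p * math.log2(p) for p in q if p > 0.0)


def homotopy(expected_G, q, T):
    """J_i(q(X_i), T) = sum_r E(G(Y_i^[r])) - T * S_i, for T in [0, inf)."""
    if T < 0.0:
        raise ValueError("T must lie in [0, inf)")
    return sum(expected_G) - T * entropy(q)


q = [0.25, 0.25, 0.25, 0.25]                   # uniform over four strategies
S = entropy(q)                                 # 2.0 bits
J = homotopy([1.0, 2.0, 3.0, 4.0], q, T=0.5)   # 10.0 - 0.5 * 2.0 = 9.0
```

At high $T$ the entropy term dominates and favors spread-out distributions; as $T \to 0$, $J_i$ reduces to the expected collection alone.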

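![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-17-2048.jpg)

[Figure: uniform initial probability distributions over the strategies of Agent 1, Agent i and Agent N.]

Initially, every agent assigns equal probability to each of its strategies:

$$q\left(X_1^{[1]}\right) = \cdots = q\left(X_1^{[m_1]}\right) = \frac{1}{m_1}, \;\ldots,\; q\left(X_i^{[1]}\right) = \cdots = q\left(X_i^{[m_i]}\right) = \frac{1}{m_i}, \;\ldots,\; q\left(X_N^{[1]}\right) = \cdots = q\left(X_N^{[m_N]}\right) = \frac{1}{m_N}$$

For each combined strategy set, the probability of agent $i$'s own strategy is multiplied by the probabilities of the strategies selected from the other agents, which gives the expected system objective:

$$\begin{aligned}
\mathbf{Y}_i^{[1]} &= \left\{X_1^{[?]}, \ldots, X_i^{[1]}, \ldots, X_N^{[?]}\right\} \Rightarrow \left\{q\left(X_1^{[?]}\right), \ldots, q\left(X_i^{[1]}\right), \ldots, q\left(X_N^{[?]}\right)\right\} \Rightarrow G\left(\mathbf{Y}_i^{[1]}\right) q\left(X_i^{[1]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right) = E\left(G\left(\mathbf{Y}_i^{[1]}\right)\right) \\
&\;\;\vdots \\
\mathbf{Y}_i^{[r]} &= \left\{X_1^{[?]}, \ldots, X_i^{[r]}, \ldots, X_N^{[?]}\right\} \Rightarrow \left\{q\left(X_1^{[?]}\right), \ldots, q\left(X_i^{[r]}\right), \ldots, q\left(X_N^{[?]}\right)\right\} \Rightarrow G\left(\mathbf{Y}_i^{[r]}\right) q\left(X_i^{[r]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right) = E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right) \\
&\;\;\vdots \\
\mathbf{Y}_i^{[m_i]} &= \left\{X_1^{[?]}, \ldots, X_i^{[m_i]}, \ldots, X_N^{[?]}\right\} \Rightarrow \left\{q\left(X_1^{[?]}\right), \ldots, q\left(X_i^{[m_i]}\right), \ldots, q\left(X_N^{[?]}\right)\right\} \Rightarrow G\left(\mathbf{Y}_i^{[m_i]}\right) q\left(X_i^{[m_i]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right) = E\left(G\left(\mathbf{Y}_i^{[m_i]}\right)\right)
\end{aligned}$$

Together these form the expected collection $\sum_{r=1}^{m_i} E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right)$, where $(i)$ denotes every agent other than $i$.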
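The expected system objective $E(G(\mathbf{Y}_i^{[r]})) = G(\mathbf{Y}_i^{[r]})\, q(X_i^{[r]}) \prod_{(i)} q(X_{(i)}^{[?]})$ can be computed as in the sketch below. It assumes each agent's strategies and probabilities are stored as parallel lists and that the other agents' strategies are picked uniformly by index; the names are hypothetical, and `math.prod` needs Python 3.8 or later.

```python
import math
import random


def expected_collection(G, X, Q, i, rng):
    """Return ([E(G(Y_i^[r])) for r = 1..m_i], their sum).

    X[j][k] is the k-th strategy of agent j and Q[j][k] its probability q(X_j^[k]).
    The other agents' strategies (the [?] entries) are picked uniformly by index.
    """
    terms = []
    for r in range(len(X[i])):
        idx = [rng.randrange(len(strategies)) for strategies in X]
        idx[i] = r                                   # agent i plays X_i^[r]
        y = [X[j][k] for j, k in enumerate(idx)]
        # q(X_i^[r]) times the product of the other agents' q(X_(i)^[?])
        prob = math.prod(Q[j][k] for j, k in enumerate(idx))
        terms.append(G(y) * prob)
    return terms, sum(terms)


X = [[1.0, 3.0], [2.0, 2.0]]
Q = [[0.5, 0.5], [0.5, 0.5]]                         # uniform: q = 1/m_i
terms, total = expected_collection(sum, X, Q, 0, random.Random(0))
# terms == [0.75, 1.25], total == 2.0
```

Here the built-in `sum` plays the role of $G$ purely for illustration: $G = 3$ and $G = 5$, each weighted by $0.5 \times 0.5$.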

![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-18-2048.jpg)

• The homotopy function can be minimized using a suitable second-order optimization approach such as the Nearest Newton Descent Scheme or the Broyden-Fletcher-Goldfarb-Shanno (BFGS) scheme.

[Figure: probability distributions over the strategies of Agent 1, Agent i and Agent N, initially uniform and, after minimization, peaked at each agent's favorable strategy.]

The favorable strategies of all agents form the favorable strategy set:

$$\mathbf{Y}^{[fav]} = \left\{X_1^{[fav]}, X_2^{[fav]}, \ldots, X_i^{[fav]}, \ldots, X_{N-1}^{[fav]}, X_N^{[fav]}\right\} \Rightarrow G\left(\mathbf{Y}^{[fav]}\right)$$
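The slide leaves the choice of second-order solver open. As a swapped-in illustration (not the Nearest Newton or BFGS scheme named on the slide), the sketch below uses the closed-form minimizer of the deterministic-annealing homotopy for one agent when the other agents' probabilities are held fixed, and then picks the favorable strategy as the one with maximum probability. With the others frozen, $E(G(\mathbf{Y}_i^{[r]})) = c_r\, q(X_i^{[r]})$, and minimizing $\sum_r c_r q_r + T \sum_r q_r \log_2 q_r$ over the probability simplex gives $q_r \propto 2^{-c_r/T}$. Function names are hypothetical.

```python
def boltzmann_update(coeffs, T):
    """Exact minimiser of J(q) = sum_r c_r q_r - T * S(q) over the probability simplex.

    c_r = G(Y_i^[r]) * prod_(i) q(X_(i)^[?]) with the other agents' probabilities frozen,
    and S(q) = -sum_r q_r log2 q_r. Setting the Lagrangian's gradient to zero gives
    q_r proportional to 2 ** (-c_r / T).
    """
    if T <= 0.0:
        raise ValueError("T must be positive")
    c_min = min(coeffs)                                   # shift for numerical stability
    weights = [2.0 ** (-(c - c_min) / T) for c in coeffs]
    total = sum(weights)
    return [w / total for w in weights]


def favorable_index(q):
    """Index r of the strategy with maximum probability, i.e. X_i^[fav]."""
    return max(range(len(q)), key=q.__getitem__)


q_warm = boltzmann_update([3.0, 1.0, 2.0], T=1.0)    # [1/7, 4/7, 2/7]
q_cold = boltzmann_update([3.0, 1.0, 2.0], T=0.01)   # almost all mass on r = 1
```

Lowering $T$ concentrates the probability on the strategy with the smallest expected contribution, which mirrors the peaked distributions on the slide.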

![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-19-2048.jpg)

• Updating of the sampling interval (neighboring method):

$$\Psi_i \in \left[X_i^{[fav]} - \lambda_{down}\left(\Psi_i^{upper} - \Psi_i^{lower}\right),\; X_i^{[fav]} + \lambda_{down}\left(\Psi_i^{upper} - \Psi_i^{lower}\right)\right], \quad 0 < \lambda_{down} \le 1$$

• Convergence and final solution:

If $T = T_{final}$ or $T \to 0$, or

if there is no significant change in the system objective for a considerable number of successive iterations, i.e. $\left|G\left(\mathbf{Y}^{[fav,n]}\right) - G\left(\mathbf{Y}^{[fav,n-1]}\right)\right| \le \varepsilon$,

then the final solution is

$$\mathbf{Y}^{[fav,final]} = \left\{X_1^{[fav,final]}, X_2^{[fav,final]}, \ldots, X_{N-1}^{[fav,final]}, X_N^{[fav,final]}\right\} \Rightarrow G\left(\mathbf{Y}^{[fav,final]}\right)$$
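The neighboring update and the stagnation-based stopping test can be sketched as below. `n_stable` is a hypothetical stand-in for the slide's "considerable number of successive iterations", the function names are hypothetical, and the temperature-based stopping condition is omitted.

```python
def shrink_interval(x_fav, lower, upper, lam_down):
    """Neighboring method: a new interval centred on X_i^[fav].

    Psi_i in [x_fav - lam_down*(upper - lower), x_fav + lam_down*(upper - lower)].
    """
    if not 0.0 < lam_down <= 1.0:
        raise ValueError("lam_down must satisfy 0 < lam_down <= 1")
    half_width = lam_down * (upper - lower)
    return x_fav - half_width, x_fav + half_width


def has_converged(G_history, eps, n_stable):
    """True if |G(Y^[fav,n]) - G(Y^[fav,n-1])| <= eps over the last n_stable iterations.

    n_stable stands in for the slide's "considerable number" of iterations.
    """
    if len(G_history) < n_stable + 1:
        return False
    recent = G_history[-(n_stable + 1):]
    return all(abs(b - a) <= eps for a, b in zip(recent, recent[1:]))


new_interval = shrink_interval(0.5, -1.0, 1.0, lam_down=0.25)   # (0.0, 1.0)
```

With $\lambda_{down} < 0.5$ the new interval is narrower than the old one, so repeated updates focus sampling around the favorable strategy.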

![20

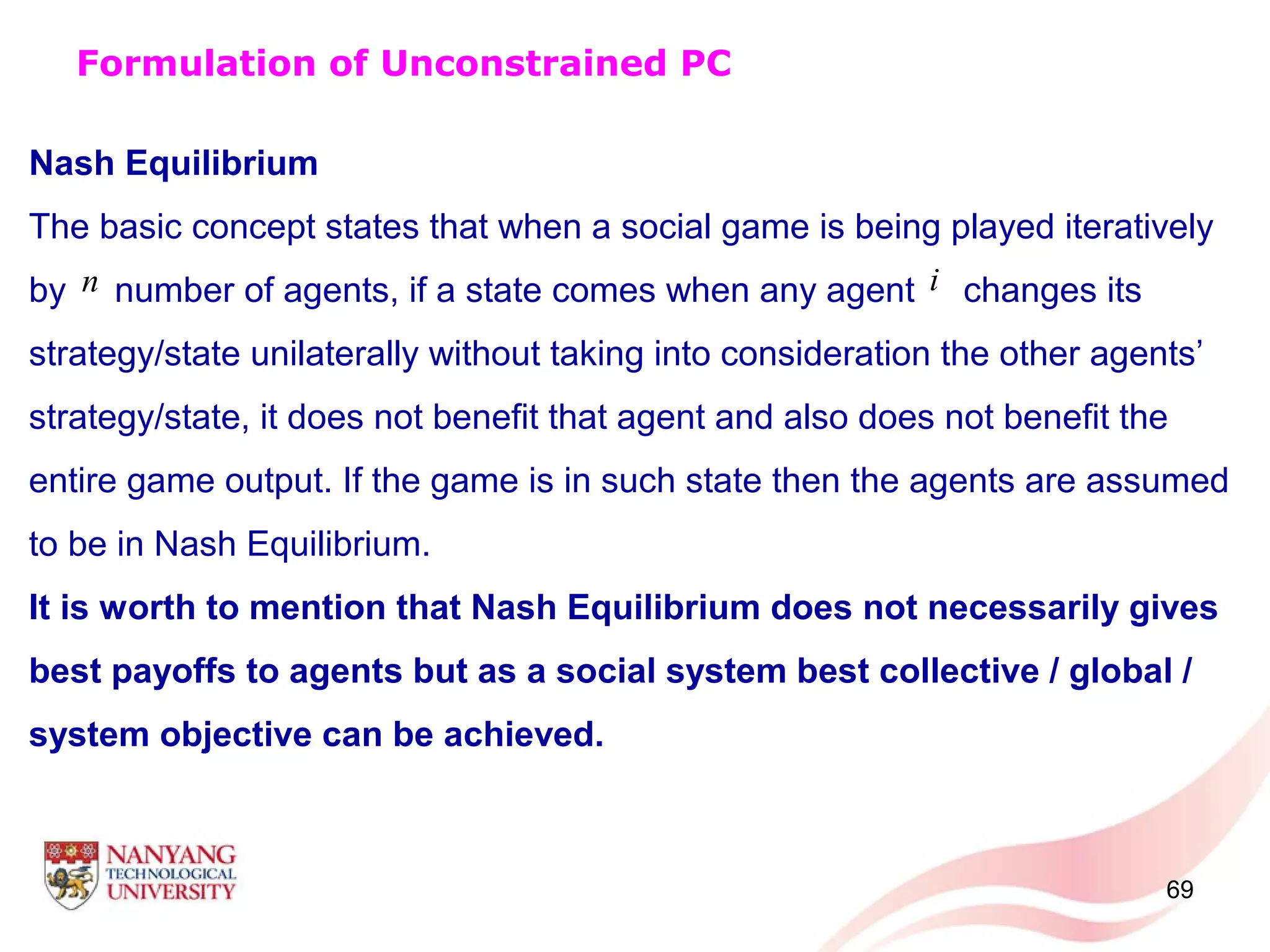

Nash Equilibrium (Necessary Properties):

Rationality: Select the best possible strategy by guessing other agents’

strategies

Convergence: Same class policy of selecting the best possible strategy and

guessing other agents’ strategies (guaranteed: policy does not change)

Nash Equilibrium in PC

: by guessing other agents’ strategies

and : is communicated to every other agent

Formulation of Unconstrained PC

[ ]fav

iX

[ ]fav

iX [ ]

( )

fav

G Y](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-20-2048.jpg)

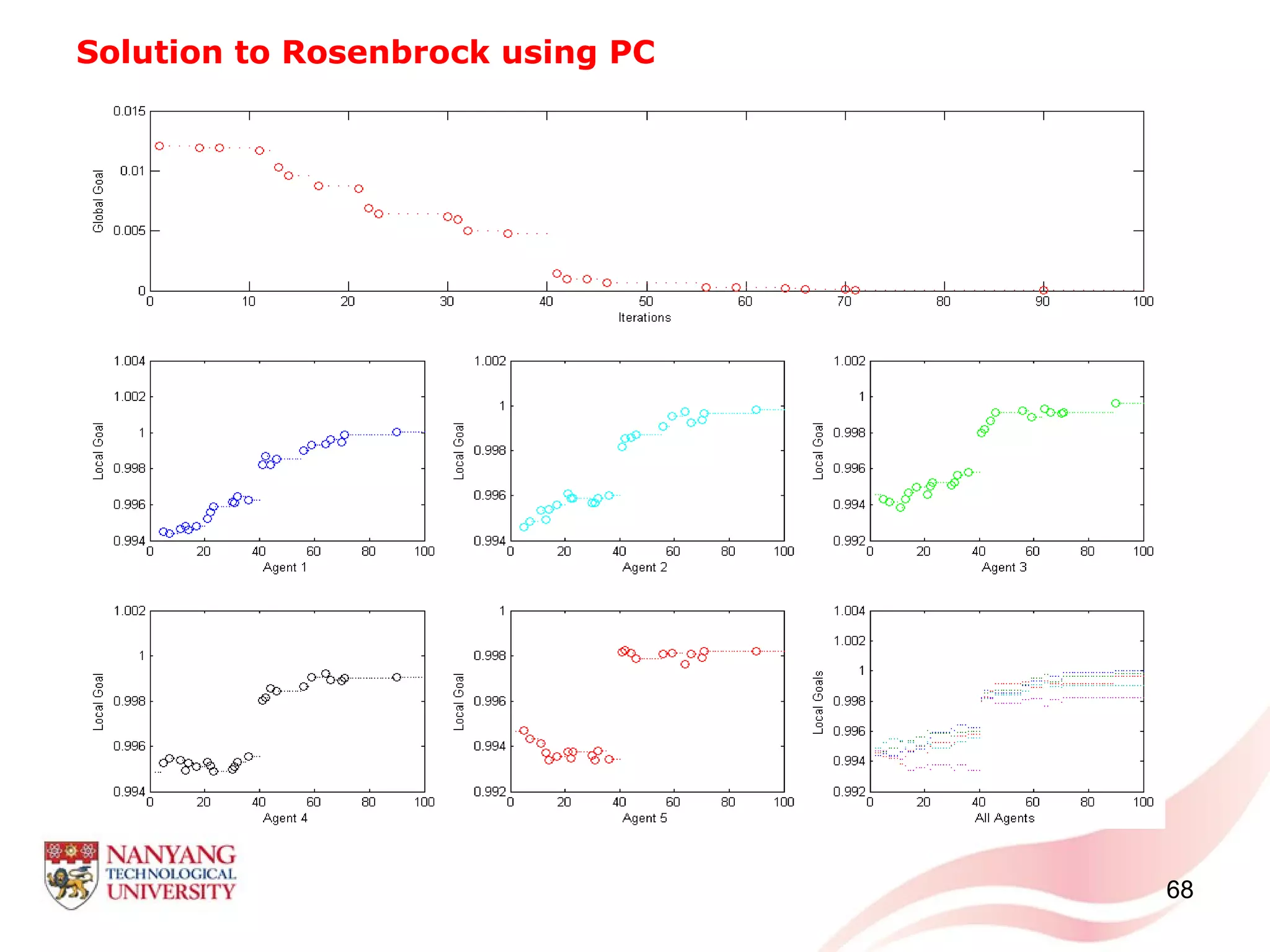

![Solution to Rosenbrock Function using PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-21-2048.jpg)

$$f(\mathbf{X}) = \sum_{i=1}^{N-1}\left[100\left(x_{i+1} - x_i^2\right)^2 + \left(1 - x_i\right)^2\right]$$

where $\mathbf{X} = [x_1\; x_2\; x_3\; \ldots\; x_N]$ and $\text{lower limit} \le x_i \le \text{upper limit}$, $i = 1, 2, \ldots, N$.

Results (strategy values selected with maximum probability):

| Agents (Variables) | Trial-1 | Trial-2 | Trial-3 | Trial-4 | Trial-5 | Range of Values |
|---|---|---|---|---|---|---|
| Agent-1 | 1.0000 | 0.9999 | 1.0002 | 1.0001 | 0.9997 | -1.0 to 1.0 |
| Agent-2 | 1.0000 | 0.9998 | 1.0001 | 1.0001 | 0.9994 | -5.0 to 5.0 |
| Agent-3 | 1.0001 | 0.9998 | 1.0000 | 0.9999 | 0.9986 | -3.0 to 3.0 |
| Agent-4 | 0.9998 | 0.9998 | 0.9998 | 0.9995 | 0.9967 | -3.0 to 8.0 |
| Agent-5 | 0.9998 | 0.9999 | 0.9998 | 0.9992 | 0.9937 | 1.0 to 10.0 |
| Function value | $2 \times 10^{-5}$ | $1 \times 10^{-5}$ | $2 \times 10^{-5}$ | $2 \times 10^{-5}$ | $5 \times 10^{-5}$ | |
| Function evaluations | 288100 | 223600 | 359050 | 204750 | 242950 | |
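The Rosenbrock function above is easy to evaluate directly, which gives a quick sanity check on the reported results. A minimal Python sketch (the function name is ours):

```python
def rosenbrock(x):
    """f(X) = sum_{i=1}^{N-1} [100 (x_{i+1} - x_i^2)^2 + (1 - x_i)^2]."""
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))


print(rosenbrock([1.0] * 5))    # 0.0, the global minimum at X = [1, 1, 1, 1, 1]
print(rosenbrock([0.0, 0.0]))   # 1.0

# Strategy values reported for Trial-1 in the results table
trial_1 = [1.0000, 1.0000, 1.0001, 0.9998, 0.9998]
print(rosenbrock(trial_1))      # roughly 2.1e-5
```

Evaluating the Trial-1 strategy values gives about $2.1 \times 10^{-5}$, consistent with the tabulated function value of $2 \times 10^{-5}$.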

![Constrained PC (Approach 2): Penalty Function Approach](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-32-2048.jpg)

• Penalty-based methods are the most general constraint-handling methods, owing to their simplicity, their ability to handle nonlinear constraints and their compatibility with most unconstrained optimization methods.

• A penalty function converts the constrained optimization problem into an unconstrained one:

$$\phi\left(\mathbf{Y}_i^{[r]}\right) = G\left(\mathbf{Y}_i^{[r]}\right) + \theta\left[\sum_{j=1}^{s}\left(g_j^{+}\left(\mathbf{Y}_i^{[r]}\right)\right)^2 + \sum_{j=1}^{t}\left(h_j\left(\mathbf{Y}_i^{[r]}\right)\right)^2\right]$$

where $g_j^{+}\left(\mathbf{Y}_i^{[r]}\right) = \max\left(0, g_j\left(\mathbf{Y}_i^{[r]}\right)\right)$ and $\theta$ is a scalar parameter.
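The penalized objective can be sketched as follows, assuming the constraint values have already been evaluated for a given $\mathbf{Y}_i^{[r]}$. The convention that inequality constraints are satisfied when $g_j \le 0$ is inferred from $g_j^{+} = \max(0, g_j)$; the function name and example numbers are hypothetical.

```python
def penalized_objective(G, g_values, h_values, theta):
    """phi(Y) = G(Y) + theta * [sum_j (g_j^+(Y))^2 + sum_j (h_j(Y))^2].

    g_values: inequality constraint values g_j(Y), assumed feasible when g_j(Y) <= 0.
    h_values: equality constraint values h_j(Y), feasible when h_j(Y) = 0.
    """
    inequality = sum(max(0.0, g) ** 2 for g in g_values)   # g_j^+ = max(0, g_j)
    equality = sum(h ** 2 for h in h_values)
    return G + theta * (inequality + equality)


# G = 10; one satisfied (g = -1) and one violated (g = 0.5) inequality; h = 0.5
phi = penalized_objective(10.0, [-1.0, 0.5], [0.5], theta=100.0)   # 10 + 100 * 0.5 = 60.0
```

Satisfied inequality constraints add nothing, so a fully feasible point keeps its original objective value regardless of $\theta$.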

![Constrained PC (Approach 3): Feasibility-based Rule I](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-43-2048.jpg)

• Updating of the sampling space and perturbation approach

To jump out of this possible local minimum, every agent $i$ perturbs its current feasible strategy:

$$X_i^{[fav]} = X_i^{[fav]} \pm \mathit{fact} \times X_i^{[fav]}$$

where

$$\mathit{fact} = \begin{cases} \text{random value} \in \left[\sigma_1^{lower}, \sigma_1^{upper}\right] & \text{if } X_i^{[fav]} \le \gamma \\ \text{random value} \in \left[\sigma_2^{lower}, \sigma_2^{upper}\right] & \text{if } X_i^{[fav]} > \gamma \end{cases}, \qquad 0 < \sigma_1^{lower} < \sigma_1^{upper} \le \sigma_2^{lower} < \sigma_2^{upper} < 1$$

The value of $\gamma$ and the +/- sign are selected based on preliminary trials.

Every agent expands the sampling space as follows:

$$\Psi_i \in \left[\Psi_i^{lower} - \lambda_{up}\left(\Psi_i^{upper} - \Psi_i^{lower}\right),\; \Psi_i^{upper} + \lambda_{up}\left(\Psi_i^{upper} - \Psi_i^{lower}\right)\right], \quad 0 < \lambda_{up} \le 1$$
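The perturbation and expansion steps can be sketched in Python as below. The values of `gamma`, the sigma ranges and the sign are hypothetical placeholders (the slide says they come from preliminary trials), and `fact` is assumed to be drawn uniformly from its range.

```python
import random


def perturb(x_fav, gamma, sigma1, sigma2, sign, rng):
    """X_i^[fav] <- X_i^[fav] + sign * fact * X_i^[fav], with sign = +1 or -1.

    fact is drawn uniformly from sigma1 = (lower, upper) if x_fav <= gamma and from
    sigma2 otherwise, with 0 < sigma1[0] < sigma1[1] <= sigma2[0] < sigma2[1] < 1.
    """
    if sign not in (1, -1):
        raise ValueError("sign must be +1 or -1")
    if not 0.0 < sigma1[0] < sigma1[1] <= sigma2[0] < sigma2[1] < 1.0:
        raise ValueError("sigma bounds violate 0 < s1l < s1u <= s2l < s2u < 1")
    lower, upper = sigma1 if x_fav <= gamma else sigma2
    fact = rng.uniform(lower, upper)
    return x_fav + sign * fact * x_fav


def expand_interval(lower, upper, lam_up):
    """Psi_i in [lower - lam_up*(upper - lower), upper + lam_up*(upper - lower)]."""
    if not 0.0 < lam_up <= 1.0:
        raise ValueError("lam_up must satisfy 0 < lam_up <= 1")
    width = upper - lower
    return lower - lam_up * width, upper + lam_up * width


rng = random.Random(0)
x_new = perturb(2.0, gamma=1.0, sigma1=(0.1, 0.2), sigma2=(0.3, 0.4), sign=+1, rng=rng)
interval = expand_interval(0.0, 1.0, lam_up=0.5)   # (-0.5, 1.5)
```

Since `x_fav = 2.0` exceeds `gamma`, `fact` comes from the larger range, so the perturbed strategy lands between 2.6 and 2.8.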

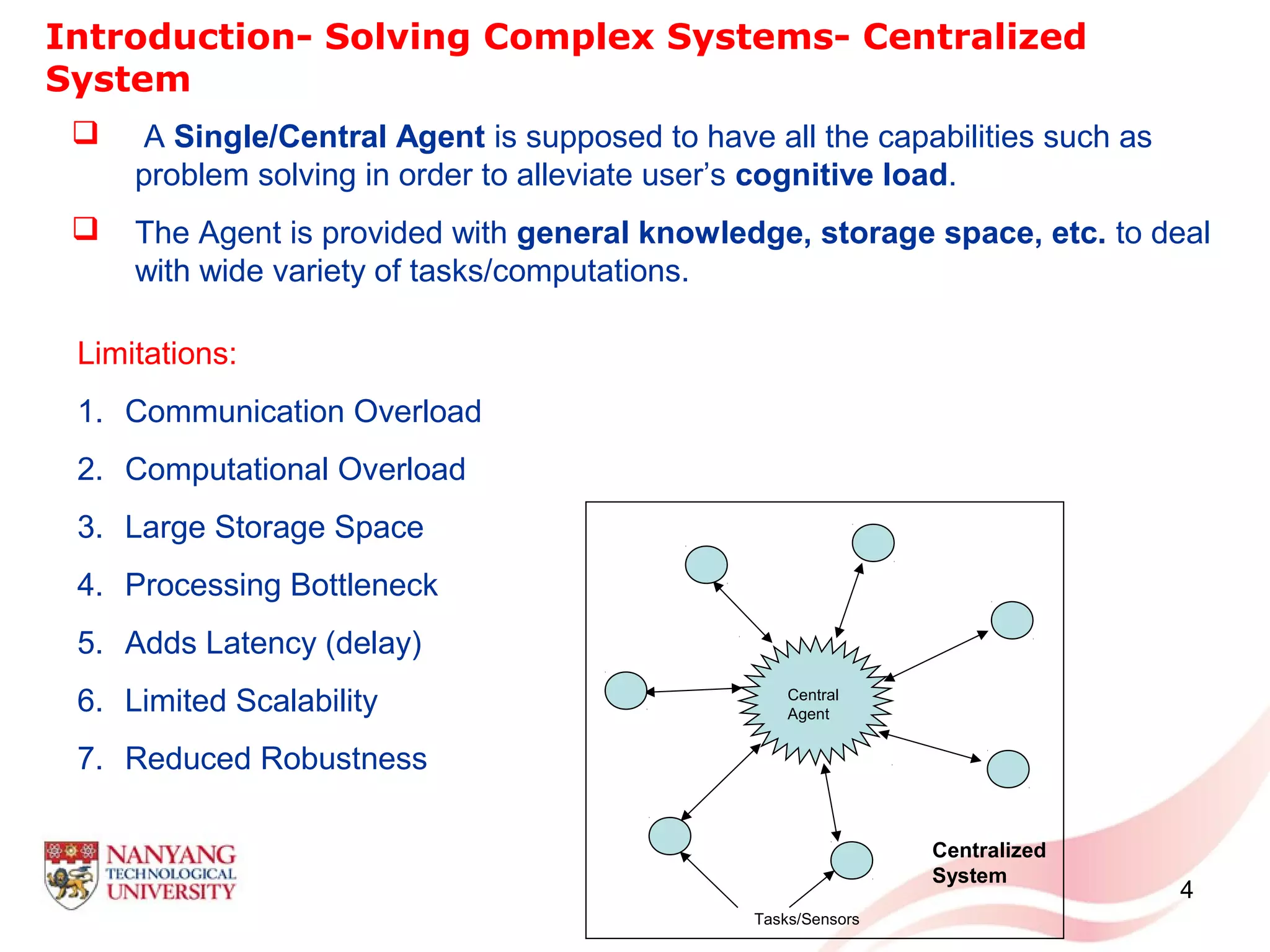

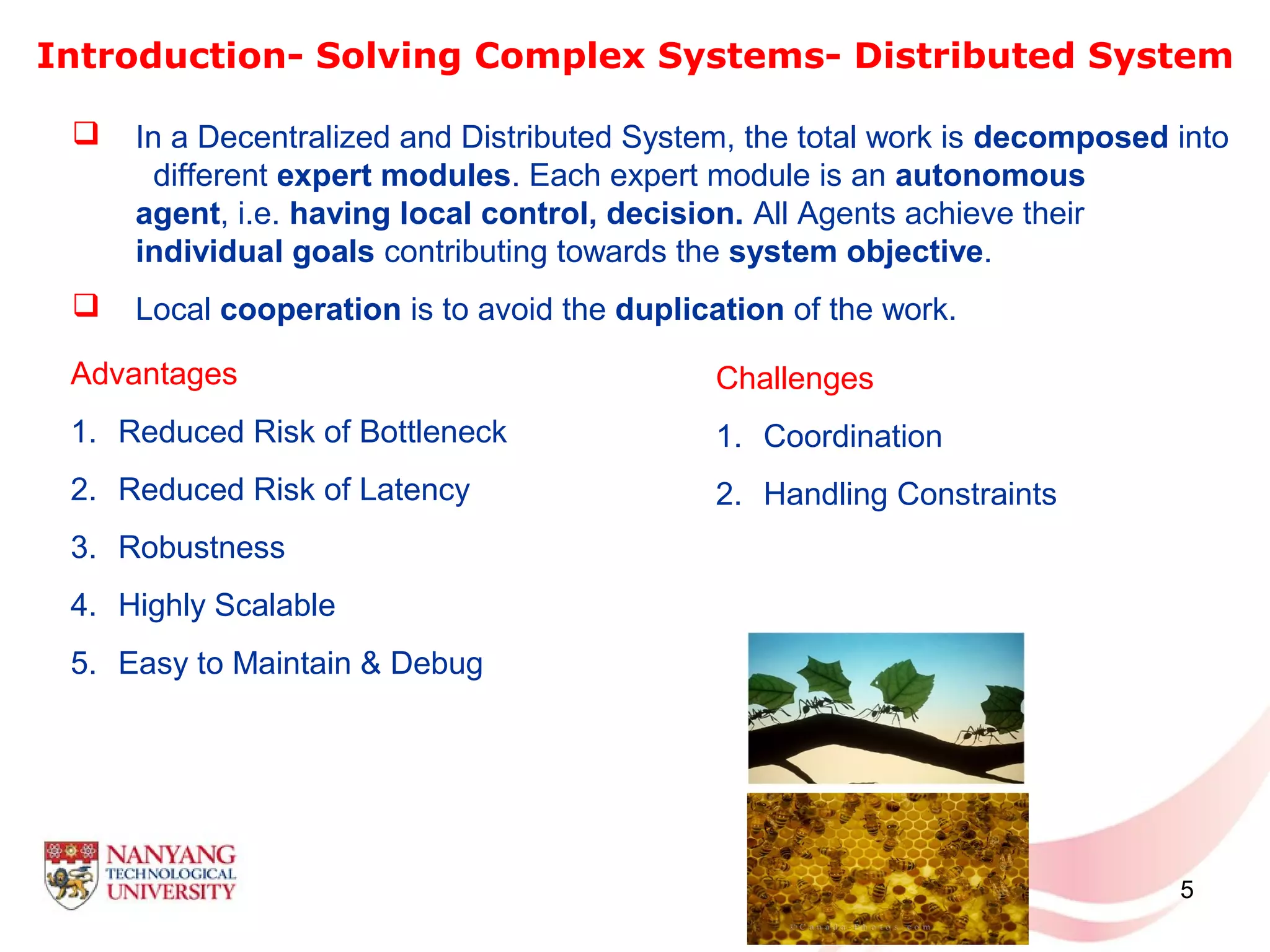

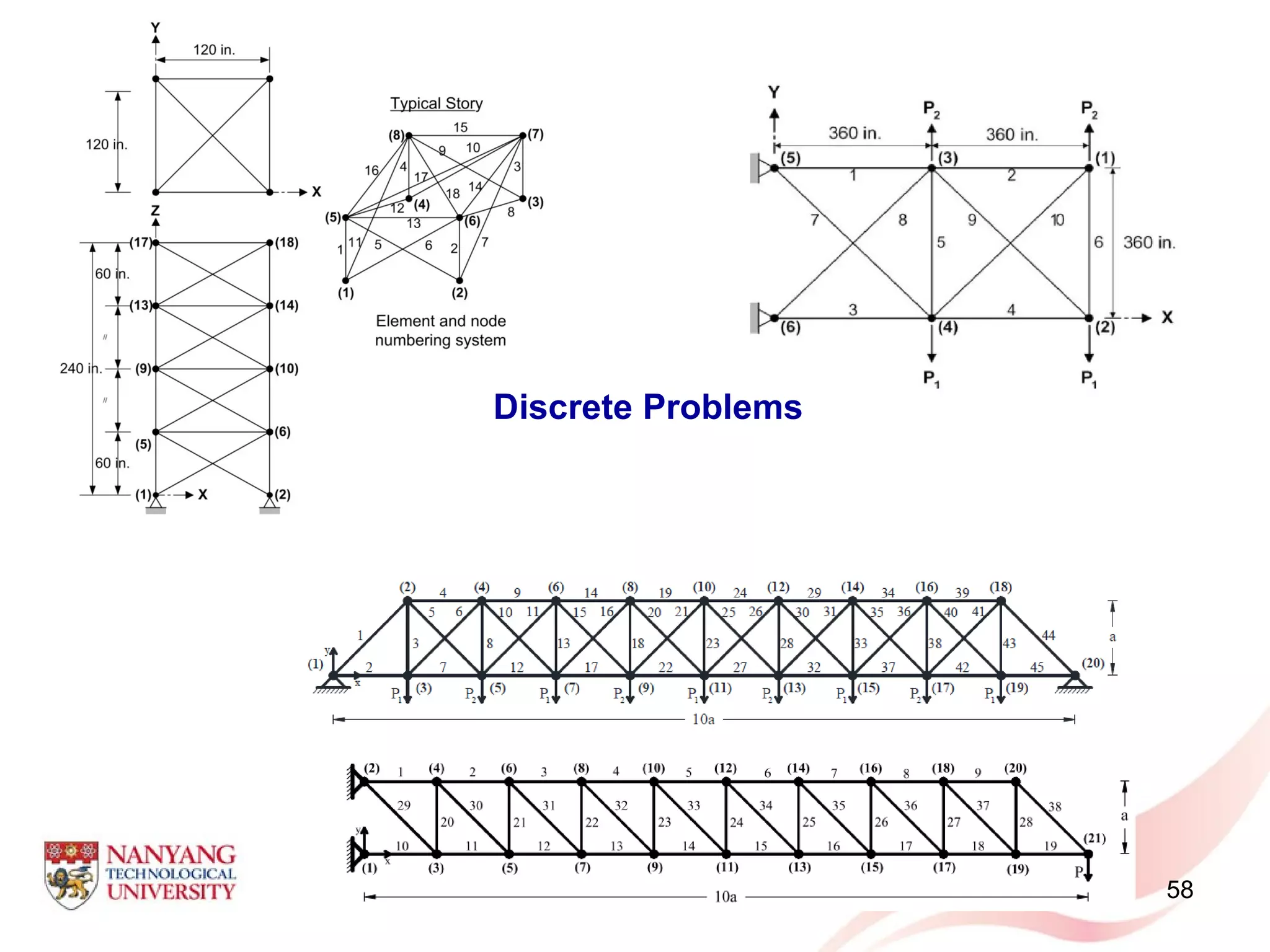

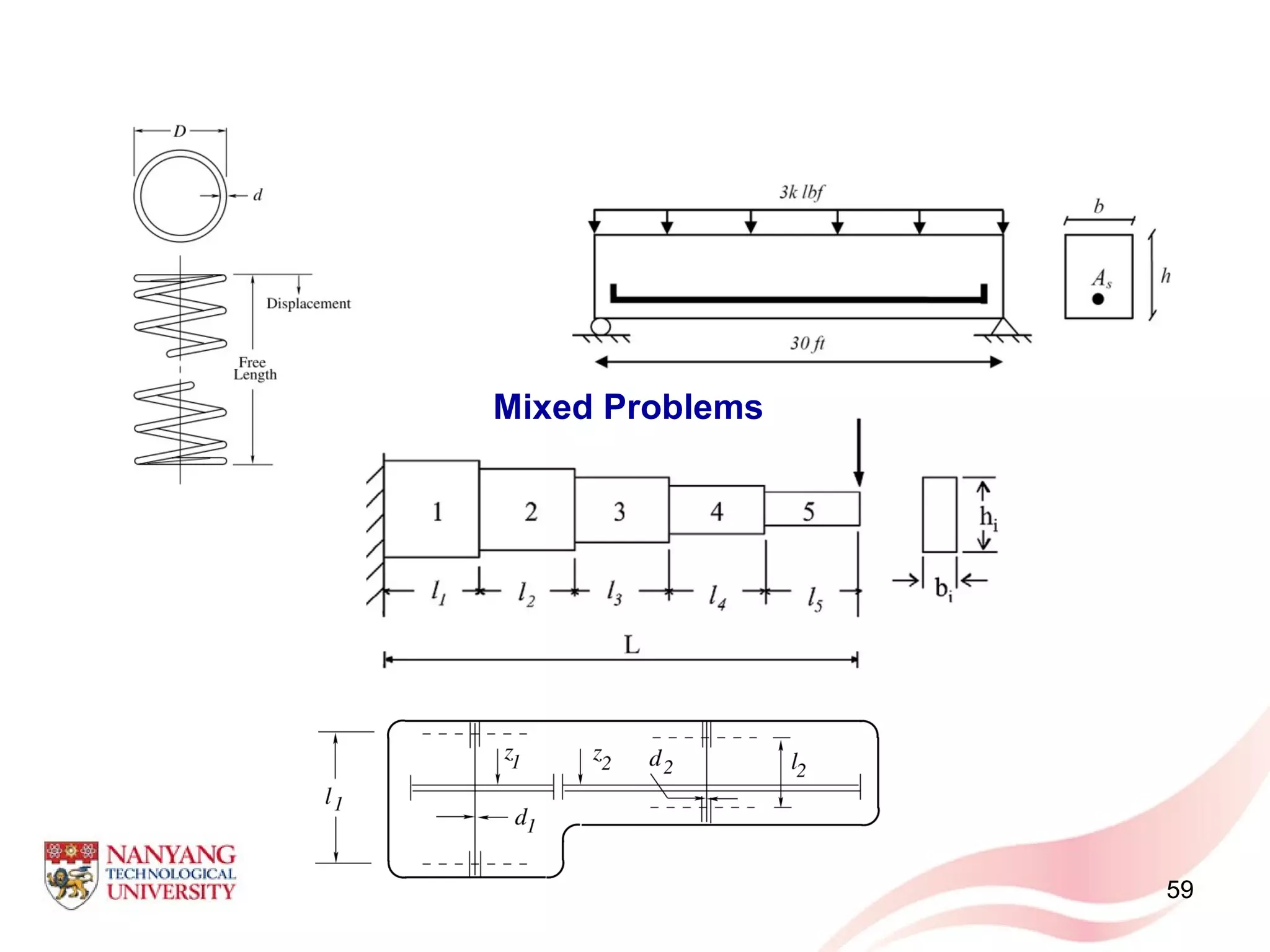

![Discrete Problems](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-57-2048.jpg)

• Discrete problems

Case 1: the discrete variables are selected from the set {0.01, 0.4, 0.8, 1.2, 1.6, 2.0, 2.4, 2.8, 3.2, 3.6, 4.0, 4.4, 4.8, 5.2, 5.6, 6.0}.

| Variables | GA [31] | HS [34] | PSO [36] | PSOPC [36] | HPSO [36] | DHPSACO [37] | Proposed PC |
|---|---|---|---|---|---|---|---|
| 1 | 0.4 | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 |
| 2 | 2.0 | 2.0 | 2.6 | 2.0 | 2.0 | 1.6 | 0.4 |
| 3 | 3.6 | 3.6 | 3.6 | 3.6 | 3.6 | 3.2 | 3.6 |
| 4 | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 | 0.01 |
| 5 | 0.01 | 0.01 | 0.4 | 0.01 | 0.01 | 0.01 | 2 |
| 6 | 0.8 | 0.8 | 0.8 | 0.8 | 0.8 | 0.8 | 0.8 |
| 7 | 2.0 | 1.6 | 1.6 | 1.6 | 1.6 | 2.0 | 0.01 |
| 8 | 2.4 | 2.4 | 2.4 | 2.4 | 2.4 | 2.4 | 4 |
| Objective | 563.52 | 560.59 | 566.44 | 560.59 | 560.59 | 551.61 | 477.16684 |

| Variables | GA [31] | PSO [36] | PSOPC [36] | HPSO [36] | DHPSACO [37] | Proposed PC |
|---|---|---|---|---|---|---|
| 1 | 0.307 | 1.000 | 0.111 | 0.111 | 0.111 | 0.111 |
| 2 | 1.990 | 2.620 | 1.563 | 2.130 | 2.130 | 0.563 |
| 3 | 3.130 | 2.620 | 3.380 | 3.380 | 3.380 | 3.13 |
| 4 | 0.111 | 0.250 | 0.111 | 0.111 | 0.111 | 0.141 |
| 5 | 0.141 | 0.307 | 0.111 | 0.111 | 0.111 | 1.8 |
| 6 | 0.766 | 0.602 | 0.766 | 0.766 | 0.766 | 0.766 |
| 7 | 1.620 | 1.457 | 1.990 | 1.620 | 1.620 | 0.111 |
| 8 | 2.620 | 2.880 | 2.380 | 2.620 | 2.620 | 3.88 |
| Objective | 556.49 | 567.49 | 567.49 | 551.14 | 551.14 | 464.14708 |

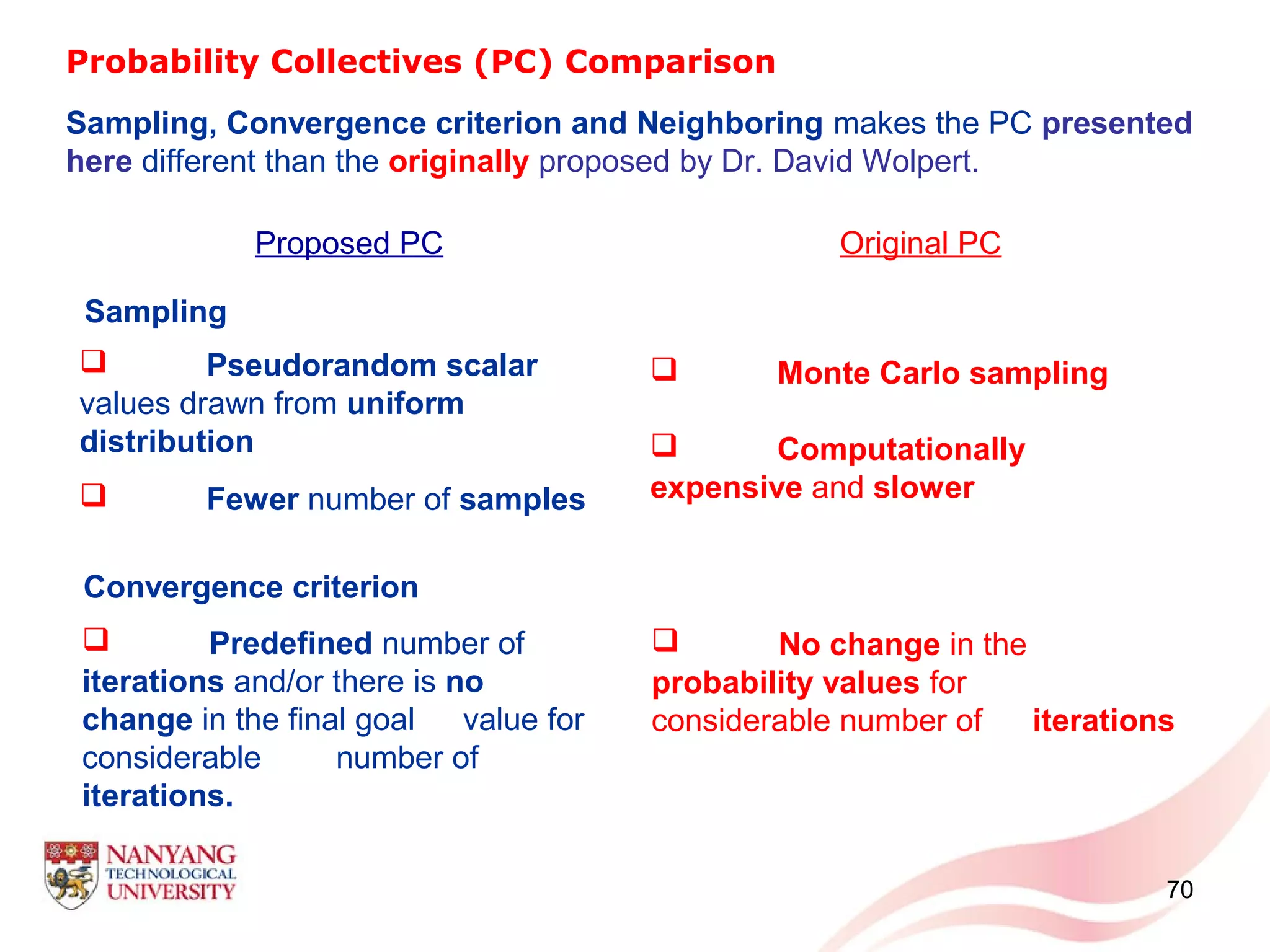

![Probability Collectives (PC) Comparison](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-71-2048.jpg)

| Proposed PC | Original PC [1, 3] |
|---|---|
| Neighboring | Regression; data-aging |
| Samples around the 'favorable strategy values' and continues from the beginning; narrows down the sampling options of the agents, forcing them to sample only from the neighboring range | |
| Increases convergence speed; computationally cheaper | Computationally expensive; large memory |

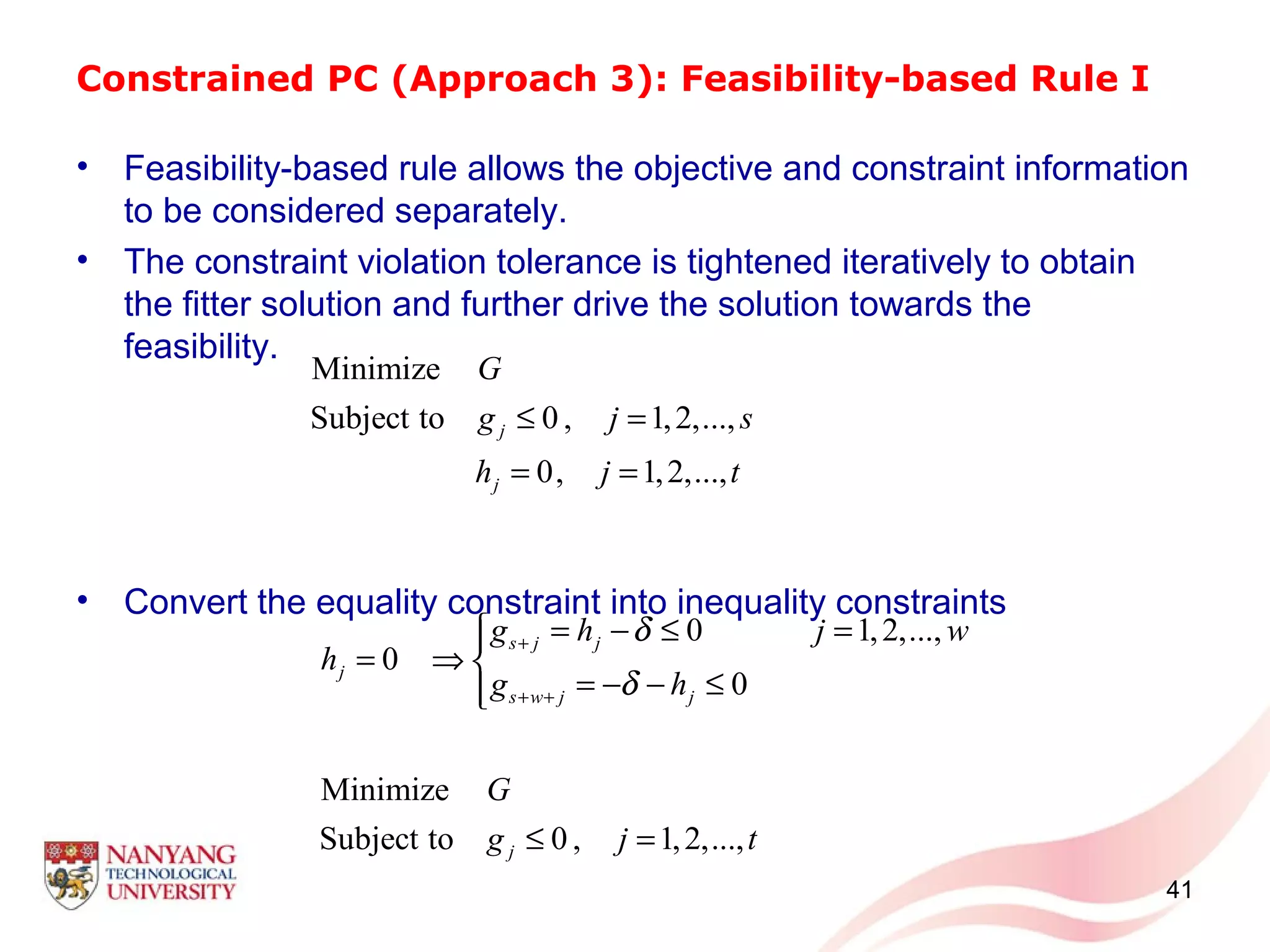

![Constrained PC (Approach 3): Feasibility-based Rule I

• Procedure starts with initializing the constraint violation tolerance

where is the cardinality of .

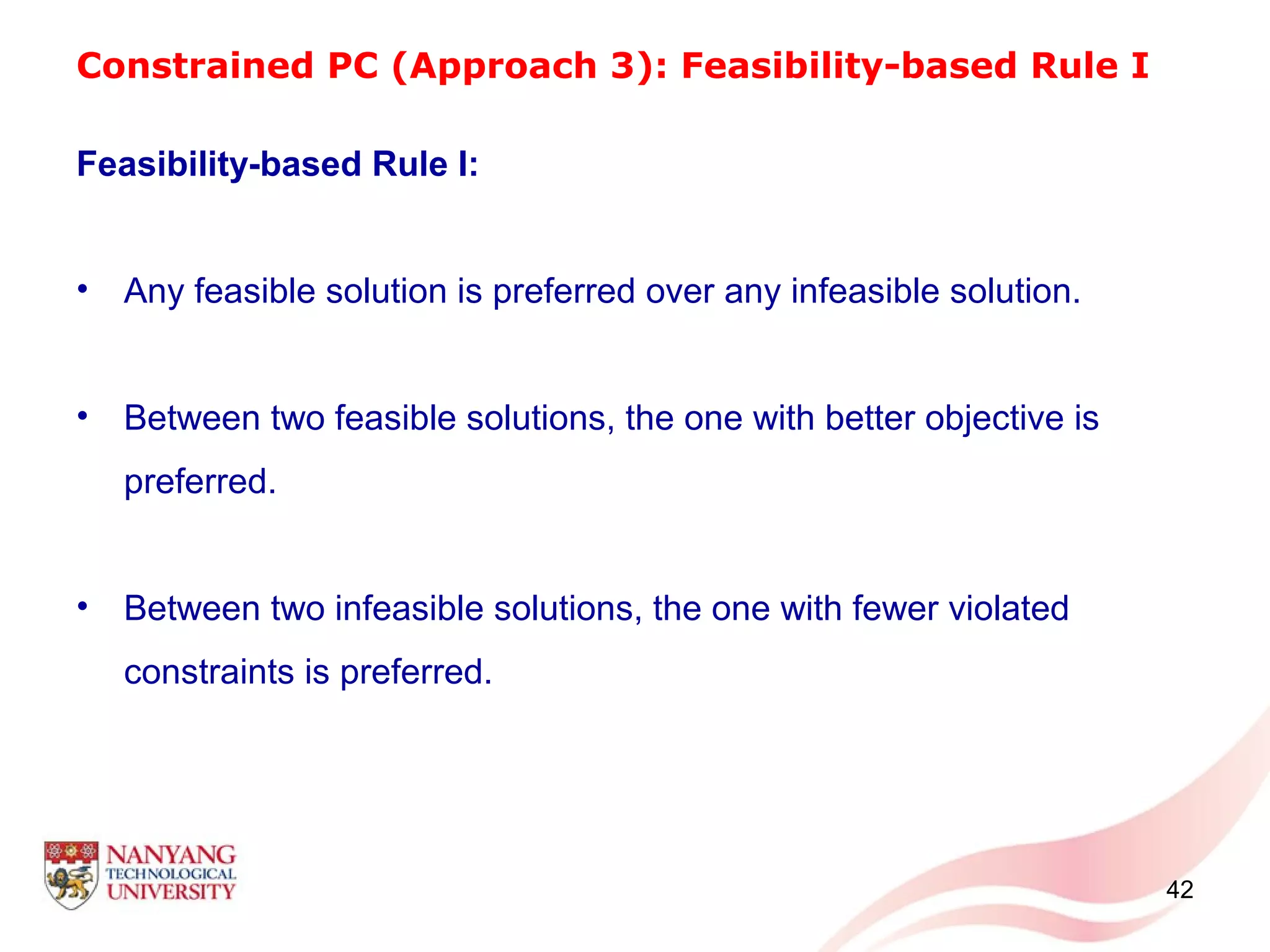

Feasibility-based Rule I

• Any feasible solution is preferred over any infeasible solution

If the current system objective as well as the previous

solution are infeasible, accept the current system objective

and corresponding as the current solution if the number of

constraints violated is less than or equal to , i.e. ,

and then the value of is updated to , i.e. .

73

µ = C [ ]1 2 ... tg g g=CC

[ ]

( )fav

G Y

[ ]fav

Y

[ ]

( )fav

G Y

violatedC µ violatedC µ≤

µ violatedC violatedCµ =](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-73-2048.jpg)

![Constrained PC (Approach 3): Feasibility-based Rule I](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-74-2048.jpg)

• Between two feasible solutions, the one with the better objective is preferred.

If the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ is feasible and the previous solution is infeasible, accept the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$ as the current solution, and then update $\mu$ to 0, i.e. $\mu = C_{violated} = 0$.

![Constrained PC (Approach 3): Feasibility-based Rule I](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-75-2048.jpg)

• Between two infeasible solutions, the one with fewer violated constraints is preferred.

If the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ is feasible, i.e. $C_{violated} = 0$, and is not worse than the previous feasible solution, accept the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$ as the current solution.

• If none of the above conditions is met, discard the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$, and retain the previous iteration's solution.
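The acceptance cases of Feasibility-based Rule I can be sketched as a small Python function. The `Candidate` type and the function name are hypothetical, and "not worse" is read as a current objective less than or equal to the previous one, in the minimization sense.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    G: float          # system objective G(Y^[fav])
    n_violated: int   # C_violated: number of violated constraints

    @property
    def feasible(self):
        return self.n_violated == 0


def rule_one(current, previous, mu):
    """Feasibility-based Rule I: return (solution to keep, updated tolerance mu)."""
    if not current.feasible and not previous.feasible:
        if current.n_violated <= mu:          # C_violated <= mu
            return current, current.n_violated
    elif current.feasible and not previous.feasible:
        return current, 0                     # mu = C_violated = 0
    elif current.feasible and previous.feasible:
        if current.G <= previous.G:           # not worse (minimisation)
            return current, mu
    return previous, mu                       # otherwise retain the previous solution


mu = 3                                        # mu = |C| for three constraints
kept, mu = rule_one(Candidate(7.0, 1), Candidate(5.0, 2), mu)   # accepted, mu = 1
kept, mu = rule_one(Candidate(4.0, 2), kept, mu)                # rejected, mu stays 1
kept, mu = rule_one(Candidate(9.0, 0), kept, mu)                # feasible wins, mu = 0
```

Because $\mu$ only ever decreases, the tolerance tightens as the search proceeds, until only feasible solutions are accepted.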

![Constrained PC (Approach 3): Feasibility-based Rule I](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-76-2048.jpg)

Updating of the sampling space and perturbation approach

• On completion of pre-specified $n_{test}$ iterations:

• If $G\left(\mathbf{Y}^{[fav,n]}\right) \le G\left(\mathbf{Y}^{[fav,n-n_{test}]}\right)$, shrink the sampling intervals:

$$\Psi_i \in \left[X_i^{[fav]} - \lambda_{down}\left(\Psi_i^{upper} - \Psi_i^{lower}\right),\; X_i^{[fav]} + \lambda_{down}\left(\Psi_i^{upper} - \Psi_i^{lower}\right)\right], \quad 0 < \lambda_{down} \le 1$$

• If $G\left(\mathbf{Y}^{[fav,n]}\right)$ and $G\left(\mathbf{Y}^{[fav,n-n_{test}]}\right)$ are feasible and $\left|G\left(\mathbf{Y}^{[fav,n]}\right) - G\left(\mathbf{Y}^{[fav,n-n_{test}]}\right)\right| \le \varepsilon$, the system objective $G\left(\mathbf{Y}^{[fav,n]}\right)$ is referred to as $G\left(\mathbf{Y}^{[fav,s]}\right)$.
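The two tests applied every $n_{test}$ iterations can be sketched as below. The function returns both flags rather than choosing between them, since the slide does not say which takes priority when both hold; the function names and example values are hypothetical.

```python
def after_n_test(G_now, G_before, feasible_now, feasible_before, eps):
    """Flags checked on completion of every n_test iterations.

    shrink:  G(Y^[fav,n]) <= G(Y^[fav,n-n_test])
    stalled: both objectives feasible and |G(Y^[fav,n]) - G(Y^[fav,n-n_test])| <= eps,
             i.e. G(Y^[fav,n]) is treated as G(Y^[fav,s])
    """
    shrink = G_now <= G_before
    stalled = feasible_now and feasible_before and abs(G_now - G_before) <= eps
    return shrink, stalled


def shrink_all(intervals, x_fav, lam_down):
    """Apply the neighboring shrink around each agent's X_i^[fav] (0 < lam_down <= 1)."""
    return [(x - lam_down * (upper - lower), x + lam_down * (upper - lower))
            for (lower, upper), x in zip(intervals, x_fav)]


shrink, stalled = after_n_test(10.0, 10.00001, True, True, eps=1e-4)   # (True, True)
if shrink:
    intervals = shrink_all([(0.0, 2.0), (-1.0, 1.0)], [1.0, 0.0], lam_down=0.25)
    # [(0.5, 1.5), (-0.5, 0.5)]
```

A `stalled` flag marks the feasible solution as a possible local minimum, which is where the perturbation and expansion step of this approach comes in.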

![Constrained PC (Approach 3): Feasibility-based Rule I](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-77-2048.jpg)

![Constrained PC (Approach 3): Feasibility-based Rule II](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-80-2048.jpg)

Feasibility-based Rule II:

• Any feasible solution is preferred over any infeasible solution.

If the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ as well as the previous solution are infeasible, accept the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$ as the current solution if the number of improved constraints $C_{improved}$ is greater than or equal to $\mu$, i.e. $C_{improved} \ge \mu$, and then update $\mu$ to $C_{improved}$, i.e. $\mu = C_{improved}$.

![Constrained PC (Approach 3): Feasibility-based Rule II](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-81-2048.jpg)

• Between two feasible solutions, the one with the better objective is preferred.

If the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ is feasible and the previous solution is infeasible, accept the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$ as the current solution, and then update $\mu$ to 0, i.e. $\mu = C_{improved} = 0$.

• Between two infeasible solutions, the one with the larger number of improved constraint violations is preferred.

If the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ is feasible and is not worse than the previous feasible solution, accept the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$ as the current solution.

![Constrained PC (Approach 3): Feasibility-based Rule II](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-82-2048.jpg)

• If the solution remains feasible and unchanged for a predefined number of successive iterations, and the current feasible system objective is worse than the previous iteration's feasible solution, accept the current solution.

If the solution remains feasible and unchanged for $n_{test}$ successive pre-specified iterations, i.e. $G\left(\mathbf{Y}^{[fav,n]}\right)$ and $G\left(\mathbf{Y}^{[fav,n-n_{test}]}\right)$ are feasible and equal, and the current feasible system objective is worse than the previous iteration's feasible solution, accept the current system objective $G\left(\mathbf{Y}^{[fav]}\right)$ and the corresponding $\mathbf{Y}^{[fav]}$ as the current solution.
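The acceptance cases of Feasibility-based Rule II, including the stall exception, can be sketched as below. The `Candidate` type, the function name and the way `n_improved` is counted are hypothetical; the caller is assumed to supply the number of constraints whose violation improved and whether the feasible solution has stalled for $n_{test}$ iterations.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    G: float          # system objective G(Y^[fav])
    n_violated: int   # number of violated constraints (0 means feasible)

    @property
    def feasible(self):
        return self.n_violated == 0


def rule_two(current, previous, mu, n_improved, stalled):
    """Feasibility-based Rule II: return (solution to keep, updated mu).

    n_improved: C_improved, the number of constraints whose violation improved
                relative to the previous solution (how it is counted is assumed).
    stalled:    True if the feasible solution stayed unchanged for n_test iterations.
    """
    if not current.feasible and not previous.feasible:
        if n_improved >= mu:                  # C_improved >= mu
            return current, n_improved
    elif current.feasible and not previous.feasible:
        return current, 0                     # mu = C_improved = 0
    elif current.feasible and previous.feasible:
        if current.G <= previous.G or stalled:
            # a worse feasible objective is accepted only to escape a stall
            return current, mu
    return previous, mu                       # otherwise retain the previous solution


kept, mu = rule_two(Candidate(5.0, 2), Candidate(6.0, 3), mu=1, n_improved=2, stalled=False)
# kept == Candidate(5.0, 2), mu == 2
```

Unlike Rule I, Rule II rewards progress on individual constraints and deliberately accepts a worse feasible objective after a stall, which helps the search leave a local minimum.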

![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-84-2048.jpg)

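![Formulation of Unconstrained PC](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-85-2048.jpg)

[Figure: uniform initial probability distributions over the strategies of Agent 1, Agent i and Agent N.]

$$q\left(X_1^{[1]}\right) = \cdots = q\left(X_1^{[m_1]}\right) = \frac{1}{m_1}, \;\ldots,\; q\left(X_i^{[1]}\right) = \cdots = q\left(X_i^{[m_i]}\right) = \frac{1}{m_i}, \;\ldots,\; q\left(X_N^{[1]}\right) = \cdots = q\left(X_N^{[m_N]}\right) = \frac{1}{m_N}$$

$$\begin{aligned}
\mathbf{Y}_i^{[2]} &= \left\{X_1^{[?]}, \ldots, X_i^{[2]}, \ldots, X_N^{[?]}\right\} \Rightarrow \left\{q\left(X_1^{[?]}\right), \ldots, q\left(X_i^{[2]}\right), \ldots, q\left(X_N^{[?]}\right)\right\} \Rightarrow G\left(\mathbf{Y}_i^{[2]}\right) q\left(X_i^{[2]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right) = E\left(G\left(\mathbf{Y}_i^{[2]}\right)\right) \\
&\;\;\vdots \\
\mathbf{Y}_i^{[r]} &= \left\{X_1^{[?]}, \ldots, X_i^{[r]}, \ldots, X_N^{[?]}\right\} \Rightarrow \left\{q\left(X_1^{[?]}\right), \ldots, q\left(X_i^{[r]}\right), \ldots, q\left(X_N^{[?]}\right)\right\} \Rightarrow G\left(\mathbf{Y}_i^{[r]}\right) q\left(X_i^{[r]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right) = E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right) \\
&\;\;\vdots \\
\mathbf{Y}_i^{[m_i]} &= \left\{X_1^{[?]}, \ldots, X_i^{[m_i]}, \ldots, X_N^{[?]}\right\} \Rightarrow \left\{q\left(X_1^{[?]}\right), \ldots, q\left(X_i^{[m_i]}\right), \ldots, q\left(X_N^{[?]}\right)\right\} \Rightarrow G\left(\mathbf{Y}_i^{[m_i]}\right) q\left(X_i^{[m_i]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right) = E\left(G\left(\mathbf{Y}_i^{[m_i]}\right)\right)
\end{aligned}$$

These terms form the expected collection $\sum_{r=1}^{m_i} E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right)$.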

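![Probability Collectives](https://image.slidesharecdn.com/thesispresentationjan-12-2012-151104082441-lva1-app6891/75/Probability-Collectives-86-2048.jpg)

$$E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right) = G\left(\mathbf{Y}_i^{[r]}\right) q\left(X_i^{[r]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right)$$

$$\sum_{r=1}^{m_i} E\left(G\left(\mathbf{Y}_i^{[r]}\right)\right) = \sum_{r=1}^{m_i} G\left(\mathbf{Y}_i^{[r]}\right) q\left(X_i^{[r]}\right) \prod_{(i)} q\left(X_{(i)}^{[?]}\right)$$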