This document provides an agenda for a presentation on integrating Apache Cassandra and Apache Spark. The presentation covers RDBMS vs. NoSQL databases, an overview of Cassandra (data model and queries), and Spark (RDDs and running Spark on Cassandra data). It closes with examples of joins between Cassandra and Spark DataFrames for both simple and complex queries.

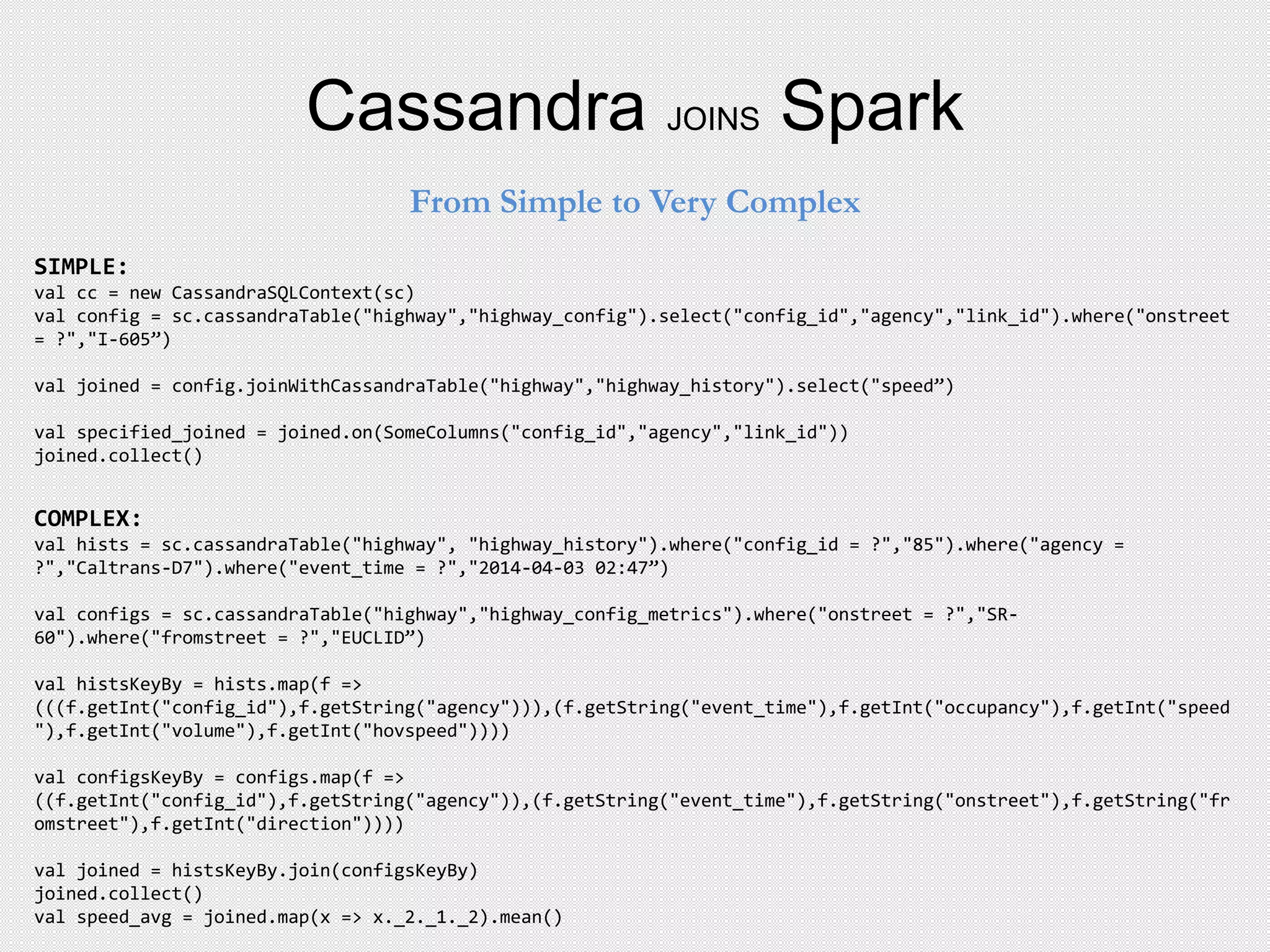

![Partitioners

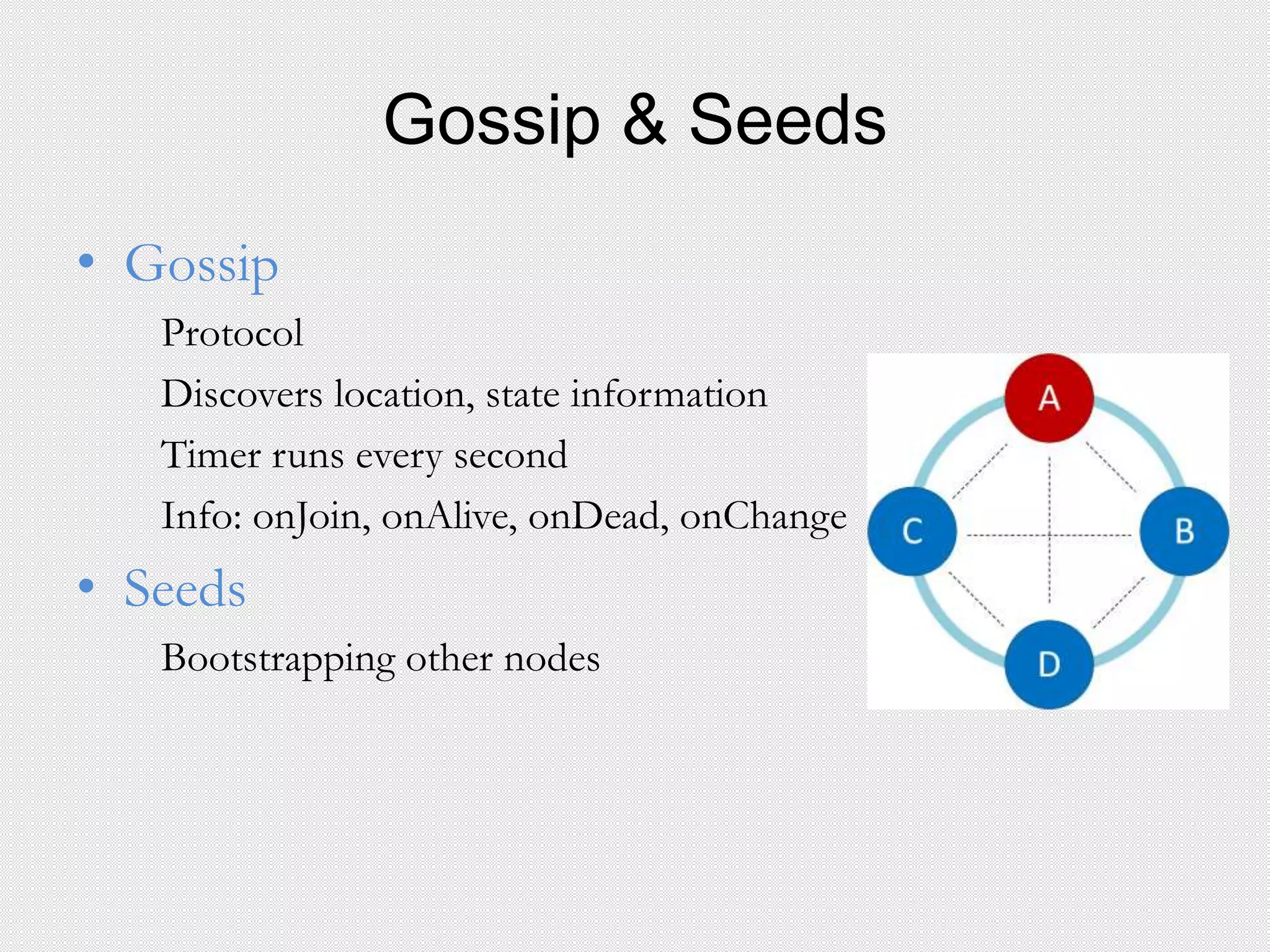

• Defines the hash function used for consistent hashing

• Computes the token for each row key

Types

Murmur3Partitioner

Values: [ -2^63 … 0 … +2^63 - 1 ]

Random Partitioner

Values: [ 0 … 2^127 - 1 ]

ByteOrderedPartitioner (Not Recommended)](https://image.slidesharecdn.com/presentationfinal-150629023645-lva1-app6892/75/Presentation-11-2048.jpg)
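
The partitioner slide above can be illustrated with a minimal sketch of token computation and ownership lookup on a consistent-hashing ring. It uses the RandomPartitioner range of 0 to 2^127 - 1 and an MD5 digest reduced modulo the ring size, which is a simplification and not Cassandra's exact token derivation. The three-node ring, the node names, and the helper names `token_for` and `owner_of` are assumptions made for illustration.

```python
import bisect
import hashlib

RING_SIZE = 2 ** 127  # RandomPartitioner tokens fall in [0, 2**127 - 1]


def token_for(row_key):
    """Map a row key to a token (simplified: MD5 digest modulo the ring size)."""
    digest = hashlib.md5(row_key.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") % RING_SIZE


def owner_of(row_key, ring):
    """Return the node holding the first token >= the key's token, wrapping around."""
    tokens = [token for token, _ in ring]
    idx = bisect.bisect_left(tokens, token_for(row_key))
    return ring[idx % len(ring)][1]


# Hypothetical three-node cluster with evenly spaced tokens.
RING = sorted((i * RING_SIZE // 3, "node%d" % (i + 1)) for i in range(3))

if __name__ == "__main__":
    for key in ("Hollywood", "Santa Monica", "I-10"):
        print(key, token_for(key), owner_of(key, RING))
```

Because the token depends only on the row key, every client computes the same owner for a given key without consulting a central directory.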

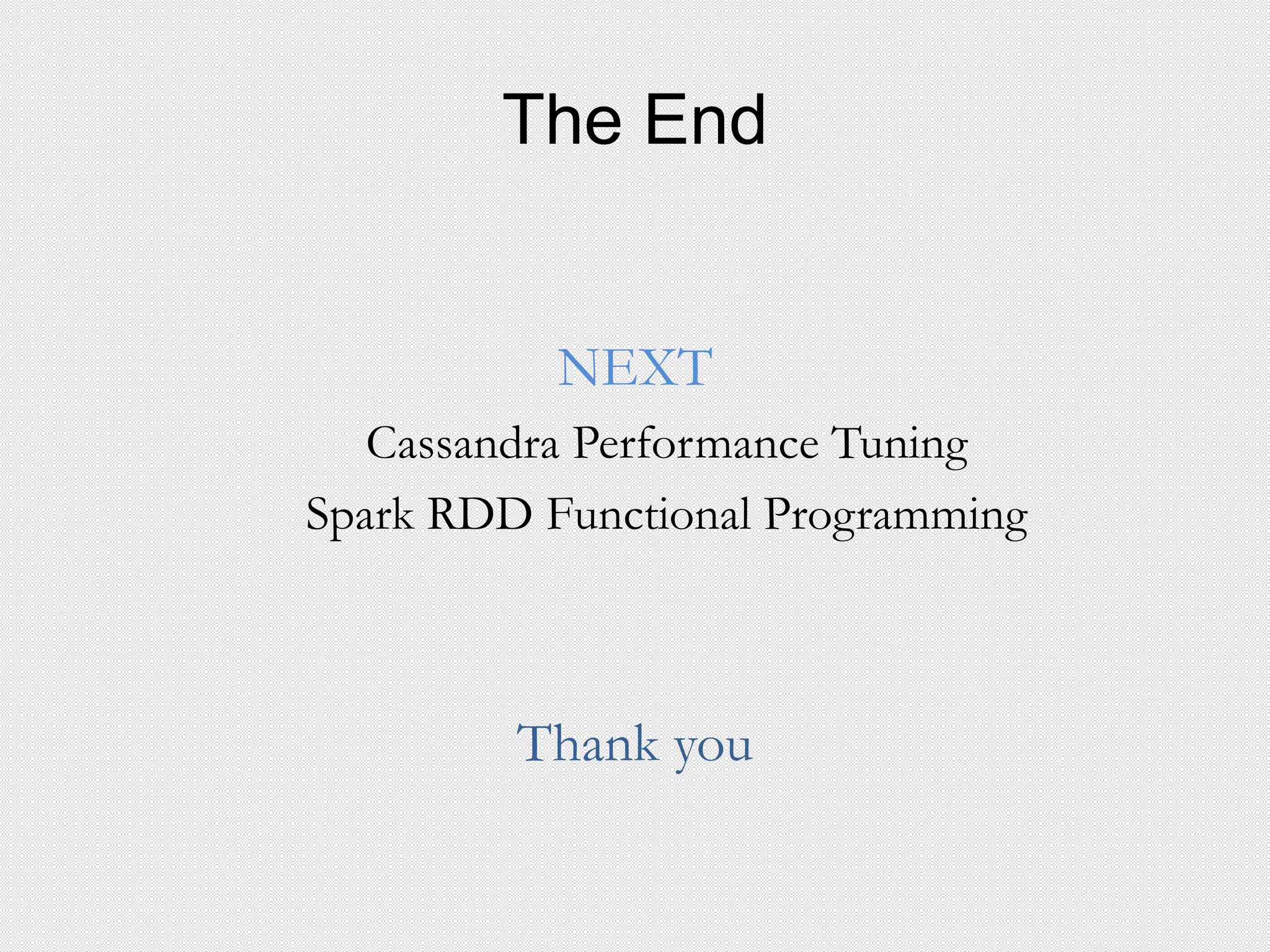

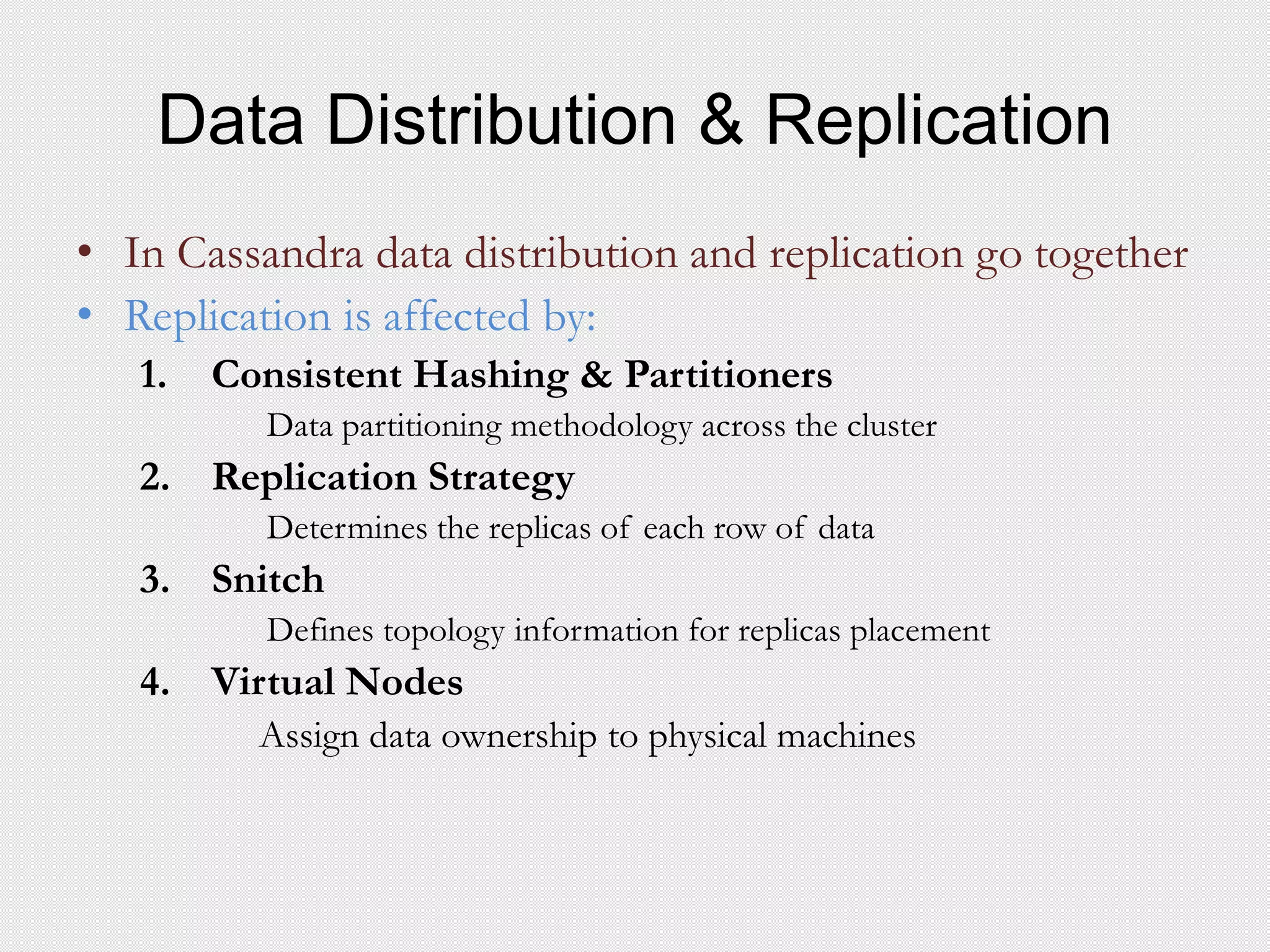

![C* Data Model

Next Concepts

– Column based key value store (multi level dictionary)

– Think of it as a JSON representation or Map [String, Map [String, Data] ]

– SST: Sorted String Table

Column family: "Street Monitor" | Keys: "Hollywood", "Santa Monica" | Columns: avg_speed, vehicles, time | Values: the data stored under each column

{"Street Monitor": {

"Hollywood": { "avg_speed": 75, "vehicles": 45, "time": "2015-03-02 09:35" },

"Santa Monica": { "avg_speed": 35, "vehicles": 50, "time": "2015-03-02 10:35" }

}

}](https://image.slidesharecdn.com/presentationfinal-150629023645-lva1-app6892/75/Presentation-20-2048.jpg)
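
The "multi level dictionary" view of the data model can be sketched as nested Python dictionaries, mirroring the `Map [String, Map [String, Data] ]` description on the slide. This is only a mental model, not how Cassandra stores data internally; the helper names `get_column` and `sorted_columns` are hypothetical.

```python
# Column family -> row key -> column name -> value
street_monitor = {
    "Hollywood": {"avg_speed": 75, "vehicles": 45, "time": "2015-03-02 09:35"},
    "Santa Monica": {"avg_speed": 35, "vehicles": 50, "time": "2015-03-02 10:35"},
}
column_families = {"Street Monitor": street_monitor}


def get_column(cf_name, row_key, column):
    """Look up one value by walking the three dictionary levels."""
    return column_families[cf_name][row_key][column]


def sorted_columns(cf_name, row_key):
    """Return a row's (column, value) pairs ordered by column name."""
    return sorted(column_families[cf_name][row_key].items())


if __name__ == "__main__":
    print(get_column("Street Monitor", "Hollywood", "avg_speed"))
    print(sorted_columns("Street Monitor", "Santa Monica"))
```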

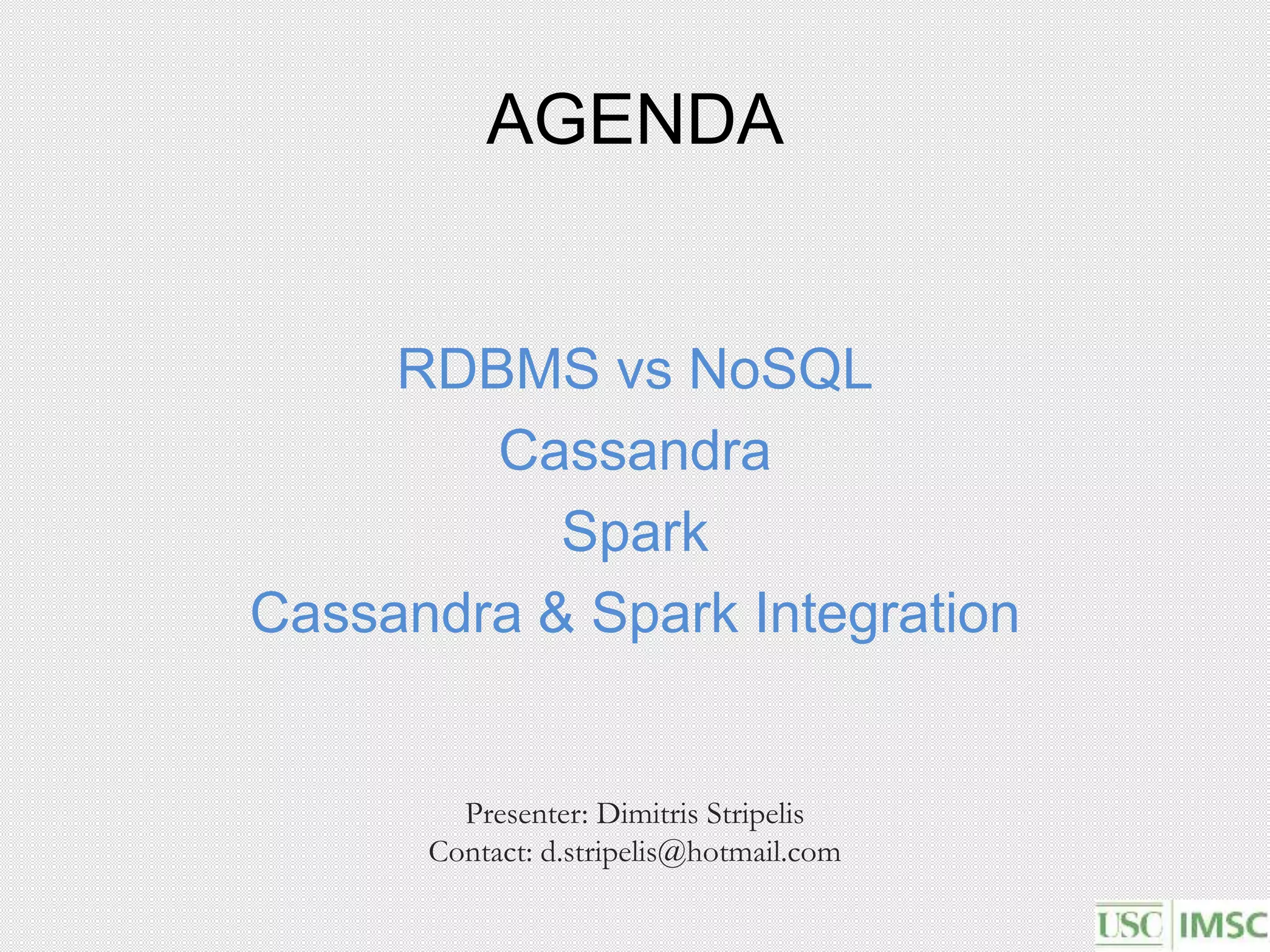

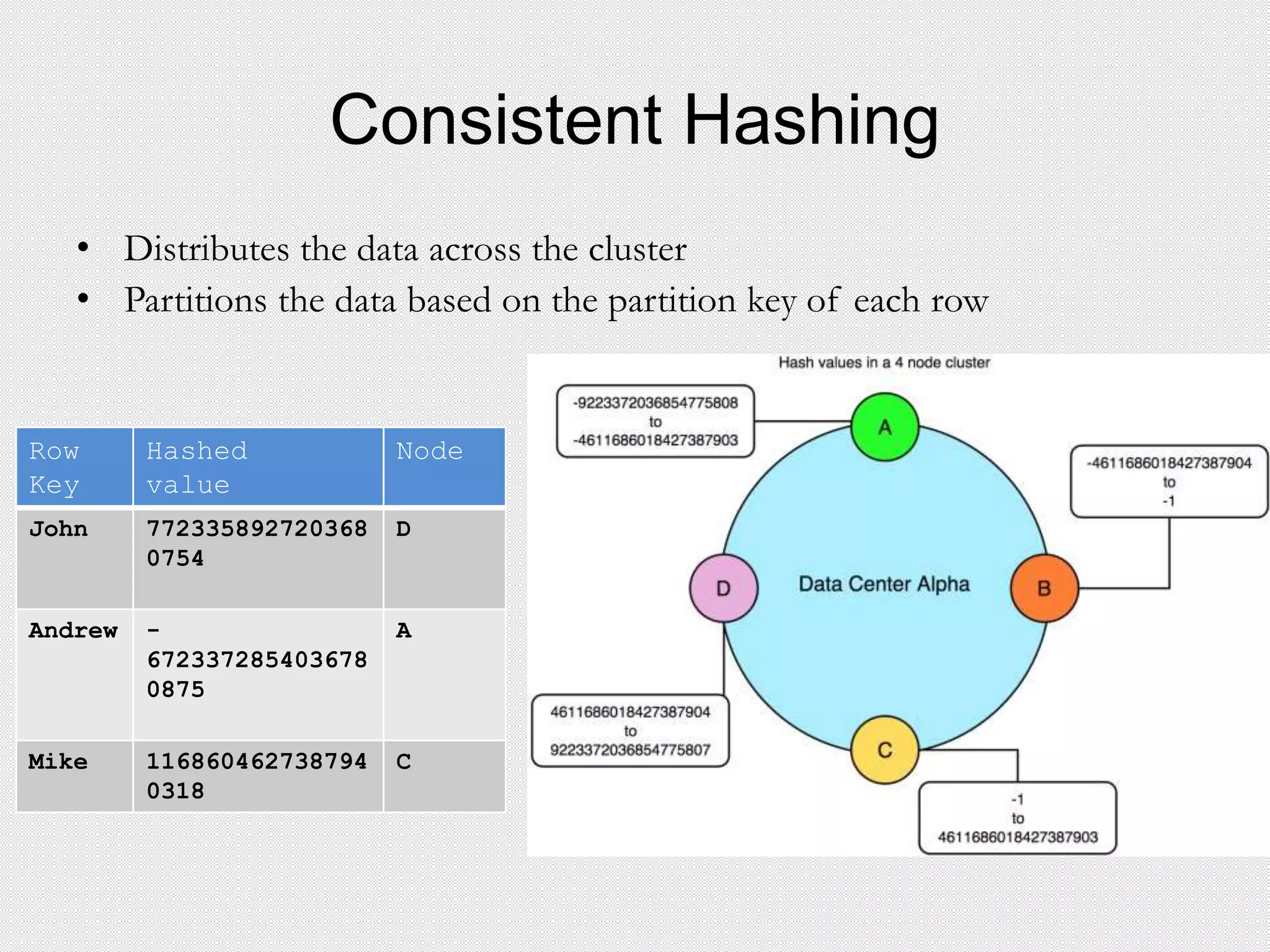

![C* Queries

• Pure CQL does not support:

JOINs and subqueries; GROUP BY and ORDER BY work only on clustering columns

• Aggregate functions are not supported in Cassandra 2.0.x; later versions support them

• Always restrict the preceding parts of the key before restricting a later part

Guidelines:

Partition key columns support the = operator

The last column in the partition key supports the IN operator

Clustering columns support the =, >, >=, <, and <= operators

Secondary index columns support the = operator

Query1 – Some parts of Partition key

SELECT * FROM highway.street_monitoring WHERE onstreet='I-10' AND month=3

Error Message: cassandra.InvalidRequest: code=2200 [Invalid query] message="Partition key part year must be restricted since preceding part is"

Query2 – Full partition key

SELECT * FROM highway.street_monitoring WHERE onstreet='I-10' AND year=2015 AND month IN (2,4)

Error Message: None](https://image.slidesharecdn.com/presentationfinal-150629023645-lva1-app6892/75/Presentation-24-2048.jpg)
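
The partition key guidelines above can be sketched as a small checker. It assumes, based on the queries shown, that the partition key of `highway.street_monitoring` is `(onstreet, year, month)`. The function name `check_partition_key` and its messages are hypothetical and are not Cassandra's own validation logic or error text.

```python
def check_partition_key(partition_key, restrictions):
    """Return None if the restrictions satisfy the guidelines, else a reason string.

    partition_key: ordered list of partition key column names
    restrictions:  dict mapping column name -> operator ("=", "IN", "<=", ...)
    """
    last = partition_key[-1]
    for column in partition_key:
        op = restrictions.get(column)
        if op is None:
            return "partition key part '%s' must be restricted" % column
        if op == "=":
            continue
        if op == "IN" and column == last:
            continue  # only the last partition key column may use IN
        return "operator '%s' is not allowed on partition key part '%s'" % (op, column)
    return None


# Assumed from the example queries, not stated in the slides.
PARTITION_KEY = ["onstreet", "year", "month"]

query1 = {"onstreet": "=", "month": "="}                # year is missing
query2 = {"onstreet": "=", "year": "=", "month": "IN"}  # full partition key

if __name__ == "__main__":
    print(check_partition_key(PARTITION_KEY, query1))
    print(check_partition_key(PARTITION_KEY, query2))
```

Under these assumptions the checker rejects Query1 because `year` is unrestricted and accepts Query2, matching the outcomes on the slide.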

![C* Queries

Query3 – Range on Partition Key

SELECT * FROM highway.street_monitoring WHERE onstreet='I-10' AND year=2014 AND month<=1

Error Message: cassandra.InvalidRequest: code=2200 [Invalid query] message="Only EQ and IN relation are supported on the partition key (unless you use the token() function)"

Query4 – Only secondary index

SELECT * FROM highway.street_monitoring WHERE day>=2 AND day<=3

Bad Request: Cannot execute this query as it might involve data filtering and thus may have unpredictable performance. If you want to execute this query despite the performance unpredictability, use ALLOW FILTERING

Query5 – Range on Secondary Index

SELECT * FROM highway.street_monitoring WHERE onstreet='47' AND year=2014 AND month=2 AND day=21 AND time>=360 AND time<=7560 AND speed>30

Error Message: cassandra.InvalidRequest: code=2200 [Invalid query] message="No indexed columns present in by-columns clause with Equal operator"](https://image.slidesharecdn.com/presentationfinal-150629023645-lva1-app6892/75/Presentation-25-2048.jpg)

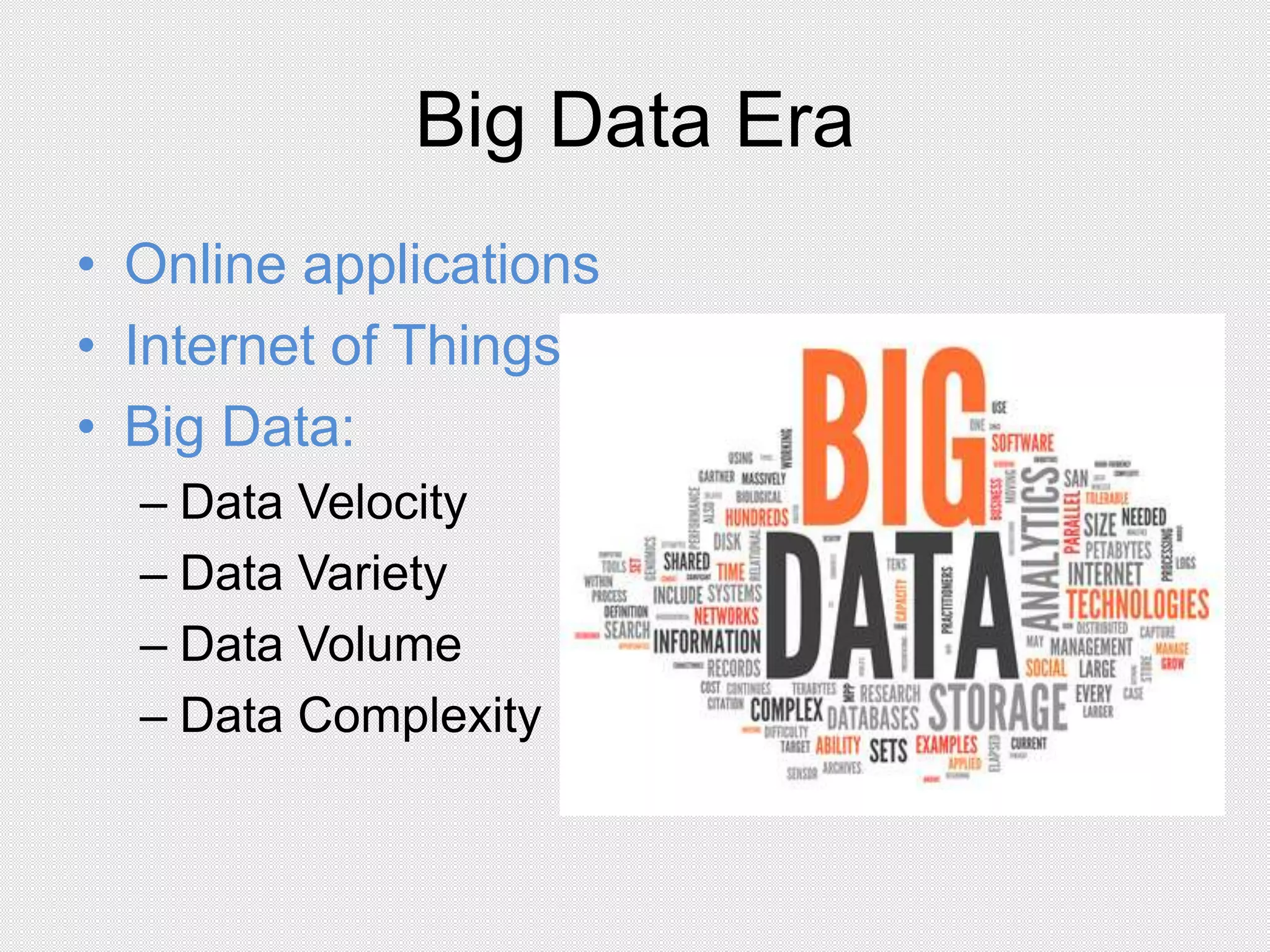

![Executors

• Properties

Worker processes

Launched when the application starts

Terminated when the application ends

• Mission

1. Run individual tasks and return results to the Spark driver

2. In-memory storage for the RDDs

[ .cache() | .persist() ]](https://image.slidesharecdn.com/presentationfinal-150629023645-lva1-app6892/75/Presentation-38-2048.jpg)
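
The two executor responsibilities above (running tasks and holding cached data in memory) can be illustrated with a toy model in plain Python. This is not Spark code and does not use any Spark API. `ToyExecutor`, `run_task`, and `load_partition` are hypothetical names that only mimic the idea behind `.cache()` and `.persist()`.

```python
class ToyExecutor:
    """Toy stand-in for an executor: runs tasks and keeps cached partitions in memory."""

    def __init__(self):
        self._cache = {}    # partition id -> computed rows
        self.tasks_run = 0  # number of tasks that were actually computed

    def run_task(self, partition_id, compute, cache=False):
        """Compute one partition and return the result to the caller (the 'driver')."""
        if partition_id in self._cache:
            return self._cache[partition_id]  # served from memory, no recomputation
        self.tasks_run += 1
        result = compute(partition_id)
        if cache:
            self._cache[partition_id] = result
        return result


def load_partition(partition_id):
    # Hypothetical expensive read, e.g. fetching rows from a data store.
    return [partition_id * 10 + i for i in range(3)]


executor = ToyExecutor()
first = executor.run_task(0, load_partition, cache=True)
second = executor.run_task(0, load_partition, cache=True)  # reuses the cached partition
```

In this sketch the second call returns the stored rows without invoking `load_partition` again, which is the benefit caching is meant to provide when the same data is reused across several actions.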